AWS News Blog

Category: Amazon EC2

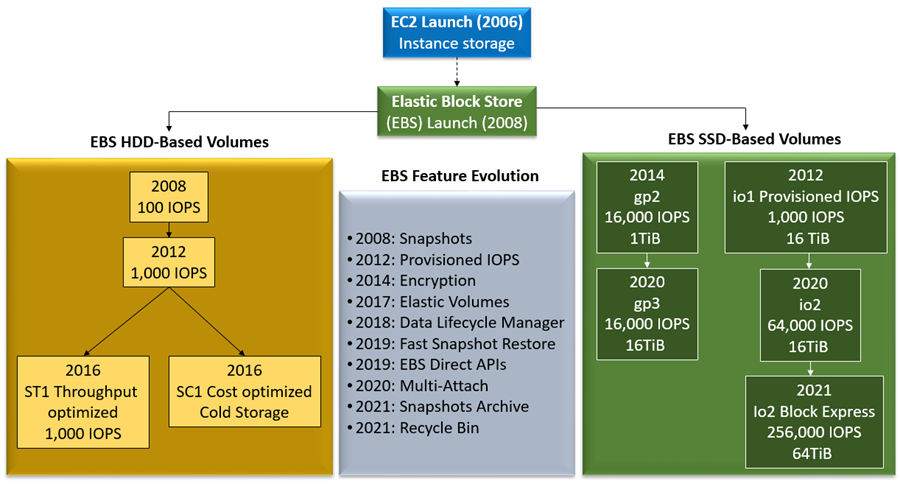

A Decade of Ever-Increasing Provisioned IOPS for Amazon EBS

Progress is often best appreciated in retrospect. It is often the case that a steady stream of incremental improvements over a long period of time ultimately adds up to a significant level of change. Today, ten years after we first launched the Provisioned IOPS feature for Amazon Elastic Block Store (Amazon EBS), I strongly believe […]

AWS Week in Review – August 8, 2022

As an ex-.NET developer, and now Developer Advocate for .NET at AWS, I’m excited to bring you this week’s Week in Review post, for reasons that will quickly become apparent! There are several updates, customer stories, and events I want to bring to your attention, so let’s dive straight in! Last Week’s Launches: .NET developers, […]

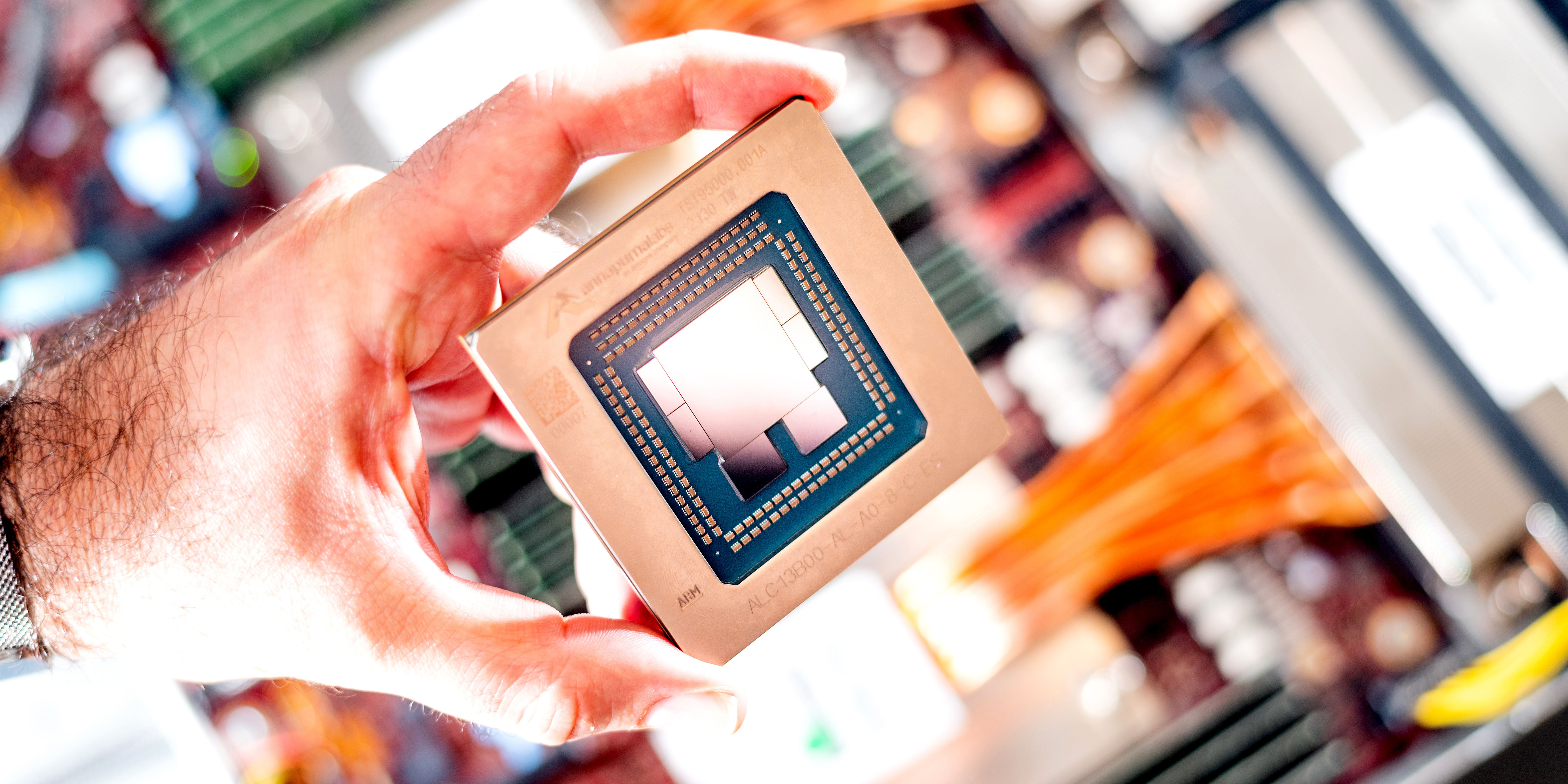

Graviton Fast Start – A New Program to Help Move Your Workloads to AWS Graviton

With the Graviton Challenge last year, we helped customers migrate to Graviton-based EC2 instances and get up to 40 percent price performance benefit in as little as 4 days. Tens of thousands of customers, including 48 of the top 50 Amazon Elastic Compute Cloud (Amazon EC2) customers, use AWS Graviton processors for their workloads. In […]

New – Run Visual Studio Software on Amazon EC2 with User-Based License Model

Today we are announcing the general availability of license-included Visual Studio software on Amazon Elastic Compute Cloud (Amazon EC2) instances. You can now purchase fully compliant AWS-provided licenses of Visual Studio with a per-user subscription fee. Amazon EC2 provides preconfigured Amazon Machine Images (AMIs) of Visual Studio Enterprise 2022 and Visual Studio Professional 2022. You […]

AWS Week In Review – July 25, 2022

A few weeks ago, we hosted the first EMEA AWS Heroes Summit in Milan, Italy. This past week, I had the privilege to join the Americas AWS Heroes Summit in Seattle, Washington, USA. Meeting with our community experts is always inspiring and a great opportunity to learn from each other. During the Summit, AWS Heroes […]

New – Amazon EC2 R6a Instances Powered by 3rd Gen AMD EPYC Processors for Memory-Intensive Workloads

We launched the general-purpose Amazon EC2 M6a instances at AWS re:Invent 2021 and compute-intensive C6a instances in February of this year. These instances are powered by the 3rd Gen AMD EPYC processors running at frequencies up to 3.6 GHz to offer up to 35 percent better price performance versus the previous generation instances. Today, we […]

New – Amazon EC2 M1 Mac Instances

Last year, during the re:Invent 2021 conference, I wrote a blog post to announce the preview of EC2 M1 Mac instances. I know many of you requested access to the preview, and we did our best but could not satisfy everybody. However, the wait is over. I have the pleasure of announcing the general availability […]

AWS Week in Review – June 20, 2022

This post is part of our Week in Review series. Check back each week for a quick roundup of interesting news and announcements from AWS! Last Week’s Launches: It’s been a quiet week on the AWS News Blog; however, a glance at the What’s New page shows the various service teams have been busy as usual. […]