
Planning Kubernetes Upgrades with Amazon EKS

In February, Amazon Elastic Kubernetes Service (Amazon EKS) released support for Kubernetes version 1.19. We announced this through the usual mechanisms with our What’s New post and updates in Amazon EKS documentation. After some conversations both internally and with our customers, we have decided to start regular AWS Containers blog posts on Amazon EKS Kubernetes version releases. In these posts, we’ll highlight new features and call out specific changes that you should note when planning cluster upgrades.

We also believe that Kubernetes version upgrades deserve careful thought in their own right. So, as we work on our next Kubernetes version release internally, I’d like to share some general thoughts about version releases in Amazon EKS and offer some guidance on planning and executing version upgrades with EKS. In the spirit of our plan to blog about Kubernetes versions, I will close with some observations about Kubernetes version 1.19 in case you haven’t yet upgraded to that version. Going forward, we’ll be doing this for each Kubernetes version we release.

Amazon EKS and Kubernetes versions

Kubernetes is a fast-paced, evolving open source project. Thousands of changes go into each upstream release, contributed by a large open source community. AWS developers and engineers contribute to multiple projects within the Kubernetes ecosystem and actively participate in longer-term feature work across projects. We closely monitor changes across the entire project, specifically testing how they interact to prepare for a Kubernetes version’s eventual release in EKS. We ensure that components work together well within the service, and also test to ensure a highly-available upgrade path for your clusters. You trust Amazon EKS to manage your Kubernetes infrastructure on your behalf, and there are a lot of moving parts that need to work well together for that to be a transparent experience for you.

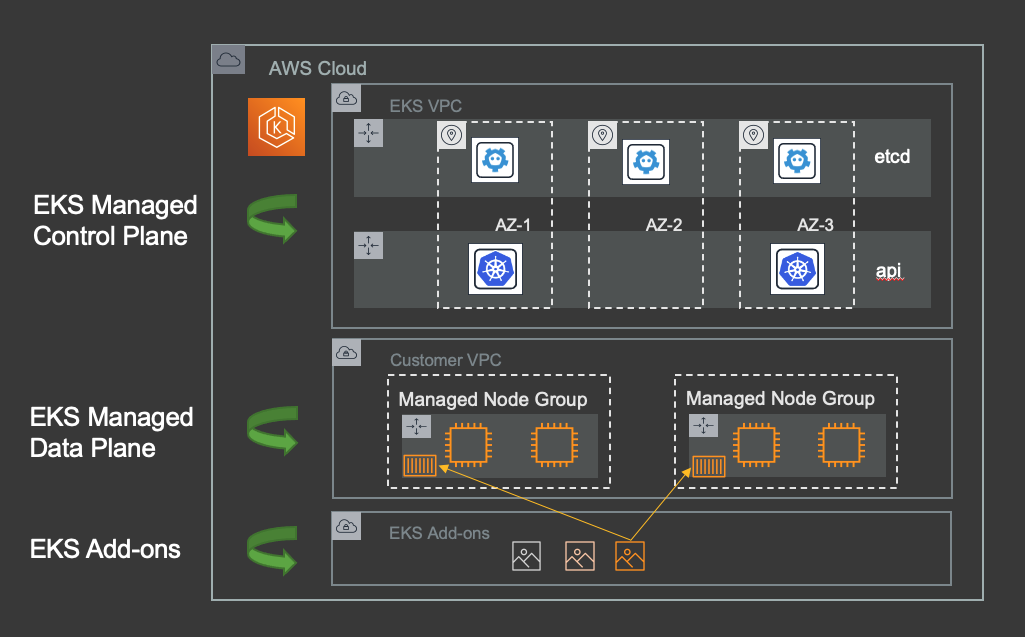

Amazon EKS helps you to deploy and scale your Kubernetes applications more easily by providing a managed control plane for your clusters, as well as features for fully managed nodes and integration with AWS Fargate for serverless containers. At re:Invent last year, we announced that EKS now supports the management of operational cluster add-on software, as well. We’ll be sharing more news about EKS add-ons in the coming weeks. As a core feature of EKS, management of your operational add-ons extends our goals of removing undifferentiated heavy lifting within the scope of cluster lifecycle management.

As aspects of a managed service, all of these features work together and are fully integrated within AWS so that your Kubernetes clusters are secure, highly available, and will scale with your needs. These features are all built to have a high level of awareness related to Kubernetes versions, especially through cluster upgrade operations. Amazon EKS offers highly available upgrades for your cluster control plane, managed node groups, and select operational add-ons.

Just as there are many aspects that make up a cluster in EKS, there are many aspects that go into a Kubernetes version upstream. A version release of Kubernetes is actually a lockstep point in time across multiple discrete pieces of software. From control plane components such as the API server, the controller manager, and the scheduler to node software components like kubelet, these multiple pieces of software are improved, maintained, and tested together over time to form a version release.

Through Kubernetes releases, new features are introduced and progress through stages from alpha, to beta, and finally to stable. For any given minor version release, features have a fixed stage for the lifetime of that release. When a Kubernetes version is released for use in EKS, all stable Kubernetes features are supported, as are all beta features that are enabled by default upstream. We don’t support alpha features in EKS because development tends to be very active on them, and often they merge or become other features. Not enabling alpha features in EKS is an opinionated decision that we feel gives our customers a more consistent experience moving from version to version. This may evolve over time. For example, as alpha features become stable over time, we are considering enabling up-version stable features in older versions within EKS.

Regarding versions over time, the Kubernetes project maintains release branches for the most recent three minor releases. Historically, these minor versions have been supported for 9 months from release. Starting with 1.19, the project will support a minor release for one year. This was motivated in part by a desire to have more Kubernetes users running supported versions, and will help give cluster administrators a bit more time to plan for upgrades over time.

Once released in EKS, a Kubernetes version is supported for 14 months. This surpasses that upstream support timeline as we know we have many customers with very large cluster fleets. During this support window, we will backport security fixes as needed and will support that version’s use. For current supported releases and support details at any time, refer to the Amazon EKS User Guide.

Planning and executing Kubernetes version upgrades in Amazon EKS

Putting all of this together, there is a lot that goes into a Kubernetes version release, and the EKS team does a great deal to ensure that when we release a version, it’s production-ready and that upgrades will be as seamless an experience for you and your workloads as possible. This is where AWS and our customers meet in the shared responsibility model. Amazon EKS will upgrade your components for you, but you have some work to do in order to ensure that things go smoothly. I’ll briefly walk through the upgrade process now, and provide some high level upgrade guidelines to help you prepare and manage your upgrades.

While EKS will support a Kubernetes release for a longer period than it is supported upstream, there is a limit to that support. As we add new versions over time, we also deprecate the oldest. When a version is deprecated, this means that it is no longer supported, the version is no longer maintained in EKS, and new clusters cannot be created with that version.

It is vital that you have an ongoing upgrade plan for your clusters in EKS. Kubernetes is a fast-paced project, and often the innovative features coming in the latest versions are compelling reason enough to upgrade your clusters. If that isn’t the case, also consider that the fast pace of development induces API churn, often with deprecation and breaking changes. These are always well documented upstream, but the more down-rev your configurations and specifications are, the more there is to untangle when upgrading to latest releases.

That said, consistent upgrades to the latest release are not the norm. We understand this. Upgrading a small cluster running basic workloads is fairly light work, but managing fleets of large clusters with complex networking configurations and persistent storage is much more complex.

Additionally, you may be several releases behind your target release. In-place upgrades are incremental to the next highest Kubernetes minor version, which means if you have several releases between your current and target versions, you’ll need to move through each one in sequence. In this scenario, it might be best to consider a blue/green infrastructure deployment strategy, which is a complex undertaking in its own right.
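To make the "one minor version at a time" rule concrete, here is a minimal sketch that lists the intermediate versions an in-place upgrade would pass through. The function names are hypothetical and exist only for illustration; the sketch assumes version strings in `major.minor` form and a single major version.

```python
from typing import List, Tuple


def parse_minor(version: str) -> Tuple[int, int]:
    """Split a version string such as '1.16' or '1.19.8' into (major, minor)."""
    major, minor = version.split(".")[:2]
    return int(major), int(minor)


def upgrade_path(current: str, target: str) -> List[str]:
    """Return each minor version to move through, in order, ending at target."""
    cur_major, cur_minor = parse_minor(current)
    tgt_major, tgt_minor = parse_minor(target)
    if cur_major != tgt_major:
        raise ValueError("this sketch only handles a single major version")
    if tgt_minor < cur_minor:
        raise ValueError("target version is older than the current version")
    return [f"{cur_major}.{m}" for m in range(cur_minor + 1, tgt_minor + 1)]


print(upgrade_path("1.16", "1.19"))  # ['1.17', '1.18', '1.19']
```

Each entry in the result is a full upgrade cycle of its own (control plane, nodes, add-ons, testing), which is why a long path may tip the balance toward a blue/green approach.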

This is why upgrade planning and a regular upgrade cadence are critical. We are continuing to work on features to help with this process, but at the root of any successful Kubernetes upgrade is thorough planning and testing.

In its simplest form, an in-place Kubernetes cluster upgrade consists of the following phases:

- Update your Kubernetes manifests, as required

- Upgrade the cluster control plane

- Upgrade the nodes in your cluster

- Update your Kubernetes add-ons and custom controllers, as required

These phases are best done in a test environment to completion, so that you can discover any issues in your cluster configuration as well as your application manifests in advance of upgrade operations on your production clusters. Start with a cluster with your current Kubernetes version, with all of your production versions of add-ons, applications, and anything else that needs to be tested. Then run through the upgrade, deployment, and testing phases, making changes as needed.

Ideally, your cluster specifications, add-on configuration customizations, and application manifests are all maintained in a source code repository under version control. This provides a controlled and versioned approach to infrastructure and application management, leveraging immutable artifacts which can be used to manage and deploy to any number of clusters. Any cluster upgrade process starts in a test environment, with required changes being committed back to the source repo. Once done, everything can be deployed to production from there.

One of the great benefits of running your clusters in EKS is that you can upgrade your control plane with a single, fully automated operation. The cluster stays available while new control plane resources are provisioned and tested. If any issues arise, the upgrade is rolled back, and your applications remain up and available. For more information, see the Amazon EKS User Guide.
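As a rough sketch of what that single operation can look like from code, the function below starts a control plane upgrade and polls until it finishes. It assumes the `eks` argument behaves like a boto3 EKS client (`boto3.client("eks")`) and uses its `update_cluster_version` and `describe_update` calls; the function name, cluster name, and polling interval are hypothetical.

```python
import time


def upgrade_control_plane(eks, cluster_name, target_version, poll_seconds=30):
    """Start a control plane upgrade and wait until it reaches a final state.

    `eks` is assumed to behave like boto3.client("eks").
    """
    response = eks.update_cluster_version(name=cluster_name, version=target_version)
    update_id = response["update"]["id"]
    while True:
        update = eks.describe_update(name=cluster_name, updateId=update_id)["update"]
        if update["status"] != "InProgress":
            # Final states reported by EKS: Successful, Failed, or Cancelled.
            return update["status"]
        time.sleep(poll_seconds)
```

With boto3 installed and credentials configured, a call might look like `upgrade_control_plane(boto3.client("eks"), "my-test-cluster", "1.19")`, where `my-test-cluster` is a placeholder. Run it against a test cluster first.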

Similarly, managed node group upgrades are also fully automated, implemented as incremental rolling updates. New nodes are provisioned in EC2 and join the cluster, as old nodes are cordoned, drained, and removed. New nodes will use the EKS-optimized Amazon Linux 2 AMI by default, or optionally your custom AMI. Note that when you use a custom AMI, you’ll need to create your own updated image, so you’ll need an updated Launch Template version as part of your node group upgrade. For more information on managed node group updates, see the EKS User Guide.
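The two node group cases (EKS-optimized AMI versus custom AMI) lead to slightly different requests. The sketch below builds keyword arguments for the boto3 EKS client call `update_nodegroup_version`; the helper name and the example names in the usage note are hypothetical. It follows the API guidance that `version` should not be set when the launch template supplies a custom AMI.

```python
def nodegroup_update_request(cluster_name, nodegroup_name, target_version,
                             launch_template_name=None, launch_template_version=None):
    """Build keyword arguments for the EKS UpdateNodegroupVersion API call."""
    request = {"clusterName": cluster_name, "nodegroupName": nodegroup_name}
    if launch_template_name is None:
        # EKS-optimized AMI: ask EKS for the target Kubernetes version directly.
        request["version"] = target_version
    else:
        # Custom AMI: the updated image comes from the new launch template version,
        # so the Kubernetes version is left out of the request.
        request["launchTemplate"] = {
            "name": launch_template_name,
            "version": str(launch_template_version),
        }
    return request
```

The result can be passed along as `eks.update_nodegroup_version(**request)`, for example with `nodegroup_update_request("my-test-cluster", "workers", "1.19")`.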

This handles the control plane and node phases of a cluster upgrade, and it’s a lot to offload. Gathering up version upgrades of various software components running on multiple compute resources, merging configurations, and patching or reinstalling compute instances, then reducing all of that to a set of API calls or CLI invocations, is a pretty good way to start. But there is more work to be done beyond just clicking the ‘update’ button.

Handling complexity and testing for success

To help with the add-on phase, EKS now also offers lifecycle management of select add-ons. The VPC CNI controller is the only supported add-on as I’m writing this, with kube-proxy and CoreDNS on the way. We’ll be releasing more add-ons later this year, as well. But you may be running multiple add-ons, custom controllers that may have their own custom resource definitions, and other operational and customized software within your cluster. These components all need to be tested and potentially updated as well, ideally to the latest available release that supports your new Kubernetes version.
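For managed add-ons, one way to find a version that matches your target Kubernetes version is to inspect the output of the boto3 EKS client call `describe_addon_versions`. The sketch below assumes the response has the shape documented for that call (`addons`, `addonVersions`, `compatibilities` with `clusterVersion` and `defaultVersion`); the helper name is hypothetical, and it works on an already-fetched response so it can be tried without AWS access.

```python
def default_addon_version(describe_response, addon_name, cluster_version):
    """Return the add-on version flagged as default for a cluster version, or None."""
    for addon in describe_response.get("addons", []):
        if addon.get("addonName") != addon_name:
            continue
        for candidate in addon.get("addonVersions", []):
            for compat in candidate.get("compatibilities", []):
                if (compat.get("clusterVersion") == cluster_version
                        and compat.get("defaultVersion")):
                    return candidate["addonVersion"]
    return None
```

A typical use would be `default_addon_version(eks.describe_addon_versions(addonName="vpc-cni"), "vpc-cni", "1.19")`. Add-ons and controllers that EKS does not manage still need their own compatibility check against upstream release notes.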

The bulk of the operational software update phase is about knowing the dependencies, updating the bits, and ensuring your configuration customization is still valid. You may have some updates to make, and a test environment is the place to discover this. Create a test cluster using your target Kubernetes version, and deploy everything a piece at a time. This is when complexity starts to play a role, so give yourself time and a safe runway.

As said above, it’s a good practice to store your configuration specifications or Helm charts in version control alongside your other Kubernetes specifications so that once testing is complete and changes are committed, you have a full set of immutable artifacts to roll out into production deployments.

This brings us to the most critical aspect of any upgrade: testing your manifests. Amazon EKS provides highly available, in-place upgrades of your clusters, and Kubernetes will do its best to migrate your object specifications along the way. But there are some changes that it cannot automatically make, and in the event of API version changes and working around potential deprecations, you’ll need to intervene. Again, such changes are always well documented, so part of planning includes making note of API changes as you prepare. We call these out when breaking changes are part of a Kubernetes release, and the upstream docs are always an excellent resource.

Now with your test cluster running your target Kubernetes version and outfitted with your set of operational add-ons, and your Kubernetes applications deployed, you can test and observe. Ensure that everything is created and configured properly. Run your tests, check for errors, and address any issues. This is where keeping your application manifests, add-on configuration, and other components of your system in source control is central to a well-organized upgrade plan. Once tests are passing and configuration is all correctly in place, a commit provides you with the artifacts needed to deploy fleet-wide.

Of course, this is all quite simplified. You may have many applications, and any given application may consist of dozens or hundreds of microservices. Any given service can have its own unique, complex configuration and dependencies on custom resource definitions, which in turn depend on custom controllers. This modularity and extensibility is part of the power of Kubernetes, but it demands additional care around upgrade planning. Again, give yourself plenty of runway to thoroughly test what needs testing. Automation helps a great deal here, as anyone running hundreds of microservices would agree. As an ally in upgrades, nothing beats time, which is why planning is so critical.

Amazon EKS and Kubernetes version 1.19

Now on to some thoughts about the most recent release, Kubernetes version 1.19. When we announce a new Kubernetes version in EKS, new clusters can be created with this version and existing clusters can be upgraded to it. In addition, Amazon EKS Distro builds become available through ECR Public Gallery and GitHub.

As with any Kubernetes version release on EKS, there are multiple things to consider. Newly-enabled Kubernetes features, changes and deprecations with existing features, and changes in EKS are all things that will play into your upgrade planning as discussed above. So spin up a test cluster and get ready to start upgrading components.

A good place to start with any Kubernetes version release is with the upstream announcement. Here is a link to the official release announcement for 1.19. These announcements provide a summary of the release, its motivations and major themes, and will always call out deprecations that you need to plan for. They will also link to the changelog for the release, which will include micro version updates. It’s best to consider the specific version in EKS and review the specific changes that roll up into that release. For example, when using Kubernetes version 1.19 on EKS, at the time of this writing the User Guide specifies version 1.19.8.

Kubernetes version 1.19 features

There are several Kubernetes features that are supported for Amazon EKS when using Kubernetes version 1.19. Moving from version to version, new features in EKS will be those that have graduated from alpha to beta and have been enabled by default, or potentially beta features that were previously disabled by default and are now enabled or have graduated to stable.

The Ingress API is now a stable Kubernetes feature. It has been in use for so long that many of us may not have thought of it as beta. Ingress allows you to configure how external requests reach services inside the cluster. It may be used with load balancing, SSL termination, and name-based virtual hosting. For this release the functionality remains the same; just note that the apiVersion has changed from networking.k8s.io/v1beta1 to networking.k8s.io/v1, so be sure to update your manifests.
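When you update an Ingress manifest, keep in mind that the v1 schema differs in a few fields beyond the apiVersion string: `serviceName` and `servicePort` move under `backend.service`, `spec.backend` becomes `spec.defaultBackend`, and `pathType` is required on every path. Here is a minimal sketch of that conversion on a manifest loaded as a Python dict. The function names are hypothetical, and defaulting `pathType` to `ImplementationSpecific` is an assumption that you should review against the behavior of your ingress controller.

```python
import copy


def ingress_backend_to_v1(backend):
    """Convert a v1beta1 backend (serviceName/servicePort) to the v1 layout."""
    if "serviceName" not in backend:
        return backend  # for example a resource backend, left untouched
    port = backend["servicePort"]
    # Integer ports become port.number; named ports become port.name.
    port_field = {"number": port} if isinstance(port, int) else {"name": port}
    return {"service": {"name": backend["serviceName"], "port": port_field}}


def ingress_to_v1(manifest, default_path_type="ImplementationSpecific"):
    """Return a networking.k8s.io/v1 copy of a v1beta1 Ingress manifest."""
    result = copy.deepcopy(manifest)
    result["apiVersion"] = "networking.k8s.io/v1"
    spec = result.get("spec", {})
    if "backend" in spec:
        spec["defaultBackend"] = ingress_backend_to_v1(spec.pop("backend"))
    for rule in spec.get("rules", []):
        for path in rule.get("http", {}).get("paths", []):
            path.setdefault("pathType", default_path_type)
            path["backend"] = ingress_backend_to_v1(path["backend"])
    return result
```

The v1beta1 version is still served in Kubernetes 1.19, so this is a change you can make and test ahead of its eventual removal rather than one that blocks the 1.19 upgrade.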

EndpointSlices is a beta feature that is now enabled by default. This is a feature with exciting implications for scalability of clusters that have many service endpoints. Endpoints have a one-to-one mapping with pods. As the number of pods backing a service increases, it can put a strain on the control plane as the number of Endpoints scales with the number of pods. The introduction of EndpointSlices is a mostly transparent feature, enabling the control plane to automatically group sets of Endpoints together into EndpointSlices, each of which can refer to up to 100 Endpoints. This helps alleviate this scaling pressure, and also paves the way for more advanced features in the future, like Topology Aware Routing.
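To illustrate the grouping idea only, the sketch below splits a list of endpoint addresses into slices of at most 100 entries. It is not how the EndpointSlice controller is implemented (the real controller also tries to reuse and fill existing slices); the function name and the generated addresses are made up for the example.

```python
def group_into_slices(endpoints, max_per_slice=100):
    """Split a list of endpoints into consecutive groups of at most max_per_slice."""
    return [endpoints[i:i + max_per_slice]
            for i in range(0, len(endpoints), max_per_slice)]


# 1,050 made-up pod addresses backing a single service.
pod_ips = [f"10.0.{i // 250}.{i % 250 + 1}" for i in range(1050)]
slices = group_into_slices(pod_ips)
print(len(slices), len(slices[-1]))  # 11 50
```

The benefit shows up on change: when one pod is replaced, only the slice that contains it needs to be rewritten and sent to watchers, instead of one object listing all 1,050 addresses.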

Related to topology, Pod Topology Spread is now a stable feature. This is used to control how pods are scheduled across your cluster within topology domains, such as regions, zones, or other groups of nodes. Topology spread configuration relies on node labels to identify the topology domain(s) that each node is in. You can use node labels that are already created by EKS, or you can choose your own to arbitrarily define topology. These labels can then be used as topology keys in a topologySpreadConstraints spec, which is part of the pod spec. This gives you more granularity and control in high availability configurations and can also help increase resource utilization efficiency.
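Here is a small sketch of what a constraint looks like and how its `maxSkew` value is evaluated. The constraint is written as a Python dict that mirrors the pod spec field; the `app: web` label and the zone names are hypothetical. The helper follows the documented rule that the pod count in the candidate domain, after placement, may exceed the smallest domain count by at most `maxSkew`.

```python
# One entry for spec.topologySpreadConstraints: spread pods labeled app=web
# evenly across zones, and refuse to schedule if that is not possible.
constraint = {
    "maxSkew": 1,
    "topologyKey": "topology.kubernetes.io/zone",
    "whenUnsatisfiable": "DoNotSchedule",
    "labelSelector": {"matchLabels": {"app": "web"}},
}


def placement_allowed(pods_per_domain, domain, max_skew):
    """Would adding one matching pod to `domain` stay within max_skew?"""
    global_minimum = min(pods_per_domain.values())
    return pods_per_domain[domain] + 1 - global_minimum <= max_skew


current = {"us-west-2a": 1, "us-west-2b": 1, "us-west-2c": 0}
print(placement_allowed(current, "us-west-2a", constraint["maxSkew"]))  # False
print(placement_allowed(current, "us-west-2c", constraint["maxSkew"]))  # True
```

Setting `whenUnsatisfiable` to `ScheduleAnyway` instead turns the constraint into a preference, which can be a gentler starting point when you first introduce it.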

Immutable Secrets and ConfigMaps are now enabled by default. This allows you to create optionally immutable Secrets and ConfigMaps using the immutable field in their specifications. Once created, the object and its content will be immutable for their lifetime. These objects are constantly monitored for changes by kubelet, so there is a performance benefit in using this feature as that monitoring is not needed for immutable objects. Additionally, it can limit the impact of an inadvertent configuration change resulting in application outages. Since these objects are immutable, the only way to change the configuration is to explicitly delete them and create new objects.
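Because an immutable object has to be replaced rather than edited, one common pattern is to give each revision its own name. The sketch below builds a ConfigMap manifest as a Python dict with `immutable` set to true and a short content hash in the name. The naming scheme and function name are my own illustration, not something Kubernetes or EKS requires.

```python
import hashlib
import json


def immutable_configmap(base_name, data):
    """Build an immutable ConfigMap whose name changes whenever its data changes."""
    digest = hashlib.sha256(json.dumps(data, sort_keys=True).encode()).hexdigest()[:8]
    return {
        "apiVersion": "v1",
        "kind": "ConfigMap",
        "metadata": {"name": f"{base_name}-{digest}"},
        "data": data,
        # Top-level field: once set, data can no longer be updated in place.
        "immutable": True,
    }


config = immutable_configmap("app-config", {"LOG_LEVEL": "info"})
print(json.dumps(config, indent=2))
```

A configuration change then becomes a new ConfigMap plus a pod template update that references the new name, which rolls out (and rolls back) like any other deployment change.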

The ExtendedResourceToleration admission controller has been enabled. This is a plugin that allows you to manage node taints and pod tolerations more transparently, by configuring nodes with extended resources such as GPUs, FPGAs, and other special resources that Kubernetes otherwise doesn’t know about. This controller automatically adds tolerations for such taints to pods requesting extended resources, so users don’t have to manually add these tolerations. For more information on extended resource configuration, see the Kubernetes documentation.
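To show the effect of this admission controller, here is a simplified simulation of what it does to an incoming pod: for each extended resource a container requests, it adds a toleration keyed on the resource name. This is an illustration of the behavior, not the plugin’s code, and the extended resource check is deliberately rough (any domain-prefixed name outside `kubernetes.io/`).

```python
def is_extended_resource(name):
    """Simplified check: domain-prefixed and outside the kubernetes.io namespace."""
    return "/" in name and "kubernetes.io/" not in name


def add_extended_resource_tolerations(pod):
    """Add an Exists/NoSchedule toleration for each requested extended resource."""
    tolerations = pod["spec"].setdefault("tolerations", [])
    for container in pod["spec"].get("containers", []):
        for resource in container.get("resources", {}).get("requests", {}):
            toleration = {"key": resource, "operator": "Exists", "effect": "NoSchedule"}
            if is_extended_resource(resource) and toleration not in tolerations:
                tolerations.append(toleration)
    return pod


pod = {"spec": {"containers": [
    {"name": "trainer", "resources": {"requests": {"cpu": "2", "nvidia.com/gpu": "1"}}},
]}}
print(add_extended_resource_tolerations(pod)["spec"]["tolerations"])
```

For this to be useful, the nodes that expose the resource need a matching taint, for example a `NoSchedule` taint with the key `nvidia.com/gpu`, so that pods not requesting the resource stay off those nodes.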

For Amazon EKS customers and customers running Kubernetes on AWS, Elastic Load Balancers provisioned by the in-tree Kubernetes service controller now support filtering the nodes to be included as instance targets. This can help prevent reaching target group limits in large clusters. See the service.beta.kubernetes.io/aws-load-balancer-target-node-labels annotation in Kubernetes documentation for more details. It should be noted that the in-tree controller is no longer recommended. The upcoming release of the AWS Load Balancer controller will provide NLB instance mode support, removing the last blocker for some specific use cases.
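As a small example of the annotation, the sketch below builds a Service manifest as a Python dict with the target node labels expressed as a comma-separated list of `key=value` pairs, which is the format the annotation expects. The function name, service name, node label, and ports are hypothetical.

```python
ANNOTATION = "service.beta.kubernetes.io/aws-load-balancer-target-node-labels"


def service_with_target_node_labels(name, selector, node_labels):
    """Build a LoadBalancer Service that only targets nodes with the given labels."""
    label_list = ",".join(f"{key}={value}" for key, value in sorted(node_labels.items()))
    return {
        "apiVersion": "v1",
        "kind": "Service",
        "metadata": {"name": name, "annotations": {ANNOTATION: label_list}},
        "spec": {
            "type": "LoadBalancer",
            "selector": selector,
            "ports": [{"port": 80, "targetPort": 8080}],
        },
    }


service = service_with_target_node_labels("web", {"app": "web"}, {"role": "ingress"})
print(service["metadata"]["annotations"][ANNOTATION])  # role=ingress
```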

As mentioned above, you can refer to the complete Kubernetes 1.19 changelog for all of the changes in this release. Keep in mind the feature stages described above when determining which features are supported in EKS.

Amazon EKS changes related to Kubernetes version 1.19

When there is a new version of Kubernetes, there may be some changes to EKS configuration requirements. There are a few changes related to Kubernetes 1.19, which I’ll walk through here.

Amazon EKS optimized Amazon Linux 2 AMIs now ship with Linux kernel version 5.4 for Kubernetes version 1.19. This is a modernization, updating from the previous 4.14 kernel. Among other things, this enables eBPF use on your Kubernetes nodes when using the EKS optimized AMI in managed node groups.

Starting with Kubernetes 1.19, EKS no longer adds the kubernetes.io/cluster/<cluster-name> tag to subnets during cluster creation. This subnet tag is optional and only required if you want to influence where the Kubernetes service controller or AWS Load Balancer Controller places Elastic Load Balancers. See updates to Cluster VPC considerations in the Amazon EKS User Guide for more details around the requirements of subnets required for cluster creation.

It is no longer required to provide a security context for non-root containers that need to access the web identity token file for use with IAM roles for service accounts. See the Kubernetes enhancement and Amazon EKS documentation for more details.

The Pod Identity Webhook has been updated to address the issue of missing startup probes. The webhook also now supports an annotation to control token expiration.

CoreDNS version 1.8.0 is the recommended version for Amazon EKS 1.19 clusters. This version is installed by default in new EKS 1.19 clusters. See Installing or upgrading CoreDNS in the Amazon EKS User Guide for more details.

As always, refer to the Amazon EKS User Guide for a complete reference to cluster configuration and requirements.

Call to action for Amazon EKS customers running Kubernetes 1.15

Kubernetes version 1.15 will no longer be supported in EKS as of May 3rd, 2021. You will no longer be able to create new clusters of this version, and eventually all clusters will be updated to version 1.16. If you are running 1.15 currently, it is very important that you start your upgrade planning, and upgrade to at least 1.16 as soon as practical.

It is always best to deploy clusters running a supported version of Kubernetes. Security, stability, and feature patches will no longer be provided for 1.15 clusters. In addition, the transition from 1.15 to 1.16 does introduce some API deprecation, and if your clusters are eventually upgraded without proper testing, your applications and services may no longer work.
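As a starting point for that testing, here is a minimal sketch that flags manifests still using API versions that Kubernetes 1.16 stopped serving. The table reflects the upstream 1.16 removals for workload and policy resources as I understand them; treat it as a checklist aid and confirm against the upstream documentation. It works on manifests already loaded as Python dicts, and the function name is hypothetical.

```python
# (apiVersion, kind) pairs no longer served in Kubernetes 1.16, with replacements.
REMOVED_IN_1_16 = {
    ("extensions/v1beta1", "Deployment"): "apps/v1",
    ("extensions/v1beta1", "DaemonSet"): "apps/v1",
    ("extensions/v1beta1", "ReplicaSet"): "apps/v1",
    ("extensions/v1beta1", "NetworkPolicy"): "networking.k8s.io/v1",
    ("extensions/v1beta1", "PodSecurityPolicy"): "policy/v1beta1",
    ("apps/v1beta1", "Deployment"): "apps/v1",
    ("apps/v1beta1", "StatefulSet"): "apps/v1",
    ("apps/v1beta2", "Deployment"): "apps/v1",
    ("apps/v1beta2", "DaemonSet"): "apps/v1",
    ("apps/v1beta2", "ReplicaSet"): "apps/v1",
    ("apps/v1beta2", "StatefulSet"): "apps/v1",
}


def find_removed_apis(manifests):
    """Return (name, kind, old apiVersion, replacement) for each affected manifest."""
    findings = []
    for manifest in manifests:
        key = (manifest.get("apiVersion"), manifest.get("kind"))
        if key in REMOVED_IN_1_16:
            name = manifest.get("metadata", {}).get("name", "<unnamed>")
            findings.append((name, key[1], key[0], REMOVED_IN_1_16[key]))
    return findings


manifests = [
    {"apiVersion": "extensions/v1beta1", "kind": "Deployment", "metadata": {"name": "web"}},
    {"apiVersion": "apps/v1", "kind": "StatefulSet", "metadata": {"name": "db"}},
]
print(find_removed_apis(manifests))
# [('web', 'Deployment', 'extensions/v1beta1', 'apps/v1')]
```

Changing the apiVersion is not always sufficient on its own; for example, `apps/v1` workloads require an explicit `spec.selector`, so re-apply the updated manifests in your test cluster to confirm they are accepted.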

For more information on the specific API deprecations, see the upstream documentation. For additional guidance on using some open source tools to get you started, see this recent blog.

Conclusion and Moving Forward

Kubernetes is a complex set of software, and as with any complex system, using it requires regular maintenance and careful planning. Amazon EKS will help with many aspects of this process, from highly available control plane updates, to automated node group updates that keep your applications up and running.

Hopefully this post has set some useful context and explained some of the important aspects you need to consider in the shared responsibility model. Moving forward, as mentioned at the outset of this post, we’ll be publishing more narrative posts to accompany our Kubernetes version releases on EKS. Let us know if you’d like more details, or if any particular aspects of the cluster upgrade process could use special attention.

We are working on some additional features which will make some aspects of upgrades even more automated and seamless, and we’re always excited to hear from you how we can improve things. Reach out any time, and check in on the AWS Containers Roadmap on GitHub to see our current plans, open issues to request new features, or upvote your favorite suggestions.