AWS Database Blog

Amazon DynamoDB auto scaling: Performance and cost optimization at any scale

September 2022: This post was reviewed for accuracy.

Scaling up database capacity can be a tedious and risky business. Even veteran developers and database administrators who understand the nuanced behavior of their database and application perform this work cautiously. Despite the current era of sharded NoSQL clusters, increasing capacity can take hours, days, or weeks. As anyone who has undertaken such a task can attest, the impact to performance while scaling up can be unpredictable or include downtime. Conversely, it would require rare circumstances for anyone to decide it’s worth the effort to scale down capacity because that comes with its own set of complex considerations. Why are database capacity planning and operations so fraught? If you underprovision your database, it can have a catastrophic impact on your application, and if you overprovision your database, you can waste tens or hundreds of thousands of dollars. No one wants to experience that.

Amazon DynamoDB is a fully managed database that developers and database administrators have relied on for more than 10 years. It delivers low-latency performance at any scale and greatly simplifies database capacity management. With minimal effort, you can have a fully provisioned table that is integrated easily with a wide variety of SDKs and AWS services. After you provision a table, you can change its capacity on the fly. If you discover that your application has become wildly popular overnight, you can easily increase capacity. On the other hand, if you optimize the application logic and reduce database throughput substantially, you can immediately realize cost savings by lowering provisioned capacity. DynamoDB adaptive capacity smoothly handles increasing and decreasing capacity behind the scenes.

In June 2017, DynamoDB released auto scaling to make it easier for you to manage capacity efficiently, and auto scaling continues to help DynamoDB users lower the cost of workloads that have a predictable traffic pattern. Before auto scaling, you would statically provision capacity in order to meet a table’s peak load plus a small buffer. In most cases, however, it isn’t cost-effective to statically provision a table above peak capacity. In this blog post, we show how auto scaling responds to changes in traffic, explain which workloads are best suited for auto scaling, demonstrate how to optimize a workload and calculate the cost benefit, and show how DynamoDB can perform at one million requests per second.

Background: How DynamoDB auto scaling works

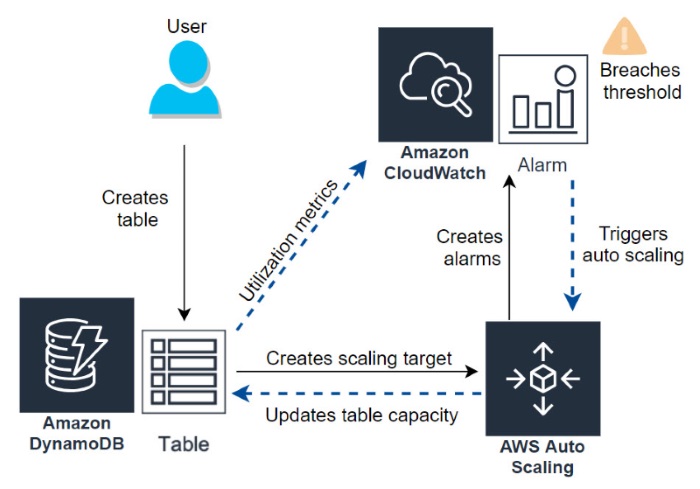

When you create a DynamoDB table, auto scaling is the default capacity setting, but you can also enable auto scaling on any table that does not have it active. Behind the scenes, as illustrated in the following diagram, DynamoDB auto scaling uses a scaling policy in Application Auto Scaling. To configure auto scaling in DynamoDB, you set the minimum and maximum levels of read and write capacity in addition to the target utilization percentage. Auto scaling uses Amazon CloudWatch to monitor a table’s read and write capacity metrics. To do so, it creates CloudWatch alarms that track consumed capacity.
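As a rough sketch of the settings described above, the following Python builds the two request payloads that Application Auto Scaling takes for a table's read capacity: one that registers the minimum and maximum, and one that sets the target utilization. The table name `my-table`, the capacity bounds, the policy name, and the helper `build_read_scaling_requests` are hypothetical. The dictionaries are only constructed here, not sent to AWS.

```python
def build_read_scaling_requests(table_name, min_rcu, max_rcu, target_pct):
    """Build kwargs for a scalable target and a target-tracking policy (reads only)."""
    common = {
        "ServiceNamespace": "dynamodb",
        "ResourceId": f"table/{table_name}",
        "ScalableDimension": "dynamodb:table:ReadCapacityUnits",
    }
    scalable_target = {**common, "MinCapacity": min_rcu, "MaxCapacity": max_rcu}
    scaling_policy = {
        **common,
        "PolicyName": f"{table_name}-read-target-tracking",  # hypothetical name
        "PolicyType": "TargetTrackingScaling",
        "TargetTrackingScalingPolicyConfiguration": {
            "TargetValue": float(target_pct),
            "PredefinedMetricSpecification": {
                "PredefinedMetricType": "DynamoDBReadCapacityUtilization"
            },
        },
    }
    return scalable_target, scaling_policy


# Hypothetical table and bounds, with the 80 percent target used in this post.
target, policy = build_read_scaling_requests("my-table", 5, 1000, 80)

# With boto3 installed and credentials configured, these could be sent as:
#   client = boto3.client("application-autoscaling")
#   client.register_scalable_target(**target)
#   client.put_scaling_policy(**policy)
```

Write capacity would need a second pair of requests using the `dynamodb:table:WriteCapacityUnits` dimension and the `DynamoDBWriteCapacityUtilization` metric type.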

The upper threshold alarm is triggered when consumed reads or writes breach the target utilization percent for two consecutive minutes. The lower threshold alarm is triggered after traffic falls below the target utilization minus 20 percent for 15 consecutive minutes. When an alarm is triggered, CloudWatch initiates auto scaling activity, which checks the consumed capacity and updates the provisioned capacity of the table. For example, if you set the read capacity units (RCUs) at 100 and the target utilization to 80 percent, auto scaling increases the provisioned capacity when utilization exceeds 80 RCUs for two consecutive minutes, or decreases capacity when consumption falls below 60 RCUs (80 percent minus 20 percent) for fifteen minutes.
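The threshold rules above can be modeled in a few lines. This is a simplified sketch of the decision logic as the post describes it, not the actual CloudWatch alarm implementation; the function name `scaling_decision` and its parameters are hypothetical.

```python
def scaling_decision(consumed_per_minute, provisioned, target_pct=80,
                     up_minutes=2, down_minutes=15, down_gap_pct=20):
    """Return 'scale_up', 'scale_down', or 'hold' from per-minute consumed units."""
    upper = provisioned * target_pct / 100                    # e.g. 80 RCUs
    lower = provisioned * (target_pct - down_gap_pct) / 100   # e.g. 60 RCUs
    recent_up = consumed_per_minute[-up_minutes:]
    recent_down = consumed_per_minute[-down_minutes:]
    # Upper alarm: every one of the last `up_minutes` samples is above target.
    if len(recent_up) == up_minutes and all(c > upper for c in recent_up):
        return "scale_up"
    # Lower alarm: every one of the last `down_minutes` samples is below the lower bound.
    if len(recent_down) == down_minutes and all(c < lower for c in recent_down):
        return "scale_down"
    return "hold"


print(scaling_decision([70, 85, 90], provisioned=100))  # scale_up
print(scaling_decision([50] * 15, provisioned=100))     # scale_down
print(scaling_decision([85, 70], provisioned=100))      # hold
```

The asymmetry between the two windows is what makes scale-up react within minutes while scale-down waits for a sustained drop.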

The following chart illustrates how a table without auto scaling is statically provisioned. The red line is the provisioned capacity and the blue area is the consumed capacity. The consumed peak is almost 80 percent of the provisioned capacity, which represents a standard overhead buffer. The area between the red line and the blue area is the unused capacity.

DynamoDB auto scaling reduces the unused capacity in the area between the provisioned and consumed capacity. The following chart is from the workload example we use for this post, which has auto scaling enabled. You can see the improved ratio of consumed to provisioned capacity, which reduces the wasted overhead while providing sufficient operating capacity.

Through the remainder of this post, we show how DynamoDB auto scaling enables you to turn over most, if not all, capacity management to automation and lower costs.

Setting up the test

To demonstrate auto scaling for this blog post, we generated a 24-hour cyclical workload. The pattern of the workload represents many real-world applications whose traffic grows to a peak and then declines over the period of a day. Examples of this pattern include metrics for an Internet of Things (IoT) company, shopping cart data for an online retailer, and metadata used by a social media app.

We deployed a custom Java load generator to AWS Elastic Beanstalk and then created a CloudWatch dashboard. The dashboard, which is shown in the following screenshot, monitors key performance metrics: request rate, average request latency, provisioned capacity, and consumed capacity for reads and writes.

As the dashboard shows, the generator created a cyclical load that grew steadily, peaked at noon, and then decreased back to zero. The test generated reads and writes using randomly created items with a high-cardinality key distribution. For variation, there were 10 item sizes, with an average size of 4 KB. To achieve a peak load of 1,000,000 requests per second, we used the average item size, request rate, 20 percent overhead, and read-to-write ratio to estimate that the table would require 2,000,000 WCUs and 800,000 RCUs (see the capacity calculation documentation). Following best practices, we also made sure that useTcpKeepAlive was enabled in the load generator application (see the ClientConfiguration javadocs). This setting can help lower overhead and improve performance because it signals the client to reuse TCP connections rather than reestablish a connection for each request.
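To illustrate the kind of estimate described above, here is a minimal sketch that uses the standard DynamoDB capacity unit rules: one WCU covers one write per second of up to 1 KB, and one RCU covers one strongly consistent read per second of up to 4 KB (or two eventually consistent reads). The traffic numbers below are hypothetical and are not the test's actual read-to-write mix, which the post does not state. Modeling the 20 percent overhead as an 80 percent target utilization is also an assumption.

```python
import math


def estimate_capacity(writes_per_sec, reads_per_sec, item_kb,
                      target_pct=80, strongly_consistent=True):
    """Estimate provisioned (WCUs, RCUs) for a peak request rate and item size."""
    wcu_per_write = math.ceil(item_kb / 1)   # 1 WCU per 1 KB written
    rcu_per_read = math.ceil(item_kb / 4)    # 1 RCU per 4 KB read (strongly consistent)
    if not strongly_consistent:
        rcu_per_read /= 2                    # eventually consistent reads cost half
    # Size the table so that peak consumption sits at the target utilization.
    wcus = math.ceil(writes_per_sec * wcu_per_write * 100 / target_pct)
    rcus = math.ceil(reads_per_sec * rcu_per_read * 100 / target_pct)
    return wcus, rcus


# Hypothetical peak: 1,000 writes/s and 2,000 strongly consistent reads/s of 4 KB items.
print(estimate_capacity(1000, 2000, item_kb=4))  # (5000, 2500)
```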

The test results

The test ultimately reached a peak of 1,100,000 requests per second. DynamoDB auto scaling actively matched the provisioned capacity to the generated traffic, which meant that we had a workload that was provisioned efficiently. The test’s average service-side request latency was 2.89 milliseconds for reads and 4.33 milliseconds for writes. Amazingly, the average request latency went down as load increased on the table.

The following screenshots are from the Metrics tab of the DynamoDB console. As you can see in the right screenshot, the read request latency dropped to almost 2.5 milliseconds at peak load.

Similarly, the write latency (shown in the following screenshot on the right) dropped to a little more than 4 milliseconds.

These results are surprising, to say the least. Traditionally, a database under load becomes increasingly slow relative to traffic, so seeing performance improve with load is remarkable. Some investigative work revealed that the lower latency is because of internal optimizations in DynamoDB, which were enhanced because all of the requests accessed the same table. The useTcpKeepAlive setting also resulted in more requests per connection, which helped translate to lower latency.

How well did auto scaling manage capacity? Auto scaling maintained a healthy provisioned-to-consumed ratio throughout the day. There was a brief period in the first hour when the load generator increased traffic faster than 80 percent per two-minute period. However, because of burst capacity, the impact was negligible. The metrics show that there were 15 throttled read events per 3.35 million read requests, which is four 10-thousandths of a percent. After the initial period, there was no throttling.

As described earlier in this post, CloudWatch monitors table activity and responds when a threshold is breached for a set period. The following snapshot from CloudWatch shows the activity for the target utilization alarms. As you can see, there are upper and lower thresholds that use two-minute and fifteen-minute periods to track consumed and provisioned capacity of reads and writes.

The utilization alarms then triggered scaling events, which you can see as auto scaling activities on the DynamoDB Capacity tab (shown in the following screenshot). Auto scaling independently changed the provisioned read and write capacity as consumed capacity crossed the thresholds.

The test ultimately exceeded one million requests per second. The following graph shows both reads (the blue area) and writes (the orange area): 66.5 million/60 second period = 1.11 million requests per second.

This test showed that auto scaling increased provisioned capacity every two minutes when traffic reached the 80 percent target utilization. You may have noticed in an earlier screenshot of this post that the scale-down period was slower. This is because auto scaling lowers capacity after consumption is 20 percent lower than the target utilization for 15 minutes. Decreasing capacity more slowly is by design, and it conforms to the limit on dial-downs per day.

The constant updating of DynamoDB auto scaling resulted in an efficiently provisioned table and, as we show in the next section, 30.8 percent savings. The test clearly demonstrated the consistent performance and low latency of DynamoDB at one million requests per second.

Auto scaling cost optimization: provisioned, on-demand, and reserved capacities

In this section, we compare the table costs of statically provisioned, auto scaling, and on-demand options. We also calculate how reserved capacity can optimize the cost model.

As you may recall, a traditional statically provisioned table sets capacity 20 percent above the expected peak load; for our test that would be 2,000,000 WCUs and 800,000 RCUs. Write and read capacity units are priced per hour so the monthly cost is calculated by multiplying the provisioned WCUs and RCUs by the cost per unit-hour, which is $.00013/RCU and $.00065/WCU, and then by the number of hours in an average month (730). All unit costs can be found on the DynamoDB Pricing page. As such, a statically provisioned table for our test would cost $1,024,920 per month:

$1,024,920 per month = 730 hours × ((2,000,000 × $.00065) + (800,000 × $.00013))
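The same calculation can be written as a small helper, which makes it easy to try other capacity levels. The unit prices are the ones quoted in this post; the function name `monthly_provisioned_cost` is hypothetical.

```python
HOURS_PER_MONTH = 730
WCU_HOURLY = 0.00065  # dollars per WCU-hour, as quoted in this post
RCU_HOURLY = 0.00013  # dollars per RCU-hour, as quoted in this post


def monthly_provisioned_cost(wcus, rcus):
    """Monthly cost in dollars of a statically provisioned table."""
    return HOURS_PER_MONTH * (wcus * WCU_HOURLY + rcus * RCU_HOURLY)


print(round(monthly_provisioned_cost(2_000_000, 800_000)))  # 1024920
```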

To find the actual cost of the auto scaling test that we ran, we use the AWS Usage Report, which is a component of billing that helps identify cost by service and date. Within the usage report, there are three primary cost units for DynamoDB, which are: WriteCapacityUnit-Hrs, ReadCapacityUnit-Hrs, and TimeStorage-ByteHrs. We focus on the first two, and can ignore the storage cost, because it’s minimal and is the same regardless of other settings. In the following screenshot, you can see the read and write costs of the test, which totaled $23,318.06 per day.

At 30.4 days per month (365 days/12 months), auto scaling would average $708,867 per month, which is $316,053 (or 30.8 percent) less than the statically provisioned table we calculated above ($1,024,920):

$316,053 monthly savings = $1,024,920 statically provisioned – $708,867 auto scaling
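As a quick check of the savings figure, the sketch below takes the two monthly costs stated above and returns both the dollar savings and the percentage. The helper name `savings` is hypothetical.

```python
def savings(baseline, cost):
    """Return (dollars saved, percent saved rounded to one decimal place)."""
    saved = baseline - cost
    return saved, round(100 * saved / baseline, 1)


saved, pct = savings(1_024_920, 708_867)
print(saved, pct)  # 316053 30.8
```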

This shows how auto scaling lowered the cost of the table. In a production environment, auto scaling could reduce operations time associated with planning and managing a provisioned table. You can include those operational savings in the cost estimates of any of your workloads on DynamoDB.

How do the auto scaling savings compare to what we’d see with on-demand capacity mode? With on-demand, DynamoDB instantly allocates capacity as it is needed. There is no concept of provisioned capacity, and there is no delay waiting for CloudWatch thresholds or the subsequent table updates. On-demand is ideal for bursty, new, or unpredictable workloads whose traffic can spike in seconds or minutes, and when underprovisioned capacity would impact the user experience. On-demand is a perfect solution if your team is moving to a NoOps or serverless environment. As the cost benefit of a serverless workflow is outside the scope of this blog post, and our test represents gradually changing traffic, on-demand is not well suited for this comparison. However, let’s look at the costs anyway to understand how to calculate them.

On-demand capacity mode is priced at $1.25 per million write request units and $.25 per million read request units. To identify the total WCUs and RCUs used by our test in a single day, we return to our CloudWatch dashboard. If you recall, we tracked requests per minute. This was done by using the SampleCount metric of SuccessfulRequestLatency, as described in the metrics and dimensions documentation. By changing the period of this metric to a full day, we can see the total number of read and write requests, as shown in the following screenshot. With the request counts, we can use the average object size of the test (4 KB) to approximate how many WCUs and RCUs would be used in on-demand mode. Equations to calculate consumed capacity are described at length in the documentation.
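For readers who prefer to pull the daily request count programmatically rather than from the dashboard, the following sketch builds a CloudWatch query for the SampleCount statistic of SuccessfulRequestLatency over a one-day period. The table name, the `PutItem` operation, and the date are hypothetical, since the post does not say which operations the load generator used. The query is only constructed here, not sent.

```python
from datetime import datetime, timedelta, timezone


def daily_request_count_query(table_name, operation, day_start):
    """Build kwargs for a one-day SampleCount query on SuccessfulRequestLatency."""
    return {
        "Namespace": "AWS/DynamoDB",
        "MetricName": "SuccessfulRequestLatency",
        "Dimensions": [
            {"Name": "TableName", "Value": table_name},
            {"Name": "Operation", "Value": operation},
        ],
        "StartTime": day_start,
        "EndTime": day_start + timedelta(days=1),
        "Period": 86400,               # one data point covering the full day
        "Statistics": ["SampleCount"],  # number of successful requests
    }


# Hypothetical table, operation, and day.
query = daily_request_count_query(
    "my-table", "PutItem", datetime(2022, 9, 1, tzinfo=timezone.utc)
)

# With boto3 installed and credentials configured, this could be sent as:
#   boto3.client("cloudwatch").get_metric_statistics(**query)
```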

By combining the RCU and WCU costs, we can approximate a monthly on-demand cost of $2,471,520:

$2,471,520/month = 30.4 days × ($5,800 + $75,500)
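The on-demand arithmetic can be sketched as follows, using the per-million prices and the daily read and write costs quoted in this post. The helper names are hypothetical, and the 4,000,000-unit example is only an illustration of the unit pricing, not a figure from the test.

```python
WRITE_PRICE_PER_MILLION = 1.25  # dollars per million write request units
READ_PRICE_PER_MILLION = 0.25   # dollars per million read request units
DAYS_PER_MONTH = 30.4


def request_unit_cost(units, price_per_million):
    """Cost in dollars of a number of on-demand request units."""
    return units / 1_000_000 * price_per_million


def monthly_on_demand_cost(daily_read_cost, daily_write_cost):
    """Scale combined daily read and write costs to an average month."""
    return DAYS_PER_MONTH * (daily_read_cost + daily_write_cost)


print(request_unit_cost(4_000_000, WRITE_PRICE_PER_MILLION))  # 5.0
print(round(monthly_on_demand_cost(5_800, 75_500)))           # 2471520
```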

Compared to $708,867 per month on auto scaling, the higher cost of on-demand mode is not surprising because our test represents a predictable workload and doesn’t account for the real NoOps cost benefit. The takeaway is that this workload is a perfect candidate for auto scaling and cost optimization.

Auto scaling represents significant cost savings, but can we do more? How does reserved capacity factor into cost optimization? Reserved capacity for DynamoDB is consistent with the Amazon EC2 Reserved Instance model. You purchase reserved capacity for a one-year or three-year term and receive a significant discount. You purchase reserved capacity in 100-WCU or 100-RCU sets. For comparison’s sake, let’s use the one-year reservation model. A reserved capacity of 1 WCU works out to $.000299 per hour.

$.000299 per hour = (($150 reservation ÷ 8,760 hours/year) + $.0128 reserved WCU/hour) ÷100 units

That is 46 percent of the standard price of $.00065 per WCU-hour, a significant savings. The savings are similar for reserved RCUs: $.000059 per reserved RCU-hour versus $.00013 per standard RCU-hour.

$.000059 per hour = (($30 reservation ÷ 8,760 hours/year) + $.0025 reserved RCU/hour) ÷ 100 units
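Both reserved rates follow the same formula, so they can be computed with one helper. This sketch uses the one-year reservation figures quoted above; the function name `reserved_unit_hourly` is hypothetical.

```python
HOURS_PER_YEAR = 8760


def reserved_unit_hourly(upfront_per_100, hourly_per_100):
    """Effective hourly cost of one reserved capacity unit (sold in sets of 100)."""
    return (upfront_per_100 / HOURS_PER_YEAR + hourly_per_100) / 100


wcu_reserved = reserved_unit_hourly(150, 0.0128)
rcu_reserved = reserved_unit_hourly(30, 0.0025)

print(f"{wcu_reserved:.6f}")              # 0.000299
print(f"{rcu_reserved:.6f}")              # 0.000059
print(round(wcu_reserved / 0.00065, 2))   # 0.46 of the standard WCU price
print(round(rcu_reserved / 0.00013, 2))   # 0.46 of the standard RCU price
```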

Can auto scaling and reserved capacity work together? Yes! You can approximate a blend of reserved capacity and auto scaling by using the ratio of reserved to provisioned cost. For example, we know that reserved capacity costs 46 percent of standard pricing, so any portion of a capacity that is active more than 46 percent of a day should save money as reserved capacity. We can calculate the ratio transition point by using noon as our anchor. As such, 46 percent of 12 hours works out to 5.5 hours before noon, or 6:30 AM.

331 minutes before noon = 46% × (12 hours × 60 minutes)
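The transition point can also be computed directly. This sketch assumes, as the post does, a symmetric 24-hour cycle that peaks at noon; the date is arbitrary and the function name `reserved_transition` is hypothetical. The exact result is 6:29 AM, which the post rounds to 6:30 AM.

```python
from datetime import datetime, timedelta


def reserved_transition(peak, half_cycle_hours, reserved_ratio):
    """Return (minutes before peak, clock time) where reserved capacity breaks even."""
    minutes = round(reserved_ratio * half_cycle_hours * 60)
    return minutes, peak - timedelta(minutes=minutes)


# Arbitrary date; only the time of day matters.
minutes, start = reserved_transition(datetime(2022, 9, 1, 12, 0), 12, 0.46)
print(minutes, start.strftime("%H:%M"))  # 331 06:29
```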

DynamoDB is billed by the hour, and hourly usage metrics can be downloaded from the billing reports. We round up from 6:30 AM to 7:00 AM for the transition between reserved capacity and auto scaling. The CloudWatch dashboard shows that at 7:00 AM there were 1.74 million WCUs and 692,000 RCUs. To estimate the blended cost, we use the RCU and WCU reserved rates and add the auto scaling per hour usage from 7:00 AM to 7:00 PM (which is when the load drops below the reservation amount). The following screenshots show the blended ratio between reserved and auto scaling WCUs.

The hourly usage was downloaded from the billing console. The following table shows the cost per hour for the auto scaling portion of the blended ratio. The reserved capacity is subtracted from the RCUs and WCUs because that is added separately.

| StartTime | EndTime | RCU | RCU Minus Reserved | RCU Hourly | WCU | WCU Minus Reserved | WCU Hourly |
| --- | --- | --- | --- | --- | --- | --- | --- |
| 7:00:00 AM | 8:00:00 AM | 663642 | 0 | $0.00 | 1655866 | 0 | $0.00 |
| 8:00:00 AM | 9:00:00 AM | 752119 | 60119 | $7.82 | 1899080 | 159080 | $103.40 |
| 9:00:00 AM | 10:00:00 AM | 752119 | 60119 | $7.82 | 1899080 | 159080 | $103.40 |
| 10:00:00 AM | 11:00:00 AM | 786775 | 94775 | $12.32 | 1975145 | 235145 | $152.84 |
| 11:00:00 AM | 12:00:00 PM | 800000 | 108000 | $14.04 | 2000000 | 260000 | $169.00 |
| 12:00:00 PM | 1:00:00 PM | 800000 | 108000 | $14.04 | 2000000 | 260000 | $169.00 |
| 1:00:00 PM | 2:00:00 PM | 800000 | 108000 | $14.04 | 2000000 | 260000 | $169.00 |
| 2:00:00 PM | 3:00:00 PM | 800000 | 108000 | $14.04 | 2000000 | 260000 | $169.00 |
| 3:00:00 PM | 4:00:00 PM | 800000 | 108000 | $14.04 | 2000000 | 260000 | $169.00 |
| 4:00:00 PM | 5:00:00 PM | 800000 | 108000 | $14.04 | 2000000 | 260000 | $169.00 |
| 5:00:00 PM | 6:00:00 PM | 800000 | 108000 | $14.04 | 2000000 | 260000 | $169.00 |
| 6:00:00 PM | 7:00:00 PM | 599842 | 0 | $0.00 | 1485558 | 0 | $0.00 |

Auto scaling daily total (RCU and WCU hourly costs combined): $1,668.88
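The table can be reproduced from the hourly provisioned values by subtracting the reserved amounts (692,000 RCUs and 1.74 million WCUs) and pricing only the remainder at the standard rates. The sketch below does that; the names are hypothetical.

```python
RESERVED_RCU, RESERVED_WCU = 692_000, 1_740_000
RCU_HOURLY, WCU_HOURLY = 0.00013, 0.00065  # standard prices quoted in this post

# (provisioned RCUs, provisioned WCUs) for each hour from 7:00 AM to 7:00 PM
HOURLY = [
    (663642, 1655866), (752119, 1899080), (752119, 1899080),
    (786775, 1975145), (800000, 2000000), (800000, 2000000),
    (800000, 2000000), (800000, 2000000), (800000, 2000000),
    (800000, 2000000), (800000, 2000000), (599842, 1485558),
]


def auto_scaling_daily_cost(hours):
    """Price only the capacity above the reserved amounts, hour by hour."""
    total = 0.0
    for rcu, wcu in hours:
        total += max(0, rcu - RESERVED_RCU) * RCU_HOURLY
        total += max(0, wcu - RESERVED_WCU) * WCU_HOURLY
    return total


print(round(auto_scaling_daily_cost(HOURLY), 2))  # 1668.88
```

Hours in which provisioned capacity is below the reservation contribute nothing, which is why the first and last rows show $0.00.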

To then find the reserved monthly cost, we multiply the total units by the reserved unit cost ($.000299 per WCU-hour and $.000059 per RCU-hour) and multiply by the hours in a month (730).

$379,789 per month = 1.74 million WCUs × $.000299 × 730 hours

$29,804 per month = 692,000 RCUs × $.000059 × 730 hours

Combined, reserved capacity totals $409,593 per month. By adding this to the portion of auto scaling, which is $50,734 ($1,668.88 × 30.4 days), we arrive at a blended monthly rate of $460,327. That is $248,540 less than the auto scaling estimate, which was $708,867.
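Putting the pieces together, the following sketch recomputes the blended monthly figure from the reserved rates, the reserved amounts, and the auto scaling daily total. The function name `blended_monthly` is hypothetical. Because the post rounds its intermediate figures, the unrounded result here lands within about a dollar of $460,327.

```python
def blended_monthly(reserved_wcus, reserved_rcus, auto_daily,
                    wcu_rate=0.000299, rcu_rate=0.000059,
                    hours=730, days=30.4):
    """Return (reserved monthly, auto scaling monthly, blended monthly) in dollars."""
    reserved = (reserved_wcus * wcu_rate + reserved_rcus * rcu_rate) * hours
    auto = auto_daily * days
    return reserved, auto, reserved + auto


reserved, auto, total = blended_monthly(1_740_000, 692_000, 1668.88)
print(round(reserved), round(auto), round(total))
```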

You also can use the three-year reserved capacity option, which is a discount of more than 75 percent. At that deep discount, it is cost-effective to statically provision all reserved capacity.

The following table summarizes our optimization findings. It compares each scenario and highlights the associated savings in relation to a statically provisioned table. Your workload patterns will be different, and you will have to calculate the amount of savings based on your own patterns. By using the optimizations we’ve discussed, you can significantly lower your costs.

| | Scenario | Capacity / percent savings | Cost Per Month |
| --- | --- | --- | --- |
| Cost | Statically provisioned | WCUs: 2,000,000 RCUs: 800,000 | $1,024,920 |
| Cost | Auto scaling | Variable capacity | $708,867 |
| Cost | Blended auto scaling and one-year reserved capacity | Variable capacity | $460,327 |
| Cost | Three-year reserved capacity | WCUs: 2,000,000 RCUs: 800,000 | $234,141 |
| Savings | Auto scaling | 30.8 percent savings | $316,053 |
| Savings | Blended auto scaling and one-year reserved capacity | 55.1 percent savings | $564,593 |
| Savings | Three-year reserved capacity | 77.1 percent savings | $790,779 |

Summary: Auto scaling and cost optimization takeaways

Let’s review the results of the DynamoDB auto scaling test in this post and the lessons we learned:

- How does auto scaling work? In this test, auto scaling used CloudWatch alarms to adapt quickly to traffic. Within minutes, auto scaling updated the table’s provisioned capacity to match the load. Auto scaling scales up more quickly than down, and you can tune it by setting the target utilization. In our test, auto scaling provided sufficient capacity while reducing overhead.

- How did auto scaling handle a nontrivial workload? Extremely well. We ran a load test that grew from zero to 1.1 million requests per second in 12 hours and auto scaling updated the table every time the target utilization threshold was breached. We checked auto scaling activity, which confirmed that it was updated accurately and quickly. Auto scaling did not degrade performance. In fact, as traffic grew to 1,100,000 requests per second, the average latency of each request decreased.

- Can auto scaling help reduce costs? In this test, auto scaling reduced costs by 30.8 percent. For the test, we used an 80 percent target utilization, but you should tune your target utilization for each workload to increase savings and ensure that capacity is appropriately allocated. We also showed in this post how to optimize costs further by using a blend of one-year reserved capacity and auto scaling, which in this test reduced costs by 55.1 percent.

As you can see, there are compelling reasons to use DynamoDB auto scaling with actively changing traffic. Auto scaling responds quickly and simplifies capacity management, which lowers costs by scaling your table’s provisioned capacity and reducing operational overhead.

About the authors

Daniel Yoder is an LA-based senior NoSQL specialist solutions architect at AWS.

Sean Shriver is a Dallas-based senior NoSQL specialist solutions architect focused on DynamoDB.
