Artificial Intelligence

Iterate faster with Amazon Bedrock AgentCore Runtime direct code deployment

Amazon Bedrock AgentCore is an agentic platform for building, deploying, and operating effective agents securely at scale. Amazon Bedrock AgentCore Runtime is a fully managed service within Bedrock AgentCore that provides low-latency serverless environments for deploying agents and tools. It provides session isolation, supports multiple agent frameworks including popular open-source frameworks, and handles multimodal […]

Architect a mature generative AI foundation on AWS

In this post, we give an overview of a well-established generative AI foundation, dive into its components, and present an end-to-end perspective. We look at different operating models and explore how such a foundation can operate within those boundaries. Lastly, we present a maturity model that helps enterprises assess their evolution path.

Effectively manage foundation models for generative AI applications with Amazon SageMaker Model Registry

In this post, we explore the new features of Model Registry that streamline foundation model (FM) management: you can now register unzipped model artifacts and pass an End User License Agreement (EULA) acceptance flag without requiring user intervention.

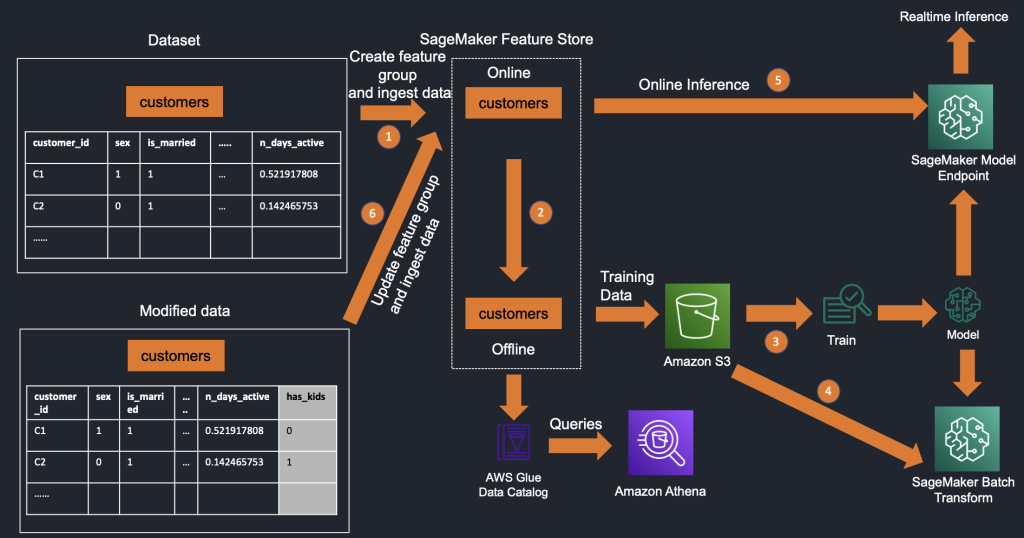

Simplify iterative machine learning model development by adding features to existing feature groups in Amazon SageMaker Feature Store

Feature engineering is one of the most challenging aspects of the machine learning (ML) lifecycle and the phase that consumes the most time: data scientists and ML engineers spend 60–70% of their time on it. At AWS re:Invent 2020, AWS introduced Amazon SageMaker Feature Store, a purpose-built, fully managed, centralized […]