AWS Machine Learning Blog

Optimizing costs for machine learning with Amazon SageMaker

Applications based on machine learning (ML) can provide tremendous business value. Using ML, we can solve some of the most complex engineering problems that were previously infeasible. One of the advantages of running ML on the AWS Cloud is that you can continually optimize your workloads and reduce your costs. In this post, we discuss how to apply such optimization to ML workloads. We consider available options such as elasticity, the different pricing models in the cloud, automation, economies of scale, and more.

Developing, training, maintaining, and performance tuning ML models is an iterative process that requires continuous improvement. Finding the best model by working through combinations of model parameters and data dependencies is just one leg of the journey. There is more to optimizing the cost of ML than algorithm performance and model tuning: integrating the developed models into applications and realizing their benefits also takes effort. Throughout this process, you can keep costs down in numerous ways. Amazon SageMaker has made most of this journey smooth so developers and data scientists can spend most of their time focusing on what matters the most: delivering business value.

Amazon SageMaker notebook instances

An Amazon SageMaker notebook instance is an ML compute instance running the Jupyter Notebook app. This notebook instance comes with sample notebooks, several optimized algorithms, and complete code walkthroughs. Amazon SageMaker manages the creation of this instance and related resources. Consider using Amazon SageMaker Studio notebooks for collaborative workloads and when you don’t need to set up compute instances and file storage beforehand.

You can follow these best practices to help reduce the cost of notebook instances.

GPU or CPU?

CPUs are best at handling single, more complex calculations sequentially, whereas GPUs are better at handling multiple but simple calculations in parallel. For many use cases, a standard current-generation instance type from a family such as ml.m* provides enough computing power, memory, and network performance for Jupyter notebooks to perform well. GPUs provide a great price/performance ratio if you take advantage of them effectively. However, GPUs also cost more, so choose GPU-based notebooks only when you really need them.

Ask yourself: Is my neural network relatively small scale? Is my network performing tons of calculations involving hundreds of thousands of parameters? Can my model take advantage of the hardware parallelism offered by instance families such as P3 and P3dn?

Depending on the model, the GPU communication overhead might even degrade performance. So, take a step back, start with what you think is the minimum requirement in terms of ML instance specification, and work your way up to the best instance type and family for your model.

If you’re using your notebook instance to train multiple jobs, decide when you need a GPU-enabled instance and when you don’t. If you need accelerated computing in your notebook environment, you can stop your m* family notebook instance, switch to a GPU-enabled P* family instance, and start it again. Don’t forget to switch it back when you no longer need that extra boost in your development environment.

If you’re using massive datasets for training and don’t want to wait for days or weeks to finish your training job, you can speed up the process by distributing training on multiple machines or processes in a cluster.

It’s recommended to use a small subset of your data for development in your notebook instance. You can use the full dataset for a training job that is distributed across optimized instances such as P2 or P3 GPU instances or instances with a powerful CPU, such as C5.

Maximize instance utilization

You can optimize your Amazon SageMaker notebook utilization in many different ways. One simple way is to stop your notebook instance when you’re not using it and start it when you need it. Remember that the instance is only useful while you’re using the Jupyter notebook. Consider auto-detecting idle notebook instances and managing their lifecycle using a lifecycle configuration script. For a detailed implementation, see Right-sizing resources and avoiding unnecessary costs in Amazon SageMaker. If you’re not working on a notebook overnight or over the weekend, it’s a good idea to schedule a stop and start. One way to do this is with a scheduled AWS Lambda function. For example, you can stop all instances at 7:00 PM and start them at 7:00 AM.

You can also use Amazon CloudWatch Events to start and stop the instance based on an event. If you’re feeling geeky, connect it to your Amazon Rekognition based system to start a data scientist’s notebook instance when they step into the office or have Amazon Alexa do it as you grab a coffee.
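As a rough illustration of the scheduled stop described above, the following sketch shows what such a Lambda function might look like. It assumes the boto3 SageMaker client operations `list_notebook_instances` and `stop_notebook_instance`; the function names `stop_running_notebooks` and `lambda_handler` are illustrative choices, and the client is passed in so the logic can be tried without AWS access.

```python
def stop_running_notebooks(sm_client):
    """Request a stop for every notebook instance that is currently InService.

    `sm_client` is expected to behave like boto3.client("sagemaker").
    Returns the names of the instances a stop was requested for.
    """
    stopped = []
    kwargs = {"StatusEquals": "InService"}
    while True:
        page = sm_client.list_notebook_instances(**kwargs)
        for notebook in page.get("NotebookInstances", []):
            name = notebook["NotebookInstanceName"]
            sm_client.stop_notebook_instance(NotebookInstanceName=name)
            stopped.append(name)
        token = page.get("NextToken")
        if not token:
            return stopped
        # More results are available; fetch the next page.
        kwargs["NextToken"] = token


def lambda_handler(event, context):
    # Imported here so the helper above can be exercised without boto3 installed.
    import boto3

    return {"stopped": stop_running_notebooks(boto3.client("sagemaker"))}
```

A CloudWatch Events schedule expression such as `cron(0 19 ? * MON-FRI *)` could trigger this function on weekday evenings. Schedule expressions are evaluated in UTC, so the hour needs adjusting for your time zone. A matching function that calls `start_notebook_instance` would handle the morning start.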

Training jobs

The following are some best practices for saving costs on training jobs.

Use pre-trained models or even APIs

Pre-trained models eliminate the time spent gathering data and training models with that data. Consider using higher-level APIs such as those provided by Amazon Rekognition or Amazon Comprehend to avoid spending on tasks that are already done for you. As an example, Amazon Comprehend simplifies topic modeling on a large corpus of documents. You can also use the Neural Topic Model (NTM) algorithm in Amazon SageMaker to get similar results with more effort. Although you have more control over hyperparameters when training your own model, your use case may not need it. A lot of engineering work and experience goes into creating ready-to-consume and highly optimized models, so an upfront ROI analysis is highly recommended if you’re embarking on a journey to develop similar models.

Use Pipe mode (where applicable) to reduce training time

Certain algorithms in Amazon SageMaker, such as BlazingText, work on a large corpus of data. When these jobs are launched, significant time goes into downloading the data from Amazon Simple Storage Service (Amazon S3) into the local Amazon Elastic Block Store (Amazon EBS) volume. Your training jobs don’t start until this download finishes. These algorithms can take advantage of Pipe mode, in which training data is streamed from Amazon S3 directly to the training instances, so your training jobs can start without waiting for the full download. For example, training BlazingText on Common Crawl (3 TB) can take a few days, of which a significant number of hours are lost to the download alone. Pipe mode removes that wait and can significantly reduce training time.
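The input mode is a single field in the training job request. The sketch below builds the `AlgorithmSpecification` part of a `CreateTrainingJob` request. The helper name `algorithm_spec` and the image URI in the usage note are placeholders, and whether Pipe mode is accepted depends on the algorithm and data format you use.

```python
def algorithm_spec(training_image, use_pipe_mode=True):
    """Build the AlgorithmSpecification part of a CreateTrainingJob request.

    "File" mode downloads the whole dataset before training starts;
    "Pipe" mode streams it from Amazon S3 while training runs.
    """
    return {
        "TrainingImage": training_image,
        "TrainingInputMode": "Pipe" if use_pipe_mode else "File",
    }
```

For example, `algorithm_spec("<your-training-image-uri>")` returns a dictionary that can be passed as the `AlgorithmSpecification` argument of the boto3 `create_training_job` call.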

Managed spot training in Amazon SageMaker

Managed spot training can reduce the cost of training models by up to 90% compared with On-Demand Instances. Amazon SageMaker manages the Spot interruptions on your behalf. If your training job can be interrupted, use managed spot training. You can specify which training jobs use Spot Instances and a stopping condition that specifies how long Amazon SageMaker waits for a job to run using EC2 Spot Instances.

You may also consider using EC2 Spot Instances directly if you’re willing to do some extra work and your algorithm is resilient to interruptions. For more information, see Managed Spot Training: Save Up to 90% On Your Amazon SageMaker Training Jobs.
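To make the stopping condition concrete, here is a minimal sketch of the spot-related fields of a `CreateTrainingJob` request, plus the savings calculation based on the `TrainingTimeInSeconds` and `BillableTimeInSeconds` values that `DescribeTrainingJob` returns. The helper names and the S3 URI in the usage note are hypothetical.

```python
def spot_training_settings(max_runtime_s, max_wait_s, checkpoint_s3_uri):
    """Spot-related fields to merge into a CreateTrainingJob request."""
    if max_wait_s < max_runtime_s:
        # The wait time covers both waiting for Spot capacity and running.
        raise ValueError("max_wait_s must be at least max_runtime_s")
    return {
        "EnableManagedSpotTraining": True,
        "StoppingCondition": {
            "MaxRuntimeInSeconds": max_runtime_s,
            "MaxWaitTimeInSeconds": max_wait_s,
        },
        # Checkpoints let an interrupted job resume instead of restarting.
        "CheckpointConfig": {"S3Uri": checkpoint_s3_uri},
    }


def spot_savings_percent(training_seconds, billable_seconds):
    """Percentage saved relative to paying for the full training time."""
    if training_seconds <= 0:
        raise ValueError("training_seconds must be positive")
    return (1 - billable_seconds / training_seconds) * 100
```

For example, `spot_training_settings(3600, 7200, "s3://my-bucket/checkpoints/")` allows one hour of training within a two-hour window, and a job that trained for 1,000 seconds but was billed for 250 saved 75%.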

Test your code locally

Resolve issues with code and data so you don’t need to pay to run training clusters for failed training jobs. This also saves you time spent initializing the training cluster. Before you submit a training job, try to run the fit function in local mode to fetch some early feedback.
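One way to switch between a local dry run and a real training cluster is to keep the instance settings in a single place. The sketch below assumes the SageMaker Python SDK v2 parameter names `instance_type` and `instance_count` (v1 used `train_instance_type` and `train_instance_count`). The helper name and the `ml.p3.2xlarge` default are illustrative, not recommendations.

```python
def estimator_settings(local_test, cloud_instance_type="ml.p3.2xlarge"):
    """Keyword arguments for a SageMaker Python SDK estimator.

    With instance_type="local", fit() runs the training container on the
    current machine (Docker is required) instead of launching a cluster.
    """
    return {
        "instance_type": "local" if local_test else cloud_instance_type,
        "instance_count": 1,
    }
```

The resulting dictionary can be unpacked into a framework estimator constructor alongside your entry point and role. Run `fit` on a small data sample with `estimator_settings(True)` first, then switch to `estimator_settings(False)` once the script works.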

Monitor the performance of your training jobs to identify waste

Amazon SageMaker is integrated with CloudWatch out of the box and publishes instance metrics of the training cluster in CloudWatch. You can use these metrics to see if you should make adjustments to your cluster, such as CPUs, memory, number of instances, and more. To view the CloudWatch metric for your training jobs, navigate to the Jobs page on the Amazon SageMaker console and choose View Instance metrics in the Monitor section.

Also, use Amazon SageMaker Debugger, which provides full visibility into model training by monitoring, recording, analyzing, and visualizing training process tensors. Debugger can dramatically reduce the time, resources, and cost needed to train models.
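As a sketch of how the CloudWatch instance metrics could be checked programmatically, the following builds parameters for the CloudWatch `get_metric_statistics` call and applies a simple utilization check. It assumes the `/aws/sagemaker/TrainingJobs` namespace and a `Host` dimension of the form `<job-name>/algo-1`. The 30% threshold is an arbitrary illustration, and CPU utilization on multi-core instances can exceed 100%, so scale the threshold accordingly.

```python
from datetime import datetime, timedelta, timezone


def utilization_query(job_name, metric_name, hours=3, host="algo-1"):
    """Parameters for cloudwatch.get_metric_statistics for one training host."""
    end = datetime.now(timezone.utc)
    return {
        "Namespace": "/aws/sagemaker/TrainingJobs",
        "MetricName": metric_name,  # for example "CPUUtilization"
        "Dimensions": [{"Name": "Host", "Value": f"{job_name}/{host}"}],
        "StartTime": end - timedelta(hours=hours),
        "EndTime": end,
        "Period": 300,  # seconds per datapoint
        "Statistics": ["Average"],
    }


def looks_oversized(datapoints, threshold=30.0):
    """True if the mean of the 'Average' datapoints is below the threshold."""
    averages = [point["Average"] for point in datapoints]
    return bool(averages) and sum(averages) / len(averages) < threshold
```

With a boto3 CloudWatch client, `client.get_metric_statistics(**utilization_query(job, "CPUUtilization"))["Datapoints"]` yields the list that `looks_oversized` expects.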

Find the right balance: Performance vs. accuracy

Compare the throughput of 16-bit floating point and 32-bit floating point calculations and determine what is right for your model. 32-bit (single precision or FP32) and even 64-bit (double precision or FP64) floating point variables are popular for many applications that require high precision. These are workloads like engineering simulations that simulate real-world behavior and need the mathematical model to be as exact as possible. In many cases, however, the reduced memory usage and increased speed gained by moving to half or mixed precision (16-bit or FP16) are worth the minor tradeoffs in accuracy. For more information, see Accelerating GPU computation through mixed-precision methods.
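The storage side of this trade-off can be seen with the Python standard library alone. This small sketch stores a value in half, single, and double precision using the `struct` module and reports the size and rounding error of each. It illustrates precision only, not training throughput, and the function names are illustrative.

```python
import struct

# struct format codes for IEEE 754 half, single, and double precision.
FORMATS = {"FP16": "<e", "FP32": "<f", "FP64": "<d"}


def round_trip(value, fmt):
    """Store `value` in the given format and read it back as a Python float."""
    return struct.unpack(fmt, struct.pack(fmt, value))[0]


def precision_report(value):
    """Bytes used and absolute rounding error for each precision."""
    report = {}
    for name, fmt in FORMATS.items():
        stored = round_trip(value, fmt)
        report[name] = {
            "bytes": struct.calcsize(fmt),
            "abs_error": abs(stored - value),
        }
    return report


if __name__ == "__main__":
    for name, row in precision_report(0.1).items():
        print(name, row)
```

Each step down in precision halves the memory per value and increases the rounding error, which is the trade-off to weigh against your model’s accuracy requirements.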

A similar trade-off also applies when deciding on the number of layers in your neural network for your classification algorithms, such as image classification.

Tuning (hyperparameter optimization) jobs

Use hyperparameter optimization (HPO) when needed, and choose wisely which hyperparameters to tune and over what ranges.

Some API calls can result in a bill of hundreds or even thousands of dollars, and tuning jobs are one of those. A good tuning job can save you many working days of expensive data scientists’ time and provide a significant lift in model performance, which is highly beneficial. HPO in Amazon SageMaker finds good hyperparameters more quickly if the search space is narrow (for example, a learning rate of 0.01–0.05 rather than 0.001–0.9). If you have some relevant prior knowledge about the hyperparameter range, start with that. For wide hyperparameter ranges, you may want to consider logarithmic transformations.
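To see why a logarithmic transformation helps with wide ranges, consider the 0.001–0.9 learning rate range mentioned above. The sketch below computes what share of the search falls below 0.01 under linear and logarithmic scaling, and builds a range entry in the shape used by the `ContinuousParameterRanges` field of a tuning job request. The helper names are illustrative.

```python
import math


def share_below_linear(low, high, cut):
    """Fraction of a uniform search over [low, high] that falls below `cut`."""
    return (cut - low) / (high - low)


def share_below_log(low, high, cut):
    """Same fraction when the search is uniform in log space."""
    return math.log(cut / low) / math.log(high / low)


def continuous_range(name, low, high, log_scale=True):
    """One entry for ContinuousParameterRanges (bounds are passed as strings)."""
    return {
        "Name": name,
        "MinValue": str(low),
        "MaxValue": str(high),
        "ScalingType": "Logarithmic" if log_scale else "Linear",
    }
```

With linear scaling, only about 1% of the search covers learning rates between 0.001 and 0.01. With logarithmic scaling, that decade gets roughly a third of the search.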

Amazon SageMaker also reduces the amount of time spent tuning models using built-in HPO. This technology automatically adjusts hundreds of different combinations of parameters to quickly arrive at the best solution for your ML problem. With high-performance algorithms, distributed computing, managed infrastructure, and HPO, Amazon SageMaker drastically decreases the training time and overall cost of building production grade systems. You can see examples of HPO in some of the Amazon SageMaker built-in algorithms.

As the training time for each training job gets longer, you may also want to consider early stopping of training jobs.

Hosting endpoints

The following section discusses how to save cost when hosting endpoints using Amazon SageMaker hosting services.

Delete endpoints that aren’t in use

Amazon SageMaker is great for testing new models because you can easily deploy them into an A/B testing environment. When you’re done with your tests and not using the endpoint extensively anymore, you should delete it. You can always recreate it when you need it again because the model is stored in Amazon S3.

Use Automatic Scaling

Auto Scaling your Amazon SageMaker endpoint doesn’t just provide high availability, better throughput, and better performance; it also optimizes the cost of your endpoint. Make sure that you configure Auto Scaling for your endpoint, monitor your model endpoint, and adjust the scaling policy based on the CloudWatch metrics. For more information, see Load test and optimize an Amazon SageMaker endpoint using automatic scaling.
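A scaling configuration for an endpoint variant consists of a scalable target and a scaling policy, both registered with Application Auto Scaling. The following sketch builds the two request payloads. The helper name, the policy name, and the cooldown values are assumptions for illustration, and the right target value should come from load testing your own endpoint.

```python
def endpoint_scaling_requests(endpoint, variant, min_instances, max_instances,
                              invocations_per_instance):
    """Build payloads for register_scalable_target and put_scaling_policy."""
    common = {
        "ServiceNamespace": "sagemaker",
        "ResourceId": f"endpoint/{endpoint}/variant/{variant}",
        "ScalableDimension": "sagemaker:variant:DesiredInstanceCount",
    }
    target = dict(common, MinCapacity=min_instances, MaxCapacity=max_instances)
    policy = dict(
        common,
        PolicyName=f"{endpoint}-{variant}-target-tracking",  # illustrative name
        PolicyType="TargetTrackingScaling",
        TargetTrackingScalingPolicyConfiguration={
            # Average invocations per minute that each instance should handle.
            "TargetValue": float(invocations_per_instance),
            "PredefinedMetricSpecification": {
                "PredefinedMetricType": "SageMakerVariantInvocationsPerInstance"
            },
            "ScaleInCooldown": 300,   # seconds; assumed values
            "ScaleOutCooldown": 60,
        },
    )
    return target, policy
```

With a boto3 `application-autoscaling` client, the payloads would be applied as `client.register_scalable_target(**target)` followed by `client.put_scaling_policy(**policy)`.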

Amazon Elastic Inference for deep learning

Selecting a GPU instance type that is big enough to satisfy the requirements of the most demanding resource for inference may not be a smart move. Even at peak load, a deep learning application may not fully utilize the capacity offered by a GPU. Consider using Amazon Elastic Inference, which allows you to attach low-cost GPU-powered acceleration to Amazon EC2 and Amazon SageMaker instances to reduce the cost of running deep learning inference by up to 75%.

Host multiple models with multi-model endpoints

You can create an endpoint that can host multiple models. Multi-model endpoints reduce hosting costs by improving endpoint utilization and provide a scalable and cost-effective solution to deploying a large number of models. They enable time-sharing of memory resources across models. They also reduce deployment overhead because Amazon SageMaker manages loading models in memory and scaling them based on traffic patterns to models.

Reducing labeling time with Amazon SageMaker Ground Truth

Data labeling is the key process of identifying raw data (such as images, text files, and videos) and adding one or more meaningful and informative labels to provide context so that an ML model can learn from it. This process is essential because the accuracy of a trained model depends on the accuracy of the labeled dataset, or ground truth.

Amazon SageMaker Ground Truth uses a combination of ML and a human workforce (vetted by AWS) to label images and text. Many ML projects are delayed because of insufficient labeled data. You can use Ground Truth to accelerate the ML cycle and reduce overall costs.

Tagging your resources

Consider tagging your Amazon SageMaker notebook instances and hosting endpoints. Tags such as the name of the project, business unit, and environment (such as development, testing, or production) are useful for cost optimization and can provide clear visibility into where the money is spent. Cost allocation tags can help you track and categorize your cost of ML. They can also help answer questions such as “Can I delete this resource to save cost?”
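A small helper can keep tags consistent across resources. This sketch produces a tag list in the key/value shape that SageMaker APIs accept. The tag keys and the set of allowed environments are assumptions drawn from the examples above, not a required scheme.

```python
ALLOWED_ENVIRONMENTS = {"development", "testing", "production"}


def cost_tags(project, business_unit, environment):
    """Build a Tags list for SageMaker resources.

    The keys used here ("project", "business-unit", "environment") are
    illustrative; use whatever scheme your organization has agreed on.
    """
    if environment not in ALLOWED_ENVIRONMENTS:
        raise ValueError(f"unknown environment: {environment!r}")
    return [
        {"Key": "project", "Value": project},
        {"Key": "business-unit", "Value": business_unit},
        {"Key": "environment", "Value": environment},
    ]
```

The list can be passed as the `Tags` argument when creating a notebook instance or endpoint, or applied to an existing resource with the boto3 SageMaker `add_tags(ResourceArn=..., Tags=...)` call.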

Keeping track of cost

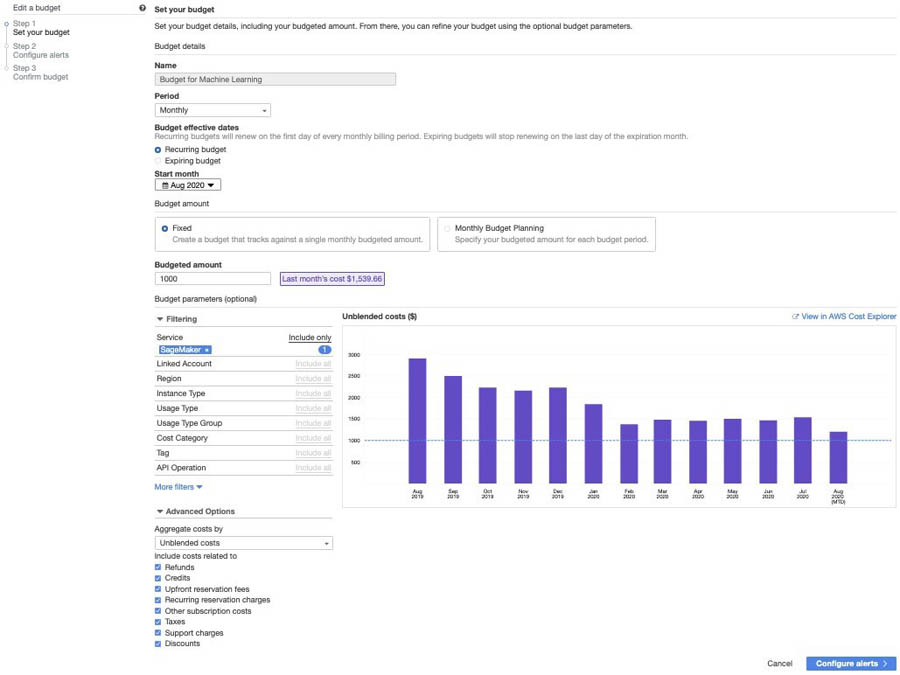

If you need visibility of your ML cost on AWS, use AWS Budgets. This helps you track your Amazon SageMaker cost, including development, training, and hosting. You can also set alerts and get a notification when your cost or usage exceeds (or is forecasted to exceed) your budgeted amount. After you create your budget, you can track the progress on the AWS Budgets console.
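Budgets can also be created programmatically. The sketch below builds a request for the AWS Budgets `create_budget` operation with alerts on both actual and forecasted spend. The budget name, the 80% threshold, and the `"Amazon SageMaker"` service filter value are assumptions to verify against your own billing data.

```python
def sagemaker_budget_request(account_id, monthly_limit_usd, email,
                             alert_percent=80.0):
    """Build keyword arguments for budgets.create_budget."""

    def alert(notification_type):
        # One notification per alert type, each sent to the same address.
        return {
            "Notification": {
                "NotificationType": notification_type,
                "ComparisonOperator": "GREATER_THAN",
                "Threshold": alert_percent,
                "ThresholdType": "PERCENTAGE",
            },
            "Subscribers": [{"SubscriptionType": "EMAIL", "Address": email}],
        }

    return {
        "AccountId": account_id,
        "Budget": {
            "BudgetName": "sagemaker-monthly",  # hypothetical name
            "BudgetLimit": {"Amount": str(monthly_limit_usd), "Unit": "USD"},
            "TimeUnit": "MONTHLY",
            "BudgetType": "COST",
            # Assumed service name; check the value shown in your cost reports.
            "CostFilters": {"Service": ["Amazon SageMaker"]},
        },
        "NotificationsWithSubscribers": [alert("ACTUAL"), alert("FORECASTED")],
    }
```

With a boto3 `budgets` client, the request would be submitted as `client.create_budget(**sagemaker_budget_request(...))`.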

Conclusion

In this post, I highlighted a few approaches and techniques to optimize cost without compromising on the implementation flexibility so you can deliver best-in-class ML-based business applications.

For more information about optimizing costs, consider the following:

- Refer to more ways of optimizing your cost on the cloud by right-sizing your infrastructure. Also take a look at best practices.

- For an in-depth cost saving analysis when using an Elastic Inference accelerator, see Serving deep learning at Curalate with Apache MXNet, AWS Lambda, and Amazon Elastic Inference.

- Give Amazon SageMaker a try with any of the several sample Jupyter notebooks. For more information about getting started, see Amazon SageMaker – Accelerated Machine Learning.

- Learn more about managing ML projects in the whitepaper Managing Machine Learning Projects.

About the Author

BK Chaurasiya is a Principal Product Manager on the Amazon Web Services R&D and Innovation team. He provides technical guidance, design advice, and thought leadership to some of the largest and most successful AWS customers and partners. A technologist at heart, BK specializes in driving DevOps, continuous delivery, and large-scale cloud transformation initiatives to success.
