Running batch jobs at scale for less

In this tutorial, you will learn how to run batch jobs using AWS Batch on a compute environment backed by Amazon EC2 Spot Instances. AWS Batch is a set of batch management capabilities that dynamically provision the optimal quantity and type of compute resources (e.g. CPU-optimized, memory-optimized and/or accelerated compute instances) based on the volume and specific resource requirements of the batch jobs you submit. AWS Batch’s native integration with EC2 Spot Instances means you can save up to 90% on your compute costs when compared to On-Demand pricing.

Prerequisites

If you are using a new AWS account or an account that has never used EC2 Spot Instances, your account needs a set of IAM roles: AmazonEC2SpotFleetRole, AWSServiceRoleForEC2Spot and AWSServiceRoleForEC2SpotFleet. If you don’t have these roles on your AWS account, please follow the instructions here to create them.

This tutorial also makes use of the Default VPC of your AWS account. If your account doesn’t have a Default VPC, you can use your own VPC; in that case, make sure your subnets meet the requirements documented here. It is also recommended that your VPC has a subnet mapped to each Availability Zone of the AWS Region you are working in. You can run this tutorial in any AWS Region where AWS Batch is available (you can find the list here).
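If you would rather check the networking prerequisite from a script, the lookup can be sketched in Python roughly as follows. This is a minimal sketch and not part of the original tutorial: it assumes `ec2` is a boto3 EC2 client (for example `boto3.client("ec2")`), the function name `default_vpc_subnets` is made up for illustration, and pagination of results is ignored.

```python
def default_vpc_subnets(ec2):
    """Return (vpc_id, {availability_zone: [subnet_id, ...]}) for the default VPC.

    `ec2` is assumed to be a boto3 EC2 client. Returns (None, {}) when the
    account has no default VPC in the client's Region.
    """
    vpcs = ec2.describe_vpcs(
        Filters=[{"Name": "is-default", "Values": ["true"]}]
    )["Vpcs"]
    if not vpcs:
        return None, {}

    vpc_id = vpcs[0]["VpcId"]
    subnets = ec2.describe_subnets(
        Filters=[{"Name": "vpc-id", "Values": [vpc_id]}]
    )["Subnets"]

    # Group subnet IDs by Availability Zone so gaps are easy to spot.
    by_zone = {}
    for subnet in subnets:
        by_zone.setdefault(subnet["AvailabilityZone"], []).append(subnet["SubnetId"])
    return vpc_id, by_zone
```

An Availability Zone that is missing from the returned mapping has no subnet in the default VPC.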

| About this Tutorial | |
|---|---|
| Time | 10 minutes |
| Cost | Less than $1 |
| Use Case | Compute |
| Products | AWS Batch, EC2 Spot Instances |
| Level | 200 |
| Last Updated | February 10, 2020 |

Step 1: Create a compute environment backed by EC2 Spot Instances

1.1 — Open a browser and navigate to the AWS Batch console. If you already have an AWS account, log in to the console. Otherwise, create a new AWS account to get started.


1.4 — A compute environment is a set of compute resources that AWS Batch will use to place your jobs. To create one, click on the compute environments section on the left-hand side of the AWS Batch console and then click on the create environment button to open the compute environment creation wizard.

1.5 — On the compute environment type, leave the default selection, managed. This creates a compute environment managed by AWS Batch that is automatically scaled out and in as batch jobs are scheduled and compute capacity is needed.

Then, set up a compute environment name e.g. spot-compute-environment.

On the service role drop-down, select AWSBatchServiceRole if it exists on your account, otherwise select create new role. This is an IAM role that is used by AWS Batch to manage resources on your behalf.

On the instance role, select ecsInstanceRole IAM role if it exists on your account, otherwise select create new role to create it. This is an IAM role that will be attached to EC2 instances to make AWS API calls on your behalf (AWS Batch uses Amazon ECS to create the compute environment).

Then, on the EC2 key pair section, optionally select an EC2 key pair if you want to be able to SSH to the EC2 instances that AWS Batch will create.

1.6 — Now, scroll down and go to the configure your compute resources section.

Select Spot as the provisioning model and leave maximum price blank (this caps the maximum price at the instance’s On-Demand price, but you always pay the current Spot price).

Leave the default values for allowed instance types (optimal) and allocation strategy (SPOT_CAPACITY_OPTIMIZED). With these settings, AWS Batch picks the best instance types for your jobs from the m4, r4 and c4 instance families, drawing from the deepest Spot capacity pools. This reduces the chance of Spot Instance interruptions while your jobs are running, since interruptions happen when EC2 needs to reclaim capacity, for example during a spike in demand for On-Demand instances. You can add additional instance families or specific types, including GPU instance families (P2 and P3), if your jobs require them; AWS Batch will pick the optimal set of instances based on your jobs’ requirements, while benefiting from the discount of up to 90% that Spot Instances offer over On-Demand pricing.

1.7 — Leave the rest of the values in the configure your compute resources section as default. Then, scroll down to the networking section. By default, AWS Batch will select your Default VPC. If your account does not have a default VPC or you want to deploy your Batch compute environment on a specific VPC, select it here, as well as the subnets to use. If you are not using the default VPC, make sure your VPC configuration conforms to the networking requirements specified here.

Scroll down to the very bottom of the page and click on the create button to create the compute environment.

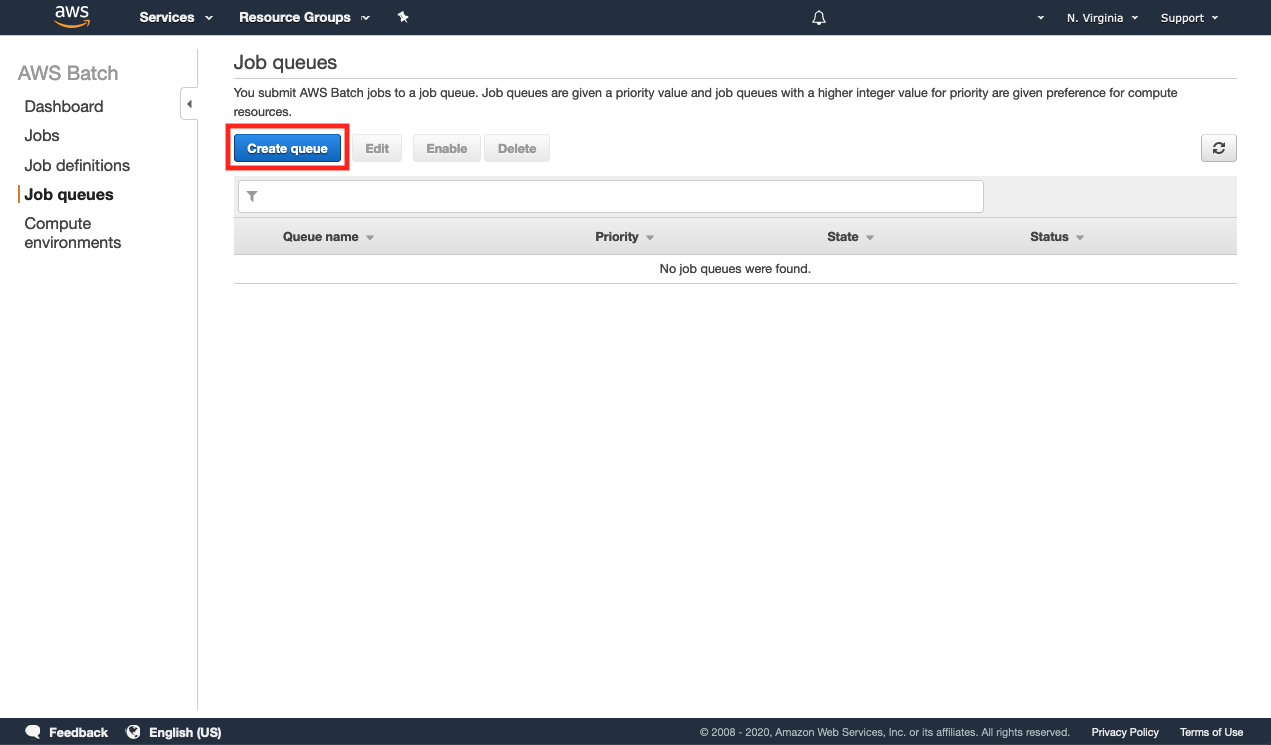
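For readers who prefer scripting, the console choices from Step 1 map approximately onto a request for the AWS Batch `CreateComputeEnvironment` API. The following is a hedged Python sketch rather than the tutorial's own method: the helper name `build_spot_compute_environment` and all of its parameters are hypothetical, and `max_vcpus=256` is only an assumed default. Leaving out `bidPercentage` mirrors leaving maximum price blank in the console.

```python
def build_spot_compute_environment(name, service_role_arn, subnets,
                                   security_group_ids,
                                   instance_role="ecsInstanceRole",
                                   max_vcpus=256):
    """Build keyword arguments for a boto3 Batch `create_compute_environment` call.

    All argument names are illustrative; `max_vcpus=256` is an assumption.
    """
    return {
        "computeEnvironmentName": name,
        "type": "MANAGED",           # scaled out and in by AWS Batch
        "state": "ENABLED",
        "serviceRole": service_role_arn,
        "computeResources": {
            "type": "SPOT",          # provisioning model
            "allocationStrategy": "SPOT_CAPACITY_OPTIMIZED",
            "minvCpus": 0,           # scale to zero when no jobs are queued
            "maxvCpus": max_vcpus,
            "instanceTypes": ["optimal"],
            "subnets": list(subnets),
            "securityGroupIds": list(security_group_ids),
            "instanceRole": instance_role,
            # No "bidPercentage": the maximum price stays at the On-Demand price.
        },
    }
```

With boto3 installed and credentials configured, the result could be passed as `boto3.client("batch").create_compute_environment(**request)`.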

Step 2: Create a job queue

2.1 — Now that we have created a compute environment to execute our jobs, we need a job queue related to it, so submitted jobs can be scheduled on the Spot compute environment.

On the AWS Batch console, go to job queues and click on the create queue button.
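The relationship between a job queue and its compute environment can also be expressed as a `CreateJobQueue` request. This is a small Python sketch under assumptions: the helper name `build_job_queue`, the example queue name in the usage note and the priority value of 1 are illustrative and do not come from the tutorial.

```python
def build_job_queue(name, compute_environment, priority=1):
    """Build keyword arguments for a boto3 Batch `create_job_queue` call.

    `compute_environment` is the name or ARN of an existing compute
    environment; `priority=1` is an arbitrary illustrative value.
    """
    return {
        "jobQueueName": name,
        "state": "ENABLED",
        "priority": priority,
        # A queue can list several environments; here it maps to one.
        "computeEnvironmentOrder": [
            {"order": 1, "computeEnvironment": compute_environment},
        ],
    }
```

For example, `build_job_queue("spot-job-queue", "spot-compute-environment")` produces a request that could be passed to `boto3.client("batch").create_job_queue(**request)`.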

Step 3: Create a job definition

3.1 — At this stage we have created a compute environment and a job queue connected to it, so we can now create a job definition for our job. Job definitions specify how jobs are to be run.

AWS Batch runs jobs within Docker containers, and the job definition defines attributes such as the Docker image to use for your job, how many vCPUs and how much memory the job requires, container properties and environment variables.

Go to the job definitions section of the console and click on the create button.

3.2 — On the job definition name, give your job a name (e.g. my-first-batch-job). Job attempts defines the number of times AWS Batch will try to execute your job. Although on average fewer than 5% of Spot Instances are interrupted, interruptions can occur if EC2 needs the capacity back. To make your job resilient to interruptions, set the job attempts value to 2 or higher so AWS Batch retries the execution if the instance where the job runs gets interrupted or fails. Optionally, also define an execution timeout value.

3.3 — Leave the parameters section blank (feel free to read more about Job definition parameters here).

Then, on the environment section, leave job role blank (you can use this parameter to assign an IAM role to your job, so your job can make API calls to AWS services). Set amazonlinux as the container image and, on the command section, click on the JSON tab and paste the following into the text box: ["sh", "-c", "echo \"Hello world from job $AWS_BATCH_JOB_ID \". This is run attempt $AWS_BATCH_JOB_ATTEMPT"]. Assign 1 vCPU and 256 MB of memory to this job.

This sample job will run on an amazonlinux container image and will print a “Hello world” message including its job ID assigned by AWS Batch and the execution attempt number. This data is provided by AWS Batch in the form of environment variables, and you can use it on your job. Additionally, you can pass additional environment variables to your jobs. To learn more about this feature, take a look at our documentation here.
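The job definition described in Step 3 can be sketched as a `RegisterJobDefinition` request in Python. This is an illustration under assumptions, not the tutorial's own procedure: the helper name `build_job_definition` is hypothetical, and vCPU and memory are expressed through `resourceRequirements`. The command list is the same JSON array shown above.

```python
# Same command as the JSON array pasted into the console.
COMMAND = [
    "sh",
    "-c",
    'echo "Hello world from job $AWS_BATCH_JOB_ID ". '
    "This is run attempt $AWS_BATCH_JOB_ATTEMPT",
]


def build_job_definition(name, attempts=2, timeout_seconds=None):
    """Build keyword arguments for a boto3 Batch `register_job_definition` call."""
    if not 1 <= attempts <= 10:
        raise ValueError("attempts must be between 1 and 10")
    if timeout_seconds is not None and timeout_seconds < 60:
        raise ValueError("timeout_seconds must be at least 60")

    definition = {
        "jobDefinitionName": name,
        "type": "container",
        "containerProperties": {
            "image": "amazonlinux",
            "command": COMMAND,
            "resourceRequirements": [
                {"type": "VCPU", "value": "1"},
                {"type": "MEMORY", "value": "256"},  # MiB
            ],
        },
        # Retries cover Spot interruptions and other instance failures.
        "retryStrategy": {"attempts": attempts},
    }
    if timeout_seconds is not None:
        definition["timeout"] = {"attemptDurationSeconds": timeout_seconds}
    return definition
```

Because the job role is left blank in the tutorial, the sketch omits `jobRoleArn` as well.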

Step 4: Execute your job and check out the results

4.1 — Now that we are all set, go to the jobs section and click on the submit job button.

4.3 — At this stage, your batch job has been placed on the queue and AWS Batch will take care of scaling compute resources on the compute environment linked to the queue; in our case selecting the most appropriate instance type to run our job from the deepest Spot capacity pool.

Go to the dashboard and keep refreshing the job queues section, so you see the job transitioning through the different states until it ends up on SUCCEEDED. If you refresh the compute environments section you will also see how Batch increases the Desired vCPUs to run our job. (NOTE: It will take ~2 minutes for AWS Batch to spin up the compute resources and run your job. Feel free to navigate through the console and get familiar with it).
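Instead of refreshing the dashboard, the same submit-and-watch loop can be sketched in Python. This is a minimal sketch under assumptions: `client` is expected to behave like a boto3 Batch client, the function name `submit_and_wait`, the polling interval and the poll limit are all illustrative, and only SUCCEEDED and FAILED are treated as final states.

```python
import time

TERMINAL_STATES = {"SUCCEEDED", "FAILED"}


def submit_and_wait(client, job_name, job_queue, job_definition,
                    poll_seconds=15, max_polls=40, sleep=time.sleep):
    """Submit a job, then poll until it finishes.

    Returns (job_id, states), where `states` lists each distinct status in
    the order it was observed. Statuses that pass between two polls are missed.
    """
    job_id = client.submit_job(
        jobName=job_name, jobQueue=job_queue, jobDefinition=job_definition
    )["jobId"]

    states = []
    for _ in range(max_polls):
        jobs = client.describe_jobs(jobs=[job_id])["jobs"]
        if not jobs:
            raise LookupError(f"job {job_id} not found")
        status = jobs[0]["status"]
        if not states or states[-1] != status:
            states.append(status)
        if status in TERMINAL_STATES:
            return job_id, states
        sleep(poll_seconds)
    raise TimeoutError(f"job {job_id} did not finish after {max_polls} polls")
```

A call could look like `submit_and_wait(boto3.client("batch"), "my-first-run", "spot-job-queue", "my-first-batch-job")`, where the job and queue names are placeholders for whatever you created earlier.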

4.4 — Once your job reaches SUCCEEDED, click on the number below SUCCEEDED. You will be redirected to the jobs section of the console.

4.7 — Click on the view logs link to get redirected to Amazon CloudWatch Logs to see the logs for your job. A new window will open and you should see an output similar to the following with the logs of your job.
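The same log lines can be read programmatically. The sketch below is a hedged Python illustration: it assumes `batch` and `logs` behave like boto3 Batch and CloudWatch Logs clients, that the job writes to the `/aws/batch/job` log group, and that a single page of events is enough. The function name `fetch_job_log_lines` is hypothetical.

```python
def fetch_job_log_lines(batch, logs, job_id, log_group="/aws/batch/job"):
    """Return the log messages of a Batch job as a list of strings.

    Only the first page of events is read; longer logs would need pagination.
    """
    jobs = batch.describe_jobs(jobs=[job_id])["jobs"]
    if not jobs:
        raise LookupError(f"job {job_id} not found")

    # The log stream name is only present once the container has started.
    stream = jobs[0].get("container", {}).get("logStreamName")
    if not stream:
        return []

    events = logs.get_log_events(
        logGroupName=log_group,
        logStreamName=stream,
        startFromHead=True,
    )["events"]
    return [event["message"] for event in events]
```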

Step 5: Check the savings achieved

5.1 — Now that your job has been executed on Spot Instances managed by AWS Batch, it’s helpful to quantify the savings you have achieved compared to On-Demand pricing. To visualize your savings, go to the Spot Requests section of the EC2 console and click on the savings summary button. This will show you a summary of your achieved savings from using Spot Instances.

Step 6: Delete your resources

6.1 — Go to the job queues section of the AWS Batch console and select your queue. Then, click on the disable button.

Click on the circle arrow button to refresh the console and wait for a minute until the queue state is DISABLED (Status should be VALID); then go ahead and click delete to delete it. Wait until the queue is fully deleted (keep refreshing with the circular arrow button until the queue disappears from the console).

6.2 — Go to compute environments and select your compute environment, then click on disable. Keep refreshing the console using the circle arrow button and allow some time for AWS Batch to disable the compute environment. Status should be VALID and State should be DISABLED.

Then, select the compute environment and click on the delete button to delete the environment.
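The disable, wait, delete order of Step 6 can be sketched in Python as follows. This is an illustration under assumptions rather than a definitive cleanup script: `client` is expected to behave like a boto3 Batch client, the function names and polling values are hypothetical, and a queue is considered deleted once it no longer appears or reports the DELETED status.

```python
import time


def _wait_until(describe, is_done, poll_seconds, max_polls, sleep):
    """Poll `describe()` until `is_done(result)` is true, or raise TimeoutError."""
    for _ in range(max_polls):
        if is_done(describe()):
            return
        sleep(poll_seconds)
    raise TimeoutError("resource did not reach the expected state in time")


def delete_queue_and_environment(client, queue, environment,
                                 poll_seconds=10, max_polls=60, sleep=time.sleep):
    """Disable and delete the job queue first, then the compute environment."""

    def queues():
        return client.describe_job_queues(jobQueues=[queue])["jobQueues"]

    def environments():
        return client.describe_compute_environments(
            computeEnvironments=[environment]
        )["computeEnvironments"]

    def disabled(items):
        return (bool(items) and items[0]["state"] == "DISABLED"
                and items[0]["status"] == "VALID")

    def gone(items):
        return not items or items[0]["status"] == "DELETED"

    def wait(describe, is_done):
        _wait_until(describe, is_done, poll_seconds, max_polls, sleep)

    # The queue references the environment, so it has to go first.
    client.update_job_queue(jobQueue=queue, state="DISABLED")
    wait(queues, disabled)
    client.delete_job_queue(jobQueue=queue)
    wait(queues, gone)

    client.update_compute_environment(computeEnvironment=environment,
                                      state="DISABLED")
    wait(environments, disabled)
    client.delete_compute_environment(computeEnvironment=environment)
```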

Congratulations

You have now learned how to run batch jobs on Amazon EC2 Spot Instances using AWS Batch. Amazon EC2 Spot Instances are a great fit for batch jobs because such jobs are often fault-tolerant and instance-flexible. AWS Batch does all the heavy lifting of managing server clusters, selecting instance types and autoscaling based on your workload needs, so you can focus on developing your batch jobs and run them at a fraction of the cost with Spot Instances.

Recommended next steps

AWS Batch on EC2 Spot Instances

Now that you’ve learned how to run AWS Batch with Spot in the AWS console, watch this video to learn how to run it via the AWS CLI.

Creating a simple "fetch & run" AWS Batch job

Need a refresher on setting up a Docker container? Read this blog to learn how to build a simple Docker container that you can run on Amazon ECS with AWS Batch.

Optimizing for cost, availability and throughput by selecting your AWS Batch allocation strategy

Now that you know the basics of running AWS Batch with EC2 Spot Instances with the Spot Capacity Optimized allocation model, read this blog to learn how you can combine multiple EC2 allocation strategies to run your workload.