
Scale your Remote Access VPN on AWS

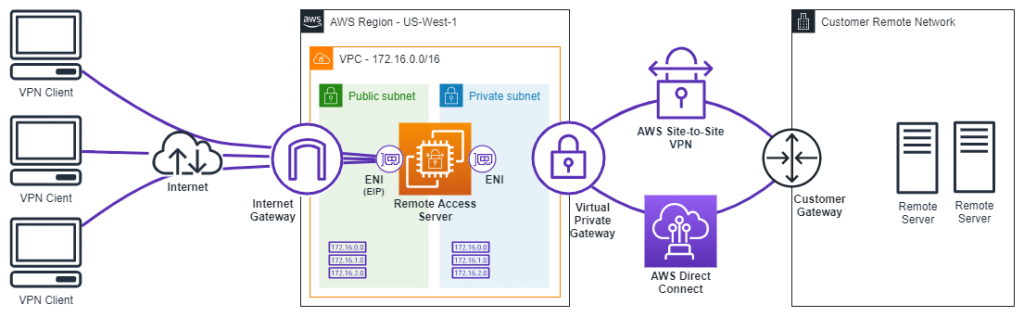

AWS gives you the ability to extend existing on-premises remote access VPN solutions to the cloud. This allows access not only to resources within AWS but also, through hybrid connectivity, to on-premises resources. VPN clients use AWS internet connectivity as an entry point, and the flexibility of Amazon EC2 lets you scale capacity behind the remote access VPN. The benefit is the ability to elastically increase the number of concurrent VPN clients connecting to the network when required. While AWS offers the managed and elastic AWS Client VPN service, some AWS customers are already using third-party remote access solutions and can run those existing solutions on Amazon EC2. This includes well-known third-party software like Cisco AnyConnect, Palo Alto GlobalProtect, OpenVPN, and others. In this post, we specifically focus on third-party VPN software running on top of Amazon EC2.

Remote access VPN on Amazon EC2

Deploying VPN endpoints directly on Amazon EC2 helps customers implement and scale these solutions more quickly. Customers gain full access to AWS resources and to on-premises resources. However, the AWS network setup necessary to support third-party VPN solutions is not trivial. Here we look at common network architecture options.

Figure 1: Remote access solution on Amazon EC2 with third-party VPN software.

Remote access VPN software

There are many possible options for EC2-based remote access solutions available in the AWS Marketplace. Some solutions even provide AWS Quick Start guides, simplifying the deployment even further. We are not going to discuss all possible VPN software options in this blog post. Instead, we focus on IP address management, routing, and common architectural approaches.

Architecture patterns and IP address management

VPN clients require IP addresses to access network resources within Amazon VPCs or on-premises networks. Two strategies for assigning and managing these addresses (the “IP pool”) within Amazon VPC are network address translation (NAT) and routed IP pools.

NAT: A VPN client is given an IP address once a tunnel to the remote access server is established. This IP address comes from an IP pool that is not known to the underlying network, Amazon VPC. To facilitate connectivity, the remote access server performs network address translation (NAT). More specifically, you configure source NAT (SNAT) on the remote access server, which is the EC2 instance running the VPN software. This makes all VPN clients appear to be coming from the VPN instance’s internal IP address. This IP address is usually associated with the elastic network interface (ENI) of the remote access server in a private subnet. Even when using the NAT option, it is important that the IP addresses you pick for the IP pool don’t overlap with remote networks. With this approach, you can also re-use the same IP pool across multiple remote access server instances.

Figure 2: Remote access solution on Amazon EC2 performing source network address translation (SNAT).

- Benefits: Allows re-use of IPv4 address space, for example, across multiple instances of remote access servers. Allows for simple hybrid network connectivity because there is no need for additional routing configuration.

- Constraints: Introduces the typical issues of clients behind NAT, for example, the inability to initiate a connection to a VPN client to perform remote device management. It also does not support IPv6 IP pools, because NAT is not typically used with IPv6.
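The overlap requirement mentioned above can be checked before a pool is assigned. The following is a minimal sketch using Python's standard `ipaddress` module; the function name `find_overlaps` and all CIDR values are hypothetical and chosen only for illustration.

```python
import ipaddress


def find_overlaps(pool_cidr, remote_cidrs):
    """Return the remote networks that overlap the proposed VPN client IP pool."""
    pool = ipaddress.ip_network(pool_cidr)
    overlapping = []
    for cidr in remote_cidrs:
        network = ipaddress.ip_network(cidr)
        # Only compare networks of the same address family (IPv4 vs. IPv6).
        if network.version == pool.version and pool.overlaps(network):
            overlapping.append(cidr)
    return overlapping


# Hypothetical address plan: VPC CIDRs and on-premises ranges reachable by clients.
REMOTE_NETWORKS = ["10.0.0.0/16", "10.1.0.0/16", "192.168.0.0/20"]
```

Calling `find_overlaps("10.1.128.0/20", REMOTE_NETWORKS)` reports the conflicting `10.1.0.0/16` range, while a pool such as `172.20.0.0/20` returns an empty list and would be safe to use under this address plan.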

Routed IP pool: This architecture allocates IPv4/IPv6 address pools to each remote access server. Each address pool must be routed within the internal network (AWS and on-premises) to its remote access server. To do this, you configure an overlay CIDR in the VPC route table. Here, an overlay CIDR is an IP range that is outside of the configured VPC CIDRs, yet appears in the VPC route table. The overlay CIDR points to the internal elastic network interface (ENI) of the VPN remote access server. We call this CIDR a remote access server IP pool or, more simply, a RAS IP pool. Next, the VPC that houses the VPN remote access server must connect to an AWS Transit Gateway. In the AWS Transit Gateway route table, you create a static route for the RAS IP pool and point it towards the VPC attachment.

Figure 3: Remote access solution on Amazon EC2 with routed RAS IP pool using AWS Transit Gateway.

- Benefits: Supports IPv4 and IPv6 CIDRs. Allows internal traffic from outside the IP pool to reach VPN clients (for example, VoIP, RDP, and other protocols that initiate connection towards a VPN client). Remote servers and applications see client IPs instead of VPN instance IPs. Can optionally be configured with multiple RAS IP pools for different classes of users, and use network filtering to restrict access as needed.

- Constraints: Unique IPv4/IPv6 CIDRs per VPN endpoint device are required. You must configure routes for the RAS IP pool pointing to the remote access server’s internal elastic network interface (ENI). For networks outside of the VPC hosting the remote access server, steering traffic towards the remote access server is only possible with AWS Transit Gateway. Amazon VPC peering and virtual private gateways (VGW) do not support Amazon VPC ingress routing.

- Considerations: Requires disabling the source/destination check on the internal interface of the remote access server. This ENI receives traffic destined for, and sends traffic originating from, IP addresses within the overlay CIDR, and these addresses do not match the ENI’s own IP address.
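The routed IP pool setup described above maps to three Amazon EC2 API calls. The sketch below builds the request parameters as plain dictionaries so they can be reviewed before anything is applied. It assumes an IPv4 RAS IP pool (an IPv6 pool would use `DestinationIpv6CidrBlock` in `create_route`), and the helper names and all resource IDs are hypothetical placeholders.

```python
def routed_pool_requests(ras_pool_cidr, vpc_route_table_id, ras_eni_id,
                         tgw_route_table_id, tgw_attachment_id):
    """Build (method name, kwargs) pairs for the EC2 calls, in order."""
    return [
        # 1. Let the ENI handle traffic for addresses it does not own.
        ("modify_network_interface_attribute", {
            "NetworkInterfaceId": ras_eni_id,
            "SourceDestCheck": {"Value": False},
        }),
        # 2. Overlay CIDR in the VPC route table, pointing at the RAS ENI.
        ("create_route", {
            "RouteTableId": vpc_route_table_id,
            "DestinationCidrBlock": ras_pool_cidr,
            "NetworkInterfaceId": ras_eni_id,
        }),
        # 3. Static route in the Transit Gateway route table, pointing at
        #    the attachment of the VPC that hosts the remote access server.
        ("create_transit_gateway_route", {
            "TransitGatewayRouteTableId": tgw_route_table_id,
            "DestinationCidrBlock": ras_pool_cidr,
            "TransitGatewayAttachmentId": tgw_attachment_id,
        }),
    ]


def apply_requests(ec2_client, requests):
    """Send each request using a client such as boto3.client("ec2")."""
    for method_name, kwargs in requests:
        getattr(ec2_client, method_name)(**kwargs)
```

With boto3 installed and credentials configured, `apply_requests(boto3.client("ec2"), routed_pool_requests(...))` would issue the calls. Repeat the `create_route` step for every VPC route table that needs to reach the pool, including the one assigned to the Transit Gateway attachment subnets.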

Configure on-premises connectivity when running a hybrid architecture

When using the NAT option, no additional changes are required. The VPC CIDR range is already advertised to on-premises over AWS Site-to-Site VPN or AWS Direct Connect. As long as you can communicate with the VPC hosting the remote access server, connectivity from your VPN clients will work (at least from a routing perspective). Because SNAT is in use, be sure to allow traffic from the VPN instance’s internal IP address within your firewalls.

The routed IP pool option requires routing configuration. Currently there is no way to configure Amazon VPC ingress routing for traffic arriving from a peered VPC. The same is true for AWS Site-to-Site VPN and AWS Direct Connect connections that use virtual private gateways. In these cases, there is no way to steer traffic to a VPN instance.

With AWS Transit Gateway, you can steer traffic towards the IP pool. To do this, use the VPC route table assigned to the Transit Gateway attachment subnet(s). Additionally, you must configure AWS Transit Gateway and AWS Direct Connect gateway to announce the RAS IP pool over BGP, so that the customer gateway learns the RAS IP pool network through BGP. Information on using AWS Transit Gateway with AWS Direct Connect along with many other useful architectures is available in this whitepaper.

Scale out your remote access VPN

Once you have your first remote access server up and running, you may need to scale your solution to support large numbers of VPN clients. Also, in order to improve resiliency, it is good practice to use multiple Availability Zones when deploying remote access servers.

One approach is to use vertical scaling (moving to an instance with a higher-performance CPU, more RAM, and more network bandwidth). It is important to note that currently outbound network traffic to the internet is limited to 5 Gbps, even across multiple TCP flows.

Figure 4: Scale out architecture using Amazon Route 53 to direct clients to a fleet of remote access servers.

At some point, you reach the limit of a single EC2 instance and must scale horizontally. Depending on the software in use, one of the options below would allow horizontal scaling:

- Use Amazon Route 53 to configure a set of records representing remote access servers. VPN clients can round-robin, or use weighted records, when they resolve remote access servers. This can be combined with Amazon Route 53 health checks.

- If the architecture is deployed across multiple AWS Regions, consider Amazon Route 53 geolocation routing, geoproximity routing, or latency routing to reduce latency and improve performance for users.

- If you are using an SSL-based VPN, you could use a Network Load Balancer (NLB) to balance traffic between remote access servers. This has the added benefit of health checking. Various SSL-VPN products use Datagram Transport Layer Security (DTLS), a UDP-based extension of TLS. SSL-based VPN products that use DTLS require sticky sessions to be enabled on the NLB. At this time, this is not supported in every AWS Region.

- Use built-in VPN client profile features (if available) to populate the server list with multiple remote access servers. This can be a combination of Elastic IP addresses (EIPs) of EC2 instances and existing remote access servers on-premises.
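As an illustration of the Amazon Route 53 option above, the sketch below builds a change batch with one weighted A record per remote access server, optionally tied to a health check. The function name, record name, server identifiers, and IP addresses (taken from documentation ranges) are hypothetical; the dictionary layout follows the `ChangeBatch` parameter of the Route 53 `ChangeResourceRecordSets` API.

```python
def weighted_record_changes(record_name, servers, ttl=60):
    """Build a Route 53 ChangeBatch with one weighted A record per server."""
    changes = []
    for server in servers:
        record_set = {
            "Name": record_name,
            "Type": "A",
            # SetIdentifier distinguishes records that share a name and type.
            "SetIdentifier": server["id"],
            "Weight": server["weight"],
            "TTL": ttl,
            "ResourceRecords": [{"Value": server["ip"]}],
        }
        # Attach a health check only when one is supplied for this server.
        if server.get("health_check_id"):
            record_set["HealthCheckId"] = server["health_check_id"]
        changes.append({"Action": "UPSERT", "ResourceRecordSet": record_set})
    return {"Comment": "Remote access VPN server fleet", "Changes": changes}


# Hypothetical fleet: two servers in different Availability Zones.
SERVERS = [
    {"id": "ras-az1", "ip": "203.0.113.10", "weight": 100},
    {"id": "ras-az2", "ip": "198.51.100.20", "weight": 100,
     "health_check_id": "hc-EXAMPLE"},
]
```

The resulting dictionary could be passed as `ChangeBatch` to `change_resource_record_sets` on a boto3 Route 53 client, together with the `HostedZoneId` of your zone. A short TTL is assumed here so that clients pick up changes to the fleet quickly.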

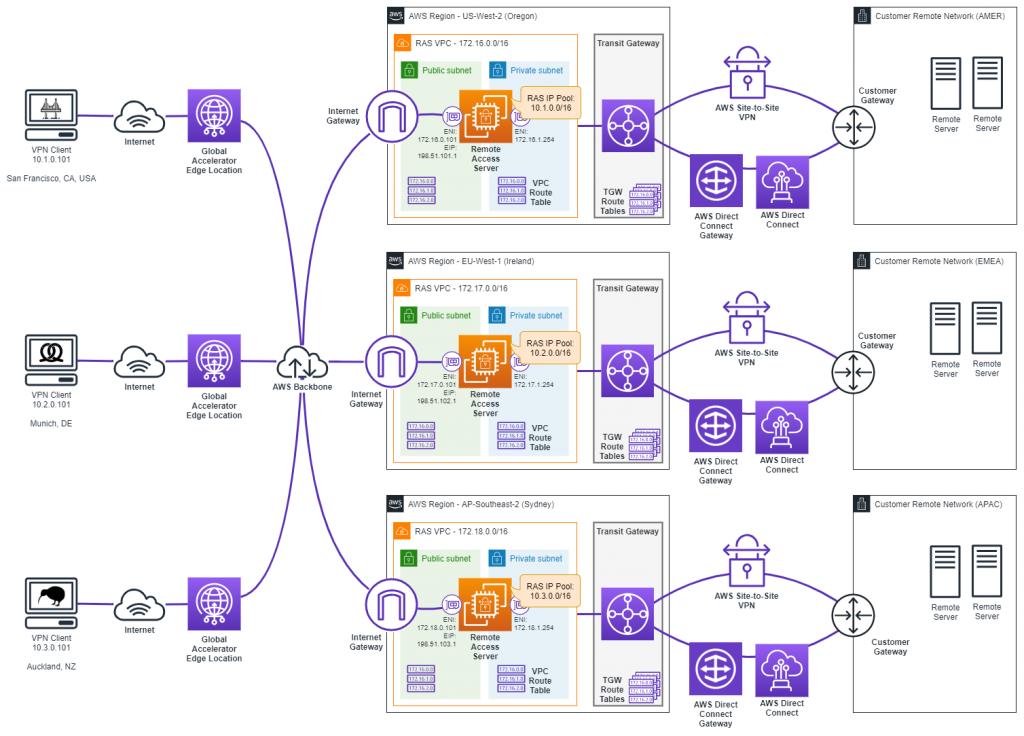

Taking your remote access VPN global

AWS has many options for supporting a global workforce, including the AWS global network, AWS edge locations, and AWS Global Accelerator. These improve latency and performance for remote VPN clients. You can use AWS Global Accelerator with client affinity set to Source IP. Also, you can use client IP address preservation to support Datagram Transport Layer Security (DTLS). In this case, traffic between VPN clients and the remote access server is routed to the optimal AWS Global Accelerator edge location. From there, traffic is sent over the AWS global network to the AWS Region hosting your remote access server. The route follows the optimal path based on the latency and performance between the AWS Global Accelerator point of presence and the AWS Region hosting your VPN endpoints. AWS Global Accelerator reacts quickly to changes in application health, your user’s location, and policies that you configure. For example, as in the diagram below, VPN clients in different locations are connected to the nearest AWS Region via AWS Global Accelerator.

Figure 5: AWS Global Accelerator routes traffic from VPN clients to the AWS edge location closest to the optimal remote access server.
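The AWS Global Accelerator settings mentioned above (client affinity set to Source IP and client IP address preservation) can be sketched as request parameters for the `CreateListener` and `CreateEndpointGroup` APIs. This is an illustration under assumptions: the VPN is assumed to listen on port 443 over both TCP and UDP (for DTLS), and the function name, ARN, Region, and instance IDs are hypothetical.

```python
def accelerator_requests(accelerator_arn, region, instance_ids, port=443):
    """Build parameters for Global Accelerator listeners and an endpoint group."""
    # One listener per protocol; SOURCE_IP affinity keeps a client's TCP and
    # UDP flows on the same remote access server.
    listeners = [
        {
            "AcceleratorArn": accelerator_arn,
            "Protocol": protocol,
            "PortRanges": [{"FromPort": port, "ToPort": port}],
            "ClientAffinity": "SOURCE_IP",
        }
        for protocol in ("TCP", "UDP")
    ]
    # EC2 instance endpoints with client IP address preservation enabled.
    endpoint_group = {
        "EndpointGroupRegion": region,
        "EndpointConfigurations": [
            {
                "EndpointId": instance_id,
                "Weight": 128,
                "ClientIPPreservationEnabled": True,
            }
            for instance_id in instance_ids
        ],
    }
    return listeners, endpoint_group
```

Each listener dictionary could be passed to `create_listener` on a boto3 `globalaccelerator` client. The endpoint group parameters additionally need the `ListenerArn` returned by that call, and each listener requires its own endpoint group.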

Final thoughts

In this blog post, we have shown common architecture patterns for a scalable remote access VPN solution. Different approaches are available, and we’ve listed the benefits and constraints of each. AWS software-defined networking with Amazon VPC and Amazon EC2 makes it possible to provision and scale secure remote access VPN solutions elastically and flexibly. Finally, with AWS Global Accelerator and Route 53, you can create a multi-Region architecture.

- Blog: Using AWS Client VPN to securely access AWS and on-premises resources

- Learn about AWS VPN services

- Watch re:Invent 2019: Connectivity to AWS and hybrid AWS network architectures