AWS Compute Blog

Enabling DNS resolution for Amazon EKS cluster endpoints

Update – December 2019

Amazon EKS now supports automatic DNS resolution for private cluster endpoints. This feature works automatically for all EKS clusters. You can still implement the solution described below, but this is not required for the majority of use cases.

Learn more in the What’s New post or Amazon EKS documentation.

This post is contributed by Jeremy Cowan – Sr. Container Specialist Solution Architect, AWS

By default, when you create an Amazon EKS cluster, the Kubernetes cluster endpoint is public. While it is accessible from the internet, access to the Kubernetes cluster endpoint is restricted by AWS Identity and Access Management (IAM) and Kubernetes role-based access control (RBAC) policies.

At some point, you may need to configure the Kubernetes cluster endpoint to be private. Changing your Kubernetes cluster endpoint access from public to private completely disables public access such that it can no longer be accessed from the internet.

In fact, a cluster that has been configured to only allow private access can only be accessed from the following:

- The VPC where the worker nodes reside

- Networks that have been peered with that VPC

- A network that has been connected to AWS through AWS Direct Connect (DX) or a virtual private network (VPN)

However, the name of the Kubernetes cluster endpoint is only resolvable from the worker node VPC, for the following reasons:

- The Amazon Route 53 private hosted zone that is created for the endpoint is only associated with the worker node VPC.

- The private hosted zone is created in a separate AWS managed account and cannot be altered.

For more information, see Working with Private Hosted Zones.

This post explains how to use Route 53 inbound and outbound endpoints to resolve the name of the cluster endpoints when a request originates outside the worker node VPC.

Route 53 inbound and outbound endpoints

Route 53 inbound and outbound endpoints allow you to simplify the configuration of hybrid DNS. DNS queries for AWS resources are resolved by Route 53 resolvers and DNS queries for on-premises resources are forwarded to an on-premises DNS resolver. However, you can also use these Route 53 endpoints to resolve the names of endpoints that are only resolvable from within a specific VPC, like the EKS cluster endpoint.

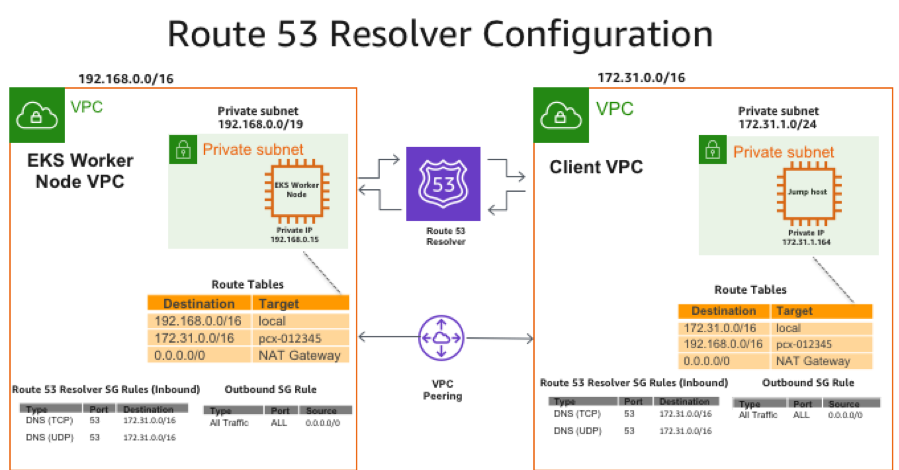

The following diagrams show how the solution works:

- A Route 53 inbound endpoint is created in each worker node VPC and associated with a security group that allows inbound DNS requests from external subnets/CIDR ranges.

- If the requests for the Kubernetes cluster endpoint originate from a peered VPC, those requests must be routed through a Route 53 outbound endpoint.

- The outbound endpoint, like the inbound endpoint, is associated with a security group that allows inbound requests that originate from the peered VPC or from other VPCs in the Region.

- A forwarding rule is created for each Kubernetes cluster endpoint. This rule routes the request through the outbound endpoint to the IP addresses of the inbound endpoints in the worker node VPC, where it is resolved by Route 53.

- The results of the DNS query for the Kubernetes cluster endpoint are then returned to the requestor.

If the request originates from an on-premises environment, you can forgo creating the outbound endpoints. Instead, you create a forwarding rule in your on-premises DNS to forward requests for the Kubernetes cluster endpoint to the IP addresses of the Route 53 inbound endpoints in the worker node VPC.

Solution overview

For this solution, follow these steps:

- Create an inbound endpoint in the worker node VPC.

- Create an outbound endpoint in a peered VPC.

- Create a forwarding rule for the outbound endpoint that sends requests to the Route 53 resolver for the worker node VPC.

- Create a security group rule to allow inbound traffic from a peered network.

- (Optional) Create a forwarding rule in your on-premises DNS for the Kubernetes cluster endpoint.

Prerequisites

EKS requires that you enable DNS hostnames and DNS resolution in each worker node VPC when you change the cluster endpoint access from public to private. This is also a prerequisite for this solution and for all solutions that use Route 53 private hosted zones.
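As a rough illustration, both VPC attributes can be turned on with the AWS CLI. This is a minimal sketch, not a definitive procedure: the `run` helper, the `enable_vpc_dns` function, and the VPC ID are hypothetical names introduced here, and by default the script only prints the commands (set `DRY_RUN=0` to execute them).

```shell
# Print commands instead of running them unless DRY_RUN=0 is set.
run() {
  if [ "${DRY_RUN:-1}" = "0" ]; then "$@"; else printf '%s\n' "$*"; fi
}

# Enable DNS resolution and DNS hostnames on a VPC.
# modify-vpc-attribute changes one attribute per call, hence two calls.
enable_vpc_dns() {
  vpc_id="$1"
  run aws ec2 modify-vpc-attribute --vpc-id "$vpc_id" --enable-dns-support '{"Value":true}'
  run aws ec2 modify-vpc-attribute --vpc-id "$vpc_id" --enable-dns-hostnames '{"Value":true}'
}

# Hypothetical VPC ID; substitute the ID of your worker node VPC.
enable_vpc_dns "vpc-0123456789abcdef0"
```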

In addition, you need a route that connects your on-premises network or VPC with the worker node VPC. In a multi-VPC environment, this can be accomplished by creating a peering connection between two or more VPCs and updating the route table in those VPCs. If you’re connecting from an on-premises environment across a DX or an IPsec VPN, you need a route to the worker node VPC.

Configuring the inbound endpoint

When you provision an EKS cluster, EKS automatically provisions two or more cross-account elastic network interfaces onto two different subnets in your worker node VPC. These network interfaces are primarily used when the control plane must initiate a connection with your worker nodes, for example, when you use kubectl exec or kubectl proxy. However, they can also be used by the workers to communicate with the Kubernetes API server.

When you change the EKS endpoint access to private, EKS associates a Route 53 private hosted zone with your worker node VPC. Within this private hosted zone, EKS creates resource records for the cluster endpoint. These records correspond to the IP addresses of the two cross-account elastic network interfaces that were created in your VPC when you provisioned your cluster.

When the IP addresses of these cross-account elastic network interfaces change, for example, when EKS replaces unhealthy control plane nodes, the resource records for the cluster endpoint are automatically updated. This allows your worker nodes to continue communicating with the cluster endpoint when you switch to private access. If you update the cluster to enable public access and disable private access, your worker nodes revert to using the public Kubernetes cluster endpoint.

When you create a Route 53 inbound endpoint in the worker node VPC, DNS queries sent to that endpoint are passed to the VPC DNS resolver of the worker node VPC. Because that resolver can see the private hosted zone, the inbound endpoint is capable of resolving the cluster endpoint.

Create an inbound endpoint in the worker node VPC

- In the Route 53 console, choose Inbound endpoints, Create Inbound endpoint.

- For Endpoint Name, enter a value such as <cluster_name>InboundEndpoint.

- For VPC in the Region, choose the VPC ID of the worker node VPC.

- For Security group for this endpoint, choose a security group that allows clients or applications from other networks to access this endpoint. For an example, see the Route 53 resolver diagram shown earlier in the post.

- Under the IP addresses section, choose an Availability Zone that corresponds to a subnet in your VPC.

- For IP address, choose Use an IP address that is selected automatically.

- Repeat the previous two steps for the second IP address.

- Choose Submit.

Or, run the following AWS CLI command:
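A minimal sketch of such a command, assuming two subnets in the worker node VPC and an existing security group. The `run` helper, the `create_inbound_endpoint` function, and all IDs are hypothetical placeholders; by default the script only prints the command (set `DRY_RUN=0` to execute it). Omitting `Ip=` from each `--ip-addresses` entry lets the service select an address automatically, which mirrors the console option above.

```shell
# Print commands instead of running them unless DRY_RUN=0 is set.
run() {
  if [ "${DRY_RUN:-1}" = "0" ]; then "$@"; else printf '%s\n' "$*"; fi
}

# Create an inbound Resolver endpoint with one auto-assigned IP in each of two subnets.
# Arguments: cluster name, security group ID, first subnet ID, second subnet ID.
create_inbound_endpoint() {
  run aws route53resolver create-resolver-endpoint \
    --name "${1}InboundEndpoint" \
    --direction INBOUND \
    --creator-request-id "${1}-inbound-$(date +%s)" \
    --security-group-ids "$2" \
    --ip-addresses "SubnetId=$3" "SubnetId=$4"
}

# Hypothetical identifiers; substitute your own.
create_inbound_endpoint my-cluster sg-0123456789abcdef0 subnet-0aaa1111 subnet-0bbb2222
```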

When you are done creating the inbound endpoint, select the endpoint from the console and choose View details. This shows you a summary of the configuration for the endpoint. Record the two IP addresses that were assigned to the inbound endpoint, as you need them later when configuring the forwarding rule.

Connecting from a peered VPC

An outbound endpoint is used to send DNS requests that cannot be resolved “locally” to an external resolver based on a set of rules.

If you are connecting to the EKS cluster from a peered VPC, create an outbound endpoint and forwarding rule in that VPC or expose an outbound endpoint from another VPC. For more information, see Forwarding Outbound DNS Queries to Your Network.

Create an outbound endpoint

- In the Route 53 console, choose Outbound endpoints, Create outbound endpoint.

- For Endpoint name, enter a value such as <cluster_name>OutboundEndpoint.

- For VPC in the Region, select the VPC ID of the VPC where you want to create the outbound endpoint, for example the peered VPC.

- For Security group for this endpoint, choose a security group that allows clients and applications from this or other VPCs to access this endpoint. For an example, see the Route 53 resolver diagram shown earlier in the post.

- Under the IP addresses section, choose an Availability Zone that corresponds to a subnet in the peered VPC.

- For IP address, choose Use an IP address that is selected automatically.

- Repeat the previous two steps for the second IP address.

- Choose Submit.

Or, run the following AWS CLI command:
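A minimal sketch, assuming two subnets in the peered VPC and an existing security group. The helper and function names and every ID, including the `rslvr-out-EXAMPLE` endpoint ID, are hypothetical; by default the script only prints the commands (set `DRY_RUN=0` to execute them). The first call creates the endpoint and returns its ID; the second lists the IP addresses for a given endpoint ID.

```shell
# Print commands instead of running them unless DRY_RUN=0 is set.
run() {
  if [ "${DRY_RUN:-1}" = "0" ]; then "$@"; else printf '%s\n' "$*"; fi
}

# Create an outbound Resolver endpoint and return only its ID.
# Arguments: cluster name, security group ID, first subnet ID, second subnet ID.
create_outbound_endpoint() {
  run aws route53resolver create-resolver-endpoint \
    --name "${1}OutboundEndpoint" \
    --direction OUTBOUND \
    --creator-request-id "${1}-outbound-$(date +%s)" \
    --security-group-ids "$2" \
    --ip-addresses "SubnetId=$3" "SubnetId=$4" \
    --query ResolverEndpoint.Id --output text
}

# List the IP addresses assigned to a Resolver endpoint.
# Argument: Resolver endpoint ID.
list_endpoint_ips() {
  run aws route53resolver list-resolver-endpoint-ip-addresses \
    --resolver-endpoint-id "$1" \
    --query 'IpAddresses[].Ip' --output text
}

# Hypothetical identifiers; substitute your own.
create_outbound_endpoint my-cluster sg-0123456789abcdef0 subnet-0ccc3333 subnet-0ddd4444
list_endpoint_ips rslvr-out-EXAMPLE
```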

This outputs the IP addresses that get assigned to the outbound endpoint.

Create a forwarding rule for the cluster endpoint

A forwarding rule is used to send DNS requests that cannot be resolved by the local resolver to another DNS resolver. For this solution to work, create a forwarding rule for each cluster endpoint to resolve through the outbound endpoint. For more information, see Values That You Specify When You Create or Edit Rules.

- In the Route 53 console, choose Rules, Create rule.

- Give your rule a name, such as <cluster_name>Rule.

- For Rule type, choose Forward.

- For Domain name, type the name of the cluster endpoint for your EKS cluster.

- For VPCs that use this rule, select all of the VPCs to which this rule should apply. If you have multiple VPCs that must access the cluster endpoint, include them in the list of VPCs.

- For Outbound endpoint, select the outbound endpoint to use to send DNS requests to the inbound endpoint of the worker node VPC.

- Under the Target IP addresses section, enter the IP addresses of the inbound endpoint that corresponds to the EKS endpoint that you entered in the Domain name field.

- Choose Submit.

Or, run the following AWS CLI command:
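A minimal sketch of the rule creation and VPC association, assuming the outbound endpoint ID and the two inbound endpoint IP addresses are already known. The helper and function names, the endpoint URL, and all IDs and addresses are hypothetical; by default the script only prints the commands (set `DRY_RUN=0` to execute them). The domain name for the rule is the cluster endpoint without its `https://` scheme, so a small helper strips it from the URL that `aws eks describe-cluster --query cluster.endpoint` returns.

```shell
# Print commands instead of running them unless DRY_RUN=0 is set.
run() {
  if [ "${DRY_RUN:-1}" = "0" ]; then "$@"; else printf '%s\n' "$*"; fi
}

# Strip the scheme from a cluster endpoint URL, leaving the FQDN.
endpoint_fqdn() {
  printf '%s\n' "${1#https://}"
}

# Create a FORWARD rule that sends queries for the cluster endpoint to the inbound endpoint.
# Arguments: cluster name, endpoint FQDN, outbound endpoint ID, inbound IP 1, inbound IP 2.
create_forwarding_rule() {
  run aws route53resolver create-resolver-rule \
    --name "${1}Rule" \
    --rule-type FORWARD \
    --creator-request-id "${1}-rule-$(date +%s)" \
    --domain-name "$2" \
    --resolver-endpoint-id "$3" \
    --target-ips "Ip=$4,Port=53" "Ip=$5,Port=53"
}

# Associate the rule with a VPC that must resolve the cluster endpoint.
# Arguments: resolver rule ID, VPC ID.
associate_rule() {
  run aws route53resolver associate-resolver-rule --resolver-rule-id "$1" --vpc-id "$2"
}

# Hypothetical values; substitute your own.
fqdn="$(endpoint_fqdn "https://EXAMPLE1234.gr7.us-west-2.eks.amazonaws.com")"
create_forwarding_rule my-cluster "$fqdn" rslvr-out-EXAMPLE 10.0.1.10 10.0.2.10
associate_rule rslvr-rr-EXAMPLE vpc-0fedcba9876543210
```

Repeat the association for each VPC that should use the rule.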

After creating the inbound and outbound endpoints and the DNS forwarding rule, you should be able to resolve the name of the cluster endpoints from the peered VPC.
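One way to check this from a machine in the peered VPC is a quick `dig` query. This is a minimal sketch: the `run` and `check_resolution` names, the endpoint FQDN, and the IP address are hypothetical, and by default the script only prints the commands (set `DRY_RUN=0` to execute them). If the rule is working, the answers should be private IP addresses from the worker node VPC.

```shell
# Print commands instead of running them unless DRY_RUN=0 is set.
run() {
  if [ "${DRY_RUN:-1}" = "0" ]; then "$@"; else printf '%s\n' "$*"; fi
}

# Resolve the cluster endpoint, optionally against a specific DNS server.
# Arguments: endpoint FQDN, optional resolver IP (for example an inbound endpoint IP).
check_resolution() {
  if [ -n "${2:-}" ]; then
    run dig +short "$1" "@$2"
  else
    run dig +short "$1"
  fi
}

# Hypothetical values; substitute your own.
check_resolution EXAMPLE1234.gr7.us-west-2.eks.amazonaws.com
check_resolution EXAMPLE1234.gr7.us-west-2.eks.amazonaws.com 10.0.1.10
```

The first query exercises the forwarding rule through the VPC's default resolver. The second bypasses the rule and asks an inbound endpoint directly, which helps separate rule problems from endpoint or security group problems.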

Before you can access the cluster endpoint, you must add the IP address range of the peered VPCs to the EKS control plane security group. For more information, see Tutorial: Creating a VPC with Public and Private Subnets for Your Amazon EKS Cluster.

Add a rule to the EKS cluster control plane security group

- In the EC2 console, choose Security Groups.

- Find the security group associated with the EKS cluster control plane. If you used eksctl to provision your cluster, the security group is named as follows: eksctl-<cluster_name>-cluster/ControlPlaneSecurityGroup.

- Add a rule that allows port 443 inbound from the CIDR range of the peered VPC.

- Choose Save.
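The same rule can be added from the AWS CLI. This is a minimal sketch: the `run` and `allow_api_access` names, the security group ID, and the CIDR range are hypothetical, and by default the script only prints the command (set `DRY_RUN=0` to execute it).

```shell
# Print commands instead of running them unless DRY_RUN=0 is set.
run() {
  if [ "${DRY_RUN:-1}" = "0" ]; then "$@"; else printf '%s\n' "$*"; fi
}

# Allow HTTPS (TCP 443) to the control plane security group from a CIDR range.
# Arguments: control plane security group ID, source CIDR range.
allow_api_access() {
  run aws ec2 authorize-security-group-ingress \
    --group-id "$1" \
    --protocol tcp \
    --port 443 \
    --cidr "$2"
}

# Hypothetical values; use your control plane security group and the peered VPC's CIDR.
allow_api_access sg-0123456789abcdef0 10.1.0.0/16
```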

Run kubectl

With the proper security group rule in place, you should now be able to issue kubectl commands from a machine in the peered VPC against the cluster endpoint.
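For example, a first connectivity test might look like the following. This is a minimal sketch, assuming the AWS CLI and kubectl are installed and that your IAM identity is authorized for the cluster. The `run` and `connect_to_cluster` names, the cluster name, and the Region are hypothetical, and by default the script only prints the commands (set `DRY_RUN=0` to execute them).

```shell
# Print commands instead of running them unless DRY_RUN=0 is set.
run() {
  if [ "${DRY_RUN:-1}" = "0" ]; then "$@"; else printf '%s\n' "$*"; fi
}

# Write a kubeconfig entry for the cluster, then query the API server.
# Arguments: cluster name, AWS Region.
connect_to_cluster() {
  run aws eks update-kubeconfig --name "$1" --region "$2"
  run kubectl get nodes
}

# Hypothetical values; substitute your own.
connect_to_cluster my-cluster us-west-2
```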

Connecting from an on-premises environment

To manage your EKS cluster from your on-premises environment, configure a forwarding rule in your on-premises DNS to forward DNS queries to the inbound endpoint of the worker node VPCs. I’ve provided brief descriptions for how to do this for BIND, dnsmasq, and Windows DNS below.

Create a forwarding zone in BIND for the cluster endpoint

Add the following to the BIND configuration file:
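A forward zone for the cluster endpoint might look like the stanza printed by the helper below. This is a minimal sketch, not a definitive configuration: the `bind_forward_zone` function, the endpoint FQDN, and the IP addresses are hypothetical, and the helper only prints text for you to place in your BIND configuration.

```shell
# Print a BIND forward-zone stanza for one domain and any number of forwarder IPs.
# Arguments: cluster endpoint FQDN, then one or more inbound endpoint IPs.
bind_forward_zone() {
  fqdn="$1"
  shift
  forwarders=""
  for ip in "$@"; do
    forwarders="${forwarders}${ip}; "
  done
  cat <<EOF
zone "${fqdn}" {
    type forward;
    forwarders { ${forwarders}};
};
EOF
}

# Hypothetical values; use your cluster endpoint FQDN and inbound endpoint IPs.
bind_forward_zone EXAMPLE1234.gr7.us-west-2.eks.amazonaws.com 10.0.1.10 10.0.2.10
```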

Create a forwarding zone in dnsmasq for the cluster endpoint

If you’re using dnsmasq, add the --server=/<cluster endpoint FQDN>/<inbound endpoint IP> flag to the startup options.
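With two inbound endpoint IP addresses, the flag is given once per address. A minimal sketch that prints the flags; the `dnsmasq_server_flags` function, the endpoint FQDN, and the IP addresses are hypothetical.

```shell
# Print one dnsmasq --server flag per inbound endpoint IP.
# Arguments: cluster endpoint FQDN, then one or more inbound endpoint IPs.
dnsmasq_server_flags() {
  fqdn="$1"
  shift
  for ip in "$@"; do
    printf '%s=/%s/%s\n' '--server' "$fqdn" "$ip"
  done
}

# Hypothetical values; use your cluster endpoint FQDN and inbound endpoint IPs.
dnsmasq_server_flags EXAMPLE1234.gr7.us-west-2.eks.amazonaws.com 10.0.1.10 10.0.2.10
```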

Create a forwarding zone in Windows DNS for the cluster endpoint

If you’re using Windows DNS, create a conditional forwarder. Use the cluster endpoint FQDN for the DNS domain and the IPs of the inbound endpoints for the IP addresses of the servers to which to forward the requests.

Add a security group rule to the cluster control plane

Follow the steps in the earlier section, Add a rule to the EKS cluster control plane security group. This time, use the CIDR range of your on-premises network instead of that of the peered VPC.

Conclusion

When you configure the EKS cluster endpoint to be private only, its name can only be resolved from the worker node VPC. To manage the cluster from another VPC or your on-premises network, you can use the solution outlined in this post to create an inbound resolver for the worker node VPC.

This inbound endpoint is a feature that allows your DNS resolvers to easily resolve domain names for AWS resources. That includes the private hosted zone that gets associated with your VPC when you make the EKS cluster endpoint private. For more information, see Resolving DNS Queries Between VPCs and Your Network. As always, I welcome your feedback about this solution.