Guidance for Automated Deployment of Inference ready Amazon EKS Clusters

Overview

This Guidance demonstrates how to rapidly establish production-ready ML inference environments on Amazon EKS. It shows how to use optimized compute resources, including GPUs, for inference performance while applying best practices for auto-scaling and cluster management. The architecture supports both experimental and large-scale production deployments of large language models (LLMs) and generative AI models. By automating infrastructure setup and including an observability stack, this Guidance lets teams focus on model deployment and business value rather than infrastructure management.

Benefits

Deploy AI inference workloads faster with pre-configured Amazon EKS clusters optimized for machine learning. This Guidance provides ready-to-use Terraform templates and Helm charts that streamline deployment from cluster creation to model serving.

Balance performance and cost efficiency with topology-aware scheduling that keeps AI/ML workloads in the same Availability Zone. Karpenter's intelligent auto-scaling provisions the right compute resources on demand, helping you avoid over-provisioning while maintaining performance for inference workloads.

Gain insight into your AI inference infrastructure with the pre-configured observability stack. The integrated Fluent Bit, Prometheus, and Grafana deployment automatically collects metrics and logs from AI/ML workloads, enabling faster troubleshooting and performance optimization.
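
To make the topology-aware scheduling benefit above more concrete, here is a minimal Python sketch of one way to keep related pods in the same Availability Zone: a Kubernetes pod affinity term keyed on the well-known `topology.kubernetes.io/zone` node label. This is an illustration under stated assumptions, not necessarily how this Guidance's Helm charts implement it. The function name `same_zone_affinity` and the `app=llm-inference` label are hypothetical.

```python
import json

# Well-known Kubernetes node label that carries the Availability Zone.
ZONE_TOPOLOGY_KEY = "topology.kubernetes.io/zone"


def same_zone_affinity(app_label: str, required: bool = False) -> dict:
    """Build a pod `affinity` stanza that co-locates pods labeled
    app=<app_label> in a single zone.

    Assumption: workloads are identified by an `app` label; the charts
    in this Guidance may use different labels or a different mechanism.
    """
    term = {
        "labelSelector": {"matchLabels": {"app": app_label}},
        "topologyKey": ZONE_TOPOLOGY_KEY,
    }
    if required:
        # Hard rule: the pod stays Pending if no node in the same zone fits.
        return {
            "podAffinity": {
                "requiredDuringSchedulingIgnoredDuringExecution": [term]
            }
        }
    # Soft rule: the scheduler prefers the same zone but may fall back.
    return {
        "podAffinity": {
            "preferredDuringSchedulingIgnoredDuringExecution": [
                {"weight": 100, "podAffinityTerm": term}
            ]
        }
    }


if __name__ == "__main__":
    # Hypothetical label value, used only for illustration.
    print(json.dumps(same_zone_affinity("llm-inference"), indent=2))
```

The soft (preferred) form is the default in this sketch because a hard zone requirement can leave pods unschedulable when capacity in that zone runs out; which form suits a given workload is a design choice.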

How it works

Provision EKS

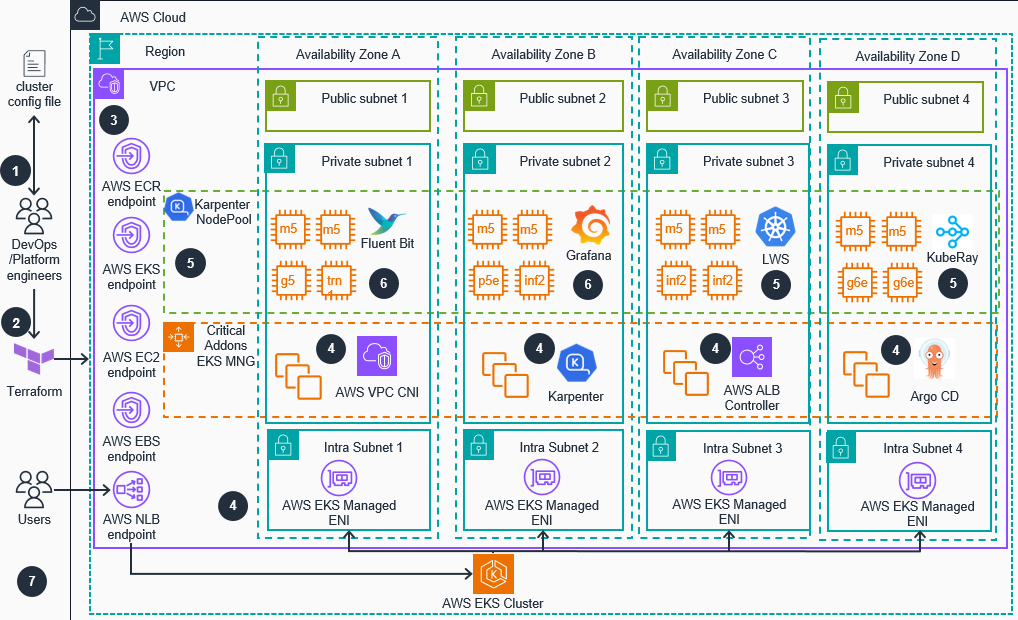

This architecture diagram shows how to provision an inference-ready Amazon Elastic Kubernetes Service (Amazon EKS) cluster configured with best practices for AI workloads.

Deploying AI Models

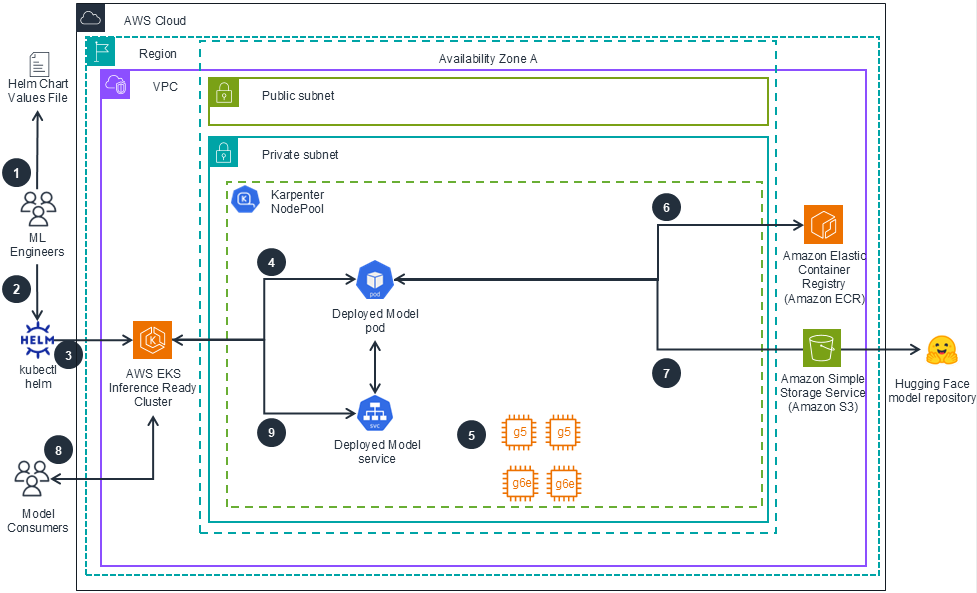

This architecture diagram highlights how AI models are deployed on the inference-ready Amazon EKS cluster using Helm templates.

Disclaimer

The sample code; software libraries; command line tools; proofs of concept; templates; or other related technology (including any of the foregoing that are provided by our personnel) is provided to you as AWS Content under the AWS Customer Agreement, or the relevant written agreement between you and AWS (whichever applies). You should not use this AWS Content in your production accounts, or on production or other critical data. You are responsible for testing, securing, and optimizing the AWS Content, such as sample code, as appropriate for production grade use based on your specific quality control practices and standards. Deploying AWS Content may incur AWS charges for creating or using AWS chargeable resources, such as running Amazon EC2 instances or using Amazon S3 storage.
