
Using VPC Traffic Mirroring to monitor and secure your AWS infrastructure

VPC Traffic Mirroring is an AWS feature that copies network traffic from the elastic network interface of an Amazon Elastic Compute Cloud (Amazon EC2) instance to a target for analysis. This makes a variety of network-based monitoring and analytics solutions possible on AWS. Because it captures the raw packet data required for content inspection, VPC Traffic Mirroring provides an agentless way to acquire network traffic to and from EC2 instances. Use cases include network security, network performance monitoring, customer experience management, and network troubleshooting. In this post, we highlight some recent enhancements, key use cases, deployment models, and automation tips for VPC Traffic Mirroring.

What’s New: Support for non-Nitro instances

We recently extended VPC Traffic Mirroring, which was previously available on AWS Nitro-based instances, to non-Nitro instance types as well. If you run a mix of instance types, this expanded coverage simplifies operations, shortens time to resolution during incident response, and makes it easier to deploy solutions from AWS Partners. VPC Traffic Mirroring now supports 12 additional non-Nitro instance types: C4, D2, G3, G3s, H1, I3, M4, P2, P3, R4, X1, and X1e.

Since we introduced VPC Traffic Mirroring on Nitro instances, customers have told us they want pervasive network visibility into their cloud workloads to simplify operations. However, having to run agents on non-Nitro instances alongside native VPC Traffic Mirroring on Nitro instances created deployment friction. For example, it prevented some customers from inspecting all east-west traffic, or it lengthened mean time to resolution (MTTR) because visibility was inconsistent.

VPC Traffic Mirroring for both Nitro and non-Nitro instances is now available in 22 Regions. It is not supported on the T2, C3, R3, and I2 instance types, or on previous-generation instances. For details, refer to the VPC Traffic Mirroring documentation.
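As a quick pre-flight check before creating sessions, the instance families named above can be encoded in a small lookup. This is a minimal sketch, not an authoritative support matrix: it only knows the 12 newly supported non-Nitro families and the four unsupported families listed in this post, so any other type (including other previous-generation types) comes back as "check documentation". The function names are hypothetical.

```python
# Instance families named in this post. NOT an authoritative support matrix:
# other previous-generation families are also unsupported but are not listed here.
NEWLY_SUPPORTED_NON_NITRO = {
    "c4", "d2", "g3", "g3s", "h1", "i3", "m4", "p2", "p3", "r4", "x1", "x1e",
}
UNSUPPORTED = {"t2", "c3", "r3", "i2"}


def instance_family(instance_type: str) -> str:
    """Return the family part of an instance type, e.g. 'm4.large' -> 'm4'."""
    return instance_type.split(".", 1)[0].lower()


def mirroring_support(instance_type: str) -> str:
    """Classify an instance type using only the lists in this post."""
    family = instance_family(instance_type)
    if family in UNSUPPORTED:
        return "unsupported"
    if family in NEWLY_SUPPORTED_NON_NITRO:
        return "supported (non-Nitro)"
    return "check documentation"


# Example: classify a small, made-up fleet.
fleet = ["m4.xlarge", "t2.micro", "c5.large", "x1e.2xlarge"]
report = {t: mirroring_support(t) for t in fleet}
```

Anything reported as "check documentation" (for example a Nitro type such as `c5.large`) should be confirmed against the VPC Traffic Mirroring documentation rather than assumed unsupported.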

Key use cases

Network and security engineers treat network traffic as the ‘source of truth’ because it provides a real-time view of communications between entities in the infrastructure. VPC Traffic Mirroring helps them address four major categories of use cases:

- Network security: Network security monitoring tools need pervasive access to network traffic to secure cloud infrastructure and workloads, and a rich partner ecosystem of network security solutions benefits from such access. These solutions combine deep packet inspection (content inspection), signature analysis, anomaly detection, and machine-learning-based techniques to provide additional protection. Categories include network detection and response (NDR), intrusion detection systems (IDS), security information and event management (SIEM), next-generation firewalls (NGFW), advanced threat prevention, and network forensics. For example, VPC Traffic Mirroring provides the granular network traffic that NDR solutions need to build context for their behavioral models. This context is essential for detecting stealthy attacks arising from insider threats, critical misconfigurations, and new types of ransomware.

- Network performance monitoring: VPC Traffic Mirroring lets network performance monitoring solutions identify performance bottlenecks and troubleshoot multi-tier applications. Agentless access to network traffic is the foundation for network observability, network performance management and diagnostics, packet capture systems, and application performance management (APM).

- Customer experience management: VPC Traffic Mirroring delivers network traffic to customer experience management systems, such as voice over IP (VoIP) and service quality analyzers.

- Network troubleshooting: VPC Traffic Mirroring assists with diagnosis of network issues, especially when visibility beyond what is available through VPC Flow Logs is needed. This includes situations when packet traces must be captured in a packet analyzer/packet capture appliance.
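If you build your own capture or troubleshooting appliance, it helps to know that VPC Traffic Mirroring delivers each mirrored packet to the target encapsulated in VXLAN (RFC 7348) over UDP port 4789, with the original Ethernet frame as the inner payload. The sketch below strips the 8-byte VXLAN header from a UDP payload your appliance has already received; the function names are hypothetical, and reading the EtherType assumes the inner frame carries no VLAN tag.

```python
import struct
from typing import Tuple

VXLAN_UDP_PORT = 4789       # destination UDP port of mirrored packets
VXLAN_HEADER_LEN = 8        # flags(1) + reserved(3) + VNI(3) + reserved(1)
VXLAN_FLAG_VNI_VALID = 0x08 # "I" flag: the VNI field is valid


def decap_vxlan(udp_payload: bytes) -> Tuple[int, bytes]:
    """Split a VXLAN UDP payload into (VNI, inner Ethernet frame)."""
    if len(udp_payload) < VXLAN_HEADER_LEN:
        raise ValueError("payload is shorter than a VXLAN header")
    # !B3xI = 1 flag byte, 3 reserved bytes, then 4 bytes holding VNI + reserved
    flags, vni_word = struct.unpack("!B3xI", udp_payload[:VXLAN_HEADER_LEN])
    if not flags & VXLAN_FLAG_VNI_VALID:
        raise ValueError("VXLAN I flag not set")
    vni = vni_word >> 8  # VNI is the top 24 bits; the low byte is reserved
    return vni, udp_payload[VXLAN_HEADER_LEN:]


def inner_ethertype(frame: bytes) -> int:
    """EtherType of an untagged Ethernet frame (0x0800 = IPv4, 0x86DD = IPv6)."""
    if len(frame) < 14:
        raise ValueError("frame is shorter than an Ethernet header")
    return struct.unpack("!H", frame[12:14])[0]
```

The VNI corresponds to the mirror session's virtual network ID, which lets one appliance tell apart traffic arriving from several sessions.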

AWS Partner Support

Several AWS Partners build on VPC Traffic Mirroring in their solutions, including the following:

- Blue Hexagon

- Check Point

- Corelight

- cPacket

- Darktrace

- ExtraHop

- FireEye

- Gigamon

- IronNet

- Kentik

- NETSCOUT

- Nubeva

- Palo Alto Networks

- Rapid7

- Riverbed

- RSA

- Salt Security

- Vectra

- VIAVI

How VPC Traffic Mirroring works

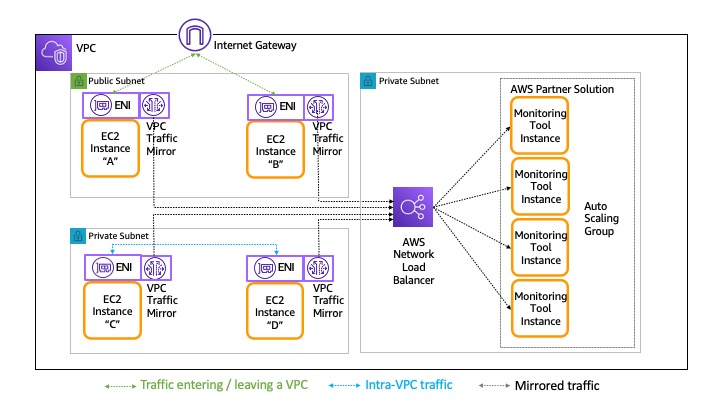

VPC Traffic Mirroring works by establishing a session between a mirroring source and a mirroring target. The following diagram (figure 1) shows how to monitor traffic entering and leaving a VPC from two EC2 instances (A and B) in the public subnet while concurrently monitoring intra-VPC traffic between two EC2 instances (C and D) in the private subnet. In this example, each of the instances A, B, C, and D is a mirroring source. The mirroring target can be an elastic network interface (ENI) attached to a virtual monitoring appliance running on an EC2 instance, or a Network Load Balancer (NLB) that distributes traffic across multiple instances of a virtual monitoring appliance. In figure 1, the virtual monitoring appliance instances are fronted by an NLB and deployed in an Auto Scaling group, so the appliance fleet can scale out or in based on load. The monitoring appliance instances and the NLB can be placed in the same VPC as the sources or in a different VPC. VPC Traffic Mirroring lets you configure filters so that only the traffic you are interested in is mirrored, which reduces the load on the network and on the monitoring appliances. Note that mirrored traffic counts toward the source instance's bandwidth, so you must factor it into the sizing of the source instances.

Figure 1: Using VPC Traffic Mirroring to monitor traffic entering/leaving a VPC and intra-VPC traffic
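The source, filter, target, and session relationship described above maps onto four EC2 API calls. The sketch below uses the boto3 EC2 client methods `create_traffic_mirror_target`, `create_traffic_mirror_filter`, `create_traffic_mirror_filter_rule`, and `create_traffic_mirror_session`. The helper name `create_mirroring`, the TCP/443-only filter, and the rule and session numbers are illustrative assumptions, not values from this post. The EC2 client is passed in as a parameter so the logic can be exercised without AWS credentials.

```python
def create_mirroring(ec2, source_eni_id, *, target_nlb_arn=None,
                     target_eni_id=None, session_number=1):
    """Mirror TCP/443 traffic of one source ENI to an NLB or ENI target.

    `ec2` is a boto3 EC2 client (or any object with the same methods).
    Returns the new traffic mirror session ID.
    """
    if (target_nlb_arn is None) == (target_eni_id is None):
        raise ValueError("pass exactly one of target_nlb_arn or target_eni_id")

    # 1. Target: an NLB fronting an appliance fleet, or a single appliance ENI.
    if target_nlb_arn is not None:
        target_args = {"NetworkLoadBalancerArn": target_nlb_arn}
    else:
        target_args = {"NetworkInterfaceId": target_eni_id}
    target = ec2.create_traffic_mirror_target(**target_args)
    target_id = target["TrafficMirrorTarget"]["TrafficMirrorTargetId"]

    # 2. Filter: accept only TCP (protocol 6) on port 443, assuming the source
    #    is an HTTPS server (inbound to :443, outbound from :443).
    mirror_filter = ec2.create_traffic_mirror_filter(Description="https-only (example)")
    filter_id = mirror_filter["TrafficMirrorFilter"]["TrafficMirrorFilterId"]
    for rule_number, direction, port_field in (
        (100, "ingress", "DestinationPortRange"),
        (200, "egress", "SourcePortRange"),
    ):
        ec2.create_traffic_mirror_filter_rule(
            TrafficMirrorFilterId=filter_id,
            TrafficDirection=direction,
            RuleNumber=rule_number,
            RuleAction="accept",
            Protocol=6,
            SourceCidrBlock="0.0.0.0/0",
            DestinationCidrBlock="0.0.0.0/0",
            **{port_field: {"FromPort": 443, "ToPort": 443}},
        )

    # 3. Session: ties the source ENI, the target, and the filter together.
    session = ec2.create_traffic_mirror_session(
        NetworkInterfaceId=source_eni_id,
        TrafficMirrorTargetId=target_id,
        TrafficMirrorFilterId=filter_id,
        SessionNumber=session_number,
    )
    return session["TrafficMirrorSession"]["TrafficMirrorSessionId"]
```

In practice you would pass `boto3.client("ec2")` as `ec2`, together with your own source ENI ID and NLB ARN. For a fleet of sources you would typically create the target and filter once and reuse their IDs across many sessions.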

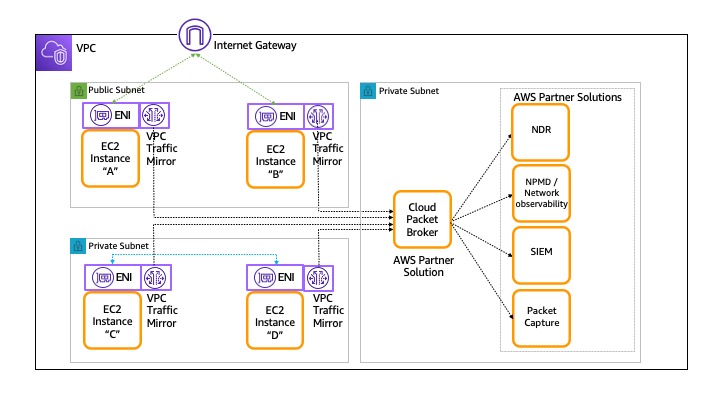

Many customers tell us they need to run several use cases at the same time. In that scenario, you may need to deploy multiple virtual monitoring appliances, and more than one appliance may need to inspect traffic from the same source. You can do this by creating multiple VPC Traffic Mirroring sessions, but that approach is more complex to administer. Instead, you can combine VPC Traffic Mirroring with a third-party cloud packet broker (for example, cPacket, Gigamon, or NETSCOUT) to feed a fleet of monitoring appliances, as shown in the following diagram (figure 2). Because the cloud packet broker processes all mirrored traffic, it must be sized accordingly. The broker may also perform advanced transformations, such as TLS decryption and de-duplication, before forwarding the right data to the right monitoring appliance. These optimizations also help reduce data transfer costs.

Figure 2: VPC Traffic Mirroring with multiple AWS Partner solutions
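The two sizing notes in this section, that mirrored traffic counts toward the source instance's bandwidth and that a cloud packet broker must process all mirrored traffic, come down to simple arithmetic. The sketch below is a back-of-the-envelope model under stated assumptions: the filter keeps a fixed fraction of each source's traffic, the per-instance broker capacity and the 30% headroom are hypothetical inputs you would replace with vendor figures, and VXLAN encapsulation overhead is ignored.

```python
import math
from typing import Sequence


def source_link_mbps(production_mbps: float, mirrored_fraction: float) -> float:
    """Bandwidth a source instance needs when the mirrored copy shares its link."""
    return production_mbps * (1 + mirrored_fraction)


def total_mirrored_mbps(sources_mbps: Sequence[float], mirrored_fraction: float) -> float:
    """Aggregate mirrored throughput arriving at the packet broker."""
    return sum(sources_mbps) * mirrored_fraction


def broker_instances(total_mbps: float, per_instance_mbps: float,
                     headroom: float = 0.3) -> int:
    """Broker instances needed so none runs above (1 - headroom) of capacity."""
    usable = per_instance_mbps * (1 - headroom)
    return max(1, math.ceil(total_mbps / usable))
```

For example, three sources at 400, 600, and 1,000 Mbps with half their traffic mirrored send 1,000 Mbps to the broker; at a hypothetical 500 Mbps per broker instance and 25% headroom, that is three instances.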

Deployment models

Many customers ask whether the deployment model should be distributed or centralized. In other words, should network-based tools be distributed closer to the workloads, or should the tools be centralized? The answer depends on the task at hand and the teams involved.

Distributed deployment model

There are at least three situations where a distributed deployment model is preferred.

- Tools close to the workload being diagnosed: Troubleshooting is typically done with a distributed deployment model, where tools are placed close to the workload being diagnosed. The following diagram (figure 3) shows an example where virtual monitoring appliances are deployed within the same VPC or account as the workload. Deploying the monitoring appliance close to the workload can often lower data transfer costs. When calculating the total cost of deployment, however, also account for the cost of running a larger number of locally deployed monitoring appliance instances.

Figure 3: Example distributed deployment model

- Ownership of app and monitoring responsibilities: If the team responsible for troubleshooting a workload also owns the workload and its VPC, that app team can create traffic mirroring sessions close to its applications, in the VPCs it owns and controls.

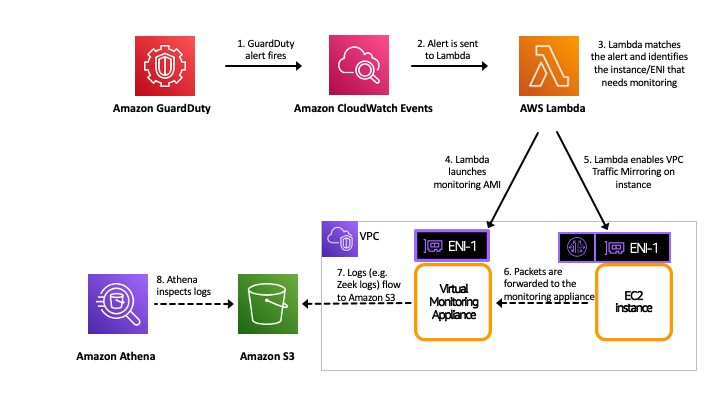

- Unplanned / on-demand traffic inspection: In some scenarios, mirrored network traffic complements security mechanisms that are already in place by enabling more in-depth analysis. In these situations, VPC Traffic Mirroring sessions are created on demand to investigate a specific issue and removed once the investigation is done. For example, a critical Amazon GuardDuty finding can trigger an AWS Lambda function that dynamically sets up a virtual monitoring appliance and then creates a VPC Traffic Mirroring session. This session mirrors traffic from the suspected source of the problem to the monitoring appliance. Once the diagnosis is complete, the Lambda function deletes the mirroring session and the target instance. Because the session is short-lived, cost may not be a major factor, even in a distributed deployment model. The following diagram (figure 4) shows how to set up on-demand traffic inspection. (The diagram does not show the Lambda actions that tear down the VPC Traffic Mirroring session and monitoring appliance after the analysis is complete.)

Figure 4: On-demand traffic inspection
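A minimal sketch of the on-demand flow, assuming the Lambda function is invoked by an Amazon EventBridge rule for GuardDuty findings (so the finding arrives in `event["detail"]`) and, to keep it short, that the mirror target and filter already exist instead of being created per incident. The environment variables `TARGET_ID` and `FILTER_ID`, the severity threshold of 7.0, and the tag key are hypothetical choices. `stop_inspection` corresponds to the teardown step that the diagram leaves out.

```python
import os

MIN_SEVERITY = 7.0  # hypothetical threshold; GuardDuty "High" starts at 7.0


def eni_ids_from_finding(finding: dict) -> list:
    """ENI IDs of the EC2 instance a GuardDuty finding refers to (if any)."""
    resource = finding.get("resource", {})
    if resource.get("resourceType") != "Instance":
        return []
    nics = resource.get("instanceDetails", {}).get("networkInterfaces", [])
    return [nic["networkInterfaceId"] for nic in nics if "networkInterfaceId" in nic]


def start_inspection(ec2, event: dict, target_id: str, filter_id: str,
                     min_severity: float = MIN_SEVERITY) -> list:
    """Create one mirror session per ENI of the instance named in the finding."""
    finding = event.get("detail", {})
    if finding.get("severity", 0) < min_severity:
        return []
    session_ids = []
    for eni_id in eni_ids_from_finding(finding):
        resp = ec2.create_traffic_mirror_session(
            NetworkInterfaceId=eni_id,
            TrafficMirrorTargetId=target_id,
            TrafficMirrorFilterId=filter_id,
            SessionNumber=1,  # assumes no other session on this ENI uses 1
            TagSpecifications=[{
                "ResourceType": "traffic-mirror-session",
                "Tags": [{"Key": "guardduty-finding-id",
                          "Value": finding.get("id", "unknown")}],
            }],
        )
        session_ids.append(resp["TrafficMirrorSession"]["TrafficMirrorSessionId"])
    return session_ids


def stop_inspection(ec2, session_ids: list) -> None:
    """Teardown: delete the sessions once the diagnosis is complete."""
    for session_id in session_ids:
        ec2.delete_traffic_mirror_session(TrafficMirrorSessionId=session_id)


def lambda_handler(event, context):
    import boto3  # bundled with the AWS Lambda Python runtime
    return start_inspection(boto3.client("ec2"), event,
                            os.environ["TARGET_ID"], os.environ["FILTER_ID"])
```

Tagging each session with the finding ID is one way to let a later cleanup step find the sessions that belong to a given investigation.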

Centralized deployment model

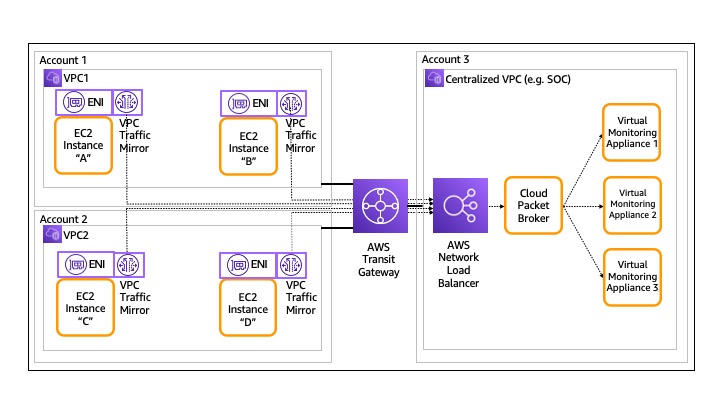

Organizations may have multiple owners for a single use case. For example, one team may be responsible for developing and deploying the application, while another team manages the operational health or security of the deployed cloud infrastructure. Similarly, an InfoSec team operating a Security Operations Center (SOC) may be responsible for monitoring multiple accounts. This separation of responsibilities lends itself to a centralized monitoring architecture. In this architecture, shown in the following diagram (figure 5), virtual monitoring appliances are deployed to monitor traffic across multiple VPCs and accounts in the enterprise. App owners rarely, if ever, give the owners of a monitoring appliance permission to install agents on their workloads; they are more likely to grant monitoring teams agentless access to network traffic. A centralized monitoring model is also a good option when monitoring a large amount of infrastructure.

Figure 5: A centralized deployment model

In a centralized deployment model, the target monitoring/security appliances must ingest and process data from multiple sources. Hence, we recommend using an NLB as a target with monitoring appliance instances behind it in an Auto Scaling group. Additionally, as discussed earlier, a cloud packet broker may be required if multiple types of monitoring appliances are used.

When considering a centralized model, it is important to estimate the data transfer costs (both inter-AZ and inter-Region), and perform a total cost of deployment analysis. This analysis is also useful in determining monitoring boundaries for the chosen deployment model.
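A total cost of deployment comparison can start from a very small model: appliance instance costs plus mirrored data that crosses AZ or Region boundaries. In the sketch below, every number is a placeholder rather than AWS pricing, and the model deliberately leaves out other charges such as Traffic Mirroring's own usage fees and NLB costs; look up current pricing before relying on any result. The `Scenario` class is hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Scenario:
    """Monthly cost inputs for one deployment model (all values are placeholders)."""
    appliances: int                 # monitoring appliance instances
    appliance_monthly_usd: float    # cost of one appliance instance per month
    cross_boundary_gb: float        # mirrored GB/month crossing AZ or Region boundaries
    transfer_usd_per_gb: float      # placeholder data transfer rate

    def monthly_cost(self) -> float:
        return (self.appliances * self.appliance_monthly_usd
                + self.cross_boundary_gb * self.transfer_usd_per_gb)


# Distributed: one appliance per VPC; mirrored traffic assumed to stay local.
distributed = Scenario(appliances=10, appliance_monthly_usd=200.0,
                       cross_boundary_gb=0.0, transfer_usd_per_gb=0.02)
# Centralized: a smaller shared fleet, but mirrored traffic crosses AZs.
centralized = Scenario(appliances=3, appliance_monthly_usd=200.0,
                       cross_boundary_gb=50_000.0, transfer_usd_per_gb=0.02)
```

With these placeholder inputs the centralized option costs less (1,600 vs. 2,000 per month), but doubling the cross-boundary volume to 100,000 GB reverses the result (2,600 vs. 2,000). That sensitivity is why the analysis is worth repeating for each environment.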

Automation

VPC Traffic Mirroring currently operates at the elastic network interface of an EC2 compute instance. When the use cases we discussed earlier are implemented, administrators may need to enable traffic mirroring across all compute instances in a subnet. There are multiple ways to automate this process and implement a continuous monitoring strategy that detects new instances as they are spun up, and automatically adds them as sources for mirroring sessions. Security teams can use these automations to ensure the right coverage for their network security monitoring deployments in AWS. The following are two examples.

We have built an AWS CloudFormation automation stack based on the AWS Serverless Application Model (AWS SAM) framework. It quickly sets up mirroring based on a VPC, a subnet, or input tags. Because VPC Traffic Mirroring now supports most current instance types, this automation is handy for rapidly adding or removing traffic sources during troubleshooting. It is also helpful when you need to automatically mirror traffic as new instances are launched, for example as part of a scale-out policy in an Auto Scaling group. For details on how we did this, refer to this GitHub repository.
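The core of a subnet-based setup like the one described above can be sketched in a few boto3 calls: list the ENIs of running instances in a subnet, skip those already mirrored to the target, and create sessions for the rest. This is not the code from the GitHub repository. The function names are hypothetical, the sketch assumes an existing target and filter, and it does not pre-filter unsupported instance types, so `create_traffic_mirror_session` raises an error for those. Swapping the `subnet-id` filter for `vpc-id` or a `tag:<key>` filter gives VPC- and tag-based variants.

```python
def _pages(call, **kwargs):
    """Yield every page of a paginated EC2 Describe* call by following NextToken."""
    while True:
        page = call(**kwargs)
        yield page
        token = page.get("NextToken")
        if not token:
            return
        kwargs["NextToken"] = token


def mirror_subnet(ec2, subnet_id, target_id, filter_id, session_number=1):
    """Create mirror sessions for unmirrored ENIs in `subnet_id`; return their IDs."""
    # ENIs that already have a session pointing at this target.
    existing = {
        s["NetworkInterfaceId"]
        for page in _pages(ec2.describe_traffic_mirror_sessions,
                           Filters=[{"Name": "traffic-mirror-target-id",
                                     "Values": [target_id]}])
        for s in page["TrafficMirrorSessions"]
    }
    created = []
    instance_filters = [
        {"Name": "subnet-id", "Values": [subnet_id]},
        {"Name": "instance-state-name", "Values": ["running"]},
    ]
    for page in _pages(ec2.describe_instances, Filters=instance_filters):
        for reservation in page["Reservations"]:
            for instance in reservation["Instances"]:
                for nic in instance.get("NetworkInterfaces", []):
                    eni_id = nic["NetworkInterfaceId"]
                    # Secondary ENIs may live in other subnets; skip those too.
                    if nic.get("SubnetId") != subnet_id or eni_id in existing:
                        continue
                    ec2.create_traffic_mirror_session(
                        NetworkInterfaceId=eni_id,
                        TrafficMirrorTargetId=target_id,
                        TrafficMirrorFilterId=filter_id,
                        SessionNumber=session_number,
                    )
                    created.append(eni_id)
    return created
```

Because already-mirrored ENIs are skipped, the sweep can be re-run on a schedule to pick up instances launched since the last run.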

Another automation example is the AutoMirror open source project, which automatically creates VPC Traffic Mirroring sessions based on AWS tags. AutoMirror is a Lambda function that monitors the state of EC2 instances. When an instance is created or rebooted, the AutoMirror function is invoked; it checks whether the instance has the “Mirror” tag and, if so, creates a mirror session for supported instance types. The AutoMirror application is available in the AWS Serverless Application Repository, and you can get started with it from the AWS Management Console.
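The tag-driven pattern can be sketched as a handler for the Amazon EventBridge "EC2 Instance State-change Notification" event, which carries `instance-id` and `state` in its `detail`. This illustrates the pattern and is not AutoMirror's actual code. It reacts only to the `running` state, assumes a pre-existing target and filter, and treats any tag with the key `Mirror` as an opt-in regardless of its value. The function names are hypothetical.

```python
MIRROR_TAG_KEY = "Mirror"  # tag key from the post; its value is ignored here


def has_mirror_tag(instance: dict) -> bool:
    """True if the instance carries a tag whose key is MIRROR_TAG_KEY."""
    return any(tag.get("Key") == MIRROR_TAG_KEY for tag in instance.get("Tags", []))


def on_state_change(ec2, event: dict, target_id: str, filter_id: str) -> list:
    """Mirror every ENI of a newly running instance that carries the Mirror tag."""
    detail = event.get("detail", {})
    if detail.get("state") != "running":
        return []
    resp = ec2.describe_instances(InstanceIds=[detail["instance-id"]])
    created = []
    for reservation in resp["Reservations"]:
        for instance in reservation["Instances"]:
            if not has_mirror_tag(instance):
                continue
            for nic in instance.get("NetworkInterfaces", []):
                eni_id = nic["NetworkInterfaceId"]
                # Skip ENIs that already have a session (e.g. from an earlier run).
                existing = ec2.describe_traffic_mirror_sessions(
                    Filters=[{"Name": "network-interface-id", "Values": [eni_id]}]
                )["TrafficMirrorSessions"]
                if existing:
                    continue
                session = ec2.create_traffic_mirror_session(
                    NetworkInterfaceId=eni_id,
                    TrafficMirrorTargetId=target_id,
                    TrafficMirrorFilterId=filter_id,
                    SessionNumber=1,
                )
                created.append(session["TrafficMirrorSession"]["TrafficMirrorSessionId"])
    return created
```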

Conclusion

VPC Traffic Mirroring gives you deeper visibility into your AWS infrastructure by providing an agentless method to acquire network traffic to and from Amazon EC2 instances and send it to a virtual monitoring or security appliance. This capability lets you address use cases in network security, network performance monitoring, customer experience management, and network troubleshooting. We reviewed the basic operation of VPC Traffic Mirroring and how it can be deployed using distributed and centralized architecture models. In addition, you can use VPC Traffic Mirroring with AWS Partner solutions to implement both continuous and on-demand monitoring strategies and gain deeper visibility into your VPCs and workloads.