AWS News Blog

AWS DeepComposer – Now Generally Available With New Features

AWS DeepComposer, a creative way to get started with machine learning, was launched in preview at AWS re:Invent 2019. Today, I’m extremely happy to announce that DeepComposer is now available to all AWS customers, and that it has been expanded with new features.

A primer on AWS DeepComposer

If you’re new to AWS DeepComposer, here’s how to get started.

- Log into the AWS DeepComposer console.

- Learn about the service and how it uses generative AI.

- Record a short musical tune, using either the virtual keyboard in the console, or a physical keyboard available for order on Amazon.com.

- Select a pretrained model for your favorite genre.

- Use this model to generate a new polyphonic composition based on your tune.

- Play the composition in the console.

- Export the composition, or share it on SoundCloud.

Now let’s look at the new features, which make it even easier to get started with generative AI.

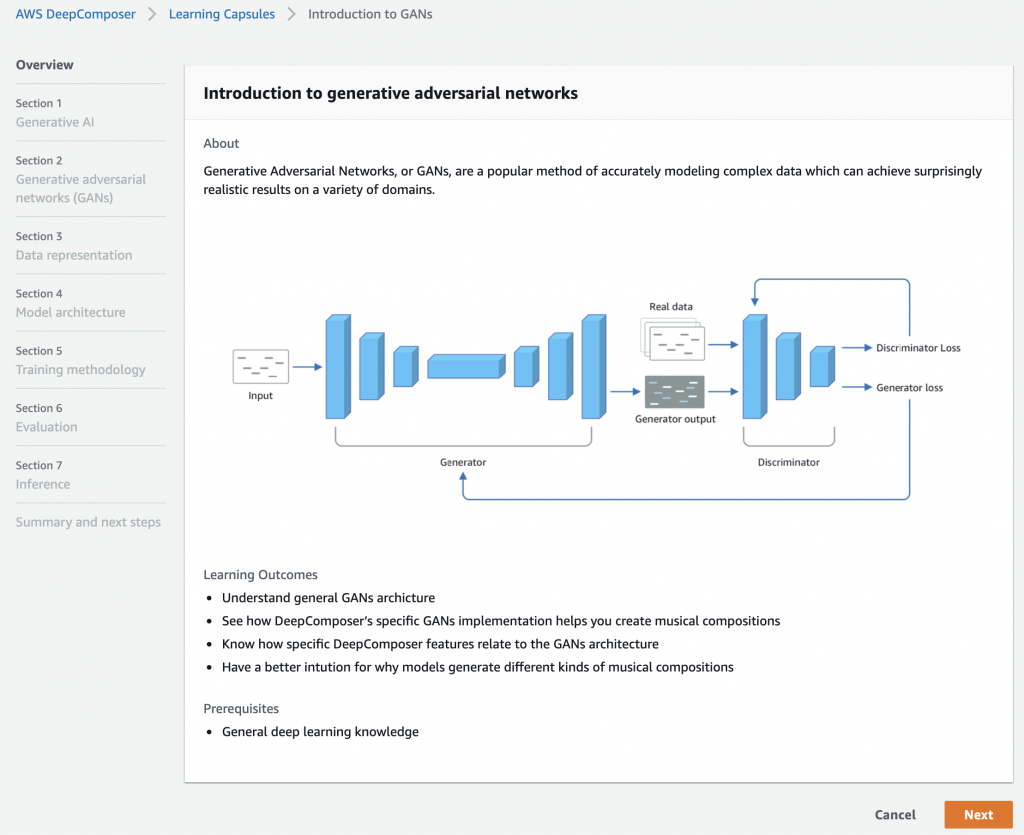

Learning Capsules

DeepComposer is powered by Generative Adversarial Networks (aka GANs, research paper), a neural network architecture built specifically to generate new samples from an existing data set. A GAN pits two different neural networks against each other to produce original digital works based on sample inputs: with DeepComposer, you can train and optimize GAN models to create original music.
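To make the "two networks pitted against each other" idea concrete, here is a minimal sketch of the standard GAN objectives in plain Python. One network (the generator) produces samples, and the other (the discriminator) scores how likely each sample is to be real. The function names and the example scores are illustrative assumptions, not DeepComposer code, and the models shipped with the service may use different loss functions.

```python
import math

def discriminator_loss(real_scores, fake_scores):
    """Binary cross-entropy for the discriminator.

    Scores are probabilities in (0, 1): the discriminator is rewarded for
    scoring real samples near 1 and generated samples near 0.
    """
    real_term = sum(-math.log(p) for p in real_scores) / len(real_scores)
    fake_term = sum(-math.log(1.0 - p) for p in fake_scores) / len(fake_scores)
    return real_term + fake_term

def generator_loss(fake_scores):
    """Non-saturating generator loss.

    The generator is rewarded when the discriminator scores its
    samples near 1, i.e. when it is fooled.
    """
    return sum(-math.log(p) for p in fake_scores) / len(fake_scores)

# Hypothetical discriminator outputs for two real and two generated samples.
confident = discriminator_loss([0.9, 0.8], [0.1, 0.2])
undecided = discriminator_loss([0.5, 0.5], [0.5, 0.5])
print(confident < undecided)  # a discriminator that separates the two has lower loss
```

Training alternates between the two: one step lowers the discriminator loss, the next lowers the generator loss, and the generator's output improves as the discriminator gets harder to fool.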

Until now, developers interested in building skills with GANs haven’t had an easy way to get started. To help them regardless of their background in ML or music, we are building a collection of easy learning capsules that introduce key concepts and show how to train and evaluate GANs. This includes a hands-on lab with step-by-step instructions and code to build a GAN model.

Once you’re familiar with GANs, you’ll be ready to move on to training your own model!

In-console Training

You now have the ability to train your own generative model right in the DeepComposer console, without having to write a single line of machine learning code.

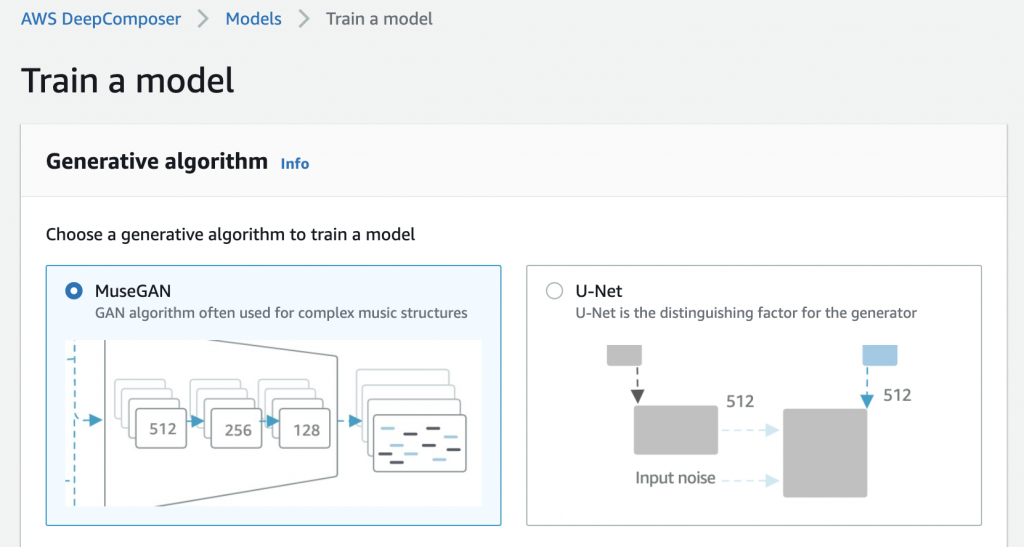

First, let’s select a GAN architecture:

- MuseGAN, by Hao-Wen Dong, Wen-Yi Hsiao, Li-Chia Yang and Yi-Hsuan Yang (research paper, GitHub): MuseGAN has been specifically designed for generating music. The generator in MuseGAN is composed of a shared network that learns a high-level representation of the song, and a series of private networks that learn how to generate individual music tracks.

- U-Net, by Olaf Ronneberger, Philipp Fischer and Thomas Brox (research paper, project page): U-Net has been extremely successful in the image translation domain (e.g. converting winter images to summer images), and it can also be used for music generation. It’s a simpler architecture than MuseGAN, and therefore easier for beginners to understand. If you’re curious what’s happening under the hood, you can learn more about the U-Net architecture in this Jupyter notebook.
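The shared/private split in the MuseGAN generator can be sketched in a few lines of plain Python. This is a toy illustration only: untrained random linear maps stand in for the real networks, and the track names, layer sizes, and the `build_generator` helper are all assumptions made for the example, not the architecture used by the service.

```python
import random

TRACKS = ["drums", "bass", "guitar", "strings"]  # illustrative track names

def make_linear(n_in, n_out, rng):
    """Return a random, untrained linear layer as a plain function."""
    weights = [[rng.uniform(-1, 1) for _ in range(n_in)] for _ in range(n_out)]
    def layer(x):
        return [sum(w * xi for w, xi in zip(row, x)) for row in weights]
    return layer

def build_generator(latent_dim=8, shared_dim=16, steps=4, pitches=12, seed=0):
    rng = random.Random(seed)
    shared = make_linear(latent_dim, shared_dim, rng)  # one network for the whole song
    private = {t: make_linear(shared_dim, steps * pitches, rng) for t in TRACKS}

    def generate(z):
        song_repr = shared(z)  # computed once, reused by every track
        rolls = {}
        for track, net in private.items():
            flat = net(song_repr)
            # Threshold into a binary piano roll of shape steps x pitches.
            rolls[track] = [
                [1 if flat[s * pitches + p] > 0 else 0 for p in range(pitches)]
                for s in range(steps)
            ]
        return rolls

    return generate

generate = build_generator()
rolls = generate([0.5] * 8)
print(sorted(rolls))  # one piano roll per track
```

Because every private network reads the same shared representation, the tracks are generated from a common view of the song rather than independently of each other.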

Let’s go with MuseGAN, and give the new model a name.

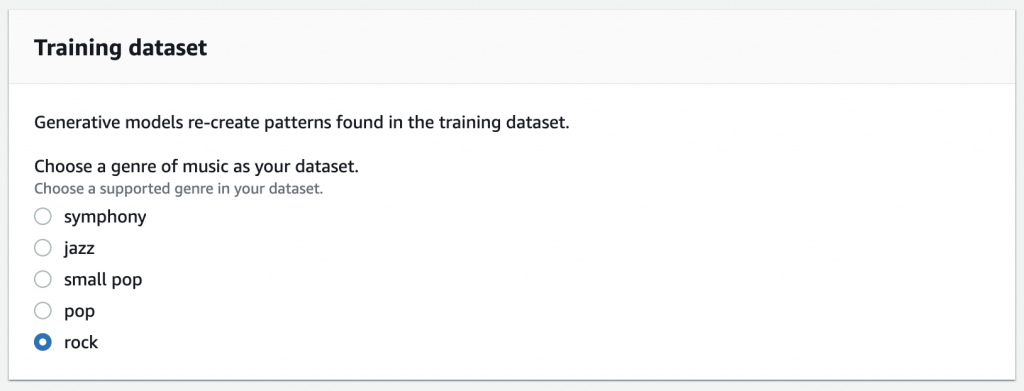

Next, I just have to pick the dataset I want to train my model on.

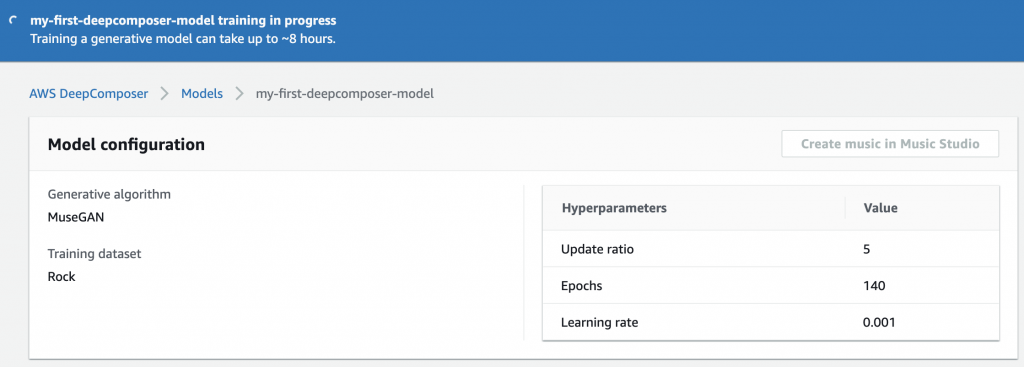

Optionally, I can also set hyperparameters (i.e. training parameters), but I’ll go with default settings this time. Finally, I click on ‘Start training’, and AWS DeepComposer fires up a training job, taking care of all the infrastructure and machine learning setup for me.

About 8 hours later, the model has been trained, and I can use it to generate compositions. Here, I can enable the new ‘rhythm assist’ feature, which helps correct the timing of the notes in my input and makes sure they are in time with the beat.
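If you’re wondering what correcting note timing can look like, a common technique is quantization: snapping each note to the nearest position on a beat grid. The sketch below is a minimal example of that idea, not the actual rhythm assist implementation. The `quantize_notes` function, the `(pitch, start, duration)` note format, and the default tempo and grid resolution are all assumptions.

```python
def quantize_notes(notes, bpm=120, subdivisions=4):
    """Snap notes to the nearest grid position.

    notes: list of (pitch, start_seconds, duration_seconds) tuples.
    bpm: tempo in beats per minute.
    subdivisions: grid steps per beat (4 = sixteenth notes in 4/4 time).
    """
    grid = 60.0 / bpm / subdivisions  # length of one grid step in seconds
    quantized = []
    for pitch, start, duration in notes:
        snapped_start = round(start / grid) * grid
        # Keep every note at least one grid step long.
        snapped_duration = max(grid, round(duration / grid) * grid)
        quantized.append((pitch, snapped_start, snapped_duration))
    return quantized

# Two slightly off-beat notes, as (MIDI pitch, start, duration).
played = [(60, 0.13, 0.24), (62, 0.49, 0.05)]
fixed = quantize_notes(played)
print(fixed)
```

At 120 beats per minute with four subdivisions per beat, the grid step is 0.125 seconds, so a note played at 0.13 seconds is pulled back to 0.125 seconds.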

Getting started

AWS DeepComposer is available today in the US East (N. Virginia) Region.

The service includes a 12-month Free Tier for all AWS customers, so you can generate 500 compositions using our sample models at no cost.

In addition to the Free Tier, ordering the keyboard from Amazon.com in the US and linking it to the DeepComposer console will get you another 3 months of free trial!

Give AWS DeepComposer a try, and let us know what you think! You can send your feedback through your usual AWS Support contacts, or on the AWS Forum for DeepComposer.

- Julien