AWS Compute Blog

Bringing Datacenter-Scale Hardware-Software Co-design to the Cloud with FireSim and Amazon EC2 F1 Instances

The recent addition of Xilinx FPGAs to AWS Cloud compute offerings is one way that AWS is enabling global growth in the areas of advanced analytics, deep learning and AI. The customized F1 servers use pooled accelerators, enabling interconnectivity of up to 8 FPGAs, each one including 64 GiB DDR4 ECC protected memory, with a dedicated PCIe x16 connection. That makes this a powerful engine with the capacity to process advanced analytical applications at scale, at a significantly faster rate. For example, AWS commercial partner Edico Genome is able to achieve an approximately 30X speedup in analyzing whole genome sequencing datasets using their DRAGEN platform powered with F1 instances.

While the availability of FPGA F1 compute on-demand provides clear accessibility and cost advantages, many mainstream users are still finding that the “threshold to entry” in developing or running FPGA-accelerated simulations is too high. Researchers at the UC Berkeley RISE Lab have developed “FireSim”, powered by Amazon FPGA F1 instances as an open-source resource, FireSim lowers that entry bar and makes it easier for everyone to leverage the power of an FPGA-accelerated compute environment. Whether you are part of a small start-up development team or working at a large datacenter scale, hardware-software co-design enables faster time-to-deployment, lower costs, and more predictable performance. We are excited to feature FireSim in this post from Sagar Karandikar and his colleagues at UC-Berkeley.

―Mia Champion, Sr. Data Scientist, AWS

As traditional hardware scaling nears its end, the data centers of tomorrow are trending towards heterogeneity, employing custom hardware accelerators and increasingly high-performance interconnects. Prototyping new hardware at scale has traditionally been either extremely expensive, or very slow. In this post, I introduce FireSim, a new hardware simulation platform under development in the computer architecture research group at UC Berkeley that enables fast, scalable hardware simulation using Amazon EC2 F1 instances.

FireSim benefits both hardware and software developers working on new rack-scale systems: software developers can use the simulated nodes with new hardware features as they would use a real machine, while hardware developers have full control over the hardware being simulated and can run real software stacks while hardware is still under development. In conjunction with this post, we’re releasing the first public demo of FireSim, which lets you deploy your own 8-node simulated cluster on an F1 Instance and run benchmarks against it. This demo simulates a pre-built “vanilla” cluster, but demonstrates FireSim’s high performance and usability.

Why FireSim + F1?

FPGA-accelerated hardware simulation is by no means a new concept. However, previous attempts to use FPGAs for simulation have been fraught with usability, scalability, and cost issues. FireSim takes advantage of EC2 F1 and open-source hardware to address the traditional problems with FPGA-accelerated simulation:

Problem #1: FPGA-based simulations have traditionally been expensive, difficult to deploy, and difficult to reproduce.

FireSim uses public-cloud infrastructure like F1, which means no upfront cost to purchase and deploy FPGAs. Developers and researchers can distribute pre-built AMIs and AFIs, as in this public demo (more details later in this post), to make experiments easy to reproduce. FireSim also automates most of the work involved in deploying an FPGA simulation, essentially enabling one-click conversion from new RTL to deploying on an FPGA cluster.

Problem #2: FPGA-based simulations have traditionally been difficult (and expensive) to scale.

Because FireSim uses F1, users can scale out experiments by spinning up additional EC2 instances, rather than spending hundreds of thousands of dollars on large FPGA clusters.

Problem #3: Finding open hardware to simulate has traditionally been difficult. Finding open hardware that can run real software stacks is even harder.

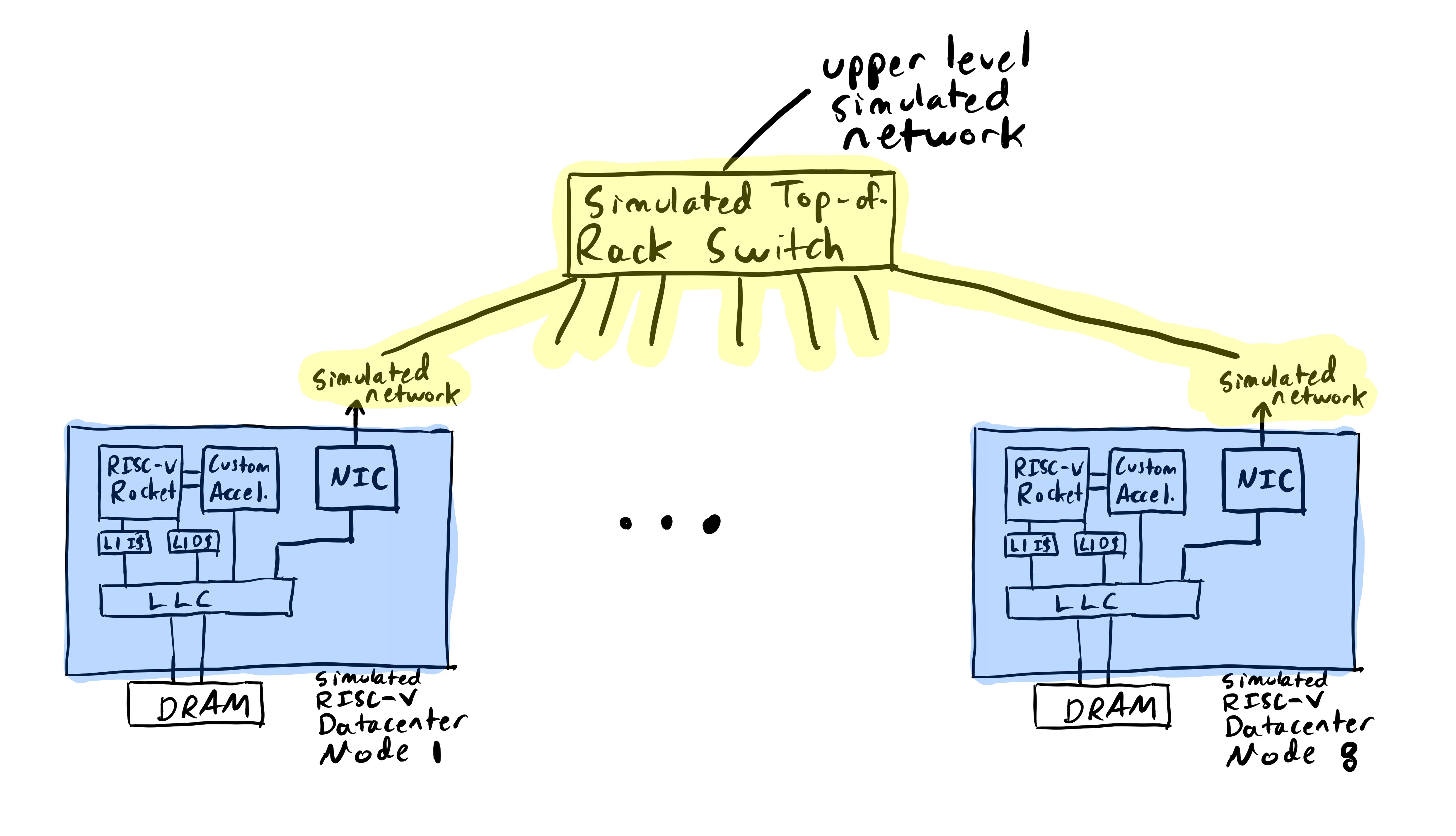

FireSim simulates RocketChip, an open, silicon-proven, RISC-V-based processor platform, and adds peripherals like a NIC and disk device to build up a realistic system. Processors that implement RISC-V automatically support real operating systems (such as Linux) and even support applications like Apache and Memcached. We provide a custom Buildroot-based FireSim Linux distribution that runs on our simulated nodes and includes many popular developer tools.

Problem #4: Writing hardware in traditional HDLs is time-consuming.

Both FireSim and RocketChip use the Chisel HDL, which brings modern programming paradigms to hardware description languages. Chisel greatly simplifies the process of building large, highly parameterized hardware components.

How to use FireSim for hardware/software co-design

FireSim drastically improves the process of co-designing hardware and software by acting as a push-button interface for collaboration between hardware developers and systems software developers. The following diagram describes the workflows that hardware and software developers use when working with FireSim.

The hardware developer’s view:

- Write custom RTL for your accelerator, peripheral, or processor modification in a productive language like Chisel.

- Run a software simulation of your hardware design in standard gate-level simulation tools for early-stage debugging.

- Run FireSim build scripts, which automatically build your simulation, run it through the Vivado toolchain/AWS shell scripts, and publish an AFI.

- Deploy your simulation on EC2 F1 using the generated simulation driver and AFI

- Run real software builds released by software developers to benchmark your hardware

The software developer’s view:

- Deploy the AMI/AFI generated by the hardware developer on an F1 instance to simulate a cluster of nodes (or scale out to many F1 nodes for larger simulated core-counts).

- Connect using SSH into the simulated nodes in the cluster and boot the Linux distribution included with FireSim. This distribution is easy to customize, and already supports many standard software packages.

- Directly prototype your software using the same exact interfaces that the software will see when deployed on the real future system you’re prototyping, with the same performance characteristics as observed from software, even at scale.

FireSim demo v1.0

This first public demo of FireSim focuses on the aforementioned “software-developer’s view” of the custom hardware development cycle. The demo simulates a cluster of 1 to 8 RocketChip-based nodes, interconnected by a functional network simulation. The simulated nodes work just like “real” machines: they boot Linux, you can connect to them using SSH, and you can run real applications on top. The nodes can see each other (and the EC2 F1 instance on which they’re deployed) on the network and communicate with one another. While the demo currently simulates a pre-built “vanilla” cluster, the entire hardware configuration of these simulated nodes can be modified after FireSim is open-sourced.

In this post, I walk through bringing up a single-node FireSim simulation for experienced EC2 F1 users. For more detailed instructions for new users and instructions for running a larger 8-node simulation, see FireSim Demo v1.0 on Amazon EC2 F1. Both demos walk you through setting up an instance from a demo AMI/AFI and booting Linux on the simulated nodes. The full demo instructions also walk you through an example workload, running Memcached on the simulated nodes, with YCSB as a load generator to demonstrate network functionality.

Deploying the demo on F1

In this release, we provide pre-built binaries for driving simulation from the host and a pre-built AFI that contains the FPGA infrastructure necessary to simulate a RocketChip-based node.

Starting your F1 instances

First, launch an instance using the free FireSim Demo v1.0 product available on the AWS Marketplace on an f1.2xlarge instance. After your instance has booted, log in using the user name centos. On the first login, you should see the message “FireSim network config completed.” This sets up the necessary tap interfaces and bridge on the EC2 instance to enable communicating with the simulated nodes.

AMI contents

The AMI contains a variety of tools to help you run simulations and build software for RISC-V systems, including the riscv64 toolchain, a Buildroot-based Linux distribution that runs on the simulated nodes, and the simulation driver program. For more details, see the AMI Contents section on the FireSim website.

Single-node demo

First, you need to flash the FPGA with the FireSim AFI. To do so, run:

[centos@ip-IP_ADDR ~]$ sudo fpga-load-local-image -S 0 -I agfi-00a74c2d615134b21

To start a simulation, run the following at the command line:

[centos@ip-IP_ADDR ~]$ boot-firesim-singlenode

This automatically calls the simulation driver, telling it to load the Linux kernel image and root filesystem for the Linux distro. This produces output similar to the following:

Simulations Started. You can use the UART console of each simulated node by attaching to the following screens:

There is a screen on:

2492.fsim0 (Detached)

1 Socket in /var/run/screen/S-centos.

You could connect to the simulated UART console by connecting to this screen, but instead opt to use SSH to access the node instead.

First, ping the node to make sure it has come online. This is currently required because nodes may get stuck at Linux boot if the NIC does not receive any network traffic. For more information, see Troubleshooting/Errata. The node is always assigned the IP address 192.168.1.10:

[centos@ip-IP_ADDR ~]$ ping 192.168.1.10

This should eventually produce the following output:

PING 192.168.1.10 (192.168.1.10) 56(84) bytes of data.

From 192.168.1.1 icmp_seq=1 Destination Host Unreachable

…

64 bytes from 192.168.1.10: icmp_seq=1 ttl=64 time=2017 ms

64 bytes from 192.168.1.10: icmp_seq=2 ttl=64 time=1018 ms

64 bytes from 192.168.1.10: icmp_seq=3 ttl=64 time=19.0 ms

…

At this point, you know that the simulated node is online. You can connect to it using SSH with the user name root and password firesim. It is also convenient to make sure that your TERM variable is set correctly. In this case, the simulation expects TERM=linux, so provide that:

[centos@ip-IP_ADDR ~]$ TERM=linux ssh root@192.168.1.10

The authenticity of host ‘192.168.1.10 (192.168.1.10)’ can’t be established.

ECDSA key fingerprint is 63:e9:66:d0:5c:06:2c:1d:5c:95:33:c8:36:92:30:49.

Are you sure you want to continue connecting (yes/no)? yes

Warning: Permanently added ‘192.168.1.10’ (ECDSA) to the list of known hosts.

root@192.168.1.10’s password:

#

At this point, you’re connected to the simulated node. Run uname -a as an example. You should see the following output, indicating that you’re connected to a RISC-V system:

# uname -a

Linux buildroot 4.12.0-rc2 #1 Fri Aug 4 03:44:55 UTC 2017 riscv64 GNU/Linux

Now you can run programs on the simulated node, as you would with a real machine. For an example workload (running YCSB against Memcached on the simulated node) or to run a larger 8-node simulation, see the full FireSim Demo v1.0 on Amazon EC2 F1 demo instructions.

Finally, when you are finished, you can shut down the simulated node by running the following command from within the simulated node:

# poweroff

You can confirm that the simulation has ended by running screen -ls, which should now report that there are no detached screens.

Future plans

At Berkeley, we’re planning to keep improving the FireSim platform to enable our own research in future data center architectures, like FireBox. The FireSim platform will eventually support more sophisticated processors, custom accelerators (such as Hwacha), network models, and peripherals, in addition to scaling to larger numbers of FPGAs. In the future, we’ll open source the entire platform, including Midas, the tool used to transform RTL into FPGA simulators, allowing users to modify any part of the hardware/software stack. Follow @firesimproject on Twitter to stay tuned to future FireSim updates.

Acknowledgements

FireSim is the joint work of many students and faculty at Berkeley: Sagar Karandikar, Donggyu Kim, Howard Mao, David Biancolin, Jack Koenig, Jonathan Bachrach, and Krste Asanović. This work is partially funded by AWS through the RISE Lab, by the Intel Science and Technology Center for Agile HW Design, and by ASPIRE Lab sponsors and affiliates Intel, Google, HPE, Huawei, NVIDIA, and SK hynix.