Introducing the AWS Load Balancer Controller

The AWS ALB ingress controller allows you to easily provision an AWS Application Load Balancer (ALB) from a Kubernetes ingress resource. Kubernetes users have been using it in production for years and it’s a great way to expose your Kubernetes services in AWS.

We are pleased to announce that the ALB ingress controller is now the AWS Load Balancer Controller with added functionality and features such as:

- Network Load Balancers (NLB) for Kubernetes services

- Share ALBs with multiple Kubernetes ingress rules

- New TargetGroupBinding custom resource

- Support for fully private clusters

We’ll dive deeper into these new features but first let’s look at how Kubernetes exposes pods to external traffic with services and ingress.

Kubernetes uses services to expose pods outside of the cluster. One of the most popular ways to use services in AWS is with the LoadBalancer type. With a simple YAML file declaring your service name, port, and label selector, the cloud controller will provision a load balancer for you automatically.

    apiVersion: v1
    kind: Service
    metadata:
      name: search-svc # the name of our service
    spec:
      type: LoadBalancer
      selector:
        app: SearchApp # pods are deployed with the label app=SearchApp
      ports:
        - port: 80

This is great because of how simple it is to put Elastic Load Balancing (ELB) in front of your application. The service spec has been extended over the years with annotations and additional configuration.

A second option is to use an ingress rule and an ingress controller to route external traffic into Kubernetes pods.

Ingress controllers in AWS use ELB to expose the ingress controller to outside traffic. They have added benefits such as advanced routing rules (e.g. path-based routing /service2) and consolidating services to a single entry point for lower cost and centralized configuration.

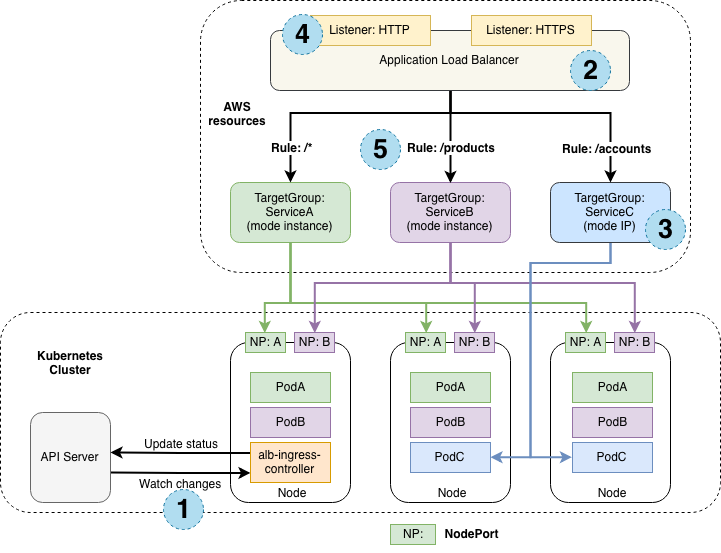

The ALB ingress controller is a popular way to expose Kubernetes services using Kubernetes ingress rules to create an ALB. The following diagram is from the original ALB ingress controller announcement to show benefits such as ingress path-based routing and the ability to route directly to pods in Kubernetes instead of relying on internal service IPs and kube-proxy.
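As a minimal sketch of the path-based routing and direct-to-pod routing described above, an ingress might look like the following. The ingress name, the service names `service1` and `service2`, and the paths are hypothetical and chosen only for illustration; the `target-type: ip` annotation asks the controller to register pod IPs as targets rather than node ports.

```yaml
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: path-routing-example # hypothetical ingress name
  annotations:
    kubernetes.io/ingress.class: alb
    alb.ingress.kubernetes.io/scheme: internet-facing
    # register pod IPs as ALB targets instead of node ports
    alb.ingress.kubernetes.io/target-type: ip
spec:
  rules:
    - http:
        paths:
          - path: /service1
            pathType: Prefix
            backend:
              service:
                name: service1 # hypothetical service
                port:
                  number: 80
          - path: /service2
            pathType: Prefix
            backend:
              service:
                name: service2 # hypothetical service
                port:
                  number: 80
```

Both paths are served by a single ALB, so the two services share one entry point.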

Let’s take a closer look at the new features.

Network Load Balancers for Kubernetes services

Customers often need to run non-HTTP based services inside Kubernetes. Some examples of when you might want to use an NLB include game servers and services that use UDP communication. TCP/IP services work great on Kubernetes but exposing those services publicly has limited options. Exposing NodePorts and manually routing traffic to the correct instances have been popular options in the past.

The new Load Balancer Controller allows you to create NLBs for your Fargate pods with a simple annotation on the service.

    kind: Service
    apiVersion: v1
    metadata:
      name: nlb-ip-svc
      annotations:
        # route traffic directly to pod IPs
        service.beta.kubernetes.io/aws-load-balancer-type: "nlb-ip"

NLB IP targeting mode can also be useful outside the context of Fargate to optimize pod registration to NLBs. Using IP targeting mode, only the specific pods that belong to each service are added as targets. This allows your NLB to distribute traffic directly to pods, which decreases latency and improves scalability. This can also result in smaller Target Groups in large clusters, reducing management complexity.
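For context, a complete service using this annotation might look like the sketch below. The `spec` section is an assumption added for illustration: the label `app: game-server` and port 7777 are hypothetical and stand in for whatever non-HTTP workload you run.

```yaml
apiVersion: v1
kind: Service
metadata:
  name: nlb-ip-svc
  annotations:
    # route traffic directly to pod IPs
    service.beta.kubernetes.io/aws-load-balancer-type: "nlb-ip"
spec:
  type: LoadBalancer
  selector:
    app: game-server # hypothetical pod label
  ports:
    - name: game
      protocol: TCP
      port: 7777 # hypothetical listener port on the NLB
      targetPort: 7777 # hypothetical container port on the pods
```

Only pods matching the selector would be registered as targets, which is what keeps the target group small in large clusters.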

Share an ALB with multiple Kubernetes ingress rules

In the AWS ALB ingress controller, prior to version 2.0, each ingress object you created in Kubernetes would get its own ALB. Customers wanted a way to lower their cost and reduce duplicate configuration by sharing the same ALB across multiple services and namespaces.

By sharing an ALB, you can still use annotations for advanced routing while using a single load balancer for a team, or for any combination of apps, by specifying the alb.ingress.kubernetes.io/group.name annotation. All ingresses with the same group.name will use the same load balancer.
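To illustrate sharing across namespaces, here is a sketch of a hypothetical second ingress that joins the group `search-app-ingress`. The ingress name, the namespace `filters`, the path, and the backing service are assumptions made for this example. The `group.order` annotation is optional; the controller documentation describes it as controlling the order in which rules from different ingresses in a group are evaluated.

```yaml
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: search-filter # hypothetical ingress name
  namespace: filters # hypothetical namespace owned by another team
  annotations:
    kubernetes.io/ingress.class: alb
    # join the existing group so no additional ALB is created
    alb.ingress.kubernetes.io/group.name: search-app-ingress
    # optional ordering of this ingress's rules within the group
    alb.ingress.kubernetes.io/group.order: "10"
spec:
  rules:
    - http:
        paths:
          - path: /filter
            pathType: Prefix
            backend:
              service:
                name: search-filter-svc # hypothetical service
                port:
                  number: 80
```

Any other ingress that sets the same group name, such as the search example in this section, would be served by the same load balancer.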

    apiVersion: networking.k8s.io/v1
    kind: Ingress
    metadata:
      name: search-app # object names must be lowercase
      annotations:
        # share a single ALB with all ingresses in the group search-app-ingress
        alb.ingress.kubernetes.io/group.name: search-app-ingress
    spec:
      defaultBackend:
        service:
          name: search-svc # route traffic to the service search-svc
          port:
            number: 80

TargetGroupBinding custom resource

When you use load balancers in AWS, you can set up different target groups to route traffic to your services. In the new AWS Load Balancer Controller, you can now use a custom resource (CR) called TargetGroupBinding to expose your pods using an existing target group.

This will allow you to manage the load balancer completely outside of Kubernetes but still use that load balancer with the configuration that exists in Kubernetes objects. If your workflows require you to create load balancers outside of Kubernetes, this will allow you to use the ARN of the target group instead of Kubernetes annotations.

    apiVersion: elbv2.k8s.aws/v1alpha1
    kind: TargetGroupBinding
    metadata:
      name: search-filter-app # create a new TargetGroupBinding called search-filter-app
    spec:
      serviceRef:
        name: search-svc # route traffic to the search-svc
        port: 80
      targetGroupARN: <arn-to-targetGroup>

The TargetGroupBinding makes it easier to see the state of your grouped ingresses using the Kubernetes API. Instead of switching between kubectl and the aws CLI, you can now see a more complete picture of your resources directly in kubectl.

If you’ve hit AWS API rate limits in the past, the new controller greatly reduces the number of API calls it needs by using TargetGroupBindings. Instead of updating the ALB every time the target pods change (e.g. during scaling events), the controller only needs to call the AWS APIs that update the targets of the target group.
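As a further sketch, a TargetGroupBinding for a target group that was created outside Kubernetes with IP targets might state the target type explicitly. The resource name is hypothetical and the ARN stays a placeholder that you would replace with your own.

```yaml
apiVersion: elbv2.k8s.aws/v1alpha1
kind: TargetGroupBinding
metadata:
  name: search-svc-tgb # hypothetical resource name
spec:
  # register pod IPs (rather than instances) in the existing target group
  targetType: ip
  serviceRef:
    name: search-svc # service whose pods become targets
    port: 80
  targetGroupARN: <arn-to-targetGroup>
```

With a binding like this in place, scaling the pods behind `search-svc` should only change the registered targets, not the load balancer itself.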

AWS ALB Ingress Controller users and migration

If you’re using the AWS ALB Ingress Controller, you can seamlessly switch to the new AWS Load Balancer Controller. Your existing ingress rules, annotations, and configuration will continue to work the same way without changes.

To take advantage of the new features, you’ll need to update to the new controller and start using the new annotations on your services and ingress objects. Check out the migration documentation for more information. If you have issues with the controller or would like to contribute, please get involved here.