AWS for Industries

Energy HPC Orchestrator powers collaborative, scalable energy computing

High-performance computing (HPC) is the backbone of modern seismic data processing. As seismic data volumes expand and geophysical workflows become increasingly compute-intensive, the industry is transitioning from traditional on-premises infrastructure toward flexible, cloud-based HPC solutions. Today’s seismic imaging and processing demands—driven by denser sensor arrays, higher resolution surveys, and more sophisticated algorithms—require unprecedented computational power. Energy companies must balance performance and cost while managing access, provisioning specialized workstations on demand, and maintaining stringent data security. Without proper orchestration tools, these environments can rapidly become fragmented, expensive, and unable to keep pace with growing seismic data volumes.

For decades, seismic data processing has remained largely vendor-specific, handled by a few processing companies using proprietary infrastructure and algorithms. This concentration created persistent bottlenecks: projects were frequently delayed when processing companies lacked available capacity, and the single-vendor model prevented companies from achieving better results by combining superior solutions from multiple providers. Meanwhile, proprietary research often went underutilized, and smaller technology providers struggled to bring innovative solutions to market because their algorithms covered only part of the processing workflow.

Energy HPC Orchestrator on AWS

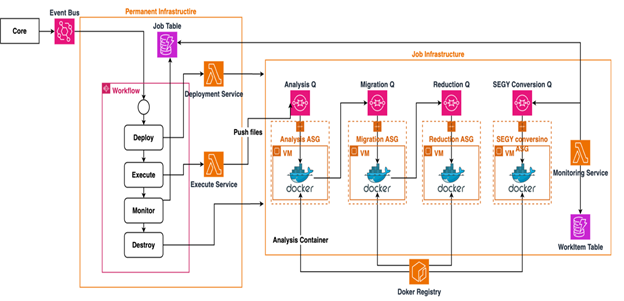

The Energy HPC Orchestrator (EHO) on AWS represents a new paradigm: it is built from components that work together to create a flexible, scalable processing environment. Rather than treating seismic processing as a monolithic workflow, the system breaks complex algorithms down into specialized, decoupled services. The EHO's Reverse Time Migration (RTM) template, shown in the preceding figure, demonstrates this approach.

The EHO workflow begins when the core component receives a workflow request and publishes events to an event bus built on Amazon Simple Queue Service (Amazon SQS). The deployment workflow provisions the necessary infrastructure and routes job specifications to a dedicated SQS queue for each processing pipeline. Each pipeline independently pulls messages from its queue, scales Amazon Elastic Compute Cloud (Amazon EC2) capacity using Auto Scaling groups, and runs containerized workloads. Throughout this process, the monitoring workflow tracks job execution across all pipelines, providing observability and health monitoring. This architecture supports parallel processing of multiple workload types while keeping the stable, permanent infrastructure (the deployment, execute, and monitoring workflows) separate from the dynamic, on-demand job infrastructure, which scales automatically.
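The pull, execute, and acknowledge cycle that each pipeline follows can be sketched in a few lines of Python. This is a minimal, self-contained illustration under stated assumptions, not EHO code: `ToyJobQueue` and `poll_once` are hypothetical names, and the in-memory queue only mimics the Amazon SQS behavior in which a received message stays hidden for a visibility timeout and reappears unless the consumer deletes it. A real worker would call the SQS `ReceiveMessage` and `DeleteMessage` APIs instead.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Optional


@dataclass
class Message:
    msg_id: int
    body: dict
    visible_at: float = 0.0  # simulated time at which the message can be received again
    receive_count: int = 0


class ToyJobQueue:
    """Hypothetical in-memory stand-in for one per-pipeline queue (mimics SQS visibility timeouts)."""

    def __init__(self, visibility_timeout: float = 30.0) -> None:
        self.visibility_timeout = visibility_timeout
        self._messages: Dict[int, Message] = {}
        self._next_id = 0

    def send(self, body: dict) -> None:
        self._messages[self._next_id] = Message(self._next_id, body)
        self._next_id += 1

    def receive(self, now: float) -> Optional[Message]:
        # Return the oldest visible message and hide it for the visibility timeout.
        for msg in self._messages.values():
            if msg.visible_at <= now:
                msg.visible_at = now + self.visibility_timeout
                msg.receive_count += 1
                return msg
        return None

    def delete(self, msg_id: int) -> None:
        # Deleting acknowledges that the job finished successfully.
        self._messages.pop(msg_id, None)

    def __len__(self) -> int:
        return len(self._messages)


def poll_once(queue: ToyJobQueue, handler: Callable[[dict], None], now: float) -> str:
    """One iteration of a hypothetical pipeline worker: pull, execute, acknowledge on success."""
    msg = queue.receive(now)
    if msg is None:
        return "idle"
    try:
        handler(msg.body)  # e.g. launch the containerized workload for this job spec
    except Exception:
        # No delete: the message becomes visible again after the timeout and is retried.
        return "failed"
    queue.delete(msg.msg_id)
    return "done"


if __name__ == "__main__":
    attempts = {"n": 0}

    def flaky_handler(job: dict) -> None:
        attempts["n"] += 1
        if attempts["n"] == 1:
            raise RuntimeError("simulated Spot interruption")

    q = ToyJobQueue(visibility_timeout=30.0)
    q.send({"pipeline": "migration", "shot_range": [0, 99]})
    print(poll_once(q, flaky_handler, now=0.0))   # failed
    print(poll_once(q, flaky_handler, now=10.0))  # idle (message still hidden)
    print(poll_once(q, flaky_handler, now=31.0))  # done
    print(len(q))                                 # 0
```

In this sketch, the worker deletes a message only after the job succeeds, so a job interrupted mid-run (for example, by a Spot Instance reclamation) becomes visible again and can be picked up by another instance.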

The RTM job infrastructure consists of the following services:

- Analysis service – Fragments work into independent processing units

- Migration service – Performs computationally intensive wave equation solving on GPU-accelerated instances

- Reduction service – Progressively stacks images

- Converter service – Handles output formatting

Because these services communicate through queue-based systems, each component scales independently based on workload demands, providing resilience and reducing processing bottlenecks.
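The division of labor among these four services can be modeled as a small fan-out/fan-in pipeline. The Python below is a toy sketch, not EHO code: the function names mirror the service names above, but the shot-grouping rule, the placeholder migration, and the CSV output are assumptions for illustration, and the real services exchange work through queues rather than direct function calls.

```python
from typing import Iterable, List

Image = List[float]  # toy 1-D image; real RTM images are 3-D volumes


def analysis(shot_ids: List[int], shots_per_unit: int) -> List[List[int]]:
    """Analysis: fragment the survey into independent processing units (groups of shots)."""
    return [shot_ids[i:i + shots_per_unit] for i in range(0, len(shot_ids), shots_per_unit)]


def migration(unit: List[int], n_samples: int) -> Image:
    """Migration placeholder: a real service solves the wave equation on GPUs.
    Here every shot contributes 1.0 to every sample so the result is easy to check."""
    return [float(len(unit))] * n_samples


def reduction(partial_images: Iterable[Image], n_samples: int) -> Image:
    """Reduction: progressively stack partial images as they arrive, in any order."""
    stacked = [0.0] * n_samples
    for image in partial_images:
        stacked = [s + p for s, p in zip(stacked, image)]
    return stacked


def converter(image: Image) -> str:
    """Converter: format the final image for output (plain CSV in this sketch)."""
    return ",".join(f"{v:g}" for v in image)


units = analysis(list(range(10)), shots_per_unit=4)    # [[0, 1, 2, 3], [4, 5, 6, 7], [8, 9]]
partials = [migration(u, n_samples=3) for u in units]  # each unit would be its own queued job
print(converter(reduction(partials, n_samples=3)))     # 10,10,10
```

Because stacking is a sum, the Reduction step gives the same result (up to floating-point rounding) whatever order the partial images arrive in, which is what lets Migration workers scale out and finish independently.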

Perhaps the most transformative feature is the platform's open marketplace ecosystem, which replaces single-vendor lock-in with interoperability across providers. Energy companies can integrate commercial algorithms from multiple technology providers alongside proprietary internal research. The platform supports NVIDIA Energy Samples, reference implementations of key algorithms such as RTM, Kirchhoff, and full waveform inversion (FWI) optimized for GPUs, which help organizations deploy GPU-accelerated seismic processing quickly. Standardized data formats let the output of one provider's step feed the next, so geoscientists can compose workflows from the best algorithm for each processing step.

Intelligent resource management uses automatic scaling that adjusts compute resources dynamically based on the queued workload. Each processing task runs on an appropriately optimized EC2 instance type: general-purpose instances for analysis, HPC-optimized instances for compute-intensive migration, and GPU-accelerated instances (G4dn, G5, G6, P4d, P5) for GPU-accelerated algorithms. The platform also uses Amazon EC2 Spot Instances to reduce cost significantly while maintaining fault tolerance.
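The post does not specify the EHO's scaling policy. One common pattern, described in the Amazon EC2 Auto Scaling documentation for scaling on Amazon SQS, sizes a group by its backlog per instance: the number of queued messages divided by how many messages one instance can process within an acceptable latency. The sketch below computes that target under those assumptions; `desired_capacity` and its parameters are hypothetical, and in practice the queue depth would come from the SQS `ApproximateNumberOfMessages` attribute.

```python
import math


def desired_capacity(
    queued_jobs: int,
    avg_job_seconds: float,
    acceptable_latency_seconds: float,
    min_size: int,
    max_size: int,
) -> int:
    """Hypothetical backlog-per-instance sizing for one queue-driven Auto Scaling group."""
    # How many queued jobs one instance can clear within the latency target (at least 1).
    backlog_per_instance = max(1, math.floor(acceptable_latency_seconds / avg_job_seconds))
    # Instances needed to drain the current backlog within that target.
    needed = math.ceil(queued_jobs / backlog_per_instance)
    # Respect the group's configured minimum and maximum size.
    return max(min_size, min(max_size, needed))


# 120 queued migration jobs, about 5 minutes each, and a 30-minute drain target:
# each instance can absorb 6 jobs, so 20 instances are needed, capped at max_size=16.
print(desired_capacity(120, 300, 1800, min_size=0, max_size=16))  # 16
```

Giving each service its own group (for example, one for GPU migration and one for general-purpose analysis) would let each apply its own job duration and latency target, which is one way the per-task instance choices above could be expressed.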

AWS Partners and the Energy HPC Orchestrator

AWS Partners power the Energy HPC Orchestrator (EHO) by contributing specialized seismic processing modules to an open, interoperable ecosystem, replacing single-vendor lock-in with operator choice at every stage of the workflow. EPAM Systems, an AWS Premier Tier Services Partner, co-developed the cloud-based EHO platform with AWS, contributing solution architecture and integration services. Processing companies such as S-Cube (with its XWI algorithm), Seiswave RTM, and Seimax RTM participate as algorithm providers in the open marketplace, offering specialized seismic processing capabilities that can be integrated directly into workflows. In this partnership model, multiple technology providers contribute specialized algorithms, innovators can bring new solutions to market, and energy companies can combine best-of-breed capabilities from different vendors. Together, these reduce the single-vendor constraints that previously limited innovation and delayed projects because of resource bottlenecks.

Academic-industry collaboration: University of Texas and Occidental

International energy company Occidental integrated Madagascar, an open source seismic processing framework developed at the University of Texas (UT), into production workflows running on AWS. The EHO made it possible to combine Madagascar's GPL-licensed algorithms with Occidental's proprietary code and commercial tools in the same processing pipeline.

“The Energy HPC Orchestrator lets us run Madagascar’s open-source algorithms alongside our internal research and commercial solutions on AWS. This cut our development time and gave us the flexibility to swap in new technologies as needed,” says Klaas Koster, VP Subsurface Innovation, Occidental.

The key technical benefit is that organizations can build seismic workflows using whatever components work best—open source, commercial, or internal—while maintaining full vendor and framework independence. The platform handles the infrastructure, security, and scaling on AWS.

Future steps: EHO evolution with MCP server integration

The addition of a Model Context Protocol (MCP) server to the EHO makes building and scaling seismic workflows simpler. By exposing the platform's capabilities through standardized MCP interfaces, the orchestrator becomes accessible to AI assistants and large language models (LLMs), democratizing seismic workflow development. With MCP server integration, geoscientists can describe their processing objectives in natural language instead of navigating traditional interfaces. An AI assistant connected to the MCP server can interpret requests like:

“I’d like to build a seismic data processing workflow on the EHO platform. Create a sequential processing chain using the NMO and STACK applications. Use the XYZ gathers and ABC velocity volume as inputs. Execute the workflow.”

The MCP server translates this request into the JSON parameters, service configurations, and resource specifications that the orchestrator requires, so users do not have to navigate the interface manually. This makes the multi-vendor ecosystem accessible to a broader audience and accelerates the journey from concept to production.
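As an illustration only, the following Python sketch shows the kind of structured request an MCP tool handler might produce for the NMO and STACK prompt above. The EHO's JSON schema is not described here, so the function `build_sequential_workflow` and every field name (`workflow`, `mode`, `steps`, `depends_on`, `execute`) are hypothetical. In an actual MCP server, this logic would sit behind a tool that the AI assistant calls with arguments it extracted from the user's request.

```python
import json
from typing import Dict, List


def build_sequential_workflow(name: str, applications: List[str], inputs: Dict[str, str]) -> dict:
    """Chain applications so that each step consumes the previous step's output.
    The schema is hypothetical, not the EHO's actual request format."""
    steps: List[dict] = []
    for i, app in enumerate(applications):
        step: dict = {"id": f"step-{i + 1}", "application": app}
        if i == 0:
            step["inputs"] = dict(inputs)  # first step reads the user-supplied datasets
        else:
            previous = steps[-1]["id"]
            step["inputs"] = {"data": f"{previous}.output"}  # consume the prior step's output
            step["depends_on"] = [previous]
        steps.append(step)
    return {"workflow": name, "mode": "sequential", "steps": steps, "execute": True}


# Structured equivalent of the natural-language request above.
spec = build_sequential_workflow(
    "nmo-stack",
    ["NMO", "STACK"],
    {"gathers": "XYZ", "velocity_volume": "ABC"},
)
print(json.dumps(spec, indent=2))
```

Encoding the chain as explicit `depends_on` links keeps the ordering machine-checkable, so the orchestrator (rather than the language model) remains responsible for validating and scheduling the steps.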

Conclusion

The Energy HPC Orchestrator introduces a new model for how the energy industry delivers seismic processing at scale, moving from restrictive single-vendor solutions to an open, collaborative ecosystem that gives geoscientists greater flexibility and control. With MCP server integration, the platform further democratizes access to advanced HPC capabilities, letting teams create workflows through natural language interfaces and shortening the path from concept to production.

Ready to transform your seismic processing workflows? Contact your AWS account team or visit the AWS Energy & Utilities page to learn how Energy HPC Orchestrator gives your organization the freedom to choose best-of-breed tools across vendors, accelerate time-to-insight, and unlock better outcomes with cloud-native processing at scale. EPAM Systems is ready to support your implementation with solution architecture and integration services—reach out today to start your journey toward more flexible, scalable, and cost-efficient seismic processing on AWS.