AWS for Industries

Modernizing life-saving workloads with AWS serverless

Introduction

Amazon Web Services (AWS) partnered with the National Marrow Donor Program (NMDP), formerly known as Be The Match® and rebranded to NMDP℠ in early 2024, to modernize their mission-critical bone marrow matching system, transforming it from traditional on-premises infrastructure to a cloud-native serverless architecture. This blog post chronicles NMDP’s decade-long modernization journey, highlighting how they used AWS serverless technologies to improve operational efficiency, enhance security, achieve greater scalability, and ultimately accelerate their life-saving mission. Through incremental steps and disciplined innovation, NMDP demonstrates how organizations can successfully modernize legacy systems while maintaining the reliability required for critical healthcare applications.

This collaboration exemplifies AWS’s commitment to supporting nonprofit organizations through cloud technology, helping mission-critical healthcare systems achieve greater impact through modernization.

National Marrow Donor Program’s Mission and Goals

For patients with bone marrow disorders and blood cancers, bone marrow transplant is an essential treatment option. NMDP℠, a nonprofit leader in cell therapy, serves as the critical bridge between patients and life-saving donors through allogeneic bone marrow transplants, in which patients receive bone marrow from living donors. Since its inception in 1987, NMDP has touched over 140,000 lives through cell therapy. It helps patients live longer, healthier lives by providing comprehensive support to patients and families before, during, and after transplants, by supporting research to improve the transplant process, and by facilitating over $6.6 million in patient donations in 2023 alone.

NMDP operates a donor registry of 41 million potential bone marrow donors, including more than 9 million U.S. donors, and adds approximately 300,000 new donors annually. In 2023, it impacted 7,435 lives through its HapLogic℠ system, which matches patients with donors, provides transplant coordination services, and shares consented data for biomedical research. HapLogic searches the registry to find optimal donor-patient matches, many of which must complete in under a minute, and maintains detailed genetic profiles for each donor to support over 200 clinical trials and ongoing research studies.

The organization’s ‘Donor for All’ initiative has expanded transplant accessibility by demonstrating that patients can safely receive transplants from partially matched donors with outcomes comparable to those from fully matched donors. This significantly expands treatment options for patients without fully matched donors.

To support this critical mission at scale, NMDP embarked on a decade-long modernization journey that would transform HapLogic from legacy infrastructure to a cloud-native serverless architecture.

Understanding HapLogic’s End-to-End Ecosystem

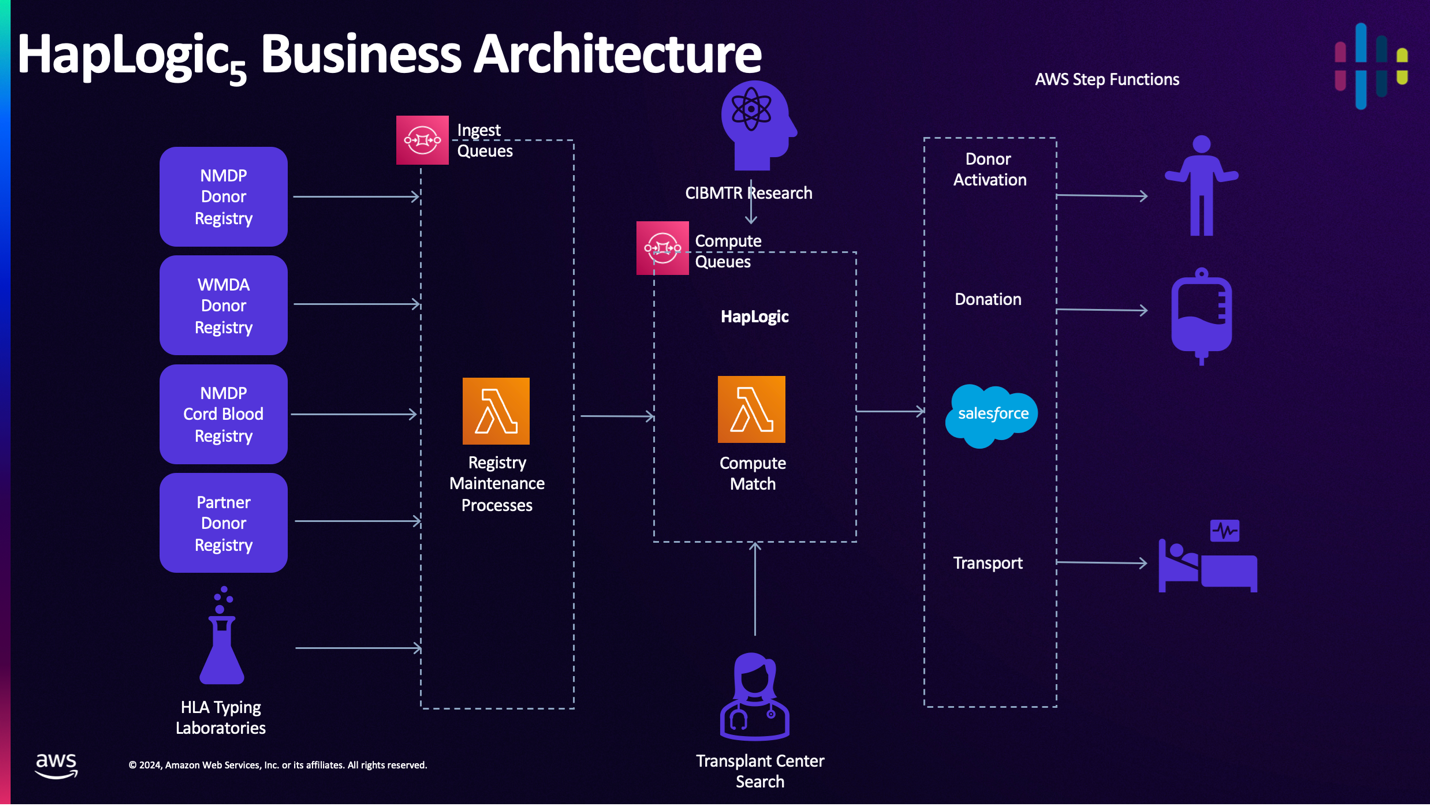

Before diving into the technical modernization details, it’s important to understand HapLogic’s role within NMDP’s broader operational ecosystem. HapLogic serves as the central matching engine that connects multiple donor registries—including NMDP’s own registry, the World Marrow Donor Association (WMDA) international registry, cord blood banks, and partner registries—with downstream processes that activate donors and coordinate transplants. The system ingests donor data and HLA typing results from laboratories, performs rapid parallel matching computations, and delivers results to transplant centers while simultaneously feeding data to NMDP’s research programs. Figure 1 illustrates how HapLogic orchestrates the complex workflow from donor registration through successful transplantation, touching multiple systems and stakeholders throughout the patient’s journey.

Figure 1: HapLogic Business Architecture

The search pattern that HapLogic uses is embarrassingly parallel: the HapLogic search algorithm can match a patient profile against each donor profile independently, with minimal coordination between tasks. Embarrassingly parallel problems lend themselves to modern serverless architecture, but the journey to modernization often starts with small steps rather than giant leaps. NMDP’s modernization journey started with refactoring HapLogic to open source, continued with rehosting it to hybrid cloud, and finished with refactoring it to be cloud native.
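To make the "embarrassingly parallel" property concrete, here is a minimal sketch in Python. The `count_matching_loci` function, the locus names, and the typing values are hypothetical placeholders chosen for illustration; this is not HapLogic's matching algorithm, which the post does not describe. The sketch only shows that each donor can be scored without reference to any other donor, so the work can be spread across as many workers as are available.

```python
from concurrent.futures import ThreadPoolExecutor

# Hypothetical, simplified profiles: locus name -> typing result.
# Real HLA matching is far more involved; this only illustrates independence.
PATIENT = {"A": "01:01", "B": "08:01", "DRB1": "03:01"}

DONORS = {
    "donor-1": {"A": "01:01", "B": "08:01", "DRB1": "03:01"},
    "donor-2": {"A": "01:01", "B": "07:02", "DRB1": "03:01"},
    "donor-3": {"A": "02:01", "B": "07:02", "DRB1": "15:01"},
}


def count_matching_loci(patient, donor):
    """Score one donor against the patient. Reads no shared mutable state."""
    return sum(1 for locus, value in patient.items() if donor.get(locus) == value)


def score_all(patient, donors, max_workers=4):
    """Score every donor independently; tasks never coordinate with each other."""
    ids = list(donors)
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        scores = pool.map(lambda donor_id: count_matching_loci(patient, donors[donor_id]), ids)
        return dict(zip(ids, scores))


if __name__ == "__main__":
    print(score_all(PATIENT, DONORS))
```

Because no task depends on another, changing `max_workers` changes only how long the search takes, not what it returns.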

The Journey

In 2014, HapLogic was using a traditional batch processing architecture that ran on-premises. NMDP used a number of proprietary, licensed technologies. As a result, their software license costs represented a significant part of the IT budget. Release cycles typically ranged from four to six years, which was far too long for their evolving needs.

By refactoring Java jobs as Scala/Spark and re-platforming their data management to Hadoop, NMDP significantly reduced these license costs. However, they still had to manage 312 hosts, which represented a significant operational burden and slowed them down. This architecture required large capital investments in hardware so they could stay on modern, supported infrastructure, and it was too expensive to build the high availability and disaster recovery capabilities they needed to meet their system availability requirements.

For the next major release, NMDP rehosted HapLogic to the AWS Cloud. Compute, networking, and storage environments were much simpler and easier to manage at scale on AWS than on premises. NMDP could take advantage of the most advanced compute processors simply by relaunching their application. NMDP adopted a blue-green deployment strategy: they maintained two identical production environments, so new releases could be fully deployed and tested in the ‘green’ environment while the current version continued serving patients in the ‘blue’ environment. Once testing confirmed the new release met all requirements, traffic could be instantly switched from blue to green with minimal downtime and with the ability to quickly roll back if issues arose. This approach was particularly critical for a life-saving system where even brief outages could delay time-sensitive transplant searches. NMDP completed the rehosting project in just one year, significantly faster than their previous four-to-six-year release cycles.
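The blue-green pattern described above can be sketched as a small state machine. This is an illustrative model only: the `BlueGreenRouter` class and the release names are hypothetical, and in a real deployment the switch would be made in a load balancer, DNS record, or similar routing layer, none of which this sketch touches.

```python
class BlueGreenRouter:
    """Tracks two environments and which one currently receives traffic."""

    def __init__(self, blue_version):
        self.environments = {"blue": blue_version, "green": None}
        self.live = "blue"

    @property
    def idle(self):
        """Name of the environment that is not serving traffic."""
        return "green" if self.live == "blue" else "blue"

    def deploy_to_idle(self, version):
        """Install a new release in the environment that serves no traffic."""
        self.environments[self.idle] = version

    def switch(self, passed_checks):
        """Cut traffic over only if the idle environment passed its checks."""
        if not passed_checks or self.environments[self.idle] is None:
            return False
        self.live = self.idle
        return True

    def rollback(self):
        """Point traffic back at the previous environment, which is still intact."""
        self.live = self.idle

    def serving(self):
        """Version that currently receives traffic."""
        return self.environments[self.live]


if __name__ == "__main__":
    router = BlueGreenRouter("release-1")
    router.deploy_to_idle("release-2")   # live traffic is unaffected
    router.switch(passed_checks=True)    # cutover is a single pointer change
    print(router.serving())
    router.rollback()                    # previous release was never torn down
    print(router.serving())
```

The design choice worth noting is that the old environment is left untouched after the cutover, which is what makes a rollback as cheap as the original switch.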

Challenges remained, however. Their Hadoop environments were expensive, labor-intensive, and slow to scale up and down. They found it difficult to innovate within the constraints of the Hadoop platform.

Modernizing in the cloud

The next step was to modernize. NMDP refactored HapLogic into small components that fit into AWS Lambda functions. They migrated their database layer to Amazon Aurora Serverless v2. This decoupled their compute and storage and moved the complexity of provisioning database capacity and managing the database platform to the AWS side of the shared responsibility model. The compute, database, and networking services scaled smoothly and instantly, which made the system easier to operate. AWS handles OS- and database-level patches and updates, which helped NMDP become InfoSec compliant for the first time. NMDP was able to build high availability and disaster recovery capabilities affordably. NMDP estimates that this architecture has already delivered over $1 million in cost savings since going into production in 2024.

It took NMDP only 12 months to build this new architecture. Lives are at stake, so NMDP then ran the new implementation side-by-side with the previous implementation for six months before putting it into production.

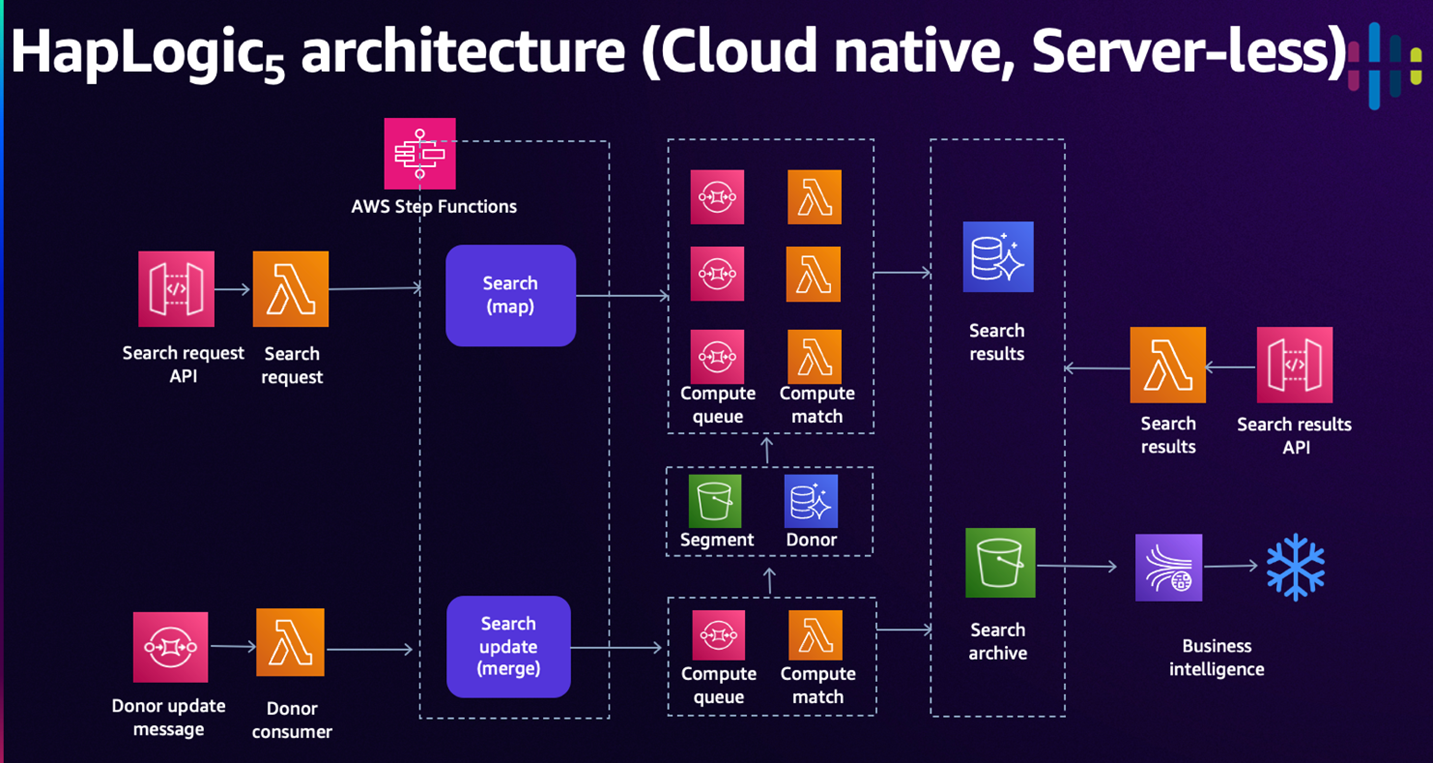

Figure 2: HapLogic v5 Architecture

Figure 2 illustrates the HapLogic v5 serverless architecture. In this design, search requests and donor updates arrive via an API or message service, where AWS Lambda functions initiate AWS Step Functions to execute workflows. For the search functions, HapLogic maps the donor records from the NMDP Donor Registry to parallel compute queues. Lambda functions perform the match logic and put results in an Amazon Aurora database. Search results are available via an API. Because the search is embarrassingly parallel, it can use multiple compute queues at once and can deliver results in seconds.
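The fan-out and merge shape of this search flow can be sketched locally. In the sketch below, threads stand in for Lambda functions and an in-memory list stands in for the results database; the function names, the marker sets, and the scoring rule are all assumptions made for illustration and are not taken from the HapLogic implementation.

```python
from concurrent.futures import ThreadPoolExecutor
import heapq

# Hypothetical sample data: (donor id, set of marker labels).
SAMPLE_DONORS = [
    ("d1", {"a", "b", "c"}),
    ("d2", {"a", "b"}),
    ("d3", {"x"}),
    ("d4", {"a", "b", "c", "d"}),
    ("d5", {"c"}),
]


def partition(records, queue_count):
    """Deal records round-robin into `queue_count` independent work queues."""
    queues = [[] for _ in range(queue_count)]
    for index, record in enumerate(records):
        queues[index % queue_count].append(record)
    return queues


def match_queue(patient_markers, queue):
    """Stand-in for one match worker: score every donor in its queue."""
    return [(len(patient_markers & markers), donor_id) for donor_id, markers in queue]


def search(patient_markers, donors, queue_count=3, top_n=2):
    """Fan out across queues, then merge the partial results into a ranked list."""
    queues = partition(donors, queue_count)
    with ThreadPoolExecutor(max_workers=queue_count) as pool:
        partials = list(pool.map(lambda q: match_queue(patient_markers, q), queues))
    merged = [item for partial in partials for item in partial]
    # Highest score first; ties broken by donor id for a stable ordering.
    return heapq.nsmallest(top_n, merged, key=lambda item: (-item[0], item[1]))


if __name__ == "__main__":
    print(search({"a", "b", "c"}, SAMPLE_DONORS))
```

The only coordination point is the final merge, which is why adding queues shortens the search without changing the ranked result.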

HapLogic archives search results in Amazon S3 buckets, where they are available for researchers and business intelligence users who query them using Snowflake. NMDP chose S3 buckets because they are highly durable, scalable, and cost-effective.

Technical lessons learned

When adopting AWS Lambda, NMDP initially encountered problems with cold starts and memory footprints. This led NMDP to a frugal approach to implementation. NMDP optimized the data structures, which reduced their memory footprint. They eliminated unnecessary frameworks and libraries, which reduced cold start times. They continuously simplify code, which reduces cost, increases performance, and helps them move faster. NMDP also continuously optimizes their database. They normalized data structures to reduce cost and simplify operations.

NMDP also uses AWS-native observability services like Amazon CloudWatch and AWS X-Ray to reduce the time and effort required to detect, diagnose, and correct problems. Software development teams use the same tools to understand their application behavior in development as they do in production, which simplifies operations without adding software license costs.

Involving the whole organization

NMDP learned that moving to a cloud-native modern architecture is a whole-team effort that involves not only developers, but also business, security, operations, testing, and finance teams. Here’s why:

- Business leaders have a responsibility to be skeptical of IT migration and modernization projects. IT should include business leaders early to explain what they are doing and why, in terms of business outcomes. IT should conduct small experiments to demonstrate results and earn trust, and should take measurable steps to show that patients and other stakeholders will not be put at risk.

- The AWS Cloud enables IT Operations to spin up and spin down resources at lightning speed. This can be a challenge for security teams. Developers should engage security and IT operations teams early. Cross-functional teams should work together to design security controls in applications; build Infrastructure as Code that embeds security controls in infrastructure; use managed services to offload security and operations burden; and use cloud-native services to provide unprecedented visibility at scale.

- The AWS Cloud enables development teams to accelerate delivery of new features and capabilities, but this can be a challenge for testing or QA teams that use traditional methods. Test teams should modernize using approaches like automated unit testing, and should consider generating unit tests with AI services like Amazon Q Developer.

- The AWS Cloud can deliver significant total cost savings and reduce time-to-value for IT investments, because you pay only for what you use, when you use it. This new way of thinking can be a challenge for Finance teams that are accustomed to managing capital investments. It is a special challenge for nonprofits, where significant grant or donor funding may be earmarked for capital expenditures. When migrating and modernizing in the AWS Cloud, IT should engage Finance early to understand how to map funding to cloud expenditures. IT and Finance must also consider the Free Capacity Effect.

What’s the Free Capacity Effect?

In a traditional on-premises deployment, IT will have excess capacity for a number of reasons. Maybe the organization has bought enough capacity to address future IT needs but isn’t ready to use that capacity yet. Maybe IT bought more capacity than it needed, to make sure they didn’t run out. Or maybe an IT resource is no longer needed. In all of these situations, IT has idle capacity. Innovative developers have a knack for finding that unused capacity to run experiments without having to get executive approval, because the organization doesn’t incur additional cost to use idle capacity.

In the cloud, organizations don’t have compute resources sitting idle, especially when they’re using serverless services. If someone spins up cloud resources so they can run an experiment, the IT costs for that experiment will typically show up on the next month’s AWS bill. Finance teams may not be prepared to have full visibility into every expenditure, including innovations and experiments that are inherently unplanned.

Traditionally, unplanned expenditures would go through a slow process to get business approval. To encourage rapid but managed innovation, Finance teams should consider a couple of small steps: Create an expedited approval process for promising innovations and allocate sandbox budgets so innovators can run limited experiments without waiting for executive approval.

Summary

Over the past ten years, NMDP used AWS services to simplify and streamline operations, improve their security posture, achieve greater reliability, scalability and performance efficiency, and optimize costs. This led to an increased pace of innovation. They did not start with a huge leap, but with small steps and disciplined innovations. They adopted a modern architecture that is well-suited to their application and have included their whole organization in the transformation. As a result, they can deliver innovative services to their community faster, more efficiently, more effectively, and at a larger scale.

Ready to start your modernization journey? Visit the AWS Serverless Blog to explore best practices and implementation guides, or dive deeper into this transformation story by watching the complete AWS re:Invent session Saving lives with serverless: Modernizing transplant matching on AWS (IMP206). Contact your AWS account team today to begin modernizing your critical workloads.