Agentic AI with AWS Databases

The role of databases in agentic AI

To be effective at operating autonomously, AI agents require the right context and memory. Here is how AWS Databases empower your AI agents:

- Agentic development. AWS Databases are available with agentic application development platforms, such as Kiro and Vercel v0, so you can go quickly from ideation to prototyping to production-ready applications with enterprise security and reliability out-of-the-box.

- Agentic grounding. AWS Databases anchor your AI agents to the data stored in your operational databases, so their responses are grounded in context. When vector search is needed, you can use native vector search capabilities in databases such as Aurora, ElastiCache for Valkey, and Neptune. For more advanced use cases, you can use built-in Amazon Bedrock integrations for GraphRAG.

- Statefulness. AI agents require persistent memory so they can maintain context over long time periods. AWS Databases provide long-term, short-term, and episodic memory, and offer native integrations with open source agentic frameworks so you can build agents that remember user preferences, past conversations, and decisions.
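The statefulness point above can be illustrated with a minimal sketch. It uses Python's built-in `sqlite3` module purely as a local stand-in for an operational database; the `AgentMemory` class, its table names, and its methods are hypothetical and are not part of any AWS SDK or agentic framework. Short-term memory is modeled as the most recent conversation turns in a session, and long-term memory as per-user preferences.

```python
import sqlite3


class AgentMemory:
    """Toy agent memory; SQLite stands in for an operational database (assumption)."""

    def __init__(self, path=":memory:"):
        self.db = sqlite3.connect(path)
        self.db.execute(
            "CREATE TABLE IF NOT EXISTS turns ("
            "id INTEGER PRIMARY KEY AUTOINCREMENT, "
            "session_id TEXT, role TEXT, content TEXT)"
        )
        self.db.execute(
            "CREATE TABLE IF NOT EXISTS preferences ("
            "user_id TEXT, name TEXT, value TEXT, "
            "PRIMARY KEY (user_id, name))"
        )

    def add_turn(self, session_id, role, content):
        """Short-term memory: append one conversation turn."""
        self.db.execute(
            "INSERT INTO turns (session_id, role, content) VALUES (?, ?, ?)",
            (session_id, role, content),
        )
        self.db.commit()

    def recent_turns(self, session_id, limit=5):
        """Return the last `limit` turns of a session, oldest first."""
        rows = self.db.execute(
            "SELECT role, content FROM turns "
            "WHERE session_id = ? ORDER BY id DESC LIMIT ?",
            (session_id, limit),
        ).fetchall()
        return rows[::-1]

    def set_preference(self, user_id, name, value):
        """Long-term memory: insert or overwrite a user preference."""
        self.db.execute(
            "INSERT OR REPLACE INTO preferences (user_id, name, value) "
            "VALUES (?, ?, ?)",
            (user_id, name, value),
        )
        self.db.commit()

    def preferences(self, user_id):
        """Return all stored preferences for a user as a dict."""
        rows = self.db.execute(
            "SELECT name, value FROM preferences WHERE user_id = ?",
            (user_id,),
        ).fetchall()
        return dict(rows)
```

An agent would typically call `recent_turns` and `preferences` before each model interaction and write back new turns and decisions afterward. In a production setting the same pattern would target a managed database rather than a local file.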

MCP servers and AWS Databases

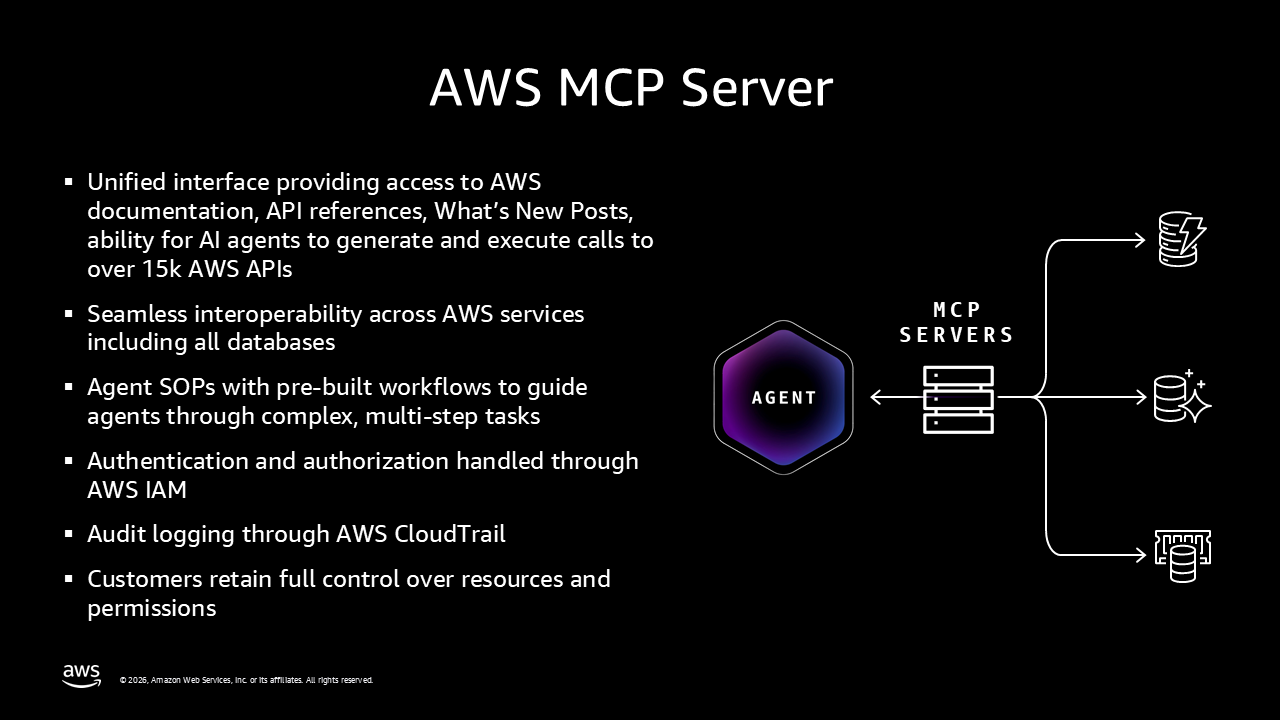

Each AWS database offers an MCP (Model Context Protocol) server that can be used with your choice of agentic IDE, including Kiro, VS Code, and Cursor.

Natural language interactions

The Aurora DSQL MCP server converts natural language questions and commands into structured, PostgreSQL-compatible SQL queries and executes them against the configured Aurora DSQL database. The same capability is available for Aurora PostgreSQL and Aurora MySQL. In addition, the Aurora DSQL MCP server has built-in access to documentation, search, and best practice recommendations. AI agents can also perform administrative tasks such as creating and managing Aurora clusters.

Our workshop teaches you how to configure and integrate AI agents with various MCP servers and build autonomous agentic workflows using agentic frameworks. You will become familiar with evaluations, policy, and long-term memory.

Powerful tools for AI agents to act as a solution architect

We’ve invested in additional tooling to bolster what AI agents can accomplish. The DynamoDB MCP server provides 8 tools that enable AI agents to act as DynamoDB solution architects, such as AI-assisted data modeling for DynamoDB and AI-powered MySQL to DynamoDB modernization.

Managed remote MCP server

The AWS MCP Server helps AI agents and AI-native IDEs perform real-world, multi-step tasks across AWS services, including all AWS Databases. It lets you ask AI assistants to perform tasks such as provisioning databases using Agent SOPs (step-by-step guidance). The AWS MCP Server handles authentication and authorization through AWS IAM and provides audit logging through AWS CloudTrail. You keep full control over resources and permissions as AI agents execute tasks across multiple AWS services, so you can complete real-world tasks faster.

How AWS frontier agents work with AWS Databases

Frontier agent for operational excellence

AWS DevOps Agent discovers databases as resources, collects telemetry data from them via services like Amazon CloudWatch, and uses this information to perform autonomous root cause analysis and provide mitigation plans during operational incidents. Anything that is in CloudWatch and Performance Insights is available to AWS DevOps Agent.

Frontier agent for more secure applications

AWS Security Agent interacts with the applications that use AWS Databases to find vulnerabilities, and helps you ship secure applications. It can review designs before implementation using your defined security requirements and organizational standards.

AWS Security Agent also analyzes code in pull requests and provides developers with tailored remediation guidance. It can pentest applications on demand by discovering and validating vulnerabilities through tailored attack scenarios using your application context, and offers ready-to-implement fixes that accelerate remediation. As new features such as deletion protection are released, they will be automatically enabled with AWS Security Agent.

Frontier development agent that extends your flow

The Kiro autonomous agent can design, provision, and optimize database infrastructure through natural language. It integrates with MCP servers to bridge the gap between AI reasoning and technical control of databases.

- Infrastructure as Code (IaC) Generation: Kiro can take a high-level requirement (e.g., "deploy a 3-tier application") and automatically generate production-ready Terraform, CDK, or CloudFormation scripts to provision the database with best practices for security and networking.

- Performance Optimization: Kiro can analyze real-time performance data and suggest optimizations such as changing instance types (e.g., rightsizing from a t3.medium to t3.small).

- DBA Task Automation: Using the Kiro CLI, database professionals can use natural language to explore schemas or write complex SQL queries.

Components of agentic AI applications

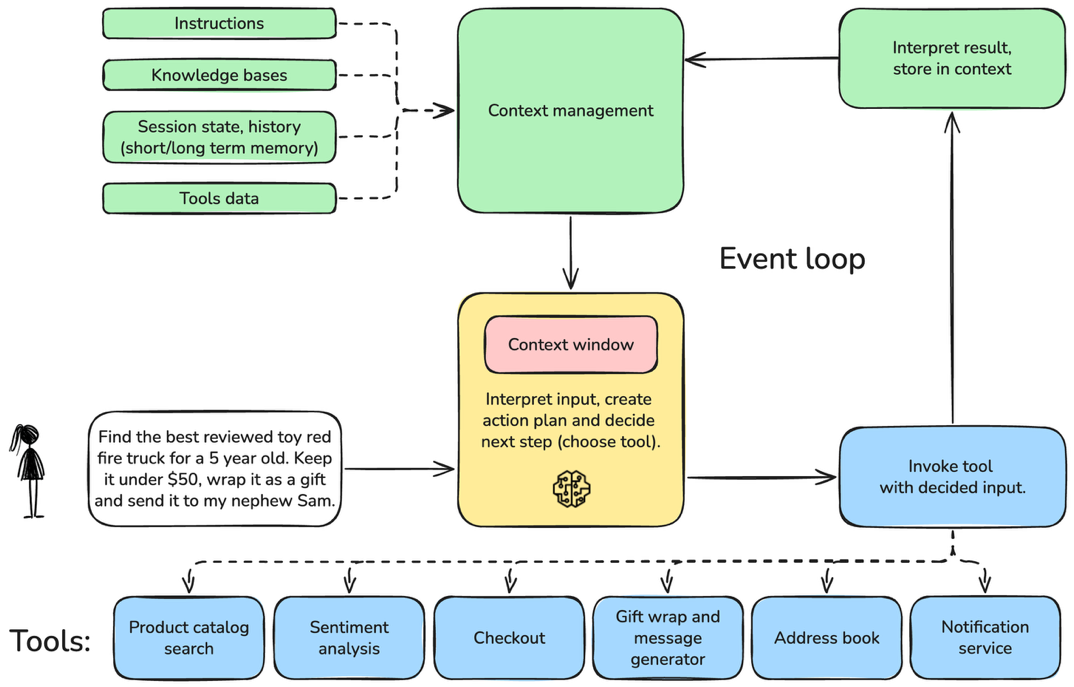

An agentic AI application consists of three components.

- The context management component gathers and filters relevant information, such as conversation state, stored memories, and tool outputs, to provide the most useful context for each model interaction.

- The reasoning and planning component interprets user intent, incorporates the available context, and determines whether to respond directly or to create and execute a plan of actions, updating memory as progress is made.

- The tool or action execution component carries out those planned actions using available tools and feeds the results back into context management, enabling continuous refinement until the user’s task is completed.
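The three components above can be sketched as a small loop. This is an illustrative outline only: `Context`, `plan`, `TOOLS`, and `run_agent` are hypothetical names, and the rule-based `plan` function is a stand-in for a real model call, which this sketch does not make.

```python
from dataclasses import dataclass, field


@dataclass
class Context:
    """Context management: holds state and filters it for each interaction."""
    conversation: list = field(default_factory=list)
    memory: dict = field(default_factory=dict)
    tool_outputs: list = field(default_factory=list)

    def build(self, max_items=5):
        # Keep only the most recent items so the context stays small.
        return {
            "conversation": self.conversation[-max_items:],
            "memory": dict(self.memory),
            "tool_outputs": self.tool_outputs[-max_items:],
        }


def plan(user_input, context):
    """Reasoning and planning: rule-based stand-in for a model call (assumption)."""
    if context["tool_outputs"]:
        # A tool already ran in this turn, so respond with its result.
        return {"action": "respond", "text": f"Result: {context['tool_outputs'][-1]}"}
    if user_input.startswith("add "):
        a, b = user_input.split()[1:3]
        return {"action": "tool", "name": "add", "args": (int(a), int(b))}
    return {"action": "respond", "text": "I can only add two integers."}


# Tool execution: a registry of callable tools (a single toy tool here).
TOOLS = {"add": lambda a, b: a + b}


def run_agent(user_input, ctx, max_steps=3):
    ctx.conversation.append(("user", user_input))
    ctx.tool_outputs.clear()  # tool outputs are scoped to the current turn
    for _ in range(max_steps):
        step = plan(user_input, ctx.build())
        if step["action"] == "respond":
            ctx.conversation.append(("assistant", step["text"]))
            return step["text"]
        # Execute the planned tool and feed the result back into context.
        result = TOOLS[step["name"]](*step["args"])
        ctx.tool_outputs.append(result)
        ctx.memory["last_result"] = result  # update memory as progress is made
    return "Stopped: step limit reached."
```

The `max_steps` bound is a design choice in this sketch to keep the loop from running indefinitely if the planner never decides to respond.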

Practitioner's guide to agentic AI

The session teaches you the skills needed to deploy end-to-end agentic AI applications using your most valuable data, focusing on data management processes like MCP servers and Retrieval Augmented Generation (RAG). It provides concepts that can be applied to customizing agentic AI applications. You will learn best practice architectures using databases along with data lake, governance, and data quality concepts, and how Amazon Bedrock AgentCore and Bedrock Knowledge Bases tie solution components together.

Symbolic AI in the age of LLMs

While generative AI captures headlines, the untapped potential lies in combining it with decades-proven symbolic AI techniques. This session explores how you can use symbolic AI techniques, such as ontologies and logic-based reasoning, to build knowledge graphs and enhance your AI capabilities. It dives deep into what ontologies look like, where semantics comes from, and what it means to build working knowledge graphs. You will learn concrete strategies for defining and adopting ontologies and how to use reasoning for your benefit.

Build AI agents for database operations monitoring

When databases can discover, recommend, and optimize on their own, they stop being reactive systems and become intelligent partners. You will learn how to build intelligent AI agents that detect performance bottlenecks, surface query pattern issues, and suggest optimization strategies for AWS Databases, including Amazon Aurora and Amazon RDS.

Optimize agentic AI apps with semantic caching

Multi-agent AI systems now orchestrate complex workflows requiring frequent LLM calls. In this session, you will learn how to reduce latency from seconds to single-digit milliseconds in agentic AI applications using semantic caching, powered by Amazon ElastiCache for Valkey vector search, while also reducing the cost of production workloads. By implementing semantic caching in agentic architectures like RAG-powered assistants and autonomous agents, you can create performant and cost-effective production-scale agentic AI solutions.
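The idea behind semantic caching can be shown with a minimal in-memory sketch. It does not use ElastiCache for Valkey: the `SemanticCache` class, the bag-of-words `embed` function, and the `0.8` similarity threshold are all assumptions chosen for illustration. A real deployment would use an embedding model and a vector search index in place of the linear scan.

```python
import math


def embed(text):
    """Toy embedding: bag-of-words counts (a real system would call an embedding model)."""
    vec = {}
    for word in text.lower().split():
        word = word.strip("?.,!")
        if word:
            vec[word] = vec.get(word, 0) + 1
    return vec


def cosine(a, b):
    """Cosine similarity between two sparse vectors stored as dicts."""
    dot = sum(count * b.get(word, 0) for word, count in a.items())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class SemanticCache:
    def __init__(self, threshold=0.8):
        self.threshold = threshold
        self.entries = []  # list of (embedding, response) pairs

    def get(self, prompt):
        """Return the cached response for the most similar prompt, or None."""
        query = embed(prompt)
        best_score, best_response = 0.0, None
        for vec, response in self.entries:
            score = cosine(query, vec)
            if score > best_score:
                best_score, best_response = score, response
        return best_response if best_score >= self.threshold else None

    def put(self, prompt, response):
        self.entries.append((embed(prompt), response))


def answer(prompt, cache, llm):
    """Serve from the cache on a semantic hit; otherwise call the model and store the result."""
    cached = cache.get(prompt)
    if cached is not None:
        return cached
    response = llm(prompt)
    cache.put(prompt, response)
    return response
```

Here `llm` is any callable that takes a prompt and returns a string. The threshold controls the trade-off: a higher value reduces the risk of returning an answer to a different question, while a lower value increases the hit rate.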