Containers

Fully private local clusters for Amazon EKS on AWS Outposts powered by VPC Endpoints

Introduction

Recently, Amazon Elastic Kubernetes Service (Amazon EKS) added support for local clusters on AWS Outposts racks. In a nutshell, this deployment option allows our customers to run the entire Kubernetes cluster (i.e., control plane and worker nodes) on AWS Outposts racks. The rationale behind this deployment option is often described as static stability.

In practice, static stability means continued operations during temporary, unplanned disconnects of the AWS Outposts rack’s network connection from its parent Region.

Outposts remains a fully connected offering that acts as an extension of the AWS Cloud in your data center. If the network connection between your Outposts rack and its parent Region drops, your top priority should be troubleshooting your service link connection to restore connectivity as quickly as possible.

If your use case requires a primarily disconnected environment, or your environment lacks highly available, reliable connectivity to the AWS parent Region, we recommend considering Amazon EKS Anywhere or Amazon EKS Distro as deployment options.

In this post, we’ll show two design patterns for Amazon EKS local clusters on AWS Outposts: pattern A and pattern B. We’ll focus on pattern B, which deploys a local cluster that communicates privately with the mandatory in-region AWS service endpoints through Amazon Virtual Private Cloud (VPC) endpoint services. Privately means that all network traffic stays within the VPC boundaries.

We’ll also provide a high-level walkthrough and an eksctl configuration YAML file that builds the cluster.

This post assumes solid familiarity with AWS Outposts and local clusters; here’s a good place to start, followed by a deeper dive.

The challenge

At launch, Amazon EKS local clusters for AWS Outposts required public access to reach the in-region AWS service endpoints. Customers therefore had to create an in-region public subnet and deploy a NAT Gateway that routes traffic to the AWS service endpoints publicly through an Amazon VPC Internet Gateway (IGW). Many customers told us that they prefer to use their own existing on-premises internet egress paths.

At the same time, their internet egress security posture often does not permit them to deploy a VPC with an Internet Gateway.

With VPC endpoints, customers can remove this constraint and let traffic reach the mandatory in-region AWS service endpoints securely and privately.

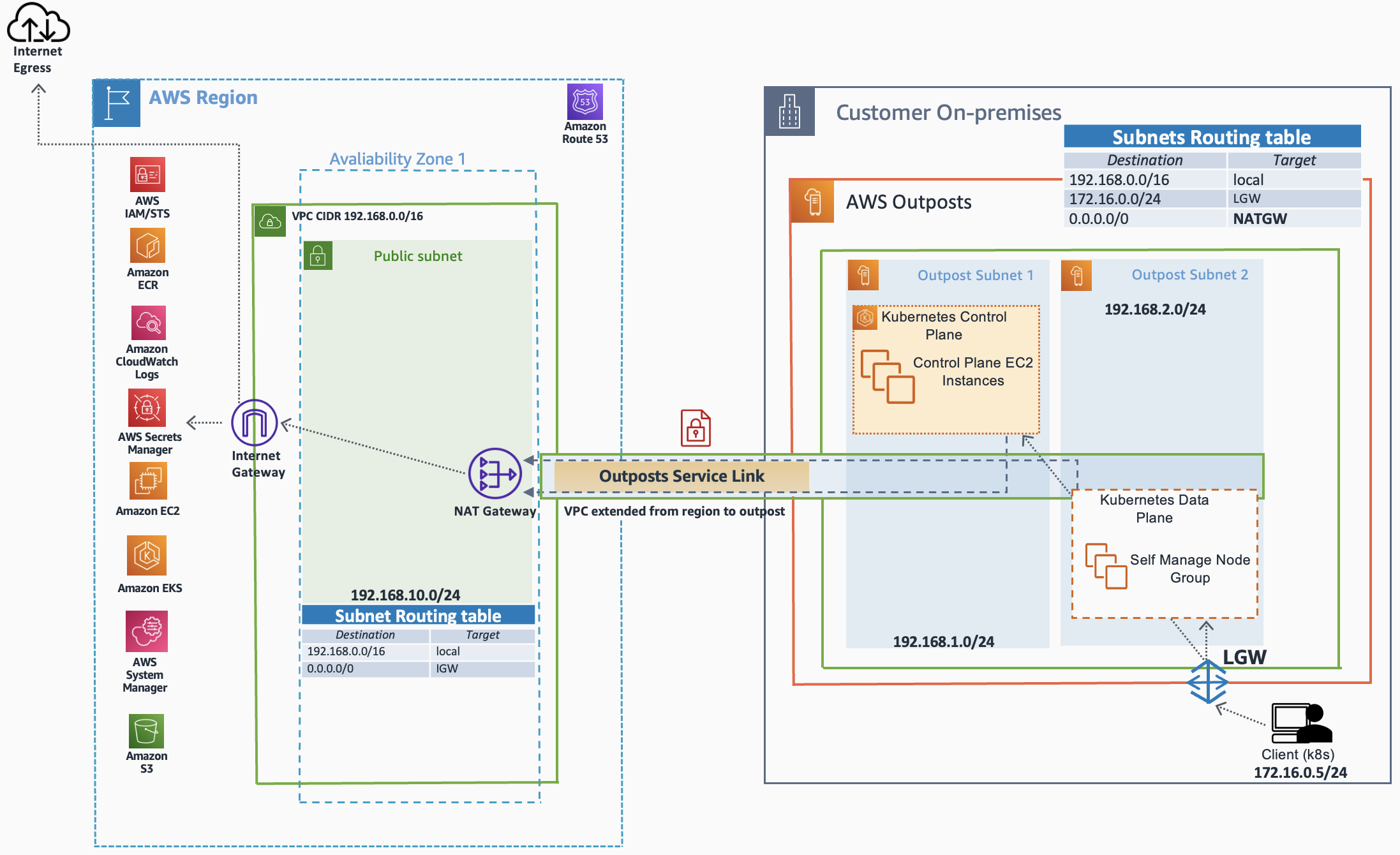

The following diagram depicts the service endpoints access (public, via in-region NAT Gateway):

Design Pattern A – Public Egress

Solution overview

Working backward from our customers, we recently added support for fully private Amazon EKS local clusters on Outposts leveraging VPC endpoints.

This means you’re no longer required to create an in-region public subnet and NAT Gateway just to connect the local cluster to its mandatory regional service endpoints.

In simple words, this new design pattern allows the in-Outpost Kubernetes control plane and data plane nodes to communicate privately with the regional AWS service endpoints. This works only if the VPC is deployed with the required VPC endpoints before you deploy the local cluster.
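Because the endpoints must exist before the cluster does, it helps to enumerate them up front. The following Python sketch builds fully qualified endpoint service names for a Region. The service list is an assumption based on our reading of the Amazon EKS User Guide for local clusters without outbound internet access at the time of writing; verify it against the current documentation before creating endpoints. The helper name and the example Region are hypothetical.

```python
"""Sketch: endpoint service names a fully private local cluster needs.

ASSUMPTION: the service list mirrors the Amazon EKS User Guide for local
clusters without outbound internet access at the time of writing; check the
current documentation for your Region before creating endpoints.
"""

# Interface endpoints (network interfaces in the in-region subnet).
INTERFACE_SERVICES = [
    "ssm", "ssmmessages", "ec2messages",  # AWS Systems Manager
    "ec2",                                # Amazon EC2
    "secretsmanager",                     # AWS Secrets Manager
    "logs",                               # Amazon CloudWatch Logs
    "sts",                                # AWS STS
    "ecr.api", "ecr.dkr",                 # Amazon ECR
]

# Gateway endpoint (route-table based rather than an interface).
GATEWAY_SERVICES = ["s3"]


def endpoint_service_names(region: str) -> dict:
    """Return fully qualified endpoint service names grouped by endpoint type."""
    def qualify(service: str) -> str:
        return f"com.amazonaws.{region}.{service}"

    return {
        "Interface": [qualify(s) for s in INTERFACE_SERVICES],
        "Gateway": [qualify(s) for s in GATEWAY_SERVICES],
    }


if __name__ == "__main__":
    # us-west-2 is a placeholder; use your Outpost's parent Region.
    for endpoint_type, names in endpoint_service_names("us-west-2").items():
        for name in names:
            print(f"{endpoint_type:9} {name}")
```

The printed names are what you would pass as the service name when creating each endpoint in the VPC.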

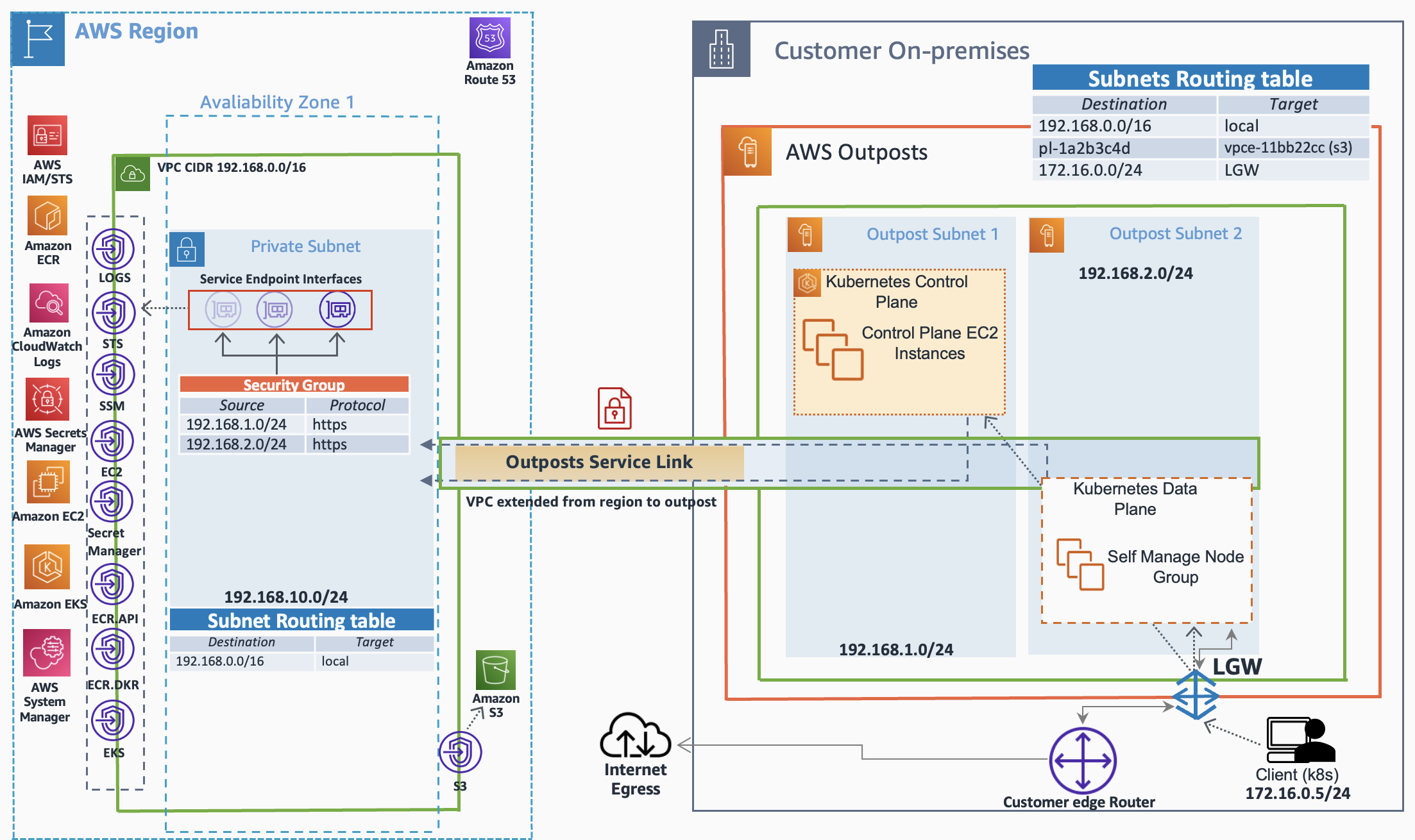

The following diagram depicts the private access (via in-region VPC Endpoint Services):

Design Pattern B – Fully Private

Looking closely at design pattern B, we observe the following:

- The dependency on an in-region public subnet with a NAT Gateway (pattern A) no longer exists

- In principle, the VPC in this design can be deployed without an attached Internet Gateway (IGW)

- Internet egress happens at the Outposts rack, which allows the use of existing on-premises upstream routing paths.

Let’s build pattern B.

Walkthrough

- Foundation: Deployment of the Amazon VPC and its requirements to support pattern B

- Local Clusters: Deployment of the Kubernetes local cluster into the designated VPC

- Validation: Probing the cluster status

Prerequisites

AWS Outposts

This walkthrough requires a fully operational AWS Outposts (rack-based) environment.

Specifically:

- The rack’s service link is up

- An Amazon VPC with all the required subnets, routing and VPC endpoints design (see foundation phase)

- Direct VPC routing and Local Gateway (LGW) are both configured.

- In our scenario, pattern B relies on direct VPC routing mode

- Internet egress follows the (current) on-premises upstream path (see foundation phase)

General

- An AWS Account

- Bastion host (on-premises LAN), deployed with:

- AWS Command Line Interface (AWS CLI), configured with the proper AWS IAM Credentials to bootstrap the environment

- kubectl (vended by AWS)

- Amazon EKS command line tool (eksctl), installed and configured.

- Internet egress connectivity

1. Foundation

For this walkthrough, we use an Outposts and VPC design that is already created and configured. We still describe the design in detail in the following sub-sections.

VPC, endpoints, and subnets

Pattern B is based on a VPC design which consists of:

- An Amazon VPC (CIDR: 192.168.0.0/16)

- Two private subnets (in-outpost)

- Subnet outposts 1 (192.168.1.0/24), which hosts the Kubernetes cluster control plane

- Subnet outposts 2 (192.168.2.0/24), which hosts the Kubernetes cluster data plane

- Single private subnet (in-region), which hosts the service endpoint interfaces


| Note: Make sure that this in-region subnet is in the same Availability Zone (AZ) as the one your Outpost is associated with. Otherwise, a failure in an in-region AZ that is not aligned with the Outpost’s AZ could cause your local cluster to fail.

- Mandatory Interface VPC Endpoint Services

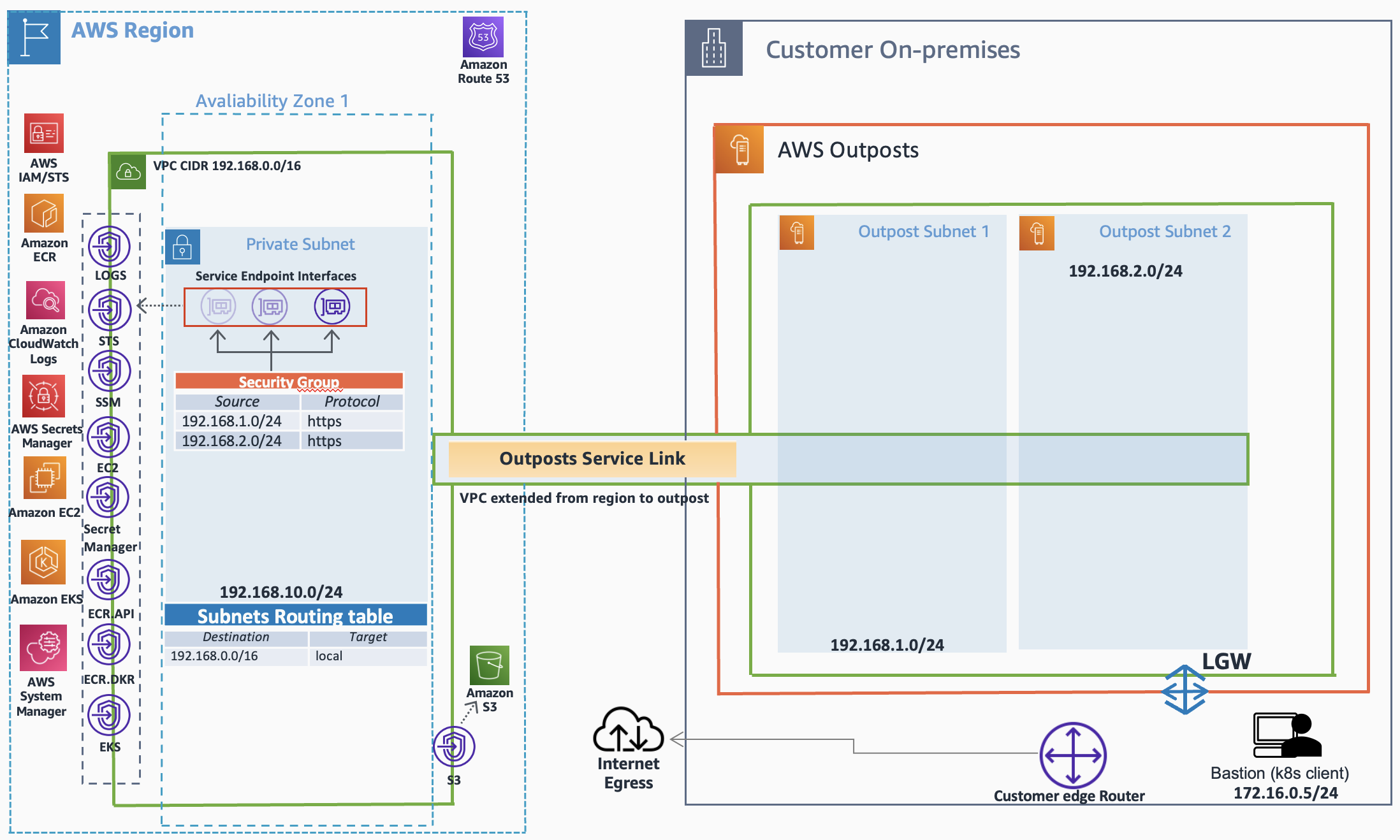

VPC design foundation
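Before creating the subnets, you can sanity-check the plan. The following Python sketch checks that every subnet sits inside the VPC CIDR, that no two subnets overlap, and that every subnet (including the in-region endpoint subnet) is in the Outpost’s AZ. The VPC and Outposts subnet CIDRs come from this post; the in-region subnet CIDR (192.168.3.0/24), the AZ names, and the subnet labels are hypothetical placeholders.

```python
"""Sketch: sanity-check the pattern B subnet plan before creating it.

The VPC and Outposts subnet CIDRs come from this post; the in-region subnet
CIDR and all Availability Zone names are HYPOTHETICAL placeholders.
"""
import ipaddress
from itertools import combinations

VPC_CIDR = ipaddress.ip_network("192.168.0.0/16")
OUTPOST_AZ = "us-west-2a"  # hypothetical: the AZ your Outpost is associated with

SUBNETS = {
    # name: (CIDR, Availability Zone)
    "outpost-1-control-plane": ("192.168.1.0/24", OUTPOST_AZ),
    "outpost-2-data-plane": ("192.168.2.0/24", OUTPOST_AZ),
    "region-endpoints": ("192.168.3.0/24", "us-west-2a"),  # hypothetical CIDR
}


def check_subnet_plan(vpc, subnets, outpost_az):
    """Return a list of problems; an empty list means the plan looks consistent."""
    problems = []
    nets = {name: ipaddress.ip_network(cidr) for name, (cidr, _) in subnets.items()}
    for name, net in nets.items():
        if not net.subnet_of(vpc):
            problems.append(f"{name} ({net}) is outside the VPC CIDR {vpc}")
    for (a, net_a), (b, net_b) in combinations(nets.items(), 2):
        if net_a.overlaps(net_b):
            problems.append(f"{a} overlaps {b}")
    for name, (_, az) in subnets.items():
        # The in-region endpoint subnet must share the Outpost's AZ so that a
        # failure in an unrelated AZ cannot take down the local cluster.
        if az != outpost_az:
            problems.append(f"{name} is in {az}, not the Outpost AZ {outpost_az}")
    return problems


if __name__ == "__main__":
    issues = check_subnet_plan(VPC_CIDR, SUBNETS, OUTPOST_AZ)
    print("\n".join(issues) if issues else "subnet plan looks consistent")
```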

Routing and access control

To successfully deploy the local cluster, the following routing primitives must be configured and functioning:

- All the VPC subnets (in-outpost and in-region) are part of the VPC local (implicit) routing.

- The AWS Outposts LGW route table is associated with the created VPC (vpc-id).

- The route table of the in-outpost VPC private subnets contains an entry that routes traffic back to the bastion host network through the local gateway (see the following diagram).

- The on-premises local network (where the bastion host resides) is able to route to the outpost VPC range.

- The upstream internet egress is using the existing on-premises path(s).

- The service endpoint interfaces (in-region) security group is configured to allow access from the in-outpost subnets.

VPC design foundation (Routing)
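To see how these primitives combine, the following Python sketch mimics the longest-prefix-match lookup of the in-outpost subnets’ route table. The bastion network (10.10.0.0/24), the default route through the LGW for on-premises internet egress, the example in-region endpoint address, and the target labels are all hypothetical assumptions for illustration.

```python
"""Sketch: how the in-outpost route table steers pattern B traffic.

ASSUMPTIONS: the on-premises bastion network (10.10.0.0/24), the default
route, and the target labels are hypothetical. A VPC route table picks the
most specific (longest-prefix) matching route, which this sketch mimics.
"""
import ipaddress

IN_OUTPOST_ROUTES = [
    ("192.168.0.0/16", "local"),            # implicit VPC route: in-region endpoints
    ("10.10.0.0/24", "lgw (bastion)"),      # back to the on-prem bastion LAN
    ("0.0.0.0/0", "lgw (on-prem egress)"),  # internet via the existing on-prem path
]


def next_hop(destination: str, routes=IN_OUTPOST_ROUTES) -> str:
    """Return the target of the longest-prefix route matching destination."""
    addr = ipaddress.ip_address(destination)
    matches = [
        (ipaddress.ip_network(cidr), target)
        for cidr, target in routes
        if addr in ipaddress.ip_network(cidr)
    ]
    if not matches:
        raise LookupError(f"no route to {destination}")
    # The most specific prefix wins, as in a VPC route table.
    return max(matches, key=lambda match: match[0].prefixlen)[1]


if __name__ == "__main__":
    for dst in ("192.168.3.25", "10.10.0.5", "203.0.113.10"):
        print(f"{dst:>14} -> {next_hop(dst)}")
```

Traffic to an endpoint interface in the in-region subnet stays on the implicit local route, so it never needs a NAT Gateway or IGW.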

2. Local clusters

Before deploying the Amazon EKS cluster, let’s probe the Outposts rack to learn which Amazon Elastic Compute Cloud (Amazon EC2) instance types are available.

Get the available Amazon EC2 instances

On the bastion host:

In your capacity planning, secure three slotted Amazon EC2 instances for the entire lifetime of the cluster to satisfy the control plane requirements. This does not include the data plane (worker) nodes.

In our example, we use m5.xlarge instance types for the control plane and c5.large for the data plane/worker nodes.
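As a scripted alternative to eyeballing the CLI output, the following Python sketch collects the instance types that `get_outpost_instance_types` reports and flags any of the types used in this post that are missing. It assumes boto3 is installed and configured on the bastion host, and the Outpost ID is a placeholder. Note that this API lists configured instance types, not free slots, so the three control plane slots still have to be confirmed through capacity planning.

```python
"""Sketch: confirm the Outpost offers the instance types this post uses.

ASSUMPTIONS: boto3 is installed and configured on the bastion host, and the
Outpost ID is a placeholder. get_outpost_instance_types lists the instance
types configured on the Outpost; it does not report free slot capacity.
"""

REQUIRED = {"m5.xlarge": "control plane", "c5.large": "data plane"}


def offered_types(pages):
    """Collect instance type names from get_outpost_instance_types pages."""
    return {item["InstanceType"] for page in pages for item in page.get("InstanceTypes", [])}


def missing_types(offered, required=REQUIRED):
    """Return required instance types that the Outpost does not offer."""
    return sorted(t for t in required if t not in offered)


def fetch_pages(outpost_id):
    """Yield every response page, following NextToken until it is absent."""
    import boto3  # imported lazily so the helpers above work without boto3

    client = boto3.client("outposts")
    kwargs = {"OutpostId": outpost_id}
    while True:
        page = client.get_outpost_instance_types(**kwargs)
        yield page
        if not page.get("NextToken"):
            break
        kwargs["NextToken"] = page["NextToken"]


if __name__ == "__main__":
    missing = missing_types(offered_types(fetch_pages("<outpost-id>")))
    if missing:
        print("Outpost does not offer:", ", ".join(missing))
    else:
        print("All required types offered; still reserve 3 control plane slots.")
```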

Create the cluster

On the bastion host, create the eksctl YAML file:

| Note: Replace all fields marked with <> with your own real-world IDs.
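If you prefer to generate the configuration programmatically, the following Python sketch builds an eksctl `ClusterConfig` for pattern B and writes it as JSON, which is valid YAML. The field names reflect our understanding of eksctl’s `v1alpha5` schema and its AWS Outposts support and should be verified against the eksctl documentation for your version; depending on that version, additional fields may be required. All `<...>` values are placeholders, and the subnet keys, node group name, and `desiredCapacity` are hypothetical.

```python
"""Sketch: generate an eksctl ClusterConfig for the pattern B local cluster.

ASSUMPTIONS: field names follow eksctl's v1alpha5 schema and its AWS
Outposts support as we understand it; verify them for your eksctl version.
All <...> values are placeholders; subnet keys, the node group name, and
desiredCapacity are hypothetical.
"""
import json


def build_cluster_config(region, vpc_id, cp_subnet_id, dp_subnet_id, outpost_arn):
    """Return the cluster definition as a plain dictionary."""
    return {
        "apiVersion": "eksctl.io/v1alpha5",
        "kind": "ClusterConfig",
        "metadata": {"name": "ekslocal", "region": region},
        "vpc": {
            "id": vpc_id,  # existing VPC with the endpoints already in place
            "subnets": {
                "private": {
                    "outpost-subnet-1": {"id": cp_subnet_id},  # intended for control plane
                    "outpost-subnet-2": {"id": dp_subnet_id},  # intended for data plane
                }
            },
        },
        # Placing the control plane on the Outpost is what makes this a local cluster.
        "outpost": {
            "controlPlaneOutpostARN": outpost_arn,
            "controlPlaneInstanceType": "m5.xlarge",
        },
        "nodeGroups": [
            {
                "name": "ekslocal-ng",
                "instanceType": "c5.large",
                "desiredCapacity": 2,
                "privateNetworking": True,
                "subnets": ["outpost-subnet-2"],
            }
        ],
    }


if __name__ == "__main__":
    cfg = build_cluster_config(
        region="<region>",
        vpc_id="<vpc-id>",
        cp_subnet_id="<outpost-subnet-1-id>",
        dp_subnet_id="<outpost-subnet-2-id>",
        outpost_arn="<outpost-arn>",
    )
    # JSON is valid YAML, so the file can be passed to eksctl with -f.
    with open("ekslocal.yaml", "w") as fh:
        json.dump(cfg, fh, indent=2)
```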

Create the cluster:

Cluster creation takes a few minutes. You can query the status of your cluster:
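To avoid re-running the query by hand, the following Python sketch polls the cluster status until it becomes `ACTIVE`, stopping early on `FAILED` or after a timeout. It assumes the AWS CLI is installed and configured on the bastion host; the timeout and interval values are arbitrary choices, and the status getter is injectable so the loop can be exercised without AWS access.

```python
"""Sketch: poll the local cluster status until it is ACTIVE.

ASSUMPTIONS: the AWS CLI is installed and configured on the bastion host;
the timeout and polling interval are arbitrary example values.
"""
import subprocess
import time


def aws_cluster_status(name: str) -> str:
    """Read the cluster status string via `aws eks describe-cluster`."""
    result = subprocess.run(
        ["aws", "eks", "describe-cluster", "--name", name,
         "--query", "cluster.status", "--output", "text"],
        check=True, capture_output=True, text=True,
    )
    return result.stdout.strip()


def wait_for_status(get_status, target="ACTIVE", timeout=3600, interval=30, sleep=time.sleep):
    """Poll get_status() until it returns target; raise on FAILED or timeout."""
    waited = 0
    while True:
        status = get_status()
        if status == target:
            return status
        if status == "FAILED":
            raise RuntimeError("cluster creation failed")
        if waited >= timeout:
            raise TimeoutError(f"cluster still {status} after {waited}s")
        sleep(interval)
        waited += interval


if __name__ == "__main__":
    print(wait_for_status(lambda: aws_cluster_status("ekslocal")))
```

The AWS CLI also ships a built-in waiter, `aws eks wait cluster-active --name ekslocal`, which is a simpler option when you don’t need custom handling.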

3. Validation

To access the Kubernetes API server endpoint (private access only), the bastion host IP address must be allowed through a simple inbound HTTPS (TCP 443) rule. Edit the cluster security group (eks-cluster-sg-ekslocal-*) and add the inbound rule.
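The same rule can be added with boto3, as in the following Python sketch. It assumes boto3 is installed and configured, that exactly one security group matches the `eks-cluster-sg-ekslocal-*` name pattern from this post (EC2 filters accept `*` wildcards), and that the bastion host has an IPv4 address; the bastion IP and the rule description are placeholders.

```python
"""Sketch: allow HTTPS from the bastion host to the local cluster API endpoint.

ASSUMPTIONS: boto3 is installed and configured; the bastion IP and rule
description are placeholders. The group-name filter relies on the
eks-cluster-sg-<cluster-name>-* naming used in this post.
"""
import ipaddress


def https_ingress_permissions(bastion_ip, description="bastion host API access"):
    """Build an IpPermissions list that opens TCP 443 to a single IPv4 host."""
    cidr = f"{ipaddress.IPv4Address(bastion_ip)}/32"  # raises ValueError on bad input
    return [
        {
            "IpProtocol": "tcp",
            "FromPort": 443,
            "ToPort": 443,
            "IpRanges": [{"CidrIp": cidr, "Description": description}],
        }
    ]


def allow_bastion(bastion_ip, cluster_name="ekslocal"):
    """Find the cluster security group and add the HTTPS inbound rule."""
    import boto3  # imported lazily so the builder above works without boto3

    ec2 = boto3.client("ec2")
    groups = ec2.describe_security_groups(
        Filters=[{"Name": "group-name", "Values": [f"eks-cluster-sg-{cluster_name}-*"]}]
    )["SecurityGroups"]
    if len(groups) != 1:
        raise RuntimeError(f"expected one cluster security group, found {len(groups)}")
    ec2.authorize_security_group_ingress(
        GroupId=groups[0]["GroupId"],
        IpPermissions=https_ingress_permissions(bastion_ip),
    )


if __name__ == "__main__":
    allow_bastion("<bastion-ip>")  # replace with the bastion host's IPv4 address
```

Scoping the rule to a single /32 keeps the API endpoint reachable only from the bastion host.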

From the bastion host:

| Note: The control plane nodes will appear in the NotReady state. You can safely ignore this, because no Container Network Interface (CNI) plugins are installed on the control plane nodes.
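Because NotReady is expected on the control plane nodes, a quick filter helps you spot real problems on the worker nodes. The following Python sketch reads `kubectl get nodes` output and reports worker nodes that are not Ready. It assumes the default column layout (NAME, STATUS, ROLES, ...) and that control plane nodes carry a `control-plane` role in the ROLES column; check this against your own output before relying on it.

```python
"""Sketch: report worker nodes that are not Ready, ignoring control plane nodes.

ASSUMPTIONS: input is the default `kubectl get nodes` table (NAME STATUS
ROLES ...), and control plane nodes list `control-plane` under ROLES.
"""
import sys


def unready_workers(get_nodes_output: str) -> list:
    """Return names of non-control-plane nodes whose STATUS lacks Ready."""
    problems = []
    for line in get_nodes_output.strip().splitlines()[1:]:  # skip the header row
        if not line.strip():
            continue
        name, status, roles = line.split()[:3]
        if "control-plane" in roles.split(","):
            continue  # NotReady is expected here: no CNI on control plane nodes
        if "Ready" not in status.split(","):
            problems.append(name)
    return problems


if __name__ == "__main__":
    # Usage: kubectl get nodes | python3 check_nodes.py
    bad = unready_workers(sys.stdin.read())
    print("workers not Ready: " + ", ".join(bad) if bad else "all worker nodes Ready")
```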

Considerations

- AWS Outposts remains a fully connected offering.

Therefore, we recommend following these guidelines to prepare for network disconnects and interruptions: https://docs.aws.amazon.com/eks/latest/userguide/eks-outposts-network-disconnects.html

- Add-ons: At the time of writing, local clusters support self-managed add-ons. CoreDNS, kube-proxy, and the Amazon VPC CNI plugin (vpc-cni) are installed by default.

- At the time of writing, local clusters support self-managed node groups with the Amazon EKS-optimized Amazon Linux AMI.

Cleanup

Destroy the cluster

From the bastion host:
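If you scripted the earlier steps, the following Python sketch runs the teardown the same way by invoking `eksctl delete cluster` with the config file and `--wait`, so the call returns only after the deletion completes. The file name `ekslocal.yaml` is a hypothetical placeholder for whatever config you used at creation time.

```python
"""Sketch: delete the local cluster with eksctl from the bastion host.

ASSUMPTION: "ekslocal.yaml" is a hypothetical name for the config file used
to create the cluster; eksctl must be installed and configured.
"""
import subprocess


def delete_cluster_command(config_path: str, wait: bool = True) -> list:
    """Build the eksctl command that deletes the cluster defined in config_path."""
    cmd = ["eksctl", "delete", "cluster", "-f", config_path]
    if wait:
        cmd.append("--wait")  # block until all resources have been deleted
    return cmd


if __name__ == "__main__":
    subprocess.run(delete_cluster_command("ekslocal.yaml"), check=True)
```

The inbound rule added for the bastion host belongs to the cluster security group, so review any other manually created resources (such as the VPC endpoints) separately.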

Conclusion

In this post, we showed you how to deploy fully private local clusters for Amazon EKS on AWS Outposts and discussed two architecture patterns. We’re very excited to bring VPC endpoint services into local clusters, unlocking many customer workloads. This is just the start: we are working diligently to add more capabilities to local clusters to address your needs.