Containers

Policy-based countermeasures for Kubernetes – Part 1

Choosing the right policy-as-code solution

This is Part 1 in a two part series where we discuss policy-as-code solutions.

As more organizations adopt containerization as a delivery strategy, the need for automated security, compliance, and privacy controls that detect, prevent, reduce, and counteract known and unknown threats, has increased. Out of this increased need for controls, policy-as-code (PaC) solutions have emerged to meet these demands. PaC solutions provide organizations with the ability to manage policy-as-code artifacts. These artifacts, in conjunction with policy engines, implement controls (a.k.a. countermeasures) as code artifacts. With this approach, organizations can reuse DevOps and GitOps strategies to manage and apply these countermeasures across fleets of container clusters.

Enforcing behaviors and limiting the scope of changes within Kubernetes clusters is a common challenge for customers. Using policies to apply rules-based control of Kubernetes resources is a dynamic and recognized approach to managing Kubernetes configuration. Policy enablement is a common concern among teams looking to automate the management of Kubernetes. PaC solutions provide guardrails to guide cluster users, and prevent unwanted behaviors. PaC solutions are the best way to policy-enable Kubernetes clusters, and provide prescribed and automated controls.

According to the 2020 AWS Container Security Survey, 46% of respondents indicated that they had yet to implement a “general policy management strategy” for Kubernetes. While the survey did not get into why this was the case, exploration of possible solutions is a good first step.

For Kubernetes, there are several PaC solutions available in the open-source software (OSS) community. The following examples are a few solutions, with their respective Cloud Native Computing Foundation (CNCF) project status (if applicable). In this Part 1 of our series, we focus on three solutions that are associated with Open Policy Agent.

- Open Policy Agent (a.k.a. OPA or OPA/classic) – https://www.openpolicyagent.org/

- CNCF Project Status – Graduated

- OPA/gatekeeper (a.k.a. Gatekeeper) – https://github.com/open-policy-agent/gatekeeper

- Part of OPA, CNCF Project Status – Graduated

- Kyverno – https://kyverno.io/ (covered in Part 2)

- CNCF project status – Sandbox

- k-rail – https://github.com/cruise-automation/k-rail (covered in Part 2)

- MagTape – https://github.com/tmobile/magtape

As we explore the PaC use cases, we will discuss the characteristics of each solution and point out aspects that can help organizations choose the best fit for their requirements.

All of the preceding solutions integrate with the Kubernetes API server using admission controllers and webhooks. These admission controllers are enabled for all Amazon Elastic Kubernetes Service (EKS) clusters; moreover, all of these solutions work well to validate EKS (EC2 and AWS Fargate) inbound Kubernetes API server requests.

Kubernetes integration and operation

In Kubernetes, the control plane is responsible for maintaining the desired state of a cluster. Requests are made to the control plane via calls to the Kubernetes API server. Valid changes are persisted into etcd by the API server. In fact, only the API server interacts directly with etcd. Once the desired state changes are in etcd, the API server, along with controllers and kubelets, ensure that actual cluster state reflects desired cluster state, which is stored in etcd.

Kubernetes admission controllers are a means by which the API server request flow can be intercepted and extended for operational needs, such as enforcing security controls and best practices.

According to Kubernetes documentation:

An admission controller is a piece of code that intercepts requests to the Kubernetes API server prior to persistence of the object, but after the request is authenticated and authorized.

As seen in the flow following diagram, PaC solutions work with the mutating and validating admission controllers in the API server request flow. Every Kubernetes cluster state change request goes through the API server request flow. Successful requests are persisted into etcd in the cluster control plane.

The PaC solutions can mutate inbound API server request JSON payloads, before Kubernetes object schema validation is performed. After Kubernetes object schema validation is performed, the PaC solutions policy engines can validate the inbound request, by matching policies to the request JSON payload, and using the policies to verify correct data in the payload. All of the preceding solutions are installed within the cluster.

Kubernetes API server webhooks for mutating and validating API server requests can be configured to use services outside of the respective Kubernetes cluster, as seen in this blog: https://aws.amazon.com/blogs/containers/building-serverless-admission-webhooks-for-kubernetes-with-aws-sam/

PaC solutions are integrated to a Kubernetes cluster API server, as an admission webhook target, via the Kubernetes ValidatingWebhookConfiguration resource. The following is an example configuration.

apiVersion: admissionregistration.k8s.io/v1

kind: ValidatingWebhookConfiguration

metadata:

name: policy-validating-webhook-cfg

webhooks:

- admissionReviewVersions:

- v1beta1

clientConfig:

caBundle: <ENCODED_CERTIFICATE_DATA>

service:

name: <SERVICE_NAME>

namespace: <SERVICE_NAMESPACE>

path: /<SERVICE_PATH>

port: <SERVICE_PORT>

failurePolicy: Ignore

matchPolicy: Equivalent

name: <WEBHOOK_NAME>

namespaceSelector: {}

objectSelector: {}

rules:

- apiGroups:

- <KUBERNETES_API_GROUP>

apiVersions:

- <API_VERSION>

operations:

- CREATE

- UPDATE

resources:

- <KUBERNETES_RESOURCE>

scope: '*'

sideEffects: NoneOnDryRun

timeoutSeconds: 3Note: At the time of this writing:

– OPA/gatekeeper mutating functionality is in an “experimental” status: https://github.com/open-policy-agent/gatekeeper/releases/tag/v3.3.0

– MagTape does not support mutating API server requests.

For the purposes of this post, we will examine validation policies and configurations from multiple solutions.

Guardrails and instant feedback

Using validating admission controllers and PaC solutions allows organizations to erect guardrails that prevent unwanted cluster changes, while still enabling users to move fast. The following example response is from a Kubernetes API server request. The request was a kubectl apply -f ... request to create a Kubernetes Deployment resource, where the source image registry in the container spec was not on a list of allowed registries.

Error from server ("DEPLOYMENT_INVALID": "BAD_REGISTRY/read-only-container:v0.0.1"

image is not sourced from an authorized registry. Resource ID (ns/name/kind):

"test/test/Deployment"): error when creating "tests/11-dep-reg-allow.yaml":

admission webhook "validating-webhook.openpolicyagent.org" denied the request:

"DEPLOYMENT_INVALID": "BAD_REGISTRY/read-only-container:v0.0.1" image is not

sourced from an authorized registry. Resource ID (ns/name/kind):

"test/test/Deployment"The API server sent the request payload to a PaC policy engine service installed in the cluster. The policy engine applied a matching policy and determined that the API server request payload was invalid. Matching and validation were based on the rules defined in the policy. The policy engine service responded to the API server with a boolean False, and a message of why the request was invalid. Then the API server (within 1-2 seconds) responded to the requester, in this case, the kubectl client. The end result was instant feedback from the cluster that (1) the request failed and (2) why it failed. This fast-failure with instant feedback improved the user-experience and helped the user quickly troubleshoot and correct their mistake.

Policy authoring – OPA

How policies are authored and applied are differentiators for the PaC solutions, and can be used to choose which solution best fits an organization’s capabilities and direction. OPA, OPA/Gatekeeper, and MagTape all use Rego. According to the OPA documentation:

Rego was inspired by Datalog, which is a well understood, decades old query language. Rego extends Datalog to support structured document models such as JSON.

Rego queries are assertions on data stored in OPA. These queries can be used to define policies that enumerate instances of data that violate the expected state of the system.

For some users, Rego presents somewhat of a learning curve, as its behavior is different than modern imperative languages, like C++, Java, or Golang. Another expectation for PaC solutions for Kubernetes is that the policies should be written in YAML, as that is the markup that is used to build Kubernetes resource configurations. For some, Rego is another language to learn that is not reusable outside of an OPA-based solution. For those reasons, some organizations have opted for a more Kubernetes native approach that is partially offered by Gatekeeper and fully offered by Kyverno, or an imperative language approach like that of Golang in the k-rail solution.

Kyverno and k-rail will be covered in Part 2 of this blog series.

Having worked with Rego for a few years, I am comfortable writing policies and libraries. However, I remember the initial friction of trying to read/write Rego. I have no Datalog experience and the Rego syntax resembled the programming languages with which I had written applications, enough so, that I had trouble understanding how different the lexicon and behaviors of Rego were. It was similar to the shift in thinking that I made moving from OOP to functional languages (a work still in progress). However, once I learned Rego, I realized how functional and powerful the language can be for authoring policies that are reusable across multiple use cases.

These days there are many resources available to help users new to Rego, so the learning curve is less than it was even a few years ago. And the OPA community is very helpful and responsive to folks trying to learn Rego, and succeed in writing policies. To help with writing Rego, OPA policy authors can use the following tools:

- The Rego Playground: an online REPL (read-evaluate-print-loop) environment – https://play.openpolicyagent.org/

- OPA General Tools: the OPA tools provide multiple ways (REPL, eval, server, etc.) to execute OPA – https://www.openpolicyagent.org/docs/latest/#running-opa

- VS Code OPA Plugin: https://marketplace.visualstudio.com/items?itemName=tsandall.opa

There are also several example repositories for OPA policies:

- EKS Best Practices Guide: https://github.com/aws/aws-eks-best-practices/tree/master/policies/opa

- Kubernetes CIS Benchmark example policies: https://github.com/raspbernetes/k8s-security-policies

- OPA Contributions/Examples: https://github.com/open-policy-agent/contrib

The following sample Rego policy is written to be used in an OPA implementation. This policy controls from where images used in a cluster are allowed to be sourced.

package kubernetes.admission

import data.lib.k8s.helpers as helpers

deny[msg] {

helpers.request_kind = "Deployment"

helpers.allowed_operations[helpers.request_operation]

image = helpers.deployment_containers[_].image

not reg_matches_any(image,valid_deployment_registries_v2)

msg = sprintf("%q: %q image is not sourced from an authorized registry. Resource ID (ns/name/kind): %q", [helpers.deployment_error,image,helpers.request_id])

}

valid_deployment_registries_v2 = {registry |

allowed = "GOOD_REGISTRY,VERY_GOOD_REGISTRY"

registries = split(allowed, ",")

registry = registries[_]

}

reg_matches_any(str, patterns) {

reg_matches(str, patterns[_])

}

reg_matches(str, pattern) {

contains(str, pattern)

}The preceding policy looks at Deployment resources in inbound API server request payloads, and determines if the image element in the container spec specifies images from allowed registries. The policy looks for the strings GOOD_REGISTRY and VERY_GOOD_REGISTRY in the image string. If those substrings are not found, then OPA returns an invalid status (boolean False) to the API server, which prevents the change from being persisted to etcd. The preceding policy also uses reusable “helper” functions from the following library.

package lib.k8s.helpers

allowed_operations = allowed_ops {

allowed_ops := {"CREATE", "UPDATE"}

}

request_operation = op {

op := input.request.operation

}

request_metadata_labels = labels {

labels := input.request.object.metadata.labels

}

request_spec_template_metadata_labels = labels {

labels := input.request.object.spec.template.metadata.labels

}

deployment_error = e {

e := "DEPLOYMENT_INVALID"

}

deployment_containers = c {

c := input.request.object.spec.template.spec.containers

}

required_deployment_labels = l {

l := {"app", "owner"}

}

deployment_role = dr {

dr := input.request.object.spec.template.metadata.annotations["iam.amazonaws.com/role"]

}

request_id = value {

value := sprintf("%v/%v/%v", [

request_namespace,

request_name,

request_kind

])

}

request_name = value {

value := input.request.object.metadata.name

}

else = value {

value := "NOT_FOUND"

}

request_namespace = value {

value := input.request.object.metadata.namespace

}

else = value {

value := "NOT_FOUND"

}

request_kind = value {

value := input.request.kind.kind

}

else = value {

value := "NOT_FOUND"

}Reusing common libraries reduces duplicative logic and enables policy authors to focus more on the control satisfied by the policy.

Policy authoring – Gatekeeper

Gatekeeper extends OPA by using Kubernetes Custom Resource Definitions (CRD) to allow users to manage policies, as a hierarchy of constraints and constraint templates. According to the Gatekeeper documentation:

Gatekeeper introduces the following functionality:

– An extensible, parameterized policy library

– Native Kubernetes CRDs for instantiating the policy library (aka “constraints”)

– Native Kubernetes CRDs for extending the policy library (aka “constraint templates”)

– Audit functionality

Gatekeeper separates policy authoring into two main parts:

- Contraint Templates

- Constraints

In Gatekeeper, Rego is written into constraint templates, which are based on the ConstraintTemplate CRD. This CRD, along with the Constraint CRD, is installed in the cluster with Gatekeeper. The following example Gatekeeper constraint template solves the allowed-registry use case. The constraint template is a cluster-wide resource, and specifies parameters, as properties, that can be supplied as arguments by constraints, that use, and are based on, this constraint template.

apiVersion: templates.gatekeeper.sh/v1beta1

kind: ConstraintTemplate

metadata:

name: k8sdepregistry

spec:

crd:

spec:

names:

kind: K8sDepRegistry

listKind: K8sDepRegistryList

plural: k8sdepregistry

singular: k8sdepregistry

validation:

# Schema for the `parameters` field

openAPIV3Schema:

properties:

allowedOps:

type: array

items:

type: string

allowedRegistries:

type: array

items:

type: string

errMsg:

type: string

targets:

- target: admission.k8s.gatekeeper.sh

libs:

- |

package lib.k8s.helpers

allowed_operations = value {

value := {"CREATE", "UPDATE"}

}

review_operation = value {

value := input.review.operation

}

review_metadata_labels = value {

value := input.review.object.metadata.labels

}

review_spec_template_metadata_labels = value {

value := input.review.object.spec.template.metadata.labels

}

deployment_error = value {

value := "DEPLOYMENT_INVALID"

}

deployment_containers = value {

value := input.review.object.spec.template.spec.containers

}

deployment_role = value {

value := input.review.object.spec.template.metadata.annotations["iam.amazonaws.com/role"]

}

review_id = value {

value := sprintf("%v/%v/%v", [

review_namespace,

review_name,

review_kind

])

}

review_name = value {

value := input.review.object.metadata.name

}

else = value {

value := "NOT_FOUND"

}

review_namespace = value {

value := input.review.object.metadata.namespace

}

else = value {

value := "NOT_FOUND"

}

review_kind = value {

value := input.review.kind.kind

}

else = value {

value := "NOT_FOUND"

}

rego: |

package k8sdepregistry

import data.lib.k8s.helpers as helpers

violation[{"msg": msg, "details": {}}] {

helpers.review_operation = input.parameters.allowedOps[_]

image = helpers.deployment_containers[_].image

not reg_matches_any(image,input.parameters.allowedRegistries)

msg = sprintf("%v: %v image is not sourced from an authorized registry. Resource ID (ns/name/kind): %v", [input.parameters.errMsg,image,helpers.review_id])

}

reg_matches_any(str, patterns) {

reg_matches(str, patterns[_])

}

reg_matches(str, pattern) {

contains(str, pattern)

}The preceding constraint template specifies the allowedOps, allowedRegistries, and errMsg properties that are supplied as arguments in constraints that use this constraint template. The following constraint supplies the arguments that satisfy the constraint template configuration. The constraint also specifies to which Kubernetes resource the constraint will be applied, and within which namespace(s) the constraint applies.

apiVersion: constraints.gatekeeper.sh/v1beta1

kind: K8sDepRegistry

metadata:

name: deployment-allowed-registry

spec:

match:

kinds:

- apiGroups: ["*"]

kinds: ["Deployment"]

namespaces:

- "opa-test"

parameters:

allowedOps: ["CREATE","UPDATE"]

allowedRegistries: ["GOOD_REGISTRY","VERY_GOOD_REGISTRY"]

errMsg: "INVALID_DEPLOYMENT_REGISTRY"Constraints instantiate constraint templates and multiple constraints can use a single constraint template. That relationship, coupled with the reusable and extensible policy libraries, can reduce the amount of Rego that needs to be managed, while giving policy managers a coarse-grained interface with which to implement controls and countermeasures.

The Gatekeeper community has created a separate project to manage Gatekeeper policies: https://github.com/open-policy-agent/gatekeeper-library. At a high level, this library is divided into general Kubernetes policies, and policies that are designed to replace Pod Security Policies (PSP), that are currently due to be removed from Kubernetes in version 1.22.

As the Kubernetes community prepares to deprecate Pod Security Policies (PSP), it’s important to realize that PaC solutions can complement as well as replace PSP. In fact, PaC and PSP are not mutually-exclusive. So, current users of PSP can layer PaC solutions with PSP right now, as a defense-in-depth approach, that can improve the cluster user experience. And, when PSP are removed from the cluster via Kubernetes upgrades, the PaC solution will remain, to handle the task once meant for PSP. More information on the potential to use Gatekeeper as a PSP replacement can be seen in the following blog post: https://aws.amazon.com/blogs/containers/using-gatekeeper-as-a-drop-in-pod-security-policy-replacement-in-amazon-eks/ .

As evidenced in this tweet by Jim Bugwadia, founder of Nirmata (the creators of Kyverno), Kyverno (covered in Part 2 of this series) policies are also able to replace PSP: https://twitter.com/jimbugwadia/status/1225342874100781057

Policy authoring – MagTape

Like OPA and Gatekeeper, MagTape, an open-source project from T-Mobile, uses Rego for it’s policies. However, MagTape has taken a somewhat different route. MagTape wraps and extends OPA to include business workflow and logic as well as notification layers that integrate into Slack via webhooks. MagTape operates between the Kubernetes API server and the OPA service; moreover, the MagTape init container handles initializing the OPA services.

package kubernetes.admission.policy_allowed_registry

policy_metadata = {

# Set MagTape Policy Info

"name": "policy-allowed-registry",

"severity": "HIGH",

"errcode": "MTxxxx",

"targets": {"Deployment", "StatefulSet", "DaemonSet", "Pod"},

}

servicetype = input.request.kind.kind

matches {

# Verify request object type matches targets

policy_metadata.targets[servicetype]

}

deny[info] {

# Find container spec #find_containers(servicetype, policy_metadata)

containers := find_containers(servicetype, policy_metadata)

# Check for allowed registry

container := containers[_]

name := container.name

image := container.image

not reg_matches_any(image,valid_deployment_registries_v2)

# Build message to return

msg := sprintf("[FAIL] %v - \"%v\" image, for \"%v\" container, is not sourced from an allowed registry. (%v)", [policy_metadata.severity, image, name, policy_metadata.errcode])

info := {

"name": policy_metadata.name,

"severity": policy_metadata.severity,

"errcode": policy_metadata.errcode,

"msg": msg,

}

}

# find_containers accepts a value (k8s object type) and returns the container spec

find_containers(type, metadata) = input.request.object.spec.containers {

type == "Pod"

}

else = input.request.object.spec.template.spec.containers {

metadata.targets[type]

}

valid_deployment_registries_v2 = {registry |

allowed = "GOOD_REGISTRY"

registries = split(allowed, ",")

registry = registries[_]

}

reg_matches_any(str, patterns) {

reg_matches(str, patterns[_])

}

reg_matches(str, pattern) {

contains(str, pattern)

}In the preceding Rego policy to validate allowed container registries, policy metadata is used to set the severity of the policy, as well as the business-specific error code, and the targets that this policy will validate. The policy includes logic to find the container spec in the following resources that create pods: Deployment, StatefulSet, DaemonSet, and Pod. This is handy when you want to write policies that work with multiple resource types. The following error message returned from the API server includes the error code from the policy metadata.

Error from server: error when creating "../../classic/tests/11-dep-reg-allow.yaml":

admission webhook "magtape.webhook.k8s.t-mobile.com" denied the request:

[FAIL] HIGH - "BAD_REGISTRY/read-only-container:v0.0.1" image, for "bad-test"

container, is not sourced from an allowed registry. (MTxxxx)The MagTape logs show log data captured from the interaction between MagTape and OPA.

[2021-02-10 21:48:45,125] INFO in magtape: ##################################################################

[2021-02-10 21:48:45,125] INFO in magtape: Deny Level: LOW

[2021-02-10 21:48:45,125] INFO in magtape: Processing Deployment: opa-test/test

[2021-02-10 21:48:45,125] INFO in magtape: Request User: kubernetes-admin

[2021-02-10 21:48:45,134] INFO in magtape: Call to OPA was successful

[2021-02-10 21:48:45,135] INFO in magtape: [FAIL] HIGH - "BAD_REGISTRY/read-only-container:v0.0.1" image

[2021-02-10 21:48:45,135] INFO in magtape: for "bad-test" container

[2021-02-10 21:48:45,136] INFO in magtape: is not sourced from an allowed registry. (MTxxxx)

[2021-02-10 21:48:45,136] INFO in magtape: K8s Event are enabled

[2021-02-10 21:48:45,158] INFO in magtape: Slack alerts are NOT enabled

[2021-02-10 21:48:45,158] INFO in magtape: Sending Response to K8s API ServerMagTape is installed via YAML or helm, and uses a Deny Level setting that that can be configured to adjust how policy severities are handled by the policy engine. The Deny Levels and their meanings can be seen in the following example. Users can think of this setting as a “volume-knob“ that can be adjusted to control the volume of violations that are blocked by MagTape.

- OFF: No severity violations are blocked

- LOW: Only high severity violations are blocked

- MEDIUM: High and medium severity violations are blocked

- HIGH: High, medium, and low severity violations are blocked

According to the MagTape project:

This configuration provides flexibility around controlling which checks should result in a “deny” and allows for a progressive approach as the platform and its users mature.

MagTape offers a default and advanced configuration. The webhook configuration specifies that namespaces can be opted-in to validation via the label-scheme:

namespaceSelector:

matchLabels:

k8s.t-mobile.com/magtape: enabledAll of the solutions include features to exempt/include resources.

Webhook failure modes (fail open vs. fail closed)

Services that integrate into the Kubernetes API server as validating admission controllers can be configured to fail open or closed. In the fail open scenario, if the API server webhook call to the validation service times-out (default 10 secs), changes from the respective API server request are permitted to proceed to etcd. Fail open is used so that clusters are not rendered inoperable when the connected validation services are unavailable. OPA and Gatekeeper are set up, at least currently, to fail open. By default, the MagTape solution is set to fail closed, and runs multiple pods to service the calls from the API server.

Failure modes for these solutions are set up in the respective validating webhook configuration resources using the failurePolicy. The following setting is for a fail closed scenario.

failurePolicy: FailAuditing violations

Due the prevalence of the fail-open scenario, and the strong desire to not “brick” a cluster (or fleet thereof), solutions have emerged, both open-source and commercial, that provide auditing and background scanning features. These solutions are designed to be compensating controls for the case when preventative validation is not possible and reactive detection is required.

On the open-source side, Gatekeeper offers audit functionality that is described in the following document: https://open-policy-agent.github.io/gatekeeper/website/docs/audit. According to the documentation:

The audit functionality enables periodic evaluations of replicated resources against the policies enforced in the cluster to detect pre-existing misconfigurations. Audit results are stored as violations listed in the status field of the failed constraint.

On the commercial side, the creators of OPA offer the Styra DAS (Declarative Authorization Service) product. This solution can be found in the AWS Marketplace, and offers multiple levels of consumption:

- Styra DAS Enterprise

- Styra DAS Pro

- Styra DAS Free

According to the Styra DAS product documentation:

Styra DAS provides actionable, graphical views of all admission control policy decisions/mutations, as well as any compliance violations. Dashboards give immediate insights to Security and DevOps teams, and data can be sent to external monitoring systems like Prometheus or SIEM tools.

Extensibility – programming and data

The PaC solutions (OPA, Gatekeeper, MapTape) discussed in this post are open-source solutions, primarily written in Golang and Python. Given those characteristics, all of these solutions can be extended, at a minimum, via their respective policy syntax, or by contributing to the OSS projects. Beyond that, OPA offers direct programming extensibility without having to contribute to, or modify, the OSS project (https://www.openpolicyagent.org/docs/latest/extensions/).

These solutions can be extended via external data as well. OPA supports several ways to use external data, beyond that available in the Kubernetes API server request payload: https://www.openpolicyagent.org/docs/latest/external-data/ .

Kubernetes policy architecture and direction

The future of policy architecture for Kubernetes is being formed in the Kubernetes Policy Working Group. According to the description of the group, their purpose is to:

Provide an overall architecture that describes both the current policy related implementations as well as future policy related proposals in Kubernetes. Through a collaborative method, we want to present both dev and end user a universal view of policy architecture in Kubernetes.

Creators and sustainers of some of the solutions discussed in this post, as well as other interested parties, are engaged in this group. What comes from this group will drive the future directions for policy-as-code solutions. There are several initiatives being considered and underway that might interest users and decision-makers, and help cluster admins and security professionals. Two of those initiatives are:

- Report integration to existing security tools like Falco and kube-bench

- Report integration to standards, like NIST Open Security Controls Assessment Language (OSCAL)

Shifting policy enforcement to the left

In the context of DevOps, “shifting left” means that steps or stages that normally take place later in automated CICD pipelines, or even within the Systems Development Life Cycle (SDLC), are moved to earlier steps or stages in the process. The premise is based on the value proposition that it is faster, cheaper, and less complex to identify and remediate issues earlier in the process. Shifting issues identification and remediation to the left can also reduce impact on downstream systems and stakeholders.

Not withstanding the mature integration between Kubernetes and PaC solutions, shifting data mutation and validation decisions to the left is a foundational characteristic of DevOps. To that end, the ability to evaluate policies and structured data in automated DevOps pipelines, or even at the developer desktop, should be considered when evaluating PaC solutions.

For OPA users, there are several OPA commands that work well in DevOps pipelines and developer desktops. These commands can be used alone, or as orchestrated steps to build automated validation solutions:

- The opa check command checks Rego files for parse and compilation errors

- The opa deps command analyzes Rego files for dependencies

- The opa test command is used to unit test Rego policies

- The opa eval command is used in a “one-shot” mode to apply policies to evaluate structured data

- The opa parse –format json command parses a Rego policy into a JSON formatted Abstract Syntax Tree (AST) that can be used to build Rego linting capabilities

A following example Docker command runs OPA eval using containers.

docker run -v $(CURDIR):/input -v $(CURDIR):/data -it --rm --name $(NAME) \

$(OPA_IMAGE) eval -i input/$(OPA_INPUT) -d data/$(OPA_DATA) $(OPA_RULE)With the OPA eval command, pipeline steps can evaluate data with policies, before that data is introduced into downstream systems. Using containers in CICD steps also reduces the overall administrative load and complexity of managing multiple and disparate binaries on common CICD servers, and increases the fidelity between developer activities and steps executed within automated CICD pipelines.

Conftest is another popular solution for Rego users that fits well the DevOps pipeline use case. The following example executes an evaluation on structured data, in this case a Kubernetes Deployment manifest, using Rego and the Conftest container.

docker run --rm -v jimmyray/conftest:/project instrumenta/conftest:v0.21.0 test "configs/opa-test-reg.yaml"

FAIL - configs/opa-test-reg.yaml - invalid deployment: allowed registry not found., namespace="opa-test", name="simple-server", registry="this.wont.work/simple-server:1.0.0"

1 test, 0 passed, 0 warnings, 1 failure, 0 exceptionsBeing able to reuse these Kubernetes PaC solutions in DevOps pipelines enables organizations to empower their developers to fail, react, and succeed fast, with minimal impact to operating environments. DevOps usage of these PaC solutions can validate both structured data and the policies used for evaluation. The capability to test and validate actual policies before they are used to evaluate data enables organizations to correctly apply traceability and provenance (which is important to audit requirements) to data-validation methods used in their processes.

Policy decisions made early in the software lifecycle or container supply-chain have the following effects:

- Save both time and resources

- Prevent unwanted behaviors and changes

- Reduce attack surfaces and blast radii

- Minimize impacts to systems and environments

Making the right choice for your use case and organization

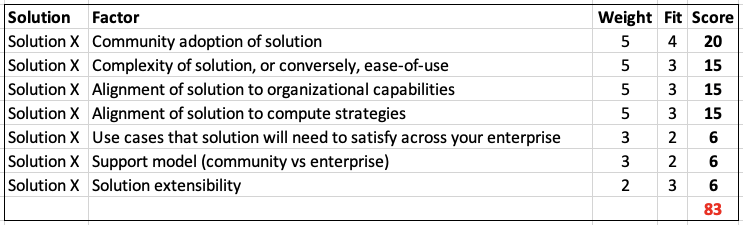

Choosing the right policy engine requires that you consider multiple factors. Some of those factors include:

- Community adoption of solution

- Complexity of solution, or conversely, ease-of-use

- Alignment of solution to organizational capabilities

- Alignment of solution to compute strategies

- Use cases that solution will need to satisfy across your enterprise

- Support model (community vs enterprise)

- Solution extensibility

- Integration to existing tooling

Given the preceding factors, organizations should decide on the level of importance (weight) each factor carries within their respective organizations, as well as the level of fit each solution provides for those weighted factors. Organizations may also need to add organization-specific factors not listed. A simple and effective approach towards a data-driven decision is to use a spreadsheet or scorecard, fed by data from solution testing and evaluation.

The sample scorecard illustrates how organizations could choose the right solution for their needs. The weight and fit values are multiplied for each Solution-Factor combination, and those products are summed for an overall solution score. It’s important to understand that, for a data-driven outcome, the “fit” column should be based on criteria determined by the organization, and satisfaction of said criteria, by the solution under evaluation, demonstrated in testing and/or proofs-of-concept. During the evaluation of these solutions, the open-source communities for each project are valuable resources to gain insights about potential use cases, and to help organizations learn more about the individual solutions.

Summary

As more organizations move to containers as a means of delivering applications and solutions, these same organizations must keep up with the never-ending and ever-changing demand on security, compliance, privacy, and best practices. There is no such thing as “secure enough“ or ”good enough.” Application strategies that deliver fast-paced solutions and innovation can use policy-as-code solutions for more secure outcomes and enforcement of best practices.

Policy-as-code solutions provide automated guardrails that both enable users as well as prevent unwanted behaviors. While not a one-way door, choosing the right policy-as-code solution is not a trivial task. Organizations must consider several factors when making the right choice. Making data-driven decisions based on the outcome of testing and proofs-of-concept is a sound approach. Regardless of selected solution, policy-as-code is emerging as a foundational component to DevOps and defense-in-depth strategies. Make sure to check out part 2 of this series.

The OPA and Gatekeeper policies shared in this post are available from the EKS Best Practices Guides GitHub companion repository: https://github.com/aws/aws-eks-best-practices/tree/master/policies