AWS for Industries

How Telefonica Germany achieved a centralized tracing solution with VPC Traffic Mirroring

Background

Critical infrastructure in telecommunications includes networks, systems, and services that are essential for the functioning of a society, economy, and national security. Mobile networks belonging to Communications Service Providers (CSPs) fall into this category, and it is essential to have real-time insights to monitor network performance and proactively address any potential service degradation. This visibility enables operational efficiency and helps minimize or prevent unplanned downtime and revenue loss. One approach CSPs use today to get real-time network KPIs is network probing, also known as network tracing. Tracing involves passive or active monitoring of network traffic quality, supported by user experience and service performance information. Through tracing, CSPs can monitor network KPIs and gather insights that enable data-driven planning and resource allocation. This blog post covers the concepts of network tracing and how it can be implemented across a complex, distributed environment where different teams run applications in separate AWS accounts, using an Amazon Virtual Private Cloud (VPC) feature called Amazon VPC Traffic Mirroring.

Tracing concepts

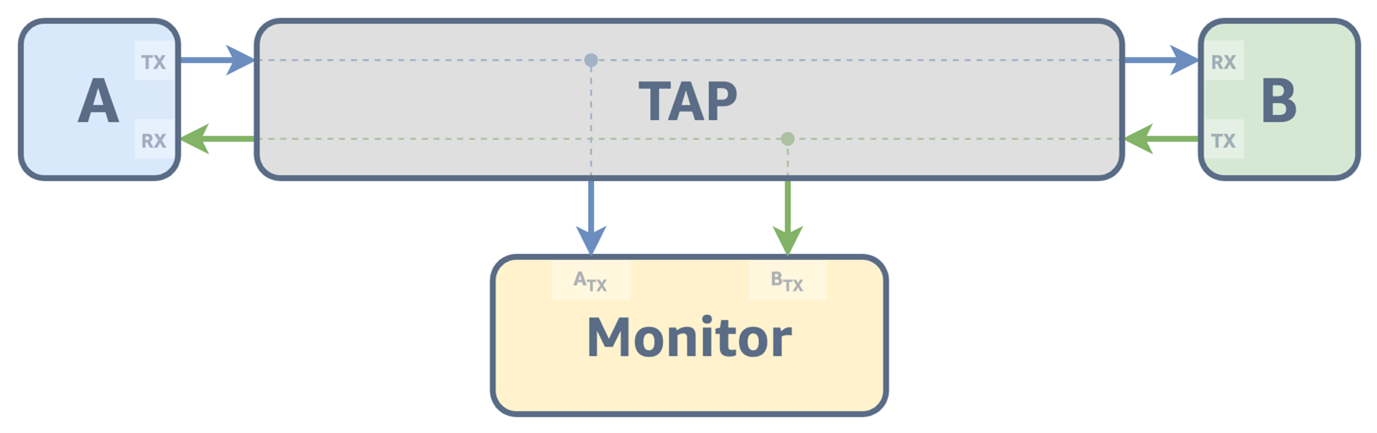

In traditional networks, tracing and network observability are typically achieved by installing Test Access Points (TAPs) on the links or interfaces to be observed. These TAPs are physical devices that enable monitoring of network traffic without interfering with the normal flow of data, that is, passive monitoring. These devices operate as traffic splitters, featuring at least three ports: an input port connected to the source (A), an output port connected to the destination (B), and a monitor port. The monitor port connects to other monitoring systems such as data aggregators, network analyzers, or packet capture tools.

Figure 1: Tapping High Level Architecture

As networks evolved from traditional bare-metal servers to virtualized environments and began adopting Software-Defined Networking (SDN) architectures, the methods used by Operations and Business Support Systems (OSS/BSS) for traffic monitoring also evolved. Alternatives such as configuring port mirroring, enabling traffic mirroring through SDN controllers, or leveraging built-in capabilities in network devices and applications (i.e., embedded virtual TAPs) have gained traction.

As network transformation continues to accelerate, CSPs are adopting diverse deployment strategies, such as hybrid cloud architectures, integrating on-premises infrastructure with public cloud services. Cloud-native solutions have become essential to meet evolving operational demands. In this context, VPC Traffic Mirroring emerges as a powerful option for delivering end-to-end visibility into network traffic by effectively bridging the gap between traditional monitoring approaches and the dynamic, scalable requirements of modern cloud infrastructures.

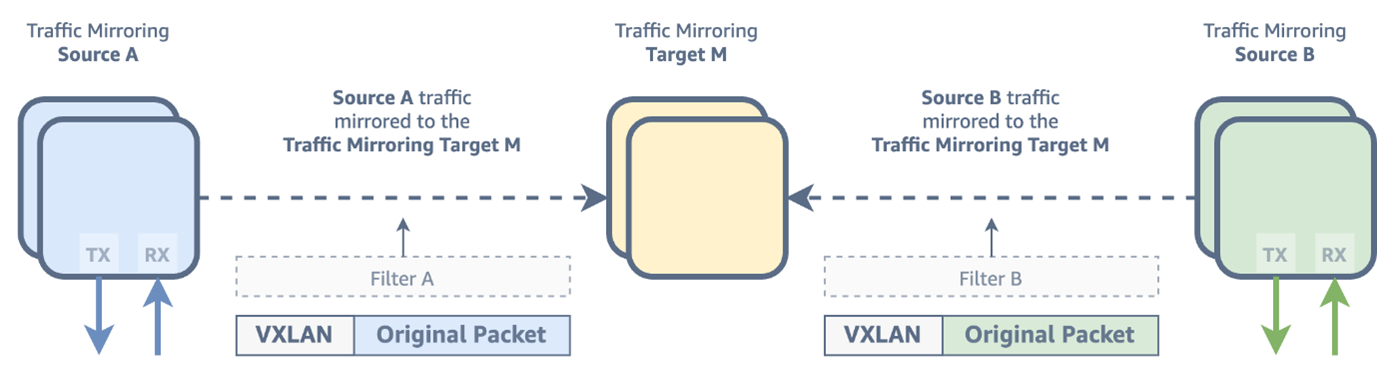

VPC Traffic Mirroring is an Amazon VPC feature that allows you to replicate network traffic from an Elastic Network Interface (ENI) and forward it to a monitoring or analysis target. Common use cases include threat detection, content inspection, troubleshooting, and advanced monitoring of network behavior. It supports filtering and packet truncation to selectively mirror specific traffic types. The target can be an ENI attached to an Elastic Compute Cloud (EC2) instance running a virtual appliance, a Network Load Balancer (NLB), or a Gateway Load Balancer (GWLB) endpoint in front of a fleet of EC2 instances. In short, VPC Traffic Mirroring is made up of the following concepts:

- Source – The ENI to monitor or get the traffic from

- Filter – A set of rules that defines the traffic that is mirrored

- Target – The destination ENI for mirrored traffic

- Session – An established relationship between a source, a filter, and a target

Figure 2: VPC Traffic Mirroring Concept – Copying network traffic from source elastic network interfaces and encapsulating it in VXLAN headers for transmission
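To make the VXLAN encapsulation in Figure 2 more concrete, the following is a minimal sketch of how a probe might strip the VXLAN header from a mirrored packet. It assumes the standard 8-byte VXLAN header layout from RFC 7348 and that the probe has already read the UDP payload (VXLAN uses UDP port 4789). The names `MirroredPacket` and `decapsulate_vxlan` are hypothetical and not part of any AWS SDK.

```python
import struct
from typing import NamedTuple

VXLAN_UDP_PORT = 4789   # standard VXLAN UDP port (RFC 7348)
VXLAN_HEADER_LEN = 8    # flags (1) + reserved (3) + VNI (3) + reserved (1)
VNI_VALID_FLAG = 0x08   # "I" bit: the VNI field carries a valid value


class MirroredPacket(NamedTuple):
    vni: int            # VXLAN network identifier of the mirror session
    inner_frame: bytes  # encapsulated frame copied from the source ENI


def decapsulate_vxlan(udp_payload: bytes) -> MirroredPacket:
    """Split a VXLAN UDP payload into its VNI and the inner frame."""
    if len(udp_payload) < VXLAN_HEADER_LEN:
        raise ValueError("payload shorter than a VXLAN header")
    # Two big-endian 32-bit words: flags + reserved, then VNI + reserved.
    flags_word, vni_word = struct.unpack("!II", udp_payload[:VXLAN_HEADER_LEN])
    flags = flags_word >> 24
    if not flags & VNI_VALID_FLAG:
        raise ValueError("VXLAN 'I' flag not set, VNI is not valid")
    vni = vni_word >> 8  # the upper 24 bits of the second word hold the VNI
    return MirroredPacket(vni, udp_payload[VXLAN_HEADER_LEN:])
```

A probe could use the returned VNI to tell mirror sessions apart before handing the inner frame to its analysis pipeline.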

Challenges

As cloud-native architectures become the norm, new monitoring challenges emerge. Modern cloud environments are built on tightly interconnected services, with Telecommunications Network Functions (NFs) implemented as microservices — often containerized, inherently dynamic, short-lived, and constantly evolving. Tracing mechanisms must now dynamically identify traffic sources and destinations, efficiently manage large volumes of data, support rapid horizontal scaling, and enforce end-to-end encryption to protect sensitive metadata.

Beyond the technical complexity of individual workloads, a robust tracing solution must integrate seamlessly within a scalable, secure, and well-architected multi-account AWS environment. AWS customers achieve scale by implementing one or more landing zones, a pattern that CSPs also follow for multiple network workloads. Accordingly, the tracing solution must be capable of scaling across all network workloads deployed within an AWS landing zone.

To address these challenges, Telefónica Germany developed a centralized tracing solution leveraging Amazon VPC Traffic Mirroring within a VPC architecture shared across internal network domains. This solution delivers dynamic discovery and mapping of tracing sources and destination probes, automated configuration and lifecycle management of mirroring sessions, and increased end-to-end network visibility.

Solution architecture and design concept

Traditionally, telco networks use distinct routing domains, implemented as virtual routing and forwarding (VRF) instances, to ensure traffic isolation. As Telefónica Germany embarked on its cloud journey, a key challenge was extending this segmentation model to the AWS Cloud. The solution was to establish a centrally governed infrastructure, where each routing domain (VRF) is represented by a dedicated Amazon VPC.

In this model, the centrally managed VPCs share their subnets with application accounts through AWS Resource Access Manager (AWS RAM), granting access to specific network segments. Applications “consume” these networks by creating ENIs and attaching them to Amazon EC2 instances. This capability to attach ENIs from different VPCs is called multi-VPC ENI attachment. It enables telecom operators to maintain VPC-level segregation between network domains, such as separating management, signaling, and user plane traffic, while allowing network functions that span multiple domains to communicate across them without additional overlay networking or routing constructs. This architecture is illustrated in Pattern 5 of the referenced blog post.
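As a rough illustration of this sharing model, the sketch below shows the two steps with boto3-style calls: the network account shares a subnet through AWS RAM, and the application account creates an ENI in that shared subnet and attaches it to an instance. The clients are passed in as parameters, so the helper names (`share_subnet`, `attach_eni_from_shared_subnet`) and all argument values are assumptions for illustration, not Telefónica Germany's implementation.

```python
from typing import Any, List


def share_subnet(ram: Any, subnet_arn: str, account_id: str, name: str) -> str:
    """Network account: share one subnet of a VRF VPC with an application account."""
    resp = ram.create_resource_share(
        name=name,
        resourceArns=[subnet_arn],
        principals=[account_id],
    )
    return resp["resourceShare"]["resourceShareArn"]


def attach_eni_from_shared_subnet(
    ec2: Any,
    subnet_id: str,
    security_group_ids: List[str],
    instance_id: str,
    device_index: int,
) -> str:
    """Application account: create an ENI in the shared subnet and attach it."""
    eni = ec2.create_network_interface(
        SubnetId=subnet_id,
        Groups=security_group_ids,
        Description="ENI in shared VRF subnet (illustrative)",
    )
    eni_id = eni["NetworkInterface"]["NetworkInterfaceId"]
    # The instance may live in a different VPC than the ENI (multi-VPC ENI attachment).
    ec2.attach_network_interface(
        NetworkInterfaceId=eni_id,
        InstanceId=instance_id,
        DeviceIndex=device_index,
    )
    return eni_id
```

In practice `ram` and `ec2` would be `boto3.client("ram")` and `boto3.client("ec2")` created with credentials for the respective accounts.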

Traffic mirroring design

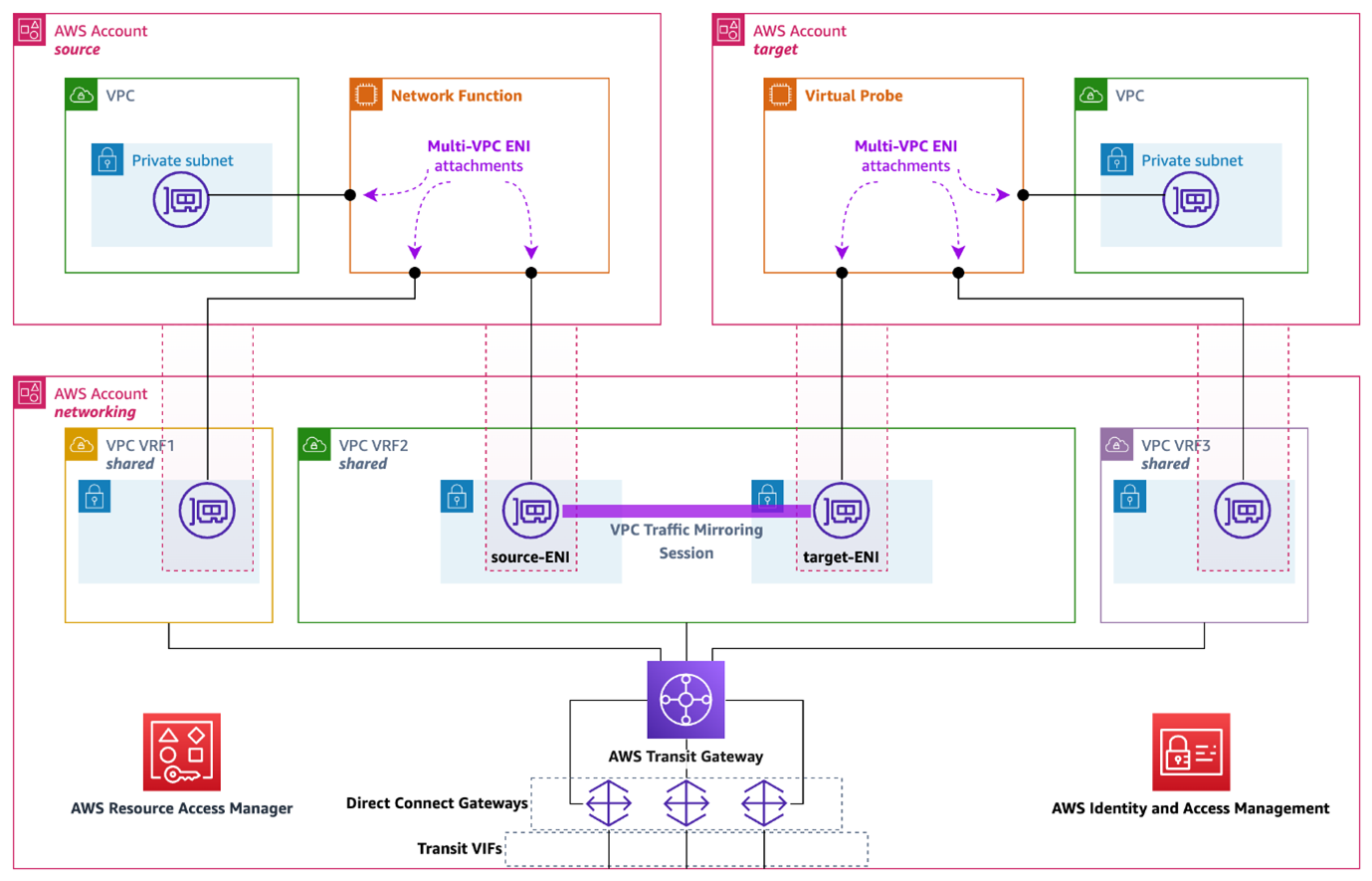

Telefónica Germany’s central tracing architecture consists of these main components:

- Application Accounts (source accounts) – An account where network workloads are deployed and where the mirroring sources are.

- Tracing Account (target account) – An account where network traffic is mirrored to, aggregated, and initially processed by virtual probes.

- VPC Traffic Mirror Session – An Amazon VPC feature that can be used to copy network packets from a source ENI to a target.

Figure 3: Centralized VPC Traffic Mirroring with Shared Networking Architecture. Application Accounts and Tracing Account

The architecture above illustrates a centralized VPC traffic mirroring design. Both the source and target accounts use subnets from a central network account’s VPC for mirroring traffic. This allows both source and target accounts to create and attach ENIs within the same network segment, represented by VPC VRF2. In this example, VPC VRF2 represents the signaling VRF used for carrying signaling traffic in a core network. The AWS account on the upper left of the architecture hosts an NF connected to multiple network segments, including the signaling VRF. On the upper right, a virtual probe has an ENI from a subnet that also belongs to the signaling VRF. As a result, the two accounts are connected over the same network, facilitated by the shared signaling VRF in the central network account.

From a VPC Traffic Mirroring perspective, a traffic mirroring session is created in VPC VRF2, where an ENI attached to the NF in the source account is paired with an ENI attached to a probe in the target account. Traffic filtering rules can be configured for both inbound and outbound directions to control which traffic is mirrored from the source ENI to the target ENI. This allows selective mirroring of specific types of traffic (for example, HTTPS on TCP port 443) while excluding others (such as SSH on TCP port 22). The VPC traffic mirroring (VTM) configuration and management is centralized in the networking account.
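The HTTPS and SSH example can be sketched as a set of filter rule definitions. The dictionaries below use the parameter names of the EC2 `create_traffic_mirror_filter_rule` API. The rule numbers, the open CIDR ranges, and the helper names `port_rule` and `https_not_ssh_rules` are assumptions chosen for illustration.

```python
from typing import Any, Dict, List

TCP = 6  # IANA protocol number for TCP


def port_rule(
    filter_id: str, direction: str, rule_number: int, action: str, port: int
) -> Dict[str, Any]:
    """Build keyword arguments for one create_traffic_mirror_filter_rule call."""
    return {
        "TrafficMirrorFilterId": filter_id,
        "TrafficDirection": direction,  # "ingress" or "egress"
        "RuleNumber": rule_number,      # lower numbers are evaluated first
        "RuleAction": action,           # "accept" or "reject"
        "Protocol": TCP,
        # Matches on destination port only. Return traffic (source port equal
        # to `port`) would need an additional rule using SourcePortRange.
        "DestinationPortRange": {"FromPort": port, "ToPort": port},
        "SourceCidrBlock": "0.0.0.0/0",
        "DestinationCidrBlock": "0.0.0.0/0",
    }


def https_not_ssh_rules(filter_id: str) -> List[Dict[str, Any]]:
    """Reject SSH (22) and accept HTTPS (443) in both directions."""
    rules = []
    for direction in ("ingress", "egress"):
        rules.append(port_rule(filter_id, direction, 100, "reject", 22))
        rules.append(port_rule(filter_id, direction, 200, "accept", 443))
    return rules
```

Each dictionary could then be passed to an EC2 client, for example `ec2.create_traffic_mirror_filter_rule(**rule)`, after the filter itself has been created.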

This concept scales to multiple NF sources across network segments and across one or more source accounts, all mirrored towards probes located in a single tracing account. This centralized design offers several advantages:

- Simplified networking: it eliminates the need for traffic routing via VPC Peering or Transit Gateway (TGW) between applications located across different AWS accounts.

- Consistent traffic boundaries: both network segment separation and application boundaries are maintained.

- Separation of concerns: traffic mirroring sources are controlled by the application teams via resource tags (details in next section), while networking teams manage VPC traffic mirroring sessions centrally.

- Consistent encryption: for supported Nitro instance types (such as C5n, C6a, C6gn, C6i), automatic in-transit encryption is maintained during direct ENI-to-ENI mirroring. More information on encryption can be found here.

Systems processing highly sensitive telecommunications data must meet strict security requirements, including end-to-end encryption across all data stages and robust key management controls. The AWS Nitro System addresses these needs through specialized hardware capabilities that perform encryption and decryption directly on the Nitro hardware using AEAD algorithms and 256-bit keys, ensuring confidentiality and integrity of data in-transit. Key material is generated and securely stored within the Nitro hardware boundary, with hardware-enforced controls preventing operator access. Newer Nitro-based EC2 instances also offer confidential computing with memory encryption enabled by default for data in-use protection. Beyond encryption, mirrored traffic storage must be secured with strict access controls and encryption at rest, as captured packets may contain sensitive credentials and tokens. Additionally, VPC mirroring capabilities are centrally governed at Telefónica Germany to prevent unauthorized cross-account traffic capture and ensure only legitimate monitoring activities occur. For more information about encryption in AWS, please refer to the Data Protection in Amazon EC2 documentation.

Solution implementation and workflows

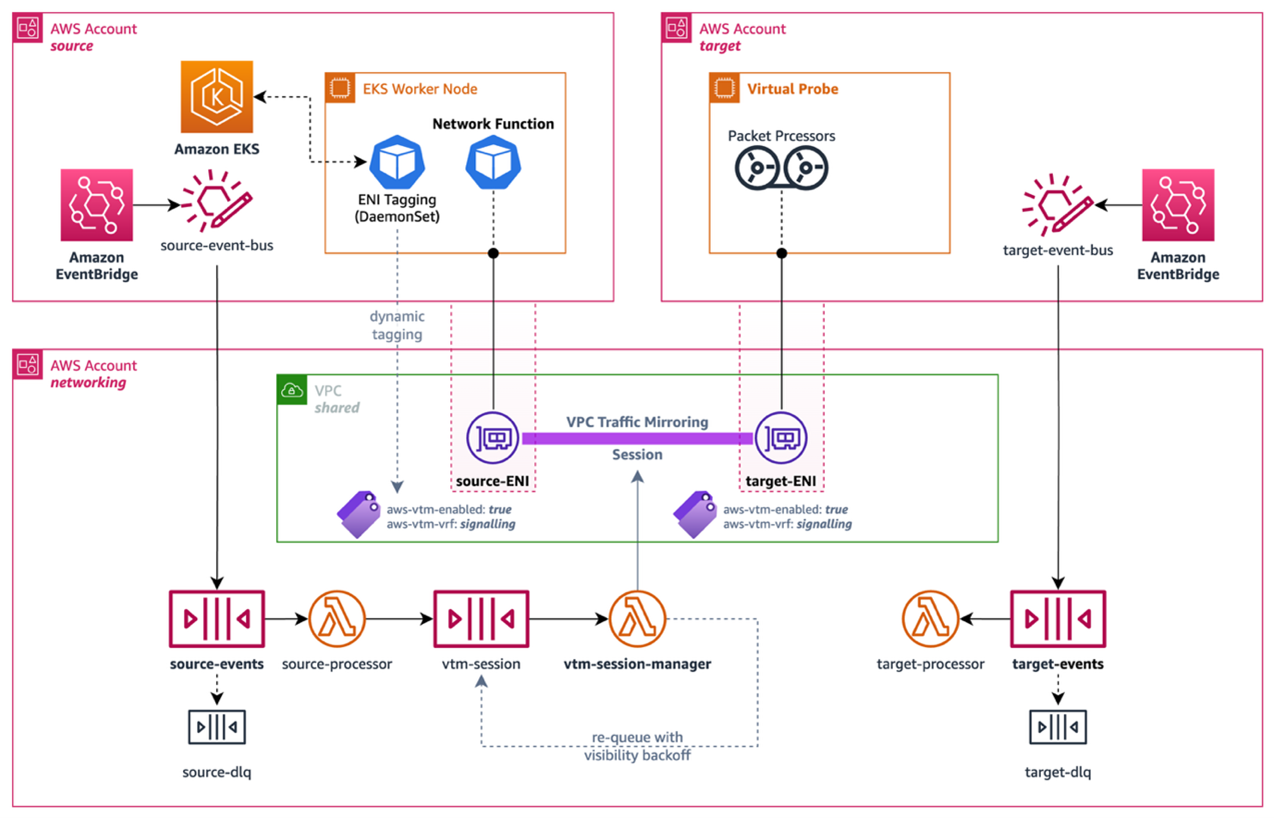

Due to the dynamic nature of the environment, where applications are deployed as pods on top of Amazon Elastic Kubernetes Service (EKS), and considering the separation of concerns between application, networking, and tracing teams, the solution leverages two complementary design principles: tag-based ENI management and an event-driven architecture. Together, these patterns enable a fully automated, scalable deployment of the tracing solution with minimal operational overhead.

Figure 4: VPC Traffic Mirroring Automation

Tag-Based ENI Management

In a dynamic EKS environment, pods are ephemeral: they are created, rescheduled, and terminated continuously. Rather than requiring the networking team to manually track which workloads need tracing, the solution uses AWS resource tags applied to Elastic Network Interfaces (ENIs). Application pods express their tracing intent through pod annotations. For example, a pod requiring traffic mirroring would include aws-vtm/enabled: "true" in its metadata. A lightweight DaemonSet deployed on all EKS worker nodes monitors pod lifecycle events and automatically updates the aws-vtm-enabled tag on the corresponding ENI. This decouples tracing configuration from the application deployment process, allowing each team to operate independently within its own domain:

- Application teams declare tracing intent via pod annotations.

- Networking teams manage VPC Traffic Mirroring sessions centrally, reacting to tag changes rather than application-specific logic.

- Tracing teams focus on probe management and traffic analysis, without needing visibility into individual workload deployments.
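The annotation-to-tag step performed by the DaemonSet could look roughly like the sketch below. It uses the annotation and tag keys named above and the EC2 `create_tags` call. How the DaemonSet resolves a pod to its ENI is not described in this post, so the ENI ID is simply a parameter here, and the function names are hypothetical.

```python
from typing import Any, Dict, Optional

ANNOTATION_KEY = "aws-vtm/enabled"  # pod annotation declared by application teams
TAG_KEY = "aws-vtm-enabled"         # ENI tag consumed by the networking account


def desired_tag_value(annotations: Optional[Dict[str, str]]) -> str:
    """Map a pod's annotations to the ENI tag value ("true" or "false")."""
    raw = (annotations or {}).get(ANNOTATION_KEY, "")
    return "true" if raw.strip().lower() == "true" else "false"


def sync_eni_tag(ec2: Any, eni_id: str, annotations: Optional[Dict[str, str]]) -> str:
    """Write the tracing intent of a pod onto its ENI as a resource tag."""
    value = desired_tag_value(annotations)
    ec2.create_tags(
        Resources=[eni_id],
        Tags=[{"Key": TAG_KEY, "Value": value}],
    )
    return value
```

Treating a missing or malformed annotation as "false" is a design assumption in this sketch: it keeps mirroring opt-in, so a workload is only traced when its team explicitly asks for it.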

Event-Driven Architecture

Tag-based management alone is not sufficient in a fast-moving environment, because changes must be acted upon in near real time. This is where the event-driven architecture comes in. AWS resources required for this solution are grouped into three logical pools to support a multi-account deployment:

- Source account(s): One or more AWS accounts where Network Functions (NFs) are deployed and source ENIs reside.

- Target account: One AWS account where virtual probes are deployed and target ENIs reside.

- Networking account: One AWS account where VTM sessions and filters are defined and centrally managed.

In both the source and target accounts, a custom Amazon EventBridge bus captures ENI lifecycle and tagging events, such as ENI attachment, detachment, or tag value changes, and forwards them to the networking account. The networking account hosts two Amazon SQS queues:

- source-events queue: Receives source ENI events (attachment, detachment, tagging). A dedicated AWS Lambda function processes these events to trigger VTM session creation or removal.

- target-events queue: Receives target ENI events. A dedicated Lambda function processes these to manage VTM target creation or removal.

When a source account event indicates that a new mirroring session should be created or removed, an additional event is emitted to a third queue (vtm-session). The associated Lambda function identifies the appropriate target ENI and either creates or tears down the corresponding VTM session.
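A minimal sketch of what the vtm-session function might do is shown below: pick a target that can still accept sessions, then call the EC2 `create_traffic_mirror_session` API. The selection strategy (least-loaded target), the `max_sessions` limit, and the function names are assumptions. The post does not describe how Telefónica Germany selects targets.

```python
from typing import Any, Dict, Optional


def pick_target(session_counts: Dict[str, int], max_sessions: int) -> Optional[str]:
    """Return the least-loaded traffic mirror target ID, or None if all are full.

    session_counts maps target ID -> number of sessions already using it.
    max_sessions is a hypothetical per-target capacity set by the operator.
    """
    candidates = {t: n for t, n in session_counts.items() if n < max_sessions}
    if not candidates:
        return None
    # Tie-break on the target ID so the choice is deterministic.
    return min(candidates, key=lambda t: (candidates[t], t))


def create_session(
    ec2: Any, source_eni_id: str, target_id: str, filter_id: str, session_number: int = 1
) -> str:
    """Create a mirror session pairing a source ENI with a target and a filter."""
    resp = ec2.create_traffic_mirror_session(
        NetworkInterfaceId=source_eni_id,
        TrafficMirrorTargetId=target_id,
        TrafficMirrorFilterId=filter_id,
        SessionNumber=session_number,
        Description="created by vtm-session handler (illustrative)",
    )
    return resp["TrafficMirrorSession"]["TrafficMirrorSessionId"]
```

If `pick_target` returns `None`, the handler would leave the event for a later retry instead of creating a session.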

Session Creation and Removal

A VPC Traffic Mirroring session is created when:

- An ENI in the source account is attached to an EC2 instance and tagged with aws-vtm-enabled: true, and a target ENI capable of accepting new sessions exists. If no target is available, the event is parked and retried later.

- An already-attached ENI is tagged with aws-vtm-enabled: true, or its tag value changes from false to true, and a suitable target ENI exists.

A VPC Traffic Mirroring session is removed when:

- An ENI in the source account is detached from its EC2 instance and was previously tagged with aws-vtm-enabled: true, and an active session for that ENI exists.

- The aws-vtm-enabled tag value for a source ENI changes from true to false, or the tag is deleted entirely.

This automated lifecycle management ensures that the tracing configuration always reflects the current state of the environment, without requiring manual intervention from the networking team.
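The creation and removal rules above can be restated as a single reconciliation function that compares the desired state of a source ENI with the current state. This is only a sketch of the logic, not the actual Lambda code. The `Action` names are hypothetical, and parking the event whenever no target is available (including for tag changes on already-attached ENIs) is an assumption that extends the retry behavior described in the first rule.

```python
from enum import Enum


class Action(Enum):
    CREATE = "create"  # create a new mirror session
    REMOVE = "remove"  # tear down the existing session
    PARK = "park"      # no target available: retry later
    NONE = "none"      # state already matches, nothing to do


def decide(
    attached: bool, tag_enabled: bool, session_exists: bool, target_available: bool
) -> Action:
    """Decide what to do for one source ENI.

    attached:         the ENI is attached to an EC2 instance
    tag_enabled:      the aws-vtm-enabled tag is present with value "true"
    session_exists:   an active mirror session for this ENI exists
    target_available: a target ENI can accept a new session
    """
    wants_session = attached and tag_enabled
    if wants_session and not session_exists:
        return Action.CREATE if target_available else Action.PARK
    if not wants_session and session_exists:
        return Action.REMOVE
    return Action.NONE
```

For example, `decide(attached=True, tag_enabled=True, session_exists=False, target_available=False)` yields `Action.PARK`, while detaching a mirrored ENI or deleting its tag yields `Action.REMOVE`.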

Conclusion

Telefónica Germany’s centralized network tracing implementation using Amazon VPC Traffic Mirroring demonstrates how telecommunications companies can successfully bridge traditional network monitoring with modern cloud-native architectures. This solution addresses the challenge of maintaining real-time network visibility across dynamic, multi-account AWS environments while preserving networking segmentation constructs and separation of concerns.

The benefits include:

- Operational Efficiency: Automated, event-driven VTM session management eliminates manual configuration overhead and reduces time-to-insight for network troubleshooting

- Scalability: The centralized architecture seamlessly scales across multiple network workloads and AWS accounts without requiring individual network implementations per application

- Security: End-to-end encryption through the AWS Nitro System and confidential computing ensures sensitive telecommunications data remains protected throughout the tracing pipeline

- Cost Optimization: Tag-based session management and dynamic ENI tagging minimize unnecessary traffic mirroring, reducing both network overhead and associated costs. Multi-VPC ENI attachments also reduce traffic costs.

This architecture establishes a foundation for network tracing in AWS, demonstrating how telecommunications companies can maintain operational excellence and security posture while improving reliability, performance efficiency and cost when embracing cloud-native technologies.