Artificial Intelligence

The importance of hyperparameter tuning for scaling deep learning training to multiple GPUs

Parallel processing with multiple GPUs is an important step in scaling the training of deep models. Each training iteration typically processes a small subset of the dataset, called a mini-batch. When a single GPU is available, that GPU processes the entire mini-batch in each iteration. When training with multiple GPUs, […]

Maximize training performance with Gluon data loader workers

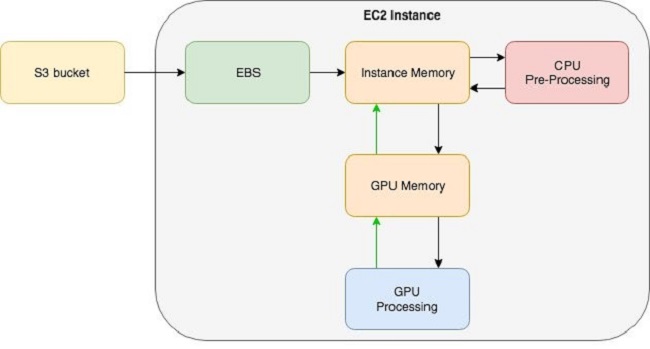

With recent advances in CPU and GPU technology, training many complex, state-of-the-art deep neural network models in a few hours is within reach. However, when you use a system with such high processing throughput potential, the data required by the processing pipeline must be ready before each iteration.