How FIS centralized 13,000 VPC endpoints to strengthen security and simplify operations

FIS is a global leader in financial technology, delivering modern banking and payments solutions to institutions worldwide. Its Total Issuer Solutions business represents one of the largest credit issuing and processing platforms globally, serving clients in more than 75 countries and processing over 40 billion transactions annually. The portfolio combines FIS’s scale, data richness and AI capabilities with decades of issuer expertise to provide end-to-end card issuing, fraud management, loyalty, and value-added services, enabling financial institutions to modernize operations and accelerate growth.

As FIS scaled their AWS infrastructure across multiple accounts and Regions, managing over 13,000 Amazon Virtual Private Cloud (Amazon VPC) endpoints distributed across hundreds of VPCs in seven AWS Regions became a significant operational and financial challenge.

The distributed approach to VPC endpoint management created three primary challenges. First, the cost impact was substantial: VPC endpoint usage represented a significant line item on their AWS bill. Second, each VPC endpoint consumed IPv4 RFC 1918 address space, and typical applications required more than 30 endpoints across multiple Availability Zones, which made address allocation a clear target for optimization at scale. Third, cross-Region API access forced traffic to traverse the public internet through forward proxies, introducing security and performance concerns.

FIS addressed these challenges using a hub-and-spoke architecture powered by AWS Transit Gateway, combined with the DNS management capabilities of Amazon Route 53 Profiles, a feature released in April 2024 that simplifies private hosted zone (PHZ) associations across multiple VPCs and accounts.

In this post, we walk through FIS’s implementation of a centralized VPC endpoint architecture that consolidated 13,000 endpoints into a shared services VPC in each Region—reducing costs and improving security. You learn how FIS designed a scalable endpoint services VPC, implemented Route 53 Profiles for cross-account DNS management, configured AWS Transit Gateway routing for cross-Region API access, and executed a phased migration strategy that minimized disruption to production workloads.

Solution Overview

FIS has used Amazon VPC endpoints as a standard practice since the beginning of their cloud journey. They built their VPC endpoint consolidation strategy on a hub-and-spoke network topology using Transit Gateways: each of the seven AWS Regions has a regional Transit Gateway, and the gateways are connected to one another with full mesh peering.

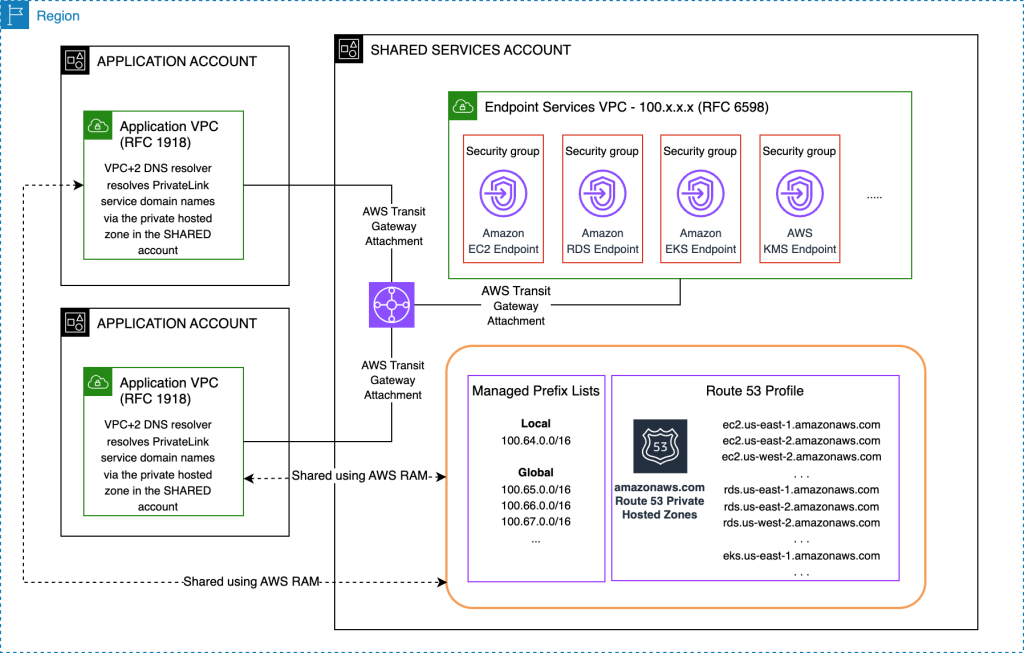

The following architecture diagram shows how they centralized the VPC endpoints. The diagram shows VPC endpoints, Route 53 Profiles and the Transit Gateway set up in the shared services account, and how the Route 53 Profiles are shared with the application accounts within the same Region.

Figure 1: Diagram showing all the resources in a single region

The architecture (Figure 1) has the following key components:

- The VPC endpoints are placed in an endpoint services VPC within the centralized shared services account. For illustration, the diagram shows only a few of the services that FIS uses.

- The endpoint services VPC uses the carrier-grade NAT range (RFC 6598) of 100.64.0.0/10. This avoids using addresses from the private IP address ranges (RFC 1918) that are in short supply.

- FIS created a Route 53 Profile that includes the Route 53 Private Hosted Zones for every Region, providing cross-Region access to VPC endpoints.

- FIS uses AWS Resource Access Manager (RAM) to share the regional Route 53 Profiles with application accounts.

- Each endpoint has a security group that permits traffic from all the application VPCs globally.

FIS replicates this setup in each of the seven Regions where they operate. In addition to the resources listed earlier, each Region has two managed prefix lists. A “local” list references the CIDR block of that Region’s endpoint services VPC, and a “global” list includes the CIDR blocks of the endpoint services VPCs in all other Regions. Application VPCs can therefore restrict access to in-Region endpoints only, or also allow access to cross-Region endpoints. Keeping the “local” list separate also reduces the number of rules in a security group when cross-Region connectivity is not needed.
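The address plan and the two prefix lists can be sketched in a few lines of Python. This is only an illustration, not FIS's Terraform code: the Region names and the /22 CIDR blocks below are hypothetical, chosen from within 100.64.0.0/10.

```python
import ipaddress

# RFC 6598 shared address space (carrier-grade NAT range).
RFC6598 = ipaddress.ip_network("100.64.0.0/10")

# Hypothetical endpoint services VPC CIDRs, one per Region (illustrative only).
ENDPOINT_VPC_CIDRS = {
    "us-east-1": "100.64.0.0/22",
    "us-west-2": "100.64.4.0/22",
    "eu-west-1": "100.64.8.0/22",
}


def check_in_rfc6598(cidrs):
    """Raise ValueError if any endpoint VPC CIDR falls outside 100.64.0.0/10."""
    for region, cidr in cidrs.items():
        if not ipaddress.ip_network(cidr).subnet_of(RFC6598):
            raise ValueError(f"{region}: {cidr} is not inside {RFC6598}")


def build_prefix_lists(region, cidrs):
    """Return the 'local' and 'global' prefix list entries for one Region.

    local  -> the CIDR of this Region's endpoint services VPC
    global -> the CIDRs of the endpoint services VPCs in all other Regions
    """
    return {
        "local": [cidrs[region]],
        "global": sorted(c for r, c in cidrs.items() if r != region),
    }


check_in_rfc6598(ENDPOINT_VPC_CIDRS)
lists_for_use1 = build_prefix_lists("us-east-1", ENDPOINT_VPC_CIDRS)
```

An application VPC that needs only in-Region access would reference just the `local` entries; one that also needs cross-Region access would reference both lists.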

All the resources in the architecture are deployed using Terraform, enabling consistent deployment across all seven AWS Regions through infrastructure-as-code practices.

Implementing Route 53 Profiles for DNS Management

Creating Route 53 private hosted zones (PHZs) for each AWS service endpoint across all Regions forms the foundation of this centralized VPC endpoints architecture. Instead of relying on the AWS-managed private hosted zones that are deployed automatically when you enable private DNS on VPC endpoints, FIS disabled this feature, which provides complete control over the DNS resolution strategy.

FIS created custom private hosted zones for services like ec2.us-east-1.amazonaws.com, rds.eu-west-1.amazonaws.com, and kms.us-west-2.amazonaws.com within the shared services account and associated them with the centralized endpoints VPC.

Each private hosted zone contains an A record at the zone apex that is an Alias record to the corresponding VPC endpoint in the centralized endpoint services VPC. This configuration ensures DNS queries for AWS service endpoints resolve to centralized infrastructure without requiring local VPC endpoints in each spoke VPC.
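As a rough sketch of what such a record looks like, the following Python builds the change batch that the Route 53 `ChangeResourceRecordSets` API accepts for an apex alias record. The endpoint DNS name and hosted zone ID are placeholders (assumptions for illustration); in practice they would come from the DNS entries of the centralized VPC endpoint.

```python
import json


def build_alias_change(zone_name, endpoint_dns_name, endpoint_hosted_zone_id):
    """Build a Route 53 change batch with an apex A record aliased to a VPC endpoint."""
    return {
        "Comment": f"Alias {zone_name} to centralized VPC endpoint",
        "Changes": [
            {
                "Action": "UPSERT",
                "ResourceRecordSet": {
                    "Name": zone_name,  # zone apex, e.g. kms.us-west-2.amazonaws.com
                    "Type": "A",
                    "AliasTarget": {
                        # Hosted zone ID and DNS name of the endpoint (placeholders here).
                        "HostedZoneId": endpoint_hosted_zone_id,
                        "DNSName": endpoint_dns_name,
                        "EvaluateTargetHealth": False,
                    },
                },
            }
        ],
    }


change_batch = build_alias_change(
    zone_name="kms.us-west-2.amazonaws.com",
    endpoint_dns_name="vpce-EXAMPLE.kms.us-west-2.vpce.amazonaws.com",  # hypothetical
    endpoint_hosted_zone_id="ZEXAMPLE",  # hypothetical
)
print(json.dumps(change_batch, indent=2))
```

The resulting dictionary could be passed as the `ChangeBatch` argument of an SDK call against the custom private hosted zone; FIS themselves deploy these resources with Terraform.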

Route 53 Profiles eliminate the need for individual zone associations across hundreds of VPCs. Previously, associating 30 private hosted zones with 200 VPCs required 6,000 individual associations (30 × 200). To establish centralized DNS governance, FIS created a Route 53 Profile in the shared services account and associated the endpoint private hosted zones of all Regions with it. Because Route 53 Profiles are regional resources, FIS created one such profile in each Region.
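The difference in bookkeeping can be made concrete with a small calculation. The sketch below uses the 30-zone, 200-VPC figures from the example above and assumes a simplified model in which each zone is associated once with the profile and each VPC is associated once with the profile.

```python
def direct_associations(zones, vpcs):
    """Every zone is associated with every VPC individually."""
    return zones * vpcs


def profile_associations(zones, vpcs):
    """Each zone is attached to the profile once; each VPC is attached to the profile once."""
    return zones + vpcs


without_profile = direct_associations(30, 200)   # 6,000 associations
with_profile = profile_associations(30, 200)     # 230 associations
```

Under this model, adding one more zone costs a single association with the profile rather than one association per VPC.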

FIS uses AWS RAM as the secure sharing mechanism for both the Route 53 Profile and the managed prefix lists. The Route 53 Profile is shared with all accounts in the organization using the AWS-managed permission AWSRAMPermissionRoute53ProfileAllowAssociation, which lets those accounts associate the profile with their VPCs but prevents them from modifying it. FIS is exploring extending the profile so that application teams can add their own zones to it.

Migration Strategy

As a large organization, FIS needed to ensure a smooth transition from local VPC endpoints to centralized VPC endpoints. This included allowing application teams to migrate at a time of their own choosing without forcing all of them to migrate simultaneously.

DNS resolution priority rules enabled this flexibility. When both AWS-managed zones from local VPC endpoints and Route 53 Profile zones exist for the same DNS name, local VPC-associated zones take precedence. This behavior supports phased migration where application teams associate the Route 53 Profile with their VPCs without immediate traffic disruption.
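The precedence rule can be pictured with a toy model. The function below is a deliberate simplification rather than a description of the Route 53 Resolver's full algorithm: it does exact-name lookups only, and the zone contents and IP addresses are hypothetical.

```python
def resolve(name, local_zones, profile_zones):
    """Toy model: zones associated directly with the VPC win over profile zones."""
    if name in local_zones:
        return ("local", local_zones[name])
    if name in profile_zones:
        return ("profile", profile_zones[name])
    return ("public", None)  # falls through to public DNS resolution


name = "ec2.us-east-1.amazonaws.com"

# Hypothetical addresses: a local endpoint in RFC 1918 space,
# and the centralized endpoint in RFC 6598 space.
local_zones = {name: "10.20.1.15"}
profile_zones = {name: "100.64.0.15"}

# Step 1: the profile is associated, but the local endpoint still exists.
before = resolve(name, local_zones, profile_zones)

# Step 2: the team removes the local endpoint and its AWS-managed zone.
del local_zones[name]
after = resolve(name, local_zones, profile_zones)
```

In this model, associating the profile changes nothing for the application until the local endpoint is removed, which is what makes the per-team cutover possible.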

Application teams then perform pre-migration validation using the commands FIS provided. This validation confirms that the profile association succeeded and that they can access AWS services through the new centralized VPC endpoint rather than the existing local VPC endpoint. To do this, teams use the AWS-provided DNS name of the centralized VPC endpoint rather than the service name defined on the Route 53 hosted zone. This approach validates both network routing through Transit Gateway and security group configurations.
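The commands FIS gave to application teams are not reproduced in this post. As a rough illustration of the DNS part of such a check, the following sketch resolves an endpoint-specific DNS name and confirms that every returned address lies inside the endpoint services VPC range. The hostname and CIDR are hypothetical, and a DNS answer alone does not prove reachability; a real check would also make an API call through the endpoint.

```python
import ipaddress
import socket


def resolve_ipv4(hostname):
    """Return the IPv4 addresses that the local resolver gives for hostname."""
    _, _, addresses = socket.gethostbyname_ex(hostname)
    return addresses


def lands_in_centralized_vpc(addresses, endpoint_vpc_cidr):
    """True if there is at least one address and all of them are in the endpoint VPC CIDR."""
    network = ipaddress.ip_network(endpoint_vpc_cidr)
    return bool(addresses) and all(
        ipaddress.ip_address(addr) in network for addr in addresses
    )


# Example usage (hypothetical endpoint DNS name and CIDR):
#   addrs = resolve_ipv4("vpce-EXAMPLE.ec2.us-east-1.vpce.amazonaws.com")
#   print(lands_in_centralized_vpc(addrs, "100.64.0.0/22"))
```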

For a large enterprise like FIS, structuring migrations in waves reduces risk and operational disruption. Application teams started with non-production environments and then moved to production workloads. They also received detailed runbooks, including rollback procedures and testing commands tailored to their service dependencies. Each successful migration immediately delivered cost savings and freed valuable RFC 1918 IP address space while maintaining operational stability throughout the transition.

Benefits (Cost savings, IP address recovery, and improved security)

By consolidating 13,000 distributed endpoints into a shared services model, FIS eliminated redundant endpoint provisioning across hundreds of VPCs while maintaining service access levels. The cost reduction strategy focused on identifying commonly used AWS services across the infrastructure and provisioning centralized endpoints only for high-utilization services.

The solution also reclaimed valuable IPv4 RFC 1918 address space. Each VPC endpoint consumes one IP address per Availability Zone, and typical applications require more than 30 endpoints, so local endpoints consumed a substantial amount of address space. The implementation uses RFC 6598 carrier-grade NAT address space for the centralized endpoint services VPC, preserving RFC 1918 ranges for application workloads while remaining fully routable within AWS and over on-premises connectivity.
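A back-of-the-envelope calculation shows how quickly these addresses add up. The figure of 30 endpoints comes from the text above; the three Availability Zones and the 200 VPCs are assumptions for illustration, not numbers reported by FIS.

```python
def addresses_used(endpoints_per_vpc, azs_per_vpc, vpcs=1):
    """Each endpoint consumes one IP address per Availability Zone it is deployed in."""
    return endpoints_per_vpc * azs_per_vpc * vpcs


per_vpc = addresses_used(30, 3)            # addresses in one application VPC
fleet_wide = addresses_used(30, 3, 200)    # across a hypothetical fleet of 200 VPCs
```

With these assumptions, one application VPC spends 90 RFC 1918 addresses on endpoints alone, and 200 such VPCs spend 18,000. Moving the endpoints into a VPC addressed from 100.64.0.0/10 returns those addresses to application workloads.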

The centralized architecture eliminated cross-Region API access traversing the public internet through forward proxies. Full-mesh Transit Gateway peering between all seven AWS Regions, combined with Route 53 Profiles containing regional endpoint zones, enables secure cross-Region API access entirely over the AWS backbone. This approach reduces security exposure while improving network performance and reliability.

Route 53 Profiles replace the previous zone association model that would have required thousands of individual associations across multi-account, multi-Region infrastructure. Future development involves extending profile capabilities to allow application teams to associate their own private hosted zones, creating unified DNS views across all VPCs. Custom-managed permissions through AWS RAM will replace current AWS-managed permissions, enabling application teams to contribute zones to the centralized profile while maintaining governance controls.

Challenges and Lessons Learned

Implementing this centralized VPC endpoint architecture at enterprise scale presented several technical and organizational challenges that yielded valuable lessons:

Security Group Rule Optimization Challenge: Managing security group rules across thousands of endpoints required careful design to avoid hitting AWS service limits. FIS addressed this by implementing a dual prefix list strategy: local prefix lists for same-Region traffic, and global prefix lists only when cross-Region access was essential. This approach maintains security boundaries while keeping security groups below the maximum number of rules, showing that thoughtful network segmentation can address security and scalability challenges together.
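The effect of the two lists on the rule budget can be estimated with a short sketch. It relies on the documented behavior that a security group rule referencing a prefix list counts as that list's maximum number of entries toward the rules-per-security-group quota. The quota of 60 is the default per direction and is adjustable, so check the value for your account; the list sizes below are hypothetical.

```python
DEFAULT_RULES_PER_DIRECTION = 60  # default quota; adjustable, verify for your account


def rule_slots_used(prefix_list_max_entries):
    """Sum the max-entries setting of every prefix list referenced by one rule each."""
    return sum(prefix_list_max_entries)


def fits_quota(prefix_list_max_entries, other_rules=0, quota=DEFAULT_RULES_PER_DIRECTION):
    """True if the prefix list references plus other rules stay within the quota."""
    return rule_slots_used(prefix_list_max_entries) + other_rules <= quota


# Hypothetical sizes: the local list holds 1 CIDR, the global list holds
# the CIDRs of the other six Regions.
LOCAL_MAX_ENTRIES = 1
GLOBAL_MAX_ENTRIES = 6

in_region_only = rule_slots_used([LOCAL_MAX_ENTRIES])
with_cross_region = rule_slots_used([LOCAL_MAX_ENTRIES, GLOBAL_MAX_ENTRIES])
```

A security group that needs only in-Region access spends one slot in this example, compared with seven when the global list is also referenced, which leaves more headroom for application-specific rules.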

Large-Scale Migration Complexity: Coordinating the migration of hundreds of application teams from local to centralized VPC endpoints without service disruption required innovative DNS management. The key lesson learned was leveraging Route 53’s DNS resolution priority rules, where local VPC-associated zones take precedence over Route 53 Profile zones. This enabled phased migrations where teams could associate the Route 53 Profile with their VPCs without immediate traffic impact, then validate connectivity before removing local endpoints.

Organizational Change Management: Perhaps the most valuable lesson was that both technical excellence and organizational enablement are essential for enterprise transformations. FIS succeeded by providing detailed runbooks with rollback procedures and testing commands, allowing application teams to migrate at their own pace. This approach reduced operational risk while maintaining team autonomy throughout the transition process.

Conclusion

In this post, you learned how FIS centralized 13,000 VPC endpoints across multiple AWS Regions using a hub-and-spoke architecture powered by Route 53 Profiles, AWS Transit Gateway, and AWS RAM. This approach reduced costs, preserved valuable IPv4 address space, and eliminated the need for cross-Region API traffic to traverse the public internet.

To dive deeper into the technical implementation details, watch the full video from the AWS FSI Meetup in 2025. To get started with a similar architecture in your own environment:

- Explore Route 53 Profiles in the Amazon Route 53 console.

- Review the Route 53 Profiles documentation.

- Check out the VPC Endpoints User Guide for additional configuration options.

- For multi-account networking patterns, explore the AWS Transit Gateway and AWS RAM documentation.