
Integrating external multicast services with AWS

Introduction

Many enterprise customers and telecom operators run IP Multicast in their networks for video transcoding, financial trading platforms, multimedia broadcast multicast system (MBMS), and other services. As more and more customers migrate their on-premises workloads to the cloud, there is a need to not just build multicast applications on AWS, but also to integrate external multicast services with AWS.

When speaking to customers about their needs, a common thread appears on the topic of multicast — extending multicast capability between their on-premises datacenters and AWS by adding support for Internet Group Management Protocol (IGMP), and multicast routing protocols such as Protocol Independent Multicast (PIM). Whilst there is no native support for this functionality at present, with a little engineering it is possible to enable such a solution.

This blog post outlines a mechanism that can be used to integrate AWS with external multicast services.

Update – December 10, 2020 – IGMPv2 support is now available within a VPC attached to AWS Transit Gateway. Clients can dynamically join and leave a Multicast Group by sending IGMP messages. In addition, when IGMPv2 is enabled, any Nitro instance can be a source – there is no static mapping required. Check the official documentation for further guidance and region support.

Solution Overview

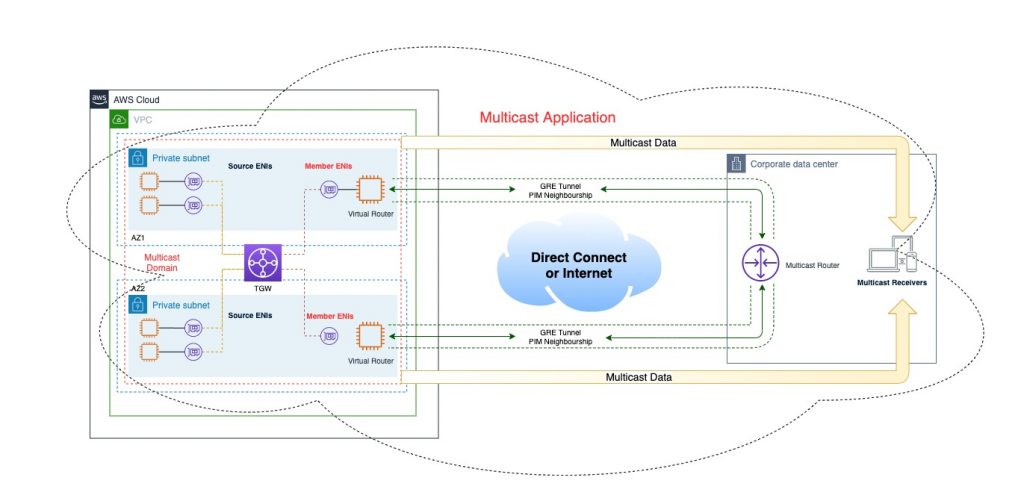

The proposed solution requires the deployment of third-party appliances capable of multicast routing within an Amazon Virtual Private Cloud (VPC). A tunneling mechanism between the multicast domains outside of and within AWS is also required. How you connect your on-premises multicast routers with AWS is not fixed; you can use public internet connectivity, private Direct Connect (DX) circuits, or both.

The constituent parts of this solution are:

Underlay Network

- This is the network substrate, made up of physical connections, IP routing, network tunneling, and VPC security groups

Overlay Network

- This is the multicast layer, made up of multicast enabled routing devices that negotiate and exchange multicast data across network tunnels, AWS multicast components, and VPC settings

The following diagram sets up the solution overview:

Multicast routing overview

In 2019, AWS announced multicast support for AWS Transit Gateway. AWS was the first Public Cloud Provider to offer this capability and, in doing so, has helped customers to build multicast applications in the cloud.

Multicast is a traffic forwarding mechanism that intelligently floods packets to a subset of interfaces interested in receiving them. When a client opens a multicast capable application, a special control message called an IGMP join is generated. This message can be intercepted by switches through a process called IGMP snooping; the relevant interfaces on the switch are then enabled for multicast forwarding. IGMP joins are also flooded to Layer 3 routers connected to the network.

These routers are ultimately responsible for delivering multicast packets between different network segments. There are several multicast routing protocols, with Protocol Independent Multicast (PIM) being a popular choice. PIM, like other multicast routing protocols, builds logical constructs called ‘trees’ or ‘reverse path trees’ that map the path to a source, as opposed to a destination. This is important in the context of network tunneling, since the exit interface for the source of a tree may not be the same as the interface the multicast packets arrived on!

You can read more about multicast routing architectures in this IETF overview.

Pre-Requisites

Network underlay requirements

The first step is to establish private IP connectivity between the AWS VPC and the on-premises network. We expect readers to be familiar with the configuration steps needed to extend AWS VPC access to an on-premises network. The multicast tunneling solutions we describe here are connectivity-agnostic, so you can use any of the usual methods for connecting on-premises resources to an AWS VPC, such as:

- Direct Connect (DX) with Transit Virtual Interface (VIF) and AWS Transit Gateway

- DX with Private VIF and Virtual Private Gateway (VGW)

- VPN over public internet or with DX and Public VIF

You can read more about hybrid connectivity options in the AWS documentation:

https://docs.aws.amazon.com/whitepapers/latest/aws-vpc-connectivity-options/welcome.html

To explain our solution, we will use the Private VIF topology example. You can use a different connectivity option in your setup.

DX with Private VIF and Virtual Private Gateway

In this scenario, a customer uses DX circuits from their datacenter to build private IP connections to the VPCs that contain the virtual routers in AWS. The internal IP addresses of the virtual routers and the datacenter routers are advertised over the eBGP session across the Private VIF. The connections in this scenario are private, high bandwidth, and low latency.

Tunneling, Source-Destination checking, and Security Groups

To forward multicast traffic between AWS VPCs and on-premises environments, an EC2 instance capable of running multicast routing functions should be deployed in each availability zone that requires multicast connectivity to an on-premises network. This appliance will act as a tunnel head-end and tail-end for the multicast traffic sourced within or destined to the workloads in a VPC. Tunneling can be accomplished using several tunneling mechanisms. We have tested using Generic Routing Encapsulation (GRE) tunnels on multiple vendor devices to prove the pattern.

To simplify our setup, we attach the EC2 instance running the virtual router software to a VPC via a single ENI. This is known as a “router on a stick” deployment. The same ENI-facing interface on the router is used to receive and transmit native multicast traffic and to serve as the head-end and tail-end of a GRE tunnel.

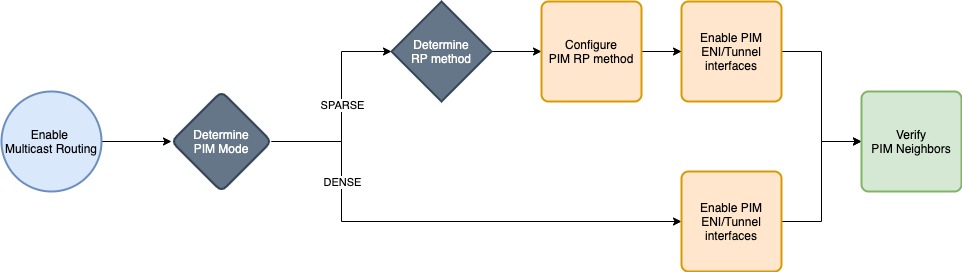

On your virtual routing instances, enable multicast routing and PIM over the tunnel interfaces. If using PIM Dense Mode (PIM-DM), your neighborships should form across the tunnel interfaces shortly after. If using PIM Sparse Mode (PIM-SM), you will need to specify static Rendezvous Point (RP) configuration or enable AutoRP. For both configurations, verify that the PIM adjacencies are established.

Use the following workflow as a guide to establishing your PIM neighborships:

Your virtual routing devices will be handling traffic for services that use IP addresses outside of the VPC, and this traffic would therefore fail the Source/Destination Check applied to network interfaces within the VPC. Make sure you disable this antispoofing function in the VPC console on the interface that will provide the multicast routing functionality.

Your virtual instances will be building tunnels to routing devices that are outside of the VPC. If using GRE, the security group on the virtual routing instance must allow inbound traffic from those devices for the GRE protocol (IP protocol number 47).

* Important – Note that because any traffic passed across the tunnel is encapsulated with GRE, it will bypass security group checks on the virtual routing instance as it has already been permitted by the inbound GRE rule.
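The two VPC-side settings above can also be applied programmatically. The following is a minimal sketch that only builds the request parameters; it assumes they would be passed to the boto3 EC2 client methods `modify_network_interface_attribute` and `authorize_security_group_ingress`. The helper names, the ENI and security group IDs, and the peer address are hypothetical placeholders.

```python
from typing import Dict, List


def source_dest_check_params(eni_id: str) -> Dict:
    """Parameters that disable the Source/Destination Check on one ENI."""
    return {"NetworkInterfaceId": eni_id, "SourceDestCheck": {"Value": False}}


def gre_ingress_params(group_id: str, peer_cidrs: List[str]) -> Dict:
    """Parameters for an inbound rule permitting GRE (IP protocol 47) from the tunnel peers."""
    return {
        "GroupId": group_id,
        "IpPermissions": [
            {
                "IpProtocol": "47",  # GRE, given as a protocol number
                "IpRanges": [{"CidrIp": cidr} for cidr in peer_cidrs],
            }
        ],
    }


# Assumed usage, requiring boto3 and valid credentials (placeholder IDs):
#   ec2 = boto3.client("ec2")
#   ec2.modify_network_interface_attribute(**source_dest_check_params("eni-EXAMPLE"))
#   ec2.authorize_security_group_ingress(**gre_ingress_params("sg-EXAMPLE", ["192.0.2.10/32"]))
```

Restricting the GRE rule to the /32 addresses of the on-premises tunnel endpoints keeps the rule narrow, which matters because traffic inside the tunnel is not inspected by the security group.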

Multicast overlay configuration

AWS Transit Gateway

Following-on from the configuration of the network underlay, AWS Transit Gateway needs to be deployed within the same Region that the virtual routers were deployed into. AWS Transit Gateway plays a critical role in the multicast communication path. It is the service that permits the sending and receiving of multicast packets, both to and from network interfaces that are registered to it.

To get started, open the AWS console in a region that supports Multicast and create a new AWS Transit Gateway. Be sure to enable Multicast support! You will need to create an AWS Transit Gateway VPC attachment for the VPC that contains the virtual routers; we will bind this attachment to the multicast configuration in a later step.

* Note that as of writing, multicast is supported on AWS Transit Gateway in the following regions: us-east-1, sa-east-1, ap-east-1, me-south-1, ap-northeast-2 and eu-central-1.

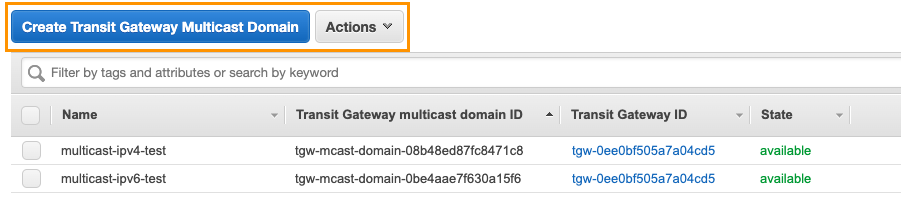

Once this AWS Transit Gateway has been created and your VPC attachment is complete, you need to create a Multicast Domain. Back in the console, open the Transit Gateway Multicast window and use the applet to create one.

Provide a name for the Multicast Domain and associate it with the AWS Transit Gateway that you just created.

With your newly created AWS Transit Gateway and Domain, you are ready to configure your Multicast Domain Associations, and Group configurations!

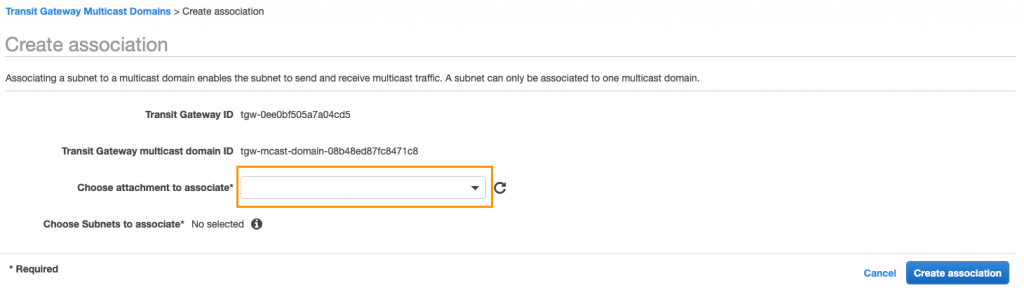

A Multicast Domain is associated with Subnets from AWS Transit Gateway VPC attachments. Use the applet to perform the domain association with the VPC and Subnets that will contain the virtual router instances.
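For readers who prefer scripting to the console, the steps so far can be sketched as request parameters. This is an illustrative sketch, not the authors' procedure: it assumes the parameters are passed to the boto3 EC2 client methods `create_transit_gateway`, `create_transit_gateway_multicast_domain`, and `associate_transit_gateway_multicast_domain`. The helper names and all resource IDs are hypothetical, and the region set simply repeats the list given in this post.

```python
from typing import Dict, List

# Regions named in this post as supporting multicast at the time of writing.
# Check the current documentation before relying on this set.
MULTICAST_REGIONS_AT_TIME_OF_WRITING = {
    "us-east-1", "sa-east-1", "ap-east-1",
    "me-south-1", "ap-northeast-2", "eu-central-1",
}


def transit_gateway_params(description: str) -> Dict:
    """Create a Transit Gateway with multicast support enabled."""
    return {"Description": description, "Options": {"MulticastSupport": "enable"}}


def multicast_domain_params(tgw_id: str) -> Dict:
    """Create a multicast domain on an existing Transit Gateway."""
    return {"TransitGatewayId": tgw_id}


def domain_association_params(domain_id: str, attachment_id: str,
                              subnet_ids: List[str]) -> Dict:
    """Associate subnets of a VPC attachment with the multicast domain."""
    return {
        "TransitGatewayMulticastDomainId": domain_id,
        "TransitGatewayAttachmentId": attachment_id,
        "SubnetIds": list(subnet_ids),
    }


# Assumed usage, requiring boto3 and valid credentials (placeholder IDs):
#   ec2 = boto3.client("ec2", region_name="us-east-1")
#   ec2.create_transit_gateway(**transit_gateway_params("multicast-tgw"))
#   ec2.create_transit_gateway_multicast_domain(**multicast_domain_params("tgw-EXAMPLE"))
#   ec2.associate_transit_gateway_multicast_domain(
#       **domain_association_params("tgw-mcast-domain-EXAMPLE", "tgw-attach-EXAMPLE",
#                                   ["subnet-EXAMPLE"]))
```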

We’re nearly there. The last step is to set up the multicast group members and sources.

Multicast Group Membership

In the configuration described in this post, multicast groups are configured statically on AWS Transit Gateway, without IGMP (see the December 2020 update at the top of this post for IGMPv2 support). Multicast groups are contained within a single multicast enabled AWS Transit Gateway and within a single account, as multicast functionality cannot be shared via Resource Access Manager (RAM). Configuring AWS Transit Gateway is one part of the requirement here. The other part is statically configuring your virtual routing instances to forward multicast traffic to the VPC on a chosen interface. This interface will either be the source of a multicast flow (when originating from outside the VPC) or the receiver of a multicast flow (when originating from within the VPC).

Refer to the following table as a reference for this configuration:

Neither an IGMP join sent by an EC2 instance running a multicast receiver nor a static group registration on AWS Transit Gateway is propagated to the virtual router, so the router is not aware that it needs to forward multicast packets into the VPC. If multicast receivers reside in AWS, configure static IGMP joins on the virtual router running in the AWS VPC. This enables the multicast tree to be built from on-premises to the AWS VPC.

Configure static IGMP joins on the virtual router

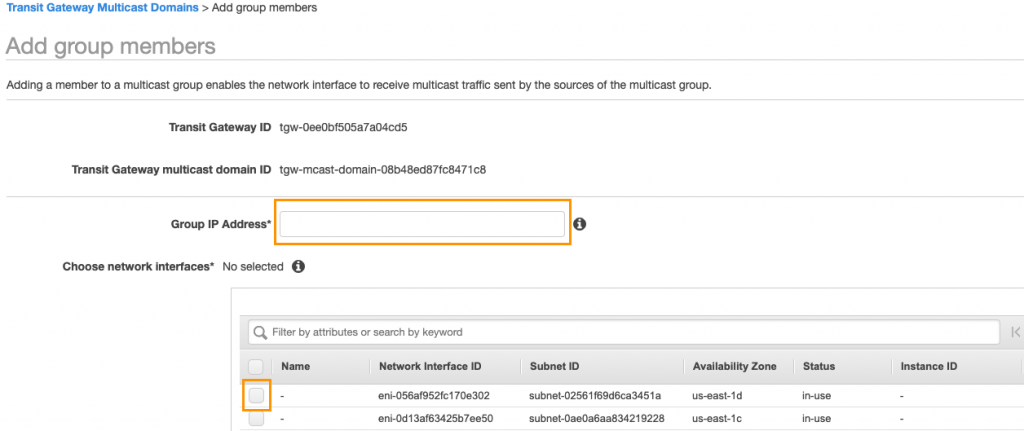

Based on your scenario, multicast group sources and members should be mapped to specific network interfaces.

To perform the mapping, add a multicast member to a group. Use a similar process to add additional members and multicast sources.

* Note – You may have noticed that we have defined two sources for the multicast group 232.0.0.1. By default, this is not possible as there is a soft limit of one multicast source per multicast group. To raise this limit, work with your account team or open a support ticket through the console.
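The member and source mapping can be sketched as request parameters as well. The sketch below assumes they are passed to the boto3 EC2 client methods `register_transit_gateway_multicast_group_members` and `register_transit_gateway_multicast_group_sources`. The helper names, domain ID, and ENI IDs are hypothetical, and the multicast address check is an addition for illustration only.

```python
import ipaddress
from typing import Dict, List


def _registration(domain_id: str, group_ip: str, eni_ids: List[str]) -> Dict:
    # Reject addresses outside the multicast range before building the request.
    if not ipaddress.ip_address(group_ip).is_multicast:
        raise ValueError(f"{group_ip} is not a multicast group address")
    return {
        "TransitGatewayMulticastDomainId": domain_id,
        "GroupIpAddress": group_ip,
        "NetworkInterfaceIds": list(eni_ids),
    }


def member_registration(domain_id: str, group_ip: str, eni_ids: List[str]) -> Dict:
    """Parameters registering ENIs as receivers (members) of a group."""
    return _registration(domain_id, group_ip, eni_ids)


def source_registration(domain_id: str, group_ip: str, eni_ids: List[str]) -> Dict:
    """Parameters registering ENIs as sources for a group."""
    return _registration(domain_id, group_ip, eni_ids)


# Assumed usage, requiring boto3 and valid credentials (placeholder IDs):
#   ec2 = boto3.client("ec2")
#   ec2.register_transit_gateway_multicast_group_sources(
#       **source_registration("tgw-mcast-domain-EXAMPLE", "232.0.0.1", ["eni-ROUTER"]))
#   ec2.register_transit_gateway_multicast_group_members(
#       **member_registration("tgw-mcast-domain-EXAMPLE", "232.0.0.1", ["eni-RECEIVER"]))
```

When the stream originates on-premises, the virtual router's ENI is registered as the source and the receiving instances' ENIs as members; when it originates in AWS, the roles are reversed.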

At this point, the multicast overlay configuration is complete.

For further details on AWS Transit Gateway and enabling multicast support within a VPC, refer to the following document:

https://docs.aws.amazon.com/vpc/latest/tgw/working-with-multicast.html

Use Cases

Multicast Video Streaming – On-premises sources to AWS

The first solution that we cover distributes multicast traffic from a customer datacenter to a set of interested EC2 instances. In this scenario we stream video so that it can be watched simultaneously on different EC2 instances. One of the benefits of multicast is that it reduces bandwidth utilization: the source sends a single copy of the video, and the network replicates it at the last possible point. In this case, the fan-out happens at the AWS Transit Gateway. The diagram below sets up the architecture:

Our multicast source here is a terminal in the customer datacenter running a version of Linux that has ffmpeg installed. Since the underlay network and overlay multicast configuration are already in place, all that is left is to set up a multicast stream from the client in the datacenter and connect to it from instances within AWS.

We start by preparing a file in the Transport Stream (ts) format, so that it does not need to be converted on the fly. This file can now be streamed to a multicast group and a UDP port of our choice, like this:

ffmpeg -re -i vid/0001.ts -c copy -f mpegts "udp://232.0.0.1:1234?pkt_size=188"

Here ffmpeg reads the source .ts file at its native frame rate (-re), copies the streams without re-encoding (-c copy), sets the output format to ‘mpegts’, and sends the media to multicast group 232.0.0.1 on UDP port 1234 with a packet size of 188 bytes, the size of a single MPEG-TS packet.
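The reason the packet size is tied to 188 is that an MPEG transport stream is a sequence of fixed 188-byte packets, each beginning with the sync byte 0x47. As a rough illustration, a receiver could sanity-check each UDP payload as follows; the function name is hypothetical and this check is not part of ffmpeg or VLC.

```python
TS_PACKET_SIZE = 188   # fixed size of one MPEG-TS packet, in bytes
TS_SYNC_BYTE = 0x47    # first byte of every MPEG-TS packet


def count_ts_packets(datagram: bytes) -> int:
    """Return the number of TS packets in a UDP payload, or raise if it is malformed."""
    if not datagram or len(datagram) % TS_PACKET_SIZE != 0:
        raise ValueError("payload length is not a non-zero multiple of 188 bytes")
    for offset in range(0, len(datagram), TS_PACKET_SIZE):
        if datagram[offset] != TS_SYNC_BYTE:
            raise ValueError(f"missing sync byte at offset {offset}")
    return len(datagram) // TS_PACKET_SIZE
```

With pkt_size=188, as in the command above, each datagram would be expected to carry exactly one TS packet.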

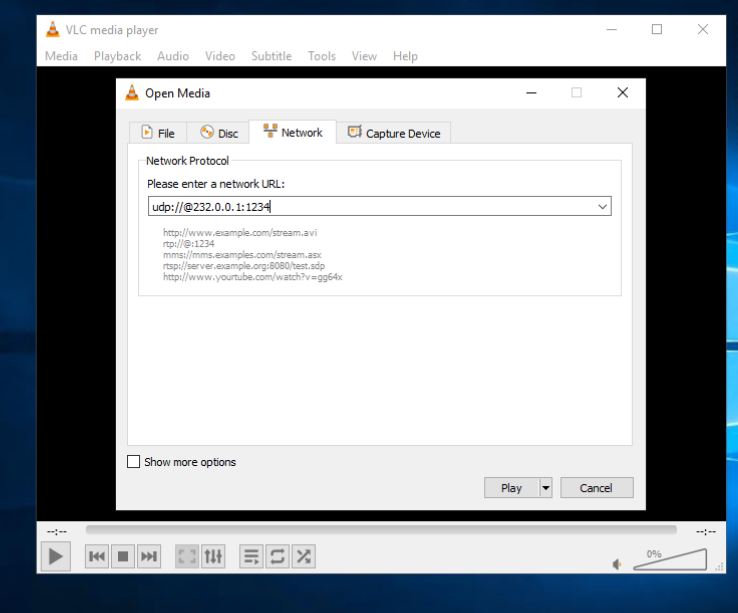

On the AWS side, we are using Wireshark and VLC client to receive the multicast stream. Wireshark gives us the confidence that the multicast packets are being delivered immediately, and VLC shows the video!

When setting up VLC, you’ll need to specify the multicast address and the UDP port that ffmpeg used to send the stream as well as the transport protocol that was chosen.
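If you would rather script a quick receiver than configure VLC, the same group and port can be joined from a standard UDP socket. This is a minimal sketch using only Python's standard library; the function names are hypothetical, and 232.0.0.1:1234 is taken from the ffmpeg command above.

```python
import socket
import struct


def build_membership_request(group: str, local_ip: str = "0.0.0.0") -> bytes:
    """Pack the membership request: group address, then the local interface address."""
    return struct.pack("4s4s", socket.inet_aton(group), socket.inet_aton(local_ip))


def open_receiver(group: str, port: int, local_ip: str = "0.0.0.0") -> socket.socket:
    """Bind a UDP socket and join the multicast group on the chosen interface."""
    sock = socket.socket(socket.AF_INET, socket.SOCK_DGRAM, socket.IPPROTO_UDP)
    sock.setsockopt(socket.SOL_SOCKET, socket.SO_REUSEADDR, 1)
    sock.bind(("", port))
    # Joining the group is what prompts the operating system to send the IGMP join.
    sock.setsockopt(socket.IPPROTO_IP, socket.IP_ADD_MEMBERSHIP,
                    build_membership_request(group, local_ip))
    return sock


# Assumed usage on a receiving instance:
#   sock = open_receiver("232.0.0.1", 1234)
#   data, sender = sock.recvfrom(2048)
```

Passing 0.0.0.0 as the local address leaves the choice of interface to the operating system; on a multi-homed instance you may want to pass the address of the interface registered as a group member.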

Great, all that’s left to do now is send the stream and watch the video arrive simultaneously on multiple EC2 instances!

Multicast Video Streaming – Sources in AWS to On-Prem

In the previous example we demonstrated how a multicast stream originating on-premises can be received by EC2 instances in AWS. Our solution can also distribute multicast traffic from an AWS VPC to a customer's on-premises environment. As in the previous example, you can send video over Direct Connect or VPN so that it can be watched simultaneously on different workstations within the customer's network. Without multicast, streaming the same video to a number of on-premises devices would mean duplicating the traffic and carrying it over multiple unicast flows, increasing egress traffic from AWS and the overall cost of the solution. With multicast, the stream needs to be sent only once and is replicated on-premises to the receivers. The diagram below depicts this architecture:

End-to-end multicast traffic flow diagram

Now that we’ve demonstrated how to set up multicast traffic forwarding between the on-premises and AWS environments, let us walk through the packet forwarding scenario. We will review the setup with a multicast source on an EC2 instance.

- The multicast source sends traffic to a multicast group

- A transit gateway with the multicast domain configuration and registered source and member forwards packets to the virtual router

- The virtual router deployed in a VPC receives the multicast packets

- The virtual router forwards multicast packets to a rendezvous point via the tunnel

- The on-premises router receives packets via the tunnel and decapsulates them

- Decapsulated packets are sent over the downstream multicast interface to the receivers

For brevity, we will not cover the scenario with multicast sources residing on-premises, as the packet flow is essentially the same, with the on-premises router and the virtual router in AWS swapping encapsulation and decapsulation roles.
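To make the encapsulation and decapsulation steps concrete, here is a simplified sketch of what the two tunnel endpoints do to each multicast packet. It models only the basic 4-byte GRE header (no checksum, key, or sequence number) and leaves out the outer IP header, which the router adds with IP protocol number 47. The function names are hypothetical.

```python
import struct

GRE_PROTOCOL_IPV4 = 0x0800  # protocol type carried in the GRE header for an IPv4 payload
IP_PROTOCOL_GRE = 47        # protocol number used in the outer IP header


def gre_encapsulate(inner_packet: bytes) -> bytes:
    """Tunnel head-end: prepend a basic GRE header to the original multicast packet."""
    # 16 bits of flags and version (all zero here), then the 16-bit protocol type.
    return struct.pack("!HH", 0, GRE_PROTOCOL_IPV4) + inner_packet


def gre_decapsulate(gre_payload: bytes) -> bytes:
    """Tunnel tail-end: strip the GRE header and return the original multicast packet."""
    if len(gre_payload) < 4:
        raise ValueError("payload too short to contain a GRE header")
    flags_version, protocol = struct.unpack("!HH", gre_payload[:4])
    if flags_version != 0:
        raise ValueError("optional GRE fields are not handled in this sketch")
    if protocol != GRE_PROTOCOL_IPV4:
        raise ValueError("unexpected protocol type in GRE header")
    return gre_payload[4:]
```

The inner packet keeps its original source address and multicast group address, which is why the receiving router still has to run an RPF check against the original source.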

Deployment considerations

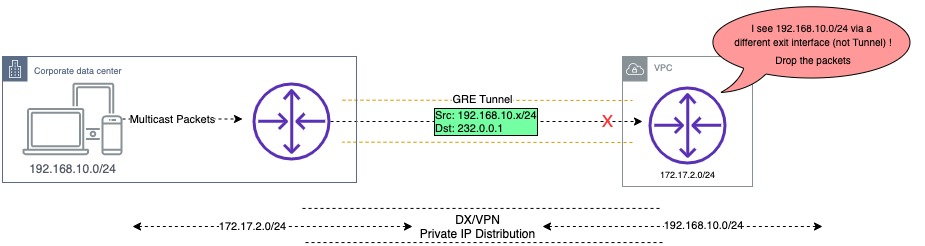

Reverse Path Forwarding (RPF)

One of the key concepts of multicast communication is the RPF check. To avoid forwarding loops, a multicast router performs an RPF check when it receives a multicast packet. The check verifies that the interface on which the packet was received is also the outbound interface that the unicast routing table would use to reach the packet's source. If it is, the packet is forwarded; if not, it is discarded. (The source of a multicast packet is always a unicast IP address.) When tunnels carry multicast traffic, RPF checks are likely to fail, because unicast static routes or BGP will indicate that the source is reachable through an interface other than the tunnel.

In order to avoid packets being discarded by a multicast router when the packets are received over the GRE tunnel interface, a multicast route must be added to the virtual or on-premises router with an entry for the unicast IPv4 CIDR block associated with the source. This can be achieved by creating a multicast static route (mroute) entry and pointing it to the tunnel interface.
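The effect of the mroute on the RPF check can be illustrated with a small model. This is a simplified sketch, not router code: it assumes a static mroute takes precedence over the unicast table for RPF purposes, and the prefixes and interface names (Tunnel0, GigabitEthernet1) are hypothetical.

```python
import ipaddress
from typing import Dict, Optional


def longest_match(table: Dict[str, str], source: str) -> Optional[str]:
    """Return the interface of the most specific prefix that contains the source."""
    address = ipaddress.ip_address(source)
    best_iface, best_length = None, -1
    for prefix, iface in table.items():
        network = ipaddress.ip_network(prefix)
        if address in network and network.prefixlen > best_length:
            best_iface, best_length = iface, network.prefixlen
    return best_iface


def rpf_check(source: str, arrival_iface: str,
              unicast_routes: Dict[str, str],
              mroutes: Optional[Dict[str, str]] = None) -> bool:
    """Pass only if the packet arrived on the interface used to reach its source."""
    expected = longest_match(mroutes or {}, source)
    if expected is None:
        # No static mroute covers the source, so fall back to the unicast table.
        expected = longest_match(unicast_routes, source)
    return expected == arrival_iface


# Unicast routing reaches the on-premises source network outside the tunnel.
unicast = {"10.1.0.0/16": "GigabitEthernet1", "0.0.0.0/0": "GigabitEthernet1"}

# Without an mroute, a packet from 10.1.1.10 arriving on the tunnel is discarded.
print(rpf_check("10.1.1.10", "Tunnel0", unicast))
# With an mroute pointing the source prefix at the tunnel, it is forwarded.
print(rpf_check("10.1.1.10", "Tunnel0", unicast, {"10.1.0.0/16": "Tunnel0"}))
```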

Rendezvous Point Placement

If using PIM-SM, consideration will need to be given as to the placement of the RP. This is because the RP forms the initial multicast forwarding path between sources and receivers – therefore the RP IP address must be reachable from all neighbors.

Resilience

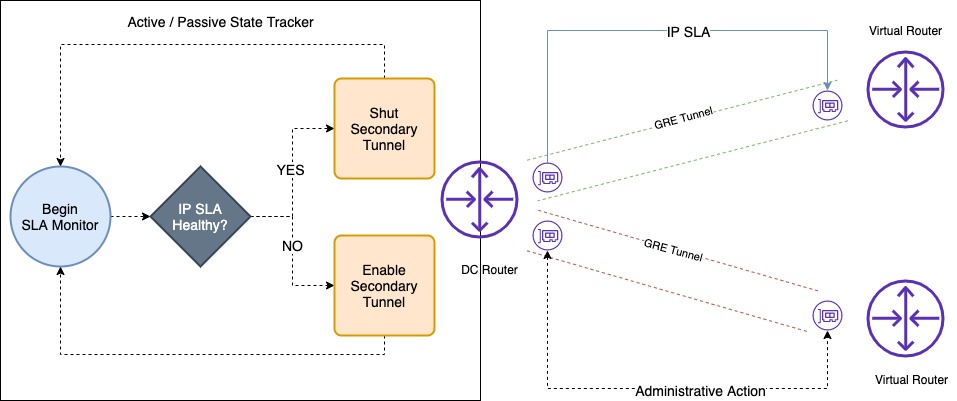

In the diagrams shown throughout this blog post, we have depicted AZ resilience by having two virtual routers configured to receive the same multicast stream. This multicast stream would be sent over two different tunnels into AWS, and AWS Transit Gateway would then forward the multicast packets to the same receivers, twice! Depending on your requirements, this may be acceptable or it may not. Consider whether your application can handle duplicate packets and associated costs of doing so.

Below is an example workflow which sets up an active/passive state tracker for the primary tunnel interface from the datacenter router. The health of the primary tunnel destination determines the administrative state of the secondary tunnel. During normal operations, multicast traffic is only sent across the primary tunnel interface. If the health check fails, the secondary tunnel is made live. When the primary tunnel returns to health, the secondary tunnel is shut down again.

This example workflow could be deployed on the on-premises router, terminating GRE tunnels from AWS.
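The decision logic of such a tracker can be sketched independently of any router platform. The class below is an illustration only: the class name, the idea of counting consecutive probe results, and the default thresholds of three are assumptions, and on a real router this logic would be expressed in the vendor's own tracking and scripting features.

```python
class TunnelFailover:
    """Track primary tunnel health and decide whether the secondary tunnel is live."""

    def __init__(self, fail_threshold: int = 3, recover_threshold: int = 3) -> None:
        self.fail_threshold = fail_threshold
        self.recover_threshold = recover_threshold
        self.secondary_up = False  # normal operation: only the primary carries traffic
        self._failures = 0
        self._successes = 0

    def record_probe(self, primary_reachable: bool) -> bool:
        """Feed one health-check result; return the desired state of the secondary tunnel."""
        if primary_reachable:
            self._successes += 1
            self._failures = 0
            if self.secondary_up and self._successes >= self.recover_threshold:
                self.secondary_up = False  # primary is healthy again: shut the secondary
        else:
            self._failures += 1
            self._successes = 0
            if not self.secondary_up and self._failures >= self.fail_threshold:
                self.secondary_up = True   # primary has failed: bring the secondary up
        return self.secondary_up
```

Requiring several consecutive results before changing state avoids flapping the secondary tunnel on a single lost probe, at the cost of a slightly slower failover.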

Note that there are many alternatives with regard to how you architect your multicast High Availability. You can explore other options such as unique multicast group ranges per AZ, multicast server-side failover with an out-of-band channel, etc.

Instance Types

Any multicast source must be a Nitro-based instance. Multicast receivers (members of multicast groups) do not need to be Nitro-based instances.

Multicast Limits

As with many other services, AWS imposes service quotas (previously known as limits) on multicast deployments. We recommend that you review the Multicast section of the following document and plan your deployment accordingly:

https://docs.aws.amazon.com/vpc/latest/tgw/transit-gateway-quotas.html

Conclusion

In this post we have shown how enterprise and telecom customers can build multicast topologies using AWS Native Services such as AWS Transit Gateway and AWS Direct Connect, and partner solutions from AWS Marketplace.

You can get started right now on the AWS Management Console. If you want more details on how Multicast on AWS Transit Gateway works, please head to our documentation website.