AWS Open Source Blog

Securing Amazon EKS Using Lambda and Falco

Intrusion and abnormality detection are important tools for stronger runtime security in applications deployed in containers on Amazon EKS clusters. In this post, Michael Ducy of Sysdig explains how Falco, a CNCF Sandbox project, generates an alert when abnormal application behavior is detected. AWS Lambda functions can then be configured to pass those alert messages to Slack.

—Arun

Securing Amazon Elastic Container Service for Kubernetes (Amazon EKS) clusters on Amazon Web Services (AWS) can take many forms. AWS provides several options, including virtual private clouds (VPCs), which allow the traditional networking controls of Security Groups and Network ACLs. Amazon EKS also supports IAM authentication, which ties directly into the Kubernetes RBAC system. On top of these features, AWS isolates the nodes running your EKS cluster from other customers.

Even with all of these powerful features, additional security layers can still be important. Falco, an open source CNCF Sandbox project from Sysdig, detects abnormal behavior in your EKS cluster and applications deployed to that cluster, to provide an extra level of security.

Coupled with AWS services such as Amazon Simple Notification Service (Amazon SNS) and AWS Lambda, Falco actively protects your EKS cluster by deleting compromised containers, tainting EKS nodes to prevent new workloads from being scheduled, and notifying teams via a messaging system such as Slack.

Runtime Security with Falco

Falco provides runtime security for containerized applications and their underlying host systems. By instrumenting the Linux kernel of the container host, Falco is able to create an event stream from the system calls made by containers and the host. Rules can then be applied to this event stream to detect abnormal behavior.

For example, here’s a rule that detects any process attempting to read a secret after a container has already started (secrets should only be read at startup):

- macro: proc_is_new
  condition: proc.duration <= 5000000000

- rule: Read secret file after startup
  desc: >
    an attempt to read any secret file (e.g. files containing user/password/authentication
    information). Processes might read these files at startup, but not afterwards.
  condition: fd.name startswith /etc/secrets and open_read and not proc_is_new
  output: >
    Sensitive file opened for reading after startup (user=%user.name
    command=%proc.cmdline file=%fd.name)
  priority: WARNING

Falco also pulls metadata from the underlying container runtimes and orchestrators, including Docker and Kubernetes. This metadata can be used in Falco rules to restrict certain behaviors depending on the container image, the container name, or a particular Kubernetes resource such as a pod, deployment, service, or namespace.
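To make the rule's logic concrete, here is a minimal Python sketch of the same condition evaluated against a single event. It is only an illustration: Falco evaluates rules in its own engine, and the event dictionary, the boolean `open_read` key, and the function names are assumptions made for this example.

```python
# Illustrative only: Falco evaluates rules in its own engine, not in Python.
FIVE_SECONDS_NS = 5_000_000_000  # proc.duration is expressed in nanoseconds


def proc_is_new(event):
    """Mirror of the proc_is_new macro: the process started at most 5 seconds ago."""
    return event["proc.duration"] <= FIVE_SECONDS_NS


def read_secret_after_startup(event):
    """Mirror of the rule condition: a read-open under /etc/secrets by an older process."""
    return (
        event["fd.name"].startswith("/etc/secrets")
        and event["open_read"]  # assumed boolean standing in for the open_read macro
        and not proc_is_new(event)
    )


def format_alert(event):
    """Fill the rule's output template from the event fields."""
    return (
        "Sensitive file opened for reading after startup "
        f"(user={event['user.name']} command={event['proc.cmdline']} "
        f"file={event['fd.name']})"
    )
```

A process that opens /etc/secrets/db one second after it starts does not match, while the same open a minute later does, and `format_alert` produces the text that would appear in the alert.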

When Falco detects an abnormal event, it fires an alert to a destination. Possible destinations include stdout, a log file, syslog, or a program that receives the alert on stdin. By leveraging a sidecar container in Kubernetes, you can expand these destinations to include messaging services such as Amazon Simple Notification Service (SNS). Alerts published to SNS can then trigger other services that are subscribed to the topic, such as an AWS Lambda function.
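As a rough sketch of the receiving side, the following Python shows how a Lambda function subscribed to the topic might unpack Falco alerts from an SNS event. It assumes the sidecar publishes Falco's JSON alert (with fields such as `rule`, `priority`, and `output`) as the SNS message body; the function names are hypothetical and this is not the code shipped in the Falco repository.

```python
import json


def falco_alerts_from_sns(event):
    """Return the Falco alerts carried by an SNS-triggered Lambda event."""
    alerts = []
    for record in event.get("Records", []):
        # SNS delivers the published message body as a string.
        message = record["Sns"]["Message"]
        alerts.append(json.loads(message))
    return alerts


def handler(event, context):
    """Hypothetical Lambda entry point: log the priority and rule of each alert."""
    alerts = falco_alerts_from_sns(event)
    for alert in alerts:
        print(f"{alert.get('priority')}: {alert.get('rule')}")
    return {"alerts": len(alerts)}
```

Keeping the parsing in its own function makes it easy to exercise locally with a hand-built event before wiring the function to a real topic.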

Deploying Falco with SNS Support

The easiest way to get started with Falco is to leverage the Helm chart that Falco provides. Helm is a package manager for Kubernetes that provides a rich library of applications. Helm also allows you to set options for an application that are specific to your particular environment. To use Helm, you need the Helm binary on your workstation and Helm's server-side component, Tiller, installed on your EKS cluster:

$ brew install kubernetes-helm # For macOS users with Homebrew installed
$ kubectl -n kube-system create sa tiller
$ kubectl create clusterrolebinding tiller --clusterrole cluster-admin --serviceaccount=kube-system:tiller
$ helm init --service-account tiller

Once Helm is installed, installing Falco with SNS support is simple:

$ helm install --name sysdig-falco-1 \
    --set integrations.snsOutput.enabled=true \
    --set integrations.snsOutput.topic=SNS_TOPIC \
    --set integrations.snsOutput.aws_access_key_id=AWS_ACCESS_KEY_ID \
    --set integrations.snsOutput.aws_secret_access_key=AWS_SECRET_ACCESS_KEY \
    --set integrations.snsOutput.aws_default_region=AWS_DEFAULT_REGION \
    stable/falco

Adjust SNS_TOPIC, AWS_ACCESS_KEY_ID, AWS_SECRET_ACCESS_KEY, and AWS_DEFAULT_REGION to the settings appropriate for your EKS deployment, and Falco will begin publishing any alerts to the SNS topic you’ve specified.

Creating a Role for Our Lambda Functions

EKS provides the ability to authenticate users and service accounts via AWS IAM. In order to have our Lambda function interact with EKS to delete pods or perform other actions based on a Falco alert, we will create an IAM role for a service account. Kubernetes will verify that the IAM service account is a valid user, then use the internal Kubernetes RBAC functionality to restrict what the service account can do inside our cluster.

First, create an IAM role for the Lambda function (named iam_for_lambda in the examples that follow). We then need to create a trust relationship between the IAM role and a user that has permissions to authenticate to the cluster. For this environment, we will create a user in IAM called kubernetes-response-engine. We will leave the permissions empty for the user, which allows it to authenticate as a valid user to Kubernetes, but not to create or modify objects in our AWS account. We can then map that user to the IAM role through the role's trust relationship.
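A trust relationship of this kind is expressed as a JSON policy document attached to the role. The sketch below builds one in Python; it assumes the role should be assumable by both the Lambda service and the kubernetes-response-engine user, and the account ID is a placeholder. Treat it as an illustration of the policy shape rather than the exact policy used for this post.

```python
import json


def build_trust_policy(account_id, user_name="kubernetes-response-engine"):
    """Build an IAM trust policy letting Lambda and one IAM user assume the role."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Principal": {"Service": "lambda.amazonaws.com"},
                "Action": "sts:AssumeRole",
            },
            {
                "Effect": "Allow",
                "Principal": {"AWS": f"arn:aws:iam::{account_id}:user/{user_name}"},
                "Action": "sts:AssumeRole",
            },
        ],
    }


if __name__ == "__main__":
    # 123456789012 is a placeholder account ID, not a value from this post.
    print(json.dumps(build_trust_policy("123456789012"), indent=2))
```

The printed JSON can be pasted into the role's trust relationship editor in the IAM console.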

We also need to create (or update) a configmap in Kubernetes for the aws-iam-authenticator to use to map our IAM role to a Kubernetes user. This configmap may already exist if you’ve followed the AWS guide on launching worker nodes. You can run kubectl describe configmap aws-auth -n kube-system to view any existing configmap. If a configmap does exist, you’ll want to merge the data sections from the existing configmap and the configmap below.

apiVersion: v1
kind: ConfigMap
metadata:
  name: aws-auth
  namespace: kube-system
data:
  mapRoles: |
    - rolearn: arn:aws:iam::<ARN From IAM>:role/iam_for_lambda
      username: kubernetes-response-engine
      groups:
        - system:masters

Finally, we need to add roles to the Kubernetes RBAC system to allow the Lambda function to take action on the Kubernetes cluster. We’ve included the required YAML for the cluster roles and cluster role bindings in the Falco GitHub repository.

$ git clone https://github.com/falcosecurity/falco.git
$ cd falco/integrations/kubernetes-response-engine/deployment/aws
$ kubectl apply -f cluster-role.yaml -f cluster-role-binding.yaml

Deploying Lambda Functions to React to Falco Alerts

Now that we’re publishing Falco alerts to an SNS topic, we can deploy Lambda functions to react to those alerts. To get started, check out the examples in the Falco GitHub repository.

After cloning the repo, navigate to the integrations/kubernetes-response-engine/playbooks directory. In order to deploy our function to Lambda, we need to package up the function and any dependencies as a zip file. Since the function is written in Python, you’ll need a basic Python environment, as well as pip and pipenv.

First, generate a requirements.txt that contains the list of Python libraries required to run your Lambda function. This can be done with pipenv:

$ pipenv lock --requirements | sed '/^-/ d' > requirements.txt

Now that the dependencies have been defined, pip can pull these dependencies down to a local directory. Since we want to push messages to Slack using Lambda, we will also copy in the slack.py function, which will fire when Falco sends an alert:

$ mkdir -p lambda
$ pip install -t lambda -r requirements.txt
$ pip install -t lambda .
$ cp functions/slack.py lambda/

With the dependencies and function now in a single directory, all that’s left to do is create a zip file to deploy to AWS Lambda:

$ cd lambda
$ zip ../slack.zip -r *
$ cd ..

The code is now packaged, and we can create the Lambda function using the AWS CLI. You’ll need to replace the --role value with the ARN of the role you created earlier, and replace the Slack webhook URL with the URL for the channel to which you wish to post your Falco messages:

$ aws lambda create-function \
    --function-name falco-slack \
    --runtime python2.7 \
    --role <arn:aws:iam::XXXxxXXX:role/iam_for_lambda> \
    --environment Variables="{SLACK_WEBHOOK_URL=https://<custom_slack_app_url>}" \
    --handler slack.handler \
    --zip-file fileb://./slack.zip

Deploying Functions to Act on EKS

Besides sending messages to Slack, AWS Lambda can be used to take action on your EKS clusters. You can find these functions in the integrations/kubernetes-response-engine/playbooks/functions directory.
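To give a feel for what such a function has to work out, here is a small Python sketch that derives the Kubernetes API path of the pod named in a Falco alert; sending a DELETE request to that path removes the pod. It assumes the alert's `output_fields` include `k8s.ns.name` and `k8s.pod.name`, which is only the case when the rule's output references those fields. The function name is hypothetical, and the playbooks in the repository may be structured differently.

```python
from urllib.parse import quote


def pod_delete_path(alert):
    """Return the Kubernetes API path for the pod named in a Falco alert, or None."""
    fields = alert.get("output_fields", {})
    namespace = fields.get("k8s.ns.name")
    pod = fields.get("k8s.pod.name")
    if not namespace or not pod:
        # Without pod metadata there is nothing safe to act on.
        return None
    return f"/api/v1/namespaces/{quote(namespace)}/pods/{quote(pod)}"
```

Returning None when the metadata is missing lets the caller skip the alert instead of acting on an incomplete target.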

Follow the instructions above to pull the function’s dependencies, but copy in the function you want to deploy in place of functions/slack.py. To allow these Lambda functions to communicate with EKS, we also need to generate a kubeconfig file that contains information about our cluster:

$ aws eks update-kubeconfig --name <cluster-name> --kubeconfig lambda/kubeconfig
$ sed -i "s/command: aws-iam-authenticator/command: .\/aws-iam-authenticator/g" lambda/kubeconfig

We also need to copy in the aws-iam-authenticator, which allows the Lambda function to authenticate back to IAM to operate on the EKS cluster. Then we’ll be able to create our zip file as before:

$ cp extra/aws-iam-authenticator lambda/
$ cd lambda
$ zip ../delete.zip -r *
$ cd ..

To deploy the function, we finally need to specify the location of the kubeconfig file and whether we want to load it in our function:

$ aws lambda create-function \
    --function-name falco-delete \
    --runtime python2.7 \
    --role <arn:aws:iam::XXXxxXXX:role/iam_for_lambda> \
    --environment Variables="{KUBECONFIG=kubeconfig,KUBERNETES_LOAD_KUBE_CONFIG=1}" \
    --handler delete.handler \
    --zip-file fileb://./delete.zip

Tying Together SNS and Lambda

Now that we have Falco publishing to SNS and a function in Lambda to react to the alerts, we need to wire together the SNS topic and function. This can be done easily via the AWS console.

Navigate to the Lambda service and select the falco-slack function. In the designer, you can add triggers by selecting them from the left pane. Scroll down and click SNS to add the trigger. Under Configure triggers, search for the SNS topic we created earlier, ensure that Enable trigger is selected, and save the function.

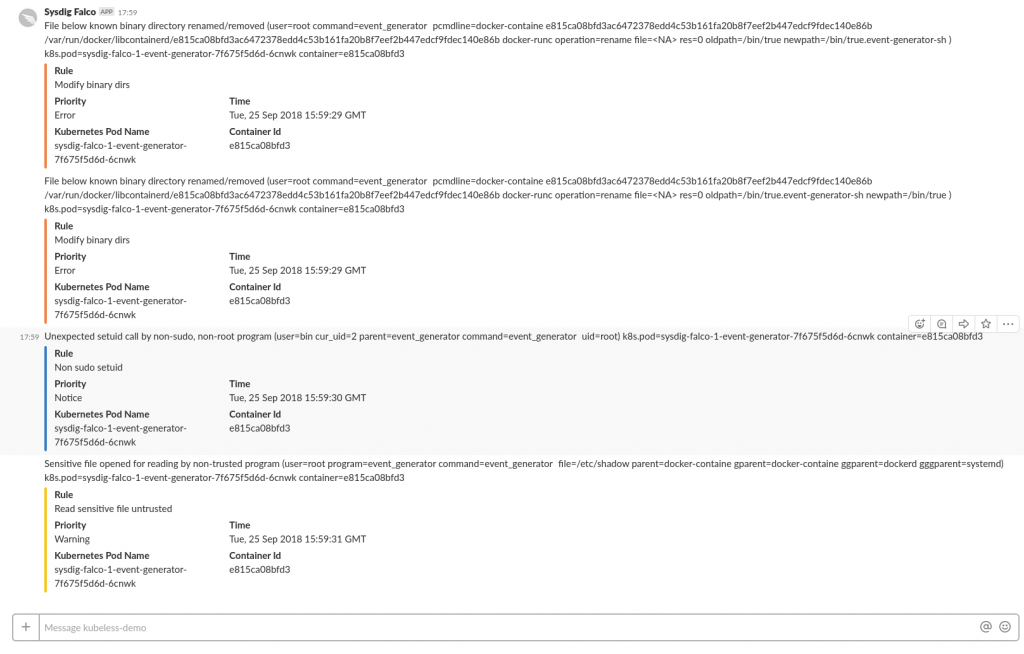

Triggering a Falco alert will now publish the alert to SNS, fire our Lambda function, and post the alert to Slack.
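For readers curious about the last hop, the following Python sketch shows one way a function could turn a Falco alert into a Slack incoming-webhook message. It is not the slack.py from the repository: the message layout and function names are assumptions, and only the SLACK_WEBHOOK_URL environment variable comes from the deployment command earlier in this post.

```python
import json
import os
import urllib.request


def slack_payload(alert):
    """Turn a Falco alert dict into a minimal Slack incoming-webhook payload."""
    priority = alert.get("priority", "Unknown")
    rule = alert.get("rule", "unknown rule")
    output = alert.get("output", "")
    return {"text": f"[{priority}] {rule}\n{output}"}


def post_to_slack(alert, webhook_url=None):
    """POST the payload to the webhook; defaults to SLACK_WEBHOOK_URL (network call)."""
    url = webhook_url or os.environ["SLACK_WEBHOOK_URL"]
    body = json.dumps(slack_payload(alert)).encode("utf-8")
    request = urllib.request.Request(
        url, data=body, headers={"Content-Type": "application/json"}
    )
    with urllib.request.urlopen(request) as response:
        return response.status
```

Separating payload construction from the network call keeps the formatting logic testable without a real webhook.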

Updating Function Subscriptions

The Lambda functions might not need to fire for every single event published to SNS. For instance, if a function deletes a pod, we would only want the function to fire on critical alerts. To control which alerts a function fires on, we can update the function’s subscription.

In the AWS SNS console, select Subscription, then edit the filter policy for the function’s subscription.

Create a JSON object and set it as the filter policy. The Lambda function will then fire only when an alert with a priority of “Error” or “Warning” is received.
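As an illustration, the Python sketch below builds a filter policy of that shape and approximates how SNS would match it against a message's attributes. The attribute name `priority` is an assumption; use whichever message attribute your Falco SNS output actually sets. The `matches` helper only mimics exact string matching and is not part of any AWS SDK.

```python
import json


def build_filter_policy(priorities):
    """Build an SNS filter policy matching one string attribute against a list."""
    # "priority" is an assumed message attribute name, not confirmed by this post.
    return {"priority": list(priorities)}


def matches(policy, message_attributes):
    """Approximate SNS exact-string matching: every policy key must match."""
    return all(
        message_attributes.get(key) in allowed for key, allowed in policy.items()
    )


policy = build_filter_policy(["Error", "Warning"])
print(json.dumps(policy))
```

The printed JSON object is what would be pasted into the subscription's filter policy field in the SNS console.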

Get Involved with Falco

We hope this helps you integrate Falco runtime security with Amazon Web Services to build a security stack that fits your needs.

If you did find this helpful and wish to contribute to the Falco project, here are several ways to do so:

- Help us improve this guide and create more documentation

- Integrate more tools—PRs are always great!

- Join the discussion on Sysdig’s open source Slack community, #open-source-sysdig, or chat with us on Twitter at @sysdig!

The content and opinions in this post are those of the third-party author and AWS is not responsible for the content or accuracy of this post.