Amazon S3 Intelligent-Tiering storage class

Automate storage cost savings by moving data when access patterns change

Overview

Amazon S3 Intelligent-Tiering is the only cloud storage class that delivers automatic storage cost savings when data access patterns change, without performance impact or operational overhead. It is designed to optimize storage costs by automatically moving data to the most cost-effective access tier as access patterns change. For a small monthly object monitoring and automation charge, S3 Intelligent-Tiering monitors access patterns and automatically moves objects that have not been accessed to lower-cost access tiers. Since the launch of S3 Intelligent-Tiering in 2018, customers have saved more than USD 4 billion in storage costs compared with Amazon S3 Standard.

S3 Intelligent-Tiering is the ideal storage class for data with unknown or changing access patterns, regardless of object size or retention period. You can use it as the default storage class for virtually any workload, especially data lakes, data analytics, new applications, and user-generated content.

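Benefits

Automatic cost savings by optimizing storage costs based on access patterns

The first and only cloud storage that optimizes costs automatically

Exceptional durability

The lowest-cost cloud storage, with an optional Deep Archive Access tier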

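Amazon S3 Intelligent-Tiering

The Amazon S3 Intelligent-Tiering storage class is designed to optimize storage costs by automatically moving data to the most cost-effective access tier when access patterns change. For a small monthly object monitoring and automation charge, S3 Intelligent-Tiering monitors access patterns and automatically moves objects that have not been accessed to lower-cost access tiers. It delivers automatic storage cost savings across three low-latency, high-throughput access tiers. For data that can be accessed asynchronously, you can activate automatic archiving capabilities within the S3 Intelligent-Tiering storage class. There are no retrieval charges in S3 Intelligent-Tiering. If an object in the Infrequent Access or Archive Instant Access tier is accessed later, it is automatically moved back to the Frequent Access tier. No additional tiering charges apply when objects move between access tiers within the S3 Intelligent-Tiering storage class.

Learn more about S3 Intelligent-Tiering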

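- The Frequent, Infrequent, and Archive Instant Access tiers provide the same low latency and high throughput performance as S3 Standard.

- The Infrequent Access tier reduces storage costs by up to 40%.

- The Archive Instant Access tier reduces storage costs by up to 68%.

- Opt-in asynchronous archive capabilities are available for objects that are rarely accessed.

- The Archive Access and Deep Archive Access tiers provide the same performance as S3 Glacier Flexible Retrieval and S3 Glacier Deep Archive for rarely accessed objects, with storage costs up to 95% lower.

- Designed for 99.999999999% object durability across multiple Availability Zones and 99.9% availability over a given year.

- No operational overhead, no lifecycle charges, no retrieval charges, and no minimum storage duration.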
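The per-tier figures in the list above can be combined into a rough blended estimate. The sketch below is illustrative arithmetic only: the savings fractions are the "up to" upper bounds from the list, the distribution of data across tiers is a hypothetical example, and the monthly monitoring and automation charge is ignored. The names `MAX_SAVINGS_BY_TIER` and `blended_max_savings` are made up for this example.

```python
# Upper-bound storage savings per access tier relative to S3 Standard,
# taken from the "up to" figures in the list above.
MAX_SAVINGS_BY_TIER = {
    "frequent": 0.0,
    "infrequent": 0.40,
    "archive_instant": 0.68,
    "deep_archive": 0.95,  # opt-in asynchronous tier
}


def blended_max_savings(share_by_tier: dict[str, float]) -> float:
    """Return the best-case blended savings fraction for a data distribution.

    share_by_tier maps a tier name to the fraction of stored bytes in that
    tier; the fractions must sum to 1.0.
    """
    total = sum(share_by_tier.values())
    if abs(total - 1.0) > 1e-9:
        raise ValueError("tier shares must sum to 1.0")
    return sum(
        share * MAX_SAVINGS_BY_TIER[tier]
        for tier, share in share_by_tier.items()
    )


# Hypothetical distribution: half the data is hot, the rest has cooled off.
example = {"frequent": 0.5, "infrequent": 0.3, "archive_instant": 0.2}
print(f"{blended_max_savings(example):.1%}")  # prints 25.6%
```

Because the inputs are upper bounds, the result is a ceiling on the savings, not a forecast.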

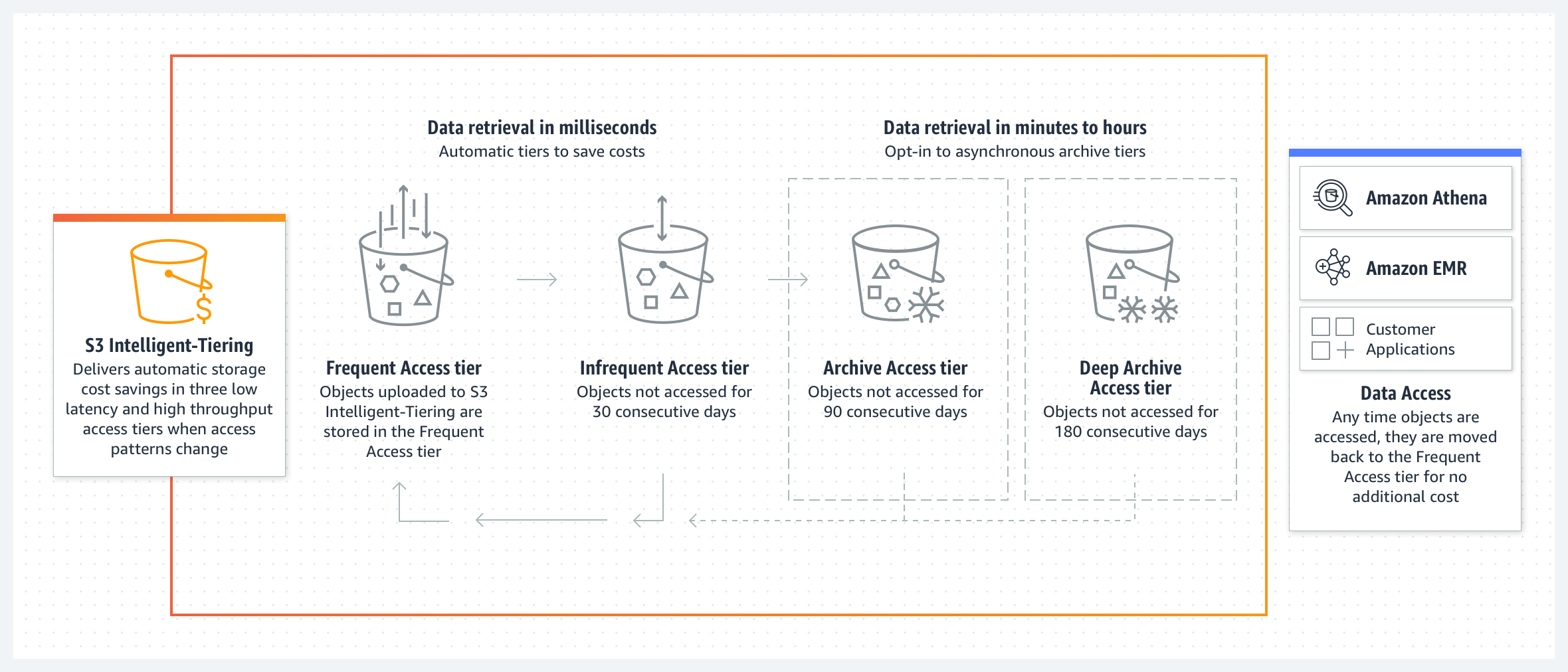

How it works - S3 Intelligent-Tiering

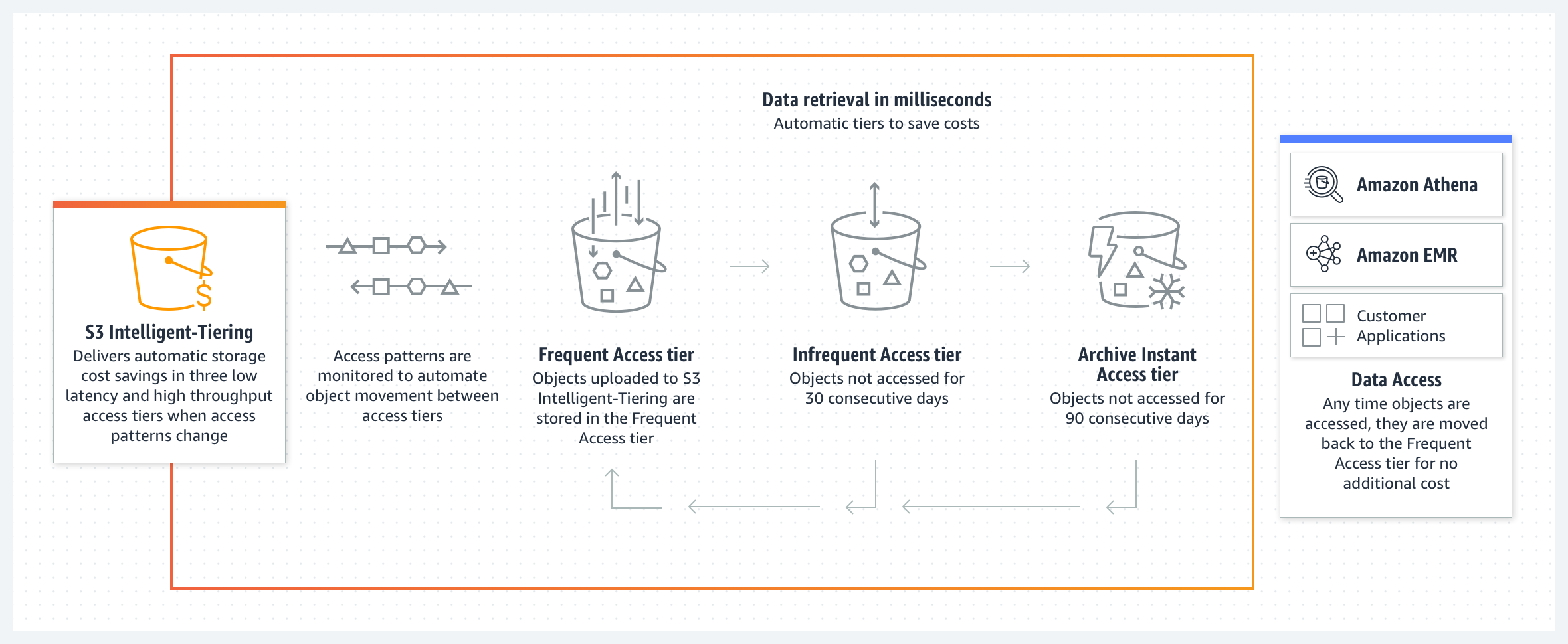

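The Amazon S3 Intelligent-Tiering storage class is designed to optimize storage costs by automatically moving data to the most cost-effective access tier when access patterns change. S3 Intelligent-Tiering automatically stores objects in three access tiers: one tier optimized for frequent access, a lower-cost tier optimized for infrequent access, and a very-low-cost tier optimized for rarely accessed data. For a small monthly object monitoring and automation charge, S3 Intelligent-Tiering moves objects that have not been accessed for 30 consecutive days to the Infrequent Access tier for savings of 40%, and after 90 days without access it moves them to the Archive Instant Access tier for savings of 68%. If the objects are accessed later, S3 Intelligent-Tiering moves them back to the Frequent Access tier. To save more on rarely accessed storage, see the additional diagrams for the opt-in asynchronous Archive Access and Deep Archive Access tiers in S3 Intelligent-Tiering.

There are no retrieval charges in S3 Intelligent-Tiering. S3 Intelligent-Tiering has no minimum eligible object size, but objects smaller than 128 KB are not eligible for auto-tiering. These smaller objects can be stored, but they are always charged at the Frequent Access tier rates and do not incur the monitoring and automation charge. For pricing details, see the Amazon S3 pricing page. To learn more, see the S3 Intelligent-Tiering user guide.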
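The default tiering rules described above can be expressed as a small decision function. This is a simplified model for reasoning about the documented thresholds (30 days, 90 days, 128 KB), not how the service is implemented; the function name and the tier label strings are chosen for illustration.

```python
# Objects smaller than this are not eligible for auto-tiering and are
# always charged at the Frequent Access rate.
MIN_TIERING_SIZE_BYTES = 128 * 1024


def default_access_tier(days_since_last_access: int, size_bytes: int) -> str:
    """Predict the access tier for an object when no archive tiers are opted in."""
    if days_since_last_access < 0 or size_bytes < 0:
        raise ValueError("inputs must be non-negative")
    if size_bytes < MIN_TIERING_SIZE_BYTES:
        return "FREQUENT_ACCESS"
    if days_since_last_access >= 90:
        return "ARCHIVE_INSTANT_ACCESS"
    if days_since_last_access >= 30:
        return "INFREQUENT_ACCESS"
    return "FREQUENT_ACCESS"


print(default_access_tier(45, 5 * 1024 * 1024))  # prints INFREQUENT_ACCESS
print(default_access_tier(400, 10 * 1024))       # prints FREQUENT_ACCESS
```

Any access resets the count, so in this model a read of an object corresponds to calling the function again with `days_since_last_access=0`.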

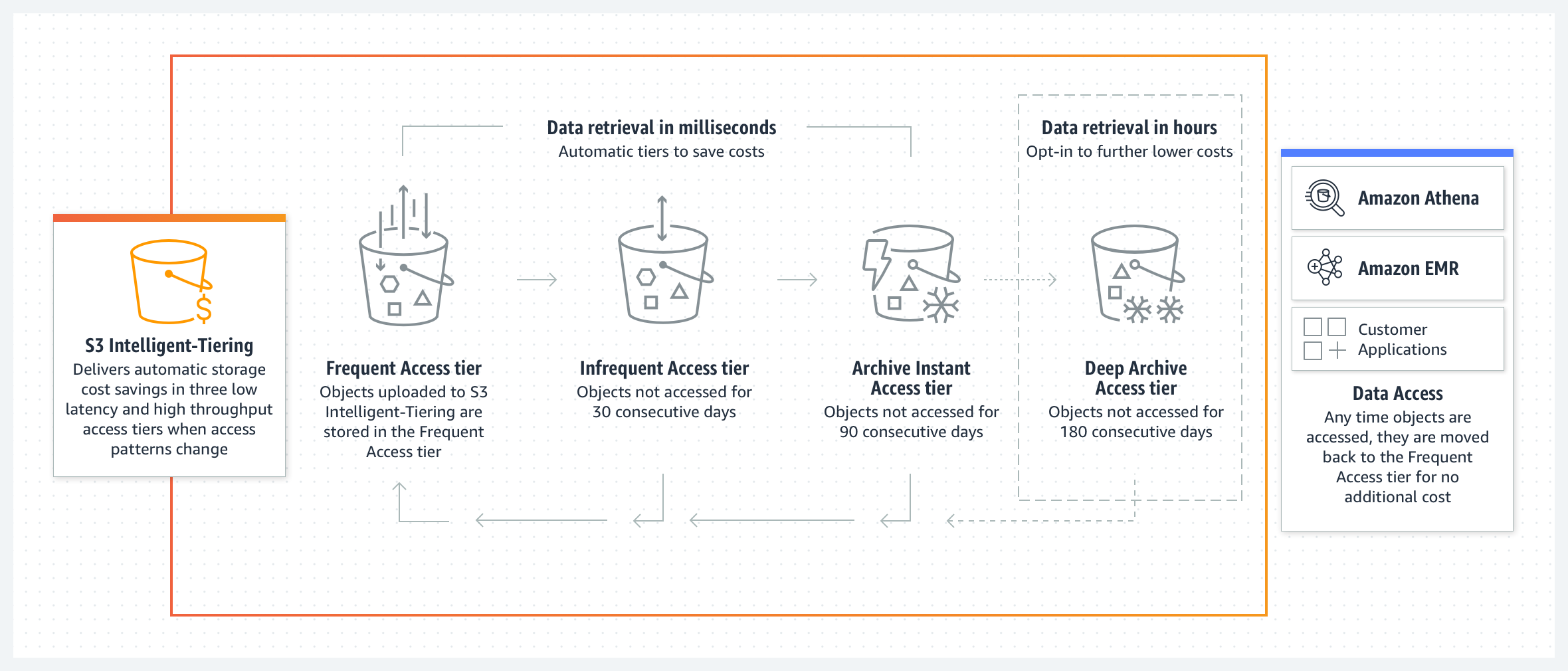

The Amazon S3 Intelligent-Tiering storage class is designed to optimize storage costs by automatically moving data to the most cost-effective access tier when access patterns change. By default, S3 Intelligent-Tiering moves objects that have not been accessed for 30 consecutive days to the Infrequent Access tier for savings of 40%, and after 90 days without access it moves them to the Archive Instant Access tier for savings of 68%. To save more on data that does not require immediate retrieval, you can activate the optional asynchronous Deep Archive Access tier. When it is turned on, objects that have not been accessed for 180 days are moved to the Deep Archive Access tier for storage cost savings of up to 95%. If the objects are accessed later, S3 Intelligent-Tiering moves them back to the Frequent Access tier. If the object you want to retrieve is stored in the optional Deep Archive Access tier, you must first restore it using RestoreObject before you can retrieve it. For instructions, see Restoring archived objects.
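Opting in to the asynchronous archive tiers is done per bucket with an S3 Intelligent-Tiering configuration. The sketch below builds the configuration document in the shape accepted by boto3's `put_bucket_intelligent_tiering_configuration` and applies it through a client that the caller supplies. The helper names, the configuration ID, and the default of 180 days are assumptions for illustration; check the current API reference for the allowed ranges before relying on specific values.

```python
from typing import Optional


def build_archive_tiering_config(
    config_id: str,
    deep_archive_days: Optional[int] = 180,
    archive_days: Optional[int] = None,
    prefix: Optional[str] = None,
) -> dict:
    """Build an Intelligent-Tiering configuration that opts in to archive tiers."""
    tierings = []
    if archive_days is not None:
        tierings.append({"Days": archive_days, "AccessTier": "ARCHIVE_ACCESS"})
    if deep_archive_days is not None:
        tierings.append(
            {"Days": deep_archive_days, "AccessTier": "DEEP_ARCHIVE_ACCESS"}
        )
    if not tierings:
        raise ValueError("enable at least one archive tier")
    config = {"Id": config_id, "Status": "Enabled", "Tierings": tierings}
    if prefix:
        # Limit the configuration to objects under a key prefix.
        config["Filter"] = {"Prefix": prefix}
    return config


def enable_archive_tiers(s3_client, bucket: str, config: dict) -> None:
    """Apply the configuration; s3_client is expected to be boto3.client("s3")."""
    s3_client.put_bucket_intelligent_tiering_configuration(
        Bucket=bucket,
        Id=config["Id"],
        IntelligentTieringConfiguration=config,
    )
```

Keeping the document builder separate from the API call makes the configuration easy to inspect or unit test without AWS credentials.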


The Amazon S3 Intelligent-Tiering storage class is designed to optimize storage costs by automatically moving data to the most cost-effective access tier when access patterns change. By default, S3 Intelligent-Tiering moves objects that have not been accessed for 30 consecutive days to the Infrequent Access tier for savings of 40%, and after 90 days without access it moves them to the Archive Instant Access tier for savings of 68%. To save more on data that does not require immediate retrieval, you can activate the optional asynchronous Archive Access and Deep Archive Access tiers. When both are turned on, objects that have not been accessed for 90 days are moved directly to the Archive Access tier for savings of 71% (bypassing the Archive Instant Access tier), and after 180 days they are moved to the Deep Archive Access tier for storage cost savings of up to 95%. If the objects are accessed later, S3 Intelligent-Tiering moves them back to the Frequent Access tier. If the object you want to retrieve is stored in the optional Archive Access or Deep Archive Access tier, you must first restore it using RestoreObject before you can retrieve it. For instructions, see Restoring archived objects.
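Because objects in the opt-in archive tiers must be restored before they can be read, a reader typically checks the archive status first. The sketch below assumes a boto3 S3 client passed in by the caller; it uses the `ArchiveStatus` field returned by `head_object` and sends `restore_object` with an empty `RestoreRequest`, which is an assumption about the minimal request for S3 Intelligent-Tiering objects. The helper name `restore_if_archived` is hypothetical, and error handling (for example, a restore that is already in progress) is left out.

```python
# Archive statuses that require a RestoreObject call before a GET succeeds.
ASYNC_ARCHIVE_STATUSES = {"ARCHIVE_ACCESS", "DEEP_ARCHIVE_ACCESS"}


def restore_if_archived(s3_client, bucket: str, key: str) -> bool:
    """Start a restore when the object sits in an asynchronous archive tier.

    Returns True if a restore request was sent, False if the object is
    already in a tier that can be read immediately.
    """
    head = s3_client.head_object(Bucket=bucket, Key=key)
    if head.get("ArchiveStatus") not in ASYNC_ARCHIVE_STATUSES:
        return False
    # Assumption: an empty RestoreRequest is enough for Intelligent-Tiering
    # objects, since no temporary-copy lifetime applies to them.
    s3_client.restore_object(Bucket=bucket, Key=key, RestoreRequest={})
    return True
```

The restore itself is asynchronous, so a caller would poll `head_object` (or use event notifications) before attempting to read the object.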


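Shutterstock

Founded in 2003, Shutterstock is a leading global creative platform for transformative brands and media companies. Thanks to a community of more than 2 million contributors, the Shutterstock catalog keeps growing and now offers more than 450 million images and more than 25 million videos.

Blog: Shutterstock transforms IT and saves 60% on storage costs with Amazon S3

re:Invent session: Shutterstock’s cloud storage revolution with Amazon S3

How Shutterstock implements forecasting to ensure that cost savings is reinvested into the business

"Because we saved up to 60% on some buckets with S3 Intelligent-Tiering, we were able to reinvest further in our storage infrastructure and replicate our storage environment to a second AWS Region. Within a short time, there were several major improvements that increased performance and lowered the cost of Amazon S3. None of them required the large-scale re-architecture we would have faced on premises. With S3's continued innovation and performance improvements, growth in access rates on our buckets is simply handled for us. Fortunately, many of the companies we recently acquired also use Amazon S3, so we could integrate them cleanly with our existing architecture and have productive business innovation conversations with our new colleagues. This has also shifted the team's focus from storage resource planning to true innovation."

Chris S. Borys, Shutterstock Team Manager, Cloud Storage Services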

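Illumina

Illumina is a leading developer, manufacturer, and marketer of life science tools and systems for large-scale genetic analysis. Founded in 1998, Illumina offers a broad range of software, instruments, and services that help customers analyze genomes, rapidly advance life science research, and improve human health. Illumina's customers use its gene sequencing solutions to accelerate therapeutic and pharmaceutical insights.

Within just three months of using S3 Intelligent-Tiering, Illumina began to see significant monthly savings, and the company now saves 60% on storage costs per 1 TB of data. "It's probably the greatest return on investment we've ever experienced," says Al Maynard. Illumina's customers can also access thousands of whole genome sequences almost instantly at a low, competitive cost, which accelerates their research and development.

Al Maynard, Illumina Director of Software Engineering

Case study: Illumina reduced carbon emissions by 89% and lowered data storage costs using AWS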

Torc Robotics

Torc Robotics, a global leader and pioneer in trucking, provides fully autonomous vehicle software and integrated solutions, and is currently focused on commercializing self-driving trucks. As Torc Robotics grew rapidly, its S3 storage quickly expanded to petabytes of data in S3 buckets that the Vehicle Data Acquisition team wanted to optimize. With S3 Intelligent-Tiering, Torc Robotics realizes automatic storage cost savings of 24% every month without affecting application performance or adding development work.

Blog: How Torc Robotics reduces storage costs with S3 Intelligent-Tiering

re:Invent session: Better, faster, and lower-cost storage: Optimizing Amazon S3

Torc Robotics gets better, faster, and lower-cost storage with S3

"We made it a priority to optimize our Amazon S3 usage to support future growth. But every bucket was a black box, so we needed a safe solution that could support all of Torc Robotics without affecting performance. S3 Intelligent-Tiering was the 'easy button', and as a result we could move at the speed we needed without adding development cycles."

Justin Brown, Torc Robotics Head of Vehicle Data Acquisition

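Electronic Arts

Electronic Arts (EA) is a global leader in digital interactive entertainment. EA makes games for more than 450 million players on console, PC, and mobile, including top franchises such as FIFA, Madden, and Battlefield. EA moved from a Hadoop-dominated environment to one centered on a data lake built on AWS cloud storage in Amazon S3, which includes S3 Glacier Flexible Retrieval for data archiving and long-term backup. To support its popular games, the core telemetry system routinely handles tens of petabytes, tens of thousands of tables, and more than 2 billion objects. EA used S3 Intelligent-Tiering to optimize storage costs for the data lake, where access patterns change.

"For data with unpredictable access patterns, S3 Intelligent-Tiering let us save 30% on storage costs with little or no change to our existing tools. This allowed the data infrastructure team to focus on its core competencies around game launches. By working with AWS, we have been able to focus more on growth and customer satisfaction while continuing to inspire the world with our passion for games."

Sundeep Narravula, EA Principal Technical Director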

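Stripe

Stripe is a technology company that builds economic infrastructure for the internet. Businesses of every size, from new startups to public companies, use Stripe software to accept payments and manage their businesses online.

"Since the launch of S3 Intelligent-Tiering in 2018, we have automatically saved up to 30% per month on storage costs without any impact on performance or any need to analyze our data. With the new Archive Instant Access tier, we expect to automatically realize the benefit of archive storage pricing while retaining the ability to access our data instantly when needed."

Kalyana Chadalavada, Stripe Head of Efficiency

re:Invent session: Modernize your data archive with Amazon S3, featuring Stripe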

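GRAIL

GRAIL is a healthcare company focused on saving lives and improving health by pioneering new technologies for early cancer detection. GRAIL has built a multidisciplinary organization of scientists, engineers, and physicians, and it uses the power of next-generation sequencing, population-scale clinical studies, and state-of-the-art computer science and data science to take on one of medicine's greatest challenges.

"We were able to save 40% on storage costs per gigabyte by moving most of our data to S3 Intelligent-Tiering."

Olga Ignatova, GRAIL Director of Software Development

Case study: GRAIL develops a pioneering multicancer early detection test using AWS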

CineSend

CineSend is a leading provider of cloud-based media asset management tools for the film and television industry. CineSend offers a portfolio of turnkey and custom software solutions that let studios, independent producers, and film distributors manage premium media content delivery workflows.

"S3 Intelligent-Tiering lets us use a 'set-it-and-forget-it' model for our stored media content. Knowing that frequently and infrequently used files are in the appropriate storage tier and that costs are kept at an efficient minimum, our team can focus on delivering secure video content around the world with cutting-edge technology."

D’Arcy Rail-Ip, CineSend VP Technology

Blog: How CineSend manages their media content using S3 Intelligent-Tiering

Zalando

Founded in 2008, Zalando is Europe's leading online platform for fashion and lifestyle, with more than 32 million active customers. Amazon S3 is the cornerstone of Zalando's data infrastructure, and Zalando has used S3 storage classes to optimize its storage costs.

"Amazon S3 Intelligent-Tiering saved us 37% in annual storage costs by automatically moving objects that had not been accessed for 30 days to the Infrequent Access tier."

Max Schultze, Zalando Lead Data Engineer

Blog: How Zalando built its data lake on Amazon S3

Amazon Photos

Amazon Photos offers unlimited photo storage and 5 GB of video storage to Amazon Prime members in eight marketplaces worldwide. Customers back up, relive, and share their memories in the Amazon Photos mobile, web, and desktop apps, and enjoy them again on Amazon smart screen devices such as Amazon Echo Show and Amazon Fire TV.

"We have used Amazon S3 ever since Amazon Photos launched. S3 Standard storage scaled with our business, but we were challenged to optimize the cost and performance of an ever-growing volume of data. With the launch of S3 Intelligent-Tiering in 2018 and the recent addition of the S3 Intelligent-Tiering Archive Instant Access tier, the Amazon Photos team was able to adopt an AWS solution right away with little or no change to our existing services, and we saved more than 10% on storage costs in the process."

Arun Kumar Agarwal, Amazon Photos Software Development Manager, and Stacie Buckingham, Amazon Photos Senior Software Development Manager

Blog: How Amazon Photos uses Amazon S3 Intelligent-Tiering to significantly reduce storage costs

Teespring

Teespring is an online platform that lets creators turn their unique ideas into custom merchandise. As the company grew quickly, its data grew exponentially to petabyte scale, and that growth continues today. Like many other cloud-native companies, Teespring turned to AWS to address the problem, and storing its data in Amazon S3 proved especially effective.

With S3 Intelligent-Tiering, Teespring now saves more than 30% on its monthly storage costs.

Blog: Teespring controls its legacy data using Amazon S3

Joyn

Joyn GmbH, a German live streaming service and a joint venture between ProSiebenSat.1 and Discovery, draws on a rich content repository to offer subscribers exclusive hyperlocal series and classic films. To make this possible, Joyn recently used 40 AWS Snowball appliances to transfer more than 3 petabytes (PB) of media archives from its on-premises facility to Amazon S3 in less than three months.

With Amazon S3 Intelligent-Tiering, Joyn can keep all of its content online and automatically optimize storage as access patterns change, without performance impact or operational overhead. Frequently used archive content sits in the Frequent Access tier, and infrequently used content sits in the Infrequent Access tier.

"We used to have to choose which content to retrieve from Deep Archive and what to keep in the archive, but now we no longer have to think about it. With S3 Intelligent-Tiering, we were able to triple our storage volume at the same total cost of ownership (TCO). We no longer need to delete content to free up space, and it is great that rarely used content sits in the Infrequent Access or Archive tiers."

Stefan Haufe, Joyn Media Engineer

Blog: Joyn readies exclusive content for audiences with Amazon S3 Intelligent-Tiering and Amazon S3 Glacier

SimilarWeb

SimilarWeb uses AWS to manage large volumes of data, which its data scientists use to build algorithms that improve its market intelligence platform. With S3 Intelligent-Tiering, SimilarWeb can democratize that data for its employees and save 20% on storage costs.

Video: SimilarWeb saves 20% in storage costs with Amazon S3 Intelligent-Tiering

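AppsFlyer

AppsFlyer is a leading mobile advertising attribution and marketing analytics platform. AppsFlyer stores data for 100 billion events every day in a petabyte-scale data lake on Amazon S3. However, it had little visibility into whether objects older than 365 days would be accessed frequently in the future, which could result in unexpected retrieval costs. AppsFlyer needed a different solution and found it in S3 Intelligent-Tiering.

By making an informed decision to move its data to S3 Intelligent-Tiering, AppsFlyer was able to save 18% per GB stored. These savings matter to the company because they improve the bottom line and make it possible to invest in new workloads.

"With S3 Intelligent-Tiering, we get better data usability and cost efficiency whenever we need to apply changes to our historical data."

Reshef Mann, AppsFlyer CTO and Co-founder

Blog: How AppsFlyer reduced its data lake cost with Amazon S3 Intelligent-Tiering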

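Capital One

Since 1994, Capital One has disrupted the financial services industry by using technology to transform banking and payments. Today, this "digital bank" is all in on AWS and continues to innovate with storage, data analytics, microservices, AI/ML, and other solutions.

"We wanted a way to quickly optimize storage costs for very large and fast-growing buckets across the enterprise. Because storage usage patterns vary widely across our top buckets, there was no clear rule we could safely apply without taking on operational overhead. The S3 Intelligent-Tiering storage class delivered automatic storage savings based on changing data access patterns, with no impact on performance. We look forward to the new Archive Instant Access tier in S3 Intelligent-Tiering, which will let us realize even greater savings without additional effort."

Jerzy Grzywinski, Capital One Director of Software Engineering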

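Mobileye

Mobileye is leading the mobility revolution with autonomous driving and driver-assistance technologies, drawing on world-renowned expertise in computer vision, machine learning, mapping, and data analysis.

"We use Amazon S3 Intelligent-Tiering because our access patterns are often unpredictable. With the new S3 Intelligent-Tiering Archive Instant Access tier, we can further reduce costs on petabytes of autonomous vehicle data while our developers keep immediate access to it."

Yanor Barros, Mobileye Director of Cloud Infrastructure Development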

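Epic Games

Epic Games is the interactive entertainment company behind Fortnite, one of the world's most popular video games with more than 400 million players. Founded in 1991, Epic transformed gaming with the release of Unreal Engine, the 3D creation engine that powers hundreds of games and is now also used for real-time production across industries such as automotive, film and television, and simulation.

"With S3 Intelligent-Tiering, we can implement storage changes without disrupting services and activity. Data moves automatically to lower-cost tiers based on data access, which saves development time and money. Over time, our team can focus on finding other opportunities to reduce infrastructure costs in support of our organizational goals. The new Archive Instant Access tier in S3 Intelligent-Tiering will help us reduce storage costs even further."

Joshua Bergen, Epic Games Cost Management Lead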

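Embark

Embark is building self-driving truck technology to make roads safer and transportation more efficient. When the COVID-19 pandemic hit, Embark decided to suspend truck operations to meet its social responsibility for public health and keep its employees safe. Building on petabytes of historical data in Amazon S3, Embark developed systems that put that data to deeper use.

Engineers began mining thousands of hours of driving data from years of history to find scenarios of interest, and used this data to build powerful simulations for testing their systems. Because Embark stored all of its data in the S3 Intelligent-Tiering storage class, it could optimize costs without having to decide which data to make available, or how to move data between storage tiers, to support sudden, random data access patterns on its data lake.

S3 Intelligent-Tiering took care of all cost optimization work so that the team could focus all of its engineering effort on building better data pipelines and simulation systems. With help from AWS, the Embark team adapted quickly to the challenges of the pandemic and stayed focused on delivering the safety and efficiency benefits of self-driving trucks after truck operations resumed.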

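Resources

Getting started with S3 Intelligent-Tiering

You can configure S3 Intelligent-Tiering as the default storage class for newly created data by specifying the Intelligent-Tiering storage class in the S3 PUT API request header. Designed for 99.9% availability and 99.999999999% durability, S3 Intelligent-Tiering automatically offers the same low latency and high throughput as S3 Standard. To learn more, see the S3 Intelligent-Tiering user guide.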
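As a minimal sketch of the PUT request described above, the following uses the `StorageClass` parameter of boto3's `put_object`, which is sent as the `x-amz-storage-class` request header. The bucket and key names are placeholders, the helper names are made up for this example, and the client is expected to be created by the caller with `boto3.client("s3")`.

```python
def intelligent_tiering_put_params(bucket: str, key: str, body: bytes) -> dict:
    """Build put_object arguments that store the object in S3 Intelligent-Tiering."""
    return {
        "Bucket": bucket,
        "Key": key,
        "Body": body,
        # Sent to S3 as the x-amz-storage-class request header.
        "StorageClass": "INTELLIGENT_TIERING",
    }


def upload_with_intelligent_tiering(s3_client, bucket: str, key: str, body: bytes):
    """Upload one object; s3_client is expected to be boto3.client("s3")."""
    return s3_client.put_object(**intelligent_tiering_put_params(bucket, key, body))


# Example call (placeholder names, requires AWS credentials):
#   import boto3
#   upload_with_intelligent_tiering(
#       boto3.client("s3"), "example-bucket", "data/report.csv", b"a,b\n1,2\n"
#   )
```

The storage class is set per request, so an application that wants it as its default needs to pass the parameter on every upload path it uses.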

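Save costs by migrating your storage to Amazon S3 today

The AWS Migration Acceleration Program for Storage consists of AWS services, best practices, and tools that help customers save costs and migrate storage workloads to AWS quickly. You can use these services, best practices, tools, and incentives to reach your migration goals faster. Workloads such as on-premises data lakes, large unstructured data repositories, file shares, home directories, backups, and archives are well suited to storage migration.

AWS offers many ways to lower storage costs and many options for migrating data. This is why so many customers build the foundation of their cloud IT environment on AWS storage. Learn more about the Storage Migration Acceleration Program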