IBM & Red Hat on AWS

Cut costs, scale smarter with ROSA: Karpenter automates compute provisioning

By deploying applications on Red Hat OpenShift Service on AWS (ROSA), developers gain operational benefits through containerization, node auto-scaling, and elastic compute, but they can still end up with underutilized resources. To improve compute utilization and reduce costs for ROSA, Red Hat offers Red Hat build of Karpenter, a fully managed autoscaler based on the upstream Karpenter project.

Karpenter provisions right-sized Amazon Elastic Compute Cloud (Amazon EC2) instances just-in-time, based on the exact compute requirements of the deployed workloads rather than on statically defined instance types. It continuously reassesses those requirements, which reduces compute costs through cost-effective scale-up, efficient bin-packing, and timely scale-down.

This blog explains how Karpenter solves the compute capacity management challenges, then dives into how Red Hat build of Karpenter (currently in Technology Preview) brings these capabilities to ROSA with hosted control planes (HCP), and how you can use it with your clusters.

The Node Scaling Problem

Cluster administrators have long relied on the Kubernetes Cluster Autoscaler as well as manually configured machine pools to manage compute capacity. While functional, this approach does have limitations:

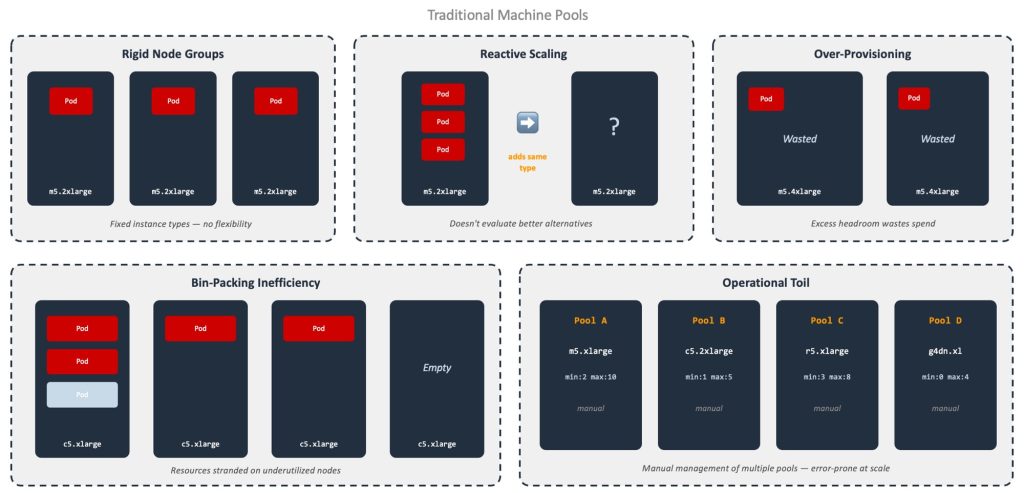

Figure 1: Visual representation of the traditional machine pool node scaling problem

- Rigid node groups with fixed instance types and sizes mean you are locked into a single instance type, such as m6i.8xlarge, per node group, even when your workloads have diverse requirements (CPU-intensive, memory-heavy, GPU, Arm-based).

- Reactive scaling means the Cluster Autoscaler adds more nodes of the same instance type when pods cannot be scheduled. It does not proactively evaluate whether a different instance type (family or size) would be a better fit.

- Over-provisioning is often used to give workloads headroom to expand, resulting in oversized machine pools and wasted spend.

- Bin-packing inefficiency arises when resources are stranded on partially utilized nodes that cannot be consolidated.

- Operational toil results from the manual, error-prone process of managing multiple machine pools with different instance types, sizes, market options, and scaling policies.

- Resource constraints occur if a specific instance type is not available at a given time, or if a compute quota is exceeded.

For organizations operating at scale across multiple environments and teams, these inefficiencies compound quickly.
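To make the stranded-capacity problem concrete, here is a minimal sketch that packs pods onto identical nodes with a first-fit-decreasing heuristic and reports what is left unused. The pod requests are hypothetical numbers chosen for illustration, and the packing heuristic is a simplification, not how the Kubernetes scheduler or the Cluster Autoscaler actually places pods. The only real-world value is the 32 vCPUs of an m6i.8xlarge.

```python
NODE_VCPU = 32  # vCPUs on one m6i.8xlarge, the example instance type named above


def pack_first_fit_decreasing(pod_cpu_requests, node_vcpu=NODE_VCPU):
    """Place pods on identical nodes; return the free vCPUs left on each node."""
    free = []  # remaining vCPUs per provisioned node
    for request in sorted(pod_cpu_requests, reverse=True):
        if request > node_vcpu:
            raise ValueError(f"a pod requesting {request} vCPU fits on no node")
        for i, remaining in enumerate(free):
            if request <= remaining:
                free[i] = remaining - request
                break
        else:
            # No existing node has room, so another node of the same size is added.
            free.append(node_vcpu - request)
    return free


def utilization(pod_cpu_requests, node_vcpu=NODE_VCPU):
    """Fraction of provisioned vCPUs that pods actually requested."""
    free = pack_first_fit_decreasing(pod_cpu_requests, node_vcpu)
    provisioned = len(free) * node_vcpu
    return sum(pod_cpu_requests) / provisioned if provisioned else 0.0


# Hypothetical workload: three pods, each requesting 20 vCPU.
pods = [20, 20, 20]
stranded = pack_first_fit_decreasing(pods)
print(f"nodes={len(stranded)} stranded_vcpu={stranded} utilization={utilization(pods):.1%}")
```

In this made-up case every pod lands on its own node and 12 vCPUs per node stay idle, because no other pod is small enough to fill the gap and the node size cannot change.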

The Solution: Karpenter

Karpenter, created by Amazon Web Services (AWS) and open-sourced in 2021, is a high-performance, Kubernetes-native node autoscaler designed to address the limitations of the traditional Kubernetes Cluster Autoscaler.

Unlike traditional autoscalers that operate on node groups, Karpenter works at the individual pod level. When pods can’t be scheduled, Karpenter evaluates their resource requests and scheduling constraints (e.g., CPU, memory, GPU, topology) and provisions the optimal Amazon EC2 instance to satisfy them. It does this by calling the EC2 CreateFleet API directly, selecting from over 400 instance types across multiple architectures (x86, Arm/Graviton), capacity types (On-Demand, Spot), and AWS Availability Zones.

The result is compute that is right-sized and requires no manual intervention.
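The core idea of pod-level selection can be sketched as a filter followed by a cost comparison. The snippet below is an illustration only: the catalog entries, their names, and their hourly prices are invented, and the real Karpenter logic also simulates scheduling and weighs availability, which this sketch does not attempt.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class InstanceType:
    name: str
    arch: str            # "amd64" or "arm64"
    vcpu: int
    memory_gib: int
    hourly_price: float  # invented number, not real AWS pricing


# Hypothetical catalog; a real one would hold hundreds of EC2 instance types.
CATALOG = [
    InstanceType("small-x86", "amd64", 4, 16, 0.20),
    InstanceType("large-x86", "amd64", 32, 128, 1.60),
    InstanceType("medium-arm", "arm64", 8, 32, 0.30),
    InstanceType("large-arm", "arm64", 16, 64, 0.60),
]


def cheapest_fit(cpu, memory_gib, allowed_archs, catalog=CATALOG) -> Optional[InstanceType]:
    """Return the lowest-priced type that satisfies the requests, or None."""
    candidates = [
        it for it in catalog
        if it.arch in allowed_archs and it.vcpu >= cpu and it.memory_gib >= memory_gib
    ]
    return min(candidates, key=lambda it: it.hourly_price, default=None)


# Pending pods that together request 6 vCPU and 24 GiB.
print(cheapest_fit(6, 24, {"amd64", "arm64"}))  # any architecture allowed
print(cheapest_fit(6, 24, {"amd64"}))           # x86 only
```

With both architectures allowed, the sketch picks the smaller Arm entry. Restricting the pods to x86 forces the much larger x86 entry, which shows why loosening constraints gives an autoscaler more room to right-size.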

Cost Savings with Karpenter

- Vertical Scaling: Instance types and sizes are continually right-sized to avoid over-provisioning of compute capacity throughout the day.

- Horizontal Scaling: The number of worker nodes scales up and down based on workload demand, increasing capacity and availability as applications need it.

- Market Optimization: Market conditions are monitored to determine when workloads should use On-Demand or Spot Instances, optimizing for cost without sacrificing availability. When you have reserved capacity, Karpenter prioritizes the capacity reservation while it is available and falls back to On-Demand once the reserved capacity is fully utilized.

- Operational Savings: By automating the sizing, monitoring, and adjusting of multiple node pools, platform teams are freed up to focus on other areas.
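The fallback behavior described under Market Optimization can be pictured as walking an ordered preference list. The following sketch assumes the order reserved, then Spot, then On-Demand; the article only states that reserved capacity is preferred over On-Demand, so the position of Spot is an assumption. The function name and the availability dictionary are hypothetical, not part of any Karpenter API.

```python
# Assumed preference order; only "reserved before on-demand" is stated in the text.
PRIORITY = ("reserved", "spot", "on-demand")


def choose_capacity_type(allowed, available, priority=PRIORITY):
    """Pick the first preferred capacity type that is allowed and has capacity.

    allowed:   set of capacity types the workload may use
    available: mapping of capacity type -> instances currently obtainable
    """
    for capacity_type in priority:
        if capacity_type in allowed and available.get(capacity_type, 0) > 0:
            return capacity_type
    return None  # nothing obtainable right now


everything = {"reserved", "spot", "on-demand"}

# Reservation still has room, so it is used first.
print(choose_capacity_type(everything, {"reserved": 2, "spot": 5, "on-demand": 100}))

# Reservation is exhausted and Spot is not allowed: fall back to On-Demand.
print(choose_capacity_type({"reserved", "on-demand"}, {"reserved": 0, "on-demand": 100}))
```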

How Karpenter Works

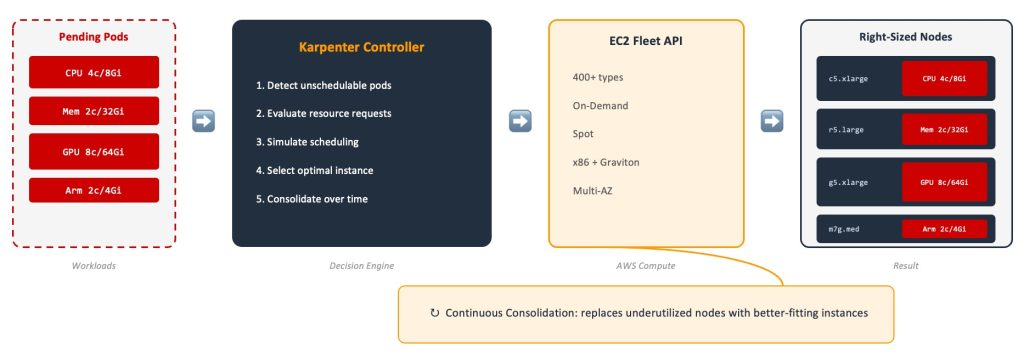

Figure 2: Details of the components and operation of Karpenter

The Karpenter architecture follows a straightforward flow:

- Pending pods trigger provisioning: When pods cannot be scheduled due to insufficient capacity, Karpenter detects them.

- Workload evaluation: Karpenter evaluates resource requests (CPU, memory, GPU) and scheduling constraints (topology, affinity, taints).

- Scheduling simulation: Karpenter simulates the Kubernetes scheduler across candidate instance types to find the best fit, not just the cheapest one.

- Optimal instance selection: Karpenter uses the EC2 CreateFleet API to provision the most cost-efficient instance which meets all constraints.

- Node joins, pods schedule: The newly provisioned node joins the cluster so that the pending pods are scheduled immediately.

Beyond provisioning, Karpenter continuously runs a consolidation loop that evaluates existing nodes for underutilization so that they can be replaced with better suited instances. Your cluster never stops optimizing, even after initial provisioning.
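One way to picture the consolidation check is to ask, for each node, whether its pods would fit into the free capacity of the remaining nodes. The sketch below does exactly that for CPU only. The `Node` class, the node names, and the numbers are hypothetical, and the real consolidation loop also considers replacement with cheaper instances, disruption controls, and scheduling constraints, none of which are modeled here.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    name: str
    vcpu: int
    pod_requests: list = field(default_factory=list)  # vCPU request per pod

    @property
    def free(self):
        return self.vcpu - sum(self.pod_requests)


def can_drain(candidate, others):
    """True if every pod on `candidate` fits into free capacity on `others`."""
    free = [node.free for node in others]
    for request in sorted(candidate.pod_requests, reverse=True):
        for i, remaining in enumerate(free):
            if request <= remaining:
                free[i] = remaining - request
                break
        else:
            return False  # this pod has nowhere to go
    return True


def removable_nodes(nodes):
    """Names of nodes that could be emptied, each evaluated on its own.

    Removing one node changes the free capacity seen by the others, so the
    check has to be repeated after every removal.
    """
    return [n.name for n in nodes if can_drain(n, [o for o in nodes if o is not n])]


cluster = [
    Node("a", 8, [7]),
    Node("b", 8, [4]),
    Node("c", 8, [2]),
]
print(removable_nodes(cluster))
```

Here node `a` is too full to be drained, while either `b` or `c` could be emptied onto the other, leaving two nodes doing the work of three.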

Red Hat build of Karpenter benefits

Controllers Hosted in the Control Plane

The Karpenter controllers are hosted and managed as part of the hosted control plane, not on your worker nodes. There are no extra pods to manage, no additional compute overhead, and no resource contention between Karpenter and your applications.

Enable on Existing Clusters

Karpenter can be enabled on existing ROSA HCP clusters once they are upgraded to the OpenShift version that supports it. No need to recreate your cluster to take advantage of Karpenter.

Independent Upgrades

You have full flexibility over upgrade scheduling. The hosted control plane, machine pools, and Karpenter nodes can be upgraded to an OpenShift version independently of each other, based on your requirements.

Coexistence with Cluster Autoscaler

You can use Cluster Autoscaler, Karpenter, and manual scaling in the same cluster. It is recommended to adopt pod placement techniques (taints/tolerations, labels/nodeSelectors) to target workloads according to your node management strategy. This enables a gradual migration path from self-managed to Karpenter-managed NodePools at your pace.
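The placement techniques mentioned above follow standard Kubernetes rules: a pod lands on a node only if the node's labels satisfy the pod's `nodeSelector` and the pod tolerates the node's `NoSchedule` and `NoExecute` taints. The sketch below models those rules with plain dictionaries in a simplified form. The label key `example.com/managed-by` and the taint key `example.com/karpenter` are hypothetical placeholders, not names used by ROSA or Karpenter.

```python
def toleration_matches(toleration, taint):
    """Simplified Kubernetes toleration matching for a single taint."""
    operator = toleration.get("operator", "Equal")
    if "key" in toleration and toleration["key"] != taint["key"]:
        return False
    if "key" not in toleration and operator != "Exists":
        return False  # an empty key is only valid with operator Exists
    if toleration.get("effect") and toleration["effect"] != taint["effect"]:
        return False
    if operator == "Exists":
        return True
    return toleration.get("value", "") == taint.get("value", "")


def pod_fits_node(pod, node):
    """True if nodeSelector matches and all blocking taints are tolerated."""
    labels = node.get("labels", {})
    selector_ok = all(labels.get(k) == v for k, v in pod.get("nodeSelector", {}).items())
    blocking = [t for t in node.get("taints", []) if t["effect"] in ("NoSchedule", "NoExecute")]
    taints_ok = all(
        any(toleration_matches(tol, taint) for tol in pod.get("tolerations", []))
        for taint in blocking
    )
    return selector_ok and taints_ok


# Hypothetical nodes: one managed by Karpenter, one from a machine pool.
karpenter_node = {
    "labels": {"example.com/managed-by": "karpenter"},
    "taints": [{"key": "example.com/karpenter", "value": "true", "effect": "NoSchedule"}],
}
machine_pool_node = {"labels": {"example.com/managed-by": "machine-pool"}, "taints": []}

# A workload opted in to Karpenter nodes, and one left on machine pools.
batch_pod = {
    "nodeSelector": {"example.com/managed-by": "karpenter"},
    "tolerations": [{"key": "example.com/karpenter", "operator": "Equal",
                     "value": "true", "effect": "NoSchedule"}],
}
legacy_pod = {}

for pod_name, pod in (("batch", batch_pod), ("legacy", legacy_pod)):
    for node_name, node in (("karpenter", karpenter_node), ("machine-pool", machine_pool_node)):
        print(pod_name, node_name, pod_fits_node(pod, node))
```

Tainting the Karpenter-managed nodes keeps unmigrated workloads on their machine pools, while the selector keeps opted-in workloads off them, so teams can move one workload at a time.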

Capacity Reservations and Capacity Blocks for ML

You can use reserved capacity, both On-Demand Capacity Reservations (ODCRs) and Amazon EC2 Capacity Blocks for ML, with Karpenter. This is critical for teams running machine learning training or applications with regulatory requirements that demand reserved compute capacity.

Configuring Kubelet and Tuning Nodes

For advanced use cases, take advantage of kubelet configurations and TuneD profiles to meet the needs of diverse workloads.

Cost Optimization and Sustainability

Karpenter does more than simplify operations; it also drives meaningful cost and sustainability outcomes. Karpenter evaluates over 400 EC2 instance types and dynamically provisions each node based on workload requirements, which avoids over-provisioned capacity. It automatically uses Spot Instances and available reserved capacity (On-Demand Capacity Reservations), with fallback to On-Demand, which optimizes for cost while maintaining availability. Underutilized nodes are continuously consolidated and replaced so that your cluster runs efficiently around the clock. Additionally, Arm-based AWS Graviton instances deliver better price-performance and lower energy consumption per unit, aligning with the Sustainability Pillar of the AWS Well-Architected Framework.

Enterprise Security and Compliance

Karpenter integrates seamlessly with your existing security posture. Red Hat provides the compliance foundation, including FIPS, SOC2, and FedRAMP, while Karpenter respects all Kubernetes scheduling constraints, taints, and tolerations. Amazon VPC subnet and security group configurations are managed through the EC2NodeClass, ensuring nodes launch only in approved network segments. OpenShift-managed AMI selection guarantees that only approved OS images are used for worker nodes. Finally, integration with AWS Identity and Access Management (AWS IAM) and AWS Security Token Service (AWS STS) provides least-privilege access for Karpenter controllers.

Conclusion

Karpenter delivers significant infrastructure cost savings through right-sizing, Spot optimization, and continuous consolidation, and it removes the operational burden of manual node scaling. Red Hat build of Karpenter will be generally available in the next minor release of Red Hat OpenShift in all AWS Regions where ROSA is generally available.

To learn more:

- Read this blog about optimizing costs with ROSA.

- Join BO1403 at Red Hat Summit, if you are attending in Atlanta.

- Get in touch with your Red Hat representative or Red Hat Support.

- Visit the ROSA web page.