AWS for Industries

AWS Cloud Connectivity Patterns for Financial Market Infrastructures

Financial institutions are migrating critical workloads to the cloud to improve scalability, resilience, and speed of innovation. Amazon Web Services (AWS) supports transaction processing, risk analytics, fraud detection, and data-driven services.

As organizations modernize, a key challenge remains – securely connecting cloud-based workloads to Financial Market Infrastructures (FMIs). FMIs operate in highly regulated environments with strict network requirements and minimal tolerance for disruption.

Traditionally, connectivity to FMIs has relied on dedicated private circuits, often MPLS-based, provisioned through telecommunications providers and terminated on customer-managed infrastructure. This model requires deploying and maintaining on-premises networking equipment such as routers, firewalls, and switches in data centers or co-location facilities. While well understood, it often introduces long provisioning timelines and significant operational overhead.

Cloud-based connectivity models can help reduce this complexity. By using managed services and cloud-native architectures, organizations can accelerate onboarding, simplify operations, and establish private connectivity without traversing the public internet.

In this post, we introduce four connectivity patterns that represent different approaches to integrating AWS-based workloads with FMIs. These patterns span from customer-managed infrastructure to fully cloud-native architectures. You’ll learn how to evaluate each approach based on your organization’s requirements and how to evolve your connectivity strategy over time using AWS services.

Prerequisites

Before proceeding, we assume that you’re familiar with AWS networking services such as Amazon Virtual Private Cloud (Amazon VPC), AWS Direct Connect, AWS Transit Gateway, AWS Network Firewall, AWS PrivateLink, and AWS VPC Resources.

Solution overview

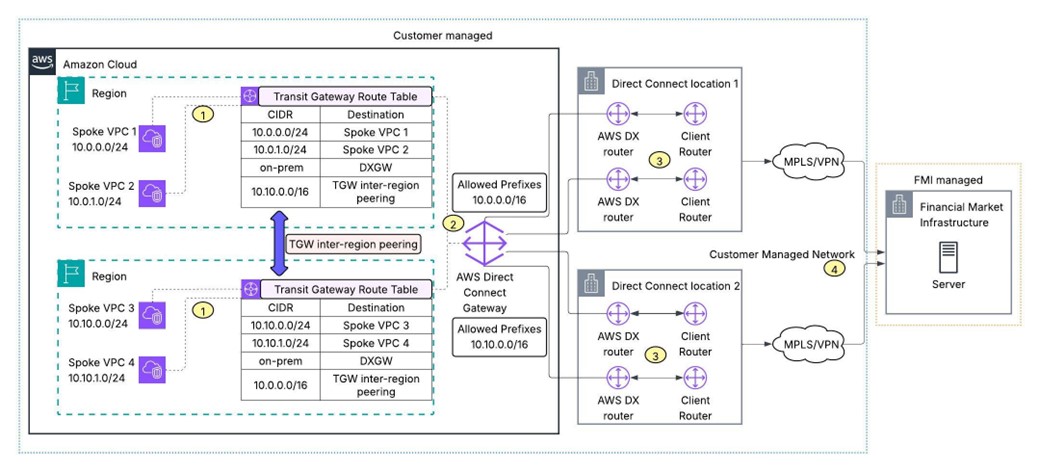

Pattern 1 – Customer-managed routers with AWS Direct Connect

The customer manages physical routers provided by the FMI, connects them to AWS via AWS Direct Connect, and uses Transit Gateway to distribute connectivity across a multi-region AWS footprint.

Figure 1: AWS Direct Connect architecture showing customer-managed routers connecting to Transit Gateway via Direct Connect Gateway

The architecture works as follows:

1) Customer workloads are hosted across Spoke VPCs associated with a Transit Gateway, which acts as a centralized routing hub. Transit Gateway inter-region peering enables cross-region communication between spoke VPCs, with route tables in each region maintaining paths for spoke attachments.

2) Transit Virtual Interfaces (Transit VIFs) are provisioned over AWS Direct Connect dedicated connections to a Direct Connect Gateway – a globally available resource that can associate with Transit Gateways across multiple AWS Regions, enabling a single Direct Connect connection to bridge on-premises infrastructure with multi-region workloads.

3) To achieve the maximum resiliency SLA, AWS Direct Connect dedicated connections are deployed as redundant physical connections across multiple independent Direct Connect locations, so that there is no single point of failure at the device, connection, or facility level.

4) Connectivity between the customer-managed routers at the Direct Connect co-location facilities and the FMI servers is established over the customer’s managed network using private connectivity options such as MPLS circuits or IPsec VPNs, keeping this segment entirely under the customer’s operational control.

Use this pattern if you have physical presence at co-location facilities and want full operational control over your routing infrastructure. While this approach carries higher operational overhead (managing physical routers, MPLS circuits, and Direct Connect connections), it offers maximum control over the end-to-end network path for latency-sensitive financial transactions. The responsibility boundary in this model lies at the Direct Connect demarcation point, with everything beyond it falling under the customer’s operational purview.
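The redundancy requirement in step 3 can be checked against a connection inventory. The following is a minimal sketch, assuming a simplified model in which each location needs at least two connections on distinct AWS devices and at least two locations are in use. The `Connection` class, the location codes, and the device names are hypothetical and are not Direct Connect API objects.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Connection:
    """One dedicated connection; all values used below are hypothetical."""
    connection_id: str
    location: str    # Direct Connect location code
    aws_device: str  # AWS device the connection terminates on


def resiliency_gaps(connections):
    """Return readable gaps; an empty list means this simplified model
    found no single location or device acting as a point of failure."""
    gaps = []
    devices_by_location = defaultdict(set)
    for conn in connections:
        devices_by_location[conn.location].add(conn.aws_device)

    if len(devices_by_location) < 2:
        gaps.append("connections terminate in fewer than two locations")
    for location, devices in sorted(devices_by_location.items()):
        if len(devices) < 2:
            gaps.append(f"{location}: fewer than two distinct devices")
    return gaps


inventory = [
    Connection("dxcon-a1", "LOC1", "dev-1"),
    Connection("dxcon-a2", "LOC1", "dev-2"),
    Connection("dxcon-b1", "LOC2", "dev-3"),
]
for gap in resiliency_gaps(inventory):
    print(gap)  # prints: LOC2: fewer than two distinct devices
```

A check like this could run as part of a periodic audit, with the inventory populated from your own records of ordered connections.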

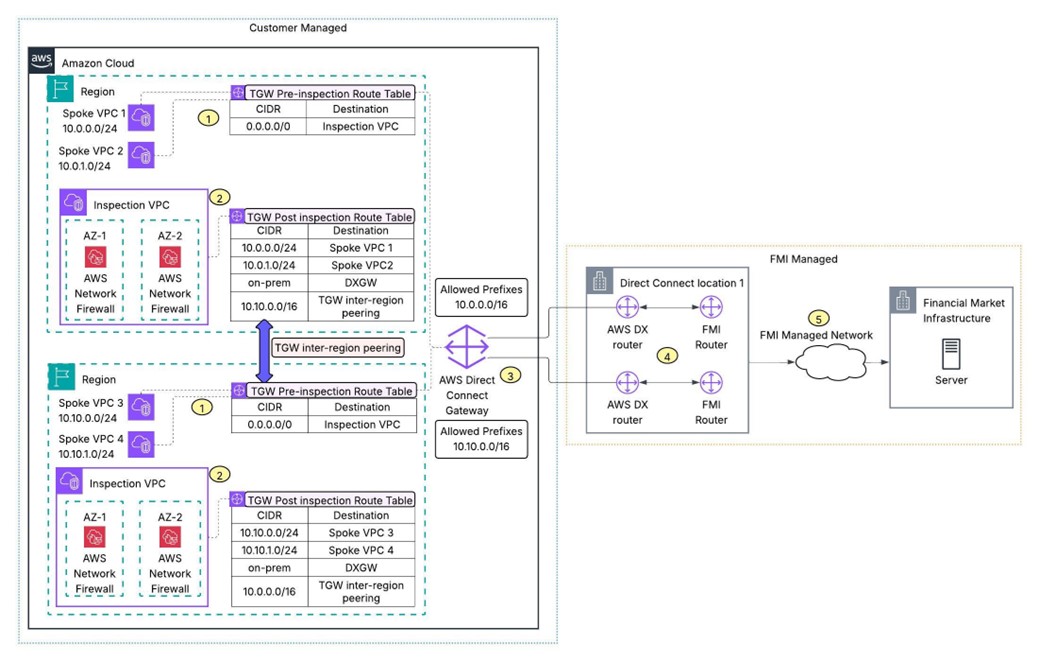

Pattern 2 – FMI-managed routers with AWS Direct Connect hosted connection

The FMI manages the routing infrastructure and offers an AWS Direct Connect hosted connection in collaboration with an AWS Direct Connect Delivery Partner, reducing the customer’s hardware burden while maintaining private, dedicated connectivity.

Figure 2: FMI-managed hosted connection architecture with AWS Network Firewall inspection and Transit Gateway routing

Here’s how traffic flows:

1) Customer workloads are hosted across Spoke VPCs associated with Transit Gateway, with a pre-inspection route table directing traffic (0.0.0.0/0) to the Inspection VPC before it reaches its destination. Transit Gateway inter-region peering enables cross-region communication between workloads spanning multiple AWS Regions.

2) AWS Network Firewall deployed across multiple Availability Zones within an Inspection VPC inspects traffic flowing between Spoke VPCs and the FMI – with post-inspection route tables directing inspected traffic to the appropriate destination attachment.

3) A Direct Connect Gateway, associated with Transit Gateways across multiple AWS Regions, provides hybrid connectivity over AWS Direct Connect hosted connections provisioned through the FMI, eliminating the need for the customer to procure and manage their own Direct Connect dedicated connections.

4) The FMI owns and manages the physical routers at the AWS Direct Connect co-location, removing the customer’s need to maintain networking hardware at co-location facilities – reducing both capital expenditure and operational overhead.

5) End-to-end connectivity from the Direct Connect location to the FMI servers is established over the FMI’s own managed network, with the FMI retaining full control over routing, security, and transport within their infrastructure.

Use this pattern to minimize physical infrastructure and operational overhead with FMI-managed routing equipment and AWS Direct Connect hosted connections. The customer focuses on managing workloads within AWS and establishing BGP peering over Transit VIFs on hosted connections provided by the FMI, while AWS Network Firewall provides centralized traffic inspection between the AWS environment and the FMI.
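The pre-inspection and post-inspection route tables described above can be modeled as two longest-prefix-match lookups. This is a minimal sketch of the routing logic only, not Transit Gateway configuration. The attachment names and CIDR ranges are hypothetical, and it assumes the pre-inspection table is consulted for traffic entering from a spoke and the post-inspection table for traffic leaving the Inspection VPC.

```python
import ipaddress

# Hypothetical attachment names and CIDRs, chosen only for illustration.
PRE_INSPECTION = {"0.0.0.0/0": "inspection-vpc-attachment"}
POST_INSPECTION = {
    "10.1.0.0/16": "spoke-a-attachment",
    "10.2.0.0/16": "spoke-b-attachment",
    "192.0.2.0/24": "dxgw-attachment",  # assumed FMI prefix behind Direct Connect
}


def lookup(route_table, destination):
    """Return the target of the most specific matching route, or None."""
    addr = ipaddress.ip_address(destination)
    matches = [
        (ipaddress.ip_network(cidr), target)
        for cidr, target in route_table.items()
        if addr in ipaddress.ip_network(cidr)
    ]
    if not matches:
        return None
    return max(matches, key=lambda match: match[0].prefixlen)[1]


def path(destination):
    """Attachments a packet from a spoke is forwarded to, in order:
    first toward inspection, then toward its real destination."""
    return [lookup(PRE_INSPECTION, destination),
            lookup(POST_INSPECTION, destination)]


print(path("192.0.2.10"))  # ['inspection-vpc-attachment', 'dxgw-attachment']
print(path("10.2.5.5"))    # ['inspection-vpc-attachment', 'spoke-b-attachment']
```

Because the pre-inspection table holds only a default route, every flow reaches the firewall first; the post-inspection table then carries the specific prefixes, and a destination with no matching route there is dropped.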

Moving from Pattern 1 to Pattern 2 eliminates the most operationally expensive element of FMI connectivity: the physical router. In Pattern 1, customers are responsible for housing, powering, patching, and maintaining FMI-provisioned routers in co-location facilities, a model that requires dedicated network engineering headcount, hardware refresh cycles, and co-location contracts. Pattern 2 transfers that burden entirely to the FMI.

The trade-off for this pattern is that the customer cedes some control over the physical network path. For institutions with strict requirements around hardware sovereignty or specific routing policies, Pattern 1 may remain preferable. For the majority, Pattern 2 represents a clear operational upgrade.

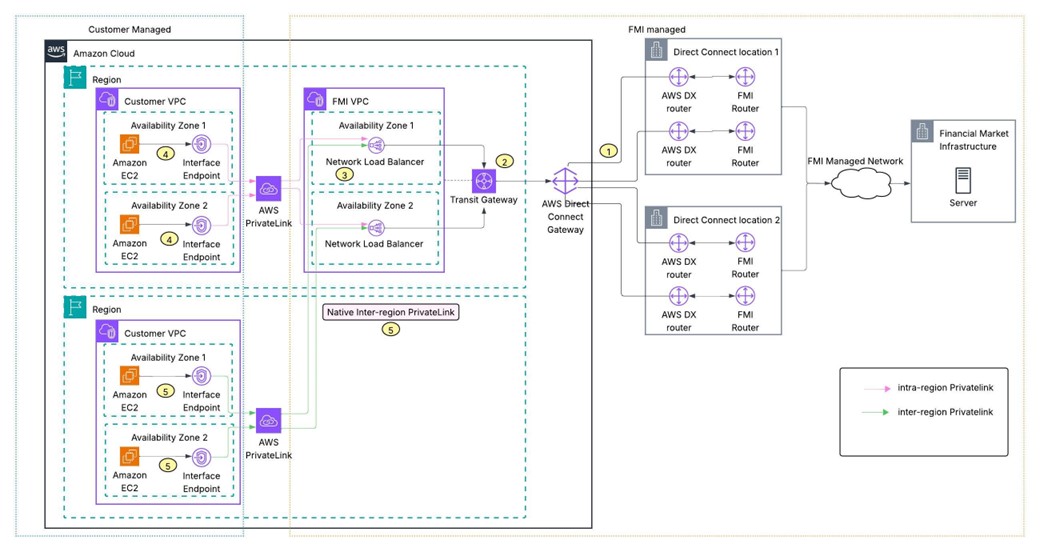

Pattern 3 – FMI connectivity with AWS PrivateLink

In this pattern, the FMI exposes services via AWS PrivateLink, enabling customers to connect through VPC endpoints without traversing the public internet. This cloud-native model simplifies network management and enhances security.

Figure 3: AWS PrivateLink endpoint service architecture enabling customer VPCs to connect to FMI services without hybrid connectivity

This pattern includes these components:

1) The FMI manages AWS Direct Connect dedicated connections deployed using redundant physical connections across multiple independent Direct Connect locations, establishing reliable hybrid connectivity between their AWS environment and on-premises data centers.

2) The FMI VPC is attached to a Transit Gateway, which connects to a Direct Connect Gateway to facilitate hybrid connectivity – with the FMI’s on-premises servers registered as targets behind Network Load Balancers within the FMI VPC.

3) Network Load Balancers deployed across multiple Availability Zones in the FMI VPC are registered as VPC endpoint services, enabling the FMI to expose their on-premises services to customers in a controlled, scalable manner. Separate Network Load Balancers registered as VPC endpoint services are used to isolate connectivity per customer. FMIs can control which principals are able to create VPC endpoints to an endpoint service by adding them to that service’s list of allowed principals.

4) Customers in the same AWS Region as the FMI VPC access the FMI services by creating Interface Endpoints within their VPCs, which connect to the FMI’s endpoint service over AWS PrivateLink – keeping traffic on the AWS private network without requiring hybrid connectivity infrastructure on the customer side.

5) Customers in different AWS Regions use native inter-region PrivateLink support to access the FMI’s endpoint service, enabling cross-region consumption of FMI services through Interface Endpoints in their VPCs without needing Transit Gateway inter-region peering or additional networking constructs.

This approach eliminates the need to manage hybrid connectivity constructs like Transit Gateway, Direct Connect Gateway, or Transit Virtual Interfaces. The customer only needs to create an Interface Endpoint toward the endpoint service hosted by the FMI. There are no VPC route table configurations to manage, no BGP peering to establish, and no physical infrastructure to maintain, which reduces the network management overhead. The connectivity is unidirectional: it can only be initiated by the customer toward the FMI, and it is scoped strictly to the resources the FMI has registered behind the VPC endpoint service. This pattern natively supports overlapping CIDRs, so customers do not need to worry about IP address conflicts with the FMI’s address space. AWS PrivateLink also supports native inter-region connectivity, allowing FMIs to provide services to customers operating in different AWS Regions without requiring additional networking constructs like VPC or Transit Gateway inter-region peering.
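On the customer side, the whole setup reduces to one endpoint creation request. The sketch below only builds the request parameters and does not call AWS. The parameter names follow the EC2 `CreateVpcEndpoint` API as we understand it, including `ServiceRegion` for a service hosted in another Region, and should be verified against the current API reference. Every identifier value shown is a hypothetical placeholder.

```python
def interface_endpoint_request(vpc_id, subnet_ids, security_group_ids,
                               service_name, service_region=None):
    """Build keyword arguments for an interface endpoint toward the
    FMI's endpoint service. Nothing is sent to AWS by this function."""
    request = {
        "VpcEndpointType": "Interface",
        "VpcId": vpc_id,
        "SubnetIds": list(subnet_ids),
        "SecurityGroupIds": list(security_group_ids),
        "ServiceName": service_name,
    }
    # Only needed when the endpoint service lives in a different Region.
    if service_region is not None:
        request["ServiceRegion"] = service_region
    return request


# Hypothetical identifiers for illustration only.
request = interface_endpoint_request(
    vpc_id="vpc-0123456789abcdef0",
    subnet_ids=["subnet-0aaa", "subnet-0bbb"],
    security_group_ids=["sg-0ccc"],
    service_name="com.amazonaws.vpce.eu-west-1.vpce-svc-0123456789abcdef0",
    service_region="eu-west-1",
)
print(sorted(request))
```

With boto3 installed and credentials configured, a dictionary like this could be passed as `boto3.client("ec2").create_vpc_endpoint(**request)`. The FMI would still need to have allowed your principal, and possibly accept the connection, before the endpoint becomes available.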

However, the trade-off of this pattern is that it works only if the FMI has built and published an AWS PrivateLink endpoint service backed by hybrid connectivity to their on-premises infrastructure. The unidirectional connectivity model also means this pattern does not fit use cases where the FMI needs to initiate connections back to the customer.

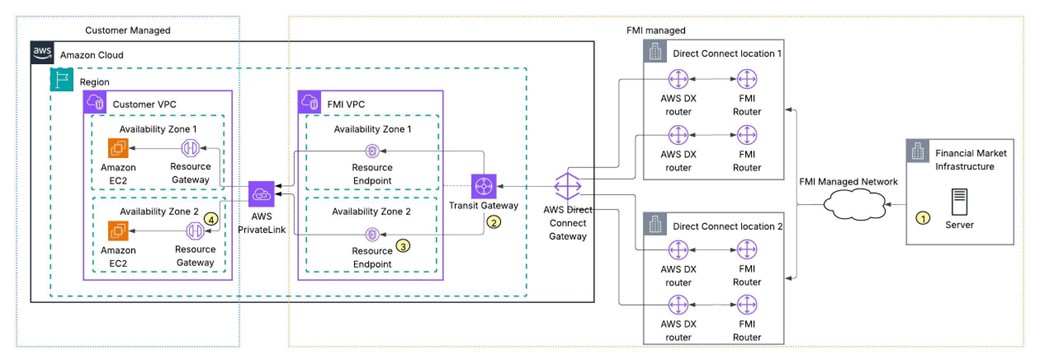

Pattern 4 – AWS VPC resources for FMI-initiated connectivity

In this pattern, the customer provisions an AWS Resource Gateway, enabling FMIs to initiate connectivity into the customer’s AWS environment. This model is suited for scenarios where the FMI needs to pull data from the participant.

Figure 4: AWS VPC Resource Gateway architecture enabling FMI-initiated connectivity to customer resources over PrivateLink

The connection process involves:

1) The FMI manages AWS Direct Connect connections deployed using redundant physical connections across multiple Direct Connect locations, with hybrid connectivity from the FMI VPC traversing the FMI’s own managed network to reach on-premises servers.

2) The FMI VPC is attached to a Transit Gateway connected to a Direct Connect Gateway, establishing hybrid connectivity – while also hosting Resource Endpoints across multiple Availability Zones that enable the FMI to reach customer resources over PrivateLink.

3) Resource endpoints are associated with the Resource Gateway shared by the customer, providing the FMI a private, scoped path to the specific resources the customer has registered.

4) Customers deploy Resource Gateways in their Customer VPC and register specific resources by IP address, DNS name, or ARN – making them accessible to the FMI over AWS PrivateLink without needing to set up Network Load Balancers, endpoint services, or additional networking constructs.

While Pattern 3 solves customer-initiated connectivity to the FMI, there are use cases where the FMI needs to initiate connections back to the customer. Customers could stand up Network Load Balancers and register them as endpoint services to enable this, but that adds unnecessary cost and complexity when a single resource needs to be reachable. Resource Gateway simplifies this: customers register the resource in their VPC, share the Resource Gateway with the FMI’s AWS account through AWS RAM, and the FMI creates Resource Endpoints in their VPC to reach it. The FMI’s access is scoped to the registered resource in the customer’s VPC. Overlapping CIDRs between the customer and FMI are handled natively, so there is no IP address coordination needed.

Resource Gateway based PrivateLink does not support native cross-region connectivity today, so the customer’s Resource Gateway and the FMI’s Resource Endpoints must be in the same AWS Region. Customers operating across multiple regions would need to deploy Resource Gateways in each region where FMI-initiated connectivity is required. This pattern also needs the FMI to have a VPC in AWS to host the Resource Endpoints, which may not be the case for every FMI at this point.
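The same-Region constraint lends itself to a simple planning check. The following sketch assumes you know the Regions where you need FMI-initiated connectivity and the Regions where the FMI hosts a VPC for Resource Endpoints. The function name, result keys, and Region lists are hypothetical.

```python
def plan_resource_gateways(customer_regions, fmi_vpc_regions):
    """Split the Regions needing FMI-initiated connectivity into those
    where a Resource Gateway can pair with same-Region FMI Resource
    Endpoints and those where the FMI has no VPC to host them."""
    needed = set(customer_regions)
    available = set(fmi_vpc_regions)
    return {
        "deploy_resource_gateway": sorted(needed & available),
        "no_fmi_vpc_in_region": sorted(needed - available),
    }


plan = plan_resource_gateways(
    customer_regions=["eu-west-1", "us-east-1", "ap-southeast-1"],
    fmi_vpc_regions=["eu-west-1", "us-east-1"],
)
print(plan["deploy_resource_gateway"])  # ['eu-west-1', 'us-east-1']
print(plan["no_fmi_vpc_in_region"])     # ['ap-southeast-1']
```

Regions in the second list would need a different pattern, or a conversation with the FMI about extending their AWS footprint.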

Security and Compliance Considerations

Security is paramount when establishing connectivity to Financial Market Infrastructures (FMIs). Encryption in transit, such as MACsec for AWS Direct Connect connections or TLS for end-to-end encryption, adds critical protection layers to safeguard data across external networks, mitigating risks like eavesdropping or tampering. In all patterns, you control access through Amazon VPC security groups, network access control lists (NACLs), and IAM policies.
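For the TLS layer, application code that connects to an FMI service can enforce a protocol floor and certificate validation regardless of which connectivity pattern carries the traffic. This is a minimal sketch using Python’s standard `ssl` module. The TLS 1.2 minimum is an assumption for illustration; your FMI or regulator may mandate a different version or specific cipher suites.

```python
import ssl


def strict_tls_context():
    """Client-side TLS context that verifies the server certificate and
    hostname and refuses protocol versions below TLS 1.2 (assumed floor)."""
    context = ssl.create_default_context()  # certificate and hostname checks on
    context.minimum_version = ssl.TLSVersion.TLSv1_2
    return context


context = strict_tls_context()
print(context.minimum_version.name)             # TLSv1_2
print(context.verify_mode == ssl.CERT_REQUIRED)  # True
```

The resulting context can be passed to standard-library clients, for example `http.client.HTTPSConnection(host, context=context)`, where `host` would be the private DNS name of your endpoint.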

For regulated environments, each pattern provides audit-ready network paths. Consult with your compliance team to confirm how the controls in each pattern map to your specific DORA, PCI DSS, or other regulatory requirements. For more information, refer to the blog.

Conclusion

These connectivity patterns help financial institutions move from infrastructure-heavy models to managed, cloud-based approaches, reducing operational complexity while maintaining security and compliance. This shift allows teams to focus less on network operations and more on innovation.

As Financial Market Infrastructures (FMIs) expand cloud-native capabilities, including private connectivity options, the path to modern architecture becomes clearer. These patterns support the performance, resilience, and regulatory requirements of financial services while enabling greater scalability and agility.

Each pattern aligns to a different stage of cloud maturity. Organizations can adopt the approach that fits their needs today and evolve over time.
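One way to start that evaluation is to write down the preconditions each pattern depends on and see which ones hold for a given FMI. The sketch below is a rough first-pass filter under simplified assumptions, not a decision framework. The `Requirements` fields are hypothetical names for conditions discussed in this post, and a real assessment would weigh latency, cost, and regulatory factors as well.

```python
from dataclasses import dataclass


@dataclass
class Requirements:
    """Hypothetical inputs summarizing conditions discussed in this post."""
    customer_has_colocation_presence: bool
    fmi_offers_hosted_connection: bool
    fmi_publishes_endpoint_service: bool
    fmi_must_initiate: bool
    fmi_has_vpc_in_customer_region: bool


def candidate_patterns(req):
    """List the patterns whose basic preconditions are met."""
    candidates = []
    if req.customer_has_colocation_presence:
        candidates.append("Pattern 1")
    if req.fmi_offers_hosted_connection:
        candidates.append("Pattern 2")
    if req.fmi_publishes_endpoint_service:
        candidates.append("Pattern 3")  # covers customer-initiated flows only
    if req.fmi_must_initiate and req.fmi_has_vpc_in_customer_region:
        candidates.append("Pattern 4")  # covers FMI-initiated flows
    return candidates


example = Requirements(
    customer_has_colocation_presence=False,
    fmi_offers_hosted_connection=False,
    fmi_publishes_endpoint_service=True,
    fmi_must_initiate=True,
    fmi_has_vpc_in_customer_region=True,
)
print(candidate_patterns(example))  # ['Pattern 3', 'Pattern 4']
```

In this example, Patterns 3 and 4 appear together because they cover opposite connection directions and can complement each other.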

As a next step, evaluate your FMI’s connectivity options and align them with the pattern that best supports your operating model and target architecture. To explore these patterns further, review the AWS Direct Connect documentation, AWS PrivateLink documentation, and AWS Transit Gateway documentation. For additional guidance, contact your AWS account team or visit AWS Financial Services resources to learn how AWS supports financial institutions in building secure, scalable connectivity architectures.

Disclaimer:

Discussion of reference architectures in this post is illustrative and for informational purposes only. It is based on the information available at the time of publication. Steps/recommendations are meant for educational purposes and initial proof of concepts, and not a full-enterprise solution. Contact us to design an architecture that works for your organization.