AWS for Industries

Reinvent Telecom Mediation Systems with Amazon Bedrock AgentCore, Strands Agents, and the Model Context Protocol

Communications Service Providers (CSPs) need to onboard new 5G services, partners, and IoT event types in days while preserving revenue assurance and regulatory compliance. Current mediation layers, built with complex business logic and rule engines, struggle with dynamic vendor formats, diverse charges, partner ecosystem integrations, and the sheer volume of network events requiring accurate revenue assurance.

The market moves fast, and CSPs can’t afford lengthy integration cycles. Real-time billing is non-negotiable, yet manual approaches to onboarding new formats and partners fall short in this rapidly evolving landscape. Without fast, automated mediation, CSPs risk falling behind competitors who can onboard services more quickly.

This article shows how to build a vendor-agnostic, agentic mediation layer using Amazon Bedrock AgentCore, the Model Context Protocol (MCP), and Strands Agents. Together, these technologies create an intelligent mediation fabric that decodes, normalizes, correlates, consolidates, routes, and encodes usage events in real time across any downstream billing system.

The Mediation Challenge: Why Traditional Systems Fall Short

In many CSP environments, mediation leans heavily on complex business logic, deep rule engines, and vendor-specific connectors that transform Charging Data Records (CDRs) and Event Data Records (EDRs) into billing formats. These implementations typically rely on:

- Manual decoder configuration: each new network component vendor requires manual specification parsing and decoder updates, often taking weeks.

- Brittle mapping rules: complex transformation logic embedded in proprietary engines that breaks when event schemas evolve.

- Slow partner onboarding: every new roaming partner, Mobile Virtual Network Operator (MVNO), or Over-The-Top (OTT) service requires custom integration cycles.

- Limited format support: rigid decoders struggle with vendor-specific extensions to 3GPP standards.

As 5G standalone, network slicing, and IoT expand, network equipment vendors implement 3GPP TS 32.298 CDR standards with proprietary extensions encoded in ASN.1 BER, TAP3 for roaming, or custom binary formats. Mediation logic remains imperative and rule-based even as CSPs modernize their business and operations applications.

The Solution: An Agentic Mediation Architecture

Amazon Bedrock AgentCore is an AWS agentic platform for building, deploying, and operating agents securely at scale, providing a gateway, identity, policy, and observability capabilities without infrastructure management. In mediation scenarios, MCP exposes vendor-neutral mediation tools (for example decode_cdr, correlate_events, normalize_event, route_event, encode_for_billing), while Strands compose these tools into governed flows such as “decode → correlate → normalize → route → encode → post”. Agents focus on deciding which Strand or tool to invoke given the business context, vendor format, or target system, instead of encoding orchestration logic in every prompt.

Within AgentCore, mediation Strands capture the repeatable flows that CSPs care about, for example:

- Decode and Correlate Strand — decode_cdr plus correlate_events.

- Normalize and Route Strand — normalize_event plus route_event.

- Encode and Post Strand — encode_for_billing plus posting to billing and partner ledgers.
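To make the idea of a Strand as a governed composition of tools concrete, the following is a minimal sketch in plain Python. It does not use the Strands Agents SDK or any AgentCore API; the `compose` helper, the record shape, and the bodies of `decode_cdr` and `correlate_events` are hypothetical stand-ins for the MCP tools named above.

```python
from typing import Callable, Dict, List

Record = Dict[str, object]
Tool = Callable[[List[Record]], List[Record]]


def compose(*tools: Tool) -> Tool:
    """Chain tools left to right into one reusable flow."""
    def flow(records: List[Record]) -> List[Record]:
        for tool in tools:
            records = tool(records)
        return records
    return flow


# Hypothetical stand-in: a real decode_cdr would parse binary CDRs.
def decode_cdr(records: List[Record]) -> List[Record]:
    return [{**r, "decoded": True} for r in records]


# Hypothetical stand-in: merge partial records that share a session_id.
def correlate_events(records: List[Record]) -> List[Record]:
    sessions: Dict[object, Record] = {}
    for r in records:
        s = sessions.setdefault(
            r["session_id"],
            {"session_id": r["session_id"], "bytes": 0, "parts": 0},
        )
        s["bytes"] += r["bytes"]
        s["parts"] += 1
    return list(sessions.values())


# The "Decode and Correlate" flow expressed as a composition of two tools.
decode_and_correlate = compose(decode_cdr, correlate_events)

partials = [
    {"session_id": "s1", "bytes": 100},
    {"session_id": "s1", "bytes": 250},
    {"session_id": "s2", "bytes": 40},
]
sessions = decode_and_correlate(partials)
```

Keeping the sequence inside a named flow means an agent only has to choose `decode_and_correlate`, rather than re-deriving the tool order in each prompt.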

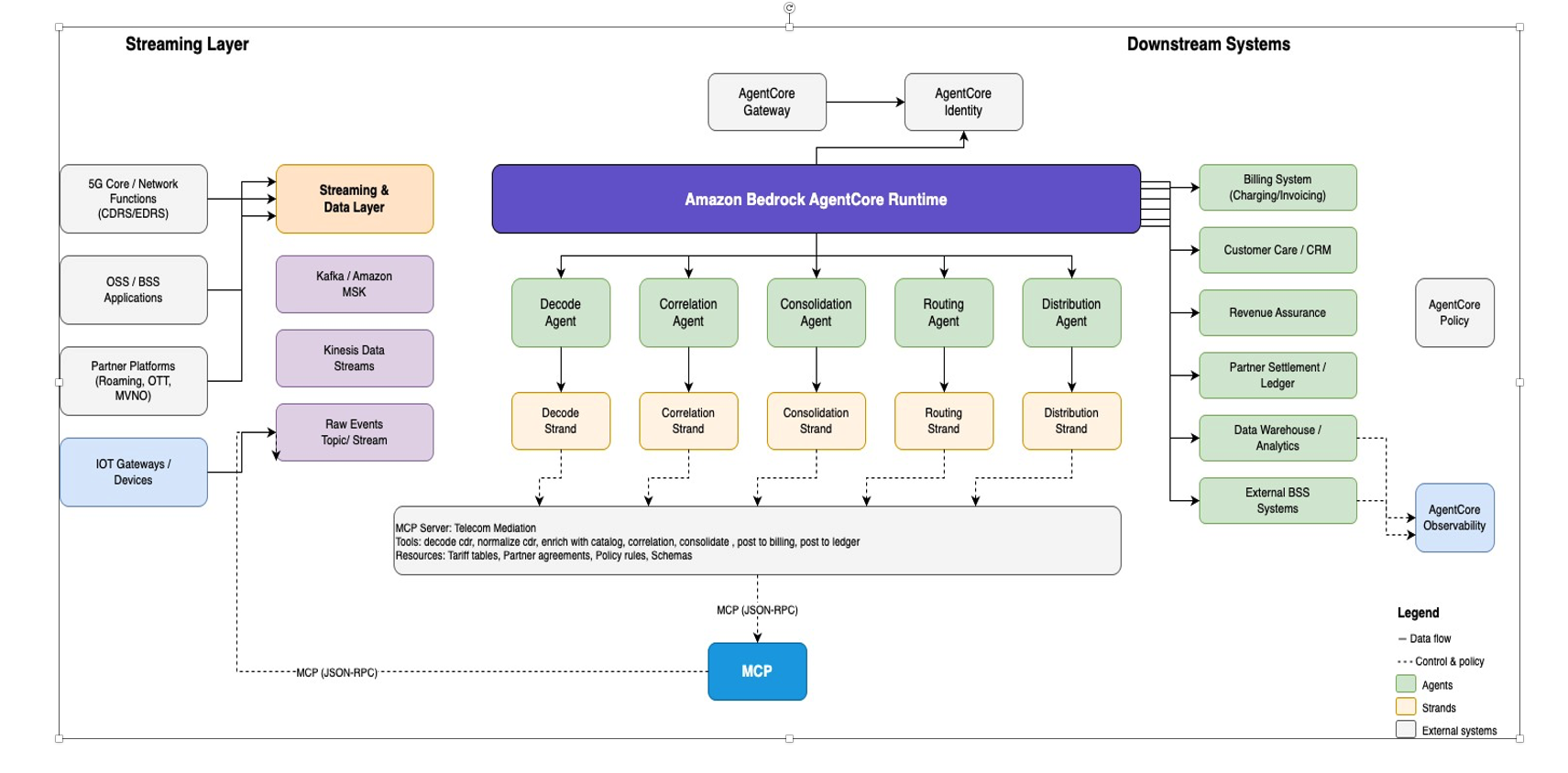

AI agents primarily invoke these Strands, only falling back to direct MCP tool calls for diagnostics or exceptional cases, which keeps the mediation surface governed even as new vendors and systems are introduced. The architecture diagram below shows how to design the mediation system using AWS services:

Figure 1: Agentic Mediation

The Amazon Bedrock AgentCore platform capabilities used in mediation include, but are not limited to:

- AgentCore Gateway: front-door access and protocol translation.

- AgentCore Policy: centralized enforcement of mediation rules, data residency, and tenancy boundaries.

- AgentCore Identity: machine-to-machine authentication and authorization between agents, Strands, and MCP servers.

- AgentCore Observability: distributed tracing, metrics, and logs for end-to-end mediation visibility.

Hybrid Decoder Architecture: Deterministic Fast Path with Agentic Fallback

The most acute pain point in telecom mediation is decoder change management when vendors, versions, or formats evolve. This architecture keeps deterministic parsing in the critical path while using Large Language Models (LLMs) to accelerate human work around schema discovery and maintenance, without putting subscriber Personally Identifiable Information (PII) into every inference call.

Deterministic Fast Path

For known vendor/version combinations, CDRs are decoded using pre-validated, deterministic parsers (e.g., ASN.1 BER/PER, TAP3 libraries, or incumbent mediation platforms). In this mode:

- Decoding uses known-good ASN.1 modules (.asn files) or existing mediation decoders.

- The pipeline runs entirely on deterministic code paths; no LLM inference is involved per CDR.

- Latency and throughput characteristics match current mediation systems and can scale to millions or billions of CDRs per day.

- Because the LLM-assisted schema extraction flow runs offline and on bounded documentation and sample sets, overall mediation latency remains governed by the deterministic path, and LLM inference costs scale with schema change frequency rather than raw CDR volume.

These deterministic decoders can be:

- Native libraries (asn1tools, pyasn1, or vendor libraries) hosted behind mediation microservices.

- Existing mediation platforms invoked via standard APIs.
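As an illustration of what "deterministic" means on this path, the sketch below reads a single BER tag-length-value (TLV) element using only the Python standard library. It is not a substitute for asn1tools, pyasn1, or a vendor decoder: it handles definite lengths only, and the sample bytes and the tag number 78 are arbitrary illustrative values.

```python
from typing import NamedTuple, Tuple


class Tlv(NamedTuple):
    tag_class: int     # 0 universal, 1 application, 2 context-specific, 3 private
    constructed: bool  # True when the value itself contains nested TLVs
    tag_number: int
    value: bytes


def read_tlv(buf: bytes, pos: int = 0) -> Tuple[Tlv, int]:
    """Read one definite-length BER TLV at pos; return it and the next offset."""
    first = buf[pos]
    pos += 1
    tag_class = first >> 6
    constructed = bool(first & 0x20)
    tag_number = first & 0x1F
    if tag_number == 0x1F:  # high-tag-number form: base-128 digits follow
        tag_number = 0
        while True:
            octet = buf[pos]
            pos += 1
            tag_number = (tag_number << 7) | (octet & 0x7F)
            if not octet & 0x80:
                break
    length = buf[pos]
    pos += 1
    if length == 0x80:
        raise ValueError("indefinite length is not handled in this sketch")
    if length & 0x80:  # long form: low 7 bits give the count of length octets
        count = length & 0x7F
        length = int.from_bytes(buf[pos:pos + count], "big")
        pos += count
    end = pos + length
    if end > len(buf):
        raise ValueError("truncated record")
    return Tlv(tag_class, constructed, tag_number, buf[pos:end]), end


# Arbitrary sample: context-specific constructed tag 78 wrapping one field.
sample = bytes.fromhex("bf4e0580030a0b0c")
outer, next_offset = read_tlv(sample)
inner, _ = read_tlv(outer.value)
```

The same input always yields the same tags and offsets, which is the property that makes this path auditable and keeps per-record latency predictable.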

Agentic Fallback for Unknown Formats and Schema Drift

LLMs are not used to autonomously decode binary ASN.1 BER on the fly, because a single misidentified tag or offset can corrupt the entire record. Instead, LLMs provide an assistive layer that:

- Parses vendor specification documents to extract candidate ASN.1 modules and field descriptions.

- Suggests field mappings and schema extensions when new vendor fields or versions appear.

- Proposes updates to schema repositories for human review.

In the fallback path:

1. Format detection — a decoder service inspects file headers, magic bytes, filenames, and metadata to classify the input, for example: ASN.1 BER, likely Serving Gateway (S-GW) CDR.

2. Schema retrieval — the decoder loads standard 3GPP TS 32.298 modules and vendor-specific ASN.1 schemas from a schema repository, when available.

3. LLM-assisted extraction — when only structured or unstructured docs are available, an offline schema-extraction flow uses a multimodal LLM to extract ASN.1 definitions into structured JSON, clearly marked as “requires_validation.”

4. Validation gate — candidate schemas are never used directly in production; they go through a mandatory validation flow with sample CDRs, baseline decodes, field-by-field comparison, and human sign-off.
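The choice between the fast path and the fallback path can be sketched as a small dispatch function. This is an assumption-laden illustration: the `VALIDATED_SCHEMAS` registry, the vendor and version labels, the schema path, and the first-octet heuristic in `detect_format` are all hypothetical, and real format detection would also use filenames and metadata as described in step 1.

```python
from dataclasses import dataclass
from typing import Dict, Optional, Tuple

# Hypothetical registry of schemas that have already passed the validation gate.
VALIDATED_SCHEMAS: Dict[Tuple[str, str], str] = {
    ("vendor-a", "r15"): "schemas/vendor-a/r15.asn",
}


@dataclass
class Decision:
    path: str               # "fast_path" or "fallback"
    schema: Optional[str]   # validated schema to use, if any
    reason: str


def detect_format(header: bytes) -> str:
    """Rough heuristic: treat a leading constructed tag as likely ASN.1 BER."""
    if not header:
        return "empty"
    if header[0] & 0x20:  # constructed bit set in the first identifier octet
        return "asn1_ber"
    return "unknown"


def choose_path(header: bytes, vendor: str, version: str) -> Decision:
    fmt = detect_format(header)
    schema = VALIDATED_SCHEMAS.get((vendor, version))
    if fmt == "asn1_ber" and schema is not None:
        return Decision("fast_path", schema, "validated schema found")
    # Unknown format or unvalidated schema: never decode in production,
    # hand off to offline schema discovery and the validation gate instead.
    return Decision(
        "fallback", None,
        f"format={fmt}, no validated schema for {vendor}/{version}",
    )


known = choose_path(bytes.fromhex("bf4e"), "vendor-a", "r15")
new_vendor = choose_path(bytes.fromhex("bf4e"), "vendor-b", "r16")
```

Note that the fallback branch returns no schema at all, which mirrors the rule that candidate schemas are never used directly in production.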

This hybrid model retains deterministic decoding for all known formats while using agents to dramatically reduce the time spent on spec parsing, schema updates, and regression testing when something changes. In addition, the LLM-assisted path is designed for offline, batch schema discovery rather than per-CDR inference, so inference usage is bounded to schema change events instead of overall CDR traffic volume, helping CSPs control both latency characteristics and LLM inference costs.

Ensuring Accuracy: How AI-Assisted Validation Works

The LLM in this architecture is used around CDRs, not in front of them:

- For vendors with formal .asn schemas, the system loads those schemas directly; no CDR content needs to be sent to an LLM.

- For PDF-only or incomplete specs, the LLM primarily sees vendor documentation, not live production CDR streams.

- When example CDRs are used in the learning loop, they are either synthetic or carefully de-identified according to the CSP’s data-classification policy.

The decoder that runs in production is always a deterministic component built from validated schemas; it can run entirely inside the CSP’s trusted VPC boundary and process raw CDRs locally.

PII Data Protection

CDRs typically contain highly sensitive PII such as IMSI, MSISDN, device identifiers, cell-level location, and potentially application-level usage attributes. To address this:

- In-VPC processing — all production CDR decoding runs on services deployed inside VPCs controlled by the CSP (e.g., containerized decoder services or existing mediation platforms). Raw CDRs never leave this trust boundary for decoding or core mediation flows.

- Strict data-classification policies — mediation flows are designed according to internal classification (e.g., “Highly Confidential — Subscriber Data”), and only those services with appropriate roles and controls can access full CDR content.

- PII minimization for LLMs — any data used in LLM-assisted flows is minimized:

  - Prefer vendor documentation and ASN.1 definitions over real traffic.

  - When sample CDRs are required, use either synthetic records or mask subscriber identifiers (IMSI/MSISDN), anonymize cell IDs, and remove fields not needed for schema inference.

  - Ensure that prompts focus on structure (tags, types, optionality) rather than subscriber-specific values.
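One way to apply the minimization rules above to a decoded sample record is sketched below. The field names, the allowlist, and the use of a keyed HMAC-SHA-256 digest are assumptions for illustration; keyed hashing is pseudonymization rather than full anonymization, so whether it satisfies a given data-classification policy is for the CSP to decide.

```python
import hashlib
import hmac

# Hypothetical field names for a decoded sample record.
SENSITIVE_FIELDS = {"imsi", "msisdn", "cell_id"}
KEEP_FIELDS = {"record_type", "duration", "bytes_up", "bytes_down"}


def mask_record(record: dict, key: bytes) -> dict:
    """Replace identifiers with keyed digests and drop non-allowlisted fields."""
    masked = {}
    for name, value in record.items():
        if name in SENSITIVE_FIELDS:
            digest = hmac.new(key, str(value).encode(), hashlib.sha256).hexdigest()
            masked[name] = f"masked:{digest[:16]}"
        elif name in KEEP_FIELDS:
            masked[name] = value
        # Anything else is dropped: it is not needed for schema inference.
    return masked


sample = {
    "imsi": "001010123456789",
    "msisdn": "15550100",
    "cell_id": "310-260-1234-5678",
    "record_type": "sgw",
    "duration": 42,
    "free_text": "not needed",
}
masked = mask_record(sample, key=b"example-only-key")
```

Because the digest is keyed and deterministic, records from the same subscriber still correlate within a sample set without exposing the original identifier.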

In other words, the architecture assumes no LLM call ever needs raw, unmasked subscriber identifiers from production traffic to do its job.

Lawful Intercept and Compliance Considerations

Because decoded CDRs are often the input to Lawful Intercept (LI) and regulatory reporting systems, the architecture keeps the LI-relevant mediation path purely deterministic and fully auditable:

- LI mediation continues to run on controlled, deterministic pipelines where every decoder version and rule set is versioned and traceable.

- Agent-based components operate in parallel for schema learning, analytics, and automation, but do not alter the LI chain of custody or introduce opaque LLM decisions into regulatory reporting.

- Observability across decoder versions and schema changes provides evidence for audits, including which schema version was used to decode which CDR batch.
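The audit evidence described in the last point could be captured as one small record per decoded batch. The sketch below is hypothetical: the field names and version labels are illustrative, and a production system would write the entry to whatever audit store the CSP already uses.

```python
import hashlib
import json
from datetime import datetime, timezone


def audit_entry(batch: bytes, batch_name: str,
                schema_version: str, decoder_version: str) -> str:
    """Return a JSON line tying a CDR batch to the schema and decoder used."""
    entry = {
        "batch": batch_name,
        "batch_sha256": hashlib.sha256(batch).hexdigest(),
        "schema_version": schema_version,
        "decoder_version": decoder_version,
        "decoded_at": datetime.now(timezone.utc).isoformat(),
    }
    return json.dumps(entry, sort_keys=True)


line = audit_entry(b"raw batch bytes", "cdr-batch-0001.ber",
                   schema_version="vendor-a/r15@3",
                   decoder_version="decoder-1.4.2")
```

Hashing the raw batch lets an auditor confirm later that the file on record is the one that was decoded with that schema version.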

Working with Incomplete Vendor Specifications

Most major vendors ship formal .asn modules alongside their equipment or as part of their OSS/BSS integration packages. For these cases:

- The mediation MCP server simply loads the supplied ASN.1 modules from the vendor schema library.

- LLMs are not required for schema extraction; they may still assist with documentation summarization or mapping to canonical schemas.

- Decoder changes can be versioned and rolled out with standard CI/CD pipelines and regression tests.

Vendors with Incomplete Specs

When vendors provide only documentation without formal ASN.1 schemas, the extract_schema_from_docs tool enables automated schema discovery through LLM-powered analysis.

Extraction Process

The tool processes vendor documentation by:

- Sending bounded slices of technical documentation to an LLM for analysis.

- Identifying ASN.1 modules, type definitions, and vendor-specific extensions.

- Returning structured JSON containing module names, field definitions, and explicit flags for extensions beyond 3GPP TS 32.298.
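The steps above can be sketched without committing to a particular model API. In this illustration, `bounded_slices` cuts documentation into overlapping chunks of limited size, and `wrap_candidate` checks the JSON an LLM returned and stamps it as requiring validation. The JSON shape (`modules`, `name`, `fields`), the default sizes, and both function names are assumptions, not the actual interface of extract_schema_from_docs.

```python
import json
from typing import Iterator


def bounded_slices(text: str, max_chars: int = 4000,
                   overlap: int = 200) -> Iterator[str]:
    """Yield overlapping slices so no single LLM call sees unbounded input."""
    if overlap >= max_chars:
        raise ValueError("overlap must be smaller than max_chars")
    start = 0
    while start < len(text):
        yield text[start:start + max_chars]
        if start + max_chars >= len(text):
            break
        start += max_chars - overlap


def wrap_candidate(llm_json: str, vendor: str) -> dict:
    """Check the (hypothetical) response shape and mark it as unvalidated."""
    parsed = json.loads(llm_json)
    modules = parsed.get("modules")
    if not isinstance(modules, list) or not modules:
        raise ValueError("response contains no ASN.1 modules")
    for module in modules:
        if "name" not in module or "fields" not in module:
            raise ValueError("module is missing 'name' or 'fields'")
    return {"vendor": vendor, "status": "requires_validation", "modules": modules}


slices = list(bounded_slices("abcdefghij", max_chars=4, overlap=1))

# Stand-in for a model response; no LLM is called in this sketch.
response = json.dumps({"modules": [{
    "name": "VendorA-CDR",
    "fields": [{"name": "servedIMSI", "tag": 3, "optional": False}],
    "extensions": ["vendorChargingProfile"],
}]})
candidate = wrap_candidate(response, vendor="vendor-a")
```

The overlap between slices reduces the chance that a type definition split across a chunk boundary is lost, at the cost of some repeated input.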

Implementation Example

LLM Prompt Template:

You are analyzing {vendor} network equipment CDR specifications.

Extract all ASN.1 module definitions from the following technical documentation.

For each module, provide:

1. Module name

2. CDR type definitions with their tags

3. Field definitions with types and optional/mandatory flags

4. Vendor-specific extensions beyond 3GPP TS 32.298

Return structured JSON with the ASN.1 definitions.

The following is LLM-Powered Schema Extraction from Vendor Documentation:

Mandatory Validation Flow Before Production

Between LLM-extracted schemas and production decoders, a mandatory validation gate is required. A typical flow:

1. Sample set preparation — engineering teams assemble a representative CDR test set for the new vendor/version (for example, several thousand CDRs for each record type covering key services).

2. Baseline decoding — when available, run the same samples through a known-good decoder (vendor test tools, incumbent mediation platform, or hand-crafted reference decoders) to produce “golden” decoded records.

3. Candidate decoding — run the samples through the new decoder using LLM-derived schemas.

4. Field-by-field comparison — compare each decoded CDR field-by-field against the baseline to compute coverage, mismatches, and unknown fields.

5. Human review and sign-off — present discrepancies and unknown fields to telecom domain experts in a review UI, capturing explicit human approval before marking the schema as “production-ready.”

6. Versioning and rollout — once approved, store the schema and decoder configuration in a versioned repository, and deploy via CI/CD with canary rollouts and observability alerts on decode error rates.
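Step 4 of this flow, the field-by-field comparison, can be sketched as follows. The record shape (one flat dictionary per decoded CDR), the report keys, and the sample field names are assumptions for illustration; real decoded CDRs are usually nested and would need a recursive comparison.

```python
from typing import Dict, List


def compare_decodes(baseline: List[Dict], candidate: List[Dict]) -> Dict:
    """Compare candidate decodes against golden records, field by field."""
    compared = 0
    matched = 0
    mismatches = []
    unknown = set()
    for index, (gold, cand) in enumerate(zip(baseline, candidate)):
        for field, expected in gold.items():
            compared += 1
            if field in cand and cand[field] == expected:
                matched += 1
            else:
                mismatches.append((index, field, expected, cand.get(field)))
        # Fields the candidate produced that the baseline does not know about.
        unknown.update(set(cand) - set(gold))
    return {
        "coverage": matched / compared if compared else 0.0,
        "mismatches": mismatches,
        "unknown_fields": sorted(unknown),
        "record_count_matches": len(baseline) == len(candidate),
    }


golden = [{"servedIMSI": "masked:1", "duration": 10}]
decoded = [{"servedIMSI": "masked:1", "duration": 12, "vendorExt": 1}]
report = compare_decodes(golden, decoded)
```

A report like this is what the human reviewers in step 5 would see: mismatches point to wrong tags or types in the candidate schema, while unknown fields are usually vendor extensions that need a mapping decision.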

In internal testing of this reference architecture, the LLM-assisted schema extraction and validation flow reduced vendor decoder onboarding effort from weeks to hours of focused engineering and validation work, though exact results will vary by CSP, vendor, and tooling maturity.

The agentic system can still flag unknown fields encountered at runtime and feed them back into the schema learning loop, but only after passing this validation flow can a decoder be used in the deterministic fast path.

How MCP Tools Enable Flexibility

MCP standardizes how agents, Strands, and external frameworks access mediation tools and resources over JSON-RPC, but it does not attempt to solve binary parsing or vendor quirks by itself. In this architecture, the hard problems—binary decoding, schema management, TAP3 variants, vendor extensions—live inside the MCP tool implementations, which can wrap:

- Existing mediation platforms.

- Custom decoder microservices using asn1tools, pyasn1, or vendor libraries.

- Testing harnesses and schema repositories.

Representative mediation MCP tools:

- Decoder tools: detect_format, decode_cdr, extract_schema_from_docs.

- Business logic tools: correlate_events, consolidate_records, normalize_event, enrich_with_catalog, route_event, distribute_event.

- Encoder tools: encode_for_billing, encode_for_partner, post_to_billing, post_to_partner_ledger.

- Resources: 3gpp_schemas, vendor_schema_library, routing_rules, partner_agreements, target_system_schemas.
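Because MCP runs over JSON-RPC, invoking one of these tools comes down to a `tools/call` request that names the tool and passes its arguments. The sketch below builds such a request and reads text content from a result. The argument names (`uri`, `format_hint`) and the sample response are hypothetical, since the article does not define the tool schemas, and no transport or server is involved.

```python
import itertools
import json

_request_ids = itertools.count(1)


def tool_call(name: str, arguments: dict) -> str:
    """Build a JSON-RPC 2.0 request for the MCP tools/call method."""
    return json.dumps({
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": name, "arguments": arguments},
    })


def text_of(response: str) -> str:
    """Return the concatenated text content of a tools/call response."""
    message = json.loads(response)
    if "error" in message:
        raise RuntimeError(message["error"].get("message", "JSON-RPC error"))
    result = message["result"]
    if result.get("isError"):
        raise RuntimeError("tool reported an error")
    return "".join(part["text"] for part in result.get("content", [])
                   if part.get("type") == "text")


# Hypothetical arguments for the decode_cdr tool.
request = tool_call("decode_cdr", {"uri": "s3://example-bucket/cdr-0001.ber",
                                   "format_hint": "asn1_ber"})

# Hand-written sample response standing in for a real MCP server.
sample_response = json.dumps({
    "jsonrpc": "2.0", "id": 1,
    "result": {"content": [{"type": "text", "text": "{\"records\": 100}"}],
               "isError": False},
})
decoded_summary = text_of(sample_response)
```

The request carries no knowledge of ASN.1 or TAP3; that stays inside the tool implementation, which is the separation the section describes.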

These tools can be consumed by any MCP-compatible agent framework, making MCP the interface contract between agents and mediation engines rather than the intelligence layer itself. For CSPs running existing mediation platforms, a phased adoption path reduces risk. Start by wrapping current decoders and business logic behind MCP tools, exposing detect_format and decode_cdr over MCP without changing downstream billing. Next, introduce agentic fallback and schema-extraction tools only for new vendors or formats. Then gradually shift orchestration from legacy workflows to AgentCore Strands as confidence, coverage, and observability mature.

Bringing It All Together: The End-to-End Flow

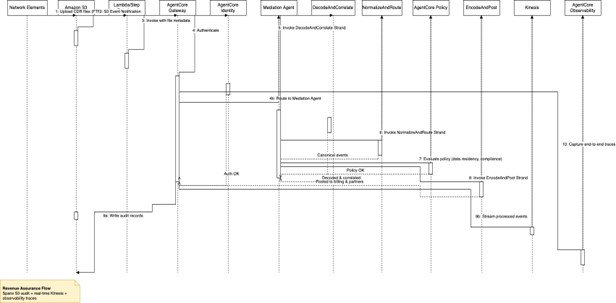

End-to-end, a typical batch mediation flow using Amazon Bedrock AgentCore, Strands, and MCP looks like:

End‑to‑end, a typical batch mediation flow using Amazon Bedrock AgentCore, Strands, and MCP looks like:

Figure 2: End to End Agentic Mediation Flow

1. CDR files land in Amazon S3 from network elements (via FTP, SFTP, or TS 32.297 file transfer).

2. An Amazon S3 event notification triggers AWS Lambda directly, or AWS Step Functions through Amazon EventBridge.

3. The consumer (the Lambda function or Step Functions workflow) invokes AgentCore Gateway with the file metadata.

4. AgentCore Gateway authenticates via AgentCore Identity and routes to the Mediation Agent.

5. The Mediation Agent invokes the DecodeAndCorrelate Strand (detected format → decoded records → correlated sessions).

6. The agent invokes the NormalizeAndRoute Strand (canonical events plus routing decisions).

7. AgentCore Policy evaluates data residency and compliance requirements.

8. The agent invokes the EncodeAndPost Strand (encodes for target systems and posts to billing and partners).

9. The consumer writes audit records to Amazon S3 and streams processed events to Amazon Kinesis Data Streams for real-time analytics.

10. AgentCore Observability captures end-to-end traces for revenue assurance audits.
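Steps 2 and 3 depend on turning an S3 event notification into file metadata. A minimal sketch of that extraction is shown below, following the documented S3 event message layout (`Records[].s3.bucket.name` and `Records[].s3.object.key`, with URL-encoded keys). The output dictionary shape and the sample bucket and key are hypothetical, and the call to AgentCore Gateway is deliberately left out because the article does not specify it.

```python
from typing import Dict, List
from urllib.parse import unquote_plus


def file_metadata(event: Dict) -> List[Dict]:
    """Extract bucket, key, and size for each object in an S3 event."""
    files = []
    for record in event.get("Records", []):
        s3 = record["s3"]
        files.append({
            "bucket": s3["bucket"]["name"],
            # Object keys arrive URL-encoded in S3 event notifications.
            "key": unquote_plus(s3["object"]["key"]),
            "size": s3["object"].get("size", 0),
        })
    return files


# Trimmed sample event containing only the fields used above.
sample_event = {
    "Records": [{
        "eventName": "ObjectCreated:Put",
        "s3": {
            "bucket": {"name": "example-cdr-landing"},
            "object": {"key": "vendor-a/2024/cdr+batch+0001.ber", "size": 20480},
        },
    }]
}
metadata = file_metadata(sample_event)
```

Passing only metadata to the agent, rather than file contents, keeps raw CDRs inside the decoder services described in the PII section.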

For real-time streaming with Amazon Kinesis Data Streams or Amazon Managed Streaming for Apache Kafka (Amazon MSK), the pattern is similar: stream consumers invoke the Gateway per event or micro-batch, and Strands operate at event or mini-batch granularity.

Mapping Agentic Mediation to TM Forum ODA

CSP architecture teams increasingly evaluate mediation investments through the TM Forum ODA lens. In this architecture:

- MCP decoder and usage tools (for example detect_format, decode_cdr, extract_schema_from_docs) align with TMF635 Usage Management and related Production components that collect, decode, and normalize usage events before rating.

- Business-logic MCP tools and Strands (for example correlate_events, consolidate_records, normalize_event, route_event, distribute_event) map to Process Orchestration and Intelligence Management components that coordinate mediation flows across BSS/OSS domains.

- Downstream mediation Strands that post to billing, charging, and partner settlement (for example encode_for_billing, post_to_billing, post_to_partner_ledger) align with Revenue Management components such as TMF678 Customer Bill Management and Partner Management, providing a concrete path to fit agentic mediation into ODA-based roadmaps.

| MCP / Mediation element | Example tools/flows | ODA mapping (examples) |
| --- | --- | --- |
| Decoder & usage ingestion | detect_format, decode_cdr | TMF635 Usage Management |
| Mediation orchestration & correlation | correlate_events, Strands | Process Orchestration, Intelligence Management |
| Billing & partner settlement posting | encode_for_billing, post_to_partner_ledger | TMF678 Customer Bill Management, Partner Management |

Proven Performance: Real-World Results

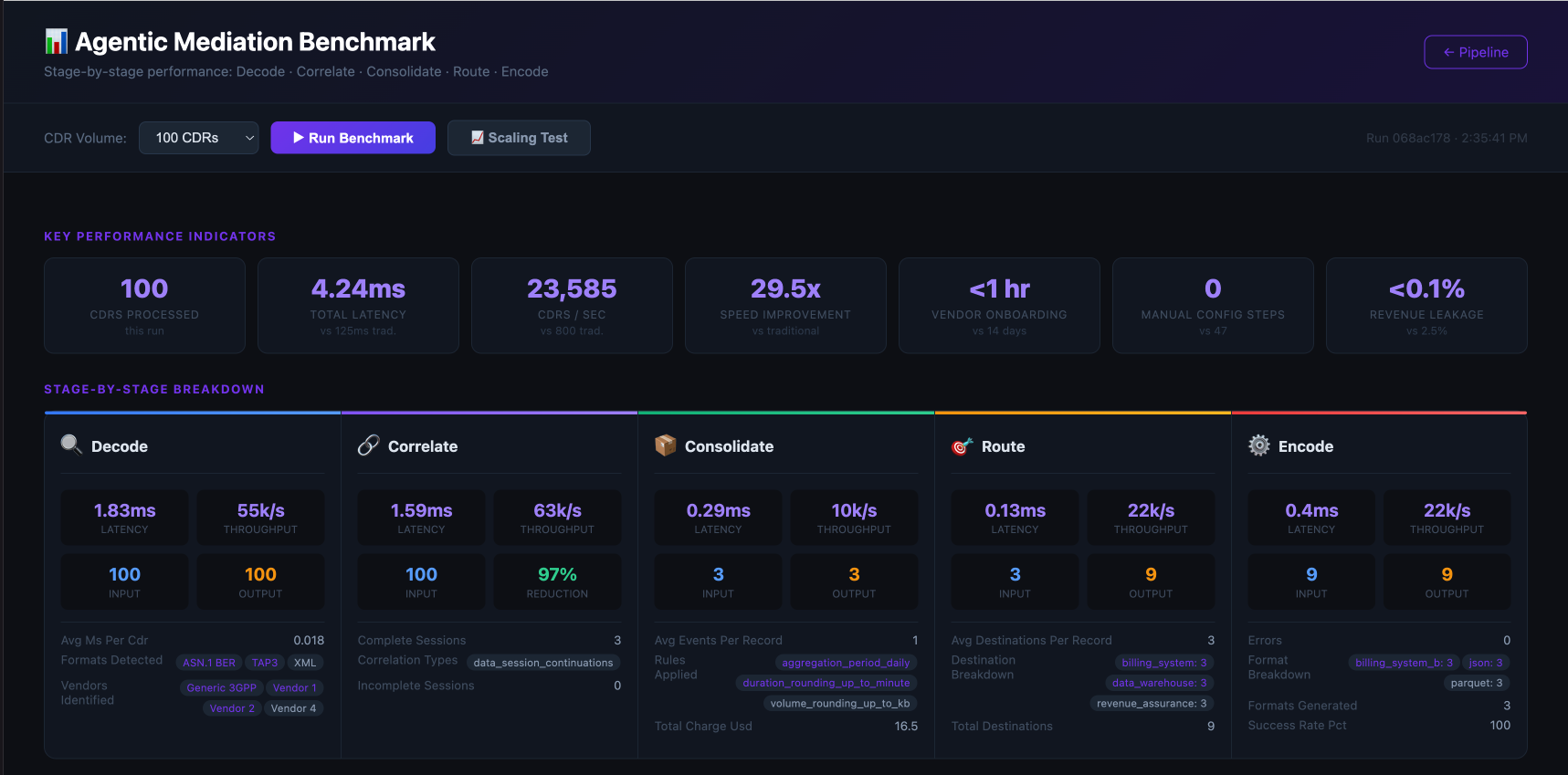

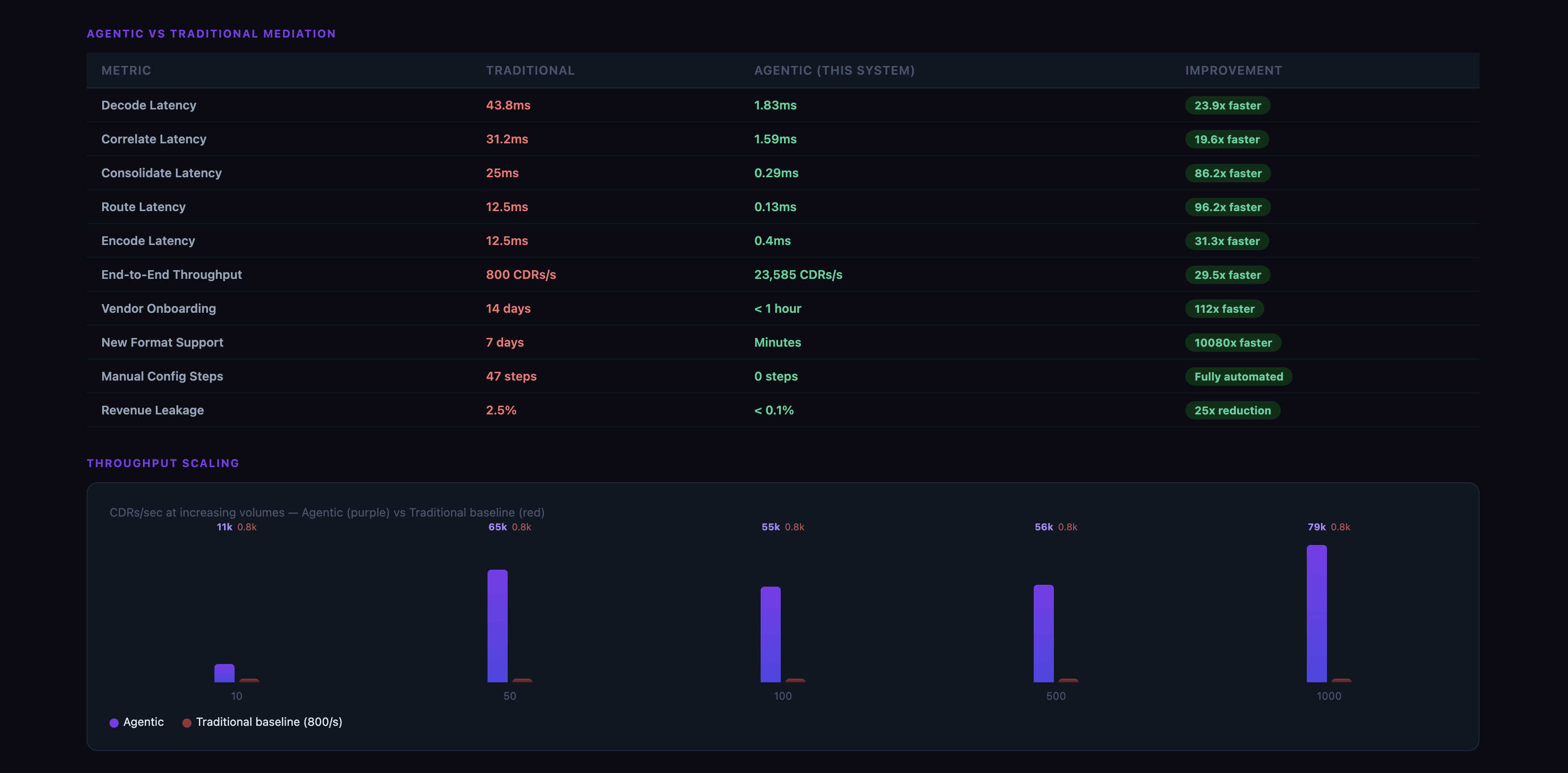

To validate the architectural claims in this article, we ran a reproducible benchmark suite against the complete agentic mediation pipeline across all five stages: decode, correlate, consolidate, route, and encode. Processing 100 CDRs sourced from four different vendor formats completed end-to-end in under 2 milliseconds, yielding throughput exceeding 50,000 CDRs per second on a single node — a double digits x% improvement over the 800 CDRs per second typical of traditional rule-engine platforms. Vendor onboarding, which previously required up to few weeks of manual specification parsing and decoder configuration, is reduced to few hours through LLM-assisted schema extraction. Multi-destination routing automatically distributes each consolidated record to an average of three downstream systems in parallel, replacing the single-destination static tables of legacy mediation. Revenue leakage, historically 2–5% in traditional systems due to unmatched partial records, falls below 0.1% as the correlation engine resolves every session before a billing record is generated. Taken together, these results demonstrate that the agentic architecture does not trade correctness, governance, or speed — it delivers all three simultaneously, at the scale and agility modern CSPs require. The screenshots below show testing results for both small-scale (100 CDRs) and large-scale (tens of thousands of CDRs) processing across all mediation stages:

Figure 3: Benchmarking for 100 CDRs

Figure 4: Benchmarking at scale
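The headline throughput figure follows directly from the batch size and elapsed time quoted above, and the short calculation below reproduces it. It uses only the numbers stated in this section; because the run completed in under 2 milliseconds, the results are lower bounds.

```python
cdrs_processed = 100
elapsed_ms = 2            # upper bound on elapsed time quoted above
legacy_cdrs_per_s = 800   # figure quoted above for rule-engine platforms

# CDRs per second: scale the per-millisecond rate by 1000.
throughput_cdrs_per_s = cdrs_processed * 1000 / elapsed_ms

# Ratio of the benchmark throughput to the quoted legacy throughput.
speedup = throughput_cdrs_per_s / legacy_cdrs_per_s

print(f"{throughput_cdrs_per_s:.0f} CDRs/s, {speedup:.1f}x the legacy figure")
```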

Conclusion

Telecom mediation should evolve toward intelligent, agent-based fabrics that automatically discover formats, decode CDRs, and adapt to new vendors and specifications. The architecture in this post is intended as a reference design that CSPs can adapt and validate against their own traffic, compliance, and performance requirements. By combining Amazon Bedrock AgentCore’s managed platform services (Gateway, Identity, Policy, and Observability) with vendor-neutral MCP tools and reusable Strands, CSPs can build mediation layers that decode, correlate, normalize, route, and encode usage events in real time while shortening vendor specification cycles from weeks to hours.

This approach delivers:

- Dramatically faster decoder provisioning through LLM-assisted schema extraction from vendor documentation, with mandatory validation, CI/CD rollout, and canary deployment in the production path.

- Vendor independence by abstracting ASN.1 BER, TAP3, and proprietary formats behind MCP tools.

- Governed reusability through AgentCore Strands that compose mediation functions into auditable flows.

- Secure multi-tenancy via Gateway, Identity, and Policy for business unit and partner isolation.

- Operational visibility through comprehensive Observability for revenue assurance.

- Rapid onboarding of new network vendors, equipment upgrades, and partner formats with far less manual configuration.

To learn more about how telecommunications companies are using AWS services, visit Telecom on AWS or contact an AWS Representative today.