Migration & Modernization

Migrating and decomposing APIs with zero-downtime using CloudFront

A fundamental shift in API demand patterns is emerging from artificial intelligence integration. More than 30% of the increase in API demand will come from AI and tools using large language models by 2026. This AI-driven transformation is supported by statistics showing that enterprise AI adoption reached 78% in 2024, marking a dramatic acceleration from 55% just one year earlier. Organizations must modernize their systems to support AI-native architectures and intelligent API management capabilities.

However, whether or not organizations are responding to AI integration demands, migrating APIs without service disruption remains one of the most challenging aspects of workload modernization. Traditional blue-green deployments often require orchestration and can introduce risk during the cutover process.

In this post, we’ll explore how to implement a zero-downtime API migration strategy using Amazon CloudFront Functions with Amazon CloudFront KeyValueStore for intelligent traffic routing. This solution enables gradual traffic shifting with user-based routing decisions, providing fine-grained control over your migration process.

Motivations

When migrating APIs, several key motivations drive the decision. Organizations seek to deliver better user experience by moving closer to where their customers are located, which means faster response times and lower latency. Meeting compliance obligations is another critical driver, as stricter data residency regulations in certain jurisdictions make regional migration essential for staying compliant and avoiding legal risks. Reducing operational costs also plays a significant role, since regional pricing differences for AWS services create opportunities to lower infrastructure expenses while maintaining the same capabilities. Finally, building resilient systems by distributing APIs across multiple regions ensures services stay available even during outages, protecting business continuity and customer trust.

Challenges

When modernizing APIs, organizations face several critical challenges. The zero-downtime requirement means consumer systems cannot afford service interruptions during the migration process. A gradual rollout is necessary because new APIs need validation with real traffic before full deployment can proceed. User-specific routing adds complexity, as different user segments may need different migration timelines based on their specific requirements. Real-time control is essential, requiring traffic percentages to be adjustable without redeployment to maintain flexibility. Finally, rollback capability must be built-in to enable quick reversion to the original API if issues arise during the migration.

Solution overview

It is recommended to adopt phased migration approaches using the Strangler fig pattern. This pattern involves gradually replacing legacy APIs by incrementally building new API endpoints alongside the existing system, routing traffic progressively to the new implementation while the old APIs continue to function. The solution implements a canary deployment strategy to reduce downtime and risk by shifting traffic to a new API Gateway in N increments.

The methodology has three main phases:

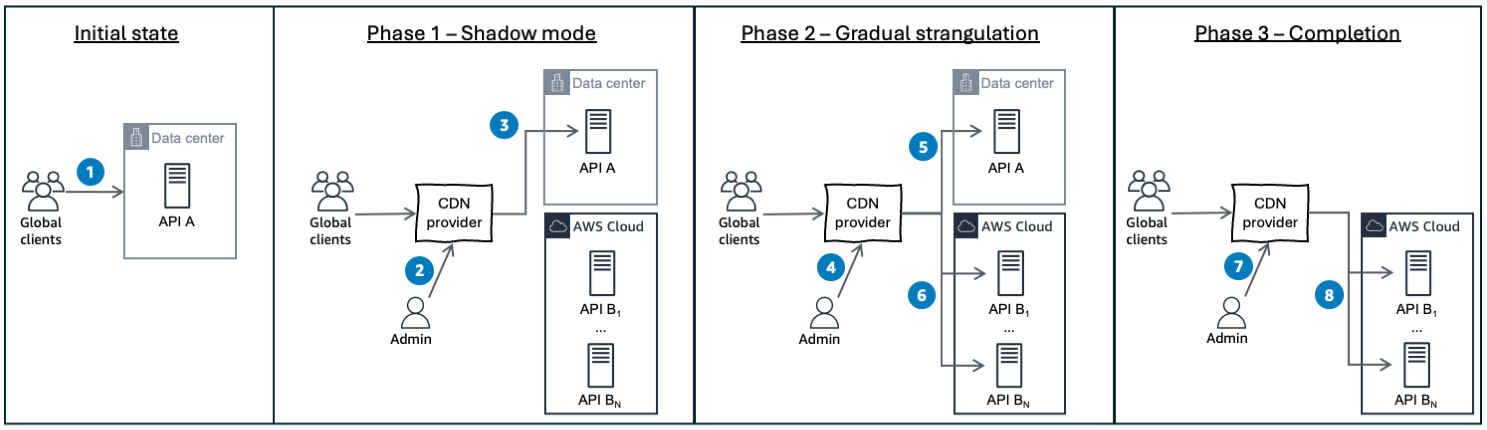

Figure 1 – Three-phase API migration implementing the Strangler fig pattern, gradually breaking down the legacy system.

Phase 0 – Initial state: clients send requests to the legacy system, API A, located in a corporate data center or in an AWS Region, serving 100% of clients’ requests (1).

Phase 1 – Establish the fig (shadow mode): if not already done, deploy a content delivery network (CDN) as an intelligent traffic distribution layer that sits between the clients and multiple API Gateway endpoints.

The key innovation lies in using CDN functions with a key-value store to dynamically route traffic based on configurable percentages and URL patterns. “API A” represents the legacy system (the “host tree”), whereas “API B1/B2” are the new modernized services (the “strangler fig”) to which traffic is redirected.

The new API endpoints are deployed, but the admin directs 0% of traffic (2) to the new APIs, allowing for thorough testing and validation before any traffic is redirected. The legacy API keeps receiving 100% of the traffic (3).

Phase 2 – Gradual strangulation: the CDN function reads the traffic percentage from the key-value store and routes requests to either the legacy system (5) or the new APIs (6). To mitigate risk, the admin (4) starts by shifting a low percentage of traffic and then, as confidence grows, increases the percentage up to 100%. This limits the blast radius while the admin validates performance under increasing real-world load. Finally, to roll back if issues arise, the admin decreases the routing value to instantly return traffic to the legacy system.

Phase 3 – Complete migration: once confidence is established, the admin sets traffic routing to 100% (7) to direct all client requests to the new APIs (8). The legacy system can then be safely decommissioned.

Solution Architecture

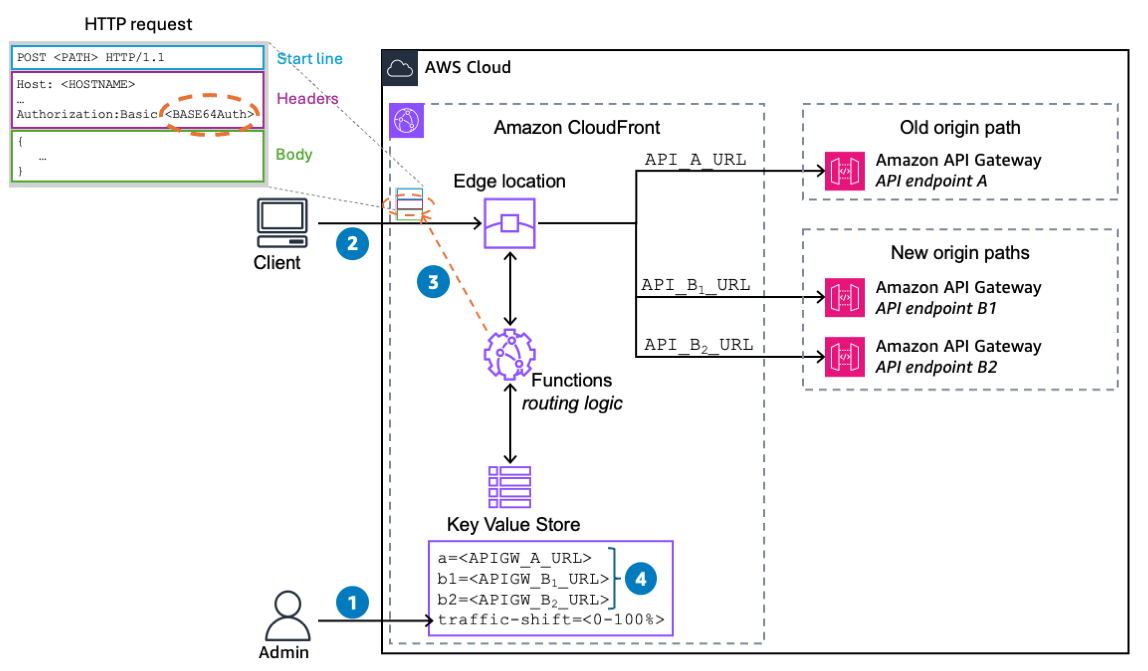

Amazon CloudFront Functions with Amazon CloudFront KeyValueStore act as an intelligent routing layer sitting between clients and your API Gateway endpoints. This approach makes routing decisions at the edge for minimal latency impact. The dynamic routing logic in CloudFront Functions splits traffic based on the Authorization header. It provides real-time traffic control without function redeployment, as well as a rollback mechanism to reverse the deployment if unexpected issues arise.

Figure 2 illustrates how the administrator controls the gradual traffic migration by adjusting the traffic-shift value (1). When clients send requests to the edge location (2), a routing function examines the username in the HTTP request’s Authorization header (3) to make user-aware routing decisions.

The function applies a hash algorithm to the username, deterministically assigning each user to one of two groups: legacy-api-consumers or new-api-consumers.

- Users assigned to the legacy-api-consumers group are routed directly to the legacy system (API A).

- Users assigned to the new-api-consumers group have their requests processed through a URL mapping mechanism that translates legacy paths to new endpoints (API B1 or B2) using a key-value store (4).

This approach ensures consistent routing for each user while enabling the administrator to progressively shift traffic from the legacy API to the modernized API endpoints.
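To make the URL mapping step more concrete, here is a minimal sketch in plain JavaScript. It is not the sample application's code: the `Map` is an in-memory stand-in for CloudFront KeyValueStore, and the path prefixes, host names, and the `resolveOrigin` function are hypothetical. Falling back to the legacy origin for unmapped paths is also an assumption of this sketch.

```javascript
// In-memory stand-in for CloudFront KeyValueStore (assumption for illustration).
// Keys are legacy path prefixes, values are hypothetical new origin host names.
const keyValueStore = new Map([
  ["/orders", "api-b1.example.com"],
  ["/customers", "api-b2.example.com"],
]);

// Hypothetical host name of the legacy system (API A).
const LEGACY_ORIGIN = "api-a.example.com";

// Returns the origin that should serve a request, given the user's group.
function resolveOrigin(group, path) {
  if (group !== "new-api-consumers") {
    return LEGACY_ORIGIN;
  }
  // Pick the longest mapped prefix that matches the request path.
  let best = null;
  for (const prefix of keyValueStore.keys()) {
    const matches = path === prefix || path.startsWith(prefix + "/");
    if (matches && (best === null || prefix.length > best.length)) {
      best = prefix;
    }
  }
  // Unmapped paths stay on the legacy origin (a design choice of this sketch).
  return best === null ? LEGACY_ORIGIN : keyValueStore.get(best);
}

console.log(resolveOrigin("new-api-consumers", "/orders/42"));
console.log(resolveOrigin("legacy-api-consumers", "/orders/42"));
```

Keeping the mapping in a key-value structure rather than in the function body is what allows it to change without redeploying the function.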

Figure 2 – Clients send requests to CloudFront. Based on the user’s data, the function computes the redirection to either the legacy origin or the new origins.

Note: While this example uses the username as the hashing input for simplicity and illustration purposes, the hash could be applied to other attributes such as user ID, session token, or any consistent user-specific value.
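As an illustration of obtaining the hashing input, the sketch below reads a username from an Authorization header. The post does not state which authentication scheme is in use, so HTTP Basic authentication (`Basic base64(username:password)`) is assumed here, and the `usernameFromAuthorization` function is hypothetical.

```javascript
// Assumption: the client uses HTTP Basic authentication.
// Returns the username, or null when the header is missing or not parseable.
function usernameFromAuthorization(headerValue) {
  if (typeof headerValue !== "string") {
    return null;
  }
  const match = /^Basic\s+([A-Za-z0-9+\/=]+)$/i.exec(headerValue.trim());
  if (!match) {
    return null;
  }
  // Decode "username:password" and keep only the part before the first colon.
  const decoded = Buffer.from(match[1], "base64").toString("utf8");
  const separator = decoded.indexOf(":");
  if (separator <= 0) {
    return null;
  }
  return decoded.slice(0, separator);
}

const header = "Basic " + Buffer.from("alice:s3cret").toString("base64");
console.log(usernameFromAuthorization(header)); // prints "alice"
```

Returning `null` for anything unexpected lets the caller decide on a safe default, for example keeping such requests on the legacy API.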

User-aware routing function with even distribution

The CloudFront function sits between the users and the API endpoints. When a request arrives, the function generates a deterministic hash number (username.hashCode()) and compares it against a configured traffic percentage to determine if new APIs should handle the request.
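The comparison described above can be sketched as follows. This is an illustration under stated assumptions rather than the sample application's code: the hash is reduced modulo 100 to obtain a bucket from 0 to 99, the traffic shift is an integer from 0 to 100, and `assignGroup` is a hypothetical name. The `hashCode` helper is a standalone copy of the hash shown later in this section.

```javascript
// Standalone copy of the post's string hash, shifted into the range [0, 2^32).
function hashCode(text) {
  let hash = 0;
  for (let i = 0; i < text.length; i++) {
    // (hash << 5) - hash is hash * 31; "| 0" keeps a signed 32-bit integer.
    hash = ((hash << 5) - hash + text.charCodeAt(i)) | 0;
  }
  return hash + Math.pow(2, 31);
}

// Assumption: bucket = hash modulo 100, trafficShift is an integer in 0..100.
function assignGroup(username, trafficShift) {
  const bucket = hashCode(username) % 100;
  return bucket < trafficShift ? "new-api-consumers" : "legacy-api-consumers";
}

console.log(assignGroup("a", 45)); // prints "legacy-api-consumers" (bucket is 45)
console.log(assignGroup("a", 46)); // prints "new-api-consumers"
```

Because a user's bucket never changes, raising the traffic shift only moves additional users to the new APIs. Users who were already migrated stay migrated, and lowering the value returns them to the legacy API in reverse order.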

The hashCode function is the cornerstone of consistent user routing. Unlike random number generation, which would route the same user to different APIs on each request, the hash code ensures that each user consistently goes to the same API throughout their session and across multiple requests.

The following code sample implements a deterministic hash algorithm that processes each character in the username (while (i < len)). To reduce hash collisions, each step multiplies the running hash by 31 before adding the next character code, which creates a spreading effect that helps distribute hash values more evenly. Instead of an explicit multiplication, the code uses bit shifting and subtraction ((hash << 5) - hash equals hash * 31), which are primitive CPU operations. This optimization ensures the hash function runs efficiently even under high traffic loads during your API migration, maintaining consistent user routing without performance degradation.

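// Deterministic string hash, added to String.prototype as in the sample.
String.prototype.hashCode = function() {
  var hash = 0, i = 0, len = this.length;
  while (i < len) {
    // (hash << 5) - hash is hash * 31; "<< 0" truncates to a signed 32-bit integer.
    hash = ((hash << 5) - hash + this.charCodeAt(i++)) << 0;
  }
  // Shift the signed result into the non-negative range [0, 2^32).
  return hash + Math.pow(2, 31);
};

Sample application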

Prerequisites

For this walkthrough, you should have the following prerequisites:

- An AWS account. If you don’t have an AWS account, sign up at https://aws.amazon.com.

- Permissions to create AWS Identity and Access Management (IAM) roles and policies, Amazon CloudFront distributions, and Amazon API Gateway APIs.

- AWS CDK, AWS Command Line Interface (AWS CLI) version 2, git, curl and openssl.

Deployment

The architecture depicted in Figure 2 can be deployed using AWS CDK. Please go to this GitHub repository containing the AWS CDK sample application, written in TypeScript and bash. To deploy it, complete the following steps:

- Use your command-line shell to clone the GitHub repository.

git clone https://github.com/aws-samples/sample-api-migration

- Navigate to the repository’s root.

cd api-gateway-migration

- Run the following cdk command to bootstrap your AWS environment.

cdk bootstrap

Note: Bootstrapping launches resources into your AWS environment that are required by AWS CDK. These include an S3 bucket for storing files and AWS Identity and Access Management (IAM) roles that grant permissions needed to run our deployment.

- Deploy the AWS CDK application. It typically takes 5 minutes to provision the IAM roles, policies, three API Gateways, CloudFront distribution and the traffic splitting function. When the deployment completes, make a note of the CDK outputs KV_STORE_ARN, API_A_URL, API_B1_URL and API_B2_URL for the validation steps.

cdk deploy

- To see the resources deployed into your AWS account, navigate to the CloudFormation console. You can smoke test the API Gateway responses by running this cURL command against each of the API Gateway URLs.

curl <API A|B1|B2 URL>

Testing approach and validation steps

This section demonstrates a controlled, linear migration approach that minimizes risk while transitioning from legacy infrastructure (API A) to modern APIs (API B1 and B2).

Pre-Migration setup

Set the parameters in Amazon CloudFront KeyValueStore

Before beginning the migration, populate your Amazon CloudFront KeyValueStore with the API endpoints:

Scenario based testing

To validate the API migration infrastructure, the test scenario uses a percentage-based control mechanism that linearly increases traffic shift over a defined period.

Traffic shift control

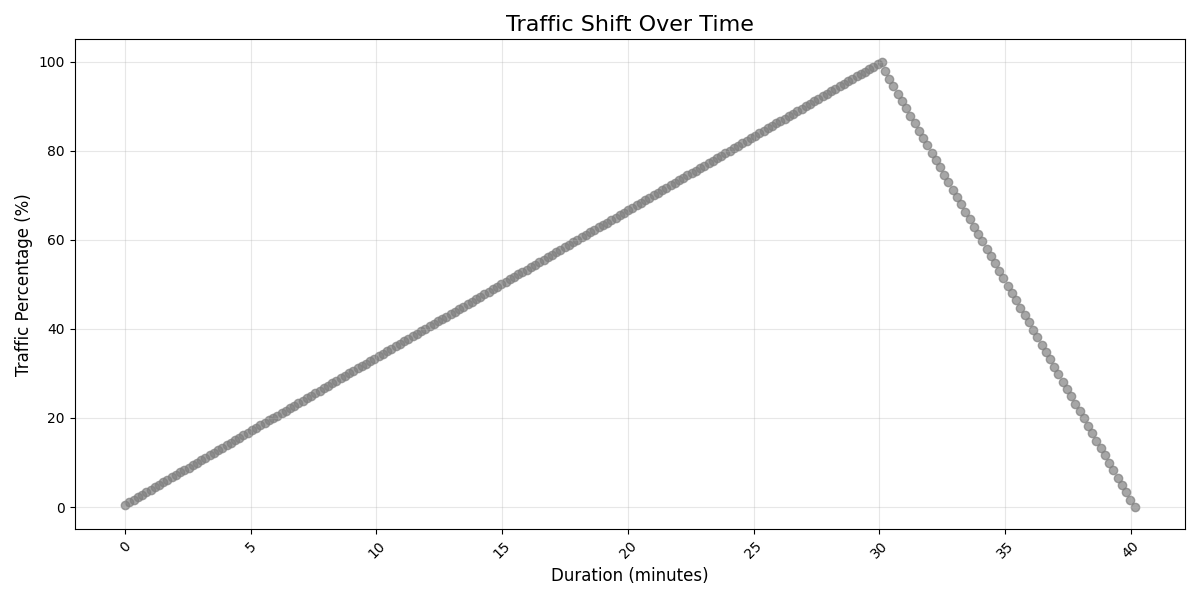

The test scenario follows a structured progression, starting with the traffic shift parameter set to 0%, meaning all requests are routed to API A. The administrator increments the parameter, automatically or manually, from 0% to 100% over a 30-minute period, creating a gradual migration pattern. At 100%, all traffic is redirected to the API B1 and B2 endpoints. Finally, the traffic shift parameter is decreased back to 0% within a 10-minute window to validate the rollback capability.

The chart in Figure 3 validates that the traffic shift parameter is applied correctly. It shows the percentage over time and demonstrates the gradual ramp-up from 0% to 100%. It also confirms the controlled ramp-down back to 0% and validates the timing and simplicity of the migration process.

Figure 3 – Traffic shift control linearly increases from 0% to 100% moving the traffic from legacy API A to new API B1 and B2 within 30min. Then stays idle for 5min and finally rolls back to API A in 10min.
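The schedule in Figure 3 can be expressed as a small function of elapsed time. The sketch below is illustrative only: `trafficShiftAt` and the constant names are hypothetical, the durations come from the scenario described above, and writing the resulting value to the KeyValueStore is outside its scope.

```javascript
// Durations from the test scenario: 30-minute ramp-up, 5-minute hold, 10-minute rollback.
const RAMP_UP_MINUTES = 30;
const HOLD_MINUTES = 5;
const RAMP_DOWN_MINUTES = 10;

// Returns the traffic shift percentage (0..100) at a given elapsed time in minutes.
function trafficShiftAt(minutes) {
  if (minutes <= 0) {
    return 0;
  }
  if (minutes < RAMP_UP_MINUTES) {
    // Linear ramp-up from 0% to 100%.
    return Math.round((minutes / RAMP_UP_MINUTES) * 100);
  }
  if (minutes <= RAMP_UP_MINUTES + HOLD_MINUTES) {
    // Hold at 100% before rolling back.
    return 100;
  }
  const elapsedDown = minutes - RAMP_UP_MINUTES - HOLD_MINUTES;
  if (elapsedDown >= RAMP_DOWN_MINUTES) {
    return 0;
  }
  // Linear ramp-down from 100% back to 0%.
  return Math.round((1 - elapsedDown / RAMP_DOWN_MINUTES) * 100);
}

for (const t of [0, 15, 30, 35, 40, 45]) {
  console.log(t + " min -> " + trafficShiftAt(t) + "%");
}
```

Swapping the linear expressions for another curve changes the rollout shape without touching the routing function.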

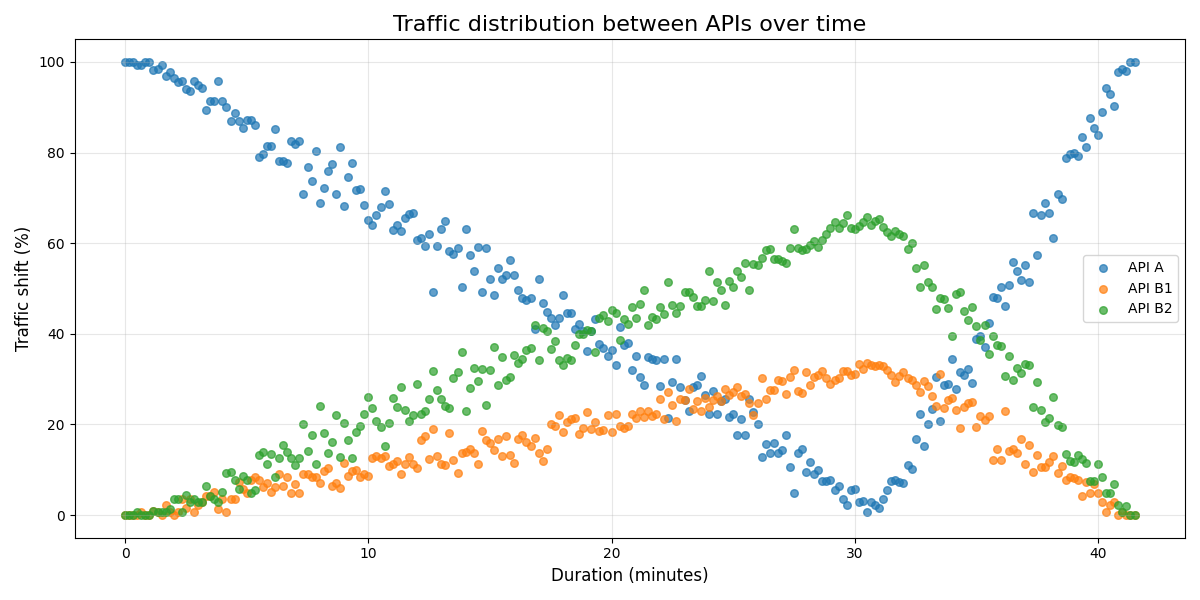

Traffic distribution

To validate the actual request routing behavior, this visualization shows the real request distribution (34,860 requests grouped into 10-second windows) between API A, B1 and B2, confirming that traffic is being redirected as intended. It demonstrates the path distribution between the B1 and B2 endpoints and validates that the CloudFront function correctly interprets the traffic shift parameter.

Figure 4 – Traffic distribution from API A to API B1 and B2 in 30min (0% to 100%), then rolling back to API A in 5min (100% to 0%).

Cleanup

When you’re ready to delete the sample application, run the following command to avoid incurring future costs:

cdk destroy

Considerations

Consider the following before migrating:

- Resource costs: during the gradual traffic shift phases, both legacy and new API endpoints must remain operational, effectively doubling resource costs. CloudFront Functions and KeyValueStore add their own costs for request processing and storage. Consider leveraging the AWS Migration Acceleration Program (MAP) to offset these migration costs.

- Authorization header exposure: while this solution extracts usernames from HTTP Authorization headers to make routing decisions, it is recommended to implement a session token system, for instance issuing a signed JWT containing a user identifier.
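One way to follow the session-token recommendation is sketched below for Node.js. It assumes HS256 (HMAC-SHA256) signing with a shared secret. The function names and the secret are hypothetical, and the sketch omits expiry and audience checks that a production token system would need. Whether the same code runs unchanged inside a CloudFront Function has not been verified here.

```javascript
const crypto = require("crypto");

// Hypothetical shared secret; in practice it would come from a secrets store.
const SECRET = "demo-secret-not-for-production";

// Base64url encoding (RFC 4648, section 5) without padding.
function base64url(input) {
  return Buffer.from(input)
    .toString("base64")
    .replace(/\+/g, "-")
    .replace(/\//g, "_")
    .replace(/=+$/, "");
}

function sign(data, secret) {
  return base64url(crypto.createHmac("sha256", secret).update(data).digest());
}

// Issues a compact JWT whose "sub" claim carries the user identifier.
function issueToken(userId, secret) {
  const header = base64url(JSON.stringify({ alg: "HS256", typ: "JWT" }));
  const payload = base64url(JSON.stringify({ sub: userId }));
  return header + "." + payload + "." + sign(header + "." + payload, secret);
}

// Returns the user identifier, or null if the token is malformed or the signature is wrong.
function userIdFromToken(token, secret) {
  const parts = String(token).split(".");
  if (parts.length !== 3) {
    return null;
  }
  const expected = Buffer.from(sign(parts[0] + "." + parts[1], secret));
  const actual = Buffer.from(parts[2]);
  if (expected.length !== actual.length || !crypto.timingSafeEqual(expected, actual)) {
    return null;
  }
  try {
    const payload = JSON.parse(Buffer.from(parts[1], "base64").toString("utf8"));
    return typeof payload.sub === "string" ? payload.sub : null;
  } catch (err) {
    return null;
  }
}

const token = issueToken("alice", SECRET);
console.log(userIdFromToken(token, SECRET)); // prints "alice"
```

The verified identifier can then serve as the hashing input in place of the username, so the routing function never needs to read credentials.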

Conclusion

This post outlines a practical approach to implementing the Strangler Fig pattern as an API migration strategy. By harnessing CloudFront’s edge capabilities, organizations can modernize their API infrastructure through user-aware traffic shifting, gradual rollout, zero-downtime transitions, and real-time control, all while maintaining business continuity throughout their architectural transformation. While this solution is implemented with CloudFront, alternative CDNs can be used.

The beauty of the strangler fig pattern lies in its patience: rather than dismantling legacy systems abruptly, it gradually envelops them until the old infrastructure naturally becomes obsolete. This solution embodies that philosophy, allowing legacy APIs to retire gracefully as their modern counterparts demonstrate their reliability in production environments.

The architecture doesn’t limit you to just two APIs (B1 and B2). You can extend it to support one or multiple target endpoints by modifying the CloudFront logic and KeyValueStore configuration. Additionally, the migration doesn’t require linear traffic shifting. You can implement any traffic distribution pattern, whether that’s exponential ramp-up, step-wise increases, or custom curves based on your specific needs and risk tolerance.

This flexibility makes the solution adaptable to various migration scenarios, from simple A/B testing to complex multi-region deployments with sophisticated traffic strategies.

To deploy the sample application, examine the GitHub repository, and adapt this technique to your own application pipeline. I’d love to hear your feedback and experiences in the comments below.