AWS Open Source Blog

Kata Containers 1.5 Release with Support for Firecracker

Firecracker was announced at re:Invent 2018. It provides the security and isolation of virtual machines along with the fast startup times and density of containers, offering a cloud-native hypervisor for running containers safely and efficiently. In this post, Eric Ernst from the Kata Containers project explains how Firecracker meets a need in their community for a minimal hypervisor, and how it is now easily integrated with Kata Containers.

– Arun

Kata Containers is an open source project and global community working to build a standard implementation of lightweight virtual machines that feel and perform like containers, but provide stronger workload isolation by using a virtual machine as a second layer of defense.

While initially based on QEMU, the Kata Containers project was designed up front to support multiple hypervisor solutions.

At the end of November, Amazon Web Services announced the open sourcing of its Firecracker hypervisor. From the Firecracker GitHub readme: “Firecracker has a minimalist design. It excludes unnecessary devices and guest-facing functionality to reduce the memory footprint and attack surface area of each microVM. This improves security, decreases the startup time, and increases hardware utilization.”

This was very exciting for the Kata community, as it addresses Kata end users’ requests for a more minimal hypervisor solution for simple use cases. The Kata community began working with Firecracker immediately, and had some great opportunities to collaborate with the Firecracker teams at AWS.

https://twitter.com/nmeyerhans/status/1072361001792749568

This week we released Kata Containers 1.5, which introduces preliminary support for the Firecracker hypervisor. This complements the project’s existing QEMU support. Given the feature tradeoffs in Firecracker, we expect people to use Firecracker for feature-constrained workloads and a minimal QEMU for more advanced workloads (for example, if device assignment is necessary, QEMU should be used).
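
To make that tradeoff concrete, here is a minimal Python sketch of how one might map a workload’s needs to a runtimeClass. The `WorkloadNeeds` fields and the `pick_runtime_class` helper are hypothetical, not a Kata API; the class names kata-qemu and kata-fc follow the example cluster described later in this post, and the features that force QEMU (device assignment, dynamic CPU/memory resizing, non-block storage) are the ones this post calls out.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical runtimeClass names, matching the example cluster in this post.
KATA_QEMU = "kata-qemu"
KATA_FC = "kata-fc"


@dataclass
class WorkloadNeeds:
    """Illustrative workload requirements (assumed fields, not a Kata API)."""
    needs_vm_isolation: bool = True   # False: trusted workload, default runtime is fine
    device_assignment: bool = False   # passing a host device through to the guest
    dynamic_resize: bool = False      # changing pod CPU/memory after it starts
    non_block_storage: bool = False   # storage that is not block-based


def pick_runtime_class(needs: WorkloadNeeds) -> Optional[str]:
    """Return a runtimeClassName, or None to keep the cluster default (e.g. runc)."""
    if not needs.needs_vm_isolation:
        return None
    # Any feature Firecracker deliberately omits pushes the workload to QEMU.
    if needs.device_assignment or needs.dynamic_resize or needs.non_block_storage:
        return KATA_QEMU
    # Otherwise prefer the minimal Firecracker VMM.
    return KATA_FC


print(pick_runtime_class(WorkloadNeeds()))                          # kata-fc
print(pick_runtime_class(WorkloadNeeds(device_assignment=True)))    # kata-qemu
print(pick_runtime_class(WorkloadNeeds(needs_vm_isolation=False)))  # None
```

Defaulting to Firecracker and falling back to QEMU only when a missing feature demands it reflects the expectation that Firecracker suits feature-constrained workloads.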

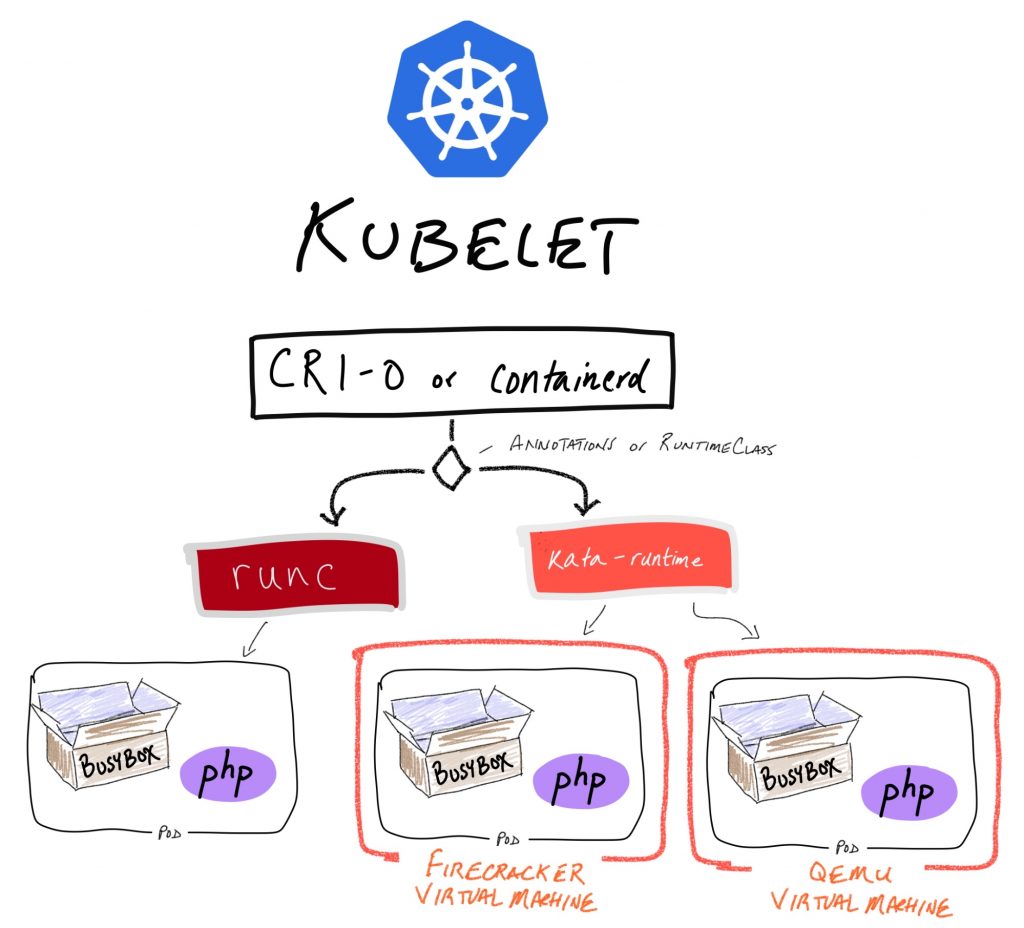

We foresee that it would be typical to utilize runc, Kata + QEMU and Kata + Firecracker in a single Kubernetes cluster, as shown in diagram below:

To achieve this configuration, the cluster must use either CRI-O or containerd, and must be configured to use the runtimeClass feature of Kubernetes. With runtimeClass configured in Kubernetes as well as in CRI-O/containerd, end users can select the type of isolation they want on a per-workload basis. In this example, two runtimeClasses are registered: kata-qemu and kata-fc. Selecting Firecracker-based isolation is as simple as patching existing workloads with the following YAML snippet:

```yaml
spec:
  template:
    spec:
      runtimeClassName: kata-fc
```

To use QEMU instead, set runtimeClassName to kata-qemu.
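
The same change can be applied programmatically. This minimal Python sketch, with a hypothetical helper name and an illustrative Deployment, sets `spec.template.spec.runtimeClassName` on a copy of a workload manifest held as a plain dict, creating the nested keys if they are missing.

```python
import copy
import json
from typing import Any, Dict


def with_runtime_class(workload: Dict[str, Any], runtime_class: str) -> Dict[str, Any]:
    """Return a copy of a workload manifest whose pod template requests runtime_class."""
    patched = copy.deepcopy(workload)  # leave the caller's manifest untouched
    pod_spec = (
        patched.setdefault("spec", {})
        .setdefault("template", {})
        .setdefault("spec", {})
    )
    pod_spec["runtimeClassName"] = runtime_class
    return patched


# Illustrative Deployment; names and image are placeholders.
deployment = {
    "apiVersion": "apps/v1",
    "kind": "Deployment",
    "metadata": {"name": "web"},
    "spec": {
        "selector": {"matchLabels": {"app": "web"}},
        "template": {
            "metadata": {"labels": {"app": "web"}},
            "spec": {"containers": [{"name": "web", "image": "nginx"}]},
        },
    },
}

fc_deployment = with_runtime_class(deployment, "kata-fc")
print(json.dumps(fc_deployment["spec"]["template"]["spec"], indent=2))
```

For a workload that is already deployed, `kubectl patch deployment web -p '{"spec":{"template":{"spec":{"runtimeClassName":"kata-fc"}}}}'` applies the same change as a strategic merge patch; because the pod template changes, the Deployment rolls out new pods.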

A demonstration of this setup, using CRI-O, Kata Containers, and the Firecracker VMM, can be seen in the screencast “Kata configured in CRIO+K8S, utilizing both QEMU and Firecracker.”

runtimeClass is an alpha feature as of Kubernetes 1.13, so bringing up a cluster with the required feature gates enabled and runtimeClass appropriately configured is still cumbersome. To help you get started quickly with Kata, Firecracker, and runtimeClass, we provide a Vagrant image, along with directions on how to bring up and use the configured cluster.
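
For orientation, the sketch below builds the two RuntimeClass objects used in this example as Python dicts and prints them as a JSON `List`, which `kubectl apply -f` accepts. It assumes the alpha `node.k8s.io/v1alpha1` schema of that era, where the CRI handler name sits under `spec.runtimeHandler` (later API versions moved it to a top-level `handler` field), and it assumes the CRI-O/containerd handlers carry the same names as the classes. The Vagrant directions remain the reference for the exact objects and flags.

```python
import json
from typing import Dict, List

# Alpha features are disabled by default; the RuntimeClass gate has to be
# switched on for the Kubernetes components involved (e.g. the kubelet).
FEATURE_GATE_FLAG = "--feature-gates=RuntimeClass=true"


def runtime_class(name: str, handler: str) -> Dict:
    """Build a RuntimeClass in the assumed alpha (v1alpha1) shape."""
    return {
        "apiVersion": "node.k8s.io/v1alpha1",
        "kind": "RuntimeClass",
        "metadata": {"name": name},
        # Must match a runtime handler configured in CRI-O or containerd.
        "spec": {"runtimeHandler": handler},
    }


# Assumption: handler names equal the class names.
classes: List[Dict] = [runtime_class(n, n) for n in ("kata-qemu", "kata-fc")]
print(json.dumps({"apiVersion": "v1", "kind": "List", "items": classes}, indent=2))
```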

As previously mentioned, the Firecracker hypervisor is minimal by design. As a result, there will always be gaps in Kubernetes functionality when using Kata + Firecracker. One such gap is the inability to dynamically adjust a pod’s memory and CPU definitions. Similarly, because Firecracker supports only block-based storage drivers and volumes, devicemapper is currently required. This is available in Kubernetes + CRI-O and in Docker version 18.06. Work is ongoing to add more storage driver options. See this GitHub issue for the current limitations of Kata + Firecracker.

For the Kata Containers 1.5 release, Firecracker is included in our Kata Containers static release tarball, which contains all of the configurations and binaries needed to run Kata + Firecracker. We are working to make this available as packages in the near future. To test this with the Docker CLI, see the getting started guide, which explains how to bring up workloads with either Firecracker or QEMU for added isolation.
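
With Docker, an additional runtime is registered in the daemon’s `daemon.json` under the `runtimes` key and then selected per container with `docker run --runtime`. The Python sketch below merges two such entries into an existing configuration. The `/opt/kata/bin` prefix and the `kata-fc`/`kata-qemu` wrapper names are assumptions about the static tarball’s layout; the getting started guide has the authoritative paths.

```python
import copy
import json
from typing import Any, Dict

# Assumed install location of the static tarball's runtime wrappers.
KATA_BIN = "/opt/kata/bin"


def add_kata_runtimes(daemon_config: Dict[str, Any]) -> Dict[str, Any]:
    """Return a copy of a daemon.json dict with kata-fc and kata-qemu runtimes
    registered, preserving any existing settings and runtimes."""
    config = copy.deepcopy(daemon_config)
    runtimes = config.setdefault("runtimes", {})
    for name in ("kata-fc", "kata-qemu"):
        runtimes[name] = {"path": f"{KATA_BIN}/{name}"}
    return config


# Firecracker needs block-based storage, hence devicemapper here.
current = {"storage-driver": "devicemapper"}
print(json.dumps(add_kata_runtimes(current), indent=2))
```

After writing the result to `/etc/docker/daemon.json` and restarting the Docker daemon, a container could be started under Firecracker with `docker run --runtime=kata-fc -it busybox sh`, or under QEMU with `--runtime=kata-qemu`.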

Looking beyond 1.5, we are planning plenty of enhancements for better Firecracker support:

- Improved block-based storage driver support

- Use the Firecracker Jailer to provide stronger host isolation

- Optimizations around boot-time

- Reference admission controller to help select appropriate hypervisor isolation when using Kata Containers
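
The reference admission controller is still planned, so what follows is only a hypothetical sketch of the mechanism such a controller could use: a Kubernetes mutating admission webhook answers an AdmissionReview with a base64-encoded JSON Patch. The helper name is ours, and the policy that decides which runtimeClass to request is deliberately left to the caller.

```python
import base64
import json
from typing import Any, Dict


def runtime_class_admission_response(uid: str, pod: Dict[str, Any],
                                     runtime_class: str) -> Dict[str, Any]:
    """Build the `response` of an AdmissionReview that sets the pod's
    spec.runtimeClassName, unless the pod already requests one."""
    response: Dict[str, Any] = {"uid": uid, "allowed": True}
    if pod.get("spec", {}).get("runtimeClassName"):
        return response  # respect an explicit choice by the workload owner
    patch = [{"op": "add", "path": "/spec/runtimeClassName", "value": runtime_class}]
    response["patchType"] = "JSONPatch"
    response["patch"] = base64.b64encode(json.dumps(patch).encode()).decode()
    return response


pod = {"spec": {"containers": [{"name": "app", "image": "busybox"}]}}
print(runtime_class_admission_response("1234", pod, "kata-fc"))
```

A JSON Patch `add` operation sets the field whether or not it already exists, and skipping pods that already name a runtimeClass keeps explicit user choices intact.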

We’re excited to be working with the Firecracker team and to continue improving our support for the Firecracker VMM and its integration with Kubernetes.

The Kata Containers community is stewarded by the OpenStack Foundation (OSF), which supports the development and adoption of open infrastructure globally by hosting open source projects and communities of practice. Kata’s open source community produces code under the Apache 2 license. Anyone is welcome to join and contribute code, documentation, and use cases.

Meet and collaborate with the Kata team at the Open Infrastructure Summit in Denver, April 29 – May 1, 2019, where we will have several presentations, as well as collaborative working sessions where we can discuss the roadmap and planning, and do hands-on hacking to improve the project. In the meantime, you can explore Kata on GitHub and KataContainers.io, and get involved with the community via these channels:

- Slack or IRC Freenode: #kata-dev

- Mailing List

- Weekly meetings

You can find more detail in Eric Ernst’s Kata Containers 1.5 release post.

The content and opinions in this post are those of the third-party author and AWS is not responsible for the content or accuracy of this post.