AWS Partner Network (APN) Blog

Getting the “Ops” Half of DevOps Right: Automation and Self-Service Infrastructure

Next-generation Managed Services Providers (MSPs) offer customers significant value today, well beyond the after-the-fact notifications and outsourced help desk services that were common with early, traditional MSPs. Today’s cloud-evolved MSPs can drive transformative business outcomes, including DevOps transformations. This week in the MSP Partner Spotlight series, we hear from Jason McKay, CTO of Logicworks, who writes about the importance of automation in operations and how Logicworks helps its customers meet their DevOps goals.

By Jason McKay, CTO of Logicworks

DevOps has been a major cultural force in IT for the past ten years. But a gap remains between what companies expect to get out of DevOps and the day-to-day realities of working on an IT team.

Over the past ten years, I’ve helped hundreds of IT teams manage a DevOps cultural shift as part of my role as CTO of Logicworks. Many of the companies we work with have established a customer-focused culture and have made some investments in application delivery and automation, such as building a CI/CD pipeline, automating code testing, and more.

But the vast majority of those companies still struggle with IT operations. Their systems engineers spend far too much time putting out fires and manually building, configuring, and maintaining infrastructure. A recent survey found that it takes more than a month to deliver new infrastructure for 33 percent of companies, and more than half had no access to self-service infrastructure. The result is that systems engineers burn out quickly, developers are frustrated, and new projects are delayed. Add to the mix a constantly shifting regulatory landscape and dozens of new platforms and tools to support, and chances are that your operations team is pretty overwhelmed.

Migrating to Amazon Web Services (AWS) is often the first step to improving infrastructure and security operations for DevOps teams. AWS is the foundation for infrastructure delivery for the largest and most mature DevOps teams in the world, but running IT operations on AWS the same way you did on traditional infrastructure is simply not going to work.

The Power of Automation

Transforming operations teams for DevOps begins with a cultural shift in the way engineers perceive infrastructure. You’ve no doubt heard it before: Operations can no longer be the culture of “no.” Keeping the lights on is no longer enough.

The key technology and process change that supports this cultural change is infrastructure automation. If you’re already running on AWS, there is no better cloud service for building a mature infrastructure automation practice—it integrates with what your developers are doing to automate code deployment, and makes it easier for your company to launch and test new software.

AWS has all the tools you need. But you also need people who know how to use those tools. That’s what Logicworks helps companies do. We are an extension of our clients’ IT teams, helping them figure out IT operations on AWS in this new world of DevOps and constant change.

Our corporate history mirrors the journey most companies are going through today. Ten years ago, the engineers at Logicworks also spent most of their time nurturing physical systems back to health, responding to crises, and manually maintaining systems. When Amazon Web Services launched, we initially wondered if we would have a place in this new paradigm. Where does infrastructure maintenance fit in a world where companies want infrastructure “out of the way” of their fast-moving development teams? Then we realized that not only could we keep managing infrastructure, but we could do something an order of magnitude more sophisticated and elegant for our clients. We started to approach the business not as racking and stacking hardware, but instead using AWS to create responsive and customized infrastructure without human intervention. That really changed the business model.

Today our engineers spend their time writing and maintaining automation scripts that orchestrate AWS infrastructure, not manually maintaining the thousands of instances under our control. In many ways, we have become a software company. We write custom software for each client that makes it easier for their operations teams to deliver AWS infrastructure quickly and securely. Of course, we still have 24x7x365 NOC teams, networking professionals, DBAs, etc., but all of our teams approach every infrastructure problem with the same two questions: How can we perform this (repetitive, manual) task smarter? How can we stop doing this over and over and focus on solutions that make a substantial difference for our customers?

Infrastructure Automation in Practice

Many of the best practices of software development — continuous integration, versioning, automated testing — are now the best practices of systems engineers. In enterprises that have embraced the public cloud, servers, switches, and hypervisors are now strings and brackets in JavaScript Object Notation (JSON). The scripts that spin up an instance or configure a network can be standardized, modified over time, and reused. These scripts are essentially software applications that build infrastructure and are maintained much like a piece of software. They are versioned in GitHub and engineers patch the scripts or containers, not the hardware, and test those scripts again and again on multiple projects until they are perfected.
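To make the "infrastructure as strings and brackets" idea concrete, here is a minimal sketch in Python of a template that is generated, serialized to JSON, and ready to be committed to a repository. It is an illustration only, not Logicworks' actual tooling: the function name `baseline_template`, the resource name `WebServer`, the tag, and the placeholder image ID `ami-EXAMPLE` are all assumptions made for the example.

```python
import json


def baseline_template(instance_type="t3.micro", image_id="ami-EXAMPLE"):
    """Return a minimal CloudFormation template as a plain Python dict.

    `image_id` is a placeholder, not a real AMI; substitute your own.
    """
    return {
        "AWSTemplateFormatVersion": "2010-09-09",
        "Description": "Hypothetical baseline web server",
        "Parameters": {
            "InstanceType": {"Type": "String", "Default": instance_type},
        },
        "Resources": {
            "WebServer": {
                "Type": "AWS::EC2::Instance",
                "Properties": {
                    "InstanceType": {"Ref": "InstanceType"},
                    "ImageId": image_id,
                    "Tags": [{"Key": "Environment", "Value": "test"}],
                },
            }
        },
    }


template = baseline_template()

# sort_keys gives a stable key order, which keeps version-control diffs small.
rendered = json.dumps(template, indent=2, sort_keys=True)
print(rendered)
```

Because the template is ordinary data, it can be reviewed, diffed, and tested like any other source file before it ever reaches AWS CloudFormation.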

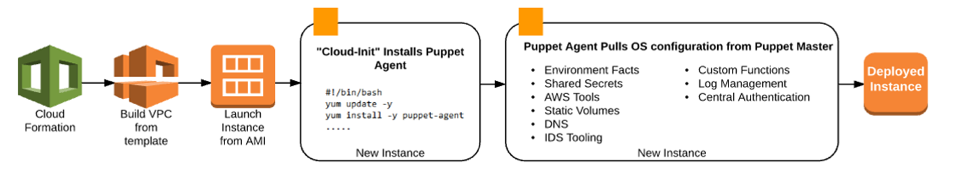

An example of an instance build-out process with AWS CloudFormation and Puppet.

In practice, infrastructure automation usually addresses four principal areas:

- Creating a standard operating environment or baseline infrastructure template in AWS CloudFormation that lives in a code repository and gets versioned and tested.

- Coordinating with security teams to automate key tools, packages, and configurations, usually with a configuration management tool such as Puppet or Chef, together with Amazon EC2 Systems Manager.

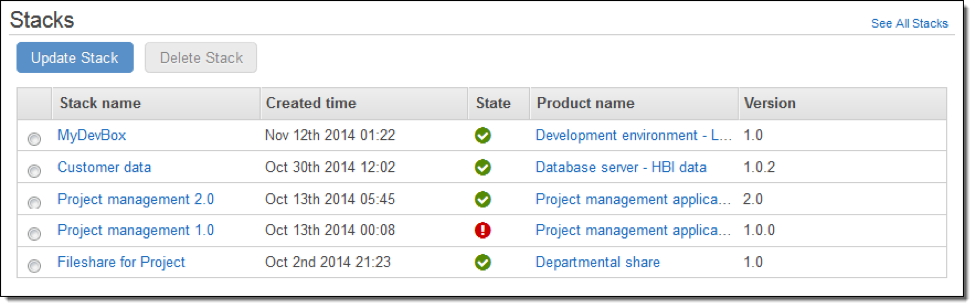

- Delivering infrastructure templates to developers in the form of a self-service portal, such as the AWS Service Catalog.

- Ensuring that all templates and configurations are maintained consistently over time and across multiple environments/accounts, usually in the form of automated tests in a central utility hub that can be built with Jenkins, Amazon Inspector, AWS Config, Amazon CloudWatch, AWS Lambda, and Puppet or Chef.
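The last item in the list above, automated tests for templates, can be sketched as a small static check that a CI job (for example, one run by Jenkins) might execute against every template in the repository. This is a simplified illustration under assumed house rules: the required tag set `REQUIRED_TAGS`, the function name `check_template`, and the two rules themselves are hypothetical, not a description of any specific AWS or Logicworks tool.

```python
REQUIRED_TAGS = {"Owner", "Environment"}  # hypothetical house rule


def check_template(template):
    """Return a list of human-readable violations found in a template dict."""
    violations = []
    for name, resource in template.get("Resources", {}).items():
        props = resource.get("Properties", {})
        rtype = resource.get("Type")

        if rtype == "AWS::EC2::Instance":
            tag_keys = {tag["Key"] for tag in props.get("Tags", [])}
            missing = REQUIRED_TAGS - tag_keys
            if missing:
                violations.append(f"{name}: missing tags {sorted(missing)}")

        if rtype == "AWS::EC2::SecurityGroup":
            for rule in props.get("SecurityGroupIngress", []):
                # A rule without ports (e.g. all protocols) covers every port.
                low = rule.get("FromPort", 0)
                high = rule.get("ToPort", 65535)
                if rule.get("CidrIp") == "0.0.0.0/0" and low <= 22 <= high:
                    violations.append(f"{name}: SSH (port 22) open to 0.0.0.0/0")
    return violations


example = {
    "Resources": {
        "WebServer": {
            "Type": "AWS::EC2::Instance",
            "Properties": {"Tags": [{"Key": "Owner", "Value": "ops"}]},
        },
        "WebSG": {
            "Type": "AWS::EC2::SecurityGroup",
            "Properties": {
                "GroupDescription": "web",
                "SecurityGroupIngress": [
                    {"IpProtocol": "tcp", "FromPort": 22, "ToPort": 22,
                     "CidrIp": "0.0.0.0/0"}
                ],
            },
        },
    }
}

violations = check_template(example)
for violation in violations:
    print(violation)
```

A check like this fails the build before a non-compliant template reaches the self-service catalog, which is cheaper than finding the problem in a running account.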

Together, this set of practices makes it possible for a developer to choose an AWS CloudFormation template in AWS Service Catalog, and in minutes, have a ready-to-use stack that is pre-configured with standard security tools, their desired OS, and packages. Your developers only launch approved infrastructure, never have to touch your infrastructure configuration files, and no longer wait a month to get new infrastructure. Imagine what your developers could test and accomplish when they’re not hampered by lengthy operations cycles.

This system obviously has a big impact on system availability and security. If an environment fails or breaks during testing, you can just trash it and spin up another testing stack. If you need to make a change to your infrastructure, you change the AWS CloudFormation template or configuration management script, and relaunch the stack. This is the true meaning of “disposable infrastructure”, also known as “immutable infrastructure”—once you instantiate a version of the infrastructure, you never change it. Since your infrastructure is frequently replaced, the potential for outdated configurations or packages that expose security vulnerabilities is significantly reduced.
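One way to picture the "never change it, replace it" rule is to name each stack after a hash of its template, so that any template change necessarily produces a new stack instead of an in-place edit. The sketch below assumes that naming convention; the helpers `template_version` and `plan_replacement` are hypothetical, and the sketch only plans the replacement without calling any AWS API.

```python
import hashlib
import json


def template_version(template):
    """Derive a short, stable version ID from the template's content."""
    canonical = json.dumps(template, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()[:12]


def plan_replacement(running_stacks, app_name, template):
    """Plan which stack to launch and which to retire for one application.

    `running_stacks` is a set of stack names shaped like '<app>-<version>'.
    Nothing is modified in place: a changed template yields a new name.
    """
    desired = f"{app_name}-{template_version(template)}"
    same_app = sorted(
        name for name in running_stacks if name.rsplit("-", 1)[0] == app_name
    )
    return {
        "launch": None if desired in running_stacks else desired,
        "retire": [name for name in same_app if name != desired],
    }


def web_template(instance_type):
    return {
        "Resources": {
            "WebServer": {
                "Type": "AWS::EC2::Instance",
                "Properties": {"InstanceType": instance_type},
            }
        }
    }


v1 = web_template("t3.micro")
v2 = web_template("t3.small")

running = {f"web-{template_version(v1)}"}
plan = plan_replacement(running, "web", v2)
print(plan)
```

Re-running the plan with an unchanged template returns nothing to launch and nothing to retire, so the same script can run on every commit.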

Example of AWS Service Catalog.

This is why the work we do at Logicworks to automate infrastructure is so appealing to companies in risk-averse industries. Most of our customers are in healthcare, financial services, and software-as-a-service because they need infrastructure configurations to be applied consistently, need to prove to auditors that those configurations are applied across multiple accounts, and want every change clearly documented in code. Automated tests ensure that any configuration change is either corrected proactively or triggers an alert to a 24×7 engineer.
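The "correct it or alert someone" behavior can be sketched as a comparison between the configuration that is expected and the configuration that is observed. This is a toy model rather than a description of AWS Config, Puppet, or any Logicworks system: the setting names, the `AUTO_FIXABLE` set, and the `reconcile` function are all assumptions made for illustration.

```python
# Settings that are assumed safe to fix without a human (hypothetical list).
AUTO_FIXABLE = {"ntp_server", "log_retention_days"}


def reconcile(expected, actual):
    """Compare expected and observed settings and decide how to respond.

    Returns a list of (action, setting, observed, expected) tuples, where
    action is 'correct' for auto-fixable drift and 'alert' for the rest.
    """
    actions = []
    for key, want in expected.items():
        have = actual.get(key)
        if have == want:
            continue
        action = "correct" if key in AUTO_FIXABLE else "alert"
        actions.append((action, key, have, want))
    return actions


expected = {
    "ntp_server": "time.example.internal",
    "log_retention_days": 90,
    "ssh_root_login": False,
}
actual = {
    "ntp_server": "time.example.internal",
    "log_retention_days": 30,
    "ssh_root_login": True,
}

actions = reconcile(expected, actual)
for action in actions:
    print(action)
```

Because the expected values live in code, the same file that drives the check also serves as the documented record of what the configuration is supposed to be.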

Responsibilities and External Support

If you’re managing your own infrastructure, your operations team is responsible for everything up to (and including) the Service Catalog layer. Your developers are responsible for making code work. That creates a nice, clear line of responsibility that simplifies communication and usually makes developer and ops relationships less fraught.

If you’re working with an external cloud managed service provider, look for one that prioritizes infrastructure automation. Companies that work with Logicworks appreciate that we have abandoned the old style of managed services. Long gone are the days when you paid a lot of money just to have a company alert you after something went wrong, or when a managed service provider was little more than an outsourced help desk. AWS fundamentally changed the way the world looks at infrastructure. AWS has also changed what companies expect from outsourced infrastructure support, and has redefined what it means to be a managed services provider. Logicworks is proud to have been among the first group of MSPs to become an audited AWS MSP Partner and to have earned the DevOps and Security Competencies, among others. We have evolved to continue adding value for our customers and to help them achieve DevOps goals from the operations side, not just to keep the lights on.

Whether you outsource infrastructure operations or keep it in-house, the most important thing to remember is that you cannot create a culture that innovates at a higher velocity if your AWS infrastructure is built and maintained manually. Don’t ignore operations in your enthusiasm to build and automate code delivery. Prioritize automation for your operations team so that they can stop firefighting and start delivering value for your business.