AWS Architecture Blog

Issues to Avoid When Implementing Serverless Architecture with AWS Lambda

There are many articles and plenty of advice on using AWS Lambda. I’d like to show you how to avoid some common issues so you can build the most effective architecture.

Technologies emerge and become outdated quickly. As a result, solutions that look right at first but cause problems later, otherwise known as anti-patterns, can prevent you from building a cost-optimized, performant, and resilient IT system. In this post, I highlight eight common anti-patterns I’ve seen and recommend ways to avoid them so that your system performs at its best.

1. Using monolithic Lambda functions with a large code base

During a lengthy project, your Lambda function code might grow bigger and bigger. This leads to longer Lambda invocation times and makes maintaining the function code more complex.

Here are my recommendations to avoid this:

- Break up large Lambda functions into smaller ones with less business logic inside.

- Use AWS Step Functions to orchestrate multiple Lambda functions. This lets you control your Lambda implementation flow and benefit from features like error condition handling, loops, and input and output processing.

- Use Amazon EventBridge to exchange information between your serverless components. EventBridge is an event bus with rich functionality on filtering, logging, and forwarding event messages. This allows you to turn any event into a native AWS event. EventBridge supports more than 200 AWS native and partner event sources.
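As a minimal sketch of the Step Functions recommendation, the snippet below builds an Amazon States Language definition that chains two small functions and routes any failure to a third. The state names, the function roles, and the placeholder ARNs are assumptions made for illustration only.

```python
import json


def build_state_machine(validate_arn, transform_arn, notify_failure_arn):
    """Return an Amazon States Language definition as a Python dict.

    The three ARNs are placeholders for small, single-purpose Lambda
    functions (hypothetical names, not taken from a real project).
    """
    catch_all = [{"ErrorEquals": ["States.ALL"], "Next": "NotifyFailure"}]
    return {
        "Comment": "Orchestrates small Lambda functions instead of one monolith",
        "StartAt": "Validate",
        "States": {
            "Validate": {
                "Type": "Task",
                "Resource": validate_arn,
                # Retry failed task attempts with exponential backoff.
                "Retry": [{
                    "ErrorEquals": ["States.TaskFailed"],
                    "IntervalSeconds": 2,
                    "MaxAttempts": 3,
                    "BackoffRate": 2.0,
                }],
                "Catch": catch_all,
                "Next": "Transform",
            },
            "Transform": {
                "Type": "Task",
                "Resource": transform_arn,
                "Catch": catch_all,
                "End": True,
            },
            "NotifyFailure": {
                "Type": "Task",
                "Resource": notify_failure_arn,
                "End": True,
            },
        },
    }


definition = build_state_machine(
    "arn:aws:lambda:REGION:ACCOUNT_ID:function:validate",
    "arn:aws:lambda:REGION:ACCOUNT_ID:function:transform",
    "arn:aws:lambda:REGION:ACCOUNT_ID:function:notify-failure",
)
definition_json = json.dumps(definition, indent=2)
```

Keeping retries and error routing in the state machine means each function can stay focused on a single piece of business logic.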

2. Using synchronous architectures

I often see architectures where a Lambda function is responsible for various tasks that run one after another. For example, a Lambda function is configured to receive user-uploaded files through Amazon API Gateway. The function then transforms the file, saves the result to an Amazon Simple Storage Service (Amazon S3) bucket, and returns the HTTP reply to the user.

Such use cases lead to longer runtimes and higher Lambda implementation costs.

My suggested improvement for this case:

- Consider introducing an asynchronous workflow. For example, you can upload files directly to Amazon S3 from a web or mobile application. You can simultaneously run a separate long-running process that can handle data transformations and user notification features.

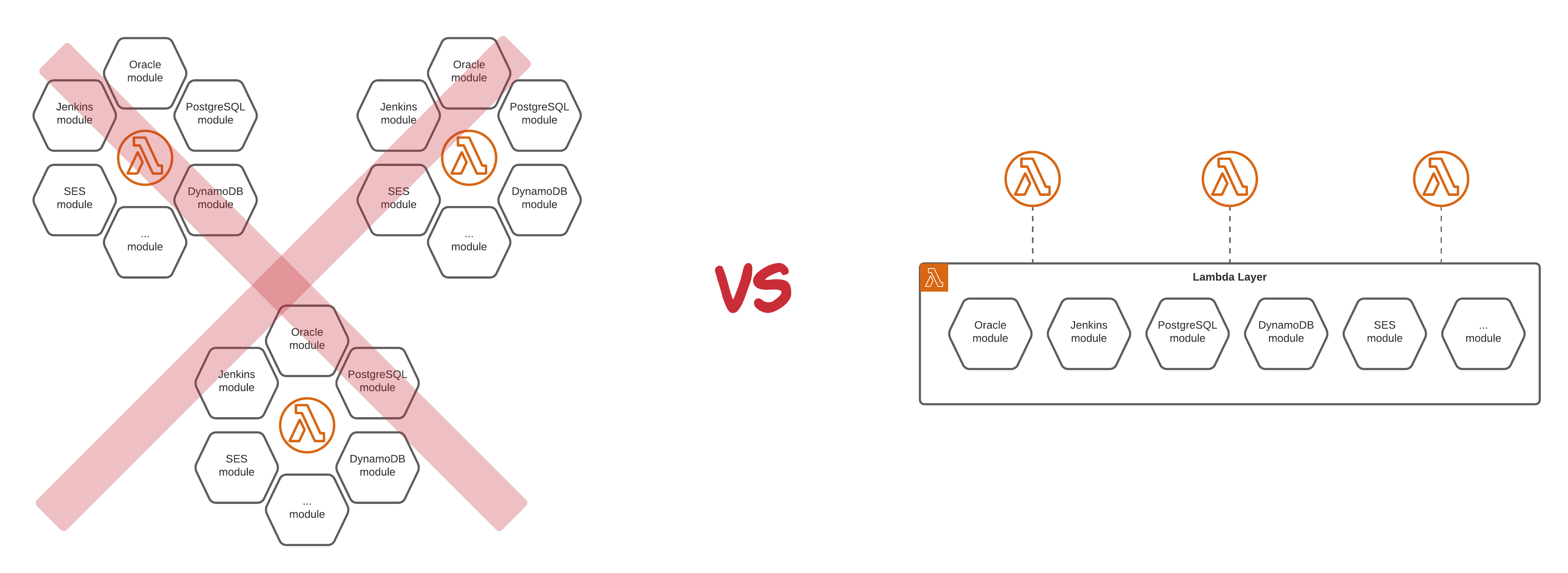
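The asynchronous side of this workflow could look like the sketch below: a Lambda handler that is invoked by an S3 event notification after the upload has finished, so the user never waits for the transformation. The `transform` helper and the `processed/` prefix are hypothetical placeholders for your own logic.

```python
from urllib.parse import unquote_plus


def transform(bucket, key):
    """Hypothetical placeholder for the real transformation step."""
    return f"processed/{key}"


def handler(event, context):
    """Handle an S3 event notification for newly uploaded objects."""
    results = []
    for record in event.get("Records", []):
        bucket = record["s3"]["bucket"]["name"]
        # Object keys arrive URL-encoded in S3 event notifications.
        key = unquote_plus(record["s3"]["object"]["key"])
        results.append({
            "bucket": bucket,
            "source": key,
            "target": transform(bucket, key),
        })
    return results
```

Because the upload goes straight to Amazon S3, this function only pays for the transformation time, not for the time spent receiving the file.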

3. Not managing the shared code base

I see many projects where people repeat the common Lambda function code while creating their applications. This leads to implementation inconsistency and technical debt, because sooner or later developers will modify the common code base in different parts of the project.

To avoid this anti-pattern, introduce the following:

- Lambda layers – The same code base or libraries can be mounted to your Lambda functions.

- Shared API – Replacing the common code base with a shared microservice API will help simplify the code base management and abstract its implementation for dependent services.
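To illustrate the Lambda layers option, here is a sketch of a shared helper used by two handlers. In a real project the helper would be packaged in a layer (for Python, under a `python/` directory in the layer archive, which Lambda adds to the import path) and imported by each function. It is inlined here only so the example runs on its own, and the module and handler names are hypothetical.

```python
import json


# --- Would live in a layer, for example python/shared_http.py (hypothetical) ---
def json_response(status_code, payload):
    """Build an API Gateway proxy-style response in one consistent way."""
    return {
        "statusCode": status_code,
        "headers": {"Content-Type": "application/json"},
        "body": json.dumps(payload),
    }


# --- Function A: would import json_response from the layer ---
def get_order_handler(event, context):
    return json_response(200, {"orderId": event.get("orderId")})


# --- Function B: reuses the same helper instead of copying it ---
def health_handler(event, context):
    return json_response(200, {"status": "ok"})
```

With the helper in one place, a change to the response format is made once and picked up by every function that uses the layer.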

4. Choosing the wrong Lambda function memory configuration

Right-sizing a Lambda function is similar to Amazon Elastic Compute Cloud (Amazon EC2) sizing. I see many cases where Lambda functions are provisioned with excessive memory, which can lead to added costs.

I recommend using the following goals to develop a “just right” sized Lambda function:

- Test your Lambda function to ensure that it is configured with the right memory and timeout values.

- Confirm that your code is optimized to run as efficiently as possible.

Determining the Lambda function’s right size may be challenging. It highly depends on the runtime environment and your code. To simplify this challenge, Alex Casalboni introduced a framework for Lambda Power Tuning. He created a state machine powered by Step Functions that helps you optimize your Lambda functions for cost and performance in a data-driven way. Additionally, AWS Compute Optimizer helps you identify the optimal Lambda function configuration for your workloads. The Optimizing AWS Lambda Cost and Performance using AWS Compute Optimizer blog post provides information to help you get started.

Because the output of the framework is JSON based, you may consider incorporating it into your continuous integration and continuous delivery (CI/CD) pipelines to automatically adjust the Lambda configuration.

Figure 4. Automated Lambda function optimization for cost and performance
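A pipeline step for this could be sketched as follows. It assumes the tuning output is a JSON object with a numeric `power` field holding the recommended memory in MB (check the output of your version of the framework), and that `lambda_client` is a boto3 Lambda client created elsewhere, for example with `boto3.client("lambda")`.

```python
MIN_MEMORY_MB = 128      # Lambda memory lower bound
MAX_MEMORY_MB = 10240    # Lambda memory upper bound


def recommended_memory_mb(tuning_output):
    """Extract and validate the recommended memory size.

    Assumes the tuning output carries the recommendation in a
    "power" field (an assumption about the framework's output).
    """
    memory = int(tuning_output["power"])
    if not MIN_MEMORY_MB <= memory <= MAX_MEMORY_MB:
        raise ValueError(f"memory {memory} MB is outside Lambda limits")
    return memory


def apply_tuning_result(lambda_client, function_name, tuning_output):
    """Update the function's memory setting from the tuning result."""
    memory = recommended_memory_mb(tuning_output)
    lambda_client.update_function_configuration(
        FunctionName=function_name,
        MemorySize=memory,
    )
    return memory
```

Passing the client in as a parameter keeps the step easy to test in the pipeline without calling AWS.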

5. Making Lambda functions dependent on a less scalable service

Lambda is a scalable solution. It can quickly overwhelm a less scalable service in your architecture. A Lambda function that directly accesses a single EC2 instance or Amazon Relational Database Service (Amazon RDS) database is a potentially dangerous integration, because many concurrent invocations can exhaust the connections or capacity of that resource.

Here’s how you can prevent potential problems:

- Minimize and decouple your dependencies on non-serverless services.

- Use asynchronous processing wherever possible. This lets you decouple microservices from each other and introduce buffering solutions.

- Implement a buffering Amazon Simple Queue Service (Amazon SQS) message queue in front of non-scalable or non-serverless services.

- Implement Amazon RDS Proxy in front of Amazon RDS to maintain predictable database performance by controlling the total number of database connections.
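The SQS buffering recommendation could be sketched on the consumer side like this: a Lambda handler that drains a batch of queue messages and reports only the failed ones, so they return to the queue instead of being lost or retried as a whole batch. This relies on partial batch responses (`ReportBatchItemFailures`) being enabled on the event source mapping, and `write_to_database` is a hypothetical stand-in for the call to the less scalable service.

```python
import json


def write_to_database(order):
    """Hypothetical stand-in for a call to the less scalable service."""
    if "id" not in order:
        raise ValueError("order has no id")


def handler(event, context):
    """Process an SQS batch and report per-message failures."""
    failures = []
    for record in event["Records"]:
        try:
            write_to_database(json.loads(record["body"]))
        except Exception:
            # Only this message becomes visible in the queue again.
            failures.append({"itemIdentifier": record["messageId"]})
    return {"batchItemFailures": failures}
```

The queue absorbs traffic spikes, and the downstream service only sees as much load as the consumer function is allowed to generate.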

6. Using a single AWS account to deploy your workloads

There are some hard limits for a single AWS account that can be reached fairly quickly. These include the number of elastic network interfaces and AWS CloudFormation stacks you are allowed to have.

There’s a fairly simple solution for avoiding this:

- Split your workloads and put them into different accounts. AWS Organizations and AWS Control Tower can manage several AWS accounts, even for non-corporate AWS customers.

7. Using Lambda where it is not the best fit

Lambda is ideal for short-lived workloads that can scale horizontally. The common Lambda application types and use cases provide information on where Lambda is an ideal choice. However, be careful when considering using Lambda for operations that might take a long time to run. If they’re not designed or coded properly, those operations might exceed the Lambda timeout.

A bit of advice:

- Consider using Lambda alongside purpose-built services such as Amazon EC2, Amazon Elastic Container Service (Amazon ECS), and Amazon Elastic Kubernetes Service (Amazon EKS), and let those services handle long-running compute tasks.
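When a function must process a variable amount of work, one way to avoid hitting the timeout is to check the remaining time and stop early. The sketch below uses the `get_remaining_time_in_millis()` method of the Lambda context object. The `process` helper, the `items` event field, and the 10-second safety margin are assumptions for illustration.

```python
SAFETY_MARGIN_MS = 10_000  # assumed margin; tune it for your workload


def process(item):
    """Hypothetical unit of work."""
    return item * 2


def handler(event, context):
    """Process items until time runs short, then hand back the rest."""
    items = list(event.get("items", []))
    processed = []
    while items and context.get_remaining_time_in_millis() > SAFETY_MARGIN_MS:
        processed.append(process(items.pop(0)))
    # The caller (or a queue) can re-submit whatever is left over.
    return {"processed": processed, "remaining": items}
```

If the leftover list is regularly non-empty, that is a signal the workload may belong on one of the longer-running compute services instead.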

8. No monitoring for implementation costs

There are many blogs and websites that cover controlling costs. However, many individuals and companies have not implemented measures to protect themselves.

One last piece of advice:

- Set up a daily spending limit in your account settings and an alarm to notify you when your limit is reached. This will not only save you money but will also likely help you resolve possible technical issues more quickly.
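One possible way to implement the alarm part is a CloudWatch alarm on the `EstimatedCharges` billing metric. The sketch below only builds the parameters, which you could then pass to a boto3 CloudWatch client with `put_metric_alarm(**params)`. It assumes billing alerts are enabled for the account, that the SNS topic ARN is a placeholder for a topic you own, and that a month-to-date threshold is an acceptable approximation, because this metric is cumulative for the month rather than daily.

```python
def billing_alarm_params(threshold_usd, sns_topic_arn):
    """Build put_metric_alarm parameters for an estimated-charges alarm."""
    return {
        "AlarmName": f"estimated-charges-over-{threshold_usd}-usd",
        "Namespace": "AWS/Billing",
        "MetricName": "EstimatedCharges",
        "Dimensions": [{"Name": "Currency", "Value": "USD"}],
        "Statistic": "Maximum",
        "Period": 21600,  # evaluate over 6-hour windows (assumed choice)
        "EvaluationPeriods": 1,
        "Threshold": float(threshold_usd),
        "ComparisonOperator": "GreaterThanThreshold",
        # Notifications go to this SNS topic (placeholder ARN).
        "AlarmActions": [sns_topic_arn],
    }


params = billing_alarm_params(
    50, "arn:aws:sns:us-east-1:ACCOUNT_ID:billing-alerts"
)
```

Billing metrics are published in the US East (N. Virginia) Region, so the client used to create the alarm would need to target `us-east-1`.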

Summary

This article showed you the eight most common serverless anti-patterns to avoid. The solutions I mentioned are applicable to the most common serverless application workloads.