Containers

Deploy applications on Amazon ECS using Docker Compose

Note: Docker Compose’s integration with Amazon ECS has been deprecated and is retiring in November 2023.

There are many reasons why containers have become popular since Docker democratized access to the core Linux primitives that make a “docker run” possible. One reason is that containers are not tied to a specific infrastructure or stack, so developers can move them around easily (typically from their laptops, through the data center, and all the way to the cloud). While we spend most of our time these days talking about container orchestrators, we should not forget that the true portability of containers comes from their packaging and standard runtime requirements, not only from the YAML choreography around them (although it is true that this YAML choreography creates a certain amount of operational burden and gravity).

According to Docker, “[Compose is] currently used by millions of developers and with over 650,000 Compose files on GitHub.” There is a good reason for that: Docker Compose is an elegant yet very simple way to describe your containerized application stack. This format has been used, and will continue to be used, by thousands of developers to run applications that require multiple Docker containers and service-to-service communication. Often, these developers are also looking for a convenient way to run their code on AWS.

It was with this spirit in mind that AWS and Docker, earlier this year, started to collaborate on the open Docker Compose specification to create a path for developers using the Docker Compose format to deploy their applications on Amazon ECS and AWS Fargate. In July, Docker released a beta for Docker Desktop that embedded these functionalities and, on September 15th, Docker released an updated experience in their Docker Desktop stable channel.

Today, we are releasing another set of features and we are graduating this integration to general availability.

With all this background out of the way, let’s get our hands dirty. The remainder of this blog is structured around two major themes:

- Using Docker Compose to extend existing investments

- Using Docker Compose to improve the ECS developer experience

In addition to these themes, there is a third and final section with some considerations and practical suggestions related to this integration.

Using Docker Compose to extend existing investments

Four years ago, I created a simple (yet representative of real life) application that I could use as a basis for learning new technologies. Instead of focusing on a technology in the abstract and trying out the tutorial that came with it, I wanted to focus on “my application” and try to use the technology in my own, existing context. At the end of the day, this is what real customers do. Their goal isn’t to run the example successfully, but rather to apply the technology they are evaluating to their own application stack. I simply wanted to mimic customers’ technology adoption patterns.

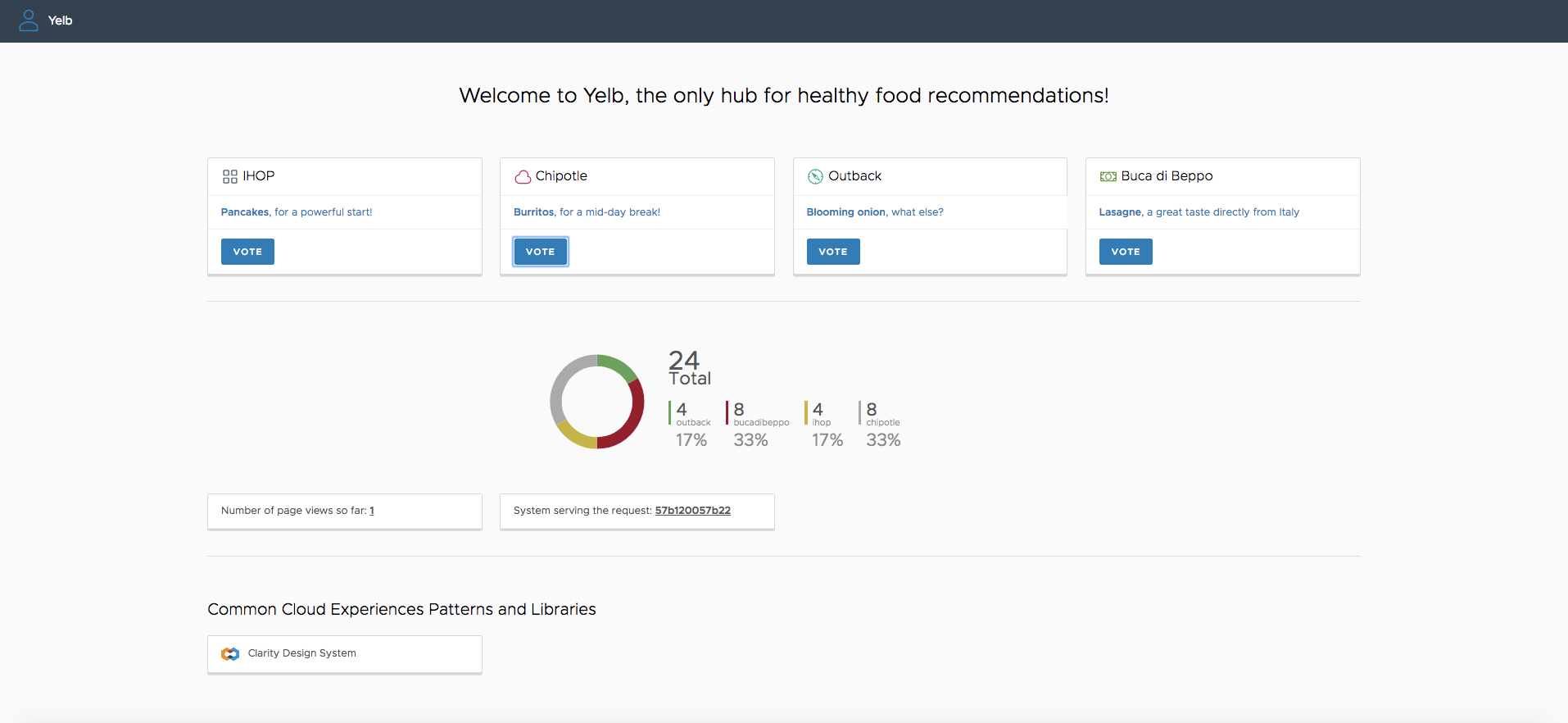

Enter Yelb. Yelb is a traditional web application with four components: a user interface, an application server, a cache server, and a database.

As I started to learn different container-based solutions throughout the years, I applied those to Yelb. Today you can deploy a containerized version of Yelb with Docker Compose, Kubernetes, and ECS. This required me to author all of the YAML choreography for each of the orchestrators (in the specific order I have mentioned) while using the same container images.

Since we started this collaboration with Docker, I kept asking: “What if I did not want to, or could not, spend time re-authoring the original Docker Compose YAML file into a native ECS YAML file? Would this Docker Compose/ECS integration work for Yelb?” My Yelb Docker Compose file is one of those 650,000 I mentioned above.

Interestingly, my test did work out of the box with the Docker Compose file I authored more than 4 years ago.

Let me show you what the developer experience looks like. Later, we will touch on the mechanics of how this works behind the scenes. For now, the only things you need are Docker Desktop and an AWS account. For this test, I am using Docker Desktop (stable) version 2.5.0.1.

The first thing you need to do is clone the Yelb repository and move to the deployment directory that includes the Compose YAML file in the repository (docker-compose.yaml):

➜ git clone https://github.com/mreferre/yelb

Cloning into 'yelb'...

remote: Enumerating objects: 4, done.

remote: Counting objects: 100% (4/4), done.

remote: Compressing objects: 100% (4/4), done.

remote: Total 805 (delta 0), reused 0 (delta 0), pack-reused 801

Receiving objects: 100% (805/805), 3.41 MiB | 1.25 MiB/s, done.

Resolving deltas: 100% (416/416), done.

➜ cd ./yelb/deployments/platformdeployment/Docker/

➜ ls

README.md docker-compose.yaml stack-deploy.yaml

Now we can docker-compose up the Compose YAML file in the repository. By default, this will instantiate the Yelb application on your workstation:

➜ docker-compose up -d

Creating network "docker_yelb-network" with driver "bridge"

Creating docker_yelb-db_1 ... done

Creating docker_redis-server_1 ... done

Creating docker_yelb-appserver_1 ... done

Creating docker_yelb-ui_1 ... done

You can test that everything is working by pointing your web browser to http://localhost on your machine. You should see the Yelb voting app:

If the test was successful, you can now tear your local stack down running docker-compose down. If you are coming from a Docker background, you have probably used this workflow thousands of times for a number of years. Nothing new here.

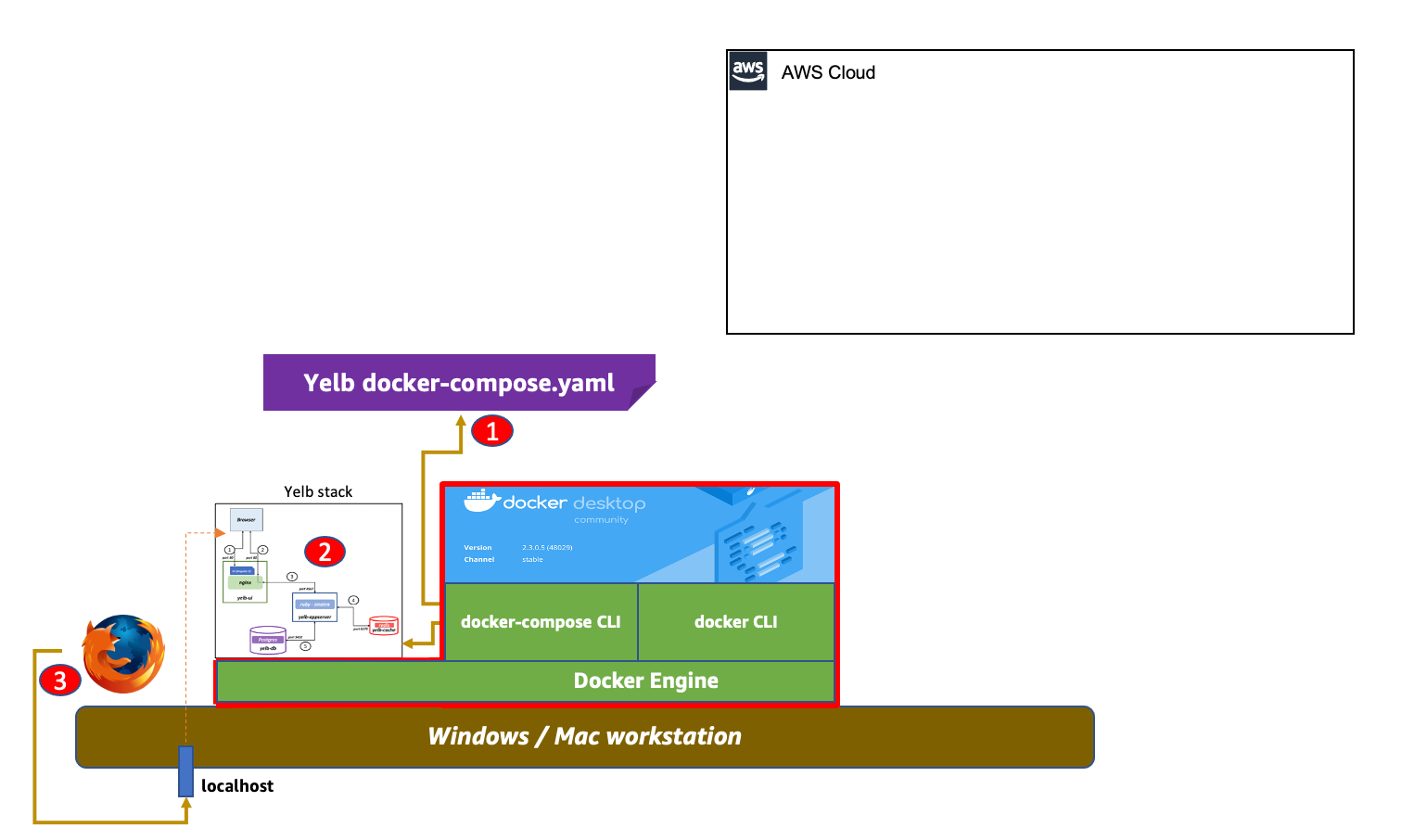

As we often say, a picture is worth 1000 words. This is a visual representation of the flow we have just executed (note how nothing is being deployed, yet, on the AWS cloud):

Let’s now see how we can deploy the same stack to ECS. To do this we need to prepare our Docker Desktop environment.

First, we will create a new docker context so that the Docker CLI can point to a different endpoint. By default, Docker points to a local context called default (that is the Docker runtime on your machine) but we will add an Amazon ECS context using the command docker context create ecs.

A note on the AWS credentials: if you are already familiar with AWS, you probably already have your AWS CLI environment set up with either a default profile or named profiles. That’s fine; the Docker CLI can use those credentials. If not, the Docker workflow can either read your AWS credentials from the environment variables (AWS_ACCESS_KEY_ID and AWS_SECRET_ACCESS_KEY) or ask you for those credentials and store them for you (in $HOME/.aws/credentials).
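For reference, the AWS CLI and SDKs check these two sources in a fixed order: environment variables first, then a profile in the shared credentials file. The Python sketch below (standard library only) illustrates that lookup. It is not the Docker CLI's implementation, and `resolve_credentials` is a hypothetical name.

```python
import configparser
import os
from pathlib import Path
from typing import Mapping, Optional, Tuple


def resolve_credentials(
    env: Mapping[str, str],
    credentials_file: Path,
    profile: str = "default",
) -> Optional[Tuple[str, str]]:
    """Return (access_key_id, secret_access_key), or None if nothing is configured.

    Environment variables win; otherwise fall back to the named profile in an
    INI-style shared credentials file such as ~/.aws/credentials.
    """
    key_id = env.get("AWS_ACCESS_KEY_ID")
    secret = env.get("AWS_SECRET_ACCESS_KEY")
    if key_id and secret:
        return key_id, secret

    parser = configparser.ConfigParser()
    if not parser.read(credentials_file):  # read() returns the list of files it parsed
        return None
    if not parser.has_section(profile):
        return None
    section = parser[profile]
    if "aws_access_key_id" in section and "aws_secret_access_key" in section:
        return section["aws_access_key_id"], section["aws_secret_access_key"]
    return None


if __name__ == "__main__":
    found = resolve_credentials(os.environ, Path.home() / ".aws" / "credentials")
    # Report only whether credentials exist; never print the secret itself.
    print("credentials found" if found else "no credentials found")
```

Passing the environment and file path in as arguments keeps the function easy to test. It covers only the two sources mentioned above, not every provider the SDKs support.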

In this example, I am pointing the Docker workflow to my existing AWS profile:

➜ docker context create ecs myecscontext

? Create a Docker context using: An existing AWS profile

? Select AWS Profile default

Successfully created ecs context "myecscontext"

Now Docker has an additional context called myecscontext (of type ecs) that points to my existing AWS CLI default profile. Note that we are also setting myecscontext as our new active context (the active context is marked with a *):

➜ docker context ls

NAME TYPE DESCRIPTION DOCKER ENDPOINT KUBERNETES ENDPOINT ORCHESTRATOR

default * moby Current DOCKER_HOST based configuration unix:///var/run/docker.sock swarm

myecscontext ecs

➜ docker context use myecscontext

myecscontext

➜ docker context ls

NAME TYPE DESCRIPTION DOCKER ENDPOINT KUBERNETES ENDPOINT ORCHESTRATOR

default moby Current DOCKER_HOST based configuration unix:///var/run/docker.sock swarm

myecscontext * ecs

The credentials in the AWS profile must have sufficient permissions to deploy the application in AWS. This includes permissions to create, for example, VPCs, ECS tasks, Load Balancers, etc.

We are now going to bring the Yelb stack live on the cloud.

Note how I am now using the main docker binary to do this, instead of the docker-compose binary I used above to deploy locally. The docker binary now comes with expanded functionality, including docker compose up (more on this later).

➜ docker compose up

WARN[0001] networks.driver: unsupported attribute

[+] Running 26/26

⠿ docker CreateComplete 345.5s

⠿ YelbuiTCP80TargetGroup CreateComplete 0.0s

⠿ LogGroup CreateComplete 2.2s

⠿ YelbuiTaskExecutionRole CreateComplete 14.0s

⠿ YelbnetworkNetwork CreateComplete 5.0s

⠿ YelbdbTaskExecutionRole CreateComplete 14.0s

⠿ CloudMap CreateComplete 48.3s

⠿ Cluster CreateComplete 6.0s

⠿ YelbappserverTaskExecutionRole CreateComplete 14.0s

⠿ RedisserverTaskExecutionRole CreateComplete 13.0s

⠿ YelbnetworkNetworkIngress CreateComplete 0.0s

⠿ Yelbnetwork80Ingress CreateComplete 1.0s

⠿ LoadBalancer CreateComplete 122.5s

⠿ RedisserverTaskDefinition CreateComplete 4.0s

⠿ YelbappserverTaskDefinition CreateComplete 3.0s

⠿ YelbuiTaskDefinition CreateComplete 3.0s

⠿ YelbdbTaskDefinition CreateComplete 3.0s

⠿ RedisserverServiceDiscoveryEntry CreateComplete 1.1s

⠿ YelbdbServiceDiscoveryEntry CreateComplete 5.5s

⠿ YelbuiServiceDiscoveryEntry CreateComplete 4.4s

⠿ YelbappserverServiceDiscoveryEntry CreateComplete 4.4s

⠿ RedisserverService CreateComplete 68.2s

⠿ YelbdbService CreateComplete 77.7s

⠿ YelbuiTCP80Listener CreateComplete 5.4s

⠿ YelbappserverService CreateComplete 108.5s

⠿ YelbuiService CreateComplete 76.6s

➜ docker compose ps

ID NAME REPLICAS PORTS

docker-RedisserverService-bs6RqrSUuIux redis-server 1/1

docker-YelbappserverService-yG2xExxLjU6D yelb-appserver 1/1

docker-YelbdbService-RDGo1mRenFMt yelb-db 1/1

docker-YelbuiService-X0bPBdwZmNcC yelb-ui 1/1 docke-LoadB-C7CWCW0SZZCC-240648981.us-east-1.elb.amazonaws.com:80->80/http

If you look at the details of this Compose stack (docker compose ps), you will see that the yelb-ui component is exposed on a particular port of a particular endpoint. In the example above, when I point my browser to http://docke-LoadB-C7CWCW0SZZCC-240648981.us-east-1.elb.amazonaws.com:80, I see the very same application (described in the same Docker Compose file) deployed on ECS and Fargate.
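If you would rather script that check than open a browser, the Python sketch below turns the PORTS entry from docker compose ps into a URL and probes it. It assumes the `host:published->target/protocol` shape shown in the output above. `endpoint_url` and `is_up` are hypothetical helpers, not part of any Docker tooling.

```python
import re
import urllib.request
from typing import Optional

# Matches a PORTS entry such as "<host>:80->80/http" from `docker compose ps`.
PORT_ENTRY = re.compile(
    r"^(?P<host>[^:\s]+):(?P<published>\d+)->(?P<target>\d+)/(?P<proto>[a-z]+)$"
)


def endpoint_url(ports_column: str) -> Optional[str]:
    """Turn an http PORTS entry into a URL; return None for anything else."""
    match = PORT_ENTRY.match(ports_column.strip())
    if match is None or match["proto"] != "http":
        return None
    return f"http://{match['host']}:{match['published']}"


def is_up(url: str, timeout: float = 5.0) -> bool:
    """True if the endpoint answers with a non-error HTTP status."""
    try:
        with urllib.request.urlopen(url, timeout=timeout) as resp:
            return 200 <= resp.status < 400
    except OSError:  # URLError, HTTPError and socket timeouts all derive from OSError
        return False
```

For example, `is_up(endpoint_url(ports))` returns True once the load balancer starts routing to the yelb-ui task. The helper returns None for non-http entries rather than guessing a scheme.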

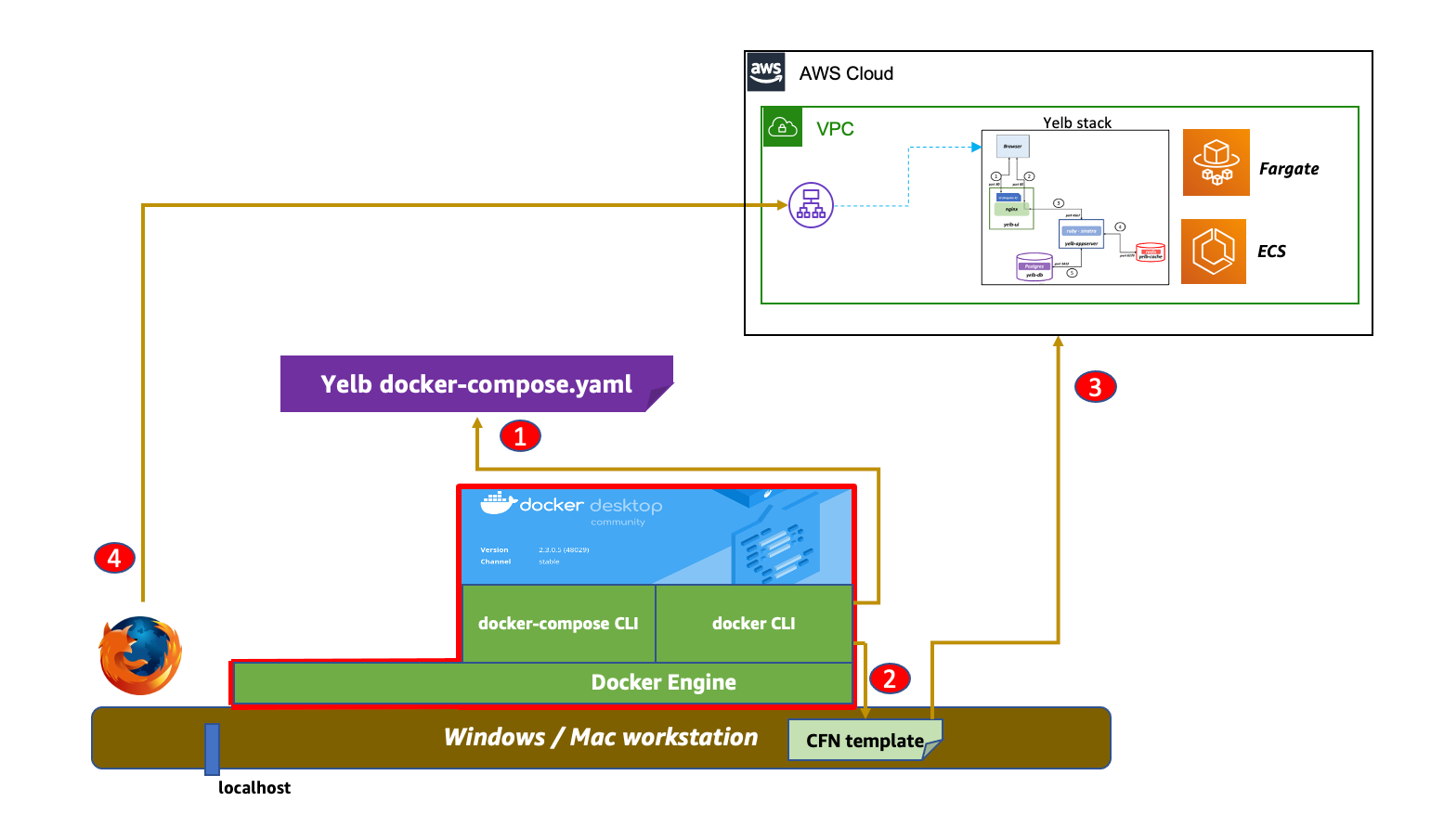

This is a visual representation of the flow we have used:

This is what happened behind the scenes:

- You run docker compose up and Docker reads the docker-compose.yaml file

- Docker converts the original Compose file on the fly into an AWS CloudFormation template

- Docker deploys the CloudFormation template on AWS
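The docker compose up log above hints at how the conversion names things. Each Compose service (for example yelb-ui) shows up as a set of CloudFormation resources with a normalized prefix (YelbuiTaskExecutionRole, YelbuiTaskDefinition, YelbuiServiceDiscoveryEntry, YelbuiService), and a published port adds a target group and a listener. The Python sketch below reproduces that naming pattern. It is inferred from the log output, not taken from the Compose CLI source, and both function names are hypothetical.

```python
import re
from typing import List


def logical_id(name: str) -> str:
    """Hypothetical normalization: drop non-alphanumerics, capitalize the first letter."""
    cleaned = re.sub(r"[^A-Za-z0-9]", "", name)
    return cleaned[:1].upper() + cleaned[1:]


def service_resources(service: str, published_ports: List[int]) -> List[str]:
    """Logical IDs we would expect for one Compose service, based on the log above."""
    base = logical_id(service)
    names = [
        f"{base}TaskExecutionRole",
        f"{base}TaskDefinition",
        f"{base}ServiceDiscoveryEntry",
        f"{base}Service",
    ]
    # Each published port gets a load balancer target group and listener.
    for port in published_ports:
        names += [f"{base}TCP{port}TargetGroup", f"{base}TCP{port}Listener"]
    return names


if __name__ == "__main__":
    yelb = {"yelb-ui": [80], "yelb-appserver": [], "yelb-db": [], "redis-server": []}
    for svc, ports in yelb.items():
        print(svc, "->", service_resources(svc, ports))
```

This covers per-service resources only. Shared resources such as Cluster, LoadBalancer, CloudMap, and LogGroup appear once per stack, and in the second example the x-aws-role extension also adds an EcsworkerinregionTaskRole.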

Using Docker Compose to improve the ECS developer experience

So far we have focused on how you could reuse a 4-year-old Docker Compose file. Now let’s see how this integration can make the experience better for future deployments.

The Docker Compose integration with Amazon ECS can make the developer experience better on at least a couple of dimensions: writing less YAML and being able to test your application locally (while connecting to cloud services).

The best way to explain this is to focus on another example. Imagine you need to deploy an application that uses the following architecture:

The application (running in the ECS task) reads messages from an SQS queue and processes files in two folders of an EFS volume.
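The post does not show the worker's code, so what follows is only a hypothetical sketch of such an application in Python with boto3. It assumes that each SQS message body names a file in EFS_SOURCE_FOLDER that should be moved to EFS_DESTINATION_FOLDER, and it reads the same environment variables that the Compose file in this section injects. `process_batch` and `main` are illustrative names. The boto3 calls (`client`, `receive_message`, `delete_message`) are the standard SQS client methods.

```python
import os
import shutil
import time
from pathlib import Path


def process_batch(sqs, queue_url: str, src: Path, dst: Path) -> int:
    """Receive up to 10 messages and move the file named in each body from src to dst.

    Assumption: each message body is a bare file name inside the source folder.
    Returns the number of messages handled.
    """
    response = sqs.receive_message(
        QueueUrl=queue_url, MaxNumberOfMessages=10, WaitTimeSeconds=20
    )
    handled = 0
    for message in response.get("Messages", []):
        name = Path(message["Body"].strip()).name  # ignore any directory components
        source = src / name
        if source.exists():
            dst.mkdir(parents=True, exist_ok=True)
            shutil.move(str(source), str(dst / name))
        # Delete the message so SQS does not redeliver it after the visibility timeout.
        sqs.delete_message(QueueUrl=queue_url, ReceiptHandle=message["ReceiptHandle"])
        handled += 1
    return handled


def main() -> None:
    import boto3  # imported lazily so process_batch can be tested without boto3

    queue_url = os.environ["SQS_QUEUE_URL"]
    src = Path(os.environ["EFS_SOURCE_FOLDER"])
    dst = Path(os.environ["EFS_DESTINATION_FOLDER"])
    sqs = boto3.client("sqs", region_name=os.environ["AWS_REGION"])
    while True:  # the infinite queue monitoring loop
        if process_batch(sqs, queue_url, src, dst) == 0:
            time.sleep(1)  # brief pause when the queue is empty
```

The container's entrypoint would call `main()`. Because the SQS client is passed into `process_batch`, the batch logic can be exercised with a stub instead of a real queue. Messages that name missing files are simply dropped here; a production worker might route them to a dead-letter queue instead.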

Setting up this architecture on AWS requires doing the following:

- creating a dedicated VPC

- creating an EFS volume

- creating an ECS cluster

- creating an IAM policy and role to read SQS messages and put logs to CloudWatch

- creating an ECS task definition (with the EFS mount and the proper IAM role)

- creating an ECS service

- injecting a certain number of environment variables

If you were to code the above infrastructure details using CloudFormation, you’d easily need to write a few hundred lines of YAML.

If you were to use Docker Compose to define this application, it would be as simple as writing these 20 lines:

services:
  ecsworker-in-region:
    environment:
      - SQS_QUEUE_URL=https://sqs.us-east-1.amazonaws.com/123456789/main-queue
      - EFS_SOURCE_FOLDER=/data/sourcefolder/
      - EFS_DESTINATION_FOLDER=/data/destinationfolder/
      - AWS_REGION=us-east-1
    image: 123456789.dkr.ecr.us-east-1.amazonaws.com/ecsworker:amd64-slim
    volumes:
      - efs-share:/data
    x-aws-role:
      Version: '2012-10-17'
      Statement:
        - Effect: Allow
          Action: sqs:*
          Resource: arn:aws:sqs:us-east-1:123456789:main-queue
volumes:
  efs-share:

If you are curious about the nature of these x-aws Docker Compose extensions, please refer to the last section of this blog. In a nutshell, x-aws-role allows the developer to assign an in-line IAM policy that will bind to the application (as an ECS task role).
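The queue appears twice in this file: once as a URL in SQS_QUEUE_URL and once as an ARN in the x-aws-role policy. If the two drift apart, the task role no longer grants access to the queue the worker reads. As a sketch, the Python below derives the ARN from the URL and builds the same policy document that x-aws-role expresses in YAML. It assumes the `https://sqs.<region>.amazonaws.com/<account>/<queue>` URL form used in this example and does not handle legacy endpoint formats. The function names are hypothetical.

```python
import json
from urllib.parse import urlparse


def queue_arn_from_url(queue_url: str) -> str:
    """Convert https://sqs.<region>.amazonaws.com/<account>/<queue> into an SQS ARN."""
    parsed = urlparse(queue_url)
    region = parsed.hostname.split(".")[1]  # "sqs.us-east-1.amazonaws.com" -> "us-east-1"
    account, queue = parsed.path.strip("/").split("/")
    return f"arn:aws:sqs:{region}:{account}:{queue}"


def task_role_policy(queue_url: str) -> dict:
    """The policy document the x-aws-role block above expresses in YAML."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {"Effect": "Allow", "Action": "sqs:*", "Resource": queue_arn_from_url(queue_url)}
        ],
    }


if __name__ == "__main__":
    url = "https://sqs.us-east-1.amazonaws.com/123456789/main-queue"
    print(json.dumps(task_role_policy(url), indent=2))
```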

While our Docker context still points to our AWS endpoint (myecscontext), we will deploy this application on AWS:

➜ docker compose up

[+] Running 19/19

⠿ composelocal CreateComplete 193.5s

⠿ CloudMap CreateComplete 48.6s

⠿ EfsshareFilesystem CreateComplete 9.5s

⠿ LogGroup CreateComplete 3.7s

⠿ Cluster CreateComplete 9.5s

⠿ DefaultNetwork CreateComplete 8.0s

⠿ EcsworkerinregionTaskExecutionRole CreateComplete 14.5s

⠿ DefaultNetworkIngress CreateComplete 0.0s

⠿ EfsshareNFSMountTargetOnSubnetbd150891 CreateComplete 93.9s

⠿ EfsshareAccessPoint CreateComplete 18.2s

⠿ EfsshareNFSMountTargetOnSubnetc4f32f8f CreateComplete 93.9s

⠿ EfsshareNFSMountTargetOnSubnet7fe9c173 CreateComplete 93.9s

⠿ EfsshareNFSMountTargetOnSubnet6301dc5c CreateComplete 93.9s

⠿ EfsshareNFSMountTargetOnSubnetd56c7a8f CreateComplete 93.9s

⠿ EfsshareNFSMountTargetOnSubnetc82799ac CreateComplete 96.5s

⠿ EcsworkerinregionTaskRole CreateComplete 15.0s

⠿ EcsworkerinregionTaskDefinition CreateComplete 3.0s

⠿ EcsworkerinregionServiceDiscoveryEntry CreateComplete 2.3s

⠿ EcsworkerinregionService CreateComplete

The application is now running on AWS, and the only thing I had to do to deploy it was write 20 lines of YAML.

On the topic of making the developer experience better, as an engineer you may, however, also wonder how to iterate quickly on this application on your workstation without having to necessarily deploy to the cloud, while experimenting with quick code changes. The beauty of this integration is that it maps standard Docker constructs to AWS constructs. Let’s take the volume declaration, for example. When used against the local Docker endpoint (the default Docker context) a traditional local Docker volume is created. However, when used with the AWS context, the Docker volume object maps to an EFS object and hence an EFS volume is created.
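The mapping described in this section can be summarized as a small lookup table. The Python sketch below is illustrative only. The entries come from this post: volumes, logs, and credentials under each context TYPE shown by docker context ls. The `ContextType` enum and the `backing_for` helper are hypothetical names, not Docker code.

```python
from enum import Enum


class ContextType(Enum):
    DEFAULT = "moby"         # local Docker Engine
    ECS_LOCAL = "ecs-local"  # local engine plus the ECS local container endpoints
    ECS = "ecs"              # Amazon ECS on AWS


# What each Compose construct turns into, per context type.
BACKING = {
    "volume": {
        ContextType.DEFAULT: "local Docker volume",
        ContextType.ECS_LOCAL: "local Docker volume",
        ContextType.ECS: "Amazon EFS file system",
    },
    "logs": {
        ContextType.DEFAULT: "local stdout",
        ContextType.ECS_LOCAL: "local stdout",
        ContextType.ECS: "Amazon CloudWatch Logs",
    },
    "credentials": {
        ContextType.DEFAULT: "none injected",
        ContextType.ECS_LOCAL: "ECS local container endpoints (from ~/.aws/credentials)",
        ContextType.ECS: "IAM role attached to the ECS task",
    },
}


def backing_for(construct: str, context: ContextType) -> str:
    """Look up what a Compose construct maps to under a given context type."""
    return BACKING[construct][context]
```

For example, `backing_for("volume", ContextType("ecs"))` gives "Amazon EFS file system". The same Compose file is interpreted differently depending only on which context is active.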

In theory, we could just change the Docker context to default and point to the local Docker runtime. The Compose file would still be semantically correct, but the application would not have access to the AWS services it needs to interact with (namely SQS in the example above). For example, it would not be authorized to read from the SQS queue.

This is why we have introduced an additional Docker context that you can enable with the `--local-simulation` flag. You can create such a context like this:

➜ docker context create ecs --local-simulation ecsLocal

Successfully created ecs-local context "ecsLocal"

➜ docker context use ecsLocal

ecsLocal

➜ docker context ls

NAME TYPE DESCRIPTION DOCKER ENDPOINT KUBERNETES ENDPOINT ORCHESTRATOR

default moby Current DOCKER_HOST based configuration unix:///var/run/docker.sock swarm

ecsLocal * ecs-local ECS local endpoints

myecscontext ecs

The Docker ecsLocal context behaves similarly to the default local Docker context, but it automatically embeds the ECS local container endpoints. This is a container that simulates the ECS metadata and credentials endpoints, vending AWS IAM credentials that it sources from the local $HOME/.aws/credentials file. In the cloud, IAM credentials are vended by attaching an IAM role to the ECS task. Locally, this function is simulated by the ECS local container endpoints, which allows the application to work transparently.
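Here is how an SDK inside the container finds those credentials. When the environment variable AWS_CONTAINER_CREDENTIALS_RELATIVE_URI is set, the AWS SDKs (boto included) fetch temporary credentials from `http://169.254.170.2` plus that relative path. This is the same mechanism used on ECS. We assume the ecsLocal context injects that variable into the application container, which is how the ECS local container endpoints project describes its setup. Application code never needs to do this itself. The Python sketch below only makes the lookup visible, and its function names are hypothetical.

```python
import json
import os
import urllib.request
from typing import Mapping, Optional

# Link-local address on which ECS (and, locally, the ECS local container
# endpoints container) serves task credentials.
ECS_CREDENTIALS_HOST = "http://169.254.170.2"


def container_credentials_url(env: Mapping[str, str]) -> Optional[str]:
    """Build the credentials URL from AWS_CONTAINER_CREDENTIALS_RELATIVE_URI, if set."""
    relative = env.get("AWS_CONTAINER_CREDENTIALS_RELATIVE_URI")
    return ECS_CREDENTIALS_HOST + relative if relative else None


def fetch_credentials(url: str, timeout: float = 2.0) -> dict:
    """Return the JSON credential document (AccessKeyId, SecretAccessKey, Token, Expiration)."""
    with urllib.request.urlopen(url, timeout=timeout) as resp:
        return json.load(resp)


if __name__ == "__main__":
    url = container_credentials_url(os.environ)
    if url is None:
        print("AWS_CONTAINER_CREDENTIALS_RELATIVE_URI is not set")
    else:
        creds = fetch_credentials(url)
        print("received temporary credentials for", creds["AccessKeyId"])
```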

The next thing you’d need to do is to docker login to pull the image from ECR. You can do so using this command:

aws ecr get-login-password --region us-east-1 | docker login --username AWS --password-stdin 123456789.dkr.ecr.us-east-1.amazonaws.com

At this point, you can launch the application locally using docker compose up in the newly created ecsLocal Docker context:

➜ docker compose up

Starting composelocal_ecs-local-endpoints_1 ... done

Starting composelocal_ecsworker-in-region_1 ... done

Attaching to composelocal_ecs-local-endpoints_1, composelocal_ecsworker-in-region_1

ecs-local-endpoints_1 | time="2020-11-16T15:49:02Z" level=info msg="ecs-local-container-endpoints 1.3.0 (4fa3c29) ECS Agent 1.27.0 compatible"

ecs-local-endpoints_1 | time="2020-11-16T15:49:02Z" level=info msg=Running...

ecsworker-in-region_1 | Importing the env variables

ecsworker-in-region_1 | Env variables have been imported

ecsworker-in-region_1 | Initializing the boto client

ecsworker-in-region_1 | Boto client has been initialized

ecsworker-in-region_1 | Entering the infinite queue monitoring loop

Note that the application logs are available in the local stdout console instead of CloudWatch (which is where they go when you deploy on AWS). Also note how the boto client (AWS SDK for Python) has been initialized properly and connects to the SQS queue using the local credentials via the metadata container (the ECS local container endpoints). Note also that we found a small bug at the last minute that caused this example not to work properly with the ecsLocal context when using Docker Desktop 2.5.0.1. The issue is described here and the PR to solve it has already been merged. The next release of Docker Desktop will include this fix. To work around the bug, simply remove the entire `x-aws-role` section from the YAML above when running in the ecsLocal context.

This is a visual representation of what we have achieved:

Additional considerations and practical suggestions

Developers who have a good understanding of both Docker and AWS technologies will be able to navigate this integration easily. If you are coming from a Docker-only background or an AWS-only background, some aspects may require a bit of additional “context” (no pun intended). Below is a list of practical considerations you may find useful as you start playing with the integration.

Linux users without Docker Desktop support

The core of this integration is built around a new tool dubbed Compose CLI (not to be confused with the original docker-compose CLI). This new CLI surfaces to the user as new functionality in the docker command. In Docker Desktop, all this plumbing is completely hidden and available out of the box; on a Linux machine, you can set it up using either a script or a manual install. This new CLI is, essentially, a new version of the docker binary.

Pay attention to which CLI you are using

Please note that the workflows we have explored above (deploying locally vs. deploying on AWS) use the same Compose file as a target. One of the major differences, as it stands today, is that the local workflow uses the docker-compose binary, whereas the cloud workflow uses the new docker binary. Be mindful of that if you don’t want to see error messages along the lines of ERROR: The platform targeted with the current context is not supported. In the future, Docker plans to add docker compose support for the default Docker context (i.e., the local Docker Engine) as well.

Docker Compose extensions

For the most part, this integration will try to use sensible defaults and completely abstract the details of the AWS implementation. However, there are some situations where you either need or want to drive specific AWS resources, configurations or behaviors. This is achieved by using compose extensions that take the form of x-aws-* parameters in the Docker Compose file. As an example, this is just a subset of the list of the currently supported extensions:

- x-aws-pull_credentials: it can retrieve the ID/password from AWS Secrets Manager to log in to private registries

- x-aws-keys: it can retrieve generic keys from AWS Secrets Manager

- x-aws-policies: it can configure the ECS task with a specific existing IAM policy

- x-aws-role: it can configure the ECS task with a specific in-line IAM policy

- x-aws-cluster: it can force Compose to use an existing ECS cluster (default: create a new one)

- x-aws-vpc: it can force Compose to use an existing VPC (default: use the default VPC)

This list will continue to grow over time, so it’s a good idea to bookmark this page on the Docker documentation for an up-to-date list of the supported AWS extensions. We expect developers to use these extensions on an as-needed basis to tune their AWS deployments (for example, to reuse existing VPCs instead of creating a new one at every docker compose up, which is the default behavior).
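To see at a glance which of these knobs a given Compose file turns, you can walk the parsed file and collect every x-aws-* key at the top level and inside each service. The Python sketch below assumes the file has already been loaded into a dict, for example with PyYAML's `yaml.safe_load`. `find_aws_extensions` and the VPC ID in the sample are hypothetical.

```python
from typing import Any, Dict, List, Tuple


def find_aws_extensions(compose: Dict[str, Any]) -> List[Tuple[str, str]]:
    """Return (location, key) pairs for every x-aws-* key in a parsed Compose file."""
    found = [("top-level", key) for key in compose if key.startswith("x-aws-")]
    for name, service in (compose.get("services") or {}).items():
        found += [(f"services.{name}", key) for key in service if key.startswith("x-aws-")]
    return found


if __name__ == "__main__":
    # Shaped like the ecsworker example above, plus a hypothetical x-aws-vpc.
    sample = {
        "x-aws-vpc": "vpc-0123456789abcdef0",
        "services": {
            "ecsworker-in-region": {
                "image": "123456789.dkr.ecr.us-east-1.amazonaws.com/ecsworker:amd64-slim",
                "x-aws-role": {"Version": "2012-10-17", "Statement": []},
            }
        },
        "volumes": {"efs-share": None},
    }
    for location, key in find_aws_extensions(sample):
        print(f"{location}: {key}")
```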

Inspect the intermediate artifacts

A useful aspect of the new functionality in the Docker CLI is the docker compose convert command. Not only can docker compose up turn a Compose file into an AWS stack, but docker compose convert also lets you inspect the intermediate CloudFormation template it generates. This could be useful, for example, when the developer isn’t directly responsible for the deployment on AWS. In this case, they can take the CloudFormation file generated by the above command and hand it over to the team responsible for deploying on AWS.
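Before handing a converted template over, a quick inventory of what it will create can help the review. The Python sketch below counts resources by CloudFormation type. It assumes you have saved the template as JSON: CloudFormation accepts JSON or YAML, and this sketch does not assume which format docker compose convert emits by default. `summarize_template` is a hypothetical helper.

```python
import json
import sys
from collections import Counter
from typing import Any, Dict


def summarize_template(template: Dict[str, Any]) -> Counter:
    """Count resources by CloudFormation type, e.g. AWS::ECS::Service."""
    return Counter(r["Type"] for r in template.get("Resources", {}).values())


if __name__ == "__main__" and len(sys.argv) > 1:
    # Usage: python summarize.py template.json
    with open(sys.argv[1]) as fh:
        counts = summarize_template(json.load(fh))
    for rtype, n in sorted(counts.items()):
        print(f"{n:3d}  {rtype}")
```

A YAML template would need a YAML parser that understands CloudFormation's short-form tags (such as !Ref) before it can be passed to the same function.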

Tracking supported features

As I started working with this integration, one thing that wasn’t obvious to me was “which feature” was available in “which Docker Desktop channel.” The easiest way to track the new integration features being developed is to check the releases of the new Compose CLI. The Docker Desktop release notes state which Compose CLI release ships with each Docker Desktop release (in both the stable and the edge channels). Below are useful links that you should bookmark if you intend to use this integration:

- This is the documentation page. It has basic information about this integration, including how to get started

- This link on the GitHub project page shows the mapping between Docker Compose features and corresponding ECS features

- This link on the GitHub project page includes practical examples of this integration that you could reuse

Fargate first, EC2 when needed

As we covered already, the power of this integration lies in the built-in mappings between Docker objects and ECS objects, and in the fact that this mapping is transparent to the end user. In general, the compute mapping is such that all ECS tasks are backed, by default, by AWS Fargate. However, some scenarios are not yet supported by Fargate and require the Compose CLI mapping to fall back to EC2, for example when you request GPU support for a container.
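The Fargate-first rule can be sketched as a small decision function. In the Python sketch below, a GPU request is expressed with the Compose specification's `deploy.resources.reservations.devices` syntax (`capabilities: [gpu]`). This is an assumption: check the ECS integration documentation for the exact form it accepts. The function names are hypothetical, and "FARGATE" and "EC2" are the ECS launch type values.

```python
from typing import Any, Dict


def requests_gpu(service: Dict[str, Any]) -> bool:
    """True if the service reserves a device with the "gpu" capability."""
    devices = (
        service.get("deploy", {})
        .get("resources", {})
        .get("reservations", {})
        .get("devices", [])
    )
    return any("gpu" in device.get("capabilities", []) for device in devices)


def launch_type(service: Dict[str, Any]) -> str:
    """Fargate by default; fall back to EC2 when Fargate cannot satisfy the request."""
    return "EC2" if requests_gpu(service) else "FARGATE"
```

GPU is the only fallback trigger the post mentions, so it is the only one modeled here.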

Conclusions

In this blog, we have covered the basic features of the Docker Compose integration with Amazon ECS and AWS Fargate. We have also covered how this integration extends the investments many developers have made in Docker Compose artifacts over the years, and how it makes it easy to deploy containerized applications on Amazon ECS. This only lays the foundations of what’s possible. We are already thinking about how this integration could be used to migrate existing Docker Swarm clusters to Amazon ECS, as well as how CI/CD pipelines could iterate on new application versions using Docker Compose as the application deployment model.

Stay tuned for more to come and let us know how you intend to use this integration. In the meantime, download Docker Desktop and start deploying your Docker Compose file on ECS. Also, check out the Docker blog post that talks about this launch. Happy deployments!