Containers

Domain reduces scaling time for their mobile API services with Amazon ECS


This post was contributed by Peter Kiem, Senior DevOps Engineer, Cloud Platform Team, Domain Group; Mitch Beaumont, Principal Solutions Architect, Large Enterprise, Container SME; and Mai Nishitani, Solutions Architect, Large Enterprise.

Domain provides real estate information and services to Australians through mobile applications, the web, and newspapers. The company’s services include property marketing and search tools, customer relationship management technologies for real estate agents, and data and research services for property buyers and sellers, real estate agencies, government agencies, and financial markets. Domain Group is partly owned by Nine Entertainment Co and employs between 500 and 1,000 people.

The Cloud Platforms Team manages a variety of applications, including some hosted on a fleet of older-generation Amazon EC2 instances. One of these applications provides consumer-facing API services to Android and iOS applications.

We needed to address the following three challenges:

Upgrade to newer generation Amazon EC2 instances

We attempted to move from third-generation to fifth-generation Amazon EC2 instances, but the existing setup was not compatible with the newer instance types.

Remove dependencies from scaling

Each time the application scaled out, a set of processes ran to set up and configure the operating system and the application. If any of these processes had an issue, the application experienced runtime issues. One evening, two installation dependencies caused every scale-out action to fail, resulting in a significant service interruption for mobile users.

Improve scaling speed and reduce unused capacity and costs

Our standalone Amazon EC2 setup could not scale out fast enough to handle the application's peak-time traffic. As a result, we saw many errors caused by degraded performance and resource starvation. As a workaround, we configured a scheduled action to scale out just before peak traffic. This was not ideal, because it often left a lot of capacity unused.

Overview of solution

Goals

- Improve the efficiency and performance of the mobile workload by scaling as close to real time as possible using Amazon ECS Capacity Providers.

- Deploy changes to Amazon ECS via Infrastructure as Code with AWS CloudFormation.

- Improve networking performance by right-sizing the EC2 instances in the ECS clusters.

- Reduce costs by quickly scaling in ECS tasks that are no longer required after peak traffic.
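The first two goals pair capacity providers with infrastructure as code. The Python sketch below builds a minimal CloudFormation template for that pattern. It is an illustration, not Domain's actual template. It assumes an existing Auto Scaling group of ECS container instances, passed in as a parameter. All logical IDs (`MobileApiCluster`, `MobileApiCapacityProvider`, and so on) are hypothetical. The template also enables Container Insights on the cluster, which the team later used for resource tuning.

```python
import json


def build_capacity_provider_template(target_capacity: int = 100) -> dict:
    """Build a CloudFormation template (as a dict) that attaches an existing
    Auto Scaling group to a new ECS cluster through a capacity provider with
    managed scaling. All logical IDs are hypothetical placeholders."""
    if not 1 <= target_capacity <= 100:
        raise ValueError("TargetCapacity must be between 1 and 100")
    provider_ref = {"Ref": "MobileApiCapacityProvider"}
    return {
        "AWSTemplateFormatVersion": "2010-09-09",
        "Parameters": {
            # Name of an existing Auto Scaling group of ECS container instances (assumed).
            "AutoScalingGroupName": {"Type": "String"},
        },
        "Resources": {
            "MobileApiCluster": {
                "Type": "AWS::ECS::Cluster",
                "Properties": {
                    # Turn on CloudWatch Container Insights for the cluster.
                    "ClusterSettings": [{"Name": "containerInsights", "Value": "enabled"}],
                },
            },
            "MobileApiCapacityProvider": {
                "Type": "AWS::ECS::CapacityProvider",
                "Properties": {
                    "AutoScalingGroupProvider": {
                        "AutoScalingGroupArn": {"Ref": "AutoScalingGroupName"},
                        "ManagedScaling": {
                            "Status": "ENABLED",
                            # 100 means no spare instances; lower values keep headroom.
                            "TargetCapacity": target_capacity,
                        },
                        "ManagedTerminationProtection": "DISABLED",
                    },
                },
            },
            "MobileApiClusterCapacityProviders": {
                "Type": "AWS::ECS::ClusterCapacityProviderAssociations",
                "Properties": {
                    "Cluster": {"Ref": "MobileApiCluster"},
                    "CapacityProviders": [provider_ref],
                    "DefaultCapacityProviderStrategy": [
                        {"CapacityProvider": provider_ref, "Weight": 1},
                    ],
                },
            },
        },
    }


if __name__ == "__main__":
    # Emit JSON that can be deployed with CloudFormation.
    print(json.dumps(build_capacity_provider_template(target_capacity=100), indent=2))
```

Managed scaling resizes the Auto Scaling group so that the cluster's `CapacityProviderReservation` metric tracks `TargetCapacity`. Setting it below 100 trades some idle capacity for faster task placement during sudden spikes.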

Risks

With this work, we aimed to mitigate two risks:

- The underlying infrastructure not scaling to meet demand fast enough, which would give users a poor experience during peak times.

- Scaling dependencies failing and causing unplanned outages, which, given the high volume of consumer traffic, would cause reputational damage.

Steps involved:

- Convert the existing Infrastructure as Code setup into a container build process, so that the application could be built as a container image, stored in Amazon Elastic Container Registry (Amazon ECR), and deployed to Amazon ECS.

- The Cloud Platforms Team conducted load testing to ensure that the application could scale to handle production load.

- We shifted traffic gradually over two weeks in a canary-style deployment, increasing the percentage of traffic sent to Amazon ECS each day.

- We used the binpack task placement strategy, which is well suited to scaling in after peak traffic subsides. On scale-in, binpack stopped tasks on the hosts with the fewest running tasks. This emptied those hosts so that the ECS Capacity Provider could scale in the instances after high-traffic events.

- The application uses Amazon ElastiCache for Redis for caching. Upgrading to fifth-generation cache nodes improved cache performance, particularly under the higher connection counts that result from running a larger number of smaller containers.

- Amazon CloudWatch Container Insights helped us assign resources to individual tasks and continuously refine resource reservations.

- We used AWS Cost Explorer to confirm that right-sizing the ECS cluster instances and scaling according to load reduced costs by 25%.

- We used Amazon CloudWatch to monitor the scaling activity to confirm that scaling activity was aligned with actual load.
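The binpack scale-in behaviour described in the steps above can be illustrated with a small simulation. The Amazon ECS documentation describes binpack scale-in as stopping tasks on the container instance that would have the most resources left once the task is stopped. The sketch below applies that rule repeatedly. It is a simplified model, not the ECS scheduler: it assumes hosts with equal memory and tasks of equal size, and the host IDs and sizes are hypothetical.

```python
from typing import Dict, List


def binpack_scale_in(
    tasks_per_host: Dict[str, int],
    host_memory_mib: int,
    task_memory_mib: int,
    count: int,
) -> Dict[str, int]:
    """Stop `count` tasks, each time choosing the host that would have the most
    free memory after the stop (the binpack scale-in rule).

    Simplifications: all hosts have the same memory and all tasks reserve the
    same amount; ties go to the alphabetically first host.
    """
    remaining = dict(tasks_per_host)

    def free_after_stop(host: str) -> int:
        # Free memory on `host` once one of its tasks has stopped.
        return host_memory_mib - (remaining[host] - 1) * task_memory_mib

    for _ in range(count):
        running = sorted(h for h, n in remaining.items() if n > 0)
        if not running:
            raise ValueError("no running tasks left to stop")
        # max() keeps the first of equal candidates, so ties go to the first name.
        victim = max(running, key=free_after_stop)
        remaining[victim] -= 1
    return remaining


def empty_hosts(tasks_per_host: Dict[str, int]) -> List[str]:
    """Hosts with no tasks left; the capacity provider can terminate these."""
    return sorted(h for h, n in tasks_per_host.items() if n == 0)


if __name__ == "__main__":
    fleet = {"i-a": 6, "i-b": 6, "i-c": 2}  # hypothetical hosts and task counts
    after = binpack_scale_in(fleet, host_memory_mib=8192, task_memory_mib=1024, count=2)
    print(after, empty_hosts(after))
```

With equal-sized tasks, the rule reduces to stopping tasks on the host that runs the fewest tasks. Lightly loaded hosts therefore drain first and become candidates for termination. On a real service, the strategy is declared on the service itself. In CloudFormation, for example, `PlacementStrategies` on `AWS::ECS::Service` accepts an entry with `Type: binpack` and `Field: memory`. The post does not say which field Domain used.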

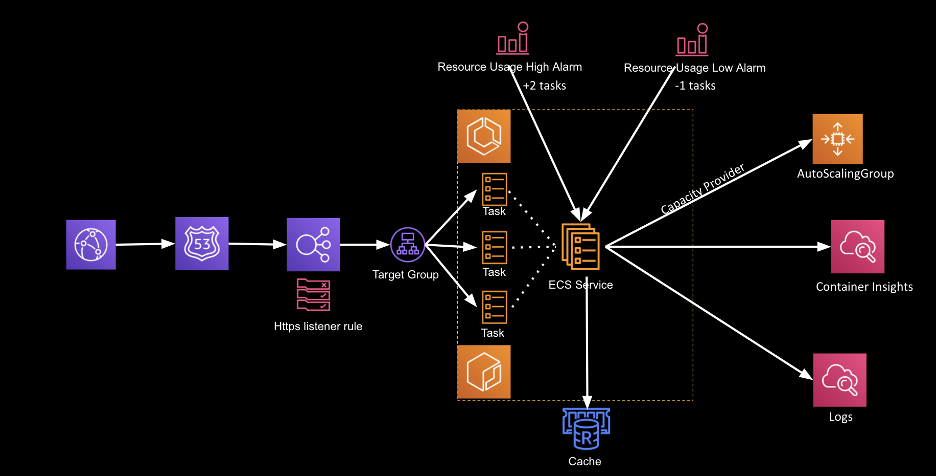

Architecture diagram

The architecture diagram shows the solution, which uses:

- Elastic Load Balancing to distribute traffic across the running containers (tasks).

- Amazon CloudWatch alarms to monitor resource utilization and add or remove containers to keep utilization at the desired level.

- ECS Capacity Providers and an Auto Scaling group to manage the container host capacity required for the desired number of containers.

- Amazon CloudWatch Logs and CloudWatch Container Insights to collect logs and metrics.
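The CloudWatch alarms in the diagram adjust the number of running tasks. One common way to get that behaviour is Application Auto Scaling with a target tracking policy, which creates and manages the CloudWatch alarms itself. The Python sketch below emits CloudFormation resources for such a policy. The post does not say which policy type or metric Domain used, and the cluster and service names, task limits, CPU target, and cooldowns are all hypothetical.

```python
import json


def build_service_scaling_template(
    cluster_name: str,
    service_name: str,
    min_tasks: int,
    max_tasks: int,
    target_cpu_percent: float = 60.0,
) -> dict:
    """CloudFormation resources for ECS Service Auto Scaling with a target tracking
    policy on average service CPU. Names and numbers are illustrative only."""
    if not 0 <= min_tasks <= max_tasks:
        raise ValueError("expected 0 <= min_tasks <= max_tasks")
    return {
        "AWSTemplateFormatVersion": "2010-09-09",
        "Resources": {
            "MobileApiScalableTarget": {
                "Type": "AWS::ApplicationAutoScaling::ScalableTarget",
                "Properties": {
                    "ServiceNamespace": "ecs",
                    "ScalableDimension": "ecs:service:DesiredCount",
                    # Format: service/<cluster name>/<service name>
                    "ResourceId": f"service/{cluster_name}/{service_name}",
                    "MinCapacity": min_tasks,
                    "MaxCapacity": max_tasks,
                },
            },
            "MobileApiCpuTargetTracking": {
                "Type": "AWS::ApplicationAutoScaling::ScalingPolicy",
                "Properties": {
                    "PolicyName": "mobile-api-cpu-target-tracking",
                    "PolicyType": "TargetTrackingScaling",
                    "ScalingTargetId": {"Ref": "MobileApiScalableTarget"},
                    "TargetTrackingScalingPolicyConfiguration": {
                        "PredefinedMetricSpecification": {
                            "PredefinedMetricType": "ECSServiceAverageCPUUtilization",
                        },
                        "TargetValue": target_cpu_percent,
                        # Seconds; scale out quickly, scale in more cautiously.
                        "ScaleOutCooldown": 60,
                        "ScaleInCooldown": 300,
                    },
                },
            },
        },
    }


if __name__ == "__main__":
    template = build_service_scaling_template("mobile-api", "mobile-api-svc", 4, 60)
    print(json.dumps(template, indent=2))
```

Task-level scaling like this only changes the desired task count. The capacity provider then adds or removes container instances to fit those tasks.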

Reliability

The key focus of this work was improving reliability, particularly during peak times. Effective scaling allowed us to absorb heavy increases in traffic. It also reduced the number of alerts triggered during peak periods, improving the user experience.

Performance

One of the main goals of this work was to ensure that our infrastructure scales with usage, so that we are not paying for unused capacity.

If we look at the number of requests:

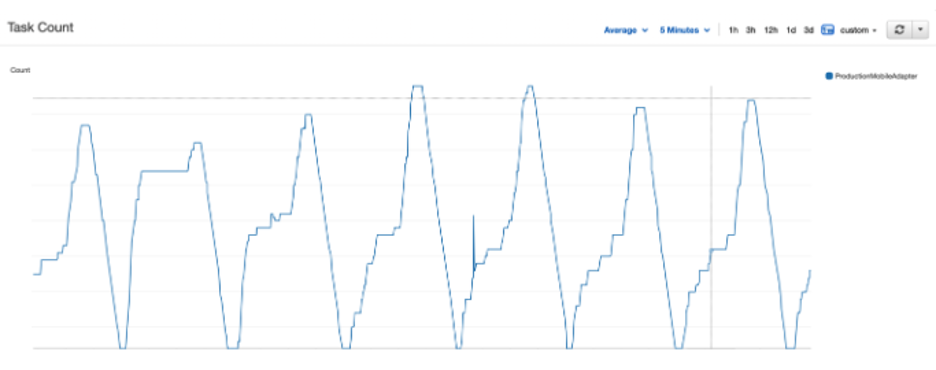

We can see a direct correlation with the number of tasks running to serve the higher request volume:

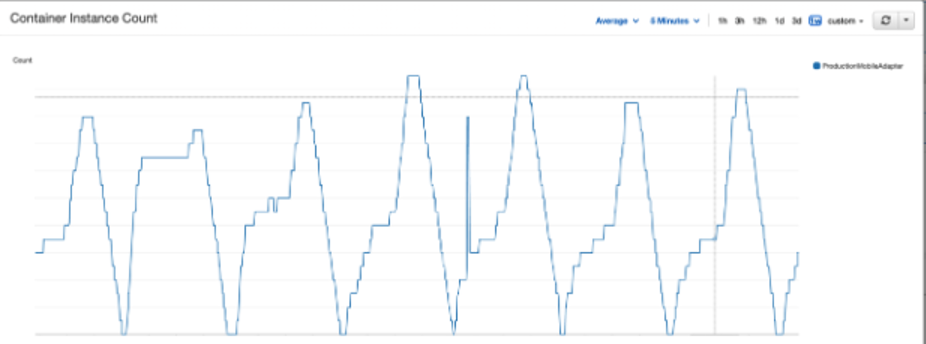

In addition to the task count metrics above, we can also see the number of container instances scaling out and in with the task count:

In this graph, you can also see that a deployment occurred during the fifth cycle. The cluster scaled out to support the deployment transition, and the capacity provider brought it back down afterwards.
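The container instance count follows the task count because of the metric that capacity provider managed scaling tracks: `CapacityProviderReservation = M / N × 100`. Here N is the number of instances currently in the Auto Scaling group, and M is the number of instances needed to run all tasks, including tasks waiting for capacity. The sketch below works through that arithmetic for a deployment like the one in the graph. It is an approximation that assumes equal-sized tasks. The task counts, the eight-tasks-per-instance figure, and a deployment `maximumPercent` of 200 are hypothetical.

```python
import math


def instances_needed(task_count: int, tasks_per_instance: int) -> int:
    """M: instances needed to place every task (assumes equal-sized tasks)."""
    return math.ceil(task_count / tasks_per_instance)


def capacity_provider_reservation(m: int, n: int) -> float:
    """CapacityProviderReservation = M / N * 100. Only modelled here for N > 0."""
    if n <= 0:
        raise ValueError("this sketch only handles groups with running instances")
    return m / n * 100


def steady_state_instances(m: int, target_capacity: int) -> int:
    """Instance count N at which M / N * 100 settles at or just below the target."""
    return math.ceil(m * 100 / target_capacity)


if __name__ == "__main__":
    per_instance = 8  # hypothetical: 8 tasks fit on one instance
    normal = instances_needed(40, per_instance)      # 5 instances
    deploying = instances_needed(80, per_instance)   # old + new tasks at maximumPercent=200
    print(capacity_provider_reservation(deploying, normal))  # 200.0: above target, scale out
    print(steady_state_instances(deploying, 100))            # 10
    print(capacity_provider_reservation(normal, deploying))  # 50.0: below target, scale in
    print(steady_state_instances(normal, 100))               # 5
```

During the deployment, the metric rises above the target and the group grows. Once the old tasks stop, the metric falls below the target and the capacity provider shrinks the group back, which matches the shape described for the fifth cycle.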

Costs

Although reliability was our first objective, we also reduced costs by 25% and achieved usage-optimized scaling. We also reduced our EC2 costs by working through our custom reports in AWS Cost Explorer.

Simplifying the deployment process and moving off the old architecture have enabled further cost-saving initiatives that we can explore in the future, such as:

- Making better use of client caching and Amazon ElastiCache

- Using Amazon EC2 Spot Instances to run a percentage of the workload

- Using EC2 instances powered by AWS Graviton2 processors, and the Bottlerocket AMI for Amazon ECS
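Running a percentage of the workload on Spot Instances would typically mean adding a second capacity provider, backed by a Spot Auto Scaling group, and splitting tasks with a capacity provider strategy. In the ECS API, each strategy item has a `capacityProvider`, an optional `base`, and a `weight`. The `base` sets a minimum number of tasks and is allowed on only one provider. The `weight` applies to the tasks beyond the base. The Python sketch below estimates the resulting split. The provider names are hypothetical, and the largest-remainder rounding is an assumption rather than ECS's exact placement logic.

```python
from typing import Dict, List


def split_tasks(strategy: List[dict], desired_count: int) -> Dict[str, int]:
    """Idealized split of tasks across a capacity provider strategy.

    Models the documented rules: the provider with a `base` gets that many
    tasks first, and the rest are shared in proportion to `weight`. Rounding
    uses largest remainders, which is an assumption, not ECS's exact logic.
    """
    counts = {item["capacityProvider"]: 0 for item in strategy}
    remaining = desired_count
    # Satisfy any base first.
    for item in strategy:
        take = min(item.get("base", 0), remaining)
        counts[item["capacityProvider"]] += take
        remaining -= take
    total_weight = sum(item.get("weight", 0) for item in strategy)
    if remaining and total_weight == 0:
        raise ValueError("at least one provider needs a non-zero weight")
    shares = []
    assigned = 0
    # Give each provider the whole-number part of its weighted share.
    for item in strategy:
        exact = remaining * item.get("weight", 0) / total_weight if total_weight else 0
        whole = int(exact)
        counts[item["capacityProvider"]] += whole
        assigned += whole
        shares.append((exact - whole, item["capacityProvider"]))
    # Hand out leftover tasks to the largest fractional remainders.
    for _, name in sorted(shares, reverse=True)[: remaining - assigned]:
        counts[name] += 1
    return counts


if __name__ == "__main__":
    strategy = [
        {"capacityProvider": "on-demand-cp", "base": 2, "weight": 1},  # hypothetical names
        {"capacityProvider": "spot-cp", "weight": 3},
    ]
    print(split_tasks(strategy, 10))  # {'on-demand-cp': 4, 'spot-cp': 6}
```

With these example values, the On-Demand provider keeps a floor of two tasks, and roughly three quarters of the remaining tasks land on Spot.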

Conclusion

Additional Future Opportunities

We are currently looking at using AWS CodePipeline and the AWS CDK to bring Amazon ECS into an automated deployment workflow. There are also opportunities to apply this architecture to other existing and future workloads within Domain.

Next Steps:

Learn more about Amazon ECS Capacity Providers.

Learn more about Amazon ECS Cluster Auto Scaling.

Read about the Amazon ECS task placement strategies and choose the one that suits your use case.

In addition, you can track your performance metrics with Amazon CloudWatch Container Insights.

Learn more about Bottlerocket for container AMIs.

Find further information about AWS Graviton2 processors for Amazon EC2.