AWS Database Blog

Augment DMS SC with Amazon Q Developer for code conversion and test case generation

You can use AWS Database Migration Service Schema Conversion (AWS DMS SC) with its generative AI feature to accelerate your database migration to AWS. This feature automatically converts many database objects during migration by using traditional rule-based techniques and deterministic AI techniques. For the database objects that AWS DMS SC can’t convert, Amazon Q Developer can generate PostgreSQL-compatible code alternatives, workarounds, and comprehensive test cases to help you complete your database modernization journey.

In this post, we demonstrate how Amazon Q Developer delivers generic solutions for complex AWS DMS SC issues, intelligently converts database stored procedure code from source to target database-compatible code, and automatically generates comprehensive test cases to validate your migrated database objects.

Solution overview

AWS DMS SC provides valuable conversion capabilities and detailed assessment reports that identify any schema elements that require manual attention. Amazon Q Developer enhances this workflow by generating solutions for complex SQL Server to Amazon Aurora PostgreSQL-Compatible Edition migration challenges that AWS DMS SC doesn’t handle. We explore three ways that Amazon Q Developer can accelerate your database modernization journey beyond AWS DMS SC capabilities:

- Providing generic solutions for complex AWS DMS SC issues, reducing manual workaround development time.

- Automating commercial to open source database code conversions, reducing manual development effort.

- Generating comprehensive test cases that cover most use cases, which shortens testing cycles while helping maintain code quality and consistency across the migration.

Prerequisites

Complete the following prerequisites before getting started.

- Install the Amazon Q Developer extension for your preferred IDE. In this post, we use Visual Studio Code (VS Code).

- Sign in with either a Builder ID or IAM Identity Center.

- Complete database assessment for SQL Server to Amazon Aurora PostgreSQL-Compatible Edition using AWS DMS SC with generative AI enabled.

- Security in the cloud is the top priority of AWS. Review the Amazon Q Developer security documentation, which explains how to apply the shared responsibility model in the context of Amazon Q Developer, before proceeding with the following solution.

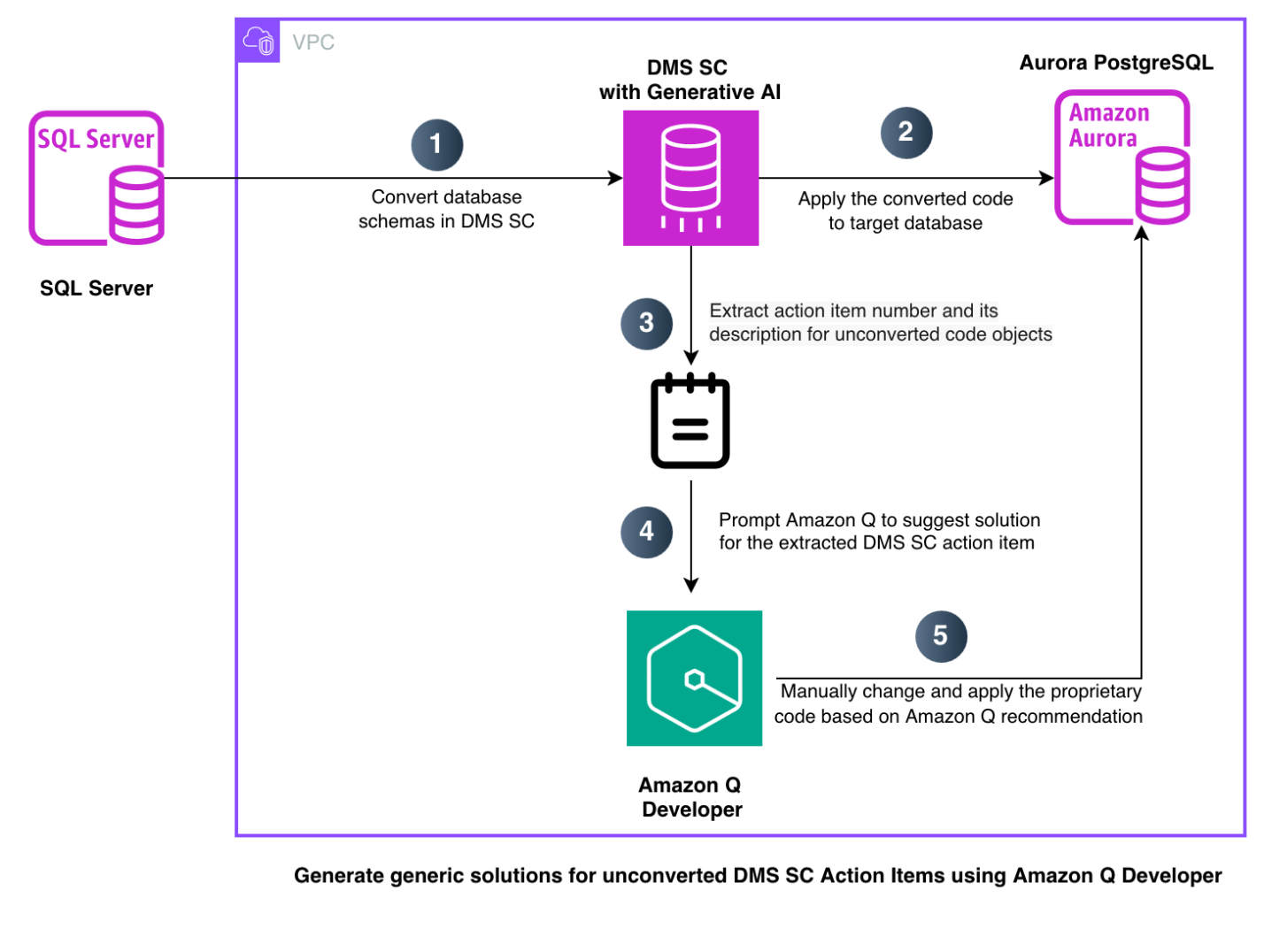

I. Amazon Q Developer Generic Solution for AWS DMS SC issues

This solution helps resolve migration issues without exposing your data model or proprietary code, helping you maintain data privacy. This is recommended for organizations with strict security requirements.

Amazon Q Developer generates generic, reusable code samples for AWS DMS SC issues without accessing your sensitive database schemas or stored procedures. You provide only the AWS DMS SC action item numbers in your prompts to discover workarounds for complex migration scenarios while keeping your intellectual property secure.

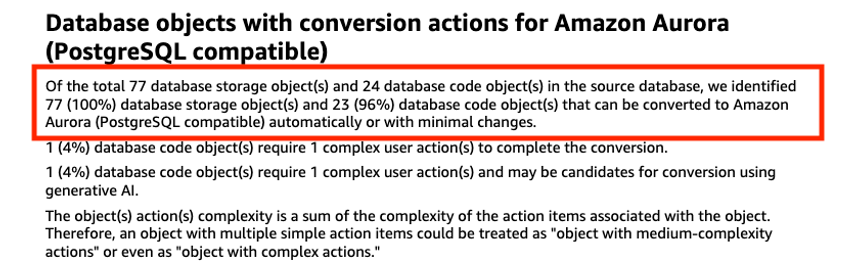

The following screenshot shows the results of the sample assessment. The assessment report provides a high-level summary of how much of the schema can be converted automatically from SQL Server to PostgreSQL, and the work that will be left after conversion.

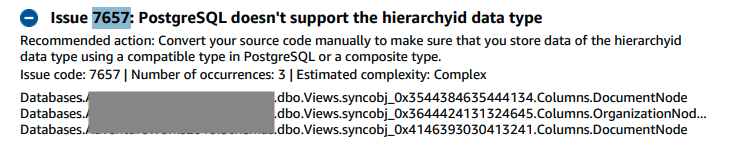

Let’s consider an example where you use Amazon Q Developer to provide a solution for an AWS DMS SC action item.

We used the following prompt to ask Amazon Q Developer for a workaround:

“Provide workaround for AWS DMS SC issue 7657: PostgreSQL doesn’t support the hierarchyid data type – SQL server to PostgreSQL migration”

Several workarounds were provided by Amazon Q Developer for this action item. The following are two examples:

1. Use PostgreSQL’s ltree Extension (Amazon Q Recommended)

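The ltree extension provides a data type for hierarchical, tree-like label paths, similar to hierarchyid. A path such as 1.3.2 identifies a node by the labels of its ancestors, and operators such as @> and <@ test ancestor and descendant relationships.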

2. Use Materialized path pattern
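To make the materialized path pattern more concrete, the following is a minimal sketch rather than the code that Amazon Q Developer generated. It assumes a hypothetical org_unit table whose path column stores the slash-delimited form that hierarchyid.ToString() returns in SQL Server, and it uses Python's built-in SQLite module only as a stand-in so that the example runs without a database server. The LIKE-based queries should carry over to PostgreSQL with minor changes, but verify them against your own schema.

```python
import sqlite3

# SQLite is only a stand-in so the sketch runs anywhere. Table and column
# names are hypothetical and do not come from the original post.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE org_unit ("
    " id INTEGER PRIMARY KEY,"
    " name TEXT NOT NULL,"
    " path TEXT NOT NULL UNIQUE)"  # for example '/1/2/4/'
)
conn.executemany(
    "INSERT INTO org_unit (id, name, path) VALUES (?, ?, ?)",
    [
        (1, "Company", "/1/"),
        (2, "Engineering", "/1/2/"),
        (3, "Sales", "/1/3/"),
        (4, "Platform", "/1/2/4/"),
        (5, "Data", "/1/2/5/"),
    ],
)


def descendants(conn, path):
    """Return the names of all nodes strictly below `path` (prefix match)."""
    rows = conn.execute(
        "SELECT name FROM org_unit"
        " WHERE path LIKE ? AND path <> ? ORDER BY path",
        (path + "%", path),
    )
    return [r[0] for r in rows]


def ancestors(conn, path):
    """Return the names of all nodes strictly above `path`, root first."""
    rows = conn.execute(
        "SELECT name FROM org_unit"
        " WHERE ? LIKE path || '%' AND path <> ? ORDER BY length(path)",
        (path, path),
    )
    return [r[0] for r in rows]


def level(path):
    """Depth of a node, counting the root '/1/' as level 1."""
    return path.strip("/").count("/") + 1
```

The trailing slash on every path matters in this sketch: without it, a prefix match on '/1/2' would also match an unrelated node such as '/1/20/'.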

In the next example, we ask Amazon Q Developer to provide a solution for AWS DMS SC issue 7658: PostgreSQL doesn’t support the sql_variant data type. We used the following prompt to ask Amazon Q Developer for a workaround:

“Provide workaround for AWS DMS SC issue 7658: PostgreSQL doesn’t support the sql_variant data type”

Several workarounds were provided by Amazon Q Developer for this action item. The following are two examples:

1. Use JSONB

2. Use TEXT with type prefix
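Both workarounds rest on the same idea: store the value together with a tag that records its original base type, so that the application can restore the type when reading. The following sketch illustrates that idea in Python and is not the code that Amazon Q Developer generated. The tag names, the prefix:value layout, and the {"type", "value"} document shape are assumptions chosen for illustration, and NULL values are assumed to be stored as SQL NULL rather than encoded.

```python
import json
from datetime import date, datetime
from decimal import Decimal

# Hypothetical tag names; adapt them to the base types that your
# sql_variant columns actually hold.
_ENCODERS = {
    bool: ("bool", lambda v: "1" if v else "0"),
    int: ("int", str),
    Decimal: ("numeric", str),
    float: ("float", repr),
    datetime: ("timestamp", lambda v: v.isoformat()),
    date: ("date", lambda v: v.isoformat()),
    str: ("text", lambda v: v),
}
_DECODERS = {
    "bool": lambda s: s == "1",
    "int": int,
    "numeric": Decimal,
    "float": float,
    "timestamp": datetime.fromisoformat,
    "date": date.fromisoformat,
    "text": lambda s: s,
}


def to_prefixed_text(value):
    """Encode a value for a TEXT column as '<tag>:<value>'."""
    tag, encode = _ENCODERS[type(value)]
    return f"{tag}:{encode(value)}"


def from_prefixed_text(stored):
    """Decode '<tag>:<value>'; only the first colon separates the tag."""
    tag, _, raw = stored.partition(":")
    return _DECODERS[tag](raw)


def to_jsonb_document(value):
    """Encode a value as a JSON document suitable for a JSONB column."""
    tag, encode = _ENCODERS[type(value)]
    return json.dumps({"type": tag, "value": encode(value)})


def from_jsonb_document(document):
    """Decode a document produced by to_jsonb_document."""
    obj = json.loads(document)
    return _DECODERS[obj["type"]](obj["value"])
```

Looking up the exact type with type(value) keeps bool from being treated as int and datetime from being treated as date. A practical difference between the two options is that a JSONB column can be queried by its type field inside the database, whereas a prefixed TEXT column typically needs string functions to do the same.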

Generative AI inference is non-deterministic. Code conversion results can vary between executions and might not be identical to the previous examples shown. The workarounds provided are based on observed patterns but might require adjustment for your specific use case. Always review and test generated code thoroughly in your development environment before production use.

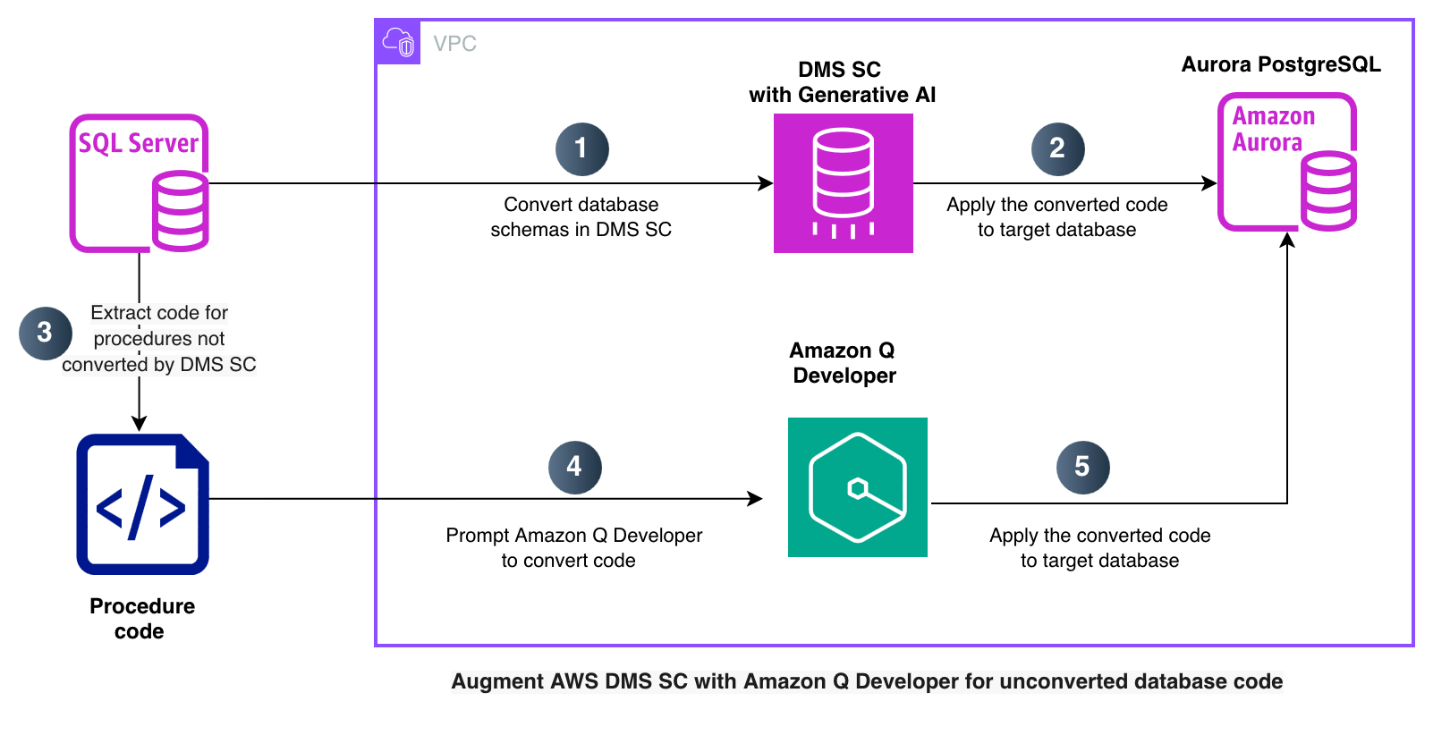

II. Augment AWS DMS SC with Amazon Q Developer for Unconverted database code

Amazon Q Developer can help you convert the remaining SQL Server stored procedures to Aurora PostgreSQL-Compatible Edition by translating T-SQL syntax, control flow structures, and proprietary functions into PostgreSQL-compatible PL/pgSQL code.

Let’s review an example where a SQL Server stored procedure runs a MERGE statement based on business criteria. This stored procedure synchronizes the Products table with incoming data by updating existing products, inserting new ones, and deleting products that no longer exist in the source. It returns a log of all changes made (inserts, updates, and deletes) for tracking purposes.
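Before looking at the code, it can help to pin down the behavior that any conversion has to preserve. The following is a minimal Python model of the synchronization described above, not the stored procedure itself. It assumes that rows are keyed by a product identifier, that every matched row is updated (as in a plain WHEN MATCHED THEN UPDATE clause), and that the change log lists source-driven changes before deletions; the column names in the usage example are hypothetical.

```python
def sync_products(target, source):
    """Apply MERGE-like semantics to `target` in place and return a change log.

    Both arguments map a product id to a dict of column values.
    """
    log = []
    for product_id, row in source.items():
        if product_id in target:
            # Matched: overwrite with the incoming values. A real procedure
            # might only update rows whose values actually changed.
            target[product_id] = dict(row)
            log.append(("UPDATE", product_id))
        else:
            # Not matched by target: new product.
            target[product_id] = dict(row)
            log.append(("INSERT", product_id))
    for product_id in list(target):
        if product_id not in source:
            # Not matched by source: product no longer exists upstream.
            del target[product_id]
            log.append(("DELETE", product_id))
    return log


products = {
    1: {"name": "Widget", "price": 10},
    2: {"name": "Gadget", "price": 20},
}
incoming = {
    1: {"name": "Widget", "price": 12},
    3: {"name": "Gizmo", "price": 30},
}
changes = sync_products(products, incoming)
```

After the call, products holds the same rows as incoming, and changes records one update, one insert, and one delete. A model like this is also a convenient reference when you later compare the behavior of the source and converted procedures.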

SQL Server sample code

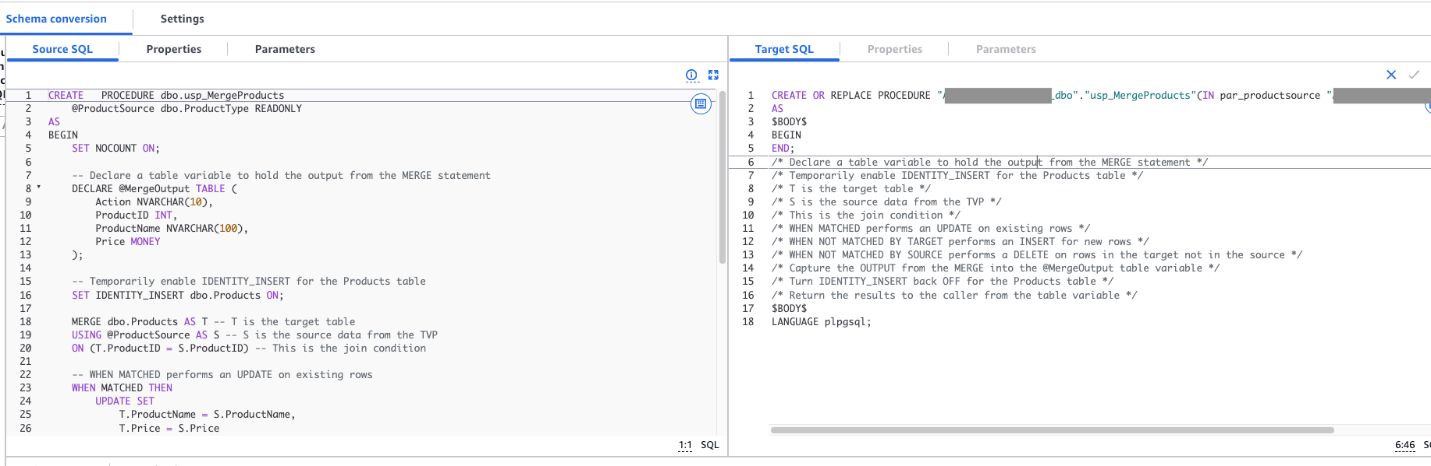

AWS DMS SC can’t convert this procedure and comments out all of the code, as shown in the following image:

Because AWS DMS SC can’t convert the code, complete the following steps to provide the source code and target folder details to Amazon Q Developer in VS Code.

- Extract code for this specific procedure from either the AWS DMS SC console shown in the previous image or directly from the SQL Server database.

- Save the code in your preferred location. In this post, we stored the code on a local drive.

- Provide the following prompt to Amazon Q Developer:

“Convert SQL Server procedure code into Aurora PostgreSQL-Compatible code.

Source code is present in a designated folder <Path>

Convert it to a procedure and keep lower case for object names, keep the names of objects same as the source code. Qualify table names with the schema dbo and print each record using RAISE NOTICE

Save the converted code output in folder <>”

Generative AI inference is non-deterministic, so the output from Amazon Q Developer can vary between runs, and you might see different results from what’s shown here. AI models are also evolving rapidly, with frequent improvements and updates. The examples and outputs presented in this post reflect the model’s performance at the time of writing and testing, and you can expect more refined capabilities in future iterations. The following is the Amazon Q Developer generated code for this specific procedure.

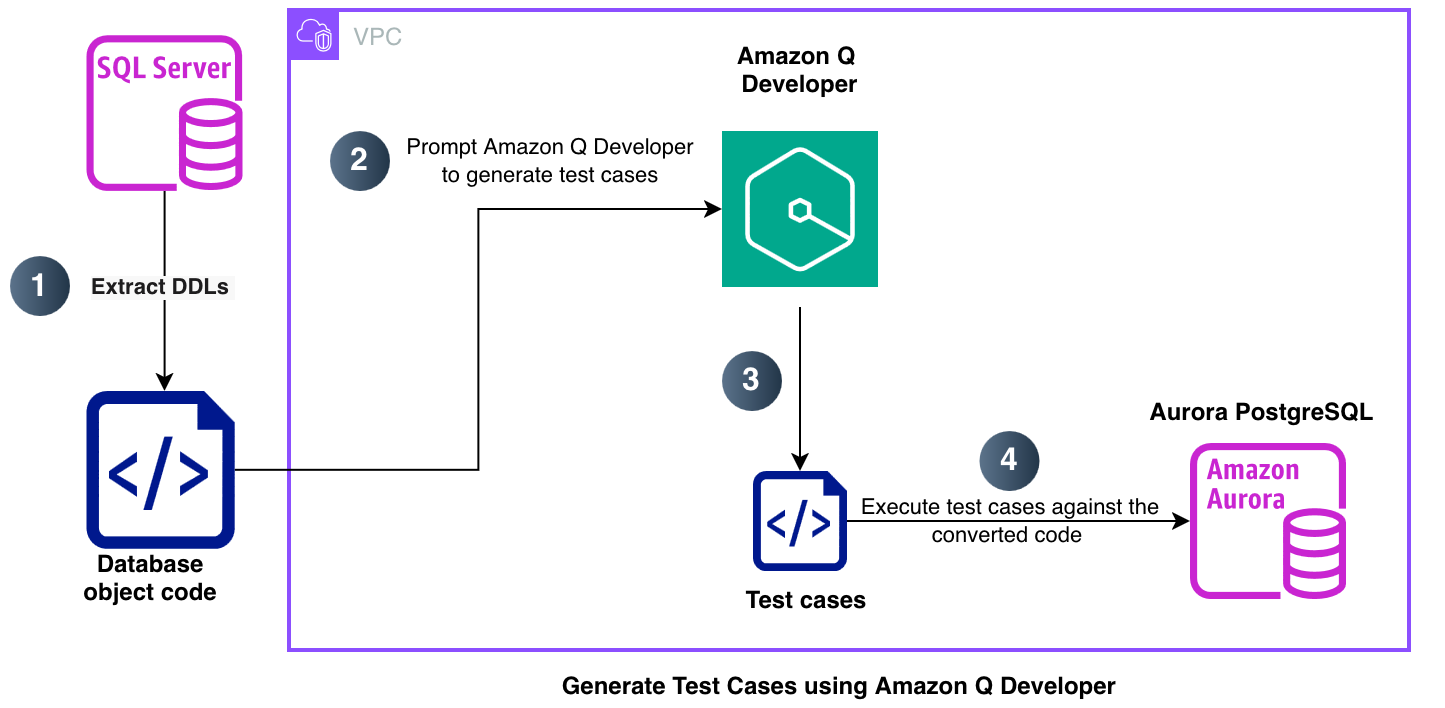

III. Generate test cases using Amazon Q Developer

Amazon Q Developer can help to automatically generate test cases, thereby reducing testing cycles while maintaining code quality and consistency throughout the migration.

Next, we use Amazon Q Developer to create comparable test cases for both the SQL Server and PostgreSQL procedures with identical scenarios: INSERT new records, UPDATE existing records, DELETE through partial source, mixed operations, and empty source deletion. The tests use the same input data and verify that both platforms produce identical final table states, validating that the migration preserves the original MERGE logic despite implementation differences (TVP vs temp table, OUTPUT vs RAISE NOTICE).
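The check that both platforms end in the same final table state can be sketched as a small comparison helper. This is an illustration under stated assumptions, not part of the test cases that Amazon Q Developer generated: it assumes that you have already fetched the final rows from each database as dictionaries, that column names differ only by case (the conversion prompt asked for lowercase object names), and that the key column is named productid, which is hypothetical.

```python
from decimal import Decimal


def normalize_row(row):
    """Make rows fetched through different drivers comparable."""
    normalized = {}
    for column, value in row.items():
        if isinstance(value, (float, Decimal)):
            # Compare numerics by value, regardless of driver type or scale.
            value = Decimal(str(value))
        normalized[column.lower()] = value
    return normalized


def diff_table_states(sqlserver_rows, postgres_rows, key="productid"):
    """Report keys that are missing on one side or whose rows differ."""
    left = {r[key]: r for r in map(normalize_row, sqlserver_rows)}
    right = {r[key]: r for r in map(normalize_row, postgres_rows)}
    return {
        "only_in_sqlserver": sorted(left.keys() - right.keys()),
        "only_in_postgres": sorted(right.keys() - left.keys()),
        "different": sorted(
            k for k in left.keys() & right.keys() if left[k] != right[k]
        ),
    }


sqlserver_state = [
    {"ProductID": 1, "Name": "Widget", "Price": Decimal("10.50")},
    {"ProductID": 2, "Name": "Gadget", "Price": Decimal("20.00")},
]
postgres_state = [
    {"productid": 1, "name": "Widget", "price": 10.5},
    {"productid": 2, "name": "Gadget", "price": 21.0},
    {"productid": 3, "name": "Gizmo", "price": 1.0},
]
report = diff_table_states(sqlserver_state, postgres_state)
```

A scenario passes when all three lists in the report are empty. In this sample data, the report flags product 2 as different and product 3 as present only in PostgreSQL, while product 1 matches even though the two sides use different numeric types.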

The following prompt generates test cases for both the SQL Server and PostgreSQL procedures:

“Look at the source SQL Server code at <Path> and Amazon Q developer converted file at <Path>. Create comparable test cases for each one of them to validate the migration from SQL Server to PostgreSQL. There is already data in both source and target, do not change or delete existing data”

The number and scope of test cases generated can vary between different runs due to AI model variations and context interpretation differences. Always review and validate all generated test cases before execution in any environment (development, staging, or production).

For this run, Amazon Q Developer generated five test cases for both SQL Server and PostgreSQL:

SQL SERVER TEST CASES (Preserving Existing Data)

POSTGRESQL TEST CASES (Preserving Existing Data)

Note that sample code, software libraries, command line tools, proofs of concept, templates, or other related technology are provided as AWS Content or Third-Party Content under the AWS Customer Agreement, or the relevant written agreement between you and AWS (whichever applies). You should not use this AWS Content or Third-Party Content in your production accounts, or on production or other critical data. You are responsible for testing, securing, and optimizing the AWS Content or Third-Party Content, such as sample code, as appropriate for production grade use based on your specific quality control practices and standards. Deploying AWS Content or Third-Party Content might incur AWS charges for creating or using AWS chargeable resources, such as running Amazon EC2 instances or using Amazon S3 storage.

Summary

In this post, we showed how you can use Amazon Q Developer with AWS DMS SC to accelerate database migrations from SQL Server to Amazon Aurora PostgreSQL-Compatible Edition, increasing efficiency and reducing time to completion.

Take the first step in your database modernization journey by exploring Amazon Q’s capabilities at the Amazon Developer Portal. You can also use AWS Transform for SQL Server Modernization, an AI-powered service that automates the full-stack modernization of Microsoft SQL Server databases and their associated .NET applications to Amazon Aurora PostgreSQL.