AWS for Industries

Accelerating Android Builds on AWS: From 3 Hours to Under 5 Minutes with SourceFS

Building the Android Open Source Project (AOSP) at scale is one of the most resource-intensive compute tasks in software engineering. With the release of AOSP 16, the codebase grew to over 140 million lines of code (LOC), and real-world device codebases are even larger. Automotive Original Equipment Manufacturers (OEMs), smartphone manufacturers, and IoT companies that depend on AOSP face a compounding problem: as codebases grow, build times lengthen, compute costs escalate, and developer productivity erodes.

In this post, we explore how SourceFS from Source.dev, running on AWS, transforms the AOSP build experience – reducing end-to-end checkout-and-build time from 3 hours to under 5 minutes. We highlight how leading automotive OEMs are achieving material gains in build velocity, cost efficiency and developer productivity by using SourceFS.

The challenge of building AOSP at scale

AOSP 16 is massive. A standard checkout downloads over 200 GB of source code and toolchains and a full build requires an additional 100 GB of disk space, at least 64 GB of RAM, and as many CPU cores as you can provision.

In this section, we walk through a traditional AOSP 16 checkout-and-build to illustrate the scale required. To make it reproducible, we provide step-by-step instructions. We recommend using Ubuntu 24.04 LTS running Linux kernel 6.9 or newer. Connect to your Amazon EC2 instance via AWS Systems Manager Session Manager or SSH.

Script used to run the benchmarks below.

First, let’s look at the AOSP 16 checkout-and-build time on typical developer machines. Because these machines are provisioned for extended periods, cost efficiency matters more than peak performance, so teams often favor lower-cost instances with 8 vCPUs over higher-core-count machines.

| Amazon EC2 instance type | Total time incl. setup (hh:mm:ss) | Checkout time (hh:mm:ss) | Build time (hh:mm:ss) | Total cost (USD) |
| --- | --- | --- | --- | --- |
| r8i.2xlarge (Intel) (8 vCPU; 64 GB memory) | 05:30:48 | 00:40:44 | 04:49:04 | $3.98 |
| r8a.2xlarge (AMD) (8 vCPU; 64 GB memory) | 03:37:04 | 00:41:26 | 02:54:34 | $2.98 |

AOSP 16 checkout-and-build time on standard developer-sized EC2 instances, using 400 GB gp3 EBS storage.

Next, let’s look at machines typically used for CI. These are higher-core instances, often 32 vCPUs, provisioned on demand. They are expected to process jobs quickly enough to avoid CI build queue bottlenecks and to enable frequent integration throughout the day, all while balancing cost and performance.

In practice, this goal is rarely met, and CI is often a bottleneck. Builds are slow, queue depths grow, and capacity becomes constrained. As a result, teams resort to batching multiple PRs into fewer builds. While this keeps pipelines moving, it reduces integration frequency, increases risk, and makes issues harder to isolate.

Intel

| Amazon EC2 instance type | Total time incl. setup (hh:mm:ss) | Checkout time (hh:mm:ss) | Build time (hh:mm:ss) | Total cost (USD) |
| --- | --- | --- | --- | --- |
| m5.8xlarge (32 vCPU; 128 GB memory) | 03:05:19 | 00:40:34 | 02:23:36 | $5.84 |
| m5d.8xlarge (32 vCPU; 128 GB memory) | 03:03:43 | 00:38:40 | 02:23:52 | $6.66 |
| m6i.8xlarge (32 vCPU; 128 GB memory) | 02:30:49 | 00:39:00 | 01:50:46 | $4.76 |
| m6id.8xlarge (32 vCPU; 128 GB memory) | 02:28:31 | 00:38:00 | 01:49:22 | $5.66 |
| m7i.8xlarge (32 vCPU; 128 GB memory) | 02:16:44 | 00:38:22 | 01:37:15 | $4.52 |
| m8i.8xlarge (32 vCPU; 128 GB memory) | 01:50:24 | 00:27:40 | 01:21:34 | $3.83 |
| m8id.8xlarge (32 vCPU; 128 GB memory) | 01:30:44 | 00:13:18 | 01:17:18 | $3.80 |

AMD

| Amazon EC2 instance type | Total time incl. setup (hh:mm:ss) | Checkout time (hh:mm:ss) | Build time (hh:mm:ss) | Total cost (USD) |
| --- | --- | --- | --- | --- |
| m5a.8xlarge (32 vCPU; 128 GB memory) | 04:18:33 | 00:40:29 | 03:37:32 | $7.40 |
| m5ad.8xlarge (32 vCPU; 128 GB memory) | 03:58:26 | 00:22:43 | 03:34:54 | $7.95 |
| m6a.8xlarge (32 vCPU; 128 GB memory) | 02:44:02 | 00:39:14 | 02:03:35 | $4.67 |
| m7a.8xlarge (32 vCPU; 128 GB memory) | 01:52:02 | 00:38:45 | 01:12:05 | $4.25 |
| m8a.8xlarge (32 vCPU; 128 GB memory) | 01:22:02 | 00:27:28 | 00:53:28 | $2.34 |

AOSP 16 checkout-and-build time on standard CI-sized EC2 instances, using local NVMe or 400 GB gp3 EBS storage.

Organizations can choose to prioritize development velocity over cost and use the highest-core machines available. However, even on a high-performance 192-vCPU instance like i7i.48xlarge, an AOSP 16 checkout-and-build still takes just under 40 minutes and costs almost $14 per run – making it unsustainable at scale, even for well-resourced organizations.

Intel

| Amazon EC2 instance type | Total time incl. setup (hh:mm:ss) | Checkout time (hh:mm:ss) | Build time (hh:mm:ss) | Total cost (USD) |
| --- | --- | --- | --- | --- |
| m8id.16xlarge (64 vCPU; 256 GB memory) | 01:04:07 | 00:14:41 | 00:48:14 | $5.37 |
| m8id.32xlarge (128 vCPU; 512 GB memory) | 00:39:32 | 00:12:52 | 00:26:32 | $6.62 |
| i7i.48xlarge (192 vCPU; 1536 GB memory) | 00:38:02 | 00:12:00 | 00:25:53 | $13.70 |

AMD

| Amazon EC2 instance type | Total time incl. setup (hh:mm:ss) | Checkout time (hh:mm:ss) | Build time (hh:mm:ss) | Total cost (USD) |
| --- | --- | --- | --- | --- |
| m8a.16xlarge (64 vCPU; 256 GB memory) | 01:06:01 | 00:29:18 | 00:35:32 | $5.19 |
| m8a.24xlarge (96 vCPU; 384 GB memory) | 00:57:54 | 00:25:28 | 00:32:13 | $6.80 |
| m8a.48xlarge (192 vCPU; 768 GB memory) | 00:55:12 | 00:19:44 | 00:35:03 | $12.93 |

AOSP 16 checkout-and-build time on very large EC2 instances, using local NVMe or 400 GB gp3 EBS storage.

Despite ongoing hardware improvements, the traditional checkout-and-build approach still takes anywhere from about 40 minutes to more than 5 hours in the benchmarks above, and it forces a tradeoff between cost and performance. This tradeoff becomes more painful with each new release, and it is even more challenging for real-world device codebases, which are much larger. A smartphone codebase based on AOSP 16 often exceeds 200 million lines of code, while an automotive codebase, where AOSP powers infotainment, digital cockpit, and connected vehicle systems, typically exceeds 300 million.

For an individual developer, waiting three hours for a build is painful. For an enterprise running thousands of builds per day, it becomes a crisis.

The cost of long feedback cycles

The impact of slow builds extends far beyond compute bills. Consider the scale at which large organizations operate:

- Build volume: Enterprise AOSP teams routinely run over 1,000 builds per day, and even this is often a constrained subset, with many instrumented or sanitized builds skipped due to cost and time overhead.

- Developer productivity: Every build cycle is a context switch. Developers lose flow state, pick up other tasks, and lose time re-orienting when build results finally arrive. Across thousands of engineers, this adds up to thousands of lost developer-hours daily.

- Multiplicative complexity: Multiple device targets × multiple branches × CI frequency = a combinatorial explosion of required builds.

- Storage costs: At over 300 GB per workspace, with multiple workspaces per developer and hundreds of CI agents, storage costs compound rapidly.
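To make the multiplication concrete, here is a minimal sketch of the arithmetic behind the list above. Only the 300 GB workspace size comes from the text; the target, branch, frequency, developer, and CI agent counts are hypothetical placeholders chosen for illustration, not figures from any real organization.

```python
from dataclasses import dataclass

WORKSPACE_GB = 300  # from the text: over 300 GB per workspace


@dataclass(frozen=True)
class BuildMatrix:
    device_targets: int             # hypothetical
    branches: int                   # hypothetical
    builds_per_branch_per_day: int  # hypothetical CI frequency

    def builds_per_day(self) -> int:
        # Every target is built on every branch at the given frequency.
        return self.device_targets * self.branches * self.builds_per_branch_per_day


def workspace_storage_tb(developers: int, workspaces_per_developer: int, ci_agents: int) -> float:
    # One full workspace per developer workspace and per CI agent.
    workspaces = developers * workspaces_per_developer + ci_agents
    return workspaces * WORKSPACE_GB / 1000


matrix = BuildMatrix(device_targets=10, branches=5, builds_per_branch_per_day=20)
print(matrix.builds_per_day())            # 1000
print(workspace_storage_tb(500, 2, 200))  # 360.0
```

Even with these modest placeholder values, the build count reaches four digits per day and workspace storage reaches hundreds of terabytes.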

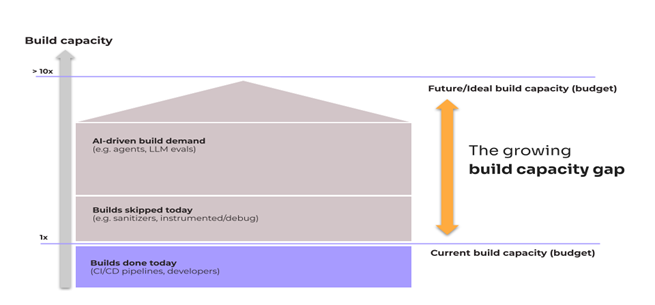

As AI coding compresses code creation, CI/CD pipelines become the critical bottleneck. A 3-hour pipeline serving hundreds or thousands of developers and agents isn’t a bottleneck, it’s a wall, limiting integration throughput. Therefore, optimizing pipeline speed from hours to minutes isn’t a nice-to-have – it is the prerequisite for AI-accelerated software development at scale. Organizations that fail to optimize their CI/CD pipeline velocity risk negating the productivity gains of AI-assisted SDLC.

Figure 1 – The growing gap between build demand and the build capacity (budget)

The path forward is clear: faster and more cost-efficient builds unlock meaningful productivity gains.

Why traditional approaches fall short

Teams have tried many strategies to tame AOSP build times. Each has its own limitations:

Bazel/Buck2 migration: Migrating a massive AOSP codebase to a build system like Bazel or Buck2 is not a viable option. Even well-resourced teams have abandoned these efforts midway. AOSP abandoned its Bazel migration in 2023.

Compiler wrappers (REClient, Goma): These tools distribute compilation across remote workers, but they only cover the compilation subset of the build. They don’t help with checkout times, linking, packaging, or the many non-compilation steps in an AOSP build.

Ccache: Local cache with a C/C++ compiler wrapper. This improves repeat builds on the same machine, but does not address other programming languages, checkout time or non-compilation build steps.

Throwing more hardware at it: Scaling from 8 to 192 vCPUs reduces total checkout-and-build time from about 5.5 hours to ~40 minutes, a meaningful improvement, but with sharply diminishing returns and a significant increase in cost.

While each of these approaches has its merits, none address the problem end to end.
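The diminishing returns of adding hardware can be read directly off the Intel benchmark tables above. The sketch below computes the speedup relative to the 8 vCPU run and a rough scaling efficiency (speedup divided by the increase in vCPUs). The timings and costs are copied from the tables; the efficiency metric is our own illustrative choice, and because the runs span different instance families and storage types, the comparison is only approximate.

```python
def to_seconds(hhmmss: str) -> int:
    """Convert an hh:mm:ss string to seconds."""
    hours, minutes, seconds = (int(part) for part in hhmmss.split(":"))
    return hours * 3600 + minutes * 60 + seconds


# (instance type, vCPUs, total time incl. setup, total cost in USD), from the tables above.
RUNS = [
    ("r8i.2xlarge", 8, "05:30:48", 3.98),
    ("m8id.8xlarge", 32, "01:30:44", 3.80),
    ("m8id.16xlarge", 64, "01:04:07", 5.37),
    ("m8id.32xlarge", 128, "00:39:32", 6.62),
    ("i7i.48xlarge", 192, "00:38:02", 13.70),
]


def scaling_report(runs):
    """Return (name, vcpus, speedup, efficiency, cost) relative to the first run."""
    _, base_vcpus, base_time, _ = runs[0]
    base_seconds = to_seconds(base_time)
    rows = []
    for name, vcpus, total, cost in runs:
        speedup = base_seconds / to_seconds(total)
        efficiency = speedup / (vcpus / base_vcpus)
        rows.append((name, vcpus, speedup, efficiency, cost))
    return rows


for name, vcpus, speedup, efficiency, cost in scaling_report(RUNS):
    print(f"{name:>14} {vcpus:>4} vCPU  {speedup:4.1f}x  efficiency {efficiency:.2f}  ${cost:.2f}")
```

On these numbers, 24 times the vCPUs yields well under a 10x speedup, while the cost per run more than triples.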

Enter SourceFS from Source.dev

SourceFS takes a fundamentally different approach. Rather than optimizing individual build steps or migrating to a new build system, SourceFS is a high-performance virtual filesystem and an intelligent backend cache, written in Rust, that accelerates both the checkout and the build phases. Critically, it requires near-zero migration overhead and works seamlessly with existing Git, Repo, and build toolchains.

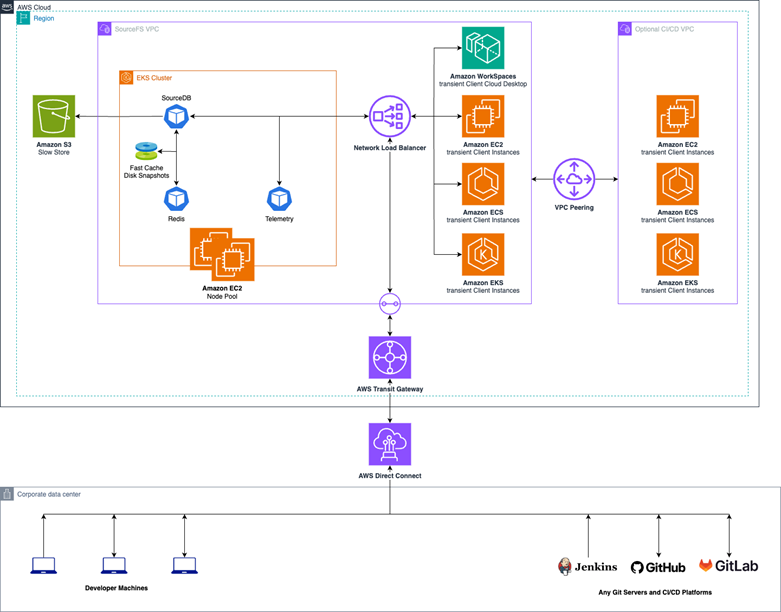

SourceFS integrates directly into your existing infrastructure, from CI pipelines running on Amazon EC2, Amazon ECS or Amazon EKS to developer environments, and can be mounted on Linux kernel 6.9 or newer. Its organization-wide cache can be used to speed up builds across both on-prem machines and cloud-based workspaces, including Amazon WorkSpaces.

Figure 2 – SourceFS architecture when deployed in AWS.

SourceFS accelerates every step of checking out and building the AOSP codebase, while scaling effortlessly to even larger real-world codebases, even beyond AOSP. It achieves this through two core mechanisms:

Virtual checkouts

Instead of downloading the entire 200 GB source tree, SourceFS transparently creates a virtual file representation of the codebase. Files are materialized on demand, only when a build step or developer actually accesses them. By eliminating the need to download hundreds of gigabytes of untouched files, SourceFS delivers 10–20x faster checkouts.

Besides faster checkouts and lower storage requirements, this unlocks an important property that sometimes gets overlooked. Since checkouts take significantly less space (2.1 GB for AOSP 16), using an in-memory file system becomes a viable build option, as presented below.
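The idea of on-demand materialization can be illustrated with a toy model. This is a conceptual sketch only, not SourceFS's implementation (which is a filesystem written in Rust): the `VirtualCheckout` class, the manifest layout, and the `fetch_blob` backend are all hypothetical names introduced for illustration.

```python
from typing import Callable, Dict, List


class VirtualCheckout:
    """Toy model: the file listing is known up front, contents are fetched on first read."""

    def __init__(self, manifest: Dict[str, str], fetch_blob: Callable[[str], bytes]) -> None:
        self._manifest = manifest      # path -> content hash (hypothetical layout)
        self._fetch_blob = fetch_blob  # hypothetical remote backend
        self._materialized: Dict[str, bytes] = {}

    def listdir(self) -> List[str]:
        # Listing files needs only the manifest, never the file contents.
        return sorted(self._manifest)

    def read(self, path: str) -> bytes:
        # Fetch the content the first time a path is read, then serve it locally.
        if path not in self._materialized:
            self._materialized[path] = self._fetch_blob(self._manifest[path])
        return self._materialized[path]

    def materialized_bytes(self) -> int:
        return sum(len(data) for data in self._materialized.values())


# In-memory stand-in for a remote blob store.
BACKEND = {"h1": b"int main() { return 0; }\n", "h2": b"x" * 1_000_000}
fetches: List[str] = []


def fetch(content_hash: str) -> bytes:
    fetches.append(content_hash)
    return BACKEND[content_hash]


checkout = VirtualCheckout({"src/main.c": "h1", "prebuilts/unused.bin": "h2"}, fetch)
checkout.listdir()
checkout.read("src/main.c")
checkout.read("src/main.c")  # second read is served locally
print(fetches, checkout.materialized_bytes())
```

The large file that nothing reads is never downloaded, which is the property that keeps the on-disk footprint small.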

Build caching and replay

To accelerate builds, every build step executed under SourceFS runs inside a lightweight sandbox that precisely records all inputs, outputs, and environment variables. On subsequent builds, SourceFS identifies build steps whose inputs match results stored in the cache and replays their cached outputs, skipping redundant work entirely.

This isn’t limited to compilation. SourceFS covers 99.9% of build steps – including linking, packaging, generating documentation, and any other build step. Cached results are shared across the entire organization. As soon as one developer or CI agent builds a new component, every subsequent execution of a build step, with the same inputs, reuses the results instantly.
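A common way to implement this kind of replay is a content-addressed cache keyed by everything a step depends on. The sketch below shows that general technique under our own assumptions; it is not SourceFS's actual cache format or API. `StepCache`, `step_key`, and `fake_compile` are hypothetical names, and a real system would also need sandboxing to be sure the recorded inputs are complete.

```python
import hashlib
import json
from typing import Callable, Dict, List

Files = Dict[str, bytes]


def step_key(command: List[str], input_digests: Dict[str, str], env: Dict[str, str]) -> str:
    """Derive a cache key from the command, input content hashes, and environment."""
    payload = json.dumps(
        {"command": command, "inputs": input_digests, "env": env}, sort_keys=True
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


class StepCache:
    """Toy shared cache: replays stored outputs when a step's key has been seen before."""

    def __init__(self) -> None:
        self._outputs: Dict[str, Files] = {}
        self.hits = 0
        self.misses = 0

    def run(self, command: List[str], inputs: Files, env: Dict[str, str],
            execute: Callable[[Files], Files]) -> Files:
        digests = {path: hashlib.sha256(data).hexdigest() for path, data in inputs.items()}
        key = step_key(command, digests, env)
        if key in self._outputs:
            self.hits += 1  # replay: skip execution entirely
            return self._outputs[key]
        self.misses += 1    # first time: execute and record the outputs
        outputs = execute(inputs)
        self._outputs[key] = outputs
        return outputs


def fake_compile(inputs: Files) -> Files:
    # Stand-in for a real build step.
    return {"out/a.o": inputs["a.c"].upper()}


cache = StepCache()
env = {"CFLAGS": "-O2"}
cache.run(["cc", "-c", "a.c"], {"a.c": b"int x;"}, env, fake_compile)
cache.run(["cc", "-c", "a.c"], {"a.c": b"int x;"}, env, fake_compile)  # identical inputs
cache.run(["cc", "-c", "a.c"], {"a.c": b"int y;"}, env, fake_compile)  # changed source
print(cache.hits, cache.misses)  # 1 2
```

Because the key covers the command, inputs, and environment rather than the language or tool, the same mechanism applies to linking, packaging, or documentation steps.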

SourceFS enables using EC2 Spot Instances for AOSP builds

SourceFS makes EC2 Spot Instances a viable option. Spot Instances use spare, unused compute capacity and offer up to 90% cost savings. However, they can be reclaimed with just two minutes’ notice, making them impractical to use for long-running, non-interruptible AOSP builds.

SourceFS changes this. Because builds complete so quickly, the likelihood of a Spot instance being reclaimed mid-build is significantly lower. And even when a reclaim does occur, builds can resume seamlessly, replaying the work already completed and continuing from where they left off.
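To see why build duration matters so much here, consider a simple back-of-the-envelope model. It assumes interruptions arrive as a Poisson process with a constant rate, which is our simplification and not how AWS describes Spot reclamation, and the rate of 0.05 interruptions per hour is a purely hypothetical value. The two durations are the m8id.8xlarge baseline and SourceFS totals from the results below.

```python
import math


def to_hours(hhmmss: str) -> float:
    """Convert an hh:mm:ss string to fractional hours."""
    hours, minutes, seconds = (int(part) for part in hhmmss.split(":"))
    return hours + minutes / 60 + seconds / 3600


def interruption_probability(build_hours: float, interruptions_per_hour: float) -> float:
    """P(at least one interruption during the build) under a constant-rate Poisson model."""
    return 1.0 - math.exp(-interruptions_per_hour * build_hours)


ASSUMED_RATE = 0.05  # hypothetical interruptions per instance-hour

baseline = interruption_probability(to_hours("01:30:44"), ASSUMED_RATE)
with_sourcefs = interruption_probability(to_hours("00:04:58"), ASSUMED_RATE)
print(f"baseline: {baseline:.1%}, with SourceFS: {with_sourcefs:.2%}")
```

Whatever the real rate is, under this model the exposure shrinks roughly in proportion to the build time.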

The results

The combined effect is dramatic. Below are the times for a clean AOSP 16 checkout-and-build with full replay:

Intel

| Amazon EC2 instance type | Total time incl. setup (hh:mm:ss): Baseline | Total time incl. setup: SourceFS | Checkout time (hh:mm:ss): Baseline | Checkout time: SourceFS | Build time (hh:mm:ss): Baseline | Build time: SourceFS | Total cost (USD): Baseline | Total cost: SourceFS (On-demand) | Total cost: SourceFS (Spot) |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| m8id.8xlarge (32 vCPU; 128 GB memory) | 01:30:44 | 00:04:58 (-94.5%) | 00:13:18 | 00:01:02 (-92.2%) | 01:17:18 | 00:03:04 (-96.0%) | $3.80 | $0.21 (-94.5%) | $0.06 (-98.4%) |
| m8id.16xlarge (64 vCPU; 256 GB memory) | 01:04:22 | 00:04:26 (-93.1%) | 00:14:56 | 00:01:05 (-92.7%) | 00:48:14 | 00:02:29 (-94.8%) | $5.39 | $0.37 (-93.1%) | $0.07 (-98.7%) |
| m8id.32xlarge (128 vCPU; 512 GB memory) | 00:39:32 | 00:04:51 (-87.7%) | 00:12:52 | 00:01:11 (-90.8%) | 00:26:32 | 00:02:42 (-89.8%) | $6.63 | $0.81 (-87.7%) | $0.18 (-97.3%) |

AOSP 16 checkout-and-build time across Baseline (without SourceFS), SourceFS, and SourceFS using Spot, on Intel EC2 instances, using local NVMe storage. *Spot price varies

Today, AWS doesn’t offer locally attached storage for the current or previous generation of AMD chips, which would demonstrate the best build times. However, we can use even faster storage…

SourceFS enables using tmpfs for AOSP builds

What about even faster builds?

As SourceFS drastically reduces disk usage, in-memory filesystems become a practical option for builds. For example, building AOSP 16 with full replay requires only 16 GB of storage, down from over 300 GB, as SourceFS materializes only the source files, toolchains, and artifacts actually used by the build.

This makes it feasible to use fast in-memory filesystems, and since artifacts are uploaded upon completion, there is no real downside to using volatile storage. In this setup, we mount a 20 GB tmpfs as the in-memory AOSP build directory for the SourceFS scenario:

AMD

| Amazon EC2 instance type | Total time incl. setup (hh:mm:ss): Baseline | Total time incl. setup: SourceFS | Checkout time (hh:mm:ss): Baseline | Checkout time: SourceFS | Build time (hh:mm:ss): Baseline | Build time: SourceFS | Total cost (USD): Baseline | Total cost: SourceFS (On-demand) | Total cost: SourceFS (Spot) |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| r8a.2xlarge (8 vCPU; 64 GB memory) | 03:37:04 | 00:07:01 (-96.8%) | 00:41:26 | 00:01:13 (-97.0%) | 02:54:34 | 00:04:55 (-97.1%) | $2.98 | $0.09 (-97.0%) | $0.04 (-98.7%) |
| m8a.4xlarge (16 vCPU; 64 GB memory) | 02:23:36 | 00:05:16 (-96.3%) | 00:47:05 | 00:01:10 (-97.5%) | 01:35:34 | 00:03:15 (-96.6%) | $2.92 | $0.10 (-96.6%) | $0.05 (-98.3%) |
| m8a.8xlarge (32 vCPU; 128 GB memory) | 01:22:03 | 00:04:32 (-94.5%) | 00:27:28 | 00:01:13 (-95.5%) | 00:53:28 | 00:02:29 (-95.3%) | $2.34 | $0.18 (-92.3%) | $0.06 (-97.4%) |
| m8a.16xlarge (64 vCPU; 256 GB memory) | 01:06:01 | 00:04:10 (-93.7%) | 00:29:18 | 00:01:17 (-95.6%) | 00:35:32 | 00:02:02 (-94.2%) | $5.19 | $0.32 (-93.8%) | $0.11 (-97.9%) |

AOSP 16 checkout-and-build time across Baseline (without SourceFS), SourceFS, and SourceFS using Spot, on AMD EC2 instances, using tmpfs storage. *Spot price varies
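For readers who want to reproduce the tmpfs setup used for the table above, the sketch below builds the standard Linux `mount` invocation for a size-limited tmpfs and checks the headroom against the 16 GB footprint quoted in the text. The mount point `/mnt/aosp-build` is a hypothetical path, the command is only constructed here rather than executed (running it requires root), and the exact options used in the benchmark are not stated in this post.

```python
import shlex
from typing import List

BUILD_FOOTPRINT_GB = 16  # from the text: storage needed for a full-replay AOSP 16 build
TMPFS_SIZE_GB = 20       # from the text: tmpfs size used in the benchmark


def tmpfs_mount_argv(mount_point: str, size_gb: int) -> List[str]:
    """Build the argv for mounting a tmpfs capped at size_gb gigabytes."""
    return ["mount", "-t", "tmpfs", "-o", f"size={size_gb}G", "tmpfs", mount_point]


def headroom_gb(tmpfs_gb: int, footprint_gb: int) -> int:
    """Space left in the tmpfs once the build footprint is accounted for."""
    return tmpfs_gb - footprint_gb


argv = tmpfs_mount_argv("/mnt/aosp-build", TMPFS_SIZE_GB)  # hypothetical mount point
print(shlex.join(argv))
print(headroom_gb(TMPFS_SIZE_GB, BUILD_FOOTPRINT_GB), "GB headroom")
```

Note that tmpfs pages count against instance memory, so on the 64 GB instances in the table a fully used 20 GB tmpfs leaves about 44 GB for the build itself.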

With SourceFS, even an 8 vCPU instance builds AOSP nearly 10x faster than a traditional build on a 64 vCPU machine.

Using SourceFS on AWS in combination with EC2 Spot and an in-memory file system demonstrates the optimum. Taking the EC2 m8a.8xlarge instance as the baseline (a good balance between performance and cost), AOSP 16 checkout-and-build time drops by 94.5% (from 1h 22min 03sec to 0h 4min 32sec, 18x faster) and cost drops by 97.4% (from $2.34 to $0.06, 39x cheaper).
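The headline ratios for the m8a.8xlarge row can be checked with a few lines of arithmetic. The inputs below are the table values for that instance; the helper names are ours.

```python
def to_seconds(hhmmss: str) -> int:
    """Convert an hh:mm:ss string to seconds."""
    hours, minutes, seconds = (int(part) for part in hhmmss.split(":"))
    return hours * 3600 + minutes * 60 + seconds


def improvement(baseline: float, optimized: float):
    """Return (speedup factor, percentage reduction) going from baseline to optimized."""
    return baseline / optimized, (1 - optimized / baseline) * 100


time_factor, time_reduction = improvement(to_seconds("01:22:03"), to_seconds("00:04:32"))
cost_factor, cost_reduction = improvement(2.34, 0.06)  # baseline vs SourceFS on Spot

print(f"time: {time_factor:.1f}x faster ({time_reduction:.1f}% lower)")
print(f"cost: {cost_factor:.1f}x cheaper ({cost_reduction:.1f}% lower)")
```

This reproduces the 18x and 39x figures, along with the 94.5% and 97.4% reductions shown in the table.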

Note: The benchmarks above assume maximum cache hit rate. In practice, new changes introduce new code that must be compiled and cached before it can be replayed. This typically affects only a small fraction of the ~200k build steps in the AOSP 16 build. All remaining steps are replayed and accelerated by SourceFS, effectively turning clean builds into incremental ones.

Running SourceFS on AWS

SourceFS offers two deployment models on AWS:

SaaS (Managed): Source.dev hosts and manages the caching infrastructure. Teams connect their AWS-based CI/CD pipelines and start building immediately.

BYOC (Bring Your Own Cloud): SourceFS is deployed entirely within your own AWS account. This model integrates with enterprise security requirements such as VPC isolation, firewalls, and SSO/SAML authentication, and it supports private pricing agreements.

Both models use AWS compute (Amazon EC2), and the efficiency gains from SourceFS fundamentally change the instance sizing calculus. The organization-wide cache also creates a network effect: builds get faster as more developers and CI agents contribute to the shared cache. The more your team builds, the faster everyone’s builds become.

Getting started

If your organization builds on AOSP and you’re looking to dramatically reduce build times and infrastructure costs on AWS, here’s how to get started:

- Request a demo at Source.dev to see SourceFS in action with your own codebase.

- Evaluate BYOC deployment if your security and compliance requirements call for running SourceFS entirely within your own AWS account.

SourceFS integrates with existing workflows – no build system migration, no rewriting of build rules, no multi-month onboarding. Teams typically see results within days of deployment.

If you want to talk to an AWS expert on this topic or request a demo, please reach out to us by emailing fast-android-builds-on-aws@amazon.com.

Conclusion

The release of AOSP 16 underscores a reality that automotive OEMs, smartphone manufacturers, and IoT companies have been grappling with for years: AOSP codebases are enormous, growing, and outpacing traditional build approaches.

SourceFS from Source.dev, running on AWS, eliminates the two biggest bottlenecks, checkout time and redundant build work, through virtual file materialization and organization-wide build caching. The result is an up to 18x faster full checkout-and-build cycle, compressing a ~3-hour cycle to under 5 minutes, at up to 39x lower cost.

The combination of AWS cloud infrastructure and SourceFS innovation means that the AOSP build bottleneck, long accepted as an unavoidable cost of working with Android at scale, can now be effectively addressed.

To learn more about running AOSP builds on AWS with SourceFS, visit Source.dev or contact your AWS account team.