AWS for Industries

Intent-Based Nokia Network Slicing Powered by Amazon Bedrock: Enabling Intelligent, Adaptive 5G Slicing

Introduction

The network slicing market is accelerating as leading operators offer wide-scale services to enterprises and consumers. Network slicing is a 5G technology that partitions a single physical network into multiple, isolated virtual networks (slices), each customized for specific use cases like gaming, Extended Reality (XR), Internet of Things (IoT), and enterprise applications. Network slicing provides secure, high-capacity, low-latency, and reliable end-to-end connectivity across the entire path, from the device through RAN, transport, and core to the applications, while preserving isolation and predictable quality of service. Slices can be tailored to specific customer use cases and application profiles through parameters such as bitrate, quality, latency, traffic routing, and security, so that each workload receives the connectivity it needs.

Mobile network usage varies with geographical area, movement of people, time of day, and events. These factors complicate network design and deployment because they generate unpredictable demand patterns that static network configurations cannot address efficiently; special events like concerts or emergencies, for example, can cause sudden traffic surges in localized areas. Additionally, network load, number of active users, and applications like High-Definition (HD) streaming, low-latency gaming, and interactive social media content affect network performance, capacity, coverage, and spectral efficiency. This variability forces operators to choose between over-provisioning infrastructure (increasing capital and operational costs) and accepting degraded service quality during peak periods. Traditional network management and rule-based automation cannot keep pace with this complexity, because they would require thousands of pre-defined rules to cover every scenario permutation. Agentic Artificial Intelligence (AI), with autonomous reasoning and adaptability, is therefore essential for intelligent, scalable network slicing. Deploying dynamic network policies tailored to specific areas and use cases enables more efficient use of network resources and a better customer experience.

Nokia and AWS teams have been working together to combine advanced network slicing and AI technologies. Intent-based 5G-Advanced slicing with agentic AI enables operators to provide network slicing services where and when needed, responding to real-world situations and enabling autonomous intelligence. With Amazon Bedrock services and the Strands Agents SDK, network slicing decisions can now draw on both intrinsic data (such as performance metrics) and extrinsic data (such as real-time traffic patterns).

Nokia’s agentic AI-powered solution on AWS introduces intent-based network slicing that continuously monitors network KPIs, infers real-world contextual data from multiple sources, and automatically enforces and adjusts network policies to meet service-level agreements. The integrated solution uses agentic AI to coordinate data analytics, inferencing, and slicing policies. The Agentic AI mashApp (Nokia’s Agentic AI Web App) further leverages open internet data, including events, timetables, incidents, traffic, locations, maps, and weather for different slicing use cases and applications.

Amazon Bedrock provides a foundation for Nokia’s agentic AI-powered network slicing solution, delivering scalable AI infrastructure with access to foundation models that enable reasoning capabilities without managing underlying infrastructure. Amazon Bedrock’s optimized inference capabilities achieve the response times critical for instant network slicing decisions, while its multi-model flexibility allows the solution to leverage different AI capabilities for specific tasks: reasoning models for complex policy decisions, embedding models for contextual analysis, and specialized models for demand forecasting. The Amazon Bedrock service’s enterprise-grade security with built-in encryption, VPC isolation, and compliance certifications ensures telecommunications operators can handle sensitive network data while meeting regulatory requirements. Additionally, Bedrock’s serverless architecture and pay-per-use pricing enable dynamic scaling based on network demand, optimizing costs while maintaining performance during peak periods, and its native integration with AWS analytics and monitoring services streamlines end-to-end AI pipelines for intelligent slicing decisions.

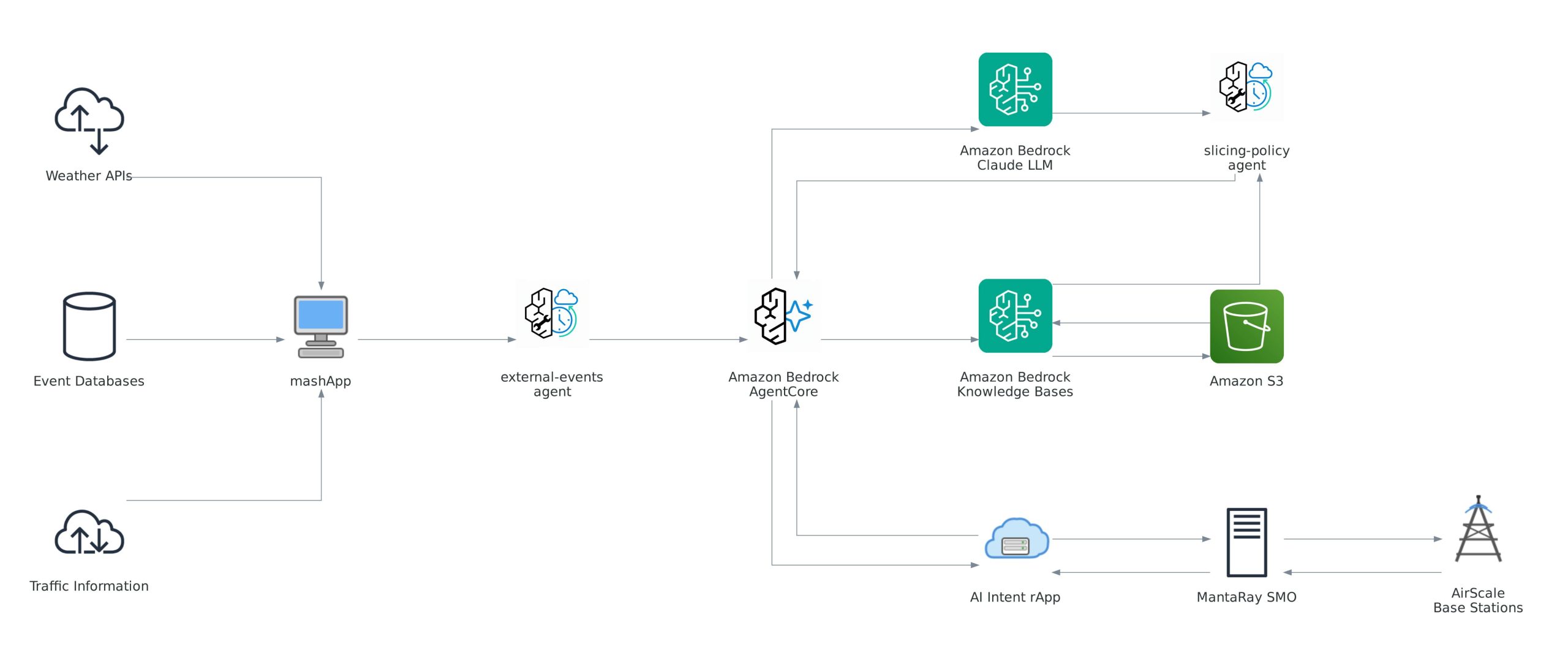

Technical Architecture Overview

The solution employs a multi-layered architecture integrating Nokia’s Radio Access Network (RAN) infrastructure with AWS AI services to enable autonomous, intent-based network optimization.

At the foundation, Nokia’s infrastructure layer consists of AirScale Base Stations, which generate real-time performance counters (including throughput, latency, and resource utilization metrics) and enforce network slicing policies. Nokia’s MantaRay Service Management and Orchestration (SMO) aggregates real-time performance data from base stations and executes configuration changes recommended by the AI intent RAN application (rApp). This rApp leverages specialized Nokia AI agents running on Amazon Bedrock AgentCore to analyze network data, apply reasoning, and generate optimized slicing configurations that will be executed by MantaRay SMO.

The AWS AI Services layer provides intelligent processing capabilities through Amazon Bedrock, which delivers foundation model capabilities using the Claude LLM for intelligent parameter estimation and reasoning. Amazon Bedrock Knowledge Bases stores and retrieves historical RAN parameters from Amazon S3, enabling context-aware recommendations, while Amazon Bedrock AgentCore powers the two specialized agents, slicing-policy-agent and external-events-agent, built using Strands SDK. Amazon S3 serves as the repository for historical performance data, network configurations, and training datasets that inform the AI decision-making process.

The Agentic AI components complete the architecture with the Agentic AI mashApp, which queries open internet data sources including weather APIs, event databases, and traffic information, formatting external context as JSON for processing. The architecture leverages two specialized Nokia AI agents with distinct responsibilities: the external-events-agent ingests and processes real-world contextual data including weather conditions, scheduled events, traffic incidents, and location-specific information, while the slicing-policy-agent receives intent parameters and fuses them with historical performance data and external context to compute optimized RAN configurations.

This integrated architecture enables seamless data flow from network infrastructure through intelligent AI processing to autonomous policy deployment, creating a closed-loop system that continuously optimizes network performance without manual intervention.

Figure 1: Technical Architecture Overview

Amazon Bedrock AgentCore: Accelerating Production-Ready Network Slicing

Amazon Bedrock AgentCore provides the foundational infrastructure that enables this intent-based network slicing solution to move from prototype to production with enterprise-grade capabilities.

AgentCore Runtime delivers a secure, serverless environment purpose-built for deploying and scaling dynamic AI agents, offering extended runtime support for long-running network optimization workflows, true session isolation for secure execution, fast cold starts for rapid response to network changes, and automatic scaling without infrastructure management. This reduces the need to build and manage complex infrastructure from scratch, allowing the team to focus on network optimization logic.

AgentCore Memory enables agents to maintain intelligent context across sessions through short-term and long-term memory for tracking network performance patterns and historical configurations. This memory capability is essential for the slicing-policy-agent to combine intent values, historical data, and external context to predict optimal RAN configurations, reducing the need to manually define instructions for every network condition permutation.

AgentCore Gateway transforms existing APIs into agent-ready tools with minimal integration code. It provides connectivity to third-party tools and data sources through the Model Context Protocol (MCP), fine-grained identity-aware controls, and unified tool access, so that both agents can securely interact with MantaRay SMO APIs, external data sources, and Amazon Bedrock Knowledge Bases.

AgentCore Observability provides visibility through real-time monitoring via CloudWatch dashboards, complete traceability of agent reasoning for debugging and compliance, and OpenTelemetry-compatible telemetry, crucial for telecommunications operators to audit agent decisions and ensure reliable production operations.

AgentCore’s composable services accelerate development: integration requires only a few lines of code for applications built on frameworks like LangGraph and Strands SDK, which enabled rapid development of the slicing-policy-agent and external-events-agent following the Nokia Tampere workshop.

AgentCore supports multi-agent collaboration through Agent-to-Agent (A2A) protocol support and parallel orchestration, demonstrating how specialized agents, one for parameter estimation and one for external context, can collaborate to achieve complex network optimization that neither could accomplish alone. Within Nokia’s intent-based network slicing architecture, these AgentCore services work together to power the two specialized Nokia AI agents.

The slicing-policy-agent leverages AgentCore Runtime for secure, scalable execution of long-running optimization workflows, uses AgentCore Memory to maintain context about historical network performance patterns and previous RAN configurations across sessions, accesses Amazon Bedrock Knowledge Bases through AgentCore Gateway to retrieve relevant historical parameters from S3, and relies on AgentCore Observability for complete traceability of parameter estimation decisions. Similarly, the external-events-agent utilizes AgentCore Runtime for continuous monitoring of external data sources, employs AgentCore Memory to track evolving contextual patterns (weather trends, recurring events, traffic patterns), connects to third-party APIs through AgentCore Gateway using Model Context Protocol (MCP), and provides full visibility into its reasoning through AgentCore Observability.

Both agents communicate via the standardized Agent-to-Agent (A2A) protocol supported by AgentCore, enabling them to collaborate seamlessly. The external-events-agent shares contextual intelligence with the slicing-policy-agent, which combines this with intent parameters and historical data to generate optimized RAN configurations that are then deployed autonomously through MantaRay SMO APIs, all while maintaining complete audit trails for telecommunications compliance requirements.

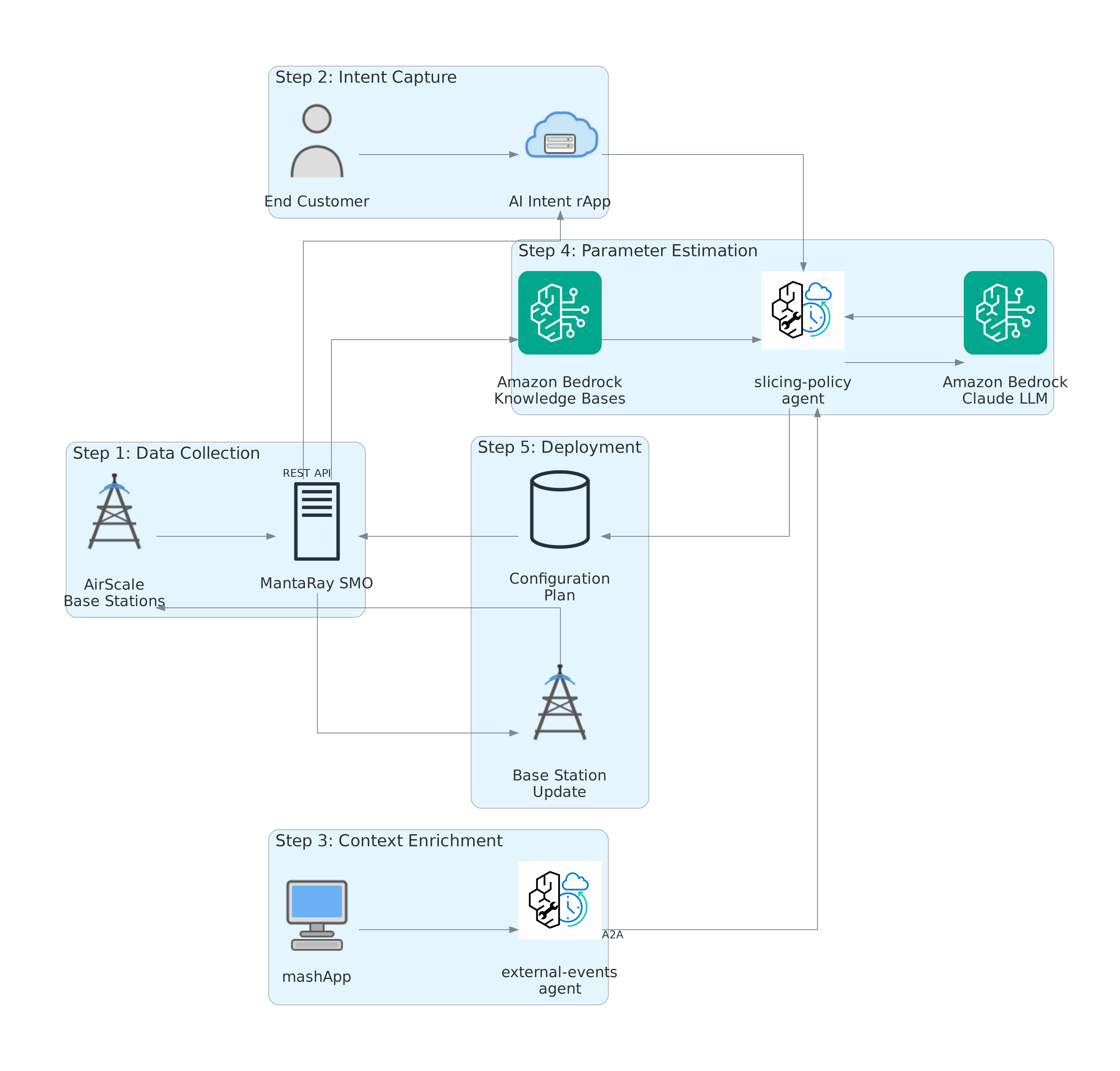

Intelligent Slicing Data Flow Architecture

The intelligent slicing system operates through a coordinated five-step workflow that integrates network infrastructure, AI reasoning, and autonomous policy deployment. This end-to-end data flow demonstrates how intent-based requests are transformed into optimized RAN configurations through the collaboration of specialized AI agents, external context enrichment, and historical performance analysis. Figure 2 illustrates this complete workflow, showing how data moves from initial collection through intelligent processing to autonomous deployment, creating a closed-loop system that continuously adapts network slices to real-world conditions.

Step 1: Data Collection and Aggregation

Base stations continuously collect performance counters measuring bitrate, latency, and resource utilization, and continuously schedule traffic according to the active slicing configurations. MantaRay SMO aggregates this counter data from multiple base stations across the network, and historical performance data is stored in Amazon S3, where Amazon Bedrock Knowledge Bases makes it available for retrieval.
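The aggregation in this step can be pictured with a small sketch. The Python below is illustrative only: the `CounterSample` record, its field names, and the `aggregate_counters` function are assumptions made for this example and do not reflect the actual MantaRay SMO data model or API. It simply averages, per cell, the three counter families named above.

```python
from collections import defaultdict
from dataclasses import dataclass
from statistics import mean


@dataclass(frozen=True)
class CounterSample:
    """One hypothetical counter reading reported by a base station."""
    cell_id: str
    bitrate_mbps: float
    latency_ms: float
    resource_utilization: float  # fraction of radio resources in use, 0.0 to 1.0


def aggregate_counters(samples):
    """Group samples by cell and average each counter within the group."""
    by_cell = defaultdict(list)
    for sample in samples:
        by_cell[sample.cell_id].append(sample)
    return {
        cell_id: {
            "bitrate_mbps": mean(s.bitrate_mbps for s in group),
            "latency_ms": mean(s.latency_ms for s in group),
            "resource_utilization": mean(s.resource_utilization for s in group),
        }
        for cell_id, group in by_cell.items()
    }


# Made-up readings for two cells, used only to exercise the function.
samples = [
    CounterSample("cell-1", 100.0, 10.0, 0.50),
    CounterSample("cell-1", 200.0, 20.0, 0.75),
    CounterSample("cell-2", 50.0, 30.0, 0.25),
]
print(aggregate_counters(samples))
```

A production aggregator would likely also track time windows and percentiles; plain averages are used here only to keep the sketch short.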

Step 2: Intent Capture

End customers express their bandwidth and latency requirements through text, mobile applications (for individual customers), or operator portals (for enterprise customers). These intents are captured as structured data (for example, 150 Mbps representing desired downlink throughput), with the AI Intent rApp receiving intent values as integers or JSON messages.
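As a minimal sketch of how an intent arriving either as a bare integer or as a JSON message might be normalized into one structure, consider the Python below. The `SliceIntent` type, the `parse_intent` function, and the JSON keys `downlink_mbps` and `max_latency_ms` are hypothetical names chosen for illustration; the actual rApp message schema is not described in this post.

```python
import json
from dataclasses import dataclass
from typing import Optional, Union


@dataclass(frozen=True)
class SliceIntent:
    """Hypothetical normalized intent: desired downlink throughput and latency."""
    downlink_mbps: int
    max_latency_ms: Optional[int] = None


def parse_intent(raw: Union[int, str]) -> SliceIntent:
    """Accept an integer (Mbps) or a JSON string and return a SliceIntent."""
    if isinstance(raw, bool):
        # bool is a subclass of int in Python; reject it explicitly.
        raise TypeError("intent must be an integer or a JSON string")
    if isinstance(raw, int):
        intent = SliceIntent(downlink_mbps=raw)
    elif isinstance(raw, str):
        payload = json.loads(raw)
        intent = SliceIntent(
            downlink_mbps=int(payload["downlink_mbps"]),
            max_latency_ms=payload.get("max_latency_ms"),
        )
    else:
        raise TypeError("intent must be an integer or a JSON string")
    if intent.downlink_mbps <= 0:
        raise ValueError("downlink throughput must be positive")
    return intent


# The 150 Mbps example from the text, in both accepted forms.
print(parse_intent(150))
print(parse_intent('{"downlink_mbps": 150, "max_latency_ms": 20}'))
```

Normalizing early means the downstream agent logic only ever handles one shape of intent, regardless of which channel the customer used.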

Step 3: External Context Enrichment

The Agentic AI mashApp queries open internet data sources for relevant contextual information. The external-events-agent retrieves weather, events, traffic, and location data formatted as JSON (for example, {"lat": 60.1921, "lon": 24.9458, "timezone": "Europe/Helsinki", "temp": -1.44, "humidity": 97}). This contextual data is then combined with network performance metrics.

Step 4: Intelligent Parameter Estimation

The AI Intent rApp fetches aggregated counter data from MantaRay SMO. The slicing-policy-agent combines three critical data sources:

- Intent values: Desired performance parameters

- Historical RAN parameters: From Amazon Bedrock Knowledge Bases stored in S3

- External context: From the external-events-agent

After data is formatted for processing, Amazon Bedrock with Claude LLM processes the combined dataset to estimate optimized radio parameters.
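To make the fusion of the three data sources concrete, the sketch below assembles them into a single prompt string that could be handed to a foundation model. It is an assumption-laden illustration: `build_estimation_prompt`, the payload keys, the instruction wording, and the placeholder `example_parameter` are all hypothetical, and the actual model invocation through Amazon Bedrock is deliberately left out.

```python
import json

# Hypothetical instruction text; the real prompt used by the agent is not published.
INSTRUCTION = (
    "Estimate optimized radio parameters for the slice described below. "
    "Respond with a JSON object only."
)


def build_estimation_prompt(intent, historical_params, external_context):
    """Combine intent, historical RAN parameters, and external context."""
    payload = {
        "intent": intent,
        "historical_ran_parameters": historical_params,
        "external_context": external_context,
    }
    # sort_keys gives a stable layout, which makes prompts easier to diff and audit.
    return INSTRUCTION + "\n" + json.dumps(payload, indent=2, sort_keys=True)


prompt = build_estimation_prompt(
    intent={"downlink_mbps": 150},
    # Placeholder history record; real RAN parameter names are not given in the text.
    historical_params=[{"cell_id": "cell-1", "example_parameter": 0.3}],
    # The sample context from Step 3.
    external_context={
        "lat": 60.1921,
        "lon": 24.9458,
        "timezone": "Europe/Helsinki",
        "temp": -1.44,
        "humidity": 97,
    },
)
print(prompt)
```

Keeping the three inputs as separate top-level keys preserves their provenance, which can help when tracing why the model proposed a given configuration.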

Step 5: Autonomous Deployment

The slicing-policy-agent inserts new estimated radio parameters into the MantaRay SMO configuration plan via REST API. MantaRay SMO then pushes new configurations to targeted base stations, where updated RAN slicing policies are applied automatically. Network slices are dynamically adjusted without manual intervention.
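The REST interaction in this step might look roughly like the following sketch, which only builds the HTTP request and does not send it. Everything specific here is an assumption for illustration: the host `smo.example.invalid`, the `/plans/{plan_id}/changes` path, the body fields, and bearer-token authentication are hypothetical and are not taken from MantaRay SMO documentation.

```python
import json
import urllib.request

SMO_BASE_URL = "https://smo.example.invalid"  # hypothetical host


def build_plan_request(plan_id, cell_id, radio_parameters, token):
    """Build (but do not send) a request adding parameters to a configuration plan."""
    body = json.dumps(
        {"cellId": cell_id, "parameters": radio_parameters}
    ).encode("utf-8")
    return urllib.request.Request(
        url=f"{SMO_BASE_URL}/plans/{plan_id}/changes",  # hypothetical path
        data=body,
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {token}",
        },
        method="POST",
    )


request = build_plan_request(
    plan_id="plan-42",
    cell_id="cell-1",
    radio_parameters={"example_parameter": 0.35},  # placeholder parameter name
    token="REDACTED",
)
print(request.get_method(), request.full_url)
```

In a real deployment the request would be sent (for example with `urllib.request.urlopen`) and the response checked before the plan is activated; separating request construction from sending also makes the payload easy to log for audit purposes.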

Figure 2: Intelligent Slicing Data Flow

Agent Coordination and Orchestration

The two agents work in tandem through Amazon Bedrock AgentCore:

- The external-events-agent operates continuously, monitoring internet data sources to maintain current contextual awareness and defining slice intents accordingly.

- The slicing-policy-agent receives new intent targets from external-events-agent, queries Amazon Bedrock Knowledge Bases for historical data, and invokes Claude LLM for intelligent estimation.

The agents collaborate through the standardized Agent-to-Agent (A2A) protocol, dynamically exchanging information according to specific use case requirements detailed in the following section.
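The hand-off between the two agents can be illustrated with a simple message envelope. This sketch does not implement the A2A protocol or its wire format; the `ContextUpdate` class, its fields, and `handle_update` are hypothetical and only show the kind of information the external-events-agent might pass to the slicing-policy-agent.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class ContextUpdate:
    """Hypothetical message from the external-events-agent."""
    sender: str
    area: str
    intent_downlink_mbps: int
    context: dict = field(default_factory=dict)

    def to_json(self) -> str:
        return json.dumps(asdict(self), sort_keys=True)

    @classmethod
    def from_json(cls, raw: str) -> "ContextUpdate":
        return cls(**json.loads(raw))


def handle_update(raw: str) -> dict:
    """Receiving side: decode the message and extract the new intent target."""
    update = ContextUpdate.from_json(raw)
    return {
        "area": update.area,
        "target_downlink_mbps": update.intent_downlink_mbps,
        "context_keys": sorted(update.context),
    }


message = ContextUpdate(
    sender="external-events-agent",
    area="arena-district",  # made-up area label
    intent_downlink_mbps=150,
    context={"temp": -1.44, "humidity": 97},
).to_json()
print(handle_update(message))
```

Serializing to JSON at the boundary keeps the two agents decoupled, so either side could be redeployed independently as long as the message shape is preserved.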

Key Use Cases

Intent-Based Enterprise and Industrial Slicing

The solution measures live network KPIs such as bitrate and latency and autonomously adjusts RAN policies to meet enterprise SLAs across campuses, business parks, and city areas. This ensures slicing services for critical applications in manufacturing, IoT, drones, smart cities, hospitals, energy, transportation, and ports.
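A minimal sketch of the KPI-versus-SLA comparison that could trigger a policy adjustment is shown below. The function name `sla_gaps` and the dictionary keys are assumptions for illustration; the post does not describe how the solution evaluates SLAs internally.

```python
def sla_gaps(measured, sla):
    """Return how far each measured KPI falls short of its SLA target.

    An empty result means the SLA is currently met.
    """
    gaps = {}
    if measured["bitrate_mbps"] < sla["min_bitrate_mbps"]:
        gaps["bitrate_mbps"] = sla["min_bitrate_mbps"] - measured["bitrate_mbps"]
    if measured["latency_ms"] > sla["max_latency_ms"]:
        gaps["latency_ms"] = measured["latency_ms"] - sla["max_latency_ms"]
    return gaps


# Made-up measurements against a made-up enterprise SLA.
measured = {"bitrate_mbps": 120.0, "latency_ms": 25.0}
sla = {"min_bitrate_mbps": 150.0, "max_latency_ms": 20.0}
print(sla_gaps(measured, sla))
```

In the architecture described here, a non-empty gap would be one input to the slicing-policy-agent rather than a direct rule-based trigger, since the agent also weighs historical data and external context.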

On-Demand Slicing for Emergency Response and Premium 5G+/FWA

Agentic AI boosts network performance for selected 5G base stations to serve first responders and public safety authorities during emergencies, triggered by accidents and alarms reported by external data sources. The solution also preserves quality of service for premium 5G+ and Fixed Wireless Access (FWA) customers using gaming, streaming, XR, and AI applications during major traffic surges, adverse weather conditions, and environmental changes.

Mass Event Optimization

The system runs inference on network data and sets slicing policies for scheduled events, enabling 5G slicing for VIP spectators, payment applications, fan engagement, video broadcasting, and operational crews in arenas, parks, and conference centers.

Value Proposition

Amazon Bedrock provides the production-grade AI foundation for telecommunications-scale network slicing, reducing the complexity of building and managing AI systems from scratch. Bedrock’s serverless architecture with pay-per-use pricing enables dynamic scaling based on network demand while optimizing costs, achieving the response times critical for instant slicing decisions without infrastructure overhead. The service’s multi-model flexibility allows operators to leverage specialized AI capabilities (reasoning models for complex policy decisions, embedding models for contextual analysis, and forecasting models for demand prediction), while built-in security with VPC isolation, encryption, and compliance certifications helps operators handle sensitive network data in line with regulatory requirements. Combined with Amazon Bedrock AgentCore’s production-ready runtime, memory management, and observability capabilities, AWS accelerates development from prototype to production with only a few lines of integration code. Nokia and telecommunications operators can therefore focus on network optimization logic rather than AI infrastructure management, while maintaining the scalability, security, and reliability required for mission-critical network operations.

Built on the AWS services described above, this architecture leverages machine learning to predict optimal configurations, reducing the need to manually define RAN parameters for every permutation of network conditions, which would be cost-prohibitive and time-consuming. The system continuously learns from historical performance data stored in Amazon S3, adapts to real-world conditions through external data integration, and autonomously adjusts network slices when and where needed, reducing operational complexity and improving service quality. The intent-based orchestration provides this adaptability by combining historical data, intended parameters, and external events to predict optimal RAN slicing configurations for specific cell sites.

Technical Differentiators

- Autonomous Intelligence: The solution operates without explicit instructions for each network condition permutation, using foundation models to infer optimal parameters based on learned patterns.

- Multi-Source Data Fusion: By combining network performance metrics, external contextual data, and historical configurations, the system achieves situational awareness.

- Real-Time Adaptability: The agents operate continuously, enabling the response times required to react to changing network conditions and external events.

- Scalable Architecture: Built on Amazon Bedrock AgentCore and leveraging Amazon S3 for data storage, the solution scales across thousands of cell sites.

Conclusion

Nokia and AWS’s intent-based network slicing solution represents a shift in telecommunications by enabling context-aware, adaptive differentiated services. By combining Nokia’s RAN infrastructure with Amazon Bedrock’s AI capabilities, telecommunications providers can now deliver autonomous, adaptive network services that respond intelligently to real-world conditions.

This innovation unlocks new value streams for operators while supporting next-generation applications and differentiated services across enterprise, industrial, and consumer segments. The solution demonstrates how agentic AI can transform network operations into proactive, autonomous systems that continuously optimize performance, delivering services where and when customers need them.

To learn more, visit “Nokia and AWS showcase industry-first agentic AI-powered network slicing” on Nokia.com and the AWS for Telecom page. Contact us if you are interested in deploying this solution in your network, and visit us at Mobile World Congress 2026 to dive deeper into this solution and see our live demos.

Nokia R&D contributors: Elias Hagelberg, Rami Nurmoranta, Sirkku Greijula, Virag Toth, Mikko Tsokkinen, Jani Pikkarainen, Jani Lammi, Mika Uusitalo

AWS Telco Customer Solutions Engineering: Youssef Ibrahim, Fahad Ahmed