AWS Global Infrastructure and Sustainability Blog

Gemini delivers high performance, cloud-native trading API with AWS Local Zones

For latency-sensitive trading workloads, proximity to the exchange matters. Even single-digit millisecond differences in round-trip time can meaningfully influence the trading experience. This means that trading firms evaluating cloud infrastructure need to think carefully about instance placement, network configuration, and physical distance to execution venues. Gemini, a NASDAQ-listed exchange, wanted to take advantage of the operational efficiencies of a cloud operating model while meeting their latency and performance requirements.

In the late summer of 2025, Gemini extended its trading infrastructure to an AWS Local Zone in New York City (us-east-1-nyc-2a) and delivered a new WebSocket API that gives cloud-native traders sub-2ms connectivity to Gemini’s matching engine. Gemini implemented this improvement while preserving the scalability, security, and compliance posture of a managed cloud deployment. Trading clients can now stream trades, order book updates, and account balance updates efficiently with the lowest possible latency on Gemini. The new WebSocket API also provides full order management functionality.

This architecture provides:

- Lower latency: The new WebSocket API runs on top of Gemini’s new and improved messaging infrastructure in the AWS Local Zone, providing lower latency for both market data and order entry on AWS.

- Increased security and ease of setup: Through VPC Peering, customers can connect to Gemini’s trading system with minimal latency and enhanced security. The whole provisioning process is automated and self-service, with defined security controls in place, and can be completed in a matter of minutes.

Architecture overview

Gemini’s trading system is built for ultra-low latency (end-to-end latency below 500 microseconds). The trading system operates out of the Equinix NY5 data center in the New York metropolitan area — the heart of the global financial connectivity ecosystem. This environment supports ultra-low latency through deterministic network performance using the latest advances in hardware acceleration, routing optimization, and network infrastructure.

Trading clients (high-frequency trading firms, hedge funds, and other trading firms) previously had two distinct options to connect to Gemini, each with its own balance of latency, cost, and operational flexibility.

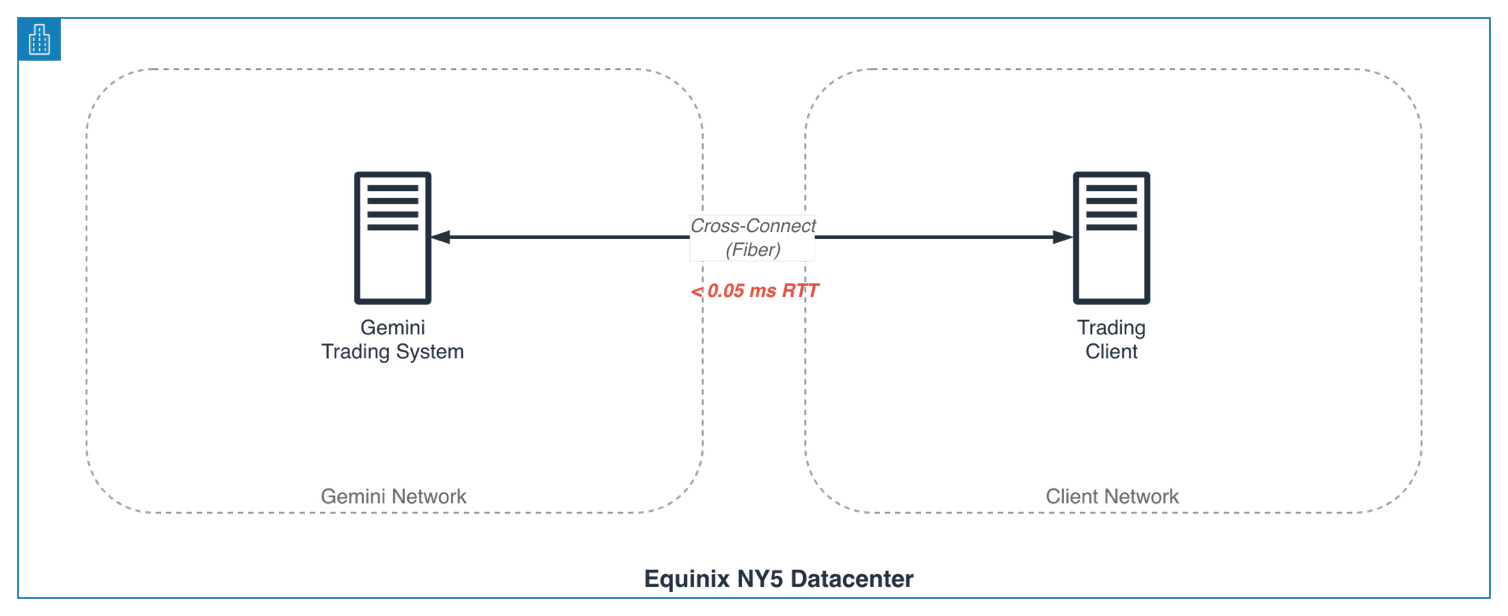

Option 1: Ultra-low-latency access via physical cross-connects

In this configuration, both Gemini’s trading system and the client’s trading servers are located within the same Equinix NY5 facility. A dedicated fiber link (cross-connect) is provisioned directly between Gemini’s network cage and the client’s rack, creating a private network connection.

This setup adds minimal latency overhead (<100 µs) and delivers highly consistent performance, making it ideal for high-frequency and latency-sensitive trading strategies. However, this model is costly and complex to operate. Clients must invest in significant infrastructure to maintain a physical connection to the NY5 data center, coordinate network provisioning with Equinix, and manage detailed Layer 2/3 configurations such as BGP peering, IP addressing, and NAT policies.

The full process — from ordering the cross-connect to completing production testing — can take several weeks or even months, making it a significant barrier for Gemini to onboard new clients.

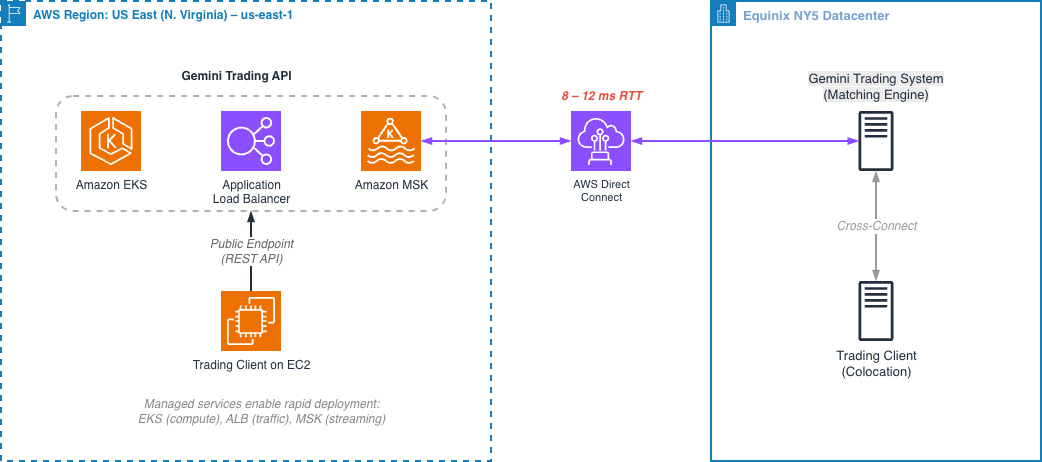

Option 2: Cloud-native access via AWS US East (Northern Virginia) Region

As crypto traders increasingly migrate their trading infrastructure to the cloud, Gemini has expanded its footprint into the AWS US East (Northern Virginia) Region (us-east-1) to provide an easier and more flexible way for clients to connect to Gemini through AWS.

In this model, the Gemini Trading API runs within AWS us-east-1 (Northern Virginia), backed by a dedicated AWS Direct Connect link to Gemini’s NY5 data center in the New York metropolitan area, where its core matching engine and trading systems operate. To extend trading capabilities into the cloud, Gemini has built a messaging backbone based on Amazon Managed Streaming for Apache Kafka (Amazon MSK) that connects NY5 and the AWS Region. This infrastructure streams market data, orders, and execution reports between the two environments, creating a hybrid architecture that bridges Gemini’s physical trading core with its cloud-based API surface.

In this setup, clients can use their existing AWS infrastructure to connect to Gemini’s Trading API through the public endpoint. This setup is significantly easier to establish, typically taking a few hours rather than weeks.

Providing the Trading API through the AWS network allows Gemini to take full advantage of managed cloud services to accelerate deployment and reliability:

- Amazon Elastic Kubernetes Service (Amazon EKS) enables fast and consistent deployment of microservices at scale.

- Application Load Balancer (ALB) provides built-in traffic distribution, DDoS protection, and SSL termination with minimal operational overhead.

- Amazon Managed Streaming for Apache Kafka (Amazon MSK) serves as the backbone for high-throughput, fault-tolerant market data and order streaming between AWS and NY5.

This new architecture gives Gemini the ability to iterate faster, scale elastically, and extend access to clients with a modern tech stack. However, this connectivity option did not meet the network latency requirement needed for the workload. This is mostly driven by physical distance between Northern Virginia where the AWS Region is located and New York where the Gemini Trading System is running, but it is also related to other networking overhead on the cloud infrastructure.

- The AWS Region us-east-1 (Northern Virginia) is geographically distant from Gemini’s trading engine in NY5. Connected over AWS Direct Connect, the minimum round-trip latency falls in the 8-12 millisecond range.

- Each managed service layer (AWS Network Firewall, AWS ALB routing, Amazon VPC networking) introduces additional micro-latencies and network hops; although typically negligible for standard web workloads, these overheads become significant in the context of high-frequency trading where microseconds matter.
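To see why distance alone sets a floor on latency, a rough lower bound can be computed from the propagation speed of light in fiber. The sketch below is illustrative only: the path lengths (500 km for a Northern Virginia to New York metro route, 50 km for a metro-area route) and the velocity factor of two-thirds are assumptions, not figures from Gemini or AWS, and real paths add routing and equipment delay on top.

```python
SPEED_OF_LIGHT_KM_PER_S = 299_792.458  # speed of light in vacuum


def min_fiber_rtt_ms(path_km: float, velocity_factor: float = 2 / 3) -> float:
    """Lower bound on round-trip time over a fiber path.

    Only propagation delay is counted; switches, routers, and
    software add to this figure in practice.
    """
    one_way_s = path_km / (SPEED_OF_LIGHT_KM_PER_S * velocity_factor)
    return 2 * one_way_s * 1000


if __name__ == "__main__":
    # Both path lengths are assumed values chosen for illustration.
    assumed_paths_km = {
        "N. Virginia to NY metro (assumed 500 km)": 500.0,
        "within NY metro (assumed 50 km)": 50.0,
    }
    for label, km in assumed_paths_km.items():
        print(f"{label}: at least {min_fiber_rtt_ms(km):.2f} ms RTT")
```

Under these assumptions, propagation accounts for several milliseconds on the long-haul path but well under a millisecond inside the metro area, which is consistent with the motivation for moving the API closer to NY5.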

For Gemini’s customers, this connectivity option is favorable as it overcomes the infrastructure hurdles presented in option 1, but for the most latency-sensitive trading strategies, further reducing round-trip time remained a priority. This led Gemini to explore AWS Local Zones to combine cloud operational benefits with near-colocation proximity: retaining the benefits of AWS infrastructure while reducing round-trip latency to near-data center levels.

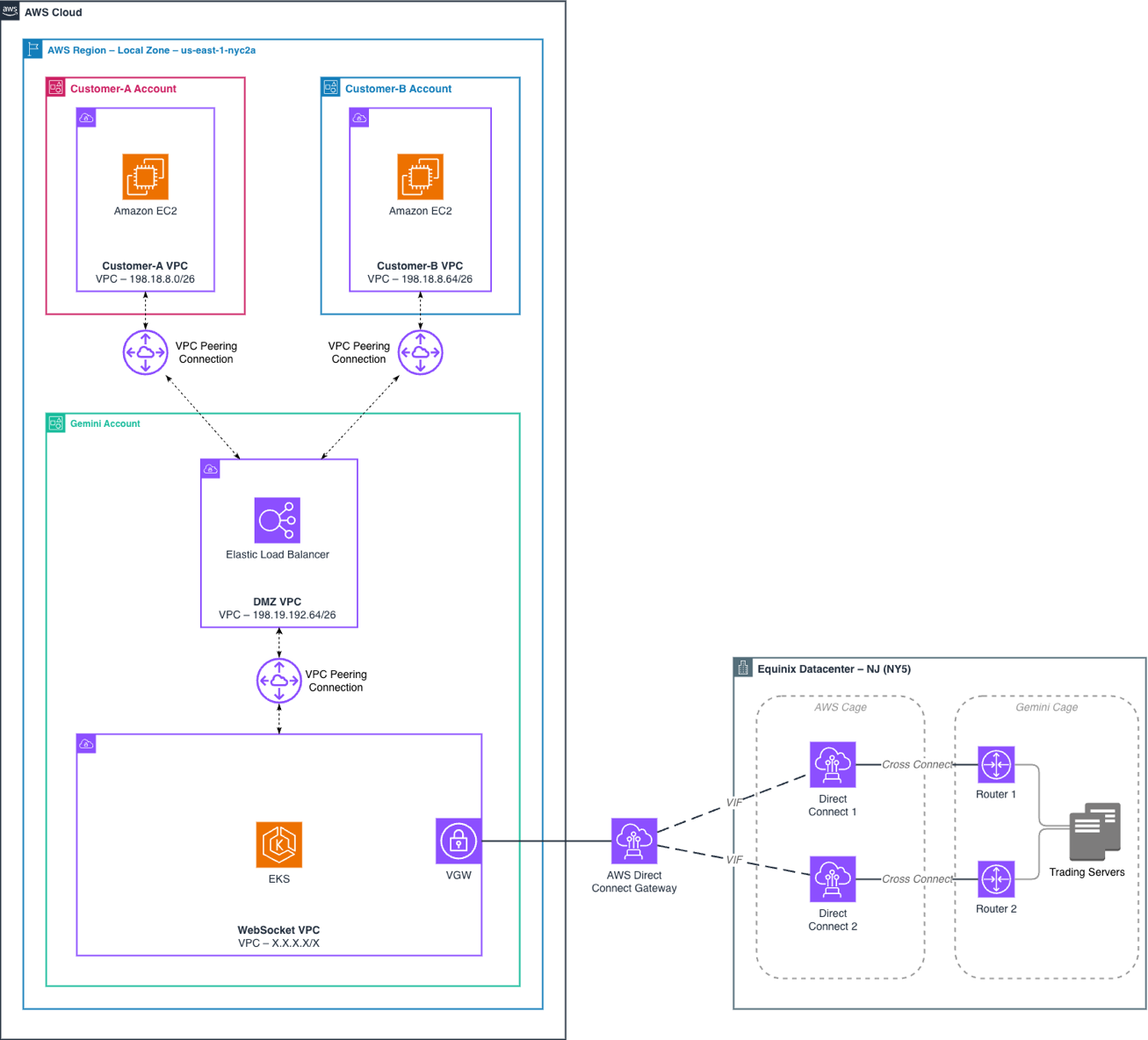

Option 3: AWS Local Zone + VPC Peering

The NYC-2A Local Zone brings compute, storage, and networking resources physically into the New York metropolitan area, significantly reducing the distance between Gemini’s core trading systems in Equinix NY5 and AWS infrastructure. As a result, Gemini can now maintain the ease and scalability of cloud deployment while achieving sub-millisecond connectivity to its NY5 data center.

Gemini’s application design, requirements and testing show round-trip time (RTT) latency of under 1 millisecond between AWS Local Zone NYC-2A and NY5, a significant improvement over the 8-12ms RTT to the closest AWS Region.

| Mode | Relative Proximity | Raw Network RTT | Messaging Infrastructure Overhead | Software Stack Overhead | Integration & Setup Lead Time | Estimated End-to-End Latency (Min) |
|---|---|---|---|---|---|---|
| Physical Cross-Connect (NY5) | < 100 m (same facility) | < 0.05 ms | Multicast network infrastructure within NY5 (negligible) | FIX protocol (~100 µs) | Multiple months | ~1 ms |
| AWS us-east-1 (N. Virginia) | Hundreds of miles | 8–12 ms | Amazon MSK–based message bus (+3–5 ms) | REST API over Multi-AZ ALB + AWS Network Firewall (+1–3 ms) | Hours (cloud-based) | ~15 ms |
| AWS Local Zone (NYC-2A) | NYC metro area | < 1 ms | In-memory message bus (< 0.1 ms) | WebSocket over direct VPC Peering (+0.1–0.3 ms) | Hours (cloud-based) | ~1.5–2 ms |

As the table illustrates, the Local Zone connectivity option delivers end-to-end latency approaching that of a physical cross-connect (approximately 1.5–2ms versus ~1ms) while retaining the provisioning speed and operational simplicity of a cloud-based deployment.
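The overhead columns in the table can be added up as a quick sanity check. The sketch below is a minimal illustration: the class and field names are hypothetical, and treating each "less than" value as a range starting at zero is an assumption. The sums cover only the three overhead columns, so they are not expected to match the table's end-to-end estimates exactly.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LatencyBudget:
    """Per-option overhead ranges in milliseconds, as (low, high) pairs."""
    name: str
    network_rtt_ms: tuple[float, float]
    messaging_ms: tuple[float, float]
    software_ms: tuple[float, float]

    def total_ms(self) -> tuple[float, float]:
        """Sum the low and high ends of the three overhead ranges."""
        parts = (self.network_rtt_ms, self.messaging_ms, self.software_ms)
        return (sum(p[0] for p in parts), sum(p[1] for p in parts))


# Values taken from the table; "< x" entries are modeled as (0, x).
REGION = LatencyBudget("AWS us-east-1", (8.0, 12.0), (3.0, 5.0), (1.0, 3.0))
LOCAL_ZONE = LatencyBudget("AWS Local Zone NYC-2A", (0.0, 1.0), (0.0, 0.1), (0.1, 0.3))

if __name__ == "__main__":
    for option in (REGION, LOCAL_ZONE):
        low, high = option.total_ms()
        print(f"{option.name}: {low:.1f} to {high:.1f} ms of overhead")
```

The Region option's overheads sum to a range that brackets the table's ~15 ms estimate, while the Local Zone option's overheads stay below 1.5 ms.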

Extending Gemini’s trading system network to the Local Zone

AWS Local Zone NYC-2A is located in the NYC metropolitan area, physically close to the NY5 data center. To extend its network to the Local Zone, Gemini established a new Direct Connect path over a third-party carrier connection that links Equinix NY5 directly to the Local Zone facility, providing a reliable, dedicated, and high-speed network connection between the trading engine and the Local Zone.

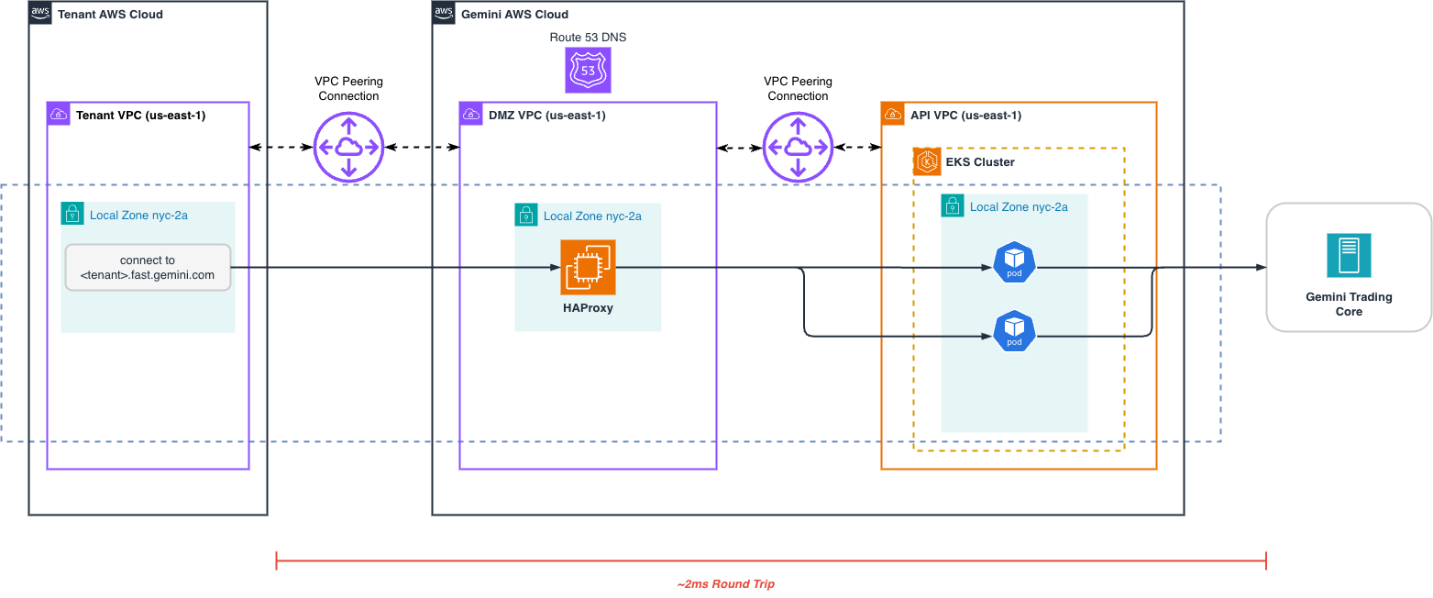

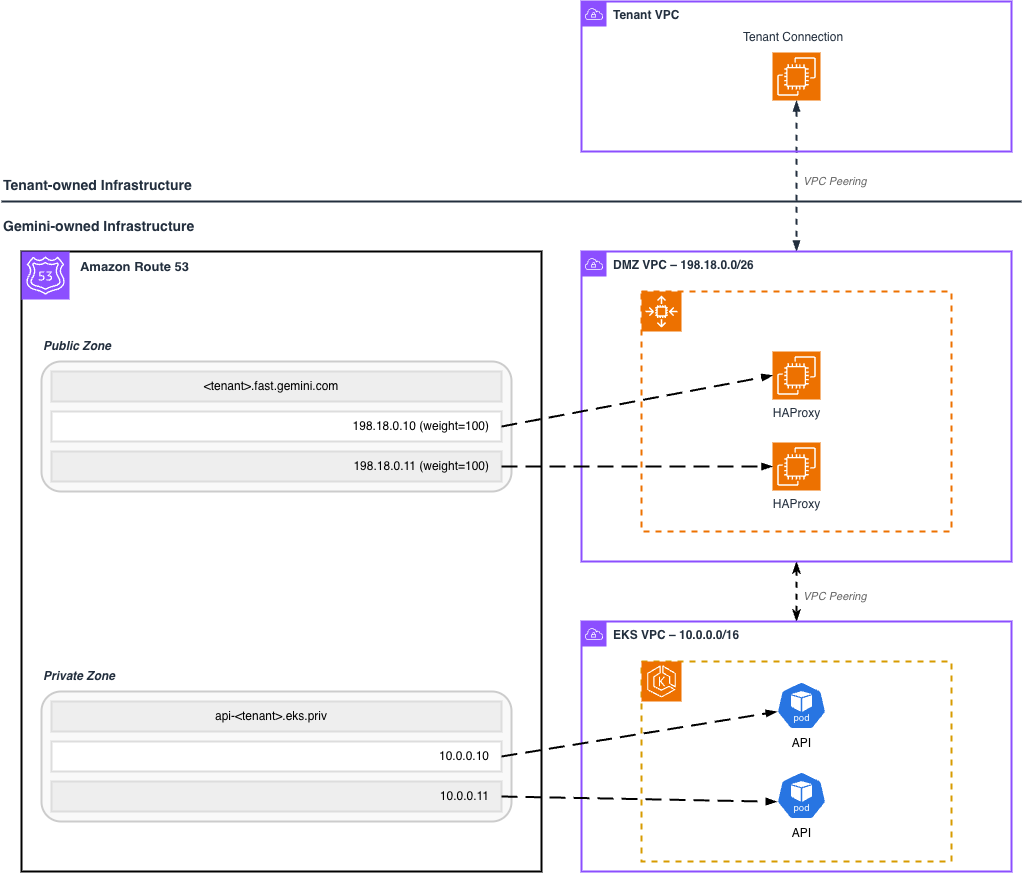

Connecting customers from the Local Zone with VPC Peering

To achieve a low-latency network connection on AWS, Gemini requires each client to establish VPC Peering with one of Gemini’s Demilitarized Zone (DMZ) VPCs, which act as isolated ingress zones for trading traffic. Within each DMZ VPC, a fleet of HAProxy EC2 instances terminates client sessions and forwards requests to Gemini’s WebSocket API servers, which also run in the NYC-2A Local Zone. All communication stays on the AWS private backbone network, avoiding the public internet and firewalls, which gives this option the lowest and most consistent latency of Gemini’s cloud-based connectivity options.

To scale horizontally, Gemini operates multiple DMZ VPCs within the Local Zone, distributing client peering connections across them based on capacity, latency, and AWS per-VPC peering limits. Each DMZ VPC maintains its own routing tables, CIDR allocations, and HAProxy fleet, enabling predictable performance and fault isolation.
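The placement of a new client onto a DMZ VPC can be pictured as a simple capacity-aware assignment. The sketch below is not Gemini's implementation: the class, function, and VPC IDs are hypothetical, the peering limit is a placeholder parameter, and only free capacity is considered, whereas the text also mentions latency as a factor.

```python
from dataclasses import dataclass, field


@dataclass
class DmzVpc:
    """A DMZ VPC with a cap on how many client peerings it may hold."""
    vpc_id: str
    peering_limit: int  # placeholder; the real value depends on account quotas
    peered_clients: list[str] = field(default_factory=list)

    @property
    def free_slots(self) -> int:
        return self.peering_limit - len(self.peered_clients)


def assign_client(client_id: str, vpcs: list[DmzVpc]) -> DmzVpc:
    """Place a client on the DMZ VPC with the most free peering slots."""
    candidates = [v for v in vpcs if v.free_slots > 0]
    if not candidates:
        raise RuntimeError("all DMZ VPCs are at their peering limit")
    chosen = max(candidates, key=lambda v: v.free_slots)
    chosen.peered_clients.append(client_id)
    return chosen


if __name__ == "__main__":
    fleet = [DmzVpc("vpc-dmz-1", peering_limit=2), DmzVpc("vpc-dmz-2", peering_limit=2)]
    for client in ("client-a", "client-b", "client-c"):
        print(client, "->", assign_client(client, fleet).vpc_id)
```

When every VPC is full, the function raises an error, which in a real system would be the signal to provision another DMZ VPC.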

The DMZ VPC hosts an EC2 Auto Scaling group running HAProxy instances in the us-east-1-nyc-2a Local Zone. Each instance automatically registers a weighted A record in a public Amazon Route 53 hosted zone (<tenant>.fast.gemini.com in Figure 5) so that tenant connections can discover it. An EC2 Auto Scaling termination lifecycle hook instructs the instance to deregister itself from Route 53 before shutdown.
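The register and deregister steps map naturally onto the Route 53 `ChangeResourceRecordSets` API. The sketch below shows one way an instance could build such a request. It is an assumption about the mechanism, not Gemini's code: the record name, instance ID, IP address, weight, TTL, and hosted zone ID are all hypothetical, and the `boto3` call is isolated in a separate function so the request builder has no dependencies.

```python
import json


def weighted_a_record_change(action: str, record_name: str, instance_id: str,
                             ip_address: str, weight: int = 10, ttl: int = 5) -> dict:
    """Build a Route 53 change batch for one weighted A record.

    action is "UPSERT" to register or "DELETE" to deregister. A DELETE
    must carry the same values as the record that currently exists.
    """
    return {
        "Changes": [{
            "Action": action,
            "ResourceRecordSet": {
                "Name": record_name,
                "Type": "A",
                "SetIdentifier": instance_id,  # distinguishes records sharing a name
                "Weight": weight,
                "TTL": ttl,
                "ResourceRecords": [{"Value": ip_address}],
            },
        }]
    }


def apply_change(hosted_zone_id: str, change_batch: dict) -> None:
    """Submit the change batch; requires boto3 and AWS credentials."""
    import boto3  # imported lazily so the builder above runs without it
    boto3.client("route53").change_resource_record_sets(
        HostedZoneId=hosted_zone_id, ChangeBatch=change_batch)


if __name__ == "__main__":
    # Hypothetical values for illustration only.
    batch = weighted_a_record_change(
        "UPSERT", "tenant-a.fast.example.com.", "i-0123456789abcdef0", "10.0.1.25")
    print(json.dumps(batch, indent=2))
```

A termination lifecycle hook would call the same builder with `"DELETE"` before the instance shuts down. A short TTL, as assumed here, limits how long clients keep resolving to an instance that has gone away.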

Implementing advanced load balancing using HAProxy in the Local Zone

To optimize for the lowest possible latency when balancing client traffic across multiple WebSocket API server pods running in Amazon EKS on the Local Zone, Gemini implemented a custom load-balancing layer using HAProxy and Route 53.

The IP address of each WebSocket API server pod is registered in a private Route 53 zone so that the HAProxy instances can discover the pods and balance traffic across them. The private Route 53 zone is associated with both the Amazon EKS VPC and the DMZ VPC where the HAProxy instances reside. Pod IP registration is accomplished using a combination of a headless Kubernetes service and the external-dns controller.

With this architecture, each WebSocket API pod automatically registers with and deregisters from the “api-<tenant>.eks.priv” record in Route 53. HAProxy is configured to use the Amazon VPC Route 53 resolver endpoint to dynamically resolve pod IPs as they come and go. Connections from HAProxy to the WebSocket API pod flow over a VPC Peering connection.
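As a rough illustration of the headless service and external-dns combination, the sketch below generates a Kubernetes Service manifest as JSON. Setting `clusterIP` to `None` makes the service headless, and the `external-dns.alpha.kubernetes.io/hostname` annotation asks external-dns to publish a DNS name for it. The service name, namespace, labels, and port are hypothetical, and the exact configuration Gemini uses is not described in this post.

```python
import json


def headless_service_manifest(tenant: str, namespace: str = "trading",
                              port: int = 8443) -> dict:
    """Return a headless Service manifest annotated for external-dns."""
    hostname = f"api-{tenant}.eks.priv"  # record name pattern from the text
    return {
        "apiVersion": "v1",
        "kind": "Service",
        "metadata": {
            "name": f"ws-api-{tenant}",  # hypothetical service name
            "namespace": namespace,      # hypothetical namespace
            "annotations": {
                "external-dns.alpha.kubernetes.io/hostname": hostname,
            },
        },
        "spec": {
            "clusterIP": "None",  # headless: DNS resolves to pod IPs directly
            "selector": {"app": "ws-api", "tenant": tenant},  # hypothetical labels
            "ports": [{"name": "wss", "port": port, "targetPort": port}],
        },
    }


if __name__ == "__main__":
    print(json.dumps(headless_service_manifest("tenant-a"), indent=2))
```

Because `kubectl apply -f` accepts JSON as well as YAML, the printed manifest could be applied directly to a test cluster that runs external-dns against a private hosted zone.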

Gemini’s HAProxy instances provide an abstraction layer similar to AWS Network Load Balancer, so that WebSocket API pods can dynamically register and deregister during scaling events. To quantify the added latency, Gemini used sockperf to measure connections between the client and an API pod with and without HAProxy in the path:

| | p99.9 | p99 | p90 | p50 |
|---|---|---|---|---|
| Without HAProxy | 275.04 µs | 61.72 µs | 58.83 µs | 38.31 µs |
| With HAProxy | 267.66 µs | 86.21 µs | 80.61 µs | 61.22 µs |

Gemini determined that the service discovery and routing benefits HAProxy provides outweigh the additional latency of roughly 20 to 25 microseconds at p50 through p99 (about 1% of the ~2 ms end-to-end latency), because it does not significantly affect the performance goal of <3ms overall latency.
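The overhead can be read directly from the sockperf table by subtracting the two rows. The sketch below does that arithmetic and expresses each difference as a share of a 2 ms end-to-end budget. The 2 ms figure is an assumption taken from the sub-2ms target mentioned earlier, and the function names are illustrative.

```python
PERCENTILES = ("p50", "p90", "p99", "p99.9")

# Latencies in microseconds, copied from the sockperf table.
WITHOUT_HAPROXY_US = {"p50": 38.31, "p90": 58.83, "p99": 61.72, "p99.9": 275.04}
WITH_HAPROXY_US = {"p50": 61.22, "p90": 80.61, "p99": 86.21, "p99.9": 267.66}


def haproxy_overhead_us() -> dict[str, float]:
    """Latency added by HAProxy at each percentile, in microseconds."""
    return {p: round(WITH_HAPROXY_US[p] - WITHOUT_HAPROXY_US[p], 2)
            for p in PERCENTILES}


def share_of_budget_pct(overhead_us: float, budget_ms: float = 2.0) -> float:
    """Overhead as a percentage of an assumed end-to-end latency budget."""
    return 100 * overhead_us / (budget_ms * 1000)


if __name__ == "__main__":
    for percentile, delta in haproxy_overhead_us().items():
        print(f"{percentile}: {delta:+.2f} us "
              f"({share_of_budget_pct(delta):+.2f}% of a 2 ms budget)")
```

The difference at p99.9 comes out slightly negative, which suggests that the extreme tail in these runs is dominated by something other than the proxy hop.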

Conclusion

By deploying its trading infrastructure on AWS Local Zone NYC-2A, Gemini reduced cloud-based round-trip latency from 8-12ms to approximately 2ms, a 4–6x improvement that brings cloud-native connectivity within striking distance of physical colocation. Trading firms can now access Gemini’s matching engine with sub-2ms RTT through a standard VPC Peering connection, provisioned in minutes rather than months, while benefiting from the scalability and operational excellence delivered by AWS.

Achieving this required solving several infrastructure challenges specific to hybrid edge deployments. Gemini introduced a DMZ VPC pattern to facilitate scalable VPC Peering across multiple client connections, and implemented a custom HAProxy load balancing layer with Amazon Route 53 for dynamic service discovery — providing the traffic distribution capabilities needed to serve WebSocket API pods running on Amazon EKS in the Local Zone.

Gemini continues to invest in its cloud-native trading infrastructure on AWS, with plans to further optimize latency, expand capacity, and extend this architecture to serve a broader set of trading clients.

To learn more and get started with AWS Local Zones, see the Local Zones documentation.