AWS for M&E Blog

How AWS Built a Live AI-Powered Vertical Video Capability for Fox Sports with AWS Elemental Inference

For media companies, delivering highlights to social platforms quickly is a competitive advantage that directly drives viewership and monetization. With hundreds of live events across multiple leagues, many organizations lack the resources to manually clip and reformat key moments for every game. The key moments still happen, but without a scalable way to capture and distribute them, the opportunity to engage fans and grow audiences goes unrealized. AWS Elemental Inference addresses this challenge, enabling media companies to capture the excitement of the moment and share it with fans as it happens.

Fox Sports worked with AWS to use AWS Elemental Inference, a fully managed AI service that automatically detects key moments and transforms live and on-demand broadcasts into vertical video content optimized for any platform. In the prototype, the new service works alongside AWS Elemental MediaLive, AWS Elemental MediaPackage, and AWS Elemental MediaConvert to detect key moments in live sports broadcasts, extract video clips, reformat them for 9:16 vertical social platforms, and surface them in a review portal, all within seconds of the moment occurring on-air. This post describes the prototype solution AWS built, including the architecture, key design decisions, and the business logic behind each component.

Solution Overview

For a digital content creator working in live sports, the traditional workflow involves monitoring broadcasts across multiple screens, manually identifying key moments, clipping them, reformatting them for vertical social platforms, and distributing them to various channels. This solution collapses that workflow into a single, automated experience. Throughout the broadcast, video clips of key moments appear automatically in a web portal: detected, extracted, and verticalized within 20–30 seconds of the moment occurring on-air. From there, editors can review, search, tag, edit, and distribute finished content to social channels in a single, unified application.

The following three main components enable this experience:

- A user-facing web application provides the interface for content review, editing, and distribution.

- An automated clip harvesting pipeline handles the detection-to-clip lifecycle.

- A video editing and download pipeline supports post-processing workflows such as trimming, stitching, and MP4 export.

The solution combines AWS Elemental services for media-specific processing with a serverless backend for orchestration and storage. AWS Elemental MediaLive ingests and encodes the live broadcast. AWS Elemental MediaPackage V2 prepares the live stream for clip extraction by segmenting the content and maintaining a rolling window of recent footage that the system can harvest from on demand. AWS Elemental Inference analyzes the video in real time, detecting key moments and generating vertically cropped output for social platforms. AWS Elemental MediaConvert handles video transcoding for editing and download workflows. The serverless layer (AWS Lambda, Amazon EventBridge, Amazon Simple Queue Service (SQS), Amazon DynamoDB, and Amazon Simple Storage Service (S3)) ties everything together with event-driven processing and durable storage.
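To make the "rolling window" idea concrete, here is a minimal conceptual sketch in Python. It is not how AWS Elemental MediaPackage V2 is implemented or called. The `RollingWindow` class, its methods, and the segment names are hypothetical and only illustrate how a bounded buffer of recent segments can be queried by time range.

```python
from collections import deque


class RollingWindow:
    """Hypothetical bounded buffer of the most recent stream segments."""

    def __init__(self, max_segments):
        # deque(maxlen=...) silently drops the oldest entry when full.
        self._segments = deque(maxlen=max_segments)

    def append(self, start, duration, uri):
        # Store each segment as (start_seconds, end_seconds, uri).
        self._segments.append((start, start + duration, uri))

    def harvest(self, start, end):
        # Return every retained segment that overlaps the range [start, end).
        return [uri for s, e, uri in self._segments if s < end and e > start]


window = RollingWindow(max_segments=5)
for i in range(8):
    window.append(start=i * 2.0, duration=2.0, uri=f"seg{i}")

recent = window.harvest(7.0, 11.0)   # overlaps seg3, seg4, seg5
expired = window.harvest(0.0, 4.0)   # those segments have left the window
```

The second call returns nothing because the requested footage has already aged out of the window, which is why harvesting has to happen soon after the moment is detected.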

The Web Portal Experience

The web portal provides digital content creators a single workspace for managing the full lifecycle of live sports highlights. From the home screen, operators create live events and monitor incoming clips as they appear in near-real time. Clips come with AI-generated tags and descriptions, making it easy to identify and categorize moments. Editors can filter clips by event, search across metadata, and add custom tags – making it fast and intuitive to find the right clips to share across social platforms.

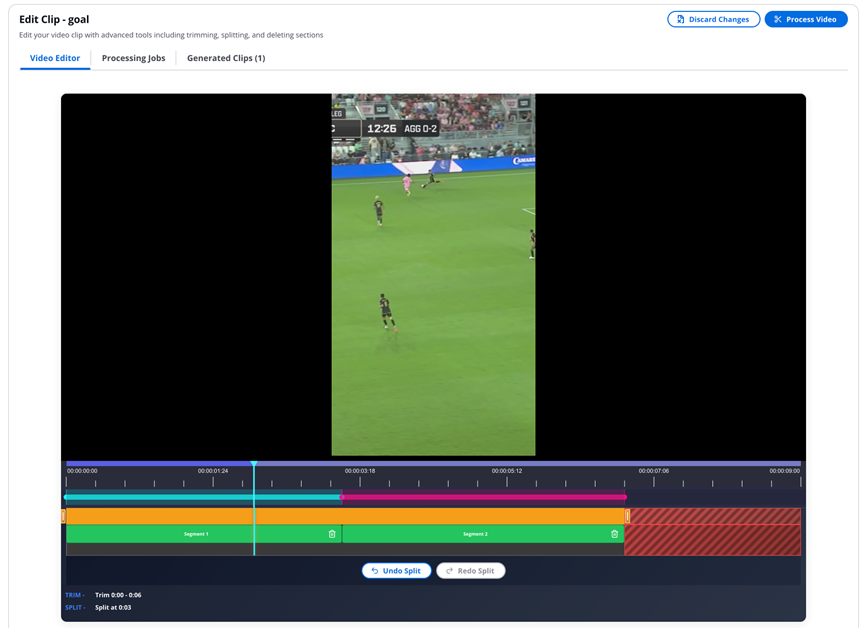

Once a clip is selected, a modal opens with instant time-shifted playback directly from the source stream. A built-in video editor provides a visual timeline that displays individual HLS segments, allowing editors to perform trim, split, delete, and merge operations directly in the browser. Each edit produces a new versioned asset, so the original clip is always preserved. Editors can iterate on multiple versions without risk of overwriting source material.

Example of video editor view with time-shifted playback – Courtesy of Fox Sports

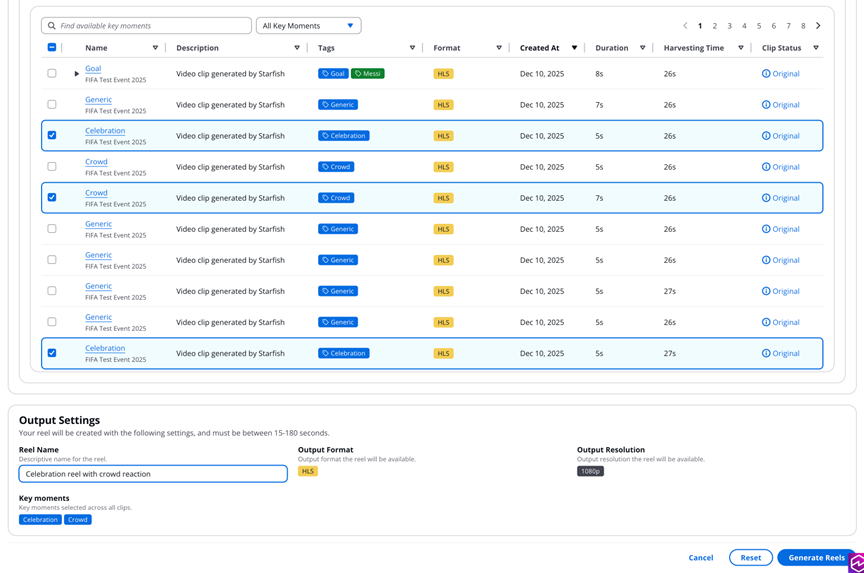

The reel builder allows operators to select clips from across different events and time periods, arrange them in the desired order, and trigger highlight reel generation. Multiple reels can be processed simultaneously, with real-time status tracking in the interface. When content is ready for distribution, editors can batch-trigger MP4 downloads for any combination of clips and reels and share the resulting download links with colleagues.

Each clip and reel also includes a feedback form where editors can submit ratings and comments. This feedback is stored against the asset in the backend, providing a structured way for the team to capture editorial preferences and iterate on output quality over time.

Example of how the reel builder works

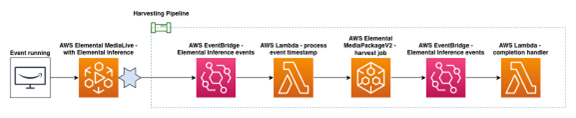

Clip Harvesting Pipeline

When a live broadcast is running, AWS Elemental Inference continuously analyzes the video stream and detects key moments within single-digit seconds. Each detection emits an event to Amazon EventBridge. This event contains PTS (Presentation Timestamp) values, descriptive tags such as “goal” or “celebration,” and an AI-generated description of the moment. The event triggers the harvest pipeline, which extracts the corresponding video segment from the live stream through AWS Elemental MediaPackage V2 and stores it in Amazon S3.

The following is an example of an event emitted by AWS Elemental Inference:

Once a harvest job is completed, an AWS Lambda function validates that the extracted video contains actual content. Empty or failed harvests are cleaned up automatically. For successful harvests, the clip metadata is updated in DynamoDB with the final status and the end-to-end harvesting time.
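The validation step can be sketched as follows. This is a minimal illustration under stated assumptions, not the prototype's Lambda code. The `ClipRecord` fields, the status strings, and the rule that "actual content" means at least one non-empty segment are all hypothetical. The end-to-end harvesting time is assumed here to run from detection to harvest completion.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import List, Optional


@dataclass
class ClipRecord:
    """Hypothetical clip metadata record (stand-in for a DynamoDB item)."""
    clip_id: str
    detected_at: datetime
    status: str = "HARVESTING"
    harvest_seconds: Optional[float] = None


def finalize_harvest(clip: ClipRecord, segment_sizes: List[int],
                     completed_at: datetime) -> Optional[ClipRecord]:
    """Return the updated record, or None if the harvest should be cleaned up."""
    # Assumption: a harvest with no segments, or only zero-byte segments,
    # contains no actual content.
    if not segment_sizes or sum(segment_sizes) == 0:
        return None
    clip.status = "READY"
    clip.harvest_seconds = (completed_at - clip.detected_at).total_seconds()
    return clip


t0 = datetime(2025, 1, 1, 12, 0, 0, tzinfo=timezone.utc)
ok = finalize_harvest(ClipRecord("clip-1", t0), [1024, 2048],
                      t0 + timedelta(seconds=22))
empty = finalize_harvest(ClipRecord("clip-2", t0), [],
                         t0 + timedelta(seconds=22))
```

Returning `None` for an empty harvest leaves the cleanup decision to the caller, which would delete the stored objects and the metadata record.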

Service diagram showing the harvesting pipeline

As seen above, Elemental Inference events carry rich metadata, but they do not inherently identify which live event or game the highlight belongs to. The solution addresses this with an “active event” pattern. Operators activate a single event at a time from the web portal, and the harvest pipeline queries DynamoDB for the currently active event each time a new highlight arrives. The incoming clip is then stamped with that event’s ID and name. This design is deliberately simple, matching the real-world workflow where an operator is always focused on a specific broadcast.

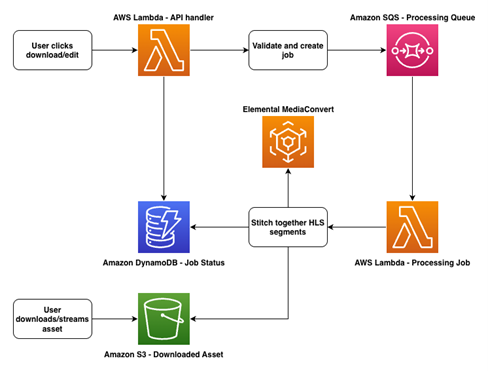
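The "active event" pattern described above can be sketched in a few lines. This is a minimal illustration, assuming an in-memory `EventStore` in place of the DynamoDB table. The class, the `stamp_clip` helper, and all field names (`event_id`, `event_name`, `tags`) are hypothetical and are not taken from the prototype or from the AWS Elemental Inference event schema.

```python
from typing import Dict, Optional


class EventStore:
    """In-memory stand-in for the table of live events."""

    def __init__(self) -> None:
        self._events: Dict[str, dict] = {}
        self._active_id: Optional[str] = None

    def create(self, event_id: str, name: str) -> None:
        self._events[event_id] = {"event_id": event_id, "name": name}

    def activate(self, event_id: str) -> None:
        if event_id not in self._events:
            raise KeyError(event_id)
        # Only one event is active at a time; activating replaces the previous one.
        self._active_id = event_id

    def get_active(self) -> Optional[dict]:
        if self._active_id is None:
            return None
        return self._events[self._active_id]


def stamp_clip(highlight: dict, store: EventStore) -> dict:
    """Attach the currently active event's ID and name to an incoming highlight."""
    active = store.get_active()
    if active is None:
        raise RuntimeError("no active event; cannot attribute highlight")
    return {**highlight, "event_id": active["event_id"], "event_name": active["name"]}


store = EventStore()
store.create("evt-1", "Game A")
store.create("evt-2", "Game B")
store.activate("evt-2")
clip = stamp_clip({"tags": ["goal"]}, store)
```

The trade-off of this design is that attribution depends on the operator activating the right event before highlights arrive. The sketch raises an error when no event is active rather than guessing.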

Video Editing & Download Pipeline

Both the video editing and MP4 download workflows follow the same asynchronous processing pattern but use separate queues and Lambda functions to avoid resource contention. In each case, a user action in the portal triggers an API call that places a job in an SQS queue. A processing Lambda picks up the job, performs the video operation, stores the output in S3, and updates the job record in DynamoDB. The user monitors progress from the portal and can stream or download the result once processing is complete.
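The shared asynchronous pattern can be sketched as follows, using Python's standard `queue` module in place of SQS and a dictionary in place of the DynamoDB job table. The function names, status strings, and payload shape are hypothetical, and the `operation` callable stands in for whatever video processing the real Lambda performs.

```python
import queue
import uuid
from typing import Callable, Dict

jobs: Dict[str, dict] = {}        # stand-in for the job table
edit_queue = queue.Queue()        # separate queues keep edit and
download_queue = queue.Queue()    # download work from competing


def submit_job(q: queue.Queue, job_type: str, payload: dict) -> str:
    """API side: record the job, enqueue it, and return its ID immediately."""
    job_id = str(uuid.uuid4())
    jobs[job_id] = {"type": job_type, "status": "QUEUED", "output": None}
    q.put({"job_id": job_id, "payload": payload})
    return job_id


def process_next(q: queue.Queue, operation: Callable[[dict], str]) -> str:
    """Worker side: take one job, run the operation, and record the outcome."""
    message = q.get_nowait()
    job = jobs[message["job_id"]]
    job["status"] = "PROCESSING"
    try:
        job["output"] = operation(message["payload"])
        job["status"] = "COMPLETE"
    except Exception as exc:
        job["status"] = "FAILED"
        job["error"] = str(exc)
    return message["job_id"]


job_id = submit_job(edit_queue, "edit", {"clip_id": "clip-1"})
status_before = jobs[job_id]["status"]
process_next(edit_queue, lambda p: f"outputs/{p['clip_id']}/v2.m3u8")
status_after = jobs[job_id]["status"]
```

Because the portal only reads the job record, it can poll for status without holding a connection open while the video operation runs.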

Architecture drawing of the video editing and download pipeline process

The clip editor supports trim, split, delete, and merge operations, which editors apply through the visual timeline in the web portal. When an editor saves their changes, the operations are submitted to the processing pipeline. The processor retrieves the source HLS segments from S3, applies the requested operations using AWS Elemental MediaConvert, and uploads the result as a new versioned asset.
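The four edit operations and the versioning behavior can be illustrated with a small sketch. It assumes edits happen at segment boundaries, which the post does not confirm, and it does not call AWS Elemental MediaConvert. The `Segment` and `VersionedAsset` types and the function names are hypothetical.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class Segment:
    uri: str
    duration: float  # seconds


Timeline = Tuple[Segment, ...]  # immutable, so edits never alter the source


def trim(t: Timeline, start: int, end: int) -> Timeline:
    """Keep only segments in the index range [start, end)."""
    return t[start:end]


def delete(t: Timeline, start: int, end: int) -> Timeline:
    """Remove segments in the index range [start, end)."""
    return t[:start] + t[end:]


def split(t: Timeline, index: int) -> Tuple[Timeline, Timeline]:
    """Cut the timeline into two parts at a segment boundary."""
    return t[:index], t[index:]


def merge(a: Timeline, b: Timeline) -> Timeline:
    """Join two timelines end to end."""
    return a + b


class VersionedAsset:
    """Each save appends a new version; version 0 is always the original."""

    def __init__(self, original: Timeline) -> None:
        self._versions: List[Timeline] = [original]

    def save(self, timeline: Timeline) -> int:
        self._versions.append(timeline)
        return len(self._versions) - 1

    def version(self, n: int) -> Timeline:
        return self._versions[n]


source = tuple(Segment(f"seg{i}.ts", 2.0) for i in range(5))
asset = VersionedAsset(source)
head, tail = split(source, 2)
edited = merge(head, delete(tail, 0, 1))   # drop seg2, keep the rest
v1 = asset.save(edited)
```

Using immutable tuples means every operation returns a new timeline, which matches the guarantee that the original clip is never overwritten.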

For content distribution, editors can trigger MP4 downloads for multiple clips and reels simultaneously. The download pipeline converts HLS content to MP4 format using Elemental MediaConvert and generates a pre-signed S3 URL for retrieval. Once a download job has been completed, the resulting URL can be shared and reused by other team members without re-triggering the conversion. This avoids redundant processing and makes it straightforward to distribute finished content across the editorial team.
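The reuse behavior amounts to caching the result of a completed download job. The sketch below is a minimal illustration, not the prototype's code: `DownloadCache` is hypothetical, and the `convert` callable stands in for the HLS-to-MP4 conversion plus URL generation.

```python
from typing import Callable, Dict


class DownloadCache:
    """Run the conversion once per asset and reuse the resulting URL."""

    def __init__(self, convert: Callable[[str], str]) -> None:
        self._convert = convert           # hypothetical: asset_id -> download URL
        self._urls: Dict[str, str] = {}
        self.conversions = 0              # how many conversions actually ran

    def get_url(self, asset_id: str) -> str:
        if asset_id not in self._urls:
            self._urls[asset_id] = self._convert(asset_id)
            self.conversions += 1
        return self._urls[asset_id]


cache = DownloadCache(lambda asset_id: f"https://example.invalid/{asset_id}.mp4")
first = cache.get_url("clip-1")
second = cache.get_url("clip-1")   # served from the cache, no new conversion
```

Pre-signed S3 URLs expire, so a fuller implementation would likely cache the location of the converted file and issue a fresh URL on request. The post does not say how the prototype handles expiry.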

Results

With this solution in place, editors no longer need to switch between multiple tools or coordinate handoffs across teams. Instead, they work within a single portal where they can monitor incoming clips with clear status indicators, review AI-generated vertical crops alongside the source feed, and move content through an editorial workflow — all while the broadcast is still live.

The prototype solution built for Fox Sports demonstrates how AWS Elemental services, combined with a serverless event-driven architecture, can reduce the time from a live moment to social-ready content to seconds. For media companies looking to scale their digital coverage across more events and leagues, this approach unlocks content that was previously too costly or resource-intensive to produce.

“AWS Elemental Inference exemplifies the power of the partnership between Fox Sports and Amazon Web Services to rapidly turn innovation into real-world impact. What began as a hackathon concept — addressing the reality that nearly 90% of Fox Sports Digital content is consumed vertically — has evolved into a production-ready, machine-learning–driven solution built for live sports at scale. Powered by AWS Elemental Inference, the solution integrates seamlessly into our existing live production and distribution workflows, enabling the real-time creation and delivery of vertical content to fans across multiple major sports leagues.”

— Ricardo Perez-Selsky, Sr. Director, Digital Production Operations, Fox Sports

If you are interested in building a similar workflow, we plan to publish a reference implementation soon.