Migration & Modernization

An Engine of Efficiency: BMW Group’s CI/CD Modernization Journey with AWS

This post is co-written with Hrvoje Lukavski from BMW Group, João Gonçalves and Jonas França from CTW.

BMW Connected Company, a division within BMW Group, develops and operates premium digital services for BMW Group’s connected vehicle fleet of over 24.5 million vehicles worldwide. Critical TechWorks (CTW), established in September 2018, emerged from a partnership between BMW Group and Critical Group. CTW focuses exclusively on developing software for BMW Group’s future connected vehicle fleet and IT ecosystem, operating from offices in Braga, Lisbon, and Porto, Portugal.

In 2020, BMW Connected Company made a strategic decision to migrate its entire CI/CD infrastructure from on-premises to the AWS cloud with the goal of improving the availability, reliability, performance, and cost efficiency of its development toolchain.

Today, their Cloud Developer Platform (CDP), internally known as Orbit Spaceship, facilitates over 130,000 builds and deployments daily, supporting over 1,300 microservices across hundreds of AWS accounts spanning multiple regions worldwide. The platform provides 50 reusable building blocks for CI/CD pipelines to over 3,000 developers, enabling seamless integration, simplified maintenance, and flexibility to incorporate new features easily.

In this blog post, we describe how BMW evolved its CI/CD platform to scale efficiently and keep up with a rapid pace of development, driven by the growing scale and complexity of the ConnectedDrive ecosystem.

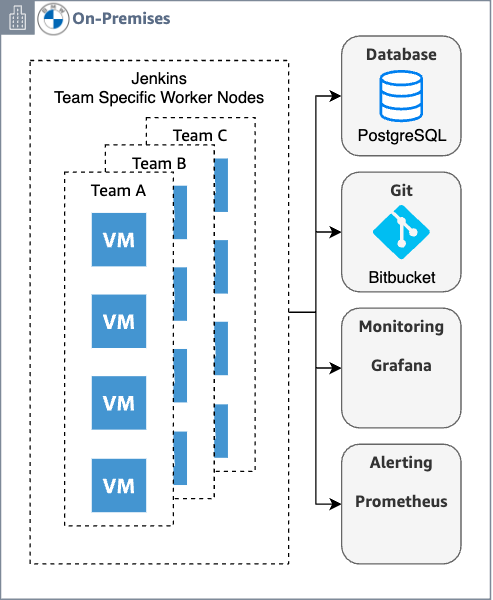

On-Premises Architecture

The initial CI/CD architecture operated from on-premises data centers, leveraging OpenShift for centralized application management. This system utilized a Jenkins CI/CD solution with an enterprise node handling administrative tasks and dedicated admin nodes for each BMW ConnectedDrive backend product, often causing inefficient node utilization. This architecture leveraged the following components:

- Compute: Shared worker nodes from a central pool (~10 always available) with one-click scaling for additional capacity.

- Monitoring: Prometheus for metrics collection and Grafana for system monitoring.

- Data: Oracle Database and PostgreSQL for persistence.

- Development Tools: On-premises Bitbucket, Confluence, and Jira supporting enterprise workflows.

Jenkins Operations Center provided centralized management across multiple teams and projects. Jenkins workers dynamically scaled within OpenStack projects, with teams managing their environments independently. To reach target environments securely, teams had to request firewall rules granting that access.

The system processed hundreds of builds and deployments daily through multiple custom admin jobs and pipelines tailored to developer requirements. Integration with Bitbucket, Confluence, and Jira remained critical for seamless development workflows and team collaboration.

Figure 1: Initial BMW Connected Company’s CI/CD on-premises Architecture

Architecture Evolution on AWS

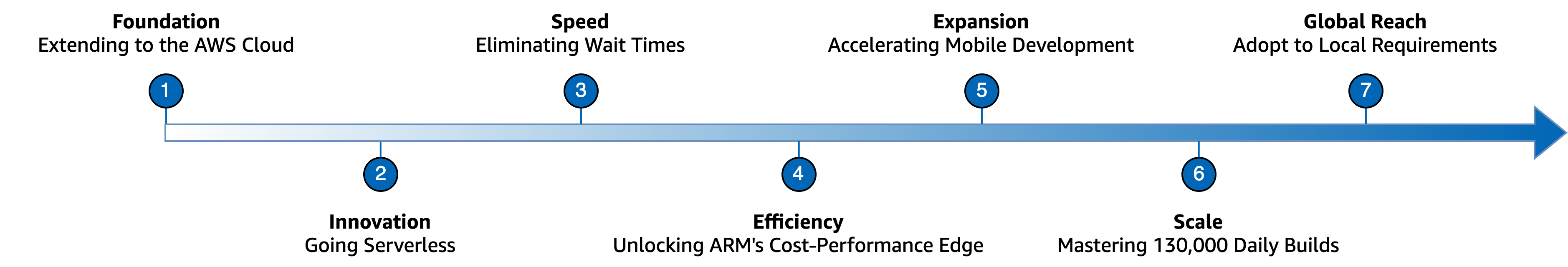

The migration of BMW Connected Company’s CI/CD pipeline to AWS followed a structured, phased approach that balanced technical innovation with operational stability. Each phase delivered measurable improvements in performance, cost efficiency, and developer experience while maintaining reliability standards, as illustrated in Figure 2.

Figure 2: BMW Connected Company’s CI/CD Evolution on AWS

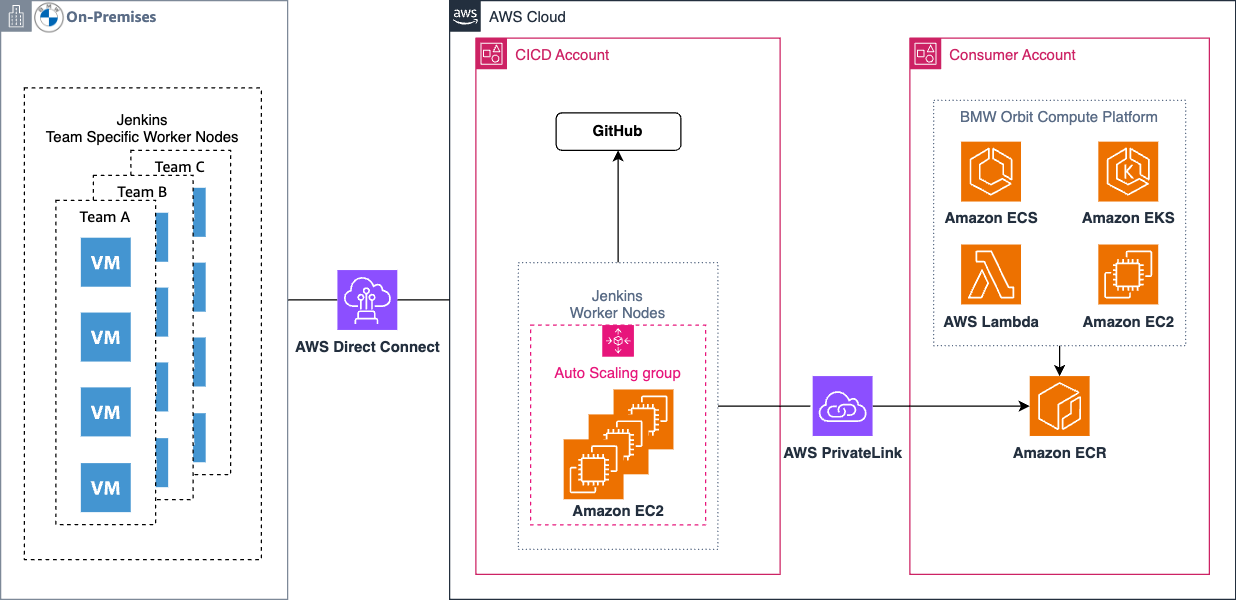

1 – Foundation: Extending to the AWS Cloud

The initial phase of the migration established the core infrastructure for cloud-based CI/CD operations and used AWS Direct Connect to provide secure, reliable connectivity to the on-premises data centers. Jenkins worker nodes ran on Amazon Elastic Compute Cloud (Amazon EC2) instances, integrated with HashiCorp Vault and Consul for secrets management. Additional enterprise services, including Nexus and SonarQube, provided artifact management and code quality analysis.

This architecture dynamically provisioned Jenkins worker nodes based on workload demand while maintaining seamless connectivity between on-premises and AWS Jenkins infrastructure. This initial solution supported approximately 3,000 build hours and several thousand builds per day.
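The post does not say how workload demand was measured. As a minimal sketch, assuming the pool is sized from the Jenkins build queue, the hypothetical `desired_workers` function below adds queued and running builds, converts that demand to whole nodes, and clamps the result to the pool's limits:

```python
import math


def desired_workers(queued_builds: int, busy_executors: int,
                    executors_per_node: int, min_nodes: int, max_nodes: int) -> int:
    """Hypothetical sizing rule for an elastic Jenkins worker pool.

    Provide enough executors for the builds that are running plus those
    waiting in the queue, round up to whole nodes, and clamp the result to
    the pool's configured bounds.
    """
    if executors_per_node <= 0:
        raise ValueError("executors_per_node must be positive")
    demand = queued_builds + busy_executors
    nodes = math.ceil(demand / executors_per_node)
    return max(min_nodes, min(max_nodes, nodes))


# 7 queued + 5 running builds on 4-executor nodes
print(desired_workers(7, 5, executors_per_node=4, min_nodes=2, max_nodes=50))  # -> 3
```

The lower bound keeps a few nodes warm for the next build; the upper bound caps spend when the queue spikes.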

Figure 3: Interim BMW Connected Company’s CI/CD Jenkins Architecture on AWS

2 – Innovation: Going Serverless

Although extending to the cloud provided scaling benefits, the architecture’s limitations soon became apparent and constrained its ability to meet growing development demand:

- Shared worker node pools led to overprovisioning and inefficient Amazon EC2 instance utilization

- Scaling wait times hindered elasticity and resulted in slow responsiveness to spikes in demand

- Resource contention during peak hours affected build performance

To overcome these challenges, the team determined that the architecture required significant changes to meet the elasticity and scalability goals, resulting in a new solution, referred to internally as Orbit Spaceship v1.

The natural first step was to address the scaling limitations and maintenance overhead of the shared Jenkins worker node pool. Since GitHub was already in place for source control, adopting GitHub Actions was a logical choice: it eliminated the need to maintain a separate CI/CD infrastructure and took advantage of native integrations with the repositories. GitHub Actions workflows need runners to execute their jobs, and the team chose self-hosted runners to support the customizations that BMW Group’s requirements demand.

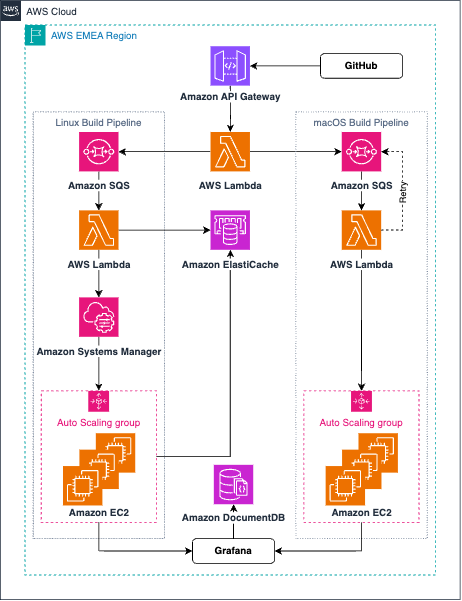

Once the team deployed GitHub Actions, the next objective was to process GitHub webhook events to spawn sufficient Amazon EC2 instances and register GitHub runners within seconds. To achieve this, Amazon API Gateway receives webhook events from GitHub and invokes an AWS Lambda function to queue requests for processing. With GitHub’s limited native filtering capabilities, this function filters out illegitimate webhook requests and prevents system overload. The Lambda function then processes these legitimate requests and sends them to OS-specific Amazon Simple Queue Service (SQS) queues for loose coupling and asynchronous processing, ensuring slower processes do not create bottlenecks that impact other build requests.
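The post does not show the filtering function itself. The sketch below assumes it receives the raw request body and headers from API Gateway, and that a request counts as legitimate when its signature checks out, it is a queued `workflow_job` event, and it targets self-hosted runners. GitHub does sign webhook deliveries with an HMAC-SHA256 `X-Hub-Signature-256` header when a secret is configured. The queue names and the exact filtering criteria are hypothetical.

```python
import hashlib
import hmac
import json
from typing import Mapping, Optional

# Hypothetical queue names; the post only says the queues are OS-specific.
QUEUES_BY_OS = {
    "linux": "runner-requests-linux",
    "windows": "runner-requests-windows",
    "macos": "runner-requests-macos",
}


def signature_is_valid(secret: bytes, body: bytes, header: Optional[str]) -> bool:
    """Verify GitHub's X-Hub-Signature-256 header (HMAC-SHA256 over the raw body)."""
    if not header or not header.startswith("sha256="):
        return False
    expected = "sha256=" + hmac.new(secret, body, hashlib.sha256).hexdigest()
    # Constant-time comparison avoids leaking how many characters matched.
    return hmac.compare_digest(expected.encode(), header.encode())


def route_webhook(secret: bytes, headers: Mapping[str, str], body: bytes) -> Optional[str]:
    """Return the queue for a legitimate runner request, or None to drop the event."""
    headers = {name.lower(): value for name, value in headers.items()}  # header case varies
    if not signature_is_valid(secret, body, headers.get("x-hub-signature-256")):
        return None  # forged or misconfigured sender
    if headers.get("x-github-event") != "workflow_job":
        return None  # only job events can require a new runner
    payload = json.loads(body)
    if payload.get("action") != "queued":
        return None  # in_progress and completed events need no new runner
    labels = {label.lower() for label in payload.get("workflow_job", {}).get("labels", [])}
    if "self-hosted" not in labels:
        return None  # job targets GitHub-hosted runners
    for os_label, queue_name in QUEUES_BY_OS.items():
        if os_label in labels:
            return queue_name
    return None  # no supported OS label
```

In a real function, the returned name would be resolved to a queue URL and the body forwarded with the SQS SendMessage API (for example boto3's `sqs.send_message(QueueUrl=..., MessageBody=...)`).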

Another Lambda function consumes messages from the SQS queues and invokes the Amazon EC2 API to launch a new instance, preparing the configuration the runner needs to register itself on boot. Amazon EC2 user data scripts then run cleanup operations and terminate each instance automatically once its work is done.
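The launch step can be sketched as follows. The sketch assumes a hypothetical message schema and an AMI with the GitHub Actions runner preinstalled under a hypothetical `/opt/actions-runner` directory and `runner` user. The `config.sh` flags (`--url`, `--token`, `--labels`, `--name`, `--unattended`, `--ephemeral`) are those of the GitHub Actions runner; the rest is illustrative, not BMW's implementation.

```python
import shlex
from typing import Dict, List

# Hypothetical install path and OS user; the post does not describe the image layout.
RUNNER_DIR = "/opt/actions-runner"
RUNNER_USER = "runner"


def render_user_data(repo_url: str, registration_token: str,
                     labels: List[str], runner_name: str) -> str:
    """Bash user data: register an ephemeral runner, run one job, then power off."""
    q = shlex.quote
    lines = [
        "#!/bin/bash",
        "set -uo pipefail",
        # Power off on any exit, success or failure. With the 'terminate'
        # shutdown behavior set below, powering off terminates the instance.
        "trap 'shutdown -h now' EXIT",
        f"cd {q(RUNNER_DIR)} || exit 1",
        # --ephemeral: the runner takes exactly one job and then unregisters.
        f"sudo -u {q(RUNNER_USER)} ./config.sh --unattended --ephemeral"
        f" --url {q(repo_url)} --token {q(registration_token)}"
        f" --labels {q(','.join(labels))} --name {q(runner_name)}",
        f"sudo -u {q(RUNNER_USER)} ./run.sh",
        # Cleanup steps (log upload, metrics) would go here, before the trap fires.
    ]
    return "\n".join(lines) + "\n"


def build_run_instances_request(message: Dict) -> Dict:
    """Map one queued-job message (hypothetical schema) to EC2 RunInstances parameters."""
    return {
        "ImageId": message["ami_id"],
        "InstanceType": message["instance_type"],
        "MinCount": 1,
        "MaxCount": 1,
        "InstanceInitiatedShutdownBehavior": "terminate",
        "UserData": render_user_data(message["repo_url"], message["registration_token"],
                                     message["labels"], message["runner_name"]),
        "TagSpecifications": [{
            "ResourceType": "instance",
            "Tags": [{"Key": "runner-name", "Value": message["runner_name"]}],
        }],
    }
```

The dictionary matches the keyword arguments of boto3's `ec2.run_instances(**request)`. The registration token would come from GitHub's REST API endpoint for creating a runner registration token. The `trap` plus the `terminate` shutdown behavior is one way to guarantee that a failed job still releases its instance.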

Ephemeral runners ensure each build starts fresh, which prevents environment pollution and maintains quality and repeatability. This approach reduced costs by 63% and provided a 50% increase in parallel service usage capacity.

Figure 4: BMW Connected Company’s CI/CD serverless architecture on AWS

3 – Speed: Eliminating Wait Times

The service was functional: DevOps teams could install the GitHub app (which delivers webhook events), configure workflows with labels, and receive runners. However, time-to-build did not yet meet BMW’s ambitious goals, because Amazon EC2 instance boot times created delays that wasted valuable developer time. The solution was elegantly simple: eliminate the wait by maintaining pre-warmed machine pools and using scheduled scaling for Amazon EC2 Auto Scaling groups so that capacity is always available.

Pre-warmed pools maintained a predetermined number of ready-to-use instances, enabling Lambda functions to register runners directly to existing instances, eliminating configuration write delays and Amazon EC2 instance boot wait times. This reduced runner provisioning time from 2.5 minutes to just 18 seconds per runner.
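A pre-warmed pool comes down to handing out already-booted instances and refilling in the background. The class below is a hypothetical in-memory model of that bookkeeping, not the team's code, and the schedule values are made up to illustrate scheduled scaling:

```python
from collections import deque
from typing import Deque, Optional


class WarmPool:
    """Hypothetical in-memory model of a pool of booted, idle runner instances."""

    def __init__(self, target_size: int) -> None:
        self.target_size = target_size
        self.idle: Deque[str] = deque()

    def add_ready(self, instance_id: str) -> None:
        # Called when an instance has booted and is waiting for a job.
        self.idle.append(instance_id)

    def acquire(self) -> Optional[str]:
        # Hand out the longest-waiting instance; None means "fall back to a cold launch".
        return self.idle.popleft() if self.idle else None

    def deficit(self) -> int:
        # Instances to launch in the background to refill the pool.
        return max(0, self.target_size - len(self.idle))


def scheduled_target(hour_utc: int, weekday: int) -> int:
    """Hypothetical schedule: a larger pool during weekday working hours (0 = Monday)."""
    if weekday < 5 and 6 <= hour_utc < 18:
        return 40
    return 5
```

Registering a runner on an instance from `acquire()` skips both the boot and the configuration write, which is where the drop from minutes to seconds comes from. On AWS, the schedule itself would more typically live in Auto Scaling scheduled actions than in application code.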

As the number of early adopters grew and system performance improved significantly, the team began transitioning to production. During this phase, the team also migrated from Amazon Linux to Ubuntu to benefit from the GitHub Actions community ecosystem and its better tool compatibility.

4 – Efficiency: Unlocking ARM’s Cost-Performance Edge

With a highly reliable and responsive system in place, the team expanded support to ARM architectures using AWS Graviton-based instances. These instances delivered better price performance, being both cheaper and faster than comparable x86 alternatives, which let the most eager teams lower their cost per build minute while reducing overall build times. The guiding principle remained constant: pipelines should never block creative work.
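Routing a job to Graviton can be as simple as reading its runner labels. A minimal sketch, assuming jobs request ARM through GitHub's default `ARM64` self-hosted label; the label-to-instance-type mapping is hypothetical:

```python
from typing import Dict, Iterable, Tuple

# Hypothetical mapping; the post does not say which instance types are used.
INSTANCE_TYPES: Dict[Tuple[str, str], str] = {
    ("linux", "x64"): "m7i.large",    # Intel x86-64
    ("linux", "arm64"): "m7g.large",  # AWS Graviton3
}

# GitHub's default self-hosted labels use X64 and ARM64; a few aliases are accepted too.
ARCH_ALIASES = {"x64": "x64", "amd64": "x64", "x86_64": "x64",
                "arm64": "arm64", "aarch64": "arm64"}


def select_instance_type(labels: Iterable[str]) -> str:
    normalized = sorted(label.lower() for label in labels)
    if "linux" not in normalized:
        raise ValueError("this sketch only covers Linux runners")
    # Default to x86-64 when a job does not ask for an architecture.
    arch = next((ARCH_ALIASES[label] for label in normalized if label in ARCH_ALIASES), "x64")
    return INSTANCE_TYPES[("linux", arch)]
```

The AMI has to match as well, since arm64 instances cannot boot x86-64 images, so in practice the lookup would return an image alongside the instance type.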

5 – Expansion: Accelerating Mobile Development

At over 50,000 daily builds and with demand rising rapidly, the mobile development teams responsible for the MyBMW and MyMini apps approached CTW engineers to explore Amazon EC2 Mac instances for iOS application builds. These bare-metal instances offered performance similar to on-premises hardware without the operational overhead of managing that hardware.

Ephemeral build runners must run in a sanitized environment for security and consistency. This is a particular challenge with macOS on bare metal: after an EC2 Mac instance is stopped or terminated, the underlying host goes through a scrubbing workflow before it can be used again, which limits availability and reduces productivity. Licensing adds further complexity: to comply with the Apple macOS Software License Agreement, Amazon EC2 Mac Dedicated Hosts have a 24-hour minimum allocation period.

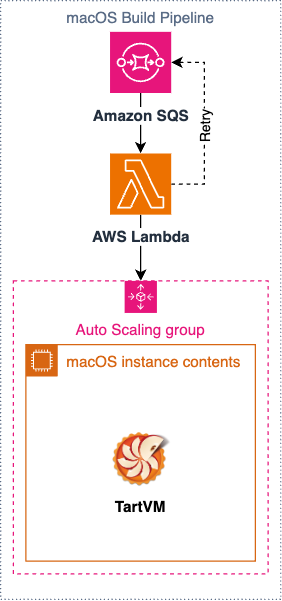

The team researched best practices and solutions to maximize the value of each Amazon EC2 Mac instance and identified Tart as the breakthrough solution. Built on Apple’s Virtualization framework, Tart enables on-demand VM environments on bare-metal machines. These VMs boot in seconds with custom Amazon Machine Images (AMIs) built with HashiCorp Packer and preloaded with essential CI/CD tools, including multiple Xcode versions and simulators. An API wrapper enables seamless integration with AWS Lambda.
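The post does not describe the API wrapper around Tart. As a hypothetical sketch, the per-job VM lifecycle can be expressed with the Tart CLI's `clone`, `run --no-graphics`, `stop`, and `delete` commands; the command runners are injectable so the logic can be exercised without a Mac host, and the image name used below is a placeholder:

```python
import subprocess
import uuid
from contextlib import contextmanager
from typing import Callable, Iterator, List, Optional

Command = List[str]


def _run_checked(cmd: Command) -> None:
    subprocess.run(cmd, check=True)


@contextmanager
def ephemeral_vm(base_image: str,
                 run: Callable[[Command], None] = _run_checked,
                 start: Callable[[Command], object] = subprocess.Popen,
                 name: Optional[str] = None) -> Iterator[str]:
    """Clone a fresh Tart VM, boot it headless, and always delete it afterwards."""
    vm_name = name or f"runner-{uuid.uuid4().hex[:8]}"
    run(["tart", "clone", base_image, vm_name])  # fresh copy of the prebuilt image
    proc = None
    try:
        # `tart run` blocks while the VM is up, so start it in the background.
        proc = start(["tart", "run", "--no-graphics", vm_name])
        yield vm_name
    finally:
        try:
            run(["tart", "stop", vm_name])
        except subprocess.CalledProcessError:
            pass  # the VM may already have shut down after its job finished
        if proc is not None and hasattr(proc, "wait"):
            proc.wait()  # reap the background `tart run` process
        run(["tart", "delete", vm_name])  # nothing survives into the next build
```

A caller would wrap each job in `with ephemeral_vm(image) as vm:`. Because every build gets a throwaway VM, the bare-metal host itself never needs the slow host-level sanitization between jobs.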

Before migrating to Amazon EC2 Mac instances, the average on-premises execution time for a single build job was 90 minutes on M1 Mac mini hardware. On Amazon EC2 M4 Mac instances, the average run time dropped to 35 minutes, a 61% reduction, paving the way for expected platform growth and improved developer experience.

Figure 5: BMW Connected Company’s CI/CD macOS Virtualization architecture

6 – Scale: Mastering 130,000+ Daily Builds

Surpassing 130,000 daily builds and deployments revealed new challenges. Amazon EC2 instances pulling dependencies from public repositories such as Docker Hub generated excessive network traffic and ran into rate limits, highlighting a need for optimization. To address this, the team introduced JFrog Artifactory to centralize most dependencies while remaining transparent to developers.
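One way to picture the redirection is as a rewrite of image references toward a caching remote repository. A minimal sketch using a simplified version of Docker's reference normalization; the host `artifactory.example.com` and repository key `docker-remote` are hypothetical. (For Docker Hub specifically, the Docker daemon's `registry-mirrors` setting is another way to achieve this without touching references.)

```python
from typing import Tuple

DOCKER_HUB_HOSTS = {"docker.io", "index.docker.io", "registry-1.docker.io"}


def split_reference(ref: str) -> Tuple[str, str]:
    """Split an image reference into (registry, path), simplified from Docker's rules."""
    first, sep, rest = ref.partition("/")
    # The first component is a registry only if it looks like a host name.
    if sep and ("." in first or ":" in first or first == "localhost"):
        registry, path = first, rest
    else:
        registry, path = "docker.io", ref
    if registry in DOCKER_HUB_HOSTS and "/" not in path:
        path = f"library/{path}"  # official images live under library/
    return registry, path


def rewrite_image_ref(ref: str, mirror_host: str, mirror_repo: str = "docker-remote") -> str:
    """Point Docker Hub pulls at a caching remote repository; leave other registries alone."""
    registry, path = split_reference(ref)
    if registry not in DOCKER_HUB_HOSTS:
        return ref
    return f"{mirror_host}/{mirror_repo}/{path}"
```

For example, `node:20` becomes `artifactory.example.com/docker-remote/library/node:20`, so repeated pulls are served from the cache instead of counting against Docker Hub's limits.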

Additionally, the team leveraged custom AMIs to embed configurations directly into OS images, including pre-configured settings for commonly used tools like Java, Python, Terraform, and Node.js, which further reduced build times.

The serverless architecture simplified management and scalability, enabling the team to modify concurrency limits without scaling the underlying infrastructure. During periods of low demand, the infrastructure could scale to zero, which proved exceptionally cost-effective.

7 – Global Reach: Adapt to Local Requirements

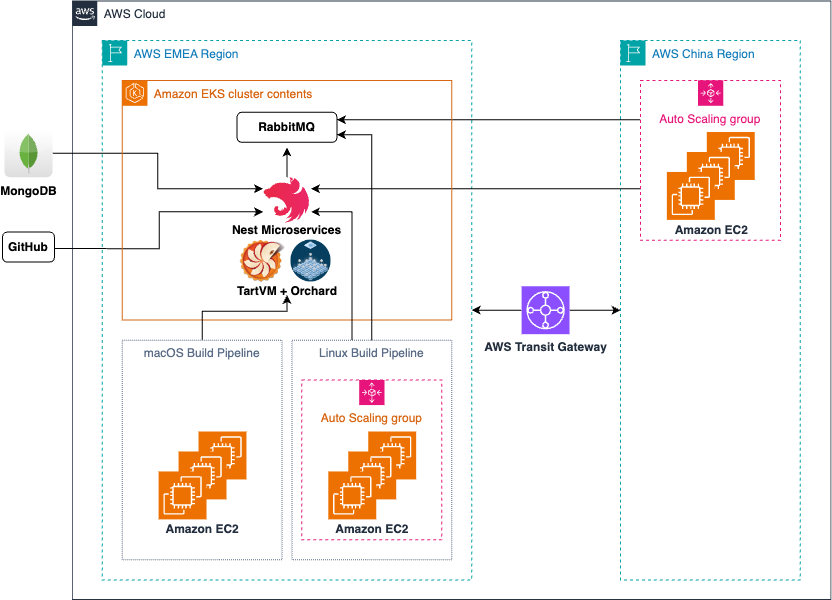

Today, Orbit Spaceship integrates with the broader container platform known internally as Orbit and is the go-to solution for deploying microservices for BMW ConnectedDrive applications. Built on Amazon Elastic Kubernetes Service (Amazon EKS), Orbit provides developers with managed logging, networking, and scaling for their microservices. The team made a strategic decision to maximize use of the Orbit platform to ensure closer adherence to BMW Group standards and a consistent architecture across all regions.

To accommodate this, the team migrated AWS Lambda functions to containers on Amazon EKS and transitioned Amazon SQS queues to RabbitMQ, while retaining Amazon EC2 as the underlying runner platform.

Complementary processes provide data collection for optimization recommendations and FinOps, live metrics for 24/7 operations, and custom integrations that improve the developer experience.

This latest architecture centers on four main components:

- Runner Controller handles the heavy lifting and delegates specific actions to specialized services.

- GitHub microservice manages all GitHub interactions, including webhook filtering and token creation.

- Cloud microservice handles Amazon EC2 instance lifecycle management, Auto Scaling group selection, and termination.

- Metrics microservice manages reporting, periodic data processing, and cleanup operations.
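The division of labor above can be sketched as a controller that only orchestrates while delegating each concern. The interfaces, method names, and return shape below are hypothetical; the post names the services but not their APIs.

```python
from dataclasses import dataclass
from typing import Any, Dict, List, Optional, Protocol


class GitHubService(Protocol):
    def is_relevant(self, event: Dict[str, Any]) -> bool: ...
    def registration_token(self, repo: str) -> str: ...


class CloudService(Protocol):
    def pick_auto_scaling_group(self, labels: List[str]) -> str: ...
    def assign_instance(self, group: str) -> str: ...


class MetricsService(Protocol):
    def record(self, name: str, **fields: Any) -> None: ...


@dataclass
class RunnerController:
    """Orchestrates a queued job; each concern is delegated to a specialized service."""

    github: GitHubService
    cloud: CloudService
    metrics: MetricsService

    def handle(self, event: Dict[str, Any]) -> Optional[Dict[str, Any]]:
        if not self.github.is_relevant(event):  # webhook filtering lives in the GitHub service
            self.metrics.record("webhook_dropped")
            return None
        job = event["workflow_job"]
        group = self.cloud.pick_auto_scaling_group(job["labels"])
        instance_id = self.cloud.assign_instance(group)
        token = self.github.registration_token(event["repository"]["full_name"])
        self.metrics.record("runner_assigned", job_id=job["id"], instance_id=instance_id)
        # The caller would forward this to the agent on the chosen instance.
        return {"job_id": job["id"], "instance_id": instance_id, "token": token}
```

Keeping the controller free of GitHub and EC2 details is what allows each service to be scaled, replaced, or tested on its own.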

Because correlating webhooks requires combining information from different GitHub payloads, the team stores this data in MongoDB for its performance and ease of use. The solution uploads this data to BMW Group’s central data lake, where it is correlated with other metrics for comprehensive analysis.
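Correlation here means folding the `queued`, `in_progress`, and `completed` deliveries for one `workflow_job` into a single document. A minimal sketch with an in-memory dictionary standing in for the MongoDB collection (in MongoDB the merge would typically be an `update_one(..., upsert=True)` call); the document schema is hypothetical, while the payload fields are those of GitHub's `workflow_job` event:

```python
from datetime import datetime
from typing import Any, Dict, Optional

# GitHub's workflow_job actions in lifecycle order.
STATUS_ORDER = {"queued": 0, "waiting": 1, "in_progress": 2, "completed": 3}
MERGED_FIELDS = ("run_id", "labels", "runner_name", "created_at", "completed_at", "conclusion")


def parse_ts(value: Optional[str]) -> Optional[datetime]:
    # GitHub timestamps look like "2024-05-01T10:00:00Z".
    return datetime.fromisoformat(value.replace("Z", "+00:00")) if value else None


class JobStore:
    """In-memory stand-in for a collection with one document per job id (hypothetical schema)."""

    def __init__(self) -> None:
        self.docs: Dict[int, Dict[str, Any]] = {}

    def upsert(self, payload: Dict[str, Any]) -> Dict[str, Any]:
        job, action = payload["workflow_job"], payload["action"]
        doc = self.docs.setdefault(job["id"], {"job_id": job["id"], "status": None})
        # Deliveries can arrive out of order, so the status only moves forward.
        if STATUS_ORDER.get(action, -1) > STATUS_ORDER.get(doc["status"], -1):
            doc["status"] = action
        for key in MERGED_FIELDS:
            if job.get(key) is not None:
                doc[key] = job[key]
        # Trust started_at only once a runner has actually picked up the job.
        if action in ("in_progress", "completed") and job.get("started_at"):
            doc["started_at"] = job["started_at"]
        created, started = parse_ts(doc.get("created_at")), parse_ts(doc.get("started_at"))
        if created and started:
            doc["queue_seconds"] = (started - created).total_seconds()
        return doc
```

The resulting `queue_seconds` per job is the kind of derived metric that becomes useful once it is joined with other data in a data lake.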

The system also monitors runner assignment times and runs self-healing processes that proactively address workflows waiting too long for runners. Maintaining its own copy of GitHub data reduces the frequency of GitHub API calls, improving speed and reliability, and provides inventory management for multiple Auto Scaling groups, each containing dozens of machines ready for rapid deployment.
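The self-healing check can be pictured as a periodic scan for jobs that are still queued after a threshold. A minimal sketch; the job schema, the two-minute threshold, and the `reprovision` callback are hypothetical, since the post does not say what the healing action is:

```python
from datetime import datetime, timedelta, timezone
from typing import Callable, Dict, Iterable, List


def stuck_jobs(jobs: Iterable[Dict], now: datetime, threshold: timedelta) -> List[int]:
    """Ids of jobs still waiting for a runner longer than `threshold` (hypothetical schema)."""
    return [job["job_id"] for job in jobs
            if job["status"] == "queued" and now - job["queued_at"] > threshold]


def heal(jobs: Iterable[Dict], now: datetime, threshold: timedelta,
         reprovision: Callable[[int], None]) -> List[int]:
    """Ask for a fresh runner for every stuck job and return the ids that were healed."""
    ids = stuck_jobs(jobs, now, threshold)
    for job_id in ids:
        reprovision(job_id)
    return ids


now = datetime(2024, 5, 1, 10, 5, tzinfo=timezone.utc)
jobs = [
    {"job_id": 1, "status": "queued", "queued_at": now - timedelta(minutes=3)},
    {"job_id": 2, "status": "queued", "queued_at": now - timedelta(seconds=20)},
    {"job_id": 3, "status": "in_progress", "queued_at": now - timedelta(minutes=10)},
]
print(heal(jobs, now, timedelta(minutes=2), reprovision=lambda job_id: None))  # -> [1]
```

Because the scan runs against the platform's own job data, it costs no GitHub API calls.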

Furthermore, runner registration follows an IoT-style approach using RabbitMQ messaging. Spaceship agents on Amazon EC2 instances consume messages, register runners, and confirm registration via the Central API.
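The IoT-style flow resembles a device agent listening on its own channel. A minimal sketch, using Python's in-process `queue.Queue` as a stand-in for a RabbitMQ queue; the message schema and the `register` and `confirm` callbacks are hypothetical:

```python
import json
import queue
from typing import Callable, Dict

Register = Callable[[Dict], str]      # registers a runner, returns its name
Confirm = Callable[[str, str], None]  # reports (instance_id, runner_name) to the central API


def agent_step(inbox: "queue.Queue[str]", instance_id: str,
               register: Register, confirm: Confirm, timeout: float = 1.0) -> bool:
    """Handle at most one registration message; return False if none arrived in time."""
    try:
        raw = inbox.get(timeout=timeout)
    except queue.Empty:
        return False
    message = json.loads(raw)
    if message.get("instance_id") != instance_id:
        return True  # defensive: ignore messages addressed to another machine
    runner_name = register(message)    # e.g. run the runner's config script with message["token"]
    confirm(instance_id, runner_name)  # the central API now knows the runner is ready
    return True
```

With a RabbitMQ client such as pika, the same logic would run inside a consumer callback that acknowledges the message only after confirmation succeeds, so a crashed agent does not silently lose a registration request.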

The team plans to use ML capabilities to optimize Amazon EC2 instance type selection based on historical CPU, RAM, and disk usage patterns to further ease the use of this platform.
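Before any ML model, a simple percentile rule is a useful baseline for this kind of right-sizing. The sketch below is explicitly not the planned ML approach: it picks the smallest instance whose vCPUs and memory cover the 95th percentile of observed usage plus 20% headroom. The catalog, headroom, and percentile are hypothetical choices, and disk usage is left out for brevity.

```python
import math
from typing import List, Sequence, Tuple

# Hypothetical catalog: (instance type, vCPUs, memory GiB), smallest first.
CATALOG: List[Tuple[str, int, int]] = [
    ("c7g.large", 2, 4),
    ("c7g.xlarge", 4, 8),
    ("c7g.2xlarge", 8, 16),
    ("c7g.4xlarge", 16, 32),
]


def percentile(values: Sequence[float], p: float) -> float:
    """Nearest-rank percentile of a non-empty sample."""
    ordered = sorted(values)
    rank = max(1, math.ceil(p / 100 * len(ordered)))
    return ordered[rank - 1]


def recommend(cpu_cores_used: Sequence[float], mem_gib_used: Sequence[float],
              headroom: float = 1.2) -> str:
    """Smallest catalog entry covering p95 CPU and memory usage plus headroom."""
    cpu = percentile(cpu_cores_used, 95) * headroom
    mem = percentile(mem_gib_used, 95) * headroom
    for name, vcpus, mem_gib in CATALOG:
        if vcpus >= cpu and mem_gib >= mem:
            return name
    return CATALOG[-1][0]  # nothing fits: fall back to the largest size


print(recommend([1.0, 1.5, 3.0, 2.0], [2, 3, 5, 4]))  # -> c7g.xlarge
```

A learned model would need to beat this kind of baseline on both cost and build time to justify its complexity.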

Figure 6: BMW Connected Company’s CI/CD Multi-Region Architecture

Lessons Learned

BMW Connected Company’s journey revealed several valuable insights that shaped the platform’s evolution from a few hundred builds daily to over 130,000 daily builds and deployments across multiple regions.

Empowering teams creates agility and ownership: Autonomous teams make faster decisions, adapt more effectively to change, and deliver higher-quality solutions. By giving teams the freedom to experiment and iterate, BMW Group fostered a culture of innovation where developers felt ownership over their tools and processes, leading to more thoughtful implementations and faster problem resolution.

Architecture must evolve with scale: Every increase in scale revealed new bottlenecks and required architectural evolution. What started as a simple webhook system evolved through multiple iterations, each addressing limitations that only became apparent at higher scales. Planning for growth means building in flexibility to refactor components as needs change.

Localizing assets and dependencies significantly reduces costs: Caching images and enabling dual-stack VPCs can lower network egress costs substantially. When the daily build volume pulled dependencies from public repositories like Docker Hub, network costs skyrocketed and rate limiting became a critical issue. Implementing a centralized cache reduced both costs and build failures while remaining transparent to developers. Additionally, routing traffic to IPv6-enabled domains through egress-only internet gateways avoided NAT gateway charges for a significant portion of outbound traffic.
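Whether a destination can bypass the NAT gateway depends on it publishing IPv6 (AAAA) records: in a dual-stack subnet with a `::/0` route to an egress-only internet gateway, IPv6 connections leave through that gateway instead, provided the client prefers IPv6. A minimal sketch for estimating how much outbound traffic could move, assuming hypothetical per-host byte counts (for example aggregated from VPC Flow Logs); the resolver is injectable so the estimate can be checked offline:

```python
import ipaddress
import socket
from typing import Callable, Dict, List


def resolve_ipv6(host: str) -> List[str]:
    """Return the host's IPv6 addresses (empty if it has no AAAA records)."""
    try:
        infos = socket.getaddrinfo(host, 443, family=socket.AF_INET6, type=socket.SOCK_STREAM)
    except socket.gaierror:
        return []
    return sorted({info[4][0] for info in infos})


def ipv6_share(bytes_by_host: Dict[str, int],
               resolve: Callable[[str], List[str]] = resolve_ipv6) -> float:
    """Fraction of outbound bytes that go to hosts reachable over global IPv6."""
    total = sum(bytes_by_host.values())
    if total == 0:
        return 0.0
    eligible = 0
    for host, nbytes in bytes_by_host.items():
        if any(ipaddress.ip_address(addr).is_global for addr in resolve(host)):
            eligible += nbytes
    return eligible / total
```

The result is an upper bound on the traffic that could leave NAT gateway billing; the actual saving also depends on which address family each client picks.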

Evaluate Spot instances versus Savings Plans carefully: While Amazon EC2 Spot Instances are often attractive for ephemeral workloads, the team discovered that for predictable, high-volume usage patterns, Savings Plans often provided better economics without the complexity of handling interruptions.
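The trade-off can be made concrete with a simple cost-per-useful-hour model. This is a hypothetical illustration with made-up prices, not actual AWS pricing: Spot pays again for the part of interrupted builds that must be rerun, while a Savings Plan commitment is billed whether or not it is used, so high, steady utilization is what makes it attractive.

```python
def spot_cost_per_useful_hour(spot_hourly: float, interrupted_fraction: float,
                              rerun_fraction: float) -> float:
    """Interrupted builds are retried, so part of their compute is paid for twice."""
    return spot_hourly * (1 + interrupted_fraction * rerun_fraction)


def savings_plan_cost_per_useful_hour(committed_hourly: float, utilization: float) -> float:
    """A Savings Plan commitment is billed whether or not it is used."""
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in (0, 1]")
    return committed_hourly / utilization


# Hypothetical prices and rates, not actual AWS pricing.
spot = spot_cost_per_useful_hour(0.045, interrupted_fraction=0.15, rerun_fraction=0.6)
plan = savings_plan_cost_per_useful_hour(0.046, utilization=0.97)
print("Savings Plan" if plan < spot else "Spot")  # -> Savings Plan
```

The model leaves out the engineering effort of handling interruptions, which, as noted above, tilts the balance further toward Savings Plans for predictable workloads.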

AWS Config costs can add up quickly for ephemeral workloads: With thousands of instances launching and terminating daily, AWS Config evaluations generated unexpected costs. The team learned to consider carefully which compliance checks truly need real-time evaluation versus those that can run on longer intervals or through alternative mechanisms.

Comprehensive metrics enable continuous optimization: Collecting detailed data on CPU, RAM, and disk usage patterns supports planned ML-driven optimizations for Amazon EC2 instance type selection. Investing in observability early can pay dividends throughout the platform’s lifecycle, enabling data-driven decisions rather than guesswork.

Conclusion

BMW Group’s journey highlights the power of continuous small iterations over years, rooted in values of developer proximity, team empowerment, and empathy. Starting from a few hundred builds daily, the platform now handles more than 130,000 builds and deployments every day across regions. The latest focus on simplification preserves core functions and performance while achieving a 50% reduction in maintenance and operational tasks. Builds that once took up to an hour now complete in minutes, and the platform provisions runners automatically in under 10 seconds. This efficiency saves thousands of developer hours daily. With increased development velocity and platform stability, BMW Group delivers new features faster, which is essential for the launch of the software-centric Neue Klasse iX3.

To learn more about BMW Group’s approach to cloud-native development and their broader AWS transformation, read our AWS Innovator Case Studies.

Christine Marconcin – Chief Operations Officer CTW: “Orbit Spaceship provides optimizations to allow software development teams to efficiently deliver software, reducing the time to market, and controlling costs, whilst maintaining the high software standards in a more digital BMW World of Customers.”

Felix Willnecker – Group Lead BMW Connected Cloud Platform: “Five years ago, our developers faced a challenge: hundreds of builds a day, long wait times, and a system that couldn’t keep up with their ideas. We asked ourselves, what if we could change that? What if every build was instant, every deployment effortless? That question sparked a journey. Step by step, we moved from on-premises to the cloud, from rigid systems to serverless agility. Today, that vision is reality: 130,000 builds every single day, powering millions of connected vehicles worldwide. This isn’t just a technical achievement, it’s a story of bold thinking, relentless teamwork, and the belief that innovation should never wait.”