AWS Storage Blog

How ClearScale overcame data migration hurdles using AWS DataSync

Many organizations today are either in the midst of a cloud migration project or have one as a top priority, but as IT professionals know, migrating to the cloud is not an easy task. It takes time, a detailed plan, and expert orchestration between departments. Even with the most comprehensive, in-depth migration planning, issues can arise that result in human and technical errors that delay the project. The key is to create an agile, strategic plan that allows for necessary adjustments along the way. It’s also important to have the expertise to determine what those adjustments should be and when they should occur.

At ClearScale, we had a customer in the technology industry that wanted to migrate more than 30 TB of encrypted file data to Amazon Web Services (AWS) from their on-premises environment that was powered by a different cloud provider. ClearScale had successfully handled many similar migrations before, but this one was set to be more challenging from the start.

The migration had to be performed on a live account because the customer asked for minimal downtime during the migration. This meant that our team at ClearScale didn’t have optimal access to either the source data or the storage destination. Further complicating things was the fact that the data was highly sensitive and would require special handling to ensure its security.

In this blog post, I detail how AWS DataSync and other AWS services enabled ClearScale to facilitate this customer’s migration and efficiently move massive volumes of data to AWS when other data migration solutions could not.

ClearScale’s client’s migration requirements

The project kicked off with a migration readiness assessment using the best practices outlined in AWS’s Cloud Adoption Framework (AWS CAF) so that I could evaluate and improve our client’s cloud readiness. Next, ClearScale gathered information on regulatory compliance requirements, data encryption details, data synchronization needs, and the specifics of the data files to be migrated so that the most appropriate migration methods could be provided. A detailed migration plan was created and provided to our client.

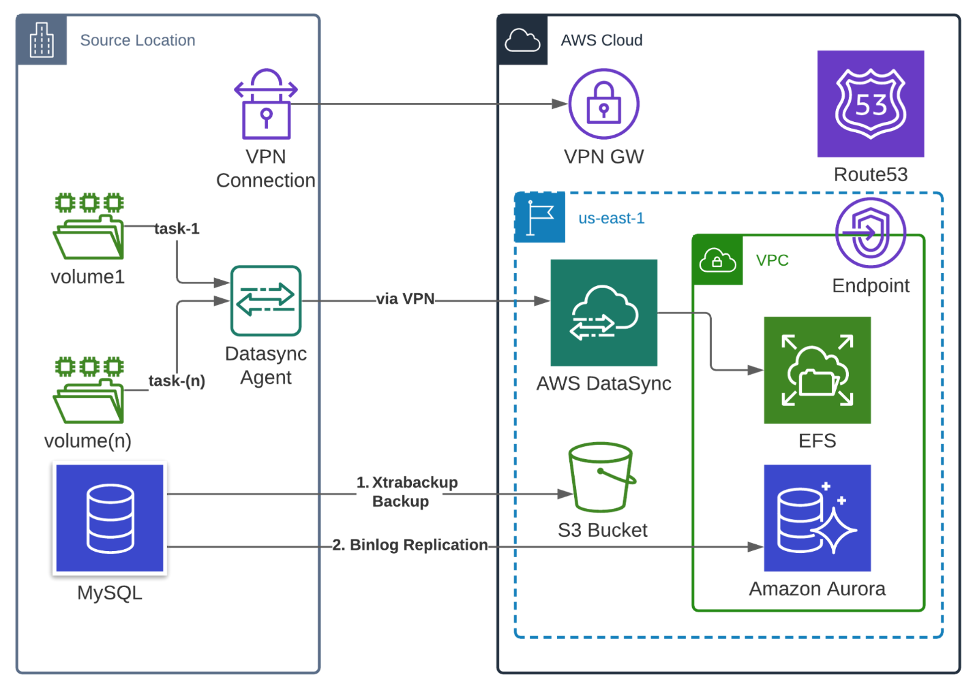

In the course of requirements gathering, ClearScale identified that the customer needed to move its data out of Rackspace and into the AWS Cloud with minimal downtime and no data loss during the migration. I needed to find a solution that would work within 50 megabits per second of internet bandwidth and ensure a reliable, controllable data migration from the client’s Network File System (NFS) datastore to Amazon Elastic File System (Amazon EFS). The best solution proved to be AWS DataSync. DataSync ensures secure communication over an encrypted channel. It allows users to flexibly configure replication jobs based on independent chunks of data, properly log the migration process, and identify issues with the channel and locked data.
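To see why the 50 Mbps link shaped the whole plan, a back-of-the-envelope estimate helps. The sketch below is illustrative only: it assumes decimal units (1 TB = 10^12 bytes), ignores DataSync’s in-line compression and protocol overhead, and the `utilization` parameter is a hypothetical knob rather than a figure measured on this project.

```python
def transfer_days(data_tb: float, link_mbps: float, utilization: float = 1.0) -> float:
    """Estimate the days needed to push data_tb terabytes through a link_mbps link."""
    bits_to_move = data_tb * 1e12 * 8                      # terabytes -> bits
    usable_bits_per_second = link_mbps * 1e6 * utilization
    return bits_to_move / usable_bits_per_second / 86_400  # seconds -> days


# 30 TB over 50 Mbps at full line rate
print(f"{transfer_days(30, 50):.1f} days")
# Same transfer if only 70% of the link is usable (assumed value)
print(f"{transfer_days(30, 50, utilization=0.7):.1f} days")
```

Even at full line rate the initial copy takes close to two months, which is why incremental transfers and a tightly scoped final sync mattered more than raw speed.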

Amazon EFS is a managed AWS storage service for scalable and resilient NFS-based storage. Amazon EFS scales to manage terabytes of data, so it was ideal for the 30 TB of files the client needed to migrate. EFS is also provisioned as a multi-Availability Zone (AZ) service for resiliency within a single Region, maintaining high availability of file data even in case of hardware failures.

Why ClearScale leverages AWS DataSync

Among the most important solution components was AWS DataSync, which was selected for moving the storage file data to AWS. This fully managed service employs an AWS-designed migration protocol that is decoupled from the storage protocol, which accelerates data movement. The DataSync protocol optimizes how, when, and what data is sent over the network, and includes incremental migrations, in-line compression, sparse file detection, and in-line data validation and encryption.

The DataSync agent ran as a virtual machine (VM) in the customer’s data center and connected to a private Virtual Private Cloud (VPC) endpoint via a VPN tunnel. The interface VPC endpoint, or interface endpoint, allows for connecting to services powered by AWS PrivateLink. Connections between the local DataSync agent and the in-cloud service components are multi-threaded to maximize performance over the customer’s Wide Area Network (WAN). A single DataSync task is capable of using up to 10 Gbps over a network link between your on-premises environment and AWS.

All data migrated between the agent and AWS is encrypted in transit with Transport Layer Security (TLS) 1.2. DataSync supports using default encryption for Amazon EFS file system data at rest and ensures that data arrives intact. For each transfer, the service performs integrity checks in transit and data verification once the data is copied. These checks ensure that the data written to the destination matches the data read from the source to validate consistency.

DataSync includes a built-in scheduling capability, so data migration tasks are executed automatically to detect and copy changes from the source storage system to the destination. Tasks can be scheduled using the AWS DataSync Console or AWS Command Line Interface (CLI). There’s no need to write scripts to manage repeated migrations. Task scheduling automatically runs tasks on the schedule configured with hourly, daily, or weekly options provided directly in the console.
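For teams that prefer the API over the console, a schedule can also be attached to an existing task programmatically. The following is a minimal sketch, assuming the boto3 DataSync client and its `update_task` call; the task ARN is a placeholder, the helper names are hypothetical, and the `rate(1 day)` expression is just one example of a schedule expression.

```python
def schedule_request(task_arn: str, expression: str) -> dict:
    """Build the keyword arguments for a DataSync update_task call that sets a schedule."""
    return {
        "TaskArn": task_arn,
        "Schedule": {"ScheduleExpression": expression},
    }


def apply_schedule(request: dict) -> None:
    """Send the request to AWS. boto3 is imported lazily so the sketch runs without it."""
    import boto3

    boto3.client("datasync").update_task(**request)


# Placeholder ARN for illustration only
request = schedule_request(
    "arn:aws:datasync:us-east-1:111122223333:task/task-0123456789abcdef0",
    "rate(1 day)",
)
print(request)
```

Calling `apply_schedule(request)` with valid credentials would apply the schedule; building the request separately keeps it easy to review before anything touches a live account.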

AWS DataSync’s fast, secure, and highly configurable capabilities for online data migration provided a complete migration solution for ClearScale’s client.

The obstacles

With the architecture and services determined, the ClearScale team and I configured the network and storage tiers, prepared the source and target environments, and created the scripts for backup and restore procedures and migration verification. Dry runs of the migration for the pilot database and data files were conducted so we could test our solution configuration. The results of the dry run were analyzed.

Working with the live account provided by the customer caused ClearScale to encounter access errors, although these errors were all resolved after notifying the customer. However, we then hit additional roadblocks: frequent network interruptions and bandwidth issues caused problems when we tried to run the migration tasks. At this point in our testing, it was clear we needed a revised solution to accommodate these interruptions.

The revised solution

ClearScale determined that the best approach was to break down the file structure into smaller parts and copy each part independently. Each single “chunk” of the file store could later be restarted independently. Because there is no cross-influence between parts of the datastore, all tasks can queue up and run almost continuously.
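Because each chunk is its own task, a failed run can simply be started again without touching the others. The sketch below shows one way that restart logic could look; it is not the script ClearScale used. It assumes `client` behaves like the boto3 DataSync client (`start_task_execution` and `describe_task_execution`), and the function name, attempt count, and polling interval are hypothetical.

```python
import time

# Execution states after which DataSync will not make further progress
TERMINAL_STATUSES = {"SUCCESS", "ERROR"}


def run_with_retries(client, task_arn: str, max_attempts: int = 3, poll_seconds: float = 60) -> int:
    """Start a task, wait for it to finish, and restart it on failure.

    Returns the attempt number that succeeded; raises if every attempt fails.
    """
    for attempt in range(1, max_attempts + 1):
        execution_arn = client.start_task_execution(TaskArn=task_arn)["TaskExecutionArn"]
        while True:
            status = client.describe_task_execution(TaskExecutionArn=execution_arn)["Status"]
            if status in TERMINAL_STATUSES:
                break
            time.sleep(poll_seconds)  # still queued, transferring, or verifying
        if status == "SUCCESS":
            return attempt
    raise RuntimeError(f"{task_arn} failed after {max_attempts} attempts")
```

With real credentials, `client` would be `boto3.client("datasync")`. Since DataSync transfers incrementally, a restarted task only has to move what the interrupted run did not finish.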

The revised migration process was:

- Perform the initial setup of the DataSync agent in the on-premises data center as originally specified. This is a best practice when deploying AWS DataSync.

- Divide the file data into portions, also known as folders or mountpoints. Each portion acts as a separate DataSync task that can be scheduled and executed.

- Break down the file storage into smaller portions and migrate them independently. This shrinks the fault domain of any single failure, and with lower file counts for each task we were able to allocate only the minimum required memory to the VM.

- Move all the files to AWS with AWS DataSync as originally specified.

- Run periodic incremental transfers to catch any changes or additions to the on-premises file system by using AWS DataSync’s ability to scan and validate the data migrated.

- Run migration tasks several times before the final sync to resolve issues during the migration and properly set exception lists as some files may not be needed.

- Run a final data transfer to cover all file changes.
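The steps above can be sketched as one task definition per folder. This is a minimal illustration under stated assumptions, not the exact configuration from the project: it assumes a single source and a single destination location already exist, and it scopes each task with an include filter in the shape accepted by the boto3 `create_task` call. The folder names, ARNs, and the `build_task_requests` helper are all hypothetical.

```python
def build_task_requests(folders, source_location_arn, destination_location_arn, excludes=()):
    """Return one create_task request per top-level folder."""
    requests = []
    for folder in folders:
        path = "/" + folder.strip("/")
        request = {
            "SourceLocationArn": source_location_arn,
            "DestinationLocationArn": destination_location_arn,
            "Name": "migrate" + path.replace("/", "-"),
            # Limit this task to a single folder so it can fail and restart on its own
            "Includes": [{"FilterType": "SIMPLE_PATTERN", "Value": path}],
        }
        if excludes:
            # DataSync expects multiple patterns joined with a pipe character
            request["Excludes"] = [
                {"FilterType": "SIMPLE_PATTERN", "Value": "|".join(excludes)}
            ]
        requests.append(request)
    return requests


# Hypothetical folder layout and placeholder ARNs
for request in build_task_requests(
    ["projects", "home", "archive/2019"],
    "arn:aws:datasync:us-east-1:111122223333:location/loc-source-placeholder",
    "arn:aws:datasync:us-east-1:111122223333:location/loc-dest-placeholder",
    excludes=["*.tmp", "/projects/cache"],
):
    print(request["Name"], request["Includes"][0]["Value"])
```

Each dictionary could then be passed to `boto3.client("datasync").create_task(**request)`. Separate source locations per mountpoint would work just as well; filters are used here only because they keep the sketch short.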

The team also determined that because having the “delete deleted files” option on increases task run time, it would only be used when needed. In addition, the copy periods for the migration tasks would be reduced when approaching the cutover so the final copy of all the files would fit into the migration window.
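In API terms, the “delete deleted files” choice corresponds to the `PreserveDeletedFiles` task option. The sketch below builds two option sets, one for routine incremental runs and one for the final sync. The option names and values follow the DataSync `Options` structure as I understand it, while the helper name, the specific verification choices, and the bandwidth cap are assumptions made for illustration.

```python
from typing import Optional


def build_task_options(remove_deleted: bool = False, max_mbps: Optional[float] = None) -> dict:
    """Return an Options dict for a DataSync task or task execution."""
    options = {
        # Copy only data that differs between source and destination
        "TransferMode": "CHANGED",
        # Removing files deleted at the source lengthens the run, so it is off by default
        "PreserveDeletedFiles": "REMOVE" if remove_deleted else "PRESERVE",
        # Verify just the files moved in this run
        "VerifyMode": "ONLY_FILES_TRANSFERRED",
        "LogLevel": "TRANSFER",
    }
    if max_mbps is not None:
        # DataSync takes the limit in bytes per second
        options["BytesPerSecond"] = int(max_mbps * 1_000_000 / 8)
    return options


routine_sync = build_task_options(max_mbps=40)   # leave headroom on the shared link (assumed value)
final_sync = build_task_options(remove_deleted=True)
final_sync["VerifyMode"] = "POINT_IN_TIME_CONSISTENT"  # full source/destination check at cutover

print(routine_sync)
print(final_sync)
```

Either dictionary can be supplied as `Options` when creating a task or as `OverrideOptions` when starting a single execution, which makes it easy to switch deletion on only for the runs that need it.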

Figure 1: Solution Architecture Diagram

The results

The revised approach, supported by frequent communication with the client, proved successful. Daily stand-ups were held during the last two months of the project to resolve any new issues that arose.

Among the lessons learned during this migration project was that dividing the files into smaller parts meant ClearScale had to manage multiple AWS DataSync tasks instead of one large set of files, which included troubleshooting, maintaining the schedule, and controlling runs for each task. Additional overhead time was also required to start each task, prepare the file store, and then validate the results, a critical factor to keep in mind when weighing the migration window against the number of copy tasks.
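The trade-off between task count and migration window can be made concrete with simple arithmetic. All of the numbers below are hypothetical and chosen only to show the shape of the calculation; the sketch also assumes tasks run one after another and that the link speed is the only limit on transfer time.

```python
def final_sync_hours(num_tasks: int, overhead_minutes_per_task: float,
                     changed_gb: float, link_mbps: float) -> float:
    """Estimate the final sync duration: fixed per-task overhead plus transfer time."""
    overhead_hours = num_tasks * overhead_minutes_per_task / 60
    transfer_hours = changed_gb * 8e9 / (link_mbps * 1e6) / 3600  # GB -> bits -> hours
    return overhead_hours + transfer_hours


# Same changed data, different levels of chunking (illustrative values)
for tasks in (10, 40):
    hours = final_sync_hours(tasks, overhead_minutes_per_task=10, changed_gb=50, link_mbps=50)
    print(f"{tasks} tasks: about {hours:.1f} hours")
```

With these assumed inputs, per-task overhead quickly outweighs the transfer itself as the task count grows, so the chunk size that is convenient for restarts is not automatically the one that fits the cutover window.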

AWS DataSync proved to be the powerful migration service that ClearScale needed in order to move huge amounts of frequently changing data. The fully managed AWS service made the execution of our client’s migration plan much easier and more efficient than if we had used our own custom scripts or a competing migration service.

Conclusion

In this blog post, I described the challenges that our customer faced when attempting to migrate 30 TB of highly sensitive, encrypted data from an on-premises environment to AWS. By following AWS’s migration best practices and using the powerful AWS DataSync service, ClearScale was able to complete the migration without disrupting our client’s services.

I’d encourage you to try AWS DataSync today, and if you have questions, please leave a comment below for the AWS team.

The content and opinions in this post are those of the third-party author and AWS is not responsible for the content or accuracy of this post.