AWS News Blog

Amazon Elastic Inference – GPU-Powered Deep Learning Inference Acceleration

One of the reasons for the recent progress of Artificial Intelligence and Deep Learning is the fantastic computing capabilities of Graphics Processing Units (GPUs). About ten years ago, researchers learned how to harness their massive hardware parallelism for Machine Learning and High Performance Computing: curious minds will enjoy the seminal paper (PDF) published in 2009 by Stanford University.

Today, GPUs help developers and data scientists train complex models on massive data sets for medical image analysis or autonomous driving. For instance, the Amazon EC2 P3 family lets you use up to eight NVIDIA V100 GPUs in the same instance, for up to 1 PetaFLOP of mixed-precision performance: can you believe that 10 years ago this was the performance of the fastest supercomputer ever built?

Of course, training a model is only half the story: what about inference, i.e. putting the model to work and predicting results for new data samples? Unfortunately, developers are often stumped when the time comes to pick an instance type and size. Indeed, for larger models, the inference latency of CPUs may not meet the needs of online applications, while the cost of a full-fledged GPU may not be justified. In addition, resources like RAM and CPU may be more important to the overall performance of your application than raw inference speed.

For example, let’s say your power-hungry application requires a c5.9xlarge instance ($1.53 per hour in us-east-1): a single inference call with an SSD model would take close to 400 milliseconds, which is certainly too slow for real-time interaction. Moving your application to a p2.xlarge instance (the least expensive general-purpose GPU instance at $0.90 per hour in us-east-1) would improve inference performance to 180 milliseconds: then again, this would impact application performance as p2.xlarge has fewer vCPUs and less RAM.

Well, no more compromising. Today, I’m very happy to announce Amazon Elastic Inference, a new service that lets you attach just the right amount of GPU-powered inference acceleration to any Amazon EC2 instance. This is also available for Amazon SageMaker notebook instances and endpoints, bringing acceleration to built-in algorithms and to deep learning environments.

Pick the best CPU instance type for your application, attach the right amount of GPU acceleration and get the best of both worlds! Of course, you can use EC2 Auto Scaling to add and remove accelerated instances whenever needed.

Introducing Amazon Elastic Inference

Amazon Elastic Inference supports the popular machine learning frameworks TensorFlow, Apache MXNet and ONNX (applied via MXNet). Changes to your existing code are minimal, but you will need to use AWS-optimized builds which automatically detect accelerators attached to instances, ensure that only authorized access is allowed, and distribute computation across the local CPU resource and the attached accelerator. These builds are available in the AWS Deep Learning AMIs, on Amazon S3 so you can build them into your own image or container, and provided automatically when you use Amazon SageMaker.

Amazon Elastic Inference is available in three sizes, making it efficient for a wide range of inference models including computer vision, natural language processing, and speech recognition.

- eia1.medium: 8 TeraFLOPs of mixed-precision performance.

- eia1.large: 16 TeraFLOPs of mixed-precision performance.

- eia1.xlarge: 32 TeraFLOPs of mixed-precision performance.

This lets you select the best price/performance ratio for your application. For instance, a c5.large instance configured with eia1.medium acceleration will cost you $0.22 an hour (us-east-1). This combination is only 10-15% slower than a p2.xlarge instance, which hosts a dedicated NVIDIA K80 GPU and costs $0.90 an hour (us-east-1). Bottom line: you get a 75% cost reduction for comparable GPU performance, while picking the exact instance type that fits your application.
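The 75% figure follows directly from the two hourly prices quoted above. Here is a minimal sketch of the arithmetic; the function and constant names are mine, chosen for illustration.

```python
# Hourly on-demand prices (us-east-1) as quoted in this post.
ACCELERATED_C5_LARGE = 0.22  # c5.large + eia1.medium, USD per hour
P2_XLARGE = 0.90             # p2.xlarge, USD per hour


def cost_reduction(baseline: float, alternative: float) -> float:
    """Return the fractional saving of `alternative` relative to `baseline`."""
    return (baseline - alternative) / baseline


saving = cost_reduction(P2_XLARGE, ACCELERATED_C5_LARGE)
print(f"Hourly saving: {saving:.1%}")  # prints "Hourly saving: 75.6%"
```

Plugging in your own instance and accelerator prices gives the saving for other combinations.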

Let’s dive in and look at Apache MXNet and TensorFlow examples on an Amazon EC2 instance.

Setting up Amazon Elastic Inference

Here are the high-level steps required to use the service with an Amazon EC2 instance.

- Create a security group for the instance allowing only incoming SSH traffic.

- Create an IAM role for the instance, allowing it to connect to the Amazon Elastic Inference service.

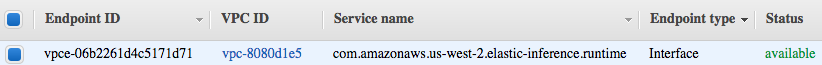

- Create a VPC endpoint for Amazon Elastic Inference in the VPC where the instance will run, attaching a security group allowing only incoming HTTPS traffic from the instance. Please note that you’ll only have to do this once per VPC and that charges for the endpoint are included in the cost of the accelerator.
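To make the last two steps more concrete, here is a rough sketch of what the IAM policy document and the VPC endpoint service name might look like. Both the `elastic-inference:Connect` action and the `com.amazonaws.<region>.elastic-inference.runtime` naming pattern are assumptions based on my reading of the AWS documentation, not something stated in this post, so please check them against the current Elastic Inference setup guide. The helper names are hypothetical.

```python
import json


def ei_connect_policy() -> dict:
    """Build a minimal IAM policy document allowing a connection to Elastic Inference.

    Assumption: the relevant action is named "elastic-inference:Connect".
    """
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Action": ["elastic-inference:Connect"],
                "Resource": "*",
            }
        ],
    }


def ei_endpoint_service_name(region: str) -> str:
    """Return the assumed VPC endpoint service name for the given region."""
    return f"com.amazonaws.{region}.elastic-inference.runtime"


print(json.dumps(ei_connect_policy(), indent=2))
print(ei_endpoint_service_name("us-east-1"))
```

The printed policy could be attached to the instance role, and the service name used when creating the VPC endpoint.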

Creating an accelerated instance

Now that the endpoint is available, let’s use the AWS CLI to fire up a c5.large instance with the AWS Deep Learning AMI.

    aws ec2 run-instances --image-id $AMI_ID \
        --key-name $KEYPAIR_NAME --security-group-ids $SG_ID \
        --subnet-id $SUBNET_ID --instance-type c5.large \
        --elastic-inference-accelerator Type=eia1.large \
        --iam-instance-profile Name="enter the name of your accelerator profile"

That’s it! You don’t need to learn any new APIs to use Amazon Elastic Inference: simply pass an extra parameter describing the accelerator type. After a few minutes, the instance is up and we can connect to it.
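If you prefer Python to the CLI, the same launch could be expressed with boto3. This is a sketch rather than a tested deployment script: it only builds the keyword arguments for `run_instances`, and every identifier passed in below (AMI, key pair, security group, subnet, profile name) is a hypothetical placeholder.

```python
def run_instances_kwargs(ami_id, key_name, security_group_id, subnet_id,
                         profile_name, accelerator_type="eia1.large"):
    """Build keyword arguments for the boto3 EC2 client's run_instances call."""
    return {
        "ImageId": ami_id,
        "InstanceType": "c5.large",
        "KeyName": key_name,
        "SecurityGroupIds": [security_group_id],
        "SubnetId": subnet_id,
        "IamInstanceProfile": {"Name": profile_name},
        # The accelerator is just one more launch parameter.
        "ElasticInferenceAccelerators": [{"Type": accelerator_type}],
        "MinCount": 1,
        "MaxCount": 1,
    }


# Hypothetical placeholder values: replace them with your own resources.
kwargs = run_instances_kwargs(
    ami_id="ami-EXAMPLE",
    key_name="my-keypair",
    security_group_id="sg-EXAMPLE",
    subnet_id="subnet-EXAMPLE",
    profile_name="my-accelerator-profile",
)
print(kwargs["ElasticInferenceAccelerators"])

# To actually launch (requires boto3 and AWS credentials):
#   import boto3
#   boto3.client("ec2").run_instances(**kwargs)
```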

Accelerating Apache MXNet

In this classic example, we will load a large pre-trained convolutional neural network on the Amazon Elastic Inference Accelerator (if you’re not familiar with pre-trained models, I covered the topic in a previous post). Specifically, we’ll use a ResNet-152 network trained on the ImageNet dataset.

Then, we’ll simply classify an image on the Amazon Elastic Inference Accelerator:

    import mxnet as mx
    import numpy as np
    from collections import namedtuple

    Batch = namedtuple('Batch', ['data'])

    # Download model (ResNet-152 trained on ImageNet) and ImageNet categories
    path = 'http://data.mxnet.io/models/imagenet/'
    [mx.test_utils.download(path+'resnet/152-layers/resnet-152-0000.params'),
     mx.test_utils.download(path+'resnet/152-layers/resnet-152-symbol.json'),
     mx.test_utils.download(path+'synset.txt')]

    # Set compute context to Elastic Inference Accelerator
    # ctx = mx.gpu(0) # This is how we'd predict on a GPU
    ctx = mx.eia() # This is how we predict on an EI accelerator

    # Load pre-trained model
    sym, arg_params, aux_params = mx.model.load_checkpoint('resnet-152', 0)
    mod = mx.mod.Module(symbol=sym, context=ctx, label_names=None)
    mod.bind(for_training=False, data_shapes=[('data', (1,3,224,224))],
             label_shapes=mod._label_shapes)
    mod.set_params(arg_params, aux_params, allow_missing=True)

    # Load ImageNet category labels
    with open('synset.txt', 'r') as f:
        labels = [l.rstrip() for l in f]

    # Download and load test image
    fname = mx.test_utils.download('https://github.com/dmlc/web-data/blob/master/mxnet/doc/tutorials/python/predict_image/dog.jpg?raw=true')
    img = mx.image.imread(fname)

    # Convert and reshape image to (batch=1, channels=3, height, width)
    img = mx.image.imresize(img, 224, 224) # Resize to training settings
    img = img.transpose((2, 0, 1)) # Channels first
    img = img.expand_dims(axis=0) # Batch size
    # img = img.as_in_context(ctx) # Not needed: data is loaded automatically to the EIA

    # Predict the image
    mod.forward(Batch([img]))
    prob = mod.get_outputs()[0].asnumpy()

    # Print the top 3 classes
    prob = np.squeeze(prob)
    a = np.argsort(prob)[::-1]
    for i in a[0:3]:
        print('probability=%f, class=%s' %(prob[i], labels[i]))

As you can see, there are only a couple of differences:

- I set the compute context to mx.eia(). No numbering is required, as only one Amazon Elastic Inference accelerator may be attached to an Amazon EC2 instance.

- I did not explicitly load the image on the Amazon Elastic Inference accelerator, as I would have done with a GPU. This is taken care of automatically.
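Since mx.eia() only exists in the AWS-optimized builds, a script that must also run on a stock MXNet install might pick its context defensively. The helper below is a hypothetical sketch of that idea, not part of MXNet. It takes the module as an argument so that it can be tried without MXNet installed, and it only checks that the build exposes eia(), not that an accelerator is actually attached.

```python
from types import SimpleNamespace


def pick_context(mx_module):
    """Return mx.eia() when the build exposes it, otherwise fall back to mx.cpu()."""
    if hasattr(mx_module, "eia"):
        return mx_module.eia()
    return mx_module.cpu()


# Stand-in for a stock MXNet build without EI support, so the sketch runs anywhere.
stock_build = SimpleNamespace(cpu=lambda: "cpu(0)")
print(pick_context(stock_build))  # prints "cpu(0)"

# With a real install, this would be:
#   import mxnet as mx
#   ctx = pick_context(mx)
```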

Running this example produces the following result.

    probability=0.979113, class=n02110958 pug, pug-dog
    probability=0.003781, class=n02108422 bull mastiff
    probability=0.003718, class=n02112706 Brabancon griffon

What about performance? On our c5.large instance, this prediction takes about 0.23 seconds on the CPU, and only 0.031 seconds on its eia1.large accelerator. For comparison, it takes about 0.015 seconds on a p3.2xlarge instance equipped with a full-fledged NVIDIA V100 GPU. If we use an eia1.medium accelerator instead, this prediction takes 0.046 seconds, which is nearly as fast as a p2.xlarge (0.042 seconds) but at a 75% discount!
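If you want to reproduce this kind of measurement yourself, a small timing helper is enough. The one below is a generic sketch (the function name and defaults are mine): it discards a few warm-up calls and reports the median latency. With MXNet, the timed callable should include the `.asnumpy()` call, since that is the point where the result is actually waited for.

```python
import statistics
import time
from typing import Callable


def time_prediction(predict: Callable[[], object], warmup: int = 3, runs: int = 20) -> float:
    """Return the median wall-clock latency of `predict`, in seconds."""
    for _ in range(warmup):
        predict()  # warm-up calls are not timed
    latencies = []
    for _ in range(runs):
        start = time.perf_counter()
        predict()
        latencies.append(time.perf_counter() - start)
    return statistics.median(latencies)


# Stand-in workload so the sketch runs anywhere.
latency = time_prediction(lambda: sum(range(10_000)))
print(f"median latency: {latency:.6f} s")

# With the MXNet example, the callable could be:
#   def predict():
#       mod.forward(Batch([img]))
#       return mod.get_outputs()[0].asnumpy()
```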

Accelerating TensorFlow

You can use TensorFlow Serving to serve accelerated predictions: it’s a model server which loads saved models and serves high-performance predictions through REST APIs and gRPC.
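To give an idea of the REST side, here is a sketch that builds a prediction request in the format used by TensorFlow Serving’s REST API, using only the standard library. Several things here are assumptions: the REST port (8501) is a placeholder and must match whatever REST port the server was started with, the input values are dummy data rather than a real image tensor, and this post does not confirm whether the accelerated build exposes the REST endpoint.

```python
import json
import urllib.request


def build_predict_request(host, port, model_name, instances):
    """Build (but do not send) a TensorFlow Serving REST predict request."""
    url = f"http://{host}:{port}/v1/models/{model_name}:predict"
    body = json.dumps({"instances": instances}).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


# Placeholder port and dummy input, for illustration only.
request = build_predict_request("localhost", 8501, "resnet", [[0.0, 0.0, 0.0]])
print(request.full_url)

# To send it against a running server:
#   with urllib.request.urlopen(request) as response:
#       predictions = json.load(response)["predictions"]
```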

Amazon Elastic Inference includes an accelerated version of TensorFlow Serving, which you would use like this.

    $ AmazonEI_TensorFlow_Serving_v1.11_v1 --model_name=resnet --model_base_path=$MODEL_PATH --port=9000
    $ python resnet_client.py --server=localhost:9000

Now Available

I hope this post was informative. Amazon Elastic Inference is available now in US East (N. Virginia and Ohio), US West (Oregon), EU (Ireland) and Asia Pacific (Seoul and Tokyo). You can start building applications with it today!

— Julien;