AWS for Industries

Transform Your Claims Adjudication Process through the Cognitive Insurance Platform

The Challenges with Existing Adjudication Processes

Insurers today want more efficient claims processing to improve the experience for both the adjudicator and the customer. Increased digitization and automation can help deliver this goal. In the Property and Casualty (P&C) industry, the frequency and severity of claims have been increasing, and while great strides have been made in digitizing the First Notice of Loss (FNOL) experience, the adjudication experience is still largely manual and heavily dependent on people. Each P&C claim generates hundreds of megabytes of unstructured content in the form of pictures, videos, and audio files that an adjudicator typically has to analyze personally, extending the time it takes to process a claim.

To address this issue, Accenture and AWS collaborated to build a machine learning-backed claims adjudication solution. Part of Accenture’s Cognitive Insurance Platform (CIP), the solution leverages artificial intelligence to examine and analyze the images, documents, and audio and video feeds submitted for a claim. The adjudicator then has access to all of the insights necessary to evaluate the claim at their fingertips, streamlining the adjudication process, reducing administrative costs, and improving the overall customer experience.

Machine Learning-Backed Adjudication in Auto Insurance

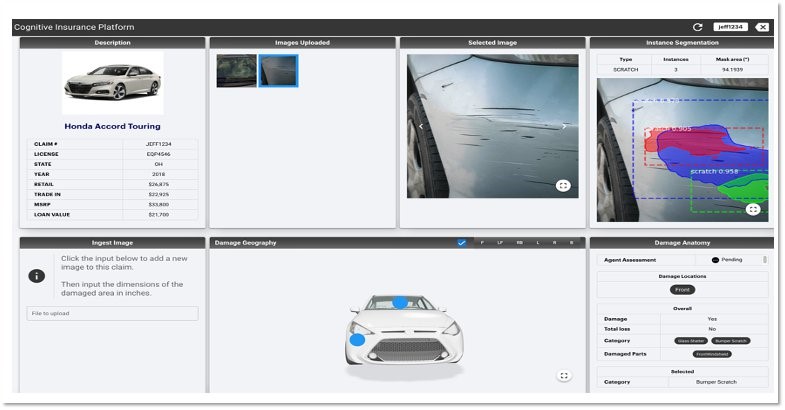

Within auto insurance, CIP is able to reduce the elapsed time for damage estimates from two to three weeks down to just a couple of days. In a centralized one-stop user interface, the adjudicator can see all of the images uploaded by the customer along with the claim record, enriched with both policy and vehicle information. The adjudicator can also see the type and extent of the damage, aggregated from all of the uploaded images. In the next step, the adjudicator is presented with a damage appraisal that is created by joining this damage anatomy data with external third-party datasets for repair costs. The adjudicator has all the data needed to evaluate and adjudicate the claim, and the customer is spared a manual, labor-intensive back-and-forth process with the insurer.

Figure 1 – Claim Adjuster User Interface with insights on vehicle damage
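The appraisal step described above is, at its core, a join between detected damage and a repair-cost lookup. The following is a minimal sketch of that idea, not CIP's actual implementation. The field names (`part`, `severity`), the `REPAIR_COSTS` table standing in for a third-party dataset, and every amount in it are hypothetical placeholders.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Damage:
    part: str      # hypothetical label, e.g. "front_bumper"
    severity: str  # hypothetical label, e.g. "minor" or "severe"


# Placeholder stand-in for a third-party repair-cost dataset.
# Keys and amounts are illustrative only, not real pricing.
REPAIR_COSTS = {
    ("front_bumper", "minor"): 300.0,
    ("front_bumper", "severe"): 900.0,
    ("hood", "minor"): 250.0,
}


def build_appraisal(damages, cost_table):
    """Join detected damage with repair costs; collect items with no cost entry."""
    line_items, unpriced = [], []
    # set() collapses the same damage reported from several images.
    for d in sorted(set(damages), key=lambda d: (d.part, d.severity)):
        cost = cost_table.get((d.part, d.severity))
        if cost is None:
            unpriced.append(d)
        else:
            line_items.append({"part": d.part, "severity": d.severity, "cost": cost})
    return {
        "line_items": line_items,
        "total": sum(item["cost"] for item in line_items),
        "needs_manual_review": unpriced,
    }
```

Keeping unpriced damage in a separate list, rather than dropping it, leaves the final decision with the adjudicator when the cost dataset has no matching entry.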

AWS Solution Architecture

The underlying orchestration of the AWS services to enable these insights for the adjudicator is as follows:

Figure 2 – Claims Adjudication Process Technical Architecture

- The customer uploads the image(s) or video through the mobile app.

- The mobile app calls Amazon API Gateway, which makes a pre-signed POST upload to Amazon Simple Storage Service (Amazon S3).

- API Gateway also makes an internal, immediate second API call to post a JSON object containing the user/policy/claim information to annotate the previously uploaded image/video data with user data.

- The user data upload triggers an Amazon S3 event, which pushes the event to Amazon Simple Queue Service (Amazon SQS).

- A Lambda function polling the Amazon SQS queue starts an AWS Step Functions orchestration instance and writes to a checkpoint table maintained in Amazon DynamoDB to flag that processing for this record has “started.” The same Lambda function also updates the claims data store with a flag indicating that the video/image data has been uploaded.

- The first step in the Step Functions orchestration is an Amazon ECS task on AWS Fargate that leverages Python-based image processing libraries to detect the content, validate it, check for malware, and preprocess it. Preprocessing examples include gray-scaling and resizing the image and, if it is a video file, dividing it into frames. The preprocessed data is then uploaded back to Amazon S3. Any failure in the orchestration workflow is reported through an Amazon Simple Notification Service (Amazon SNS) topic designated for errors.

- The second step in the orchestration is a series of Lambda functions that take the preprocessed content and call an ensemble of Amazon Rekognition Custom Labels models to detect damage and derive damage geography and category. To present these insights back to the adjudicator, the damage location is overlaid on a vehicle stencil using an instance segmentation model trained on Amazon SageMaker.

- The damage-related details obtained from the Amazon Rekognition Custom Labels models are integrated with third-party datasets to build a damage appraisal and repair estimates.

- Once the orchestrated flow is complete, the adjudicator is notified via an Amazon Augmented AI task to review and provide feedback on the damage insights and estimates collected from the previous steps. The adjudicator’s feedback is stored as part of the audit data and is used periodically to retrain and improve the underlying machine learning models.

- The adjudicator will then follow the next steps in the business process, either to inform the insurance customer or schedule the repair.
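The queue-polling Lambda step in the list above can be sketched roughly as follows. This is an illustration under stated assumptions, not the platform's actual code: the environment variable names `CHECKPOINT_TABLE` and `STATE_MACHINE_ARN`, the checkpoint item shape (`object_key`, `status`), and the execution input format are all hypothetical, and the claims data store update is omitted.

```python
import json
import os
from urllib.parse import unquote_plus


def extract_s3_objects(sqs_event):
    """Yield (bucket, key) for every S3 record wrapped in an SQS message body."""
    for message in sqs_event.get("Records", []):
        body = json.loads(message["body"])
        # S3 test notifications carry no "Records" key, so they are skipped.
        for record in body.get("Records", []):
            s3 = record["s3"]
            # Object keys arrive URL-encoded in S3 event notifications.
            yield s3["bucket"]["name"], unquote_plus(s3["object"]["key"])


def dispatch(sqs_event, sfn, table, state_machine_arn):
    """Start one workflow per uploaded object and checkpoint it as started."""
    started = []
    for bucket, key in extract_s3_objects(sqs_event):
        sfn.start_execution(
            stateMachineArn=state_machine_arn,
            input=json.dumps({"bucket": bucket, "key": key}),
        )
        # Hypothetical checkpoint item shape; a real table defines its own keys.
        table.put_item(Item={"object_key": key, "status": "started"})
        started.append(key)
    return started


def lambda_handler(event, context):
    # boto3 is imported lazily so the helpers above can be exercised offline.
    import boto3

    sfn = boto3.client("stepfunctions")
    table = boto3.resource("dynamodb").Table(os.environ["CHECKPOINT_TABLE"])
    return dispatch(event, sfn, table, os.environ["STATE_MACHINE_ARN"])
```

Passing the Step Functions client and the DynamoDB table into `dispatch` keeps the parsing and orchestration logic testable with simple stand-in objects.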

CIP’s Tiered Learning Approach

CIP makes extensive use of Amazon Rekognition Custom Labels. It takes a tiered approach, combining an ensemble of Amazon Rekognition Custom Labels models to achieve its fine-grained ML-based damage detection objective. From determining whether or not the vehicle is damaged through to determining which parts of the vehicle are damaged, CIP employs progressively complex models deployed on Amazon Rekognition Custom Labels:

Figure 3 – Ensemble of models for tiered learning using Amazon Rekognition Custom Labels
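To make the tiered idea concrete, here is a minimal two-tier sketch built on the Amazon Rekognition `detect_custom_labels` call. It is a simplification under assumptions: the actual number of tiers, the label names (`damaged` and the part names), the image reference shape (`bucket`, `key`), and the confidence threshold are all hypothetical and not taken from CIP.

```python
from typing import Callable, Dict

# A classifier takes an image reference and returns {label: confidence in 0-100}.
Classifier = Callable[[dict], Dict[str, float]]


def rekognition_classifier(client, project_version_arn, min_confidence=50.0) -> Classifier:
    """Wrap one Rekognition Custom Labels model version as a Classifier."""
    def classify(image):
        response = client.detect_custom_labels(
            ProjectVersionArn=project_version_arn,
            Image={"S3Object": {"Bucket": image["bucket"], "Name": image["key"]}},
            MinConfidence=min_confidence,
        )
        return {label["Name"]: label["Confidence"] for label in response["CustomLabels"]}
    return classify


def run_tiers(image, damage_model: Classifier, parts_model: Classifier, threshold=80.0):
    """Tier 1 decides whether damage is present; tier 2 runs only if it is."""
    tier1 = damage_model(image)
    # "damaged" is a hypothetical label name for the first-tier model.
    if tier1.get("damaged", 0.0) < threshold:
        return {"damaged": False, "parts": []}
    tier2 = parts_model(image)
    parts = sorted(name for name, conf in tier2.items() if conf >= threshold)
    return {"damaged": True, "parts": parts}
```

In practice `client` would be `boto3.client("rekognition")`, with one model version ARN per tier. Gating the later tiers on the first one avoids invoking the more complex models for images that show no damage.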

Conclusion

CIP is a platform for all things cognitive, focused on leveraging unstructured data to reduce the amount of human interaction and perform more straight-through processing. CIP is not restricted to the claims area and can also be deployed throughout the entire insurance value chain: underwriting, risk management, fraud detection, policy acquisition, servicing, marketing, product and pricing, workforce optimization, finance, and other corporate functions.

To learn more about the business justification behind this initiative, check out this blog post.

To get started with your own transformation, engage Accenture to modernize your insurance solution footprint with CIP.