AWS Big Data Blog

Category: Amazon EMR

Building and Deploying Custom Applications with Apache Bigtop and Amazon EMR

This post shows you how to build a custom application for Apache Bigtop-based Amazon EMR releases, 4.x and later. EMR nodes are based on the Amazon Linux AMI, so I will deploy using RPM packages and use Elasticsearch as the example application.

Use Spark 2.0, Hive 2.1 on Tez, and the latest from the Hadoop ecosystem on Amazon EMR release 5.0

Jonathan Fritz is a Senior Product Manager for Amazon EMR. We are excited to launch Amazon EMR release 5.0 today, giving customers the latest versions of 16 supported open-source applications in the big data ecosystem, including new major versions of Spark and Hive. Almost exactly a year ago, we shipped release 4.0, which brought significant […]

Installing and Running JobServer for Apache Spark on Amazon EMR

In this blog post, you will learn how to install JobServer on EMR using a bootstrap action (BA) derived from the JobServer GitHub repository. Then we’ll run JobServer using a sample dataset.

How SmartNews Built a Lambda Architecture on AWS to Analyze Customer Behavior and Recommend Content

This is a guest post by Takumi Sakamoto, a software engineer at SmartNews. SmartNews in their own words: “SmartNews is a machine learning-based news discovery app that delivers the very best stories on the Web for more than 18 million users worldwide.” Data processing is one of the key technologies for SmartNews. Every team’s workload […]

Generating Recommendations at Amazon Scale with Apache Spark and Amazon DSSTNE

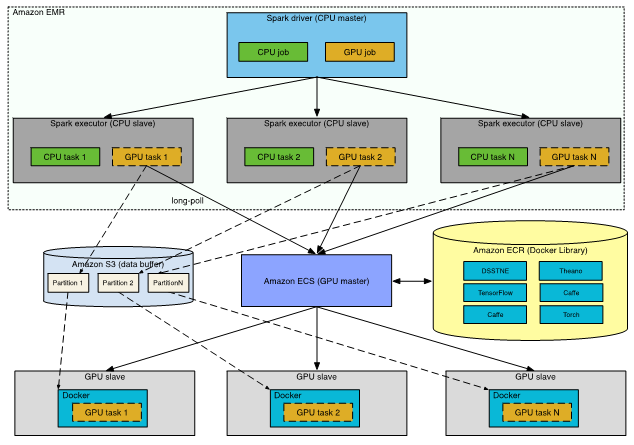

In this post, I discuss an alternate solution; namely, running separate CPU and GPU clusters, and driving the end-to-end modeling process from Apache Spark.

Use Sqoop to Transfer Data from Amazon EMR to Amazon RDS

In this post, I will show you how to transfer data using Apache Sqoop, which is a tool designed to transfer data between Hadoop and relational databases. Support for Apache Sqoop is available in Amazon EMR releases 4.4.0 and later.

Analyze Realtime Data from Amazon Kinesis Streams Using Zeppelin and Spark Streaming

This post shows you how to use Spark Streaming to process data coming from Amazon Kinesis streams, build graphs using Zeppelin, and then store the Zeppelin notebook in Amazon S3.

Apache Tez Now Available with Amazon EMR

Amazon EMR has added Apache Tez version 0.8.3 as a supported application in release 4.7.0. Tez is an extensible framework for building batch and interactive data processing applications on top of Hadoop YARN.

Use Apache Oozie Workflows to Automate Apache Spark Jobs (and more!) on Amazon EMR

Mike Grimes is an SDE with Amazon EMR. As a developer or data scientist, you rarely want to run a single serial job on an Apache Spark cluster. More often, to gain insight from your data you need to process it in multiple, possibly tiered steps, and then move the data into another format and […]

Supercharge SQL on Your Data in Apache HBase with Apache Phoenix

With today’s launch of Amazon EMR release 4.7, you can now create clusters with Apache Phoenix 4.7.0 for low-latency SQL and OLTP workloads. Phoenix uses Apache HBase as its backing store (HBase 1.2.1 is included on Amazon EMR release 4.7.0), using HBase scan operations and coprocessors for fast performance. Additionally, you can map Phoenix tables […]