AWS Big Data Blog

Supercharge SQL on Your Data in Apache HBase with Apache Phoenix

With today’s launch of Amazon EMR release 4.7.0, you can now create clusters with Apache Phoenix 4.7.0 for low-latency SQL and OLTP workloads. Phoenix uses Apache HBase as its backing store (HBase 1.2.1 is included on Amazon EMR release 4.7.0) and relies on HBase scan operations and coprocessors for fast performance. Additionally, you can map Phoenix tables and views to existing HBase tables, giving you SQL access over data already stored in HBase.

Let’s run through a quick demo to explore how to connect to Phoenix using JDBC, create a view over an existing HBase table, and create a secondary index for increased read performance.

Create an Amazon EMR cluster and an HBase table

First, using the Amazon EMR console or AWS CLI, launch a new Amazon EMR cluster using release 4.7 and choose Phoenix as an application. Here’s an example AWS CLI command:

aws emr create-cluster --name PhoenixDemo --release-label emr-4.7.0 --instance-type m3.xlarge --instance-count 3 --applications Name=Phoenix --ec2-attributes KeyName=MyKeyName --use-default-roles

Selecting the Phoenix application also includes HBase and Hadoop (YARN, HDFS, and MapReduce), giving you all the components needed for a fully operational cluster.
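
Before moving on, it can help to wait until the cluster is running and look up the master node's address. The following is a minimal sketch, not part of the original walkthrough: wait_for_cluster, master_dns, and the CLUSTER_ID variable are hypothetical names, and CLUSTER_ID is assumed to hold the ID printed by the create-cluster command.

```shell
# Sketch: wait for the cluster to start, then print the master node's DNS name.
# CLUSTER_ID is a placeholder; set it to the ID returned by `aws emr create-cluster`.

wait_for_cluster() {
  # Blocks until the cluster reports a running state.
  aws emr wait cluster-running --cluster-id "$1"
}

master_dns() {
  # Prints the public DNS name of the master node.
  aws emr describe-cluster --cluster-id "$1" \
    --query 'Cluster.MasterPublicDnsName' --output text
}

# Only call the AWS CLI when a cluster ID has been supplied.
if [ -n "${CLUSTER_ID:-}" ]; then
  wait_for_cluster "$CLUSTER_ID"
  echo "Master node: $(master_dns "$CLUSTER_ID")"
fi
```

The DNS name printed at the end is the host you connect to over SSH in the following steps.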

Next, create a table in HBase to use with Phoenix. You will copy an HBase snapshot from Amazon S3 and restore it on your cluster. Go to this HBase post on the AWS Big Data Blog and follow the instructions under the “HBase shell query walkthrough” section to restore a table named customer (3,448,682 rows).

Finally, run a get request example from that blog to verify that your table has been restored correctly.

Connect to Phoenix using JDBC and create a table

Once your HBase table is ready, it’s time to map a table in Phoenix to your data in HBase. You use a JDBC connection to access Phoenix, and there are two drivers included on your cluster under /usr/lib/phoenix/bin. First, the Phoenix client connects directly to HBase processes to execute queries, which requires several ports to be open in your Amazon EC2 Security Group (for ZooKeeper, HBase Master, and RegionServers on your cluster) if your client is off-cluster.

Second, the Phoenix thin client connects to the Phoenix Query Server, which runs on port 8765 on the master node of your EMR cluster. This allows you to use a local client without adjusting your Amazon EC2 Security Groups by creating an SSH tunnel to the master node and using port forwarding for port 8765. The Phoenix Query Server is still a new component, and not all SQL clients can support the Phoenix thin client.
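
The port forwarding described above could look like the following sketch. The open_pqs_tunnel function name and the KEY_FILE variable are hypothetical, the key file path assumes the MyKeyName key pair from the cluster command, and the login assumes the hadoop user that EMR nodes use for SSH.

```shell
# Sketch: forward local port 8765 to the Phoenix Query Server on the master node.
# KEY_FILE is a placeholder for the private key of your EC2 key pair.
KEY_FILE="${KEY_FILE:-$HOME/MyKeyName.pem}"

open_pqs_tunnel() {
  # $1 is the master node's public DNS name.
  # -N: do not run a remote command; -L: forward local 8765 to port 8765 on the master node.
  ssh -i "$KEY_FILE" -N -L 8765:localhost:8765 "hadoop@$1"
}

# Usage (blocks for as long as the tunnel stays open):
#   open_pqs_tunnel "$MASTER_DNS"
```

While the tunnel is open, a thin client on your local machine can reach the Phoenix Query Server at http://localhost:8765.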

In this example, you will use the SQLLine client included with Phoenix on the master node to connect to the Phoenix Query Server. Return to the terminal on the master node of your cluster. If you closed your SSH tunnel after creating your HBase table, create another SSH tunnel. Connect to Phoenix using this command:

/usr/lib/phoenix/bin/sqlline-thin.py http://localhost:8765

Once the SQLLine client has connected, let’s create a SQL view over the customer table in HBase. We will create a view instead of a table, because dropping a view does not delete the underlying data in HBase (dropping a Phoenix table does delete the underlying HBase data by default, although this behavior is configurable). To map a pre-existing table in HBase, you use a "column_family"."column_qualifier" format for each column you want to include in your Phoenix view (note that you must use double quotation marks around column and table names that are lowercase, because Phoenix converts unquoted identifiers to uppercase). Also, identify the column that maps to the HBase row key with PRIMARY KEY, and give the view the same name as the underlying HBase table. Now, create a view over the customer table:

CREATE VIEW "customer" ( pk VARCHAR PRIMARY KEY, "address"."state" VARCHAR, "address"."street" VARCHAR, "address"."city" VARCHAR, "address"."zip" VARCHAR, "cc"."number" VARCHAR, "cc"."expire" VARCHAR, "cc"."type" VARCHAR, "contact"."phone" VARCHAR);

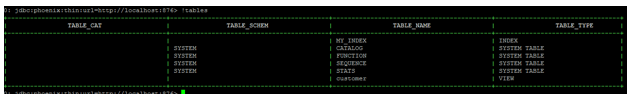

Use SQLLine’s !tables command to list available Phoenix tables and confirm your newly created view is in the list. Make sure your terminal window is wide enough to show the output before starting the SQLLine client. Otherwise, the complete output will not appear.
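
As a further sanity check, you can count the rows visible through the view without opening an interactive session. This is a sketch under two assumptions: that sqlline-thin.py accepts a SQL file as a second argument, and that you run it on the master node. The SQL_FILE path is a hypothetical choice.

```shell
# Sketch: run a row-count query against the view non-interactively.
SQL_FILE="${SQL_FILE:-/tmp/check_customer_view.sql}"   # hypothetical scratch file
SQLLINE=/usr/lib/phoenix/bin/sqlline-thin.py

# Write the query to a file; the lowercase view name needs double quotes.
cat > "$SQL_FILE" <<'EOF'
SELECT COUNT(*) AS num_rows FROM "customer";
EOF

# Run only where the Phoenix thin client is installed (for example, the EMR master node).
if [ -x "$SQLLINE" ]; then
  "$SQLLINE" http://localhost:8765 "$SQL_FILE"
fi
```

If the snapshot was restored completely, the count should line up with the 3,448,682 rows mentioned for the customer table.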

Speeding up queries with secondary indexes

First, run a SQL query counting the number of people with each credit card type in California:

SELECT "customer"."type" AS credit_card_type, count(*) AS num_customers FROM "customer" WHERE "customer"."state" = 'CA' GROUP BY "customer"."type";

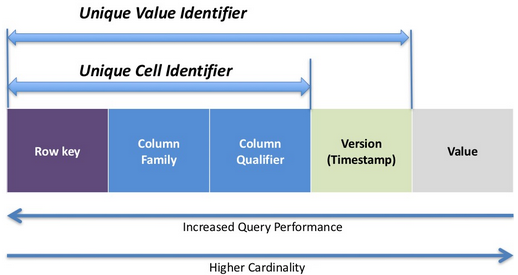

This query works, but because the WHERE clause does not filter on the primary key (the HBase row key), Phoenix must scan all HBase rows to ensure that all rows with the state ‘CA’ are included. If we anticipate our read patterns will filter by state, we can create a secondary index on that column to give Phoenix the ability to scan along that axis. For a more in-depth view of the secondary indexing feature set, see the Apache Phoenix documentation. Now create a covered secondary index on state and include the HBase primary key (the customer ID), city, expire date, and type:

CREATE INDEX my_index ON "customer" ("customer"."state") INCLUDE("PK", "customer"."city", "customer"."expire", "customer"."type");

Phoenix will use a Hadoop MapReduce job to create this index and load it in parallel into HBase as another table (this takes around 2 minutes). Now, rerun the SQL query from earlier and compare the performance. It should be at least 10x faster!
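
To see whether Phoenix actually chooses the index, you can ask for the query plan with Phoenix's EXPLAIN statement. The sketch below reuses the query from earlier and makes the same assumptions as before: sqlline-thin.py is assumed to accept a SQL file as a second argument, and the SQL_FILE path is hypothetical.

```shell
# Sketch: print the query plan for the credit card query.
SQL_FILE="${SQL_FILE:-/tmp/explain_customer_query.sql}"   # hypothetical scratch file
SQLLINE=/usr/lib/phoenix/bin/sqlline-thin.py

# Same query as before, prefixed with EXPLAIN so Phoenix returns the plan instead of rows.
cat > "$SQL_FILE" <<'EOF'
EXPLAIN SELECT "customer"."type" AS credit_card_type, count(*) AS num_customers FROM "customer" WHERE "customer"."state" = 'CA' GROUP BY "customer"."type";
EOF

# Run only where the Phoenix thin client is installed (for example, the EMR master node).
if [ -x "$SQLLINE" ]; then
  "$SQLLINE" http://localhost:8765 "$SQL_FILE"
fi
```

If the index is being used, the plan should reference MY_INDEX with a range scan on the state value rather than a full scan over the customer table.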

Conclusion

In this post, you learned how to connect to Phoenix using JDBC, create Phoenix views over data in HBase, create secondary indexes for faster performance, and query data. You can use Phoenix as a performant SQL interface over existing HBase tables or use Phoenix directly to populate and manage tables using HBase behind the scenes as an underlying data store. To learn more about Phoenix, see the Amazon EMR documentation or the Apache documentation.

If you have any questions about using Phoenix on Amazon EMR or would like to share interesting use cases that leverage Phoenix, please leave a comment below.


Related

Combine NoSQL and Massively Parallel Analytics Using Apache HBase and Apache Hive on Amazon EMR
