The Internet of Things on AWS – Official Blog

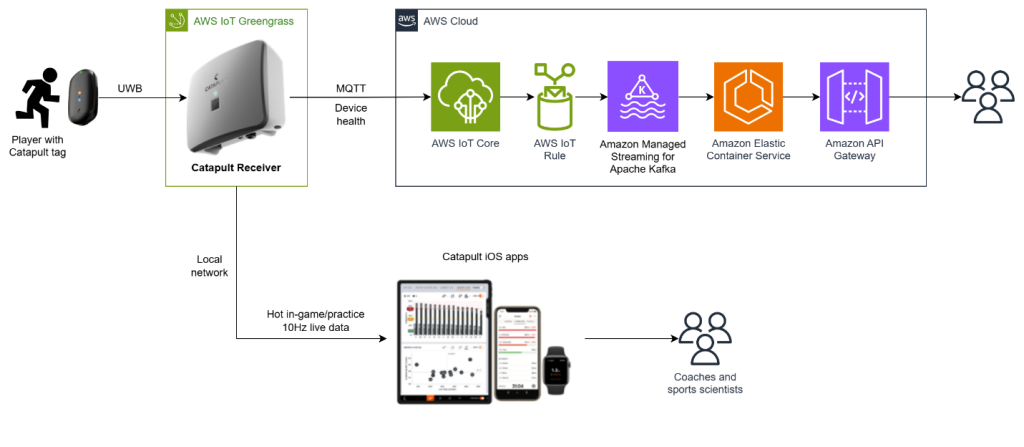

The data behind the win: How Catapult and AWS IoT are transforming pro sports

In professional sports, the difference between winning and losing often comes down to fine margins. Teams across the globe are turning to data-driven insights to optimize athlete performance, mitigate injuries, and gain competitive advantages. Catapult Sports is a sports technology company that empowers professional teams to make data-driven decisions. By using AWS IoT services, Catapult […]

Optimize long-term video storage costs with Amazon Kinesis Video Streams warm storage tier

Amazon Kinesis Video Streams provides powerful solutions for cloud-based video management for physical security and surveillance organizations. While organizations need continuous recording capabilities, traditional storage approaches often lead to increased costs. Kinesis Video Streams excels at real-time video management and short-term storage, serving enterprise deployments with extensive camera networks. Now, the innovative Kinesis Video […]

AWS IoT: A 10-year foundation for an intelligent, connected future

December 2025 marks ten years since AWS for Internet of Things (AWS IoT) set out to connect the physical world to the digital world. Today, hundreds of millions of devices connect to AWS IoT services daily, enabling organizations to extract real-time insights, automate processes, and unlock entirely new business models across virtually every industry. This […]

AWS IoT Services Alignment with the European Union Cyber Resilience Act (EU CRA)

In today’s digital world, Internet of Things (IoT) security and compliance requirements continue to evolve. The European Union’s Cyber Resilience Act (CRA) is reshaping how IoT manufacturers, developers, and service providers approach their work. Let’s explore what this means for AWS IoT customers and manufacturers using connected devices. Understanding the CRA’s impact: The CRA was enacted on […]

Streamlining Amazon Sidewalk Device Fleet Management with AWS IoT Core’s New Bulk Operations

Amazon Sidewalk is a shared, community-sourced network that leverages existing Amazon Echo and Ring devices as gateways to provide secure, low-power connectivity for IoT devices—enabling applications ranging from asset tracking and smart home security to remote diagnostics for appliances and tools. AWS IoT Core for Amazon Sidewalk device management is evolving to meet the needs […]

When data is all you need: An overview of IoT communication with the cloud

Technical and marketing teams working on Internet of Things (IoT) programs sooner or later manage a project that requires data flow between a fleet of devices and the cloud. This data is critical because marketing wants to provide more features to users, business teams require data-driven decisions, and technical teams work to optimize […]

Deploying Small Language Models at Scale with AWS IoT Greengrass and Strands Agents

Modern manufacturers face an increasingly complex challenge: implementing intelligent decision-making systems that respond to real-time operational data while maintaining security and performance standards. The volume of sensor data and operational complexity demands AI-powered solutions that process information locally for immediate responses while leveraging cloud resources for complex tasks. The industry is at a critical juncture […]

High availability patterns for AWS IoT Greengrass using Pacemaker

Edge computing downtime in industrial IoT environments can be both inconvenient and costly. Systems at the edge require continuous operation to maintain business continuity. While AWS IoT Greengrass delivers powerful edge computing capabilities, achieving true enterprise-grade high availability requires additional orchestration. This post shows how to use Pacemaker, a cluster resource manager, to build resilient […]

Harnessing the power of AWS IoT rules with substitution templates

AWS IoT Core is a managed service that helps you securely connect billions of Internet of Things (IoT) devices to the AWS Cloud. The AWS IoT rules engine is a component of AWS IoT Core and provides SQL-like capabilities to filter, transform, and decode your IoT device data. You can use AWS IoT rules […]

Securing Vehicle Identification Number (VIN) with Reference ID in connected vehicle platforms with AWS IoT

With over 470 million connected cars expected by the end of 2025, protecting sensitive vehicle data, particularly Vehicle Identification Numbers (VINs), has become crucial for automakers. VINs serve as unique identifiers in automotive processes from manufacturing to maintenance, making them attractive targets for cybercriminals. This post explores how automakers can help secure VINs in connected vehicle […]