AWS Public Sector Blog

Federated learning for biobank data at the CMU-NVIDIA Hackathon

Biobanks around the world are sitting on rich genomic, clinical, and imaging datasets, but strict privacy, governance, and regulatory requirements make it difficult to centralize this data for large-scale machine learning (ML) purposes. Federated learning (FL) offers a way forward by keeping sensitive data at each institution while sending only model updates to a coordinating server, preserving data sovereignty and reducing privacy risks.

During the January 2026 Carnegie Mellon University (CMU)–NVIDIA Federated Learning Hackathon for Biomedical Applications, ten teams built end‑to‑end prototypes on NVIDIA FLARE (NVIDIA Federated Learning Application Runtime Environment), with data prepared for modeling on Amazon Web Services (AWS), to test how FL could support real‑world biobank collaboration at scale. Several teams used datasets from the Registry of Open Data on AWS, which has a collection of over 225 life sciences datasets across genomics, imaging, and proteins. In this post, we detail the projects the teams worked on, with links to the GitHub repositories from each team, so anyone can access and build on these projects.

The following photograph shows CMU–NVIDIA Hackathon organizers Dr. Melanie Gainey, STEM Librarian and Open Science Program Director at CMU, and Dr. Ben Busby, Global Omics Alliances Manager at NVIDIA, introducing the event to a packed room of participants.

Figure 1: CMU–NVIDIA Hackathon. Photographer: Rebecca Devereaux, CMU Multimedia Specialist.

Laying the groundwork with FedGen

Before you can test federated AI workflows, you need realistic data that respects biobank constraints. FedGen tackled this by generating synthetic genomic datasets that mimic real-world properties such as linkage disequilibrium, site-specific variability, covariates, and site-level imbalance across multiple virtual biobanks.

The team built a FLARE-based client–server framework where each client trained a logistic regression model locally for a binary trait, with only weight updates shared across the federation and optional client-side privacy filters applied. This synthetic infrastructure now serves as a reusable testbed for future federated genome-wide association studies (GWAS) on AWS and on-premises biobank environments.
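The local-training-plus-aggregation loop described above can be sketched in plain Python. This is a minimal illustration of weighted federated averaging for logistic regression, not FedGen's code and not the NVIDIA FLARE API. The function names (`local_update`, `federated_average`, `make_site`), the learning rate, and the toy genotype simulation are assumptions made for the example.

```python
import math
import random

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def local_update(weights, features, labels, lr=0.1, epochs=5):
    """One client's local training: a few epochs of batch gradient descent."""
    w = list(weights)
    n = len(features)
    for _ in range(epochs):
        grad = [0.0] * len(w)
        for x, y in zip(features, labels):
            err = sigmoid(sum(wi * xi for wi, xi in zip(w, x))) - y
            for j, xj in enumerate(x):
                grad[j] += err * xj / n
        w = [wi - lr * gj for wi, gj in zip(w, grad)]
    return w

def federated_average(client_weights, client_sizes):
    """Server step: average client weights, weighted by local sample count."""
    total = sum(client_sizes)
    dim = len(client_weights[0])
    return [
        sum(w[j] * n for w, n in zip(client_weights, client_sizes)) / total
        for j in range(dim)
    ]

def make_site(n, seed):
    """Hypothetical virtual biobank: an intercept plus one toy genotype dosage."""
    rng = random.Random(seed)
    features, labels = [], []
    for _ in range(n):
        dosage = rng.choice([0.0, 1.0, 2.0])
        p_case = sigmoid(-1.0 + 1.0 * dosage)
        features.append([1.0, dosage])
        labels.append(1.0 if rng.random() < p_case else 0.0)
    return features, labels

# Three sites of unequal size, mimicking site-level imbalance.
sites = [make_site(200, 1), make_site(50, 2), make_site(120, 3)]
global_w = [0.0, 0.0]
for _ in range(20):
    # Only the weight vectors leave each site; the raw rows never do.
    updates = [local_update(global_w, X, y) for X, y in sites]
    global_w = federated_average(updates, [len(X) for X, _ in sites])
```

Weighting by sample count keeps a small site from pulling the global model as strongly as a large one. Uniform weighting is a possible alternative when sites should contribute equally.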

Harmonizing pathology with FedPathHarmony

Histopathology images are notoriously sensitive to site-specific staining protocols, scanner hardware, and local pipelines, all of which can cause models to learn institutional signatures instead of biology. The FedPathHarmony team explored harmonization of the CAMELYON dataset in a federated setting, using stain normalization and style transfer to align images across sites before or during FL training.

By integrating these harmonization steps into NVIDIA FLARE workflows, the team showed that it’s possible to reduce domain shift while keeping raw pathology images at each biobank. Their prototype highlights how algorithmic harmonization and federated orchestration can work together to improve generalization of diagnostic models across hospitals.
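One simple way to picture site-local harmonization is statistics matching: each site rescales its own pixel intensities so that their mean and standard deviation match targets agreed across the federation. The sketch below follows the spirit of Reinhard-style color transfer, simplified to a single channel of raw intensity values. It is an assumption for illustration only and may differ from the stain normalization and style transfer methods the team actually used. The function names are hypothetical.

```python
import math

def channel_stats(values):
    """Mean and population standard deviation of one color channel."""
    mean = sum(values) / len(values)
    var = sum((v - mean) ** 2 for v in values) / len(values)
    return mean, math.sqrt(var)

def match_channel(values, target_mean, target_std):
    """Rescale a channel so its mean and std match the shared targets."""
    mean, std = channel_stats(values)
    if std == 0.0:
        # A flat channel carries no contrast to rescale.
        return [target_mean for _ in values]
    return [(v - mean) / std * target_std + target_mean for v in values]

# Each site applies the same targets locally, so images never leave the site.
normalized = match_channel([0.0, 2.0], target_mean=10.0, target_std=2.0)
```

In this scheme the only values that need to be shared between sites are the target mean and standard deviation for each channel.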

Auditing federated readiness at scale with FedViz

Before researchers can use data from different biobanks, they first need to understand what data each biobank holds. That means comparing available features, sample sizes, and data quality across biobanks before deciding which datasets to use. FedViz tackles this challenge by providing a visualization command center built on harmonized metadata from large-scale consortia, allowing researchers to audit gaps in harmonization and quantify federated readiness across cohorts.

Pangenome graphs with OmniGenome

Pangenome graphs need data from multiple ancestries and sequencing centers, but these datasets are often siloed across biobanks. OmniGenome focused on building a federated framework for pangenome construction and analysis. As a proof of concept, the team used data from the Human Pangenome Reference Consortium (HPRC) together with federated genomic hashing, so that raw sequences are never exposed. They validated this approach on data from 1000 Genomes, focusing on variants associated with Alzheimer’s disease risk. This work suggests that comparable pangenome representations can be built in a federated setting, which can then be shared across biobanks without exposing raw sequence data.

Untangling ancestry with Med_SNP_Deconvolution

Polygenic risk scores and ancestry inference tools built on existing genomic studies have shown limited generalizability across diverse populations, leaving a critical gap in equitable genomic medicine. Current approaches to ancestry inference require access to individual-level genotype data, making cross-institutional collaboration difficult under increasingly strict data privacy regulations.

Med_SNP_Deconvolution tackles this issue with an end-to-end computational framework that transforms phased variant data into recombination-defined haploblock cluster identifiers. These discrete categorical features preserve population structure while obscuring individual-level variation. The framework integrates GPU-accelerated machine learning with NVIDIA FLARE federated infrastructure, enabling multiple research sites to collaboratively train ancestry classification models without centralizing raw genotype data.
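To make the idea of a haploblock identifier concrete, the following sketch replaces the alleles inside each block with a single categorical ID. It is a deliberate simplification: it treats every distinct allele pattern as its own cluster, whereas the project's actual clustering method is not described here. The function name, the string encoding of haplotypes, and the block boundaries are all hypothetical.

```python
def haploblock_ids(haplotypes, block_bounds):
    """Encode each phased haplotype as one categorical ID per block.

    haplotypes: equal-length strings of '0'/'1' alleles.
    block_bounds: (start, end) half-open index ranges, one per haploblock.
    """
    encoded = [[] for _ in haplotypes]
    for start, end in block_bounds:
        seen = {}  # allele pattern -> ID, assigned in first-seen order
        for row, hap in zip(encoded, haplotypes):
            pattern = hap[start:end]
            row.append(seen.setdefault(pattern, len(seen)))
    return encoded

# Three toy haplotypes split into two blocks of two variants each.
features = haploblock_ids(["0011", "0010", "1111"], [(0, 2), (2, 4)])
```

Because IDs here are assigned in first-seen order, they are only meaningful within one site. A federated deployment would need a shared codebook so that the same ID refers to the same pattern everywhere.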

Federated rare disease subtyping with RAIDers

Rare diseases such as amyotrophic lateral sclerosis (ALS) present a unique challenge for genomic research: patient cohorts are too small and too scattered across institutions to yield statistically meaningful results, and strict privacy regulations prevent researchers from pooling raw data across biobanks.

RAIDers (Rare Disease and AI) tackles this problem by building a federated computational framework that integrates 480 pathogenic variants from ClinVar and population allele frequency data from gnomAD to generate a synthetic cohort of more than 8,000 simulated patients across five institutions. The team built a tool that validates a federated subtyping pipeline on this synthetic data. RAIDers demonstrates that coherent ALS molecular subtypes can be discovered without centralizing sensitive genomic data, establishing a scalable architecture for rare disease subtyping that is ready for integration with real-world controlled-access biobank datasets.
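As a rough illustration of how allele frequencies can seed a synthetic cohort, the sketch below draws each patient's genotype dosage (0, 1, or 2 copies of the alternate allele) from the variant's population frequency. It assumes Hardy-Weinberg equilibrium and independent variants, which is a simplification and not necessarily how RAIDers generated its cohort. The function name and the example frequencies are made up.

```python
import random

def simulate_genotypes(allele_freqs, n_patients, seed=0):
    """Sample per-variant dosages for each patient as two independent allele draws."""
    rng = random.Random(seed)
    cohort = []
    for _ in range(n_patients):
        patient = [
            int(rng.random() < p) + int(rng.random() < p)
            for p in allele_freqs
        ]
        cohort.append(patient)
    return cohort

# Hypothetical frequencies for three rare variants at one simulated institution.
site_cohort = simulate_genotypes([0.001, 0.01, 0.0005], n_patients=1600, seed=42)
```

Running the function once per institution with a different seed gives each site its own synthetic cohort without any real patient data being involved.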

Multi-omic cancer subtyping with OncoLearn

Cancer research increasingly depends on integrating genomic, transcriptomic, and clinical data to define meaningful subtypes. Current multi-omic models are constrained by patient privacy regulations, the logistical barriers of aggregating sensitive genetic datasets, and the poor generalizability of single-site cohorts across diverse global populations.

OncoLearn built a federated multi‑omic cancer subtyping pipeline for classifying breast cancer (BRCA) subtypes using The Cancer Genome Atlas (TCGA) dataset. The framework evaluates the efficacy of traditional supervised learning against modern transfer learning, validating this approach across five subtypes and demonstrating that federated transfer learning achieves high accuracy despite local computational constraints. The team’s open source codebase demonstrates how federated multi‑omic models could help cancer centers collaborate on precision oncology while maintaining their institutional data boundaries.

The following photograph shows CMU–NVIDIA Hackathon researchers working together on multi-omic cancer subtyping with OncoLearn.

Figure 2: Researchers working with OncoLearn. Photographer: Rebecca Devereaux, CMU Multimedia Specialist.

Aligning risk prediction with PRSAggregator

Polygenic risk scores (PRS) aggregate effects across thousands of variants to stratify disease risk, but most PRS are derived from European-ancestry dominant cohorts and thus transfer poorly to other populations due to differences in linkage disequilibrium and allele frequencies. PRSAggregator set out to harmonize PRS computation in a federated framework by enabling sites to collaboratively train or calibrate risk models and then aggregate scores in a privacy-preserving fashion.

The prototype explored federated strategies for aligning model parameters and evaluation metrics across sites, making it easier to interpret PRS distributions and thresholds globally. This work points toward a future in which federated pipelines help standardize genetic risk prediction across institutions and ancestries.
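The arithmetic behind a PRS, and one simple way to compare score distributions across sites, can be sketched as follows. A PRS is a weighted sum of allele dosages. Here each site shares only a count, a sum, and a sum of squares, from which a coordinator recovers the pooled mean and standard deviation. This summary-statistics approach is an assumption chosen for illustration, not PRSAggregator's documented method, and the function names are hypothetical.

```python
import math

def polygenic_score(dosages, effect_sizes):
    """PRS for one individual: sum of dosage times per-variant effect size."""
    if len(dosages) != len(effect_sizes):
        raise ValueError("dosages and effect_sizes must have the same length")
    return sum(d * b for d, b in zip(dosages, effect_sizes))

def site_summary(scores):
    """What a site shares: count, sum, and sum of squares of its local scores."""
    return (len(scores), sum(scores), sum(s * s for s in scores))

def pooled_mean_std(summaries):
    """Coordinator step: pooled mean and population std from site summaries."""
    n = sum(s[0] for s in summaries)
    total = sum(s[1] for s in summaries)
    total_sq = sum(s[2] for s in summaries)
    mean = total / n
    var = max(total_sq / n - mean * mean, 0.0)  # clamp tiny negative round-off
    return mean, math.sqrt(var)

site_a = site_summary([1.0, 2.0, 3.0])
site_b = site_summary([4.0, 5.0])
mean, std = pooled_mean_std([site_a, site_b])
```

With a pooled mean and standard deviation, every site can express its scores as z-scores on a common scale. Sharing aggregates reduces exposure of individual-level data, but it is not a formal privacy guarantee by itself.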

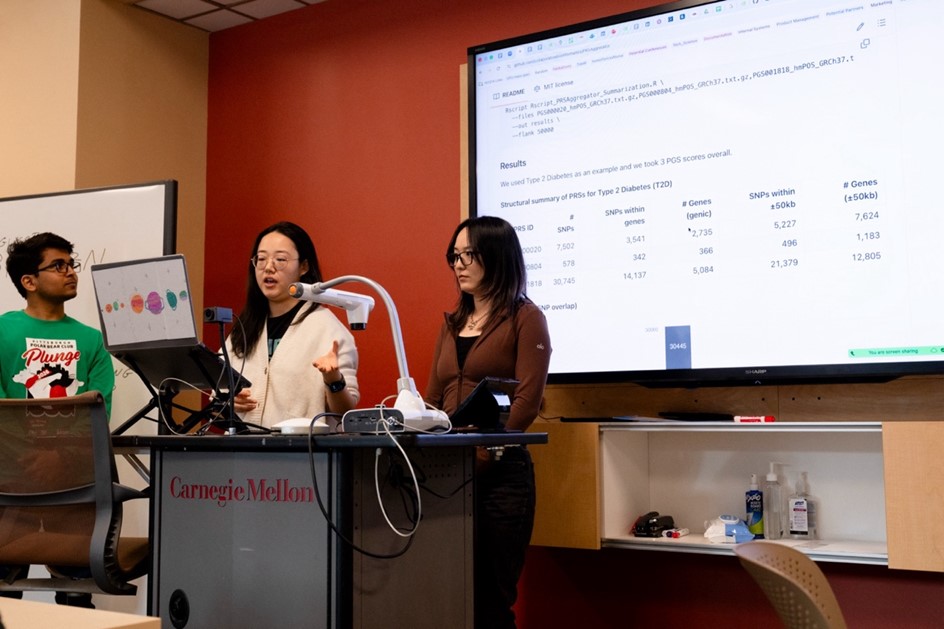

The following photograph shows the PRSAggregator team presenting their project on the third day of the CMU–NVIDIA Hackathon.

Figure 3: PRSAggregator team presenting their project on day 3. Photographer: Rebecca Devereaux, CMU Multimedia Specialist.

Fusing modalities with MuFFLe

Cancer prognosis models require multimodal data, such as RNA sequencing, clinical variables, and imaging, drawn from patients across multiple institutions. But privacy regulations and unequal access to healthcare technology mean that not every hospital can collect the same data types or share raw patient records across sites.

MuFFLe (Multimodal Framework for Federated Learning) tackles this problem by providing a privacy-preserving federated learning framework that integrates RNA sequencing and clinical features for tumor progression prediction, using NVIDIA FLARE to ensure each hospital trains only on its local data and shares encrypted model updates—never raw patient records—with a central aggregation server.

A key innovation is MuFFLe’s ability to handle modality gaps between institutions. When a smaller hospital lacks RNA sequencing capabilities, the framework selectively disables that encoder and zeroes out its embeddings, allowing the global model to train collaboratively even when data modalities are unequal across sites.
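The modality-gap handling described above can be illustrated with a small fusion function: when a site has no RNA sequencing data, a zero vector of the expected size stands in for the RNA embedding, so the fused vector keeps the same shape at every site. This is a sketch of the general idea only, not MuFFLe's implementation. The function name, arguments, and embedding sizes are hypothetical.

```python
def fuse_embeddings(clinical_emb, rna_emb, has_rna, rna_dim):
    """Concatenate modality embeddings, zero-filling RNA when it is unavailable."""
    if has_rna and rna_emb is not None:
        if len(rna_emb) != rna_dim:
            raise ValueError("RNA embedding has unexpected length")
        rna_part = list(rna_emb)
    else:
        # Encoder disabled at this site: contribute zeros instead.
        rna_part = [0.0] * rna_dim
    return list(clinical_emb) + rna_part

# A larger hospital with both modalities, and a smaller one with clinical data only.
full_site = fuse_embeddings([0.2, 0.4], [0.1, 0.9, 0.3], has_rna=True, rna_dim=3)
small_site = fuse_embeddings([0.2, 0.4], None, has_rna=False, rna_dim=3)
```

Keeping the fused vector the same length everywhere means the downstream layers share one set of weights, which is what allows their updates to be aggregated across sites.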

Validated on bladder cancer recurrence data from the CHIMERA Challenge, MuFFLe successfully stratified 176 patients into three distinct risk clusters with interpretable attention heatmaps that highlight the tissue regions and gene features driving each prediction, establishing a scalable, extensible architecture for multimodal federated cancer prognosis.

Predicting protein fitness with FedProFit

Predicting how combinatorial protein mutations affect protein function is a critical challenge in protein engineering, but the experimental data needed to train accurate models—deep mutational scanning (DMS) assays—is scattered across hospitals, academic labs, and industry partners who can’t easily share proprietary or sensitive sequence data.

FedProFit tackles this problem by providing a federated learning framework with NVIDIA FLARE for predicting protein fitness scores across distributed DMS datasets, using NVIDIA BioNeMo’s ESM-2 650M protein language model as a frozen backbone and training only lightweight prediction heads locally at each client site. This way, only model weights, never raw sequence data, are shared with the central aggregation server.

To simulate realistic cross-institutional collaboration, the framework partitions the ProteinGym substitution benchmark, which comprises approximately 2.4 million missense variants across 217 DMS assays, into four federated client nodes representing a clinical hospital (human proteins), a virology lab (viral proteins), an antibiotic resistance lab (prokaryote proteins), and an academic bio-foundry (eukaryote proteins). This demonstrates that a single federated model can learn meaningful fitness predictions across highly heterogeneous biological domains without any site needing to expose its underlying data.
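The partitioning step can be sketched as a simple grouping of assays by taxon. The record layout (`assay_id` and `taxon` keys), the client names, and the example assay IDs below are assumptions made for illustration and are not taken from the FedProFit codebase or the ProteinGym files.

```python
from collections import defaultdict

# Mapping from taxon label to simulated federated client, following the post.
TAXON_TO_CLIENT = {
    "human": "clinical_hospital",
    "virus": "virology_lab",
    "prokaryote": "antibiotic_resistance_lab",
    "eukaryote": "academic_biofoundry",
}

def partition_assays(assays):
    """Group assay IDs by the client that would hold them."""
    clients = defaultdict(list)
    for assay in assays:
        taxon = assay["taxon"].strip().lower()
        if taxon not in TAXON_TO_CLIENT:
            raise ValueError(f"unknown taxon: {assay['taxon']!r}")
        clients[TAXON_TO_CLIENT[taxon]].append(assay["assay_id"])
    return dict(clients)

# Made-up assay records for demonstration.
example = [
    {"assay_id": "assay_001", "taxon": "Human"},
    {"assay_id": "assay_002", "taxon": "Virus"},
    {"assay_id": "assay_003", "taxon": "Human"},
    {"assay_id": "assay_004", "taxon": "Prokaryote"},
]
split = partition_assays(example)
```

Splitting by taxon gives each client a different data distribution, which is what makes this a test of learning across heterogeneous sites.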

Shared infrastructure, shared impact

Across these ten projects, a consistent pattern emerged. By combining NVIDIA FLARE’s federated orchestration with cloud-scale compute and Open Data on AWS, teams could prototype sophisticated biomedical AI workflows in only a few days. The CMU–NVIDIA Hackathon projects collectively explored disease subtyping, GWAS, pangenomes, ancestry stratification, rare diseases, polygenic risk scores, multimodal data integration, and protein function through a federated lens grounded in real biobank constraints. Although each prototype is an early step, together they outline a technical blueprint for how biobanks, hospitals, and research organizations might collaborate on next‑generation biomedical AI without moving their most sensitive data.

The following is a group photograph of participants and organizers.

Figure 4: All participants and organizers gather on the final day of the hackathon. Photographer: Rebecca Devereaux, CMU Multimedia Specialist.

What’s next?

Researchers, engineers, and students who want to build on this work can start today by exploring the open source repositories from the CMU–NVIDIA Hackathon and reusing data pipelines for their own biobank scenarios. A full preprint shares deeper technical details for each project so that others can replicate and extend these prototypes. We also invite collaborators and biobanks interested in piloting federated learning workflows to connect with the organizing team and to join us at the next Federated Learning Hackathon at CMU Libraries in January 2027, where we’ll continue pushing the boundaries of privacy‑preserving biomedical AI.

To learn more about the CMU–NVIDIA Hackathon, read the CMU blog post about the event, Where Industry Meets Experimentation.

Acknowledgements:

Ayush Tripathi – Solutions Architect at AWS

Sphia Sadek – Solutions Architect at AWS