AWS News Blog

New – AWS Application Load Balancer

We launched Elastic Load Balancing (ELB) for AWS in the spring of 2009 (see New Features for Amazon EC2: Elastic Load Balancing, Auto Scaling, and Amazon CloudWatch to see just how far AWS has come since then). Elastic Load Balancing has become a key architectural component for many AWS-powered applications. In conjunction with Auto Scaling, Elastic Load Balancing greatly simplifies the task of building applications that scale up and down while maintaining high availability.

On the Level

Per the well-known OSI model, load balancers generally run at Layer 4 (transport) or Layer 7 (application).

A Layer 4 load balancer works at the network protocol level and does not look inside of the actual network packets, remaining unaware of the specifics of HTTP and HTTPS. In other words, it balances the load without necessarily knowing a whole lot about it.

A Layer 7 load balancer is more sophisticated and more powerful. It inspects packets, has access to HTTP and HTTPS headers, and (armed with more information) can do a more intelligent job of spreading the load out to the targets.

Application Load Balancing for AWS

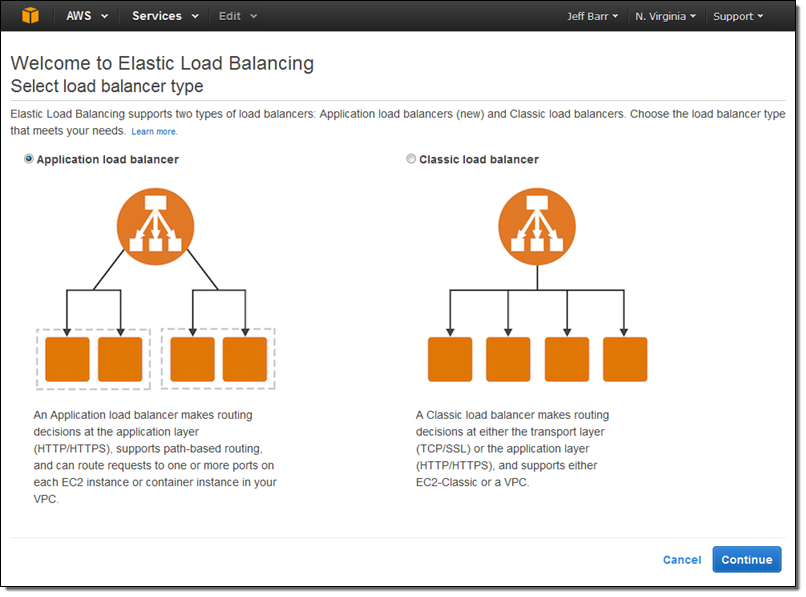

Today we are launching a new Application Load Balancer option for ELB. This option runs at Layer 7 and supports a number of advanced features. The original option (now called a Classic Load Balancer) is still available to you and continues to offer Layer 4 and Layer 7 functionality.

Application Load Balancers support content-based routing and applications that run in containers. They support a pair of industry-standard protocols (WebSocket and HTTP/2) and also provide additional visibility into the health of the target instances and containers. Web sites and mobile apps, running in containers or on EC2 instances, will benefit from the use of Application Load Balancers.

Let’s take a closer look at each of these features and then create a new Application Load Balancer of our very own!

Content-Based Routing

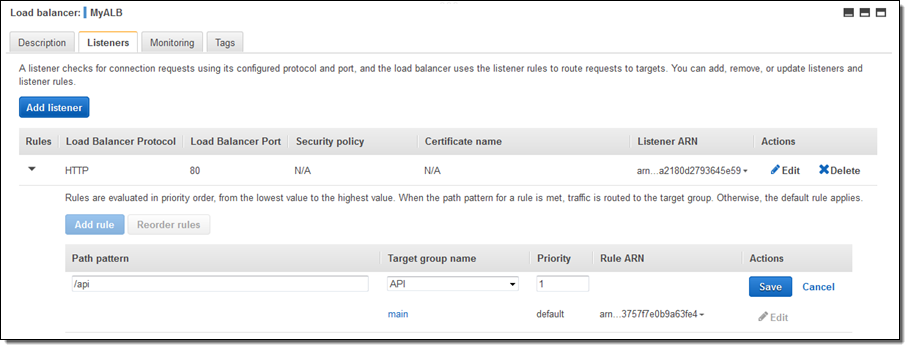

An Application Load Balancer has access to HTTP headers and allows you to route requests to different backend services accordingly. For example, you might want to send requests that include /api in the URL path to one group of servers (we call these target groups) and requests that include /mobile to another. Routing requests in this fashion allows you to build applications that are composed of multiple microservices that can run and be scaled independently.

As you will see in a moment, each Application Load Balancer allows you to define up to 10 URL-based rules to route requests to target groups. Over time, we plan to give you access to other routing methods.
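To make the routing idea concrete, here is a minimal sketch in plain Python of how path-based rules might pick a target group. It is not the load balancer's actual implementation: the rule tuples, the priorities, the target group names, and the `pick_target_group` helper are all hypothetical, and `fnmatchcase` only approximates wildcard matching on URL paths.

```python
from fnmatch import fnmatchcase

# Hypothetical rules for illustration: (priority, path pattern, target group).
RULES = [
    (10, "/api/*", "api-targets"),
    (20, "/mobile/*", "mobile-targets"),
]
DEFAULT_TARGET_GROUP = "main"  # used when no rule matches
MAX_RULES = 10                 # the per-load-balancer limit mentioned above


def pick_target_group(path, rules=RULES, default=DEFAULT_TARGET_GROUP):
    """Return the target group of the first matching rule, lowest priority first."""
    if len(rules) > MAX_RULES:
        raise ValueError("at most %d URL-based rules are allowed" % MAX_RULES)
    for _priority, pattern, target_group in sorted(rules):
        if fnmatchcase(path, pattern):
            return target_group
    return default
```

With these assumed rules, a request for `/api/users` would go to `api-targets`, while a request for `/index.html` would fall through to the default target group.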

Support for Container-Based Applications

Many AWS customers are packaging up their microservices into containers and hosting them on Amazon EC2 Container Service. This allows a single EC2 instance to run one or more services, but can present some interesting challenges for traditional load balancing with respect to port mapping and health checks.

The Application Load Balancer understands and supports container-based applications. It allows one instance to host several containers that listen on multiple ports behind the same target group and also performs fine-grained, port-level health checks.
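One way to picture this is to treat each target as an (instance, port) pair rather than as a whole instance. The sketch below is a simplified model in plain Python, not an AWS API: the `Target` and `TargetGroup` names, the instance ID, and the port numbers are all made up for illustration.

```python
from collections import namedtuple

# A target is an (instance, port) pair, so one instance can appear several times.
Target = namedtuple("Target", ["instance_id", "port"])


class TargetGroup:
    """Hypothetical model of a target group with per-port health state."""

    def __init__(self, name):
        self.name = name
        self.health = {}  # Target -> bool

    def register(self, instance_id, port):
        target = Target(instance_id, port)
        self.health[target] = False  # treated as unhealthy until a check passes
        return target

    def record_check(self, target, passed):
        self.health[target] = passed

    def healthy(self):
        return sorted(t for t, ok in self.health.items() if ok)


# Two containers on the same (made-up) instance, listening on different ports.
group = TargetGroup("api-targets")
first = group.register("i-0123456789abcdef0", 32768)
second = group.register("i-0123456789abcdef0", 32769)
group.record_check(first, True)
group.record_check(second, False)
```

In this model only the container on port 32768 would receive traffic, even though both containers share an instance.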

Better Metrics

Application Load Balancers can perform and report on health checks on a per-port basis. The health checks can specify a range of acceptable HTTP responses, and are accompanied by detailed error codes.
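As a rough illustration of "a range of acceptable HTTP responses," the sketch below checks a status code against a specification written as a comma-separated list or a range. The specification syntax shown here ("200,202" or "200-299") and the helper names are assumptions for the example.

```python
def parse_matcher(spec):
    """Turn a spec such as "200,202" or "200-299" into a set of status codes."""
    codes = set()
    for part in spec.split(","):
        part = part.strip()
        if "-" in part:
            low, high = (int(value) for value in part.split("-", 1))
            if low > high:
                raise ValueError("invalid range: %s" % part)
            codes.update(range(low, high + 1))
        else:
            codes.add(int(part))
    return codes


def is_healthy(status_code, spec="200"):
    """Report whether a health check response code counts as a pass."""
    return status_code in parse_matcher(spec)
```

For example, `is_healthy(204, "200-299")` would pass, while `is_healthy(404, "200,202")` would not.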

As a byproduct of the content-based routing, you also have the opportunity to collect metrics on each of your microservices. This is a really nice side effect of the fact that each of the microservices can run in its own target group, on a specific set of EC2 instances. This increased visibility will allow you to do a better job of scaling up and down in response to the load on individual services.

The Application Load Balancer provides several new CloudWatch metrics including overall traffic (in GB), number of active connections, and the connection rate per hour.

Support for Additional Protocols & Workloads

The Application Load Balancer supports two additional protocols: WebSocket and HTTP/2.

WebSocket allows you to set up long-standing TCP connections between your client and your server. This is a more efficient alternative to the old-school method which involved HTTP connections that were held open with a “heartbeat” for very long periods of time. WebSocket is great for mobile devices and can be used to deliver stock quotes, sports scores, and other dynamic data while minimizing power consumption. ALB provides native support for WebSocket via the ws:// and wss:// protocols.

HTTP/2 is a significant enhancement of the original HTTP/1.1 protocol. The newer protocol supports multiplexed requests across a single connection. This reduces network traffic, as does the binary nature of the protocol.

The Application Load Balancer is designed to handle streaming, real-time, and WebSocket workloads in an optimized fashion. Instead of buffering requests and responses, it handles them in streaming fashion. This reduces latency and increases the perceived performance of your application.

Creating an ALB

Let’s create an Application Load Balancer and get it all set up to process some traffic!

The Elastic Load Balancing Console lets me create either type of load balancer:

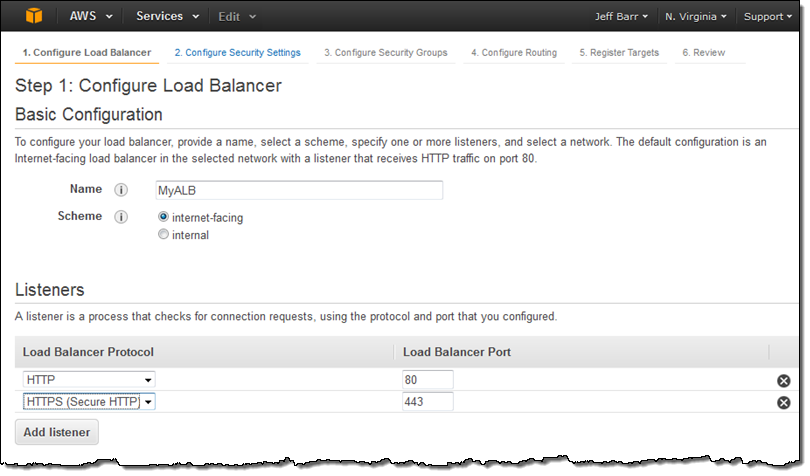

I click on Application load balancer, enter a name (MyALB), and choose internet-facing. Then I add an HTTPS listener:

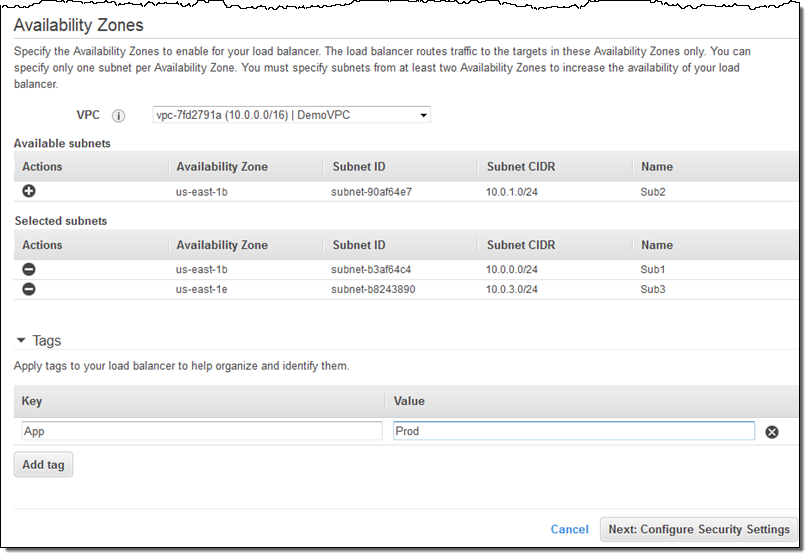

On the same screen, I choose my VPC (this is a VPC-only feature) and one subnet in each desired Availability Zone, tag my Application Load Balancer, and proceed to Configure Security Settings:

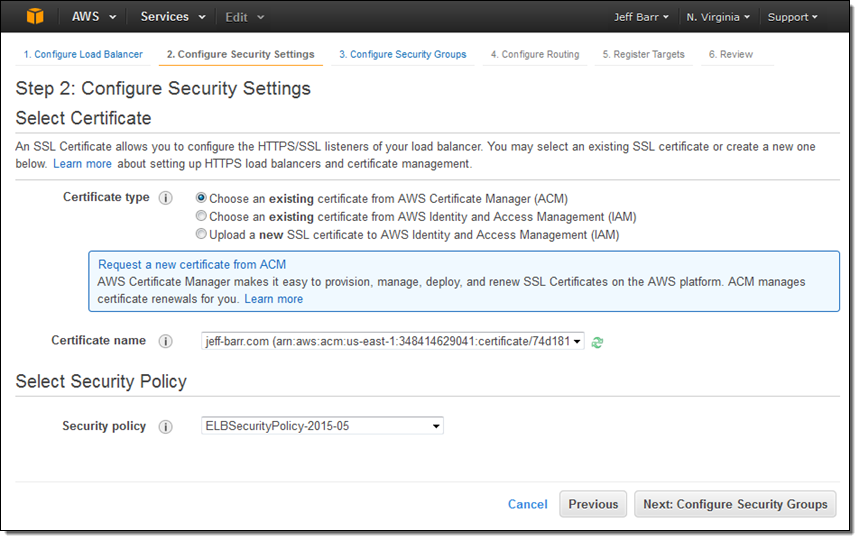

Because I created an HTTPS listener, my Application Load Balancer needs a certificate. I can choose an existing certificate that’s already in IAM or AWS Certificate Manager (ACM), upload a local certificate, or request a new one:

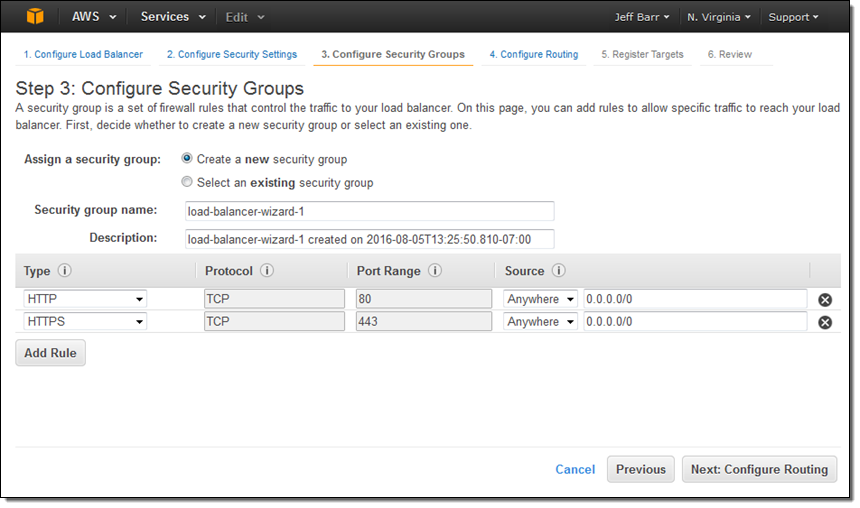

Moving right along, I set up my security group. In this case I decided to create a new one. I could have used one of my existing VPC or EC2 security groups just as easily:

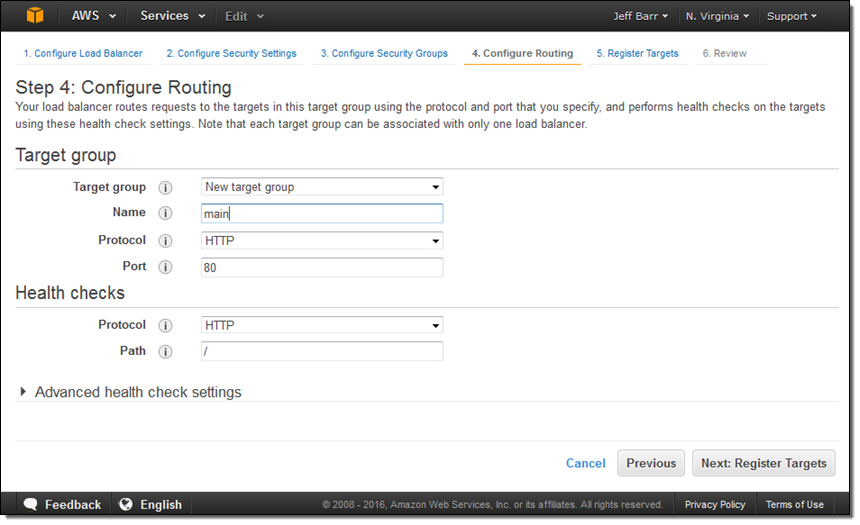

The next step is to create my first target group (main) and to set up its health checks (I’ll take the defaults):

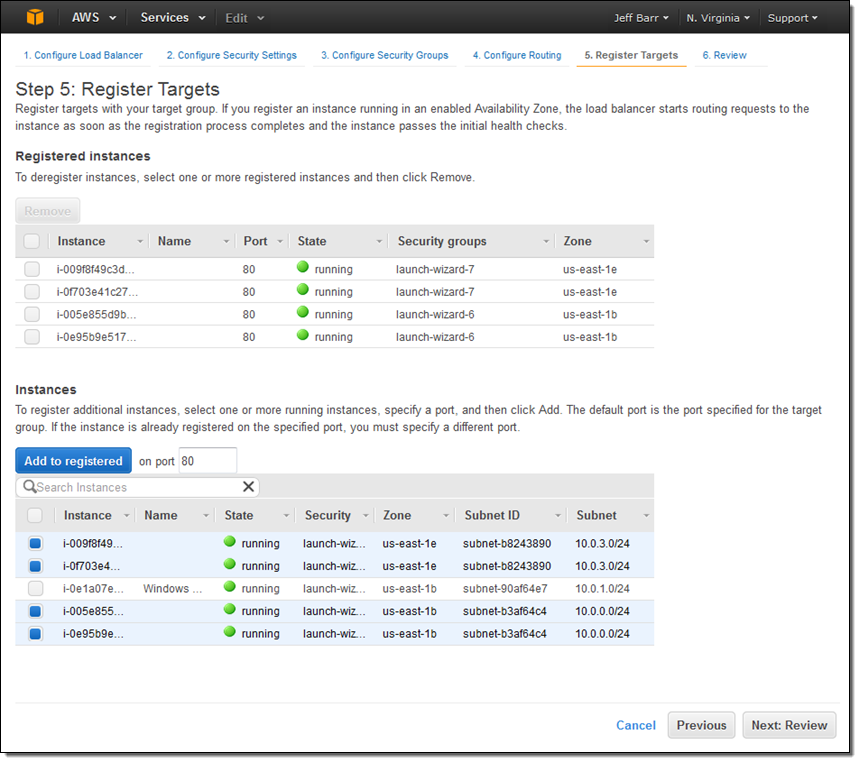

Now I am ready to choose the targets—the set of EC2 instances that will receive traffic through my Application Load Balancer. Here, I chose the targets that are listening on port 80:

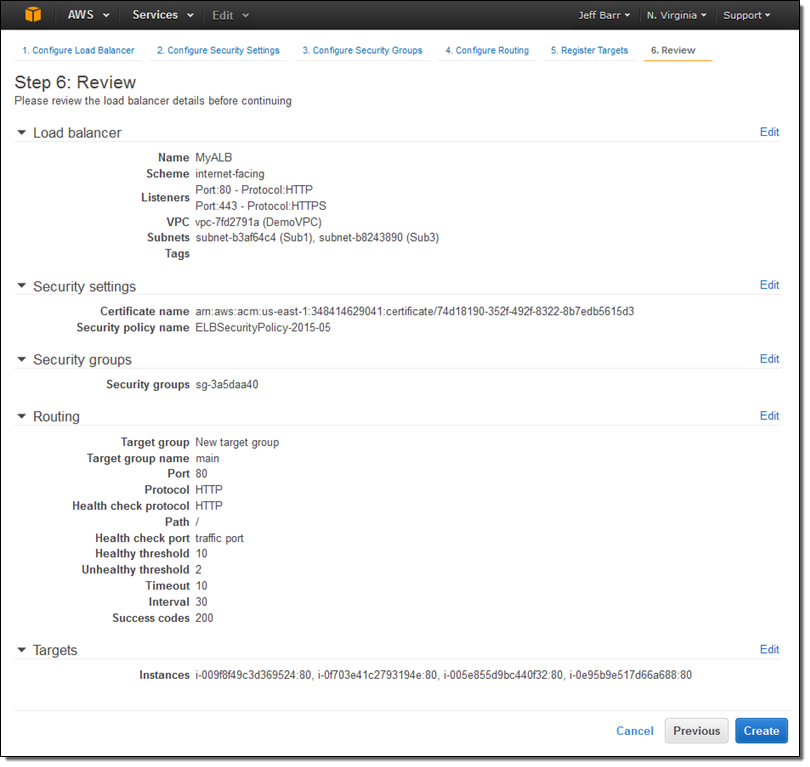

The final step is to review my choices and to Create my ALB:

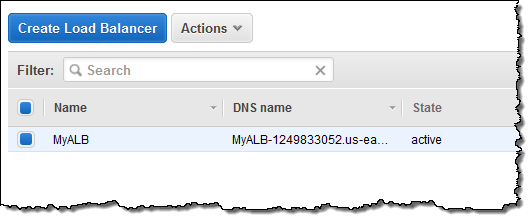

After I click on Create, the Application Load Balancer is provisioned and becomes active within a minute or so:

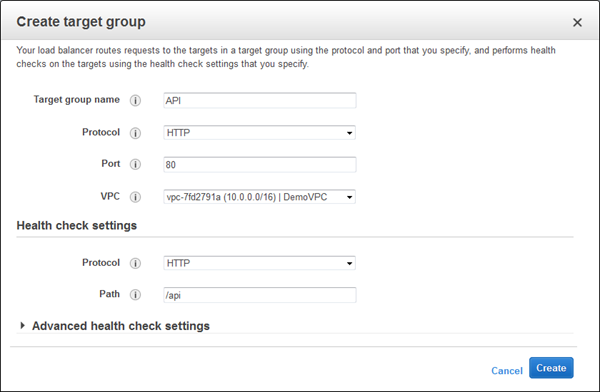

I can create additional target groups, and then add a new rule that routes /api requests to one of them.

Application Load Balancers work with multiple AWS services including Auto Scaling, Amazon Elastic Container Service (Amazon ECS), AWS CloudFormation, AWS CodeDeploy, and AWS Certificate Manager (ACM). Support for and within other services is in the works.

Moving on Up

If you are currently using a Classic Load Balancer and would like to migrate to an Application Load Balancer, take a look at our new Load Balancer Copy Utility. This Python tool will help you to create an Application Load Balancer with the same configuration as an existing Classic Load Balancer. It can also register your existing EC2 instances with the new load balancer.

Availability & Pricing

The Application Load Balancer is available now in all commercial AWS regions and you can start using it today!

The hourly rate for the use of an Application Load Balancer is 10% lower than the cost of a Classic Load Balancer.

When you use an Application Load Balancer, you will be billed by the hour and for the use of Load Balancer Capacity Units, also known as LCUs. An LCU measures the number of new connections per second, the number of active connections, and data transfer. We measure on all three dimensions, but bill based on the highest one. One LCU is enough to support either:

- 25 connections/second with a 2 KB certificate, 3,000 active connections, and 2.22 Mbps of data transfer or

- 5 connections/second with a 4 KB certificate, 3,000 active connections, and 2.22 Mbps of data transfer.

Billing for LCU usage is fractional, and is charged at $0.008 per LCU per hour. Based on our calculations, we believe that virtually all of our customers can obtain a net reduction in their load balancer costs by switching from a Classic Load Balancer to an Application Load Balancer.
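The LCU arithmetic above can be sketched in a few lines of Python. The capacities and the per-LCU price come from this post; the function names and the sample traffic figures are made up for illustration, and the separate hourly charge for the load balancer itself is not included.

```python
# Per-LCU capacities and price as quoted above.
NEW_CONNECTIONS_PER_SECOND_PER_LCU = 25  # 2 KB certificate case (5 for 4 KB)
ACTIVE_CONNECTIONS_PER_LCU = 3000
MBPS_PER_LCU = 2.22
PRICE_PER_LCU_HOUR = 0.008  # USD


def lcus_used(new_per_second, active, mbps,
              new_per_lcu=NEW_CONNECTIONS_PER_SECOND_PER_LCU):
    """Measure all three dimensions and return the highest, which is billed."""
    return max(
        new_per_second / new_per_lcu,
        active / ACTIVE_CONNECTIONS_PER_LCU,
        mbps / MBPS_PER_LCU,
    )


def lcu_charge(new_per_second, active, mbps, hours=1,
               new_per_lcu=NEW_CONNECTIONS_PER_SECOND_PER_LCU):
    """LCU portion of the bill in USD; usage is fractional."""
    return lcus_used(new_per_second, active, mbps, new_per_lcu) * PRICE_PER_LCU_HOUR * hours


# Hypothetical hour: 50 new connections/second, 3,000 active, 1.11 Mbps.
sample_lcus = lcus_used(50, 3000, 1.11)      # new connections dominate
sample_charge = lcu_charge(50, 3000, 1.11)   # LCU charge for that hour
```

In this made-up hour the three dimensions work out to roughly 2, 1, and 0.5 LCUs, so the bill is based on 2 LCUs, or about $0.016 for the hour.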

— Jeff;