Articul8 AI bridges the industrial expertise gap with specialized Llama 4 models and security-first AWS architecture

Industrial enterprises face significant financial risks and operational delays when using general-purpose AI models that treat specialized technical data as white noise. These models struggle to interpret complex machine logs and sensor data, leading to imprecise root cause analysis and prolonged downtime. To address this, Articul8 AI developed an enterprise-grade generative AI platform using specialized Meta Llama models. Working with Meta and Amazon Web Services (AWS), Articul8 created domain-specific models that identify equipment failures with an overall accuracy of 88 percent, delivering the highest domain-specific intelligence density. This lets industrial leaders shift effort from manual data collection to proactive risk mitigation and faster maintenance resolution.

Breaking through the white noise of general-purpose AI

Articul8 AI provides an enterprise-grade generative AI platform that allows industries like energy and manufacturing to build applications that go far beyond a basic chatbot. In these sectors, general-purpose models often fall short because they lack the deep technical expertise required to interpret complex datasets, such as machine logs and sensor data from a manufacturing floor. For example, in energy generation and storage, a model must be able to distinguish between highly specialized technical nuances to assist with design problems or plan for critical maintenance outages. To a non-expert, these technical particularities might seem like white noise, but to an engineer, a model’s inability to grasp these details makes the output unreliable for high-stakes root cause analysis.

The impact of this expertise gap is measured in massive financial losses and a lack of competitive differentiation. In manufacturing, equipment failure translates directly into costly production downtime. As Felipe Viana, head of applied research at Articul8, explains: “When a plant isn’t producing, it’s essentially not making money. Bringing that facility back online faster isn’t just a technical goal; it translates directly into recovered revenue.” Beyond these immediate operational costs, organizations relying solely on off-the-shelf models face a long-term defensibility problem. If every utility company uses the same general model, their only differentiation is prompt engineering, a shallow barrier that fails to protect or leverage their proprietary data.

Beyond these strategic hurdles, industrial enterprises are challenged by a critical tension between intelligence density (the reasoning power of a model relative to its size) and infrastructure constraints. To achieve expert-level performance, organizations have traditionally been forced to keep scaling their compute footprint, which quickly becomes cost-prohibitive. Additionally, many customers require applications to be completely air-gapped or deployed within strictly controlled virtual private clouds (VPCs) to ensure security. This creates a high-stakes trade-off: enterprises need a solution with enough intelligence density to handle complex, expert-level agentic workflows, yet efficient enough to fit within a hardware footprint compatible with their existing budgets.
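The trade-off between intelligence density and hardware footprint can be made concrete with a rough back-of-the-envelope sketch. The Python below is illustrative only: it assumes accuracy per billion parameters as a simple proxy for intelligence density (the article does not give a formula), estimates a weights-only memory footprint as parameter count times bytes per parameter, and uses hypothetical model names and numbers rather than any Articul8 or Meta figures.

```python
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass(frozen=True)
class Candidate:
    name: str               # hypothetical model label
    accuracy: float         # benchmark accuracy in [0, 1]; illustrative value
    params_billions: float  # total parameter count, in billions


def intelligence_density(c: Candidate) -> float:
    """Accuracy points per billion parameters (an assumed proxy metric)."""
    return c.accuracy * 100 / c.params_billions


def weight_memory_gb(c: Candidate, bytes_per_param: int = 2) -> float:
    """Rough weights-only footprint in GB (2 bytes per parameter ~ 16-bit weights).

    Ignores activations, KV cache, and runtime overhead.
    """
    return c.params_billions * bytes_per_param


def best_within_budget(
    candidates: Iterable[Candidate],
    budget_gb: float,
    bytes_per_param: int = 2,
) -> Optional[Candidate]:
    """Return the most accurate candidate whose weights fit the memory budget."""
    fitting = [
        c for c in candidates
        if weight_memory_gb(c, bytes_per_param) <= budget_gb
    ]
    return max(fitting, key=lambda c: c.accuracy, default=None)


if __name__ == "__main__":
    # Hypothetical candidates; the numbers are made up for illustration.
    models = [
        Candidate("general-large", accuracy=0.80, params_billions=400),
        Candidate("specialized-small", accuracy=0.85, params_billions=70),
    ]
    for m in models:
        print(m.name, round(intelligence_density(m), 3), weight_memory_gb(m))
    print(best_within_budget(models, budget_gb=160))
```

Under these assumptions, a smaller specialized model can be the only candidate that fits a fixed memory budget, which is the constraint the paragraph above describes for air-gapped and VPC deployments.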

Building specialized industrial AI with Meta Llama 4 on Amazon SageMaker HyperPod

To bridge the gap between raw infrastructure and enterprise applications, Articul8 developed a platform that transforms broad-based models into specialized, high-performance assets. Central to this strategy is its proprietary ModelMesh, an agentic reasoning engine that serves as the functional core of its industrial AI platform. Articul8 powers this platform on AWS, using Amazon SageMaker HyperPod to manage complex, distributed training workloads with the granular flexibility required for large-scale industrial models. Using both the Slurm and Amazon Elastic Kubernetes Service (Amazon EKS) versions of HyperPod, the Articul8 applied research team performs continued pre-training on Meta Llama-4-Maverick base models. This process injects domain-specific knowledge, sourced from a curated mix of technical and scientific corpora, directly into the model. Articul8 then refines these models through an iterative loop of supervised fine-tuning and reinforcement learning to ensure expert-level reasoning. To execute this intensive training for sectors like energy and manufacturing, Articul8 relies on Amazon Elastic Compute Cloud (Amazon EC2) instances, which maintain high performance and data integrity throughout the entire pipeline.

Articul8 selected Meta Llama as the core of this engine because it provides the first stable, open-source framework capable of merging multimodal inputs—like CAD drawings and sensor logs—with sophisticated agentic text workloads. This intelligence density allows Articul8 to deliver expert-level insights while keeping parameter counts low. This is a vital requirement for industrial customers operating within strict hardware budgets who cannot afford to run massive, general-purpose models. Viana notes, “Before Llama, we had to orchestrate separate, fragmented models for text and vision. Llama was the first solid foundation that empowered us to build a single, unified system that masters both multimodal applications and complex agentic workflows simultaneously.”

Deploying on AWS allows Articul8 to provide a security-first architecture that meets the stringent demands of regulated industries. Because many utility and manufacturing clients must maintain strict control over their environments, Articul8 uses Amazon Virtual Private Cloud (Amazon VPC) to host its platform within a customer’s own secure infrastructure. This deployment model helps ensure that a company’s proprietary data remains protected under its own security protocols while still benefiting from the scale of the cloud. A close technical collaboration with AWS further refined this architecture, providing Articul8 with deep-dive support and early access to infrastructure optimizations that accelerated the transition from prototype to production. Ultimately, the synergy between AWS infrastructure and Meta’s open-weight models offers a reliable, cost-effective alternative to generic frontier models, giving industrial leaders a specialized path to production-grade AI.

Establishing SOTA accuracy and industrial-grade reliability

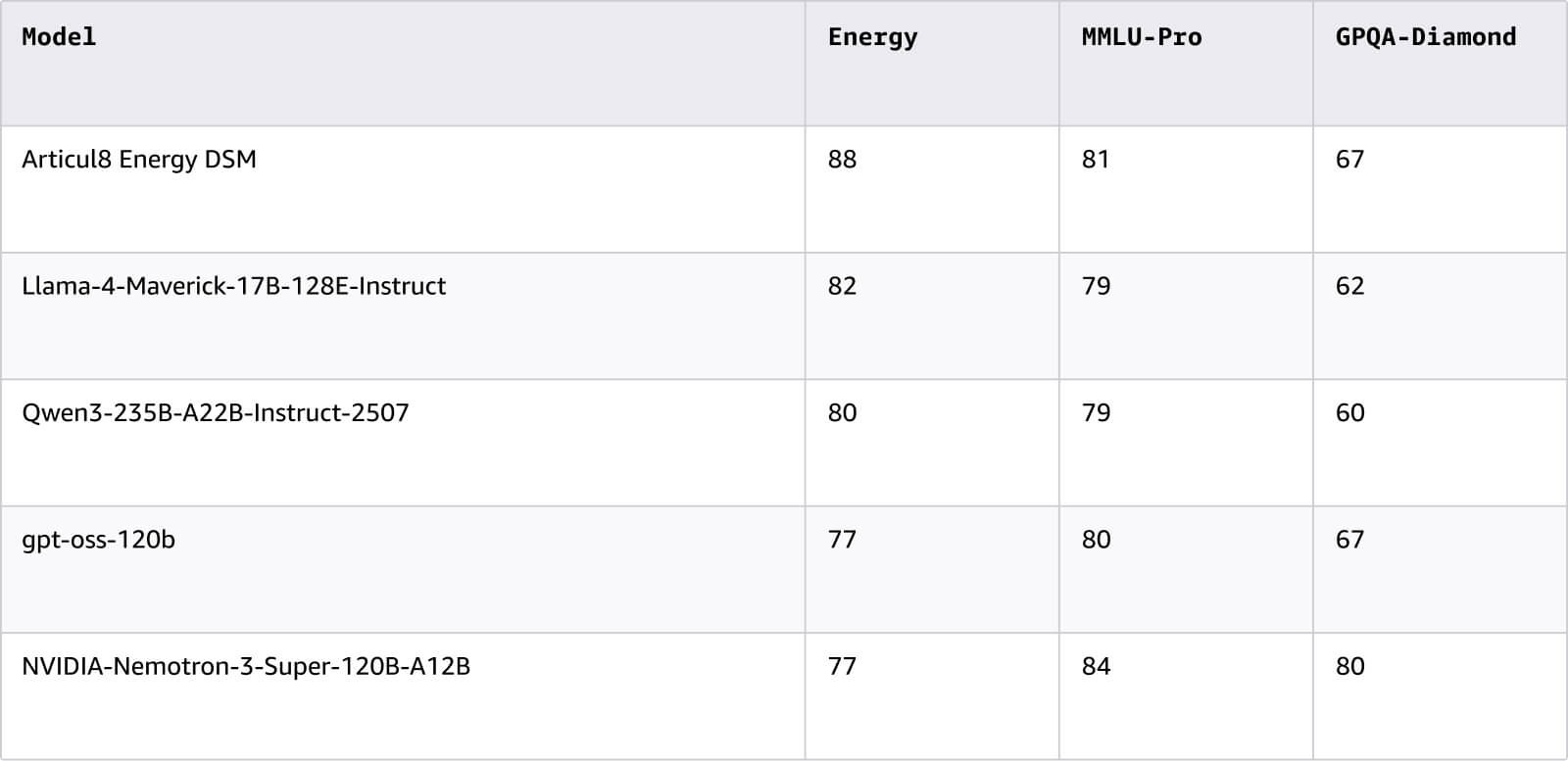

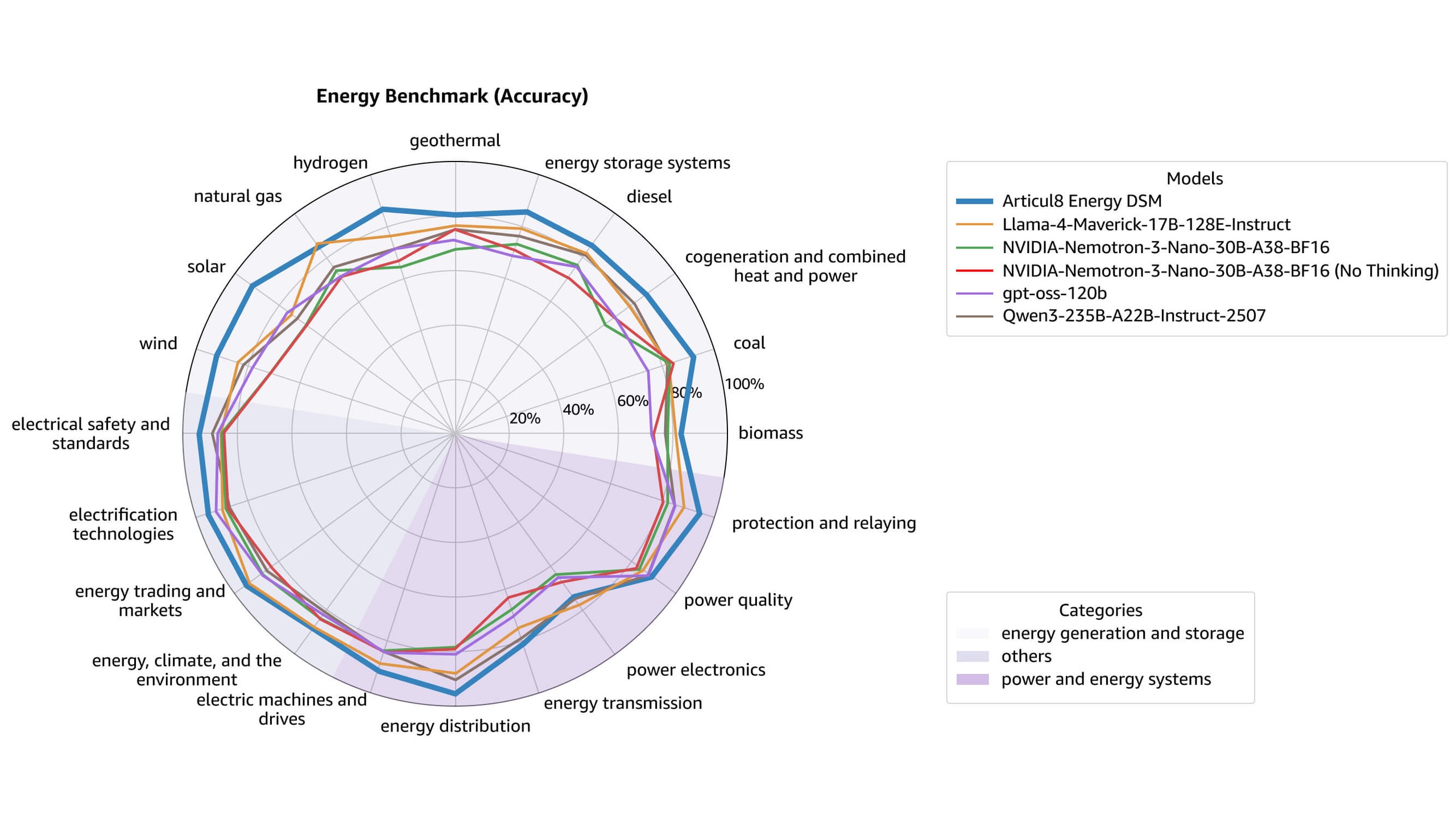

By architecting its platform on AWS with Meta Llama, Articul8 fundamentally redefined the performance ceiling for industrial generative AI. The transition to a domain-specific model (DSM) architecture delivered a massive leap in technical precision, particularly for the energy sector. According to the company's energy benchmarks, the Articul8 Energy DSM achieved an overall 88 percent accuracy, as shown in the accompanying table. The accompanying figure illustrates the benchmark by topic.

By grounding the platform in proprietary technical data rather than simple prompt engineering, Articul8 enables its customers to build a defensible, long-term competitive advantage. The operational impact is most evident in complex manufacturing and utility environments, where specialized agentic workflows now identify and resolve equipment failures with unprecedented speed. By transforming technical particularities from white noise into actionable intelligence, the platform allows facilities to return to production rapidly, effectively recovering revenue that was previously lost to downtime.

The synergy between AWS infrastructure and Llama’s intelligence density also delivered critical system stability. Articul8 successfully increased domain expertise while maintaining a compact parameter count, allowing customers to deploy expert-level AI in their own Amazon VPC without exceeding strict hardware budgets. Viana notes, “The fact that the system is less complex means that the system is more stable and more reliable. You don’t see these stories when you look at benchmarks, but our customers do.” Building on this reliable foundation does more than solve immediate operational inefficiencies; it provides the scalable framework necessary for enterprises to move toward fully autonomous, expert-level agentic workflows across the global energy grid.

Ready to train and deploy models at scale? Learn how Amazon SageMaker and Amazon EC2 can help you build, iterate, and run AI workloads for even the most demanding real-time environments.
