AWS Partner Network (APN) Blog

Using Solano CI with Amazon ECS and Amazon ECR Services to Automatically Build and Deploy Docker Containers

Joseph Fontes is a Partner Solutions Architect at AWS.

Solano Labs, an AWS DevOps Competency Partner and APN Technology Partner, provides a continuous integration and deployment (CI/CD) solution called Solano CI.

This solution provides a build, deployment, and test suite focused on performance, with support for multiple programming languages and application frameworks, numerous build and test frameworks, and many database and backend frameworks, in addition to third-party tool integrations.

Solano Labs provides a software as a service (SaaS) offering with their Solano Pro CI and Solano Enterprise CI products along with a private installation offering known as Solano Private CI.

In this blog post, we’ll demonstrate a CI/CD deployment that uses the Solano CI SaaS offering for build and test capabilities. This implementation integrates with Amazon EC2 Container Service (Amazon ECS) and Amazon EC2 Container Registry (Amazon ECR), creating a build and deployment pipeline from source code to production deployment.

Recently, Solano Labs announced the release of a new AWS feature integration, the ability to use the AWS AssumeRole API within a Solano CI build pipeline. This feature, used in this demonstration deployment, supports AWS security best practices by using AWS cross-account roles to replace AWS access keys and secret keys for authentication with AWS services from within Solano CI.

Overview

The examples in this blog post demonstrate how you can integrate Amazon ECR with the Solano CI platform in order to automate the deployment of Docker images to Amazon ECS. Once you’ve completed the integration, you’ll be able to automate the deployment of a new codebase to a production environment by simply committing your application changes to a GitHub repository. The following diagram illustrates the workflow.

To deploy this pipeline, we’ll explain how you can perform the following tasks.

- Create a new GitHub repository

- Install and configure Docker

- Create an AWS Identity and Access Management (IAM) role for Solano CI

- Create an Amazon ECR repository

- Set the Amazon ECR repository policy

- Build a Docker image and push it to Amazon ECR

- Create an Elastic Load Balancing load balancer

- Create an Amazon ECS cluster

- Install Solano CI CLI and set environment variables

- Integrate Solano CI with your GitHub repository

Requirements

These examples will make use of the Solano CI Docker capabilities. For more information about these features, see the Solano CI documentation.

You’ll need a source code versioning system that allows Solano CI to process your source code as updates are committed. In our examples, we will be using GitHub. You can also choose any Git- or Mercurial-compatible service.

Configuring GitHub

We will be using GitHub as our managed source code versioning system. If you do not already have a GitHub account, you can find instructions for creating a new account on the GitHub website.

You’ll want to create a new repository to follow along with the examples. Once your GitHub repository is available, you can import the code for the examples we’re using from the repository:

https://github.com/awslabs/aws-demo-php-simple-app.git

Once you import the code, the contents of your new GitHub repository should mirror the contents of the example repository. You should now be able to clone your repository to your development environment:

git clone your-github-repo-location

In your local GitHub repository, there will be two files named solano.yml and solano-docker.yml. These files contain the build and deployment instructions for use by Solano CI. You’ll need to update the instructions for use with Docker. Within your repository root directory, run the commands:

rm -f solano.yml

cp solano-docker.yml solano.yml

git add solano.yml

Configuring Docker

To build the initial Docker image you’ll use to populate the Amazon ECS service, you’ll need to install the Docker utilities and service. Instructions for installing and starting the service can be found in the AWS documentation.

Solano CI Account Creation

To use Solano CI, you’ll either need to have an active account with Solano Labs or use your GitHub credentials to log in. The account creation page and the Solano CI platform management console can be found on the Solano Labs website.

Creating the AWS IAM Role

You’ll now need to configure the IAM role used by Solano CI to automate Amazon ECS integration. From your Solano CI console, navigate to the Organization Settings page, and then choose AWS AssumeRole.

You’ll need to copy the AWS account ID and external ID from the AWS AssumeRole Settings screen; you’ll use these when you create the IAM role. Copy the contents of this GitHub Gist to the file solano-role-policy-doc.json on your local development environment, replacing AWSACCOUNTIDTMP with the AWS account ID provided by Solano and replacing SOLANOEXTERNALIDTMP with the external ID provided. Then create the role and attach the Amazon ECS and Amazon ECR managed policies:

aws iam create-role --role-name solano-ci-role --assume-role-policy-document file://solano-role-policy-doc.json

aws iam attach-role-policy --role-name solano-ci-role --policy-arn arn:aws:iam::aws:policy/AmazonEC2ContainerServiceFullAccess

aws iam attach-role-policy --role-name solano-ci-role --policy-arn arn:aws:iam::aws:policy/AmazonEC2ContainerRegistryPowerUser

The output of the create-role command includes an IAM role ARN. Copy this value and paste it into the Solano CI AWS AssumeRole Settings box, and then choose Save AWS AssumeRole ARN.

Your Solano CI IAM role will now have the permissions necessary to update the Docker image in your Amazon ECR repository and to update your Amazon ECS cluster with the configuration of the new images.
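The Gist containing the trust policy is not reproduced in this post. As a rough guide, a cross-account trust policy that requires an external ID usually looks like the sketch below. The function name solano_trust_policy and its two arguments are hypothetical; the real document from the Gist may differ, so treat this as an illustration of the pattern rather than the exact file.

```shell
# Sketch: print a cross-account trust policy that requires an external ID.
# Both arguments are placeholders for the values shown on the
# Solano CI AWS AssumeRole Settings screen (assumed, not from the Gist).
solano_trust_policy() {
  solano_account_id="$1"   # AWS account ID provided by Solano CI
  solano_external_id="$2"  # external ID provided by Solano CI
  cat <<EOF
{
  "Version": "2012-10-17",
  "Statement": [
    {
      "Effect": "Allow",
      "Principal": { "AWS": "arn:aws:iam::${solano_account_id}:root" },
      "Action": "sts:AssumeRole",
      "Condition": {
        "StringEquals": { "sts:ExternalId": "${solano_external_id}" }
      }
    }
  ]
}
EOF
}
```

For example, solano_trust_policy 111122223333 example-external-id > solano-role-policy-doc.json would write the document to the file name used above (both values are made up for illustration).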

Creating the Amazon ECR Repository

You can use the AWS Management Console, AWS Command Line Interface (AWS CLI), or the AWS SDK to create the Amazon ECR repository. In our example, we’ll be using the command line. Here’s the command used to create a new Amazon ECR repository named solano:

aws ecr create-repository --repository-name solano

If you prefer to use the AWS Management Console, see the following screen illustrations.

Next, you’ll need a policy that allows an IAM cross-account role access to push and pull images from this repository. Copy the information in this GitHub Gist to a file named solano-ecs-push-policy.json.

Replace the <ACCOUNT NUMBER> value with your AWS account number and save the file. Now that you have the policy document defined, you need to apply this policy to the repository:

aws ecr set-repository-policy --repository-name solano --policy-text file://solano-ecs-push-policy.json

The command output echoes the repository name and the policy text that was applied.

Initial Deployment

Ensure that your current working directory is your GitHub repository root. You should see a file named Dockerfile. This file is used to instruct the Docker command on how to build the Docker image that contains your entire web application. Using this configuration, you can now test the build of the Docker image.

docker build -t solano:init .

docker images

Using Amazon ECR

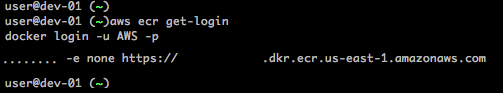

Now that you have a local copy of the image, you’ll want to upload this to your private Docker registry. First, retrieve the temporary credentials that will allow you to authenticate to Amazon ECR:

aws ecr get-login

This will return a string containing the full docker login command. Copy and paste this to your command line to initiate the login to your Amazon ECR repository. Alternatively, the Amazon ECR Credentials Helper repository provides an option for users wishing to eliminate the necessity of using the docker login and docker logout commands.

docker login -u AWS -p ……. -e none https://<ACCOUNT_ID>.dkr.ecr.<REGION>.amazonaws.com
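Version 2 of the AWS CLI no longer includes the get-login command and provides get-login-password instead. If you are on a newer CLI, a login along the following lines should work. This is a minimal sketch: the function name ecr_login is hypothetical, and the account ID and Region are passed in as arguments.

```shell
# Sketch: log Docker in to an Amazon ECR registry by piping the
# temporary password straight into docker login (AWS CLI v2 style).
ecr_login() {
  account_id="$1"  # your AWS account number
  region="$2"      # for example, us-east-1
  registry="${account_id}.dkr.ecr.${region}.amazonaws.com"
  aws ecr get-login-password --region "$region" |
    docker login --username AWS --password-stdin "$registry"
}
```

Calling ecr_login with your account ID and Region avoids placing the password on the command line, where it could end up in your shell history.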

To get this initial image into your registry, you can now tag the image and push it to the repository with the docker push command. Replace the string ACCOUNT_ID with your AWS account number and REGION with your desired AWS Region in the following commands:

docker tag solano:init ACCOUNT_ID.dkr.ecr.REGION.amazonaws.com/solano:init

docker push ACCOUNT_ID.dkr.ecr.REGION.amazonaws.com/solano:init

You can confirm the status of the Docker image via the Repositories section of the Amazon EC2 Container Service page in the AWS Management Console.

Configuring Amazon ECS

Now that your Amazon ECR repository is configured and populated with your initial Docker image, you can configure Amazon ECS. You can follow the instructions in the AWS documentation to deploy a new Amazon ECS cluster and add the necessary instances to get started. For the examples here, we used solano-ecs-cluster as the Amazon ECS cluster name. You can choose an instance type of t2.micro to minimize costs. To ensure high availability, we chose two instances for the cluster deployment. You’ll want to use the default value for the security group to allow access to TCP port 80 (HTTP) from anywhere. When asked for the virtual private cloud (VPC) to use, select the VPC ID associated with the VPC in which you will deploy the AWS ELB and Amazon EC2 instances. You can choose to use your default VPC. Ensure that your subnet choices are recorded so that they are the same when you create the AWS ELB.

Creating the AWS ELB

This web service will be deployed behind an ELB load balancer, so you need to create an ELB resource. You can use the following command, replacing each <AZ> reference with a space-separated list of each Availability Zone used for Amazon ECS:
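The original command is not reproduced in this post. A minimal sketch of creating a Classic Load Balancer with an HTTP listener on port 80 might look like the following. The function name create_solano_elb and the load balancer name you pass to it are hypothetical, and the listener settings are assumptions based on the port 80 security group rule described earlier.

```shell
# Sketch: create a Classic Load Balancer that forwards HTTP port 80
# to port 80 on the instances. The first argument is a load balancer
# name of your choosing; the remaining arguments are Availability Zones.
create_solano_elb() {
  elb_name="$1"
  shift
  aws elb create-load-balancer \
    --load-balancer-name "$elb_name" \
    --listeners "Protocol=HTTP,LoadBalancerPort=80,InstanceProtocol=HTTP,InstancePort=80" \
    --availability-zones "$@"
}
```

For example, create_solano_elb solano-elb us-east-1a us-east-1b. If your cluster runs in a nondefault VPC, you would likely need to pass subnet IDs with the --subnets option instead of Availability Zones.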

Make sure to record the DNSName returned from the command. You will use this to connect to the service later.

Amazon ECS Tasks

The next step is to create a task definition. Copy the text from this GitHub Gist and paste it into a text editor, replacing <ACCOUNT ID> with your AWS account ID and <REGION> with your AWS Region of choice. Save the file with the name solano-task-definition.json. You can now create the task definition using the CLI:
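The exact command is not reproduced in this post. Assuming the file saved above is a complete task definition in JSON, registering it could be sketched as follows. The wrapper name register_solano_task is hypothetical.

```shell
# Sketch: register a task definition from a local JSON file.
# Defaults to the file name used in this post.
register_solano_task() {
  task_file="${1:-solano-task-definition.json}"
  aws ecs register-task-definition --cli-input-json "file://${task_file}"
}
```

The JSON returned by register-task-definition includes the taskDefinitionArn value.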

You can now run the task on Amazon ECS. You must use the taskDefinitionArn produced from the previous command in the call below. This value will be the task-definition argument:
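As a hedged sketch of that call, assuming the solano-ecs-cluster name used earlier, the task could be started like this. The function name run_solano_task and the optional count argument are hypothetical.

```shell
# Sketch: start one or more copies of a task on the cluster.
# $1 is the taskDefinitionArn from register-task-definition;
# $2 is an optional number of copies to start (defaults to 1).
run_solano_task() {
  task_definition_arn="$1"
  task_count="${2:-1}"
  aws ecs run-task \
    --cluster solano-ecs-cluster \
    --task-definition "$task_definition_arn" \
    --count "$task_count"
}
```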

You can always check the status of current running tasks with the following command:
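A minimal sketch of that status check, again assuming the solano-ecs-cluster name, is shown here. The function name list_solano_tasks is hypothetical.

```shell
# Sketch: list the ARNs of tasks on the cluster.
# Pass a different cluster name as $1 if yours differs.
list_solano_tasks() {
  aws ecs list-tasks --cluster "${1:-solano-ecs-cluster}"
}
```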

You just started the tasks manually, but the goal is to control the tasks via an Amazon ECS service. With that in mind, let’s shut down the tasks we started. Run the following command for each taskArn from the output of list-tasks:
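Rather than copying each taskArn by hand, the stop call can be looped over the list-tasks output. This is a sketch under the same cluster-name assumption, and the function name stop_all_solano_tasks is hypothetical. Note that it stops every task the cluster reports, so use it only on a cluster dedicated to this walkthrough.

```shell
# Sketch: stop every task that list-tasks reports for the cluster.
# --query/--output text reduce the response to whitespace-separated ARNs.
stop_all_solano_tasks() {
  cluster="${1:-solano-ecs-cluster}"
  for task_arn in $(aws ecs list-tasks --cluster "$cluster" \
      --query 'taskArns[]' --output text); do
    aws ecs stop-task --cluster "$cluster" --task "$task_arn"
  done
}
```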

Creating the Amazon ECS Service

Finally, to get your site running, you need to create the Amazon ECS Service. This process can be done via the Create Service page of the Amazon ECS Management Console. Using the console will provide the ability to configure an AWS ELB along with other ECS options. The example below creates the service using the CLI:
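The original command is not reproduced in this post, so the following is only a sketch of a service attached to a Classic Load Balancer. Several values are assumptions: the service name solano-service, the desired count of 2 (chosen to match the two instances), the ecsServiceRole IAM role name, and container port 80. The container name must match the one in your task definition. The function name create_solano_service is hypothetical.

```shell
# Sketch: create an ECS service behind a Classic Load Balancer.
# All literal values below are assumptions; adjust them to your setup.
create_solano_service() {
  task_definition="$1"  # task definition family:revision or ARN
  elb_name="$2"         # name of the load balancer created earlier
  container_name="$3"   # container name from your task definition
  aws ecs create-service \
    --cluster solano-ecs-cluster \
    --service-name solano-service \
    --task-definition "$task_definition" \
    --desired-count 2 \
    --role ecsServiceRole \
    --load-balancers "loadBalancerName=${elb_name},containerName=${container_name},containerPort=80"
}
```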

You can view details about the cluster from the command output.

Installing and Configuring the Solano CI CLI

You’ll need to install the Solano CI client utility on the same machine you’re using to build the Docker image. For login instructions, see the Solano Labs website. For installation instructions, see the Solano CI documentation.

Once you have the command installed, you’ll need to set your AWS account number along with the AWS Region you’ll be using. To use the Solano Labs CLI, find your access token by following the instructions in the Solano CI documentation. From the Solano CI console dropdown menu, choose User Settings, and then choose Login Token. Run the commands shown.

Make sure that you’re in the root directory of your GitHub repository before you start working with the Solano CI CLI.

Use the following commands. Paste the solano login command into your development environment as the first line shows. On the second line, replace XXXXXXX with your AWS account ID (without dashes). The next command sets your Region to us-east-1; change it to match your Region. Finally, replace the SOLANO EXTERNAL ID and SOLANO IAM ROLE ARN values with the external ID from the Solano CI console and the ARN of the IAM role you entered on that same page.

solano login …

solano config:add org AWS_ACCOUNT_ID XXXXXXX

solano config:add org AWS_DEFAULT_REGION us-east-1

solano config:add org AWS_EXTERNAL_ID <SOLANO EXTERNAL ID>

solano config:add org AWS_ASSUME_ROLE <SOLANO IAM ROLE ARN>

solano config:add org AWS_ECR_REPO solano

These values are now available for your use within the Solano CI build and deployment process and will be referenced on the backend through various scripts.

You can view a list of the variables set with the command:

solano config org

From your GitHub repository root, you’ll want to make sure that all your changes are in place before the initial build:

git add *

git commit -m "initial CI-CD commit"

git push -u origin master

Creating the Solano CI Repository

You can now create your Solano CI repository. In the Solano CI console, choose Add New Repo. Follow the on-screen instructions for creating the repository or review the Solano Labs GitHub integration documentation.

Once the repository has been created, you will be able to view the repository build status from the Solano CI dashboard.

Conclusion and Next Steps

You now have the default application deployed and available for viewing via the hostname of the ELB load balancer you saved earlier. You can view the site by entering the URL into your web browser. To test the continuous integration components, change files in your local repository, commit the changes, and push them to your GitHub repository. This will start the process of Solano CI rebuilding the Docker image and instructing Amazon ECS to deploy a new application version. This automates the entire process of building new Docker images and deploying the new codebase to services without any downtime, by taking advantage of Amazon ECS automated deployments and scheduling.

In this blog post, we explained how to automate building and deploying Docker containers by using a single Docker image with a single application. This process can be expanded from simple deployments of a single application to complex designs requiring vast fleets of resources to build and serve. Automating these components allows for a faster development and deployment model focusing resources on product testing and application improvements. Moving forward, readers can expand upon these examples and apply this process to applications currently using Amazon ECS or applications being migrated to containers.

This blog is intended for educational purposes and is not an endorsement of the third-party product. Please contact the vendor for details regarding performance and functionality.