AWS Contact Center

Accelerate Amazon Connect AI agent development with Kiro

Introduction

Building Amazon Connect AI agents presents developers with a familiar challenge: tight timelines meet complex integration requirements. You need to connect multiple backend APIs, implement robust error handling, generate realistic test data, and debug multi-service interactions, all while maintaining code quality and consistency. A proof-of-concept that integrates 10-15 APIs can easily consume 2-3 weeks of development time, even for experienced teams. The complexity multiplies when you lack direct access to backend systems and must create mock implementations that behave realistically.

Amazon Kiro changes this equation. As an AI coding assistant, Kiro acts as an expert pair programmer that understands your entire system architecture. It generates production-quality code for AWS Lambda functions, designs Amazon DynamoDB schemas, creates Model Context Protocol (MCP) tool schemas, and analyzes Amazon CloudWatch logs to identify issues, all while maintaining consistency across your codebase. This AI-assisted approach turns what would typically be weeks of manual coding into days of guided development.

In this post, I’ll show you how we built a fully functional Amazon Connect AI agent with 15 backend APIs in just 3 days using Kiro. You will see how conversational development, combined with Kiro’s ability to automatically analyze CloudWatch logs and fix issues, enables rapid iteration that makes ambitious timelines achievable.

The challenge: From API specifications to working agent

The project began with a common scenario: a customer needed an Amazon Connect AI agent that could handle complex customer service workflows. The requirements arrived as 15 API specifications documented in an Excel spreadsheet, each row describing an endpoint with its parameters, expected responses, and business logic. These APIs covered the full spectrum of customer service operations: authentication and profile lookup, search and retrieval operations, booking modifications and cancellations, payment processing and validation, document generation and delivery, and administrative functions for testing and data management.

The use case was comprehensive: customers would interact with an AI agent through voice, and the agent needed to orchestrate calls across all 15 APIs to complete end-to-end workflows. A customer might authenticate, search for existing bookings, modify their reservation, calculate the difference in price, process a payment, and request confirmation documents, all in a single conversation. The AI agent had to understand context, decide which APIs (exposed to it as tools) to call and when, handle errors gracefully, and provide natural, helpful responses throughout the interaction.

This was all made even more challenging by the fact that we had no access to the customer’s development or test environments. Their backend systems were still under development. This meant we couldn’t simply point the AI agent at existing APIs and start testing. We needed a complete mock backend that could simulate realistic API behavior, maintain state across multiple calls, and generate responses that accurately reflected the business logic described in those Excel specifications.

The timeline added pressure: we had three days to deliver a working demonstration. In that time, we needed to design and implement the mock backend architecture, create Lambda functions for all 15 APIs, design and populate a database with realistic test data, integrate Amazon Bedrock to generate dynamic responses, create MCP schemas for AI agent integration, and debug the entire system so that it ran smoothly during the live demo.

This scenario reflects a common challenge in modern software development: rapid prototyping under constraints. Whether you’re building a POC for a customer, demonstrating a concept to stakeholders, or validating an architecture before committing to full development, you often face similar pressures: complex integration requirements, incomplete or inaccessible backend systems, tight timelines, and the need for production-quality code that can evolve into a real implementation.

How Kiro accelerates Amazon Connect AI agent development

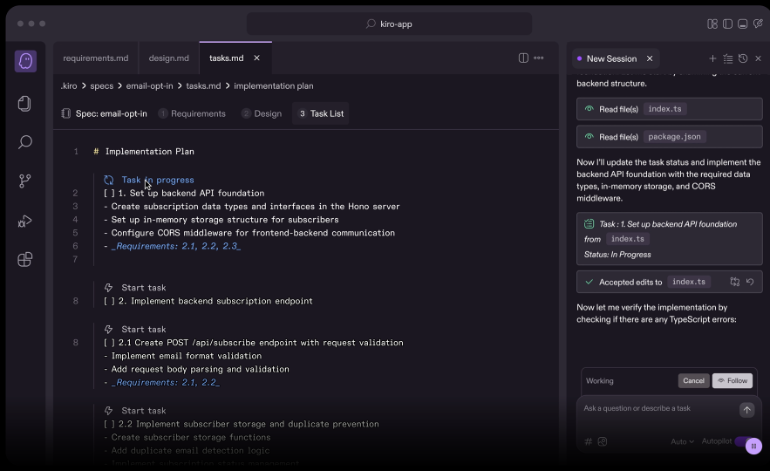

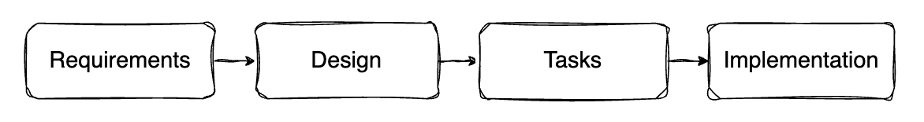

AI-assisted design

Traditional architecture design involves hours of research, design meetings, and documentation. With Kiro, we took a different approach: AI-assisted, spec-driven design. We described our requirements (15 APIs, no backend access, need for realistic responses, Amazon Connect integration via AgentCore Gateway), and Kiro transformed this into a formal requirements document, then a detailed design document, and finally an actionable task list. This spec-driven workflow reduced the risk of missed requirements and provided clear traceability from requirements through implementation.

What would typically consume a full day of architecture design took an hour or two of interactive discussion with Kiro, resulting in a clear, well-reasoned design ready for implementation.

Rapid code generation

With the architecture defined, Kiro generated all 15 Lambda functions, along with the associated Amazon DynamoDB tables, Amazon Bedrock integrations, and AWS Identity and Access Management (IAM) configurations, in a few hours. Each function included:

– Complete implementation of the API specification

– Proper error handling with structured error codes

– Comprehensive logging with correlation ID tracking

– DynamoDB integration for state management

– Bedrock calls for realistic response generation

– Input validation and defensive programming
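
To make the pattern in the list above concrete, here is a minimal sketch of what one such mock API function could look like. It is an illustration, not the code Kiro generated for the project: the `get booking` operation, the field names, and the error codes are all hypothetical, an in-memory dictionary stands in for the DynamoDB table so the example runs locally, and the Bedrock call is left out.

```python
import json
import logging
import uuid

logger = logging.getLogger("mock_api")
logger.setLevel(logging.INFO)

# Assumption: an in-memory dict stands in for a DynamoDB table so the sketch
# runs locally. A deployed function would read and write through boto3.
BOOKINGS = {"BK-1001": {"booking_id": "BK-1001", "status": "CONFIRMED"}}


def _response(status_code, body, correlation_id):
    """Build a response that always carries the correlation ID."""
    body = dict(body, correlation_id=correlation_id)
    return {"statusCode": status_code, "body": json.dumps(body)}


def _error(status_code, code, message, correlation_id):
    """Return a structured error: a stable machine-readable code plus a message."""
    logger.warning(json.dumps({
        "level": "WARNING",
        "correlation_id": correlation_id,
        "error_code": code,
    }))
    return _response(
        status_code,
        {"error": {"code": code, "message": message}},
        correlation_id,
    )


def handler(event, context=None, store=BOOKINGS):
    """Hypothetical 'get booking' mock API in the shape of a Lambda handler."""
    # Reuse the caller's correlation ID if present so one conversation can be
    # traced across several functions; otherwise create a new one.
    correlation_id = event.get("correlation_id") or str(uuid.uuid4())
    logger.info(json.dumps({
        "level": "INFO",
        "correlation_id": correlation_id,
        "event": "request_received",
    }))

    # Input validation before touching the data store.
    booking_id = event.get("booking_id")
    if not isinstance(booking_id, str) or not booking_id.strip():
        return _error(400, "INVALID_INPUT", "booking_id is required", correlation_id)

    booking = store.get(booking_id)
    if booking is None:
        return _error(404, "BOOKING_NOT_FOUND", f"No booking {booking_id}", correlation_id)

    return _response(200, {"booking": booking}, correlation_id)
```

Keeping the response and error helpers separate from the business logic is one way to get the uniformity described here: every function can share the same two helpers, so a change to the error format happens in one place.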

Beyond speed, Kiro also helped maintain consistency. Every function followed identical patterns for error handling, logging, and integration. When we needed to adjust a pattern (perhaps changing how we handle authentication tokens or modifying the error response format), we described the change once, and Kiro updated all 15 functions consistently. This avoided the drift and inconsistency that tends to creep in when multiple similar components are implemented by hand.

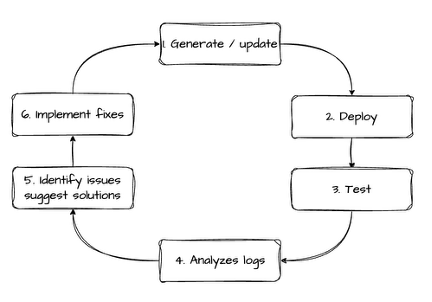

Automatic CloudWatch log analysis and rapid iteration

The most powerful aspect of working with Kiro was the rapid iteration feedback loop it enabled. Here’s how the development cycle worked:

1. Kiro generates/updates code (Lambda functions, MCP schemas, infrastructure)

2. Deploy to AWS using CDK

3. Test the AI agent conversation flow

4. Prompt Kiro to read the CloudWatch logs (AI agent + Lambda functions)

5. Kiro identifies issues, explains root causes, provides fixes

6. Ask Kiro to implement fixes and redeploy

7. Repeat until working correctly

This feedback loop was fast: each complete cycle (deploy, test, analyze logs, fix, redeploy) took just 10-20 minutes. Without Kiro’s automatic log analysis and its ability to research the root cause of issues, each cycle could have taken hours of manual debugging.

To enable Kiro to analyze your AI agent logs, you need to enable CloudWatch logging for your Amazon Connect AI agents.
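
Once logs are flowing, the first part of the log-analysis step is largely a matter of pulling recent events and grouping the failures so that one conversation can be followed across functions. The sketch below shows that idea in plain Python. It is not a description of how Kiro works internally. It assumes the functions write JSON log lines with `level` and `correlation_id` fields, which is a convention chosen for this example, and the log group name you pass in is your own. The fetch helper uses the boto3 CloudWatch Logs `filter_log_events` paginator and needs AWS credentials, while the grouping function runs on its own.

```python
import json
from collections import defaultdict


def group_errors_by_correlation_id(events):
    """Group warning and error log records by correlation ID.

    `events` is a list of dicts with a "message" key, which is the shape of
    the log events CloudWatch Logs returns. Messages that are not JSON
    objects (for example Lambda START/END lines) are skipped.
    """
    grouped = defaultdict(list)
    for event in events:
        try:
            record = json.loads(event["message"])
        except (KeyError, TypeError, ValueError):
            continue
        if not isinstance(record, dict):
            continue
        # Assumption: log lines carry "level" and "correlation_id" fields.
        if record.get("level") in ("ERROR", "WARNING"):
            grouped[record.get("correlation_id", "unknown")].append(record)
    return dict(grouped)


def fetch_recent_events(log_group_name, start_time_ms):
    """Fetch events from CloudWatch Logs (requires boto3 and AWS credentials)."""
    import boto3  # imported lazily so the grouping logic runs without AWS access

    logs = boto3.client("logs")
    paginator = logs.get_paginator("filter_log_events")
    events = []
    for page in paginator.paginate(
        logGroupName=log_group_name, startTime=start_time_ms
    ):
        events.extend(page.get("events", []))
    return events
```

A grouped view like this is also a useful thing to paste into a prompt: it gives the assistant every failure for one conversation in one place instead of a raw log stream.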

Real debugging examples

DynamoDB query failures: Kiro spotted partition key mismatches and suggested schema adjustments.

AI agent intent recognition problems: Kiro reviewed conversation logs and identified ambiguous phrasing in MCP tool descriptions.

Error handling gaps: Kiro found edge cases in the logs that weren’t being handled and generated defensive code.
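
The tool-description problem is worth a closer look, because the description is what the AI agent reads when it decides which tool to call. An MCP tool definition carries a `name`, a `description`, and an `inputSchema` expressed as JSON Schema. The sketch below contrasts a vague definition with a clearer one and adds a small lint function that flags the kind of ambiguity described above. The tool, its parameters, and the eight-word threshold are hypothetical choices for illustration, not the project's actual schemas or a rule from the MCP specification.

```python
# A vague definition: the agent has little to go on when choosing a tool.
VAGUE_TOOL = {
    "name": "get_booking",
    "description": "Gets booking.",
    "inputSchema": {
        "type": "object",
        "properties": {"id": {"type": "string"}},
        "required": ["id"],
    },
}

# A clearer definition: says what the tool does and when to use it.
CLEAR_TOOL = {
    "name": "get_booking",
    "description": (
        "Look up one existing booking by its booking ID. "
        "Use this after the customer is authenticated and has given a booking ID."
    ),
    "inputSchema": {
        "type": "object",
        "properties": {
            "booking_id": {
                "type": "string",
                "description": "Booking ID, for example BK-1001",
            }
        },
        "required": ["booking_id"],
    },
}


def lint_tool(tool, min_description_words=8):
    """Return a list of warnings for one tool definition."""
    warnings = []
    name = tool.get("name", "<unnamed>")

    # Very short descriptions rarely tell the agent when to use the tool.
    if len(tool.get("description", "").split()) < min_description_words:
        warnings.append(f"{name}: description is too short to disambiguate")

    schema = tool.get("inputSchema", {})
    properties = schema.get("properties", {})
    for param, spec in properties.items():
        if not spec.get("description"):
            warnings.append(f"{name}.{param}: parameter has no description")

    # A required parameter that is never declared is a schema bug.
    for param in schema.get("required", []):
        if param not in properties:
            warnings.append(f"{name}.{param}: required but not declared")
    return warnings


if __name__ == "__main__":
    for tool in (VAGUE_TOOL, CLEAR_TOOL):
        print(lint_tool(tool))
```

Running a check like this across all tool definitions before each deployment is a cheap way to catch ambiguous descriptions before they show up as wrong tool choices in conversation logs.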

Over three days, we completed dozens of these rapid iteration cycles. Each time, Kiro’s automatic log analysis eliminated the manual debugging bottleneck, keeping development momentum high.

Conclusion

In this post, I showed how Kiro accelerates Amazon Connect AI agent development through conversational spec-driven design and automatic CloudWatch log analysis. We built a fully functional AI agent with 15 backend APIs in just 3 days, work that we estimate would have taken 2-3 weeks with traditional development approaches.

Three capabilities made this possible:

Spec-driven design: Interactive requirements gathering replaced days of meetings

Automated debugging: Direct CloudWatch log access eliminated manual troubleshooting

Fast feedback loops: 10-20 minute iteration cycles enabled continuous refinement

This approach is broadly applicable beyond our specific use case. Whether you’re building customer service agents, technical support bots, or sales assistants, the combination of conversational development and automatic debugging with Kiro can dramatically accelerate your Amazon Connect AI agent projects.

Ready to accelerate your Amazon Connect AI agent development? Get started with Kiro and see how conversational development and automatic debugging can turn weeks of work into days.

Have you tried using AI coding assistants for your Amazon Connect development? Share your experiences in the comments below.

Resources

Kiro

Amazon Connect AI Agents

Amazon Connect AI Agents Documentation

Workshops and Learning

re:Invent 2025 Workshop: Building AI Agents for Amazon Connect

Amazon Connect AI Agents Workshop

Enable CloudWatch Logging for AI Agents

Related AWS Documentation

Amazon Bedrock AgentCore Gateway (MCP)

About the authors

Thomas Rindfuss is the WW Lead SA for Agentic and Conversational AI for Amazon Connect. He invents, develops, prototypes, and evangelizes new technical features and solutions for conversational AI services that improve the customer experience and ease adoption. When not building AI agents, he enjoys exploring emerging AI technologies and helping customers transform their contact center experiences.

Amazon Kiro is an agentic IDE that helps you do your best work with features such as specs, steering, and hooks. Kiro contributed to this blog post by helping structure the narrative, ensuring technical accuracy, and providing editorial assistance. When not co-authoring blog posts, Kiro helps developers write code, analyze logs, and debug systems faster.